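Deploying Kubernetes 1.20+ with kubeadm

Note: This article is based on lab work from the training course "Docker + Kubernetes (k8s) + DevOps Enterprise Architect in Practice". Thanks to instructor Xianchao (先超)!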

准备工作

k8s环境规划

| 服务 | IP/Mask |

|---|---|

| Service网段 | 10.10.0.0/16 |

| Pod网段 | 10.244.0.0/16 |

| 操作系统 | centos7.6 |

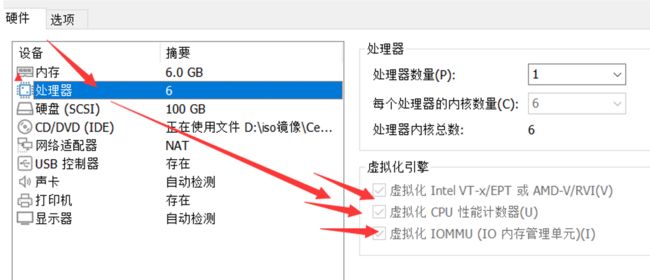

| 配置 | 4Gib内存/6vCPU/100G硬盘 |

| 网络 | NAT |

| 虚拟化服务 | 开启虚拟机的虚拟化 |

| K8S集群角色 | IP | 主机名 | 安装的组件 |

|---|---|---|---|

| 控制节点 | 192.168.40.180 | kaivimaster1 | apiserver、controller-manager、scheduler、etcd、docker、keepalived、nginx 、kube-proxy、calico、kubelet |

| 控制节点 | 192.168.40.181 | kaivimaster2 | apiserver、controller-manager、scheduler、etcd、docker、keepalived、nginx、kube-proxy、calico、kubelet |

| 工作节点 | 192.168.40.182 | kaivinode1 | kubelet、kube-proxy、docker、calico、coredns |

| Vip | 192.168.40.199 | kaivimaster1/kaivimaster2 | keepalived、nginx |

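When to use kubeadm versus a binary installation

kubeadm is the official open-source tool for quickly bootstrapping a Kubernetes cluster, and it is currently the most convenient and recommended option. Its two commands, kubeadm init and kubeadm join, are enough to create a cluster. With kubeadm, the cluster components other than kubelet run as pods, so they recover automatically after a failure.

Because kubeadm automates the deployment, it hides many details and gives you little exposure to the individual components. If you do not understand the k8s architecture well, problems can be hard to troubleshoot.

kubeadm suits scenarios where k8s is deployed frequently or where a high degree of automation is required.

Binary installation: download the component binaries from the official site and install them by hand. Installing manually also gives you a more complete understanding of Kubernetes.

Both kubeadm and binary installations are suitable for production and run stably there. Which one to choose depends on the needs of the actual project.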

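Initialization

Configure a static IP

Configure each virtual or physical machine with a static IP address so that the address does not change after a reboot.

Taking kaivimaster1 as the example:

# Edit /etc/sysconfig/network-scripts/ifcfg-ens33 (the file name depends on your NIC; on some machines it is ifcfg-eth0) so that it reads as follows:

# vim /etc/sysconfig/network-scripts/ifcfg-ens33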

TYPE=Ethernet

PROXY_METHOD=none

BROWSER_ONLY=no

BOOTPROTO=static

IPADDR=192.168.40.180

NETMASK=255.255.255.0

GATEWAY=192.168.40.2

DNS1=192.168.40.2

DEFROUTE=yes

IPV4_FAILURE_FATAL=no

IPV6INIT=yes

IPV6_AUTOCONF=yes

IPV6_DEFROUTE=yes

IPV6_FAILURE_FATAL=no

IPV6_ADDR_GEN_MODE=stable-privacy

NAME=ens33

DEVICE=ens33

ONBOOT=yes

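# After editing the file, restart the network service for the change to take effect:

service network restart

Notes on the settings in /etc/sysconfig/network-scripts/ifcfg-ens33:

NAME=ens33 # connection name; keep it the same as DEVICE

DEVICE=ens33 # NIC device name; run ip addr to see yours, since it can differ from machine to machine

BOOTPROTO=static # static means a static IP address

ONBOOT=yes # bring the interface up at boot; must be yes

IPADDR=192.168.40.180 # IP address; must be in the same subnet as your own machine

NETMASK=255.255.255.0 # subnet mask; must match the subnet of your own machine

GATEWAY=192.168.40.2 # gateway; open cmd on your own computer and run ipconfig /all to find it

DNS1=192.168.40.2 # DNS server; open cmd on your own computer and run ipconfig /all to find it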

Configure hostnames

# Set the hostname on each machine.

On 192.168.40.180:

hostnamectl set-hostname kaivimaster1

On 192.168.40.181:

hostnamectl set-hostname kaivimaster2

On 192.168.40.182:

hostnamectl set-hostname kaivinode1

Configure the hosts file

# On kaivimaster1, kaivimaster2, and kaivinode1, add the following three lines to /etc/hosts:

# vim /etc/hosts

192.168.40.180 kaivimaster1

192.168.40.181 kaivimaster2

192.168.40.182 kaivinode1
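Instead of editing the file by hand on every machine, the entries can be appended with a small script. The following is a minimal sketch, not part of the original course material: the function name add_cluster_hosts is hypothetical, and the entries are the three hosts from the environment plan table. It skips lines that already exist, so it can be run more than once.

```shell
#!/bin/bash
# Hypothetical helper: append the cluster host entries to a hosts file,
# skipping any entry that is already present.
# Usage: add_cluster_hosts [hosts_file]   (defaults to /etc/hosts)
add_cluster_hosts() {
    local hosts_file="${1:-/etc/hosts}"
    local entry
    while read -r entry; do
        # -x matches the whole line, -F treats the entry as a fixed string
        grep -qxF "$entry" "$hosts_file" || echo "$entry" >> "$hosts_file"
    done <<'EOF'
192.168.40.180 kaivimaster1
192.168.40.181 kaivimaster2
192.168.40.182 kaivinode1
EOF
}
```

Calling add_cluster_hosts with no argument as root on each node updates /etc/hosts; passing a path lets you try it on a copy first.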

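Passwordless SSH between hosts

Set up passwordless login between the hosts. Run the following on every machine.

# Generate an SSH key pair

ssh-keygen -t rsa # press Enter at every prompt; do not set a passphrase

# Install the local public key into the matching account on each remote host

ssh-copy-id -i ~/.ssh/id_rsa.pub kaivimaster1

ssh-copy-id -i ~/.ssh/id_rsa.pub kaivimaster2

ssh-copy-id -i ~/.ssh/id_rsa.pub kaivinode1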

Disable the firewalld firewall

Stop and disable firewalld on kaivimaster1, kaivimaster2, and kaivinode1:

systemctl stop firewalld

systemctl disable firewalld

systemctl status firewalld

Disable SELinux

Disable SELinux on kaivimaster1, kaivimaster2, and kaivinode1:

sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config

# The change to the SELinux config file only takes permanent effect after a reboot

After rebooting, log in and verify the change:

getenforce

# Disabled means SELinux is turned off
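The sed command above only rewrites a file that currently says SELINUX=enforcing. A slightly more defensive variant is sketched below as an illustration; the function name disable_selinux_config is hypothetical. It also handles SELINUX=permissive and reports whether the file ends up in the desired state.

```shell
#!/bin/bash
# Hypothetical helper: set SELINUX=disabled in a config file, whether the
# current mode is enforcing or permissive.
# Usage: disable_selinux_config [config_file]   (defaults to /etc/selinux/config)
disable_selinux_config() {
    local cfg="${1:-/etc/selinux/config}"
    # Anchored patterns leave comments and the SELINUXTYPE line untouched
    sed -i \
        -e 's/^SELINUX=enforcing$/SELINUX=disabled/' \
        -e 's/^SELINUX=permissive$/SELINUX=disabled/' "$cfg"
    # Succeed only if the file now contains the expected setting
    grep -q '^SELINUX=disabled$' "$cfg"
}
```

To relax SELinux for the current session before the reboot, setenforce 0 switches the running system to permissive mode.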

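Disable the swap partition

Disable swap on kaivimaster1, kaivimaster2, and kaivinode1:

# Disable temporarily

swapoff -a

# Disable permanently: comment out the swap mount by adding # at the start of the swap line

vim /etc/fstab

#/dev/mapper/centos-swap swap swap defaults 0 0

# Important: if the VM is a clone, the UUID must also be deleted

Reboot the server.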
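The fstab edit can also be scripted. This is a rough sketch under the assumption that swap entries use the standard whitespace-separated fstab layout with swap in the third field; the function name comment_out_swap is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: comment out every active swap entry in an fstab file.
# Usage: comment_out_swap [fstab_file]   (defaults to /etc/fstab)
comment_out_swap() {
    local fstab="${1:-/etc/fstab}"
    # Match uncommented lines whose third field (filesystem type) is "swap"
    # and prefix them with '#'. Already-commented lines are not touched.
    sed -i -r \
        's/^([^#[:space:]][^[:space:]]*[[:space:]]+[^[:space:]]+[[:space:]]+swap[[:space:]].*)$/#\1/' \
        "$fstab"
}
```

Because lines that already start with # never match, running the function a second time does not add another # to the same entry.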

Adjust kernel parameters

Adjust the kernel parameters on kaivimaster1, kaivimaster2, and kaivinode1:

# Load the br_netfilter module

modprobe br_netfilter

# Verify that the module is loaded:

lsmod | grep br_netfilter

# Set the kernel parameters

cat > /etc/sysctl.d/k8s.conf <<EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF

# Apply the parameters just written

sysctl -p /etc/sysctl.d/k8s.conf

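Question 1: What does sysctl do?

It configures kernel parameters at runtime. The -p option loads settings from the given file, or from /etc/sysctl.conf if no file is given.

Question 2: Why run modprobe br_netfilter?

Suppose the following three parameters have been added to /etc/sysctl.d/k8s.conf:

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

If the module is not loaded, sysctl -p /etc/sysctl.d/k8s.conf fails with:

sysctl: cannot stat /proc/sys/net/bridge/bridge-nf-call-ip6tables: No such file or directory

sysctl: cannot stat /proc/sys/net/bridge/bridge-nf-call-iptables: No such file or directory

The fix is to load the module first:

modprobe br_netfilter

Question 3: Why enable the net.bridge.bridge-nf-call-iptables kernel parameter?

After installing docker on CentOS, docker info prints these warnings:

WARNING: bridge-nf-call-iptables is disabled

WARNING: bridge-nf-call-ip6tables is disabled

The fix is to set both parameters in /etc/sysctl.d/k8s.conf:

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

Question 4: Why enable net.ipv4.ip_forward = 1?

If kubeadm reports an error about ip_forward while initializing k8s, IP forwarding is not enabled and must be turned on.

net.ipv4.ip_forward controls packet forwarding.

For security reasons, Linux disables packet forwarding by default. Forwarding means that a host with more than one network interface receives a packet on one interface and, based on the destination IP address, sends it out through another interface, which then forwards it according to the routing table. This is normally the job of a router.

To let a Linux system route packets, set the kernel parameter net.ipv4.ip_forward. It reflects whether forwarding is currently supported: 0 means IP forwarding is disabled, and 1 means it is enabled.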
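Note that modprobe only loads br_netfilter for the current boot. On a systemd-based system such as CentOS 7, a file under /etc/modules-load.d is read at boot, so the module can be made persistent as sketched below. The function name persist_br_netfilter and the file name k8s.conf are illustrative choices, not taken from the course material.

```shell
#!/bin/bash
# Hypothetical helper: write a modules-load.d entry so that br_netfilter
# is loaded automatically on every boot.
# Usage: persist_br_netfilter [directory]   (defaults to /etc/modules-load.d)
persist_br_netfilter() {
    local dir="${1:-/etc/modules-load.d}"
    mkdir -p "$dir"
    # One module name per line; the file name k8s.conf is an arbitrary choice
    echo "br_netfilter" > "$dir/k8s.conf"
}
```

With the module loaded at boot, the bridge settings in /etc/sysctl.d/k8s.conf can be applied after a reboot without the "No such file or directory" error described in Question 2.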

配置阿里云repo源

配置阿里云repo源,在kaivimaster1、kaivimaster2、kaivimaster3、kaivinode1上操作:

这里以 kaivimaster1服务器为例

在kaivimaster1上操作:

安装rzsz命令

[root@kaivimaster1]# yum install lrzsz -y

安装scp:

[root@kaivimaster1]#yum install openssh-clients

#备份基础repo源

[root@kaivimaster1 ~]# mkdir /root/repo.bak

[root@kaivimaster1 ~]# cd /etc/yum.repos.d/

[root@kaivimaster1]# mv * /root/repo.bak/

#下载阿里云的repo源

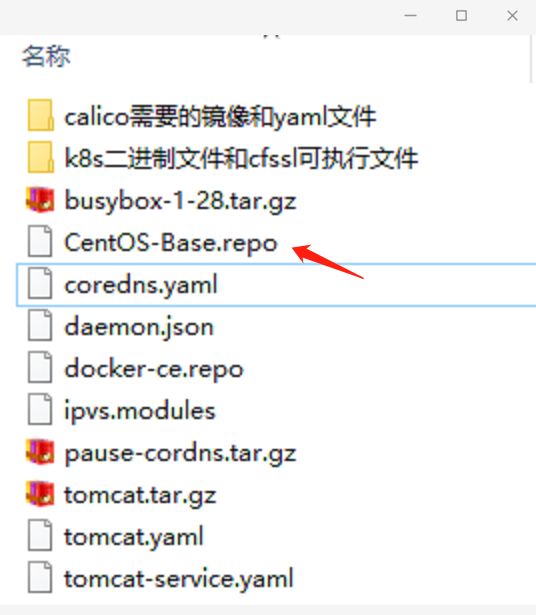

把CentOS-Base.repo文件上传到kaivimaster1主机的/etc/yum.repos.d/目录下

#配置国内阿里云docker的repo源

[root@kaivimaster1 ~]# yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

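Configure time synchronization

Configure time synchronization on kaivimaster1, kaivimaster2, and kaivinode1:

# Install the ntpdate command

yum install ntpdate -y

# Synchronize with a network time source

ntpdate cn.pool.ntp.org

# Schedule the synchronization as an hourly cron job

crontab -e

0 */1 * * * /usr/sbin/ntpdate cn.pool.ntp.org

# List the scheduled jobs

crontab -l

# Restart the crond service

service crond restart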
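crontab -e is interactive, which is awkward when the same job has to be added on several nodes. One possible non-interactive approach is sketched below: a small filter (the name merge_cron_entry is hypothetical) that passes the existing crontab through and appends the entry exactly once. The example entry uses an hourly schedule, with 0 in the minute field.

```shell
#!/bin/bash
# Hypothetical helper: read an existing crontab on stdin and print it with
# the given entry present exactly once, at the end.
# Usage: crontab -l 2>/dev/null | merge_cron_entry "ENTRY" | crontab -
merge_cron_entry() {
    local entry="$1"
    # Pass existing lines through, dropping any previous copy of the entry
    grep -vxF "$entry"
    echo "$entry"
}
```

For example, `crontab -l 2>/dev/null | merge_cron_entry "0 */1 * * * /usr/sbin/ntpdate cn.pool.ntp.org" | crontab -` installs the job for the current user and is safe to repeat.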

Install iptables

If you prefer iptables to firewalld, install it on kaivimaster1, kaivimaster2, and kaivinode1:

# Install iptables

yum install iptables-services -y

# Stop and disable the iptables service

service iptables stop && systemctl disable iptables

# Flush the firewall rules

iptables -F

开启ipvs

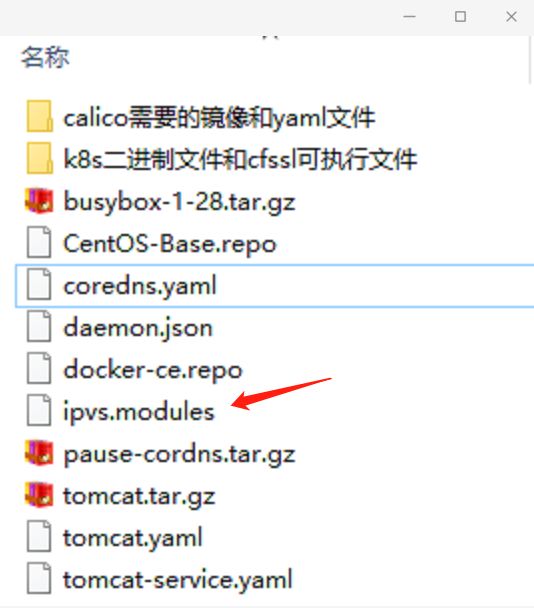

#不开启ipvs将会使用iptables进行数据包转发,但是效率低,所以官网推荐需要开通ipvs。

#把ipvs.modules上传到kaivimaster1机器的/etc/sysconfig/modules/目录下

[root@kaivimaster1# chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep ip_vs

ip_vs_ftp 13079 0

nf_nat 26583 1 ip_vs_ftp

ip_vs_sed 12519 0

ip_vs_nq 12516 0

ip_vs_sh 12688 0

ip_vs_dh 12688 0

...

Copy the file to the other nodes, kaivimaster2 and kaivinode1. Copying to kaivinode1 is shown as the example:

[root@kaivimaster1 ~]# scp /etc/sysconfig/modules/ipvs.modules kaivinode1:/etc/sysconfig/modules/

[root@kaivinode1 ~]# chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep ip_vs

ip_vs_ftp 13079 0

nf_nat 26583 1 ip_vs_ftp

ip_vs_sed 12519 0

ip_vs_nq 12516 0

ip_vs_sh 12688 0

ip_vs_dh 12688 0
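The contents of ipvs.modules are not reproduced in this article. As a rough idea of what such a file might look like, the sketch below generates one. The module list is an assumption (it includes the ip_vs modules seen in the lsmod output above plus a few common schedulers and nf_conntrack) and is not the course's actual file; the function name write_ipvs_modules is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: generate an ipvs.modules script.
# The module list is an assumption, not the file used in the course.
# Usage: write_ipvs_modules [target_file]
write_ipvs_modules() {
    local target="${1:-/etc/sysconfig/modules/ipvs.modules}"
    cat > "$target" <<'EOF'
#!/bin/bash
# Load each IPVS-related module that exists for the running kernel
for mod in ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh ip_vs_dh ip_vs_sed ip_vs_nq ip_vs_ftp nf_conntrack; do
    if /sbin/modinfo -F filename "$mod" > /dev/null 2>&1; then
        /sbin/modprobe "$mod"
    fi
done
EOF
    chmod 755 "$target"
}
```

The modinfo check skips modules that the running kernel does not ship, so the generated script does not abort on a missing module.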

Install base packages

Install the base packages on kaivimaster1, kaivimaster2, and kaivinode1:

yum install -y yum-utils device-mapper-persistent-data lvm2 wget net-tools nfs-utils lrzsz gcc gcc-c++ make cmake libxml2-devel openssl-devel curl curl-devel unzip sudo ntp libaio-devel vim ncurses-devel autoconf automake zlib-devel python-devel epel-release openssh-server socat ipvsadm conntrack ntpdate telnet rsync

Install the docker service

Install docker-ce

[root@kaivimaster1 ~]# yum install docker-ce-20.10.6 docker-ce-cli-20.10.6 containerd.io -y

[root@kaivimaster1 ~]# systemctl start docker && systemctl enable docker && systemctl status docker

[root@kaivimaster2 ~]# yum install docker-ce-20.10.6 docker-ce-cli-20.10.6 containerd.io -y

[root@kaivimaster2 ~]# systemctl start docker && systemctl enable docker && systemctl status docker

[root@kaivinode1 ~]# yum install docker-ce-20.10.6 docker-ce-cli-20.10.6 containerd.io -y

[root@kaivinode1 ~]# systemctl start docker && systemctl enable docker && systemctl status docker

Configure docker registry mirrors and the cgroup driver

[root@kaivimaster1 ~]# vim /etc/docker/daemon.json

{

"registry-mirrors":["https://rsbud4vc.mirror.aliyuncs.com","https://registry.docker-cn.com","https://docker.mirrors.ustc.edu.cn","https://dockerhub.azk8s.cn","http://hub-mirror.c.163.com","http://qtid6917.mirror.aliyuncs.com", "https://rncxm540.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"]

}

# This changes docker's cgroup driver from the default cgroupfs to systemd. docker and kubelet must use the same cgroup driver.

[root@kaivimaster1 ~]# systemctl daemon-reload && systemctl restart docker

[root@kaivimaster1 ~]# systemctl status docker

Repeat the same steps on kaivimaster2 and kaivinode1.

Install the packages needed to initialize k8s

[root@kaivimaster1 ~]# yum install -y kubelet-1.20.6 kubeadm-1.20.6 kubectl-1.20.6

[root@kaivimaster1 ~]# systemctl enable kubelet && systemctl start kubelet

[root@kaivimaster1]# systemctl status kubelet

# The output above shows that kubelet is not in the running state. This is normal and can be ignored; kubelet becomes healthy once the k8s components come up.

[root@kaivimaster2 ~]# yum install -y kubelet-1.20.6 kubeadm-1.20.6 kubectl-1.20.6

[root@kaivimaster2~]# systemctl enable kubelet && systemctl start kubelet

[root@kaivimaster2]# systemctl status kubelet

[root@kaivinode1 ~]# yum install -y kubelet-1.20.6 kubeadm-1.20.6 kubectl-1.20.6

[root@kaivinode1 ~]# systemctl enable kubelet && systemctl start kubelet

[root@kaivinode1]# systemctl status kubelet

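Note: what each package does

kubeadm: a tool used to initialize the k8s cluster

kubelet: installed on every node in the cluster; it starts Pods

kubectl: used to deploy and manage applications, inspect resources, and create, delete, and update components

High availability for the k8s apiserver with keepalived + nginx

Configure the epel repo

Upload epel.repo to the /etc/yum.repos.d directory on kaivimaster1; keepalived and nginx are installed from this repo.

# Copy epel.repo to the remote hosts kaivimaster2 and kaivinode1

[root@kaivimaster1 ~]# scp /etc/yum.repos.d/epel.repo kaivimaster2:/etc/yum.repos.d/

[root@kaivimaster1 ~]# scp /etc/yum.repos.d/epel.repo kaivinode1:/etc/yum.repos.d/

Install nginx on the primary and the backup

Install nginx and keepalived on kaivimaster1 and kaivimaster2:

[root@kaivimaster1 ~]# yum install nginx keepalived -y

[root@kaivimaster2 ~]# yum install nginx keepalived -y

Edit the nginx configuration file. It is identical on the primary and the backup.

[root@kaivimaster1 ~]# vim /etc/nginx/nginx.conf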

user nginx;

worker_processes auto;

error_log /var/log/nginx/error.log;

pid /run/nginx.pid;

include /usr/share/nginx/modules/*.conf;

events {

worker_connections 1024;

}

# Layer-4 load balancing for the apiserver on the two masters

stream {

log_format main '$remote_addr $upstream_addr - [$time_local] $status $upstream_bytes_sent';

access_log /var/log/nginx/k8s-access.log main;

upstream k8s-apiserver {

server 192.168.40.180:6443; # Master1 APISERVER IP:PORT

server 192.168.40.181:6443; # Master2 APISERVER IP:PORT

}

server {

listen 16443; # nginx shares the host with a master node, so this port must not be 6443 or it will conflict

proxy_pass k8s-apiserver;

}

}

http {

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

access_log /var/log/nginx/access.log main;

sendfile on;

tcp_nopush on;

tcp_nodelay on;

keepalive_timeout 65;

types_hash_max_size 2048;

include /etc/nginx/mime.types;

default_type application/octet-stream;

server {

listen 80 default_server;

server_name _;

location / {

}

}

}

[root@kaivimaster2 ~]# vim /etc/nginx/nginx.conf

user nginx;

worker_processes auto;

error_log /var/log/nginx/error.log;

pid /run/nginx.pid;

include /usr/share/nginx/modules/*.conf;

events {

worker_connections 1024;

}

# Layer-4 load balancing for the apiserver on the two masters

stream {

log_format main '$remote_addr $upstream_addr - [$time_local] $status $upstream_bytes_sent';

access_log /var/log/nginx/k8s-access.log main;

upstream k8s-apiserver {

server 192.168.40.180:6443; # Master1 APISERVER IP:PORT

server 192.168.40.181:6443; # Master2 APISERVER IP:PORT

}

server {

listen 16443; # nginx shares the host with a master node, so this port must not be 6443 or it will conflict

proxy_pass k8s-apiserver;

}

}

http {

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

access_log /var/log/nginx/access.log main;

sendfile on;

tcp_nopush on;

tcp_nodelay on;

keepalive_timeout 65;

types_hash_max_size 2048;

include /etc/nginx/mime.types;

default_type application/octet-stream;

server {

listen 80 default_server;

server_name _;

location / {

}

}

}

keepalived configuration

Primary keepalived

[root@kaivimaster1 ~]# vim /etc/keepalived/keepalived.conf

global_defs {

notification_email {

[email protected]

[email protected]

[email protected]

}

notification_email_from [email protected]

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id NGINX_MASTER

}

vrrp_script check_nginx {

script "/etc/keepalived/check_nginx.sh"

}

vrrp_instance VI_1 {

state MASTER

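interface ens33 # change to your actual NIC name

virtual_router_id 51 # VRRP router ID; unique per VRRP instance, and shared by the primary and backup of that instance

priority 100 # priority; set 90 on the backup server

advert_int 1 # VRRP advertisement (heartbeat) interval; default is 1 second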

authentication {

auth_type PASS

auth_pass 1111

}

# Virtual IP

virtual_ipaddress {

192.168.40.199/24

}

track_script {

check_nginx

}

}

# vrrp_script: the script that checks whether nginx is working (failover is decided from the nginx state)

# virtual_ipaddress: the virtual IP (VIP)

[root@kaivimaster1 ~]# vim /etc/keepalived/check_nginx.sh

#!/bin/bash

count=$(ps -ef |grep nginx | grep sbin | egrep -cv "grep|$$")

if [ "$count" -eq 0 ];then

systemctl stop keepalived

fi

[root@kaivimaster1 ~]# chmod +x /etc/keepalived/check_nginx.sh # make the script executable

Backup keepalived

[root@kaivimaster2 ~]# vim /etc/keepalived/keepalived.conf

global_defs {

notification_email {

[email protected]

[email protected]

[email protected]

}

notification_email_from [email protected]

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id NGINX_BACKUP

}

vrrp_script check_nginx {

script "/etc/keepalived/check_nginx.sh"

}

vrrp_instance VI_1 {

state BACKUP

interface ens33

virtual_router_id 51 # VRRP router ID; must match the value on the primary

priority 90

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.40.199/24

}

track_script {

check_nginx

}

}

[root@kaivimaster2 ~]# vim /etc/keepalived/check_nginx.sh

#!/bin/bash

count=$(ps -ef |grep nginx | grep sbin | egrep -cv "grep|$$")

if [ "$count" -eq 0 ];then

systemctl stop keepalived

fi

[root@kaivimaster2 ~]# chmod +x /etc/keepalived/check_nginx.sh

# Note: keepalived decides whether to fail over based on the script's exit status (0 means healthy, non-zero means unhealthy).
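The check_nginx.sh shown above stops keepalived when nginx is gone, rather than reporting the failure through its exit status. For comparison, an exit-status style check could look like the sketch below. It is an alternative illustration, not the script used in this article: the function name nginx_alive is hypothetical, the pid file path is the one set by the pid directive in the nginx.conf above, and the check is assumed to run as root so that kill -0 is permitted.

```shell
#!/bin/bash
# Hypothetical alternative check: return 0 when the nginx master process
# recorded in the pid file is alive, non-zero otherwise.
# Usage: nginx_alive [pid_file]   (defaults to /run/nginx.pid)
nginx_alive() {
    local pidfile="${1:-/run/nginx.pid}"
    local pid
    [ -r "$pidfile" ] || return 1
    pid=$(head -n 1 "$pidfile")
    # kill -0 sends no signal; it only tests that the process exists
    [ -n "$pid" ] && kill -0 "$pid" 2>/dev/null
}
```

A check script built on this would end with `nginx_alive || exit 1`, leaving keepalived running so that it can react to the non-zero status itself.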

Start the services

[root@kaivimaster1 ~]# systemctl daemon-reload

[root@kaivimaster1 ~]# systemctl start nginx

[root@kaivimaster1 ~]# systemctl start keepalived

[root@kaivimaster1 ~]# systemctl enable nginx keepalived

[root@kaivimaster1]# systemctl status keepalived

[root@kaivimaster2 ~]# systemctl daemon-reload

[root@kaivimaster2 ~]# systemctl start nginx

[root@kaivimaster2 ~]# systemctl start keepalived

[root@kaivimaster2 ~]# systemctl enable nginx keepalived

[root@kaivimaster2]# systemctl status keepalived

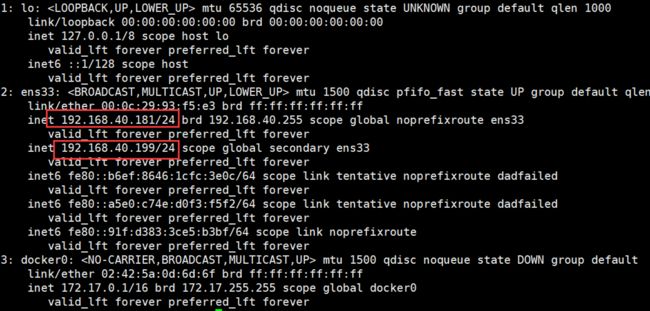

Check that the VIP is bound

[root@kaivimaster1 ~]# ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:0c:29:79:9e:36 brd ff:ff:ff:ff:ff:ff

inet 192.168.40.180/24 brd 192.168.40.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.40.199/24 scope global secondary ens33

valid_lft forever preferred_lft forever

inet6 fe80::b6ef:8646:1cfc:3e0c/64 scope link noprefixroute

valid_lft forever preferred_lft forever

Test keepalived

Stop nginx on kaivimaster1. The VIP moves to kaivimaster2.

[root@kaivimaster1 ~]# service nginx stop

[root@kaivimaster2 ~]# ip addr

# Start nginx and keepalived on kaivimaster1 again, and the VIP moves back

[root@kaivimaster1 ~]# systemctl daemon-reload

[root@kaivimaster1 ~]# systemctl start nginx

[root@kaivimaster1 ~]# systemctl start keepalived

[root@kaivimaster1]# ip addr
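Rather than reading the ip addr output by eye, the check can be scripted. A minimal sketch follows; the function name has_vip is hypothetical, and it simply searches the ip addr output on stdin for the address.

```shell
#!/bin/bash
# Hypothetical helper: read `ip addr` output on stdin and succeed if the
# given IPv4 address is bound to an interface.
# Usage: ip addr | has_vip 192.168.40.199
has_vip() {
    local vip="$1"
    # -F matches literally; the trailing slash prevents matching a longer
    # address that merely starts with the same digits
    grep -qF "inet ${vip}/"
}
```

For example, `ip addr show ens33 | has_vip 192.168.40.199 && echo "VIP is on this host"` can be run on both masters before and after stopping nginx to watch the address move.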

Initialize the k8s cluster with kubeadm

Create the kubeadm-config.yaml file on kaivimaster1:

[root@kaivimaster1 ~]# cd /root/

[root@kaivimaster1 ~]# vim kubeadm-config.yaml

apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: v1.20.6
controlPlaneEndpoint: 192.168.40.199:16443
imageRepository: registry.aliyuncs.com/google_containers
apiServer:
  certSANs:
  - 192.168.40.180
  - 192.168.40.181
  - 192.168.40.182
  - 192.168.40.199
networking:
  podSubnet: 10.244.0.0/16
  serviceSubnet: 10.10.0.0/16
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: ipvs

使用kubeadm初始化k8s集群

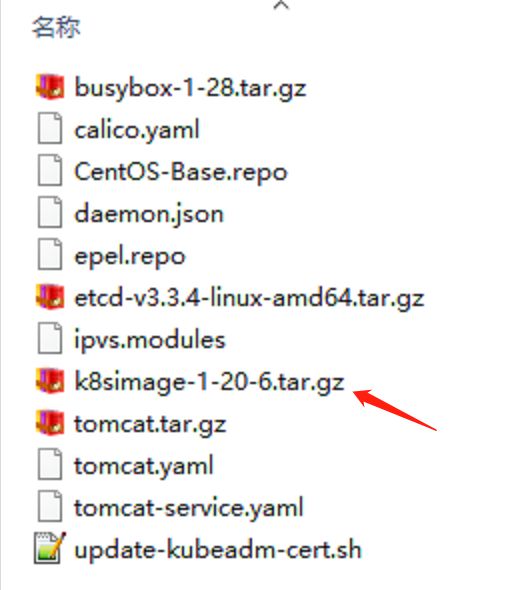

#把初始化k8s集群需要的离线镜像包上传到kaivimaster1、kaivimaster2、kaivinode1机器上,手动解压:

[root@kaivimaster1 ~]# docker load -i k8simage-1-20-6.tar.gz

[root@kaivimaster2 ~]# docker load -i k8simage-1-20-6.tar.gz

[root@kaivinode1 ~]# docker load -i k8simage-1-20-6.tar.gz

[root@kaivimaster1]# kubeadm init --config kubeadm-config.yaml

注:–image-repository registry.aliyuncs.com/google_containers:手动指定仓库地址为registry.aliyuncs.com/google_containers。kubeadm默认从k8s.grc.io拉取镜像,但是k8s.gcr.io访问不到,所以需要指定从registry.aliyuncs.com/google_containers仓库拉取镜像。

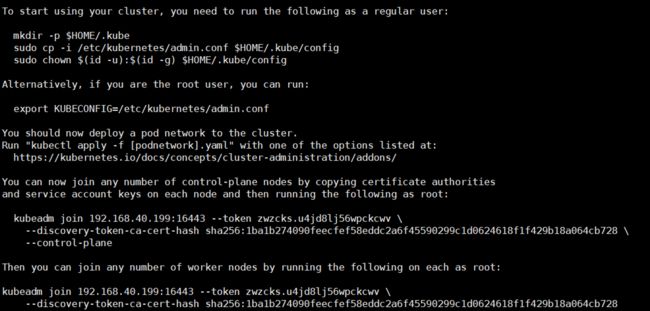

显示如下,说明安装完成:

kubeadm join 192.168.40.199:16443 --token zwzcks.u4jd8lj56wpckcwv --discovery-token-ca-cert-hash sha256:1ba1b274090feecfef58eddc2a6f45590299c1d0624618f1f429b18a064cb728 --control-plane

#上面命令是把master节点加入集群,需要保存下来,每个人的都不一样kubeadm join 192.168.40.199:16443 --token zwzcks.u4jd8lj56wpckcwv --discovery-token-ca-cert-hash sha256:1ba1b274090feecfef58eddc2a6f45590299c1d0624618f1f429b18a064cb728

#上面命令是把node节点加入集群,需要保存下来,每个人的都不一样

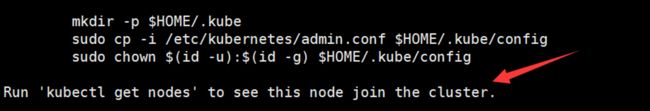

# Set up the kubectl config file. This authorizes kubectl, so that it can use the admin certificate to manage the k8s cluster.

[root@kaivimaster1 ~]# mkdir -p $HOME/.kube

[root@kaivimaster1 ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@kaivimaster1 ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

[root@kaivimaster1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

kaivimaster1 NotReady control-plane,master 60s v1.20.6

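The node is still NotReady because no network plugin has been installed yet.

Scale out the k8s cluster: add a master node

# Copy the certificates from kaivimaster1 to kaivimaster2

Create the certificate directories on kaivimaster2:

[root@kaivimaster2 ~]# cd /root && mkdir -p /etc/kubernetes/pki/etcd && mkdir -p ~/.kube/

# Copy the certificates from kaivimaster1 to kaivimaster2: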

[root@kaivimaster1 ~]# scp /etc/kubernetes/pki/ca.crt kaivimaster2:/etc/kubernetes/pki/

[root@kaivimaster1 ~]# scp /etc/kubernetes/pki/ca.key kaivimaster2:/etc/kubernetes/pki/

[root@kaivimaster1 ~]# scp /etc/kubernetes/pki/sa.key kaivimaster2:/etc/kubernetes/pki/

[root@kaivimaster1 ~]# scp /etc/kubernetes/pki/sa.pub kaivimaster2:/etc/kubernetes/pki/

[root@kaivimaster1 ~]# scp /etc/kubernetes/pki/front-proxy-ca.crt kaivimaster2:/etc/kubernetes/pki/

[root@kaivimaster1 ~]# scp /etc/kubernetes/pki/front-proxy-ca.key kaivimaster2:/etc/kubernetes/pki/

[root@kaivimaster1 ~]# scp /etc/kubernetes/pki/etcd/ca.crt kaivimaster2:/etc/kubernetes/pki/etcd/

[root@kaivimaster1 ~]# scp /etc/kubernetes/pki/etcd/ca.key kaivimaster2:/etc/kubernetes/pki/etcd/
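The eight scp commands above follow one pattern, so they can be folded into a loop. The sketch below is one way to do that; the function name copy_control_plane_certs and the RUN switch are hypothetical. By default it only prints the commands, and it executes them when RUN=1 is set.

```shell
#!/bin/bash
# Hypothetical helper: print (or, with RUN=1, execute) the scp commands that
# copy the shared control-plane certificates to another master.
# Usage: copy_control_plane_certs <host>
copy_control_plane_certs() {
    local host="$1" pki=/etc/kubernetes/pki f dest
    for f in ca.crt ca.key sa.key sa.pub front-proxy-ca.crt front-proxy-ca.key etcd/ca.crt etcd/ca.key; do
        # Files under etcd/ go to the etcd subdirectory on the target
        dest="$pki"
        case "$f" in
            */*) dest="$pki/${f%/*}" ;;
        esac
        if [ "${RUN:-0}" = "1" ]; then
            scp "$pki/$f" "$host:$dest/"
        else
            echo scp "$pki/$f" "$host:$dest/"
        fi
    done
}
```

Running `copy_control_plane_certs kaivimaster2` shows the commands for review, and `RUN=1 copy_control_plane_certs kaivimaster2` performs the copy.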

# After the certificates are copied, run the join command on kaivimaster2 (use the command from your own cluster) to add kaivimaster2 to the cluster as a control-plane node.

Print the join command on kaivimaster1:

[root@kaivimaster1 ~]# kubeadm token create --print-join-command

kubeadm join 192.168.40.199:16443 --token zwzcks.u4jd8lj56wpckcwv --discovery-token-ca-cert-hash sha256:1ba1b274090feecfef58eddc2a6f45590299c1d0624618f1f429b18a064cb728

Run that command on kaivimaster2 with --control-plane appended. This flag is essential; without it the node joins as a worker.

[root@kaivimaster2 ~]# kubeadm join 192.168.40.199:16443 --token zwzcks.u4jd8lj56wpckcwv \
--discovery-token-ca-cert-hash sha256:1ba1b274090feecfef58eddc2a6f45590299c1d0624618f1f429b18a064cb728 \
--control-plane

# When the join output reports success, kaivimaster2 has joined the cluster.

Then run the following three commands on kaivimaster2:

[root@kaivimaster2 ~]# mkdir -p $HOME/.kube

[root@kaivimaster2 ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@kaivimaster2 ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

Check the cluster state on kaivimaster1:

[root@kaivimaster1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

kaivimaster1 NotReady control-plane,master 49m v1.20.6

kaivimaster2 NotReady <none> 39s v1.20.6

上面可以看到kaivimaster2已经加入到集群了

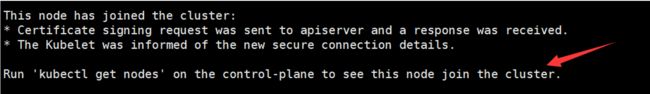

扩容k8s集群-添加node节点

在kaivimaster1上查看加入节点的命令:

[root@kaivimaster1 ~]# kubeadm token create --print-join-command

# It prints:

kubeadm join 192.168.40.199:16443 --token y23a82.hurmcpzedblv34q8 --discovery-token-ca-cert-hash sha256:1ba1b274090feecfef58eddc2a6f45590299c1d0624618f1f429b18a064cb728

Add kaivinode1 to the k8s cluster by running that command on it:

[root@kaivinode1 ~]# kubeadm join 192.168.40.199:16443 --token y23a82.hurmcpzedblv34q8 --discovery-token-ca-cert-hash sha256:1ba1b274090feecfef58eddc2a6f45590299c1d0624618f1f429b18a064cb728

# When the join output reports success, kaivinode1 has joined the cluster as a worker node.

# Check the node status on kaivimaster1:

[root@kaivimaster1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

kaivimaster1 NotReady control-plane,master 53m v1.20.6

kaivimaster2 NotReady control-plane,master 5m13s v1.20.6

kaivinode1 NotReady <none> 59s v1.20.6

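# The ROLES column for kaivinode1 is empty, which means it is a worker node.

# To show worker in the ROLES column for kaivinode1, label the node:

[root@kaivimaster1 ~]# kubectl label node kaivinode1 node-role.kubernetes.io/worker=worker

Note: every node above is NotReady because no network plugin has been installed.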

[root@kaivimaster1 ~]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-7f89b7bc75-lh28j 0/1 Pending 0 18h

coredns-7f89b7bc75-p7nhj 0/1 Pending 0 18h

etcd-kaivimaster1 1/1 Running 0 18h

etcd-kaivimaster2 1/1 Running 0 15m

kube-apiserver-kaivimaster1 1/1 Running 0 18h

kube-apiserver-kaivimaster2 1/1 Running 0 15m

kube-controller-manager-kaivimaster1 1/1 Running 1 18h

kube-controller-manager-kaivimaster2 1/1 Running 0 15m

kube-proxy-n26mf 1/1 Running 0 4m33s

kube-proxy-sddbv 1/1 Running 0 18h

kube-proxy-sgqm2 1/1 Running 0 15m

kube-scheduler-kaivimaster1 1/1 Running 1 18h

kube-scheduler-kaivimaster2 1/1 Running 0 15m

The coredns pods (for example coredns-7f89b7bc75-lh28j) are Pending because no network plugin is installed yet. Once the network plugin is installed below, coredns becomes Running.

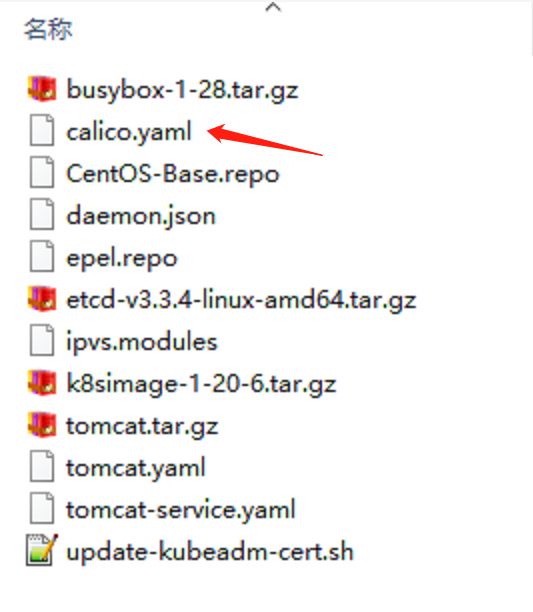

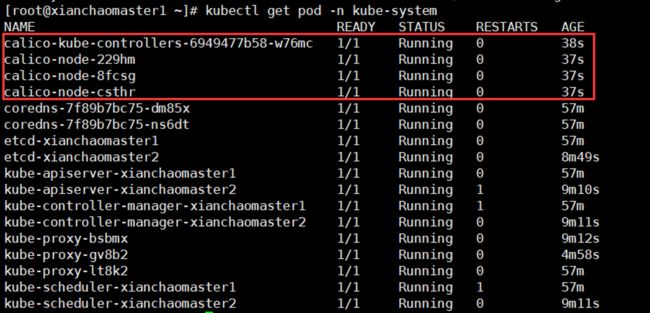

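Install the Kubernetes network component: Calico

Upload calico.yaml to kaivimaster1 and install the Calico network plugin from the yaml file (Calico also supports network policies).

[root@kaivimaster1 ~]# kubectl apply -f calico.yaml

Note: the manifest can be downloaded from https://docs.projectcalico.org/manifests/calico.yaml

[root@kaivimaster1 ~]# kubectl get pod -n kube-system

The coredns pods are now Running and healthy.

Check the cluster state again.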

[root@kaivimaster1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

kaivimaster1 Ready control-plane,master 58m v1.20.6

kaivimaster2 Ready control-plane,master 10m v1.20.6

kaivinode1 Ready <none> 5m46s v1.20.6

# STATUS is Ready, so the k8s cluster is running normally

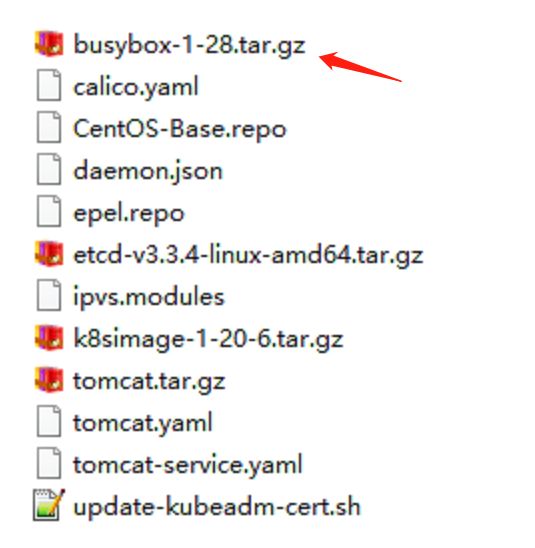

Test that a pod created in k8s can reach the network

# Upload busybox-1-28.tar.gz to the kaivinode1 node and load it manually

[root@kaivinode1 ~]# docker load -i busybox-1-28.tar.gz

[root@kaivimaster1 ~]# kubectl run busybox --image busybox:1.28 --restart=Never --rm -it busybox -- sh

/ # ping www.baidu.com

PING www.baidu.com (39.156.66.18): 56 data bytes

64 bytes from 39.156.66.18: seq=0 ttl=127 time=39.3 ms

# The ping succeeds, so the pod can reach the network and the Calico network plugin is installed correctly

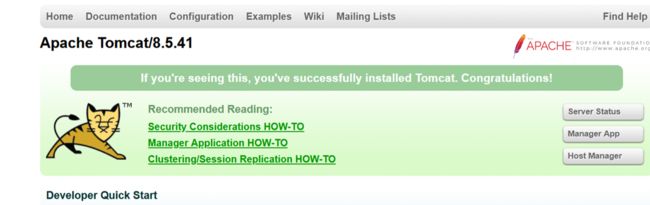

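Test deploying a tomcat service in the k8s cluster

# Upload tomcat.tar.gz to kaivinode1 and load it manually; upload tomcat.yaml and tomcat-service.yaml to kaivimaster1, where kubectl apply is run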

[root@kaivinode1 ~]# docker load -i tomcat.tar.gz

[root@kaivimaster1 ~]# kubectl apply -f tomcat.yaml

[root@kaivimaster1 ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

demo-pod 1/1 Running 0 10s

[root@kaivimaster1 ~]# kubectl apply -f tomcat-service.yaml

[root@kaivimaster1 ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.255.0.1 <none> 443/TCP 158m

tomcat NodePort 10.255.227.179 <none> 8080:30080/TCP 19m

Open the IP of the kaivinode1 node on port 30080 in a browser to reach the tomcat page.

Test that coredns works

[root@kaivimaster1 ~]# kubectl run busybox --image busybox:1.28 --restart=Never --rm -it busybox -- sh

If you don't see a command prompt, try pressing enter.

/ # nslookup kubernetes.default.svc.cluster.local

Server: 10.10.0.10

Address 1: 10.10.0.10 kube-dns.kube-system.svc.cluster.local

Name: kubernetes.default.svc.cluster.local

Address 1: 10.10.0.1 kubernetes.default.svc.cluster.local

/ # nslookup tomcat.default.svc.cluster.local

Server: 10.10.0.10

Address 1: 10.10.0.10 kube-dns.kube-system.svc.cluster.local

Name: tomcat.default.svc.cluster.local

Address 1: 10.10.13.88 tomcat.default.svc.cluster.local

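10.10.0.10 is the clusterIP of coreDNS, and both names resolve, so coreDNS is configured correctly.

Names of Services inside the cluster are resolved by coreDNS.

10.10.13.88 is the service IP of the tomcat Service that was created.

# Note:

Use busybox 1.28 specifically, not the latest version. With the latest version, nslookup fails to resolve the DNS names and IPs.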