Minikube: A Lightweight Local K8S Environment with One-Click Setup (the Most Complete Guide)

This article is long and continuously updated, so consider bookmarking it and reading it at your own pace. It is part of the 疯狂创客圈 (Crazy Maker Circle) index on 博客园 (cnblogs).

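A handy tool for learning cloud native and microservices

A local, lightweight K8S environment that starts with one command is a very convenient way to learn cloud native and microservices.

尼恩 (Nien) will prepare a ready-to-use virtual machine box file for everyone, which saves the setup hassle.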

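Deploying a minimal K8s environment with Minikube

minikube background

Anyone who has built k8s by hand knows the pain: the authentication and configuration steps are complex, tedious, and error-prone.

minikube was created to solve this problem. It is written in Go and makes it easy to run Kubernetes locally: it quickly brings up a usable single-node k8s cluster on one machine, for example inside a virtual machine on your laptop. That makes it well suited to testing, local development, and trying out Kubernetes features, and most existing online k8s lab environments are also based on minikube.

So you can install minikube in a local lab environment to get started with Kubernetes.

What is minikube

minikube is local Kubernetes.

Its advantages: it starts quickly and uses relatively few machine resources, which makes it a good fit for newcomers and for development.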

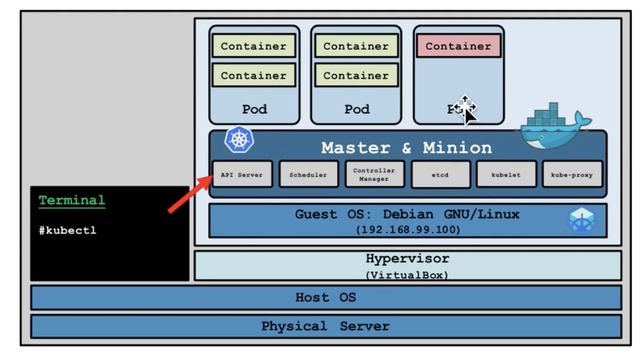

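1. Kubernetes cluster architecture

A complete Kubernetes cluster normally includes at least a master node and worker (node) nodes.

The figure below shows a conventional k8s cluster architecture. The master node is usually dedicated and coordinates and schedules the other nodes, while containers actually run on the worker nodes; kubectl sits on the master node.

2. Minikube architecture

The figure below shows the Minikube architecture. The master node and the worker node are merged into one, and the whole cluster is managed through kubectl on the host, which saves resources.

It supports most Kubernetes features, including: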

- DNS

- NodePorts

- ConfigMaps and Secrets

- Dashboards

- Container Runtime: Docker, and rkt

- Enabling CNI (Container Network Interface)

- Ingress

- …

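Minikube supports three operating systems: Windows, macOS, and Linux. Depending on the platform, it downloads the matching virtual machine image and installs k8s inside it.

Preparing to install minikube

Installing on a Linux host is recommended; I use CentOS locally.

Minimum host requirements for minikube: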

- 2 CPUs or more

- 2GB of free memory

- 20GB of free disk space

- Internet connection

- Container or virtual machine manager, such as: Docker, Hyperkit, Hyper-V, KVM, Parallels, Podman, VirtualBox, or VMware

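For the container manager, I installed Docker locally.

Installing Docker

For the installation steps, see the following document:

Docker 入门到精通 (图解+秒懂+史上最全) - 疯狂创客圈 - 博客园 (cnblogs.com)

Note: access to the Docker image registry from mainland China is very slow, so it is recommended to configure a mirror such as the Aliyun accelerator by editing the daemon configuration file /etc/docker/daemon.json:

$ cd /etc/docker

# Write the following configuration to daemon.json (tee overwrites the existing file):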

$ sudo tee /etc/docker/daemon.json <<-'EOF'

{

"registry-mirrors": [

"https://bjtzu1jb.mirror.aliyuncs.com",

"http://f1361db2.m.daocloud.io",

"https://hub-mirror.c.163.com",

"https://docker.mirrors.ustc.edu.cn",

"https://reg-mirror.qiniu.com",

"https://dockerhub.azk8s.cn",

"https://registry.docker-cn.com"

]

}

EOF

# Restart docker

sudo systemctl daemon-reload

sudo systemctl restart docker

Version requirements

minikube's startup output recommends Docker 18.09.0 or newer:

For improved Docker performance, Upgrade Docker to a newer version (Minimum recommended version is 18.09.0)

! docker is currently using the devicemapper storage driver, consider switching to overlay2 for better performance

* Using image repository registry.cn-hangzhou.aliyuncs.com/google_containers

* Starting control plane node minikube in cluster minikube

* Pulling base image ...

* Creating docker container (CPUs=2, Memory=2200MB) ...

* Preparing Kubernetes v1.23.1 on Docker 20.10.8 ...

Docker version:

[root@cdh1 ~]# docker version

Client: Docker Engine - Community

Version: 20.10.23

API version: 1.41

Go version: go1.18.10

Git commit: 7155243

Built: Thu Jan 19 17:36:21 2023

OS/Arch: linux/amd64

Context: default

Experimental: true

Server: Docker Engine - Community

Engine:

Version: 20.10.23

API version: 1.41 (minimum version 1.12)

Go version: go1.18.10

Git commit: 6051f14

Built: Thu Jan 19 17:34:26 2023

OS/Arch: linux/amd64

Experimental: false

containerd:

Version: 1.6.15

GitCommit: 5b842e528e99d4d4c1686467debf2bd4b88ecd86

runc:

Version: 1.1.4

GitCommit: v1.1.4-0-g5fd4c4d

docker-init:

Version: 0.19.0

GitCommit: de40ad0

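Disabling swap, SELinux, and firewalld on the virtual machine

# Temporarily disable swap

swapoff -a

# Temporarily put SELinux into permissive mode; see below for the permanent setting

setenforce 0

# Stop and disable the firewall

systemctl stop firewalld

systemctl disable firewalld

To disable swap permanently, comment out the swap line in /etc/fstab and reboot.

To make the SELinux change permanent, edit /etc/sysconfig/selinux, set SELINUX=permissive, and reboot.

These are routine operations, so they are not covered in detail here.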
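The two permanent changes can also be scripted. The following is only a sketch: the function names `comment_out_swap` and `set_selinux_permissive` are hypothetical, and it assumes a conventional fstab layout in which swap entries contain `swap` as a whitespace-delimited field. Both functions take the file path as an argument so they can be tried on a copy first.

```shell
#!/bin/sh
# Hypothetical helper: comment out every active swap entry in an fstab-style file.
# A backup of the original is kept next to it with a .bak suffix.
comment_out_swap() {
  # Lines that already start with '#' are skipped; any other line that has
  # "swap" as a whitespace-delimited field gets a leading '#'.
  sed -E -i.bak '/^[[:space:]]*#/! s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' "$1"
}

# Hypothetical helper: set SELINUX=permissive in an SELinux config file.
# The pattern requires '=' right after SELINUX, so SELINUXTYPE is untouched.
set_selinux_permissive() {
  sed -i.bak 's/^SELINUX=.*/SELINUX=permissive/' "$1"
}

# Example (as root), followed by a reboot:
#   comment_out_swap /etc/fstab
#   set_selinux_permissive /etc/sysconfig/selinux
```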

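Editing the virtual machine's hosts file

As with a regular k8s installation, the hostname must be resolvable:

echo "127.0.0.1 test1" >> /etc/hosts

Here test1 is the hostname of the virtual machine.

Without this entry, starting minikube reports the following warnings:

[WARNING Hostname]: hostname "test1" could not be reached

[WARNING Hostname]: hostname "test1": lookup test1 on 172.18.3.4:53: no such host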
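Running the echo command more than once appends duplicate lines to /etc/hosts. A small guard avoids that; this is a sketch only, and the function name `ensure_hosts_entry` is hypothetical. It takes the hosts file path as its first argument so it can be tested on a scratch file.

```shell
#!/bin/sh
# Hypothetical helper: append "127.0.0.1 <name>" only if the file has no
# entry mentioning that hostname yet.
ensure_hosts_entry() {
  hosts_file="$1"
  name="$2"
  # -q: quiet, -w: match the hostname as a whole word.
  if ! grep -qw -- "$name" "$hosts_file"; then
    echo "127.0.0.1 $name" >> "$hosts_file"
  fi
}

# Example (as root):
#   ensure_hosts_entry /etc/hosts "$(hostname)"
```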

Logging in to Aliyun

Register an Aliyun account and enable the Container Registry service.

docker login --username=<your-account> registry.cn-hangzhou.aliyuncs.com

docker tag [ImageId] registry.cn-hangzhou.aliyuncs.com/kaigejava/my_kaigejava:[image-version]

docker push registry.cn-hangzhou.aliyuncs.com/kaigejava/my_kaigejava:[image-version]

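Creating a user and adding it to the docker group

Create a minikube user:

useradd minikube

Create the docker group (if it does not already exist):

groupadd docker

Add minikube to the docker group:

usermod -aG docker minikube

Add the current user (root here) to the docker group:

usermod -aG docker $USER

Refresh the group membership:

newgrp docker

Restart docker: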

sudo systemctl daemon-reload

sudo systemctl restart docker

Giving the minikube user root privileges

1. Add the user. First add an ordinary user named minikube with the adduser command, then set its password:

adduser minikube

passwd minikube

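2. Grant root privileges (three methods; the third is recommended)

Method 1: Edit /etc/sudoers, find the following lines, and remove the leading comment marker (#) from the %wheel line if it is commented out:

## Allows people in group wheel to run all commands

%wheel ALL=(ALL) ALL

Then add the user to the wheel group:

# usermod -aG wheel minikube

After this change, log in as minikube and run sudo su - to get a root shell.

Method 2: Edit /etc/sudoers, find the following lines, and add a line for minikube below the root line:

## Allow root to run any commands anywhere

root ALL=(ALL) ALL

minikube ALL=(ALL) ALL

After this change, log in as minikube and run sudo su - to get a root shell.

su minikube

sudo su -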

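Method 3: Edit /etc/passwd and change the user's ID to 0, which is root's user ID.

cat /etc/passwd

Each line has 7 fields:

(1) Username (user1): the login name of the created user, 1 to 12 characters long.

(2) Password (x): indicates that the encrypted password is stored in /etc/shadow. If this field is "*", the account is locked and the system does not allow its holder to log in.

(3) User ID (1001): the user's ID number; every user must have a unique ID. UID 0 is reserved for root, UIDs 1 to 99 are reserved for system users, and UIDs 100 to 999 are reserved for system accounts and groups.

(4) Group ID (100): the ID of the group that user1 belongs to. Every group must have a unique GID; group information is stored in /etc/group.

(5) User info (User 1): a description field that can hold information about the user.

(6) Home directory (/usr/testUser): the user's home directory.

(7) Shell (/bin/bash): the shell the user runs.

Find the following line and change the user ID to 0:

minikube:x:1001:1001::/home/minikube:/bin/bash

After the change:

minikube:x:0:1001::/home/minikube:/bin/bash

Save the file. After logging in as minikube, the session has root privileges directly.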

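Installing minikube

Official minikube site: minikube start | minikube (k8s.io)

Installing and starting minikube

Downloading minikube from the official site is very slow from mainland China, so installing from the Aliyun mirror is recommended.

Install minikube directly

# Aliyun mirror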

curl -Lo minikube https://kubernetes.oss-cn-hangzhou.aliyuncs.com/minikube/releases/v1.23.1/minikube-linux-amd64 && chmod +x minikube && sudo mv minikube /usr/local/bin/

# Official binary download

curl -LO https://storage.googleapis.com/minikube/releases/latest/minikube-linux-amd64

sudo install minikube-linux-amd64 /usr/local/bin/minikube

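The binary is about 65 MB.

The download was too slow on Linux, so I downloaded it with a browser and placed it in the virtual machine's shared directory,

then copied it to /usr/local/bin: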

[root@cdh1 ~]# su kube

[root@cdh1 root]# cp /vagrant/minikube-linux-amd64 /usr/local/bin/

[root@cdh1 root]# cp /vagrant/minikube-linux-amd64 /usr/local/bin

[root@cdh1 root]# chmod +x /usr/local/bin/minikube-linux-amd64

[root@cdh1 root]# mv /usr/local/bin/minikube-linux-amd64 /usr/local/bin/minikube

Starting minikube

minikube start

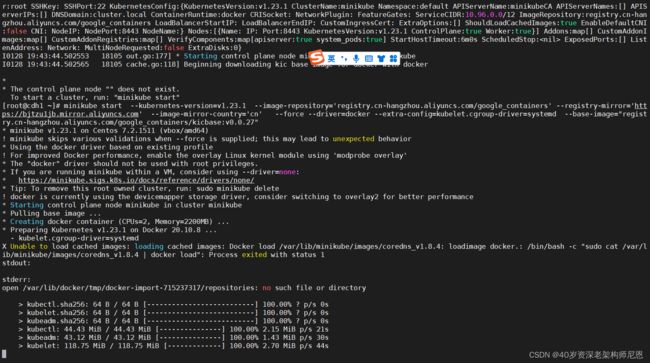

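Running minikube start as root fails with the error: The "docker" driver should not be used with root privileges.

In a local test environment this is not a concern; run the following command to force the docker driver:

minikube start --force --driver=docker

# Or start using the Aliyun mirrors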

minikube start --force --driver=docker --image-mirror-country cn --iso-url=https://kubernetes.oss-cn-hangzhou.aliyuncs.com/minikube/iso/minikube-v1.5.0.iso --registry-mirror=https://xxxxxx.mirror.aliyuncs.com

minikube start --force --driver=docker --image-mirror-country='cn' --iso-url=https://kubernetes.oss-cn-hangzhou.aliyuncs.com/minikube/iso/minikube-v1.5.0.iso --registry-mirror=https://xxxxxx.mirror.aliyuncs.com

This still failed: the docker images could not be downloaded.

Blood and tears: the list of commands I tried

minikube start --image-repository=registry.cn-hangzhou.aliyuncs.com/google_containers --registry-mirror=https://ovfftd6p.mirror.aliyuncs.com --image-mirror-country='cn' --force --driver=docker

sudo minikube start --kubernetes-version=v1.23.1 --image-mirror-country='cn' --image-repository='registry.cn-hangzhou.aliyuncs.com/google_containers'

minikube start --kubernetes-version=v1.23.1 --image-repository='registry.cn-hangzhou.aliyuncs.com/google_containers' --registry-mirror='https://ovfftd6p.mirror.aliyuncs.com' --image-mirror-country='cn' --force --driver=docker

minikube start --kubernetes-version=v1.23.1 --image-repository='registry.cn-hangzhou.aliyuncs.com/google_containers' --image-mirror-country='cn' --force --driver=docker

minikube start --kubernetes-version=v1.23.1 --image-repository='registry.cn-hangzhou.aliyuncs.com/google_containers' --image-mirror-country='cn' --force --driver=docker

minikube start --kubernetes-version=v1.23.1 --image-repository='registry.cn-hangzhou.aliyuncs.com/google_containers' --registry-mirror='https://ovfftd6p.mirror.aliyuncs.com' --image-mirror-country='cn' --force --driver=docker

minikube start --kubernetes-version=v1.23.1 --image-repository='registry.aliyuncs.com/google_containers' --registry-mirror='https://ovfftd6p.mirror.aliyuncs.com' --image-mirror-country='cn' --force --driver=docker

minikube start --kubernetes-version=v1.23.1 --image-mirror-country='cn' --force --driver=docker

minikube start --kubernetes-version=v1.23.1 --image-repository='registry.aliyuncs.com/google_containers' --registry-mirror='https://ovfftd6p.mirror.aliyuncs.com' --image-mirror-country='cn' --force --driver=none

su minikube

minikube delete

minikube start --kubernetes-version=v1.23.1 --image-mirror-country='cn' --image-repository='registry.aliyuncs.com/google_containers' --registry-mirror='https://ovfftd6p.mirror.aliyuncs.com' --force --driver=docker

minikube start --kubernetes-version=v1.23.1 --image-mirror-country='cn' --image-repository='registry.cn-hangzhou.aliyuncs.com/google_containers' --force --driver=docker

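minikube start parameters

Start command: minikube start [options]

- --image-mirror-country cn: uses registry.cn-hangzhou.aliyuncs.com/google_containers by default as the container image registry for installing Kubernetes

- --iso-url=***: downloads the .iso file from the Aliyun mirror address

- --cpus=2: number of CPU cores allocated to the minikube virtual machine

- --memory=2000mb: amount of memory allocated to the minikube virtual machine

- --kubernetes-version=***: the Kubernetes version the minikube virtual machine will use, e.g. --kubernetes-version v1.17.3

- --docker-env http_proxy: passes a proxy address

By default (in older minikube releases) startup uses the VirtualBox driver. Use --vm-driver (spelled --driver in newer releases, as in the commands above) to choose another driver.

# https://minikube.sigs.k8s.io/docs/drivers/

- --vm-driver=none: no virtual machine; Kubernetes runs directly on the host using the host's container runtime

- --vm-driver=virtualbox: runs inside a VirtualBox virtual machine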

Note: To use kubectl or minikube commands as your own user, you may need to relocate them. For example, to overwrite your own settings, run:

sudo mv /root/.kube /root/.minikube $HOME

sudo chown -R $USER $HOME/.kube $HOME/.minikube

Examples

--vm-driver=kvm2

Reference: https://minikube.sigs.k8s.io/docs/drivers/kvm2/

minikube start --image-mirror-country cn --image-repository=registry.cn-hangzhou.aliyuncs.com/google_containers --registry-mirror=https://ovfftd6p.mirror.aliyuncs.com --driver=kvm2

--vm-driver=hyperv

# Create a Hyper-V based Kubernetes test environment

minikube.exe start --image-mirror-country cn \

--iso-url=https://kubernetes.oss-cn-hangzhou.aliyuncs.com/minikube/iso/minikube-v1.5.0.iso \

--registry-mirror=https://xxxxxx.mirror.aliyuncs.com \

--vm-driver="hyperv" \

--hyperv-virtual-switch="MinikubeSwitch" \

--memory=4096

--vm-driver=none

sudo minikube start --image-mirror-country cn --vm-driver=none

sudo minikube start --vm-driver=none --docker-env http_proxy=http://$host_IP:8118 --docker-env https_proxy=https://$host_IP:8118

Here $host_IP is the IP address of the host, which you can look up with ifconfig. On my machine, deployed with VirtualBox, it is 10.0.2.15, so minikube is started with:

sudo minikube start --vm-driver=none --docker-env http_proxy=http://10.0.2.15:8118 --docker-env https_proxy=https://10.0.2.15:8118

sudo minikube start --kubernetes-version=v1.23.1 --image-mirror-country='cn' --image-repository='registry.cn-hangzhou.aliyuncs.com/google_containers'

sudo minikube start --kubernetes-version=v1.23.1 --image-mirror-country='cn' --image-repository='registry.cn-hangzhou.aliyuncs.com/google_containers' --extra-config=kubelet.cgroup

Image pull problems

Viewing the error log

minikube logs

Q1: Fixing slow image pulls in minikube

Log in to the minikube node and change its remote registry mirror:

minikube ssh

sudo mkdir -p /etc/docker

sudo tee /etc/docker/daemon.json <<-'EOF'

{"registry-mirrors": ["http://hub-mirror.c.163.com"]

}

EOF

sudo systemctl daemon-reload

sudo systemctl restart docker

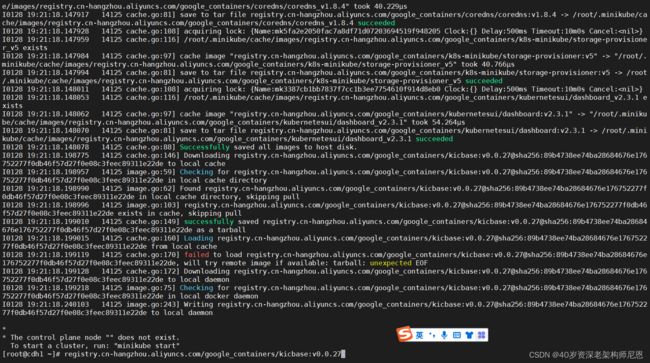

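Fixing slow image pulls during minikube start

If a previous minikube download failed, delete it before trying again:

minikube delete --all

When pulls are slow, pull from a registry in China instead. minikube start downloads a recent Kubernetes version by default, but my environment does not work well with the latest version, so the Kubernetes version must be specified: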

minikube start --kubernetes-version=v1.23.1 --image-mirror-country='cn' --image-repository='registry.cn-hangzhou.aliyuncs.com/google_containers'

minikube start --kubernetes-version=v1.23.1 --image-repository='registry.cn-hangzhou.aliyuncs.com/google_containers' --registry-mirror='https://ovfftd6p.mirror.aliyuncs.com' --image-mirror-country='cn' --force --driver=docker

minikube start --kubernetes-version=v1.23.1 --image-repository='registry.cn-hangzhou.aliyuncs.com/google_containers' --registry-mirror='https://ovfftd6p.mirror.aliyuncs.com' --image-mirror-country='cn' --force --driver=docker --extra-config=kubelet.cgroup-driver=systemd

minikube start --kubernetes-version=v1.23.1 --image-repository='registry.aliyuncs.com/google_containers' --registry-mirror=https://kfwkfulq.mirror.aliyuncs.com --image-mirror-country='cn' --force --driver=docker --extra-config=kubelet.cgroup-driver=systemd

--registry-mirror=https://bjtzu1jb.mirror.aliyuncs.com

minikube start --kubernetes-version=v1.23.1 --image-repository='registry.cn-hangzhou.aliyuncs.com/google_containers' --registry-mirror='https://bjtzu1jb.mirror.aliyuncs.com' --image-mirror-country='cn' --force --driver=docker --extra-config=kubelet.cgroup-driver=systemd

minikube start --kubernetes-version=v1.23.1 --image-repository='registry.cn-hangzhou.aliyuncs.com/google_containers' --registry-mirror='https://bjtzu1jb.mirror.aliyuncs.com' --image-mirror-country='cn' --force --driver=docker --extra-config=kubelet.cgroup-driver=systemd --base-image="registry.cn-hangzhou.aliyuncs.com/google_containers/kicbase:v0.0.27"

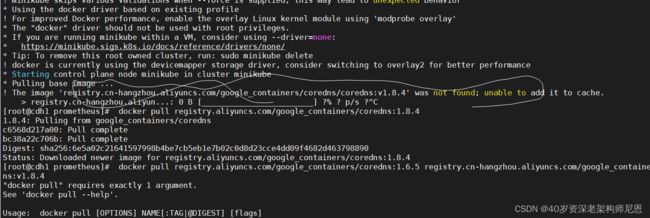

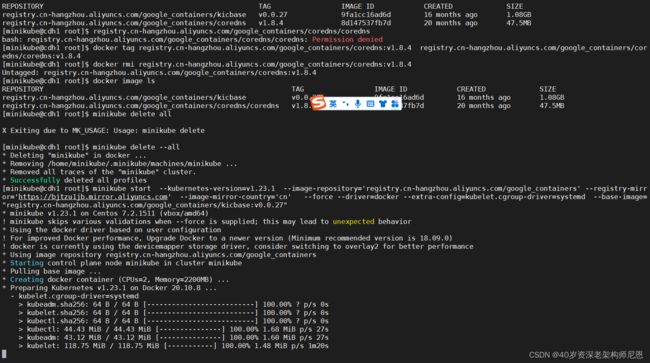

Q2: The base image cannot be pulled

Viewing the error log

minikube logs

The base image cannot be pulled:

registry.cn-hangzhou.aliyuncs.com/google_containers/kicbase:v0.0.27

Change the image address to registry.aliyuncs.com:

registry.aliyuncs.com/google_containers/kicbase:v0.0.27

Download it separately:

docker pull registry.aliyuncs.com/google_containers/kicbase:v0.0.27

Tag it:

docker tag registry.aliyuncs.com/google_containers/kicbase:v0.0.27 registry.cn-hangzhou.aliyuncs.com/google_containers/kicbase:v0.0.27

Remove the old tag:

[root@cdh1 ~]# docker image ls

REPOSITORY TAG IMAGE ID CREATED SIZE

registry.aliyuncs.com/google_containers/kicbase v0.0.27 9fa1cc16ad6d 16 months ago 1.08GB

registry.cn-hangzhou.aliyuncs.com/google_containers/kicbase v0.0.27 9fa1cc16ad6d 16 months ago 1.08GB

[root@cdh1 ~]# docker rmi registry.aliyuncs.com/google_containers/kicbase:v0.0.27

Untagged: registry.aliyuncs.com/google_containers/kicbase:v0.0.27

Untagged: registry.aliyuncs.com/google_containers/kicbase@sha256:89b4738ee74ba28684676e176752277f0db46f57d27f0e08c3feec89311e22de

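2. Starting with a specified image

The automatic download of registry.cn-hangzhou.aliyuncs.com/google_containers/kicbase:v0.0.27 fails its SHA verification, so the cluster cannot start.

Even after the image is downloaded and tagged manually, there is still a verification step, so the image must be specified explicitly to skip the SHA check.

| Parameter | Value |
|---|---|
| --base-image | Specifies the image and skips the SHA check |

Start minikube with the following command: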

minikube start --kubernetes-version=v1.23.1 --image-repository='registry.cn-hangzhou.aliyuncs.com/google_containers' --registry-mirror='https://bjtzu1jb.mirror.aliyuncs.com' --image-mirror-country='cn' --force --driver=docker --extra-config=kubelet.cgroup-driver=systemd --base-image="registry.cn-hangzhou.aliyuncs.com/google_containers/kicbase:v0.0.27"

minikube start --kubernetes-version=v1.23.1 --image-repository='registry.cn-hangzhou.aliyuncs.com/google_containers' --registry-mirror='https://bjtzu1jb.mirror.aliyuncs.com' --image-mirror-country='cn' --force --driver=docker --base-image="registry.cn-hangzhou.aliyuncs.com/google_containers/kicbase:v0.0.27"

Container creation finally begins and the cluster starts to come up.

Q3: A new problem: the coredns image cannot be found

X Unable to load cached images: loading cached images: Docker load /var/lib/minikube/images/coredns_v1.8.4: loadimage docker.: /bin/bash -c "sudo cat /var/lib/minikube/images/coredns_v1.8.4 | docker load": Process exited with status 1

Download it directly:

docker pull coredns/coredns:1.8.4

Retag it:

docker tag coredns/coredns:1.8.4 registry.cn-hangzhou.aliyuncs.com/google_containers/coredns/coredns:v1.8.4

Remove the old tag:

docker rmi coredns/coredns:1.8.4

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:v1.8.4 registry.cn-hangzhou.aliyuncs.com/google_containers/coredns/coredns:v1.8.4

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/coredns/coredns:v1.8.4 registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:v1.8.4

Start again

Q4: Downloading the remaining images

# Pull from the mirror in China

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.1-0

docker pull coredns/coredns:1.8.6

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.23.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.23.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.23.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.23.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.5

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.23.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.23.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.23.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.23.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.5

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.0-0

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/coredns/coredns:v1.8.4

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/k8s-minikube/storage-provisioner:v5

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kubernetesui/dashboard:v2.3.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kubernetesui/metrics-scraper:v1.0.7

docker pull k8s-minikube/storage-provisioner:v5

docker pull kubernetesui/dashboard:v2.3.1

docker pull kubernetesui/metrics-scraper:v1.0.7

docker pull registry.aliyuncs.com/k8s-minikube/storage-provisioner:v5

registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:v1.8.6

registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:v1.8.6

registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.1-0

docker pull etcd/etcd:3.5.1-0

Retag:

docker tag coredns/coredns:1.8.4 registry.cn-hangzhou.aliyuncs.com/google_containers/coredns/coredns:v1.8.4

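Q5: Using the Aliyun proxy for the k8s.gcr.io image registry

The k8s image registry k8s.gcr.io is not reachable from mainland China.

For example, downloading the k8s.gcr.io/coredns:1.6.5 image fails by default: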

[root@k8s-vm03 ~]# docker pull k8s.gcr.io/coredns:1.6.5

Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

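The biggest obstacle when deploying K8S is downloading images: without a proxy environment, it is very hard to pull from sources such as k8s.gcr.io from inside China.

The correct approach in this situation is:

- Pull the image from a domestic proxy registry (such as the Aliyun proxy registry).

- After the pull succeeds, tag the image as the corresponding k8s.gcr.io image.

- Finally, remove the tag that was pulled from the proxy registry.

- Make sure imagePullPolicy is IfNotPresent, so that a local image is used when present and nothing is pulled.

Alternatively, push the downloaded images to a private Harbor registry and point the image source at the Harbor address.

# The Aliyun proxy registry address is: registry.aliyuncs.com/google_containers

# For example, the image

k8s.gcr.io/coredns:1.6.5

# can be pulled through the proxy as:

registry.aliyuncs.com/google_containers/coredns:1.6.5

The following walkthrough uses the Aliyun proxy registry:

# For example, downloading the k8s.gcr.io/coredns:1.6.5 image fails by default from inside China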

[root@k8s-vm01 coredns]# pwd

/opt/k8s/work/kubernetes/cluster/addons/dns/coredns

[root@k8s-vm01 coredns]# fgrep "image" ./*

./coredns.yaml: image: k8s.gcr.io/coredns:1.6.5

./coredns.yaml: imagePullPolicy: IfNotPresent

[root@k8s-vm03 ~]# docker pull k8s.gcr.io/coredns:1.6.5

Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

# Now pull from the Aliyun proxy registry in China instead

[root@k8s-vm03 ~]# docker pull registry.aliyuncs.com/google_containers/coredns:1.6.5

1.6.5: Pulling from google_containers/coredns

c6568d217a00: Pull complete

fc6a9081f665: Pull complete

Digest: sha256:608ac7ccba5ce41c6941fca13bc67059c1eef927fd968b554b790e21cc92543c

Status: Downloaded newer image for registry.aliyuncs.com/google_containers/coredns:1.6.5

registry.aliyuncs.com/google_containers/coredns:1.6.5

# Then tag it and remove the image tag downloaded from the proxy registry

[root@k8s-vm03 ~]# docker tag registry.aliyuncs.com/google_containers/coredns:1.6.5 k8s.gcr.io/coredns:1.6.5

[root@k8s-vm03 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

k8s.gcr.io/coredns 1.6.5 70f311871ae1 5 months ago 41.6MB

registry.aliyuncs.com/google_containers/coredns 1.6.5 70f311871ae1 5 months ago 41.6MB

[root@k8s-vm03 ~]# docker rmi registry.aliyuncs.com/google_containers/coredns:1.6.5

Untagged: registry.aliyuncs.com/google_containers/coredns:1.6.5

Untagged: registry.aliyuncs.com/google_containers/coredns@sha256:608ac7ccba5ce41c6941fca13bc67059c1eef927fd968b554b790e21cc92543c

[root@k8s-vm03 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

k8s.gcr.io/coredns 1.6.5 70f311871ae1 5 months ago 41.6MB

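# The k8s.gcr.io/coredns:1.6.5 image we wanted is now available locally.

# Finally, remember:

# Make sure the imagePullPolicy is IfNotPresent, so that a local image is used when present and nothing is pulled.

# Alternatively, push the downloaded image to a private Harbor registry and point the image address at it.

To summarize, the three steps are: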

docker pull registry.aliyuncs.com/google_containers/coredns:1.6.5

docker tag registry.aliyuncs.com/google_containers/coredns:1.6.5 k8s.gcr.io/coredns:1.6.5

docker rmi registry.aliyuncs.com/google_containers/coredns:1.6.5
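The three steps can be wrapped in a small function for reuse. This is a sketch rather than a definitive script: the function names `mirror_name` and `pull_via_mirror` are hypothetical, and it assumes the proxy registry keeps the same image name and tag as k8s.gcr.io, which the text above only demonstrates for coredns:1.6.5.

```shell
#!/bin/sh
# Proxy registry address taken from the text above.
MIRROR="registry.aliyuncs.com/google_containers"

# Hypothetical helper: rewrite k8s.gcr.io/<name>:<tag> to the proxy path.
# This is a pure string rewrite; it does not contact any registry.
mirror_name() {
  echo "$MIRROR/${1#k8s.gcr.io/}"
}

# Hypothetical helper: pull through the proxy, retag to the original name,
# then drop the proxy tag. Stops at the first step that fails.
pull_via_mirror() {
  target="$1"
  source_image="$(mirror_name "$target")"
  docker pull "$source_image" &&
    docker tag "$source_image" "$target" &&
    docker rmi "$source_image"
}

# Example:
#   pull_via_mirror k8s.gcr.io/coredns:1.6.5
```

Keeping the name rewrite separate from the docker calls makes it easy to check the mapping for a given image before pulling anything.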

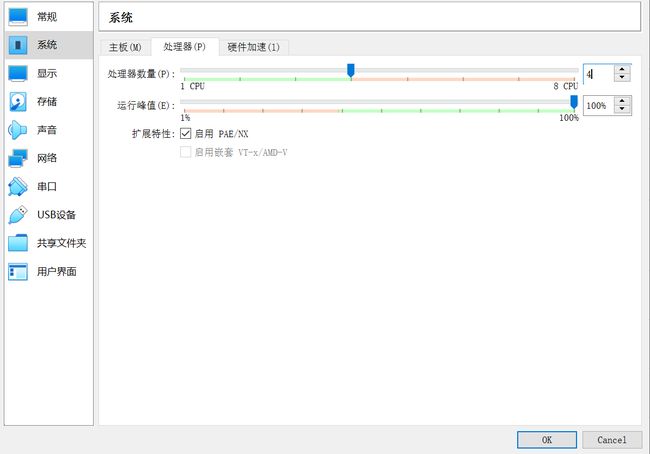

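Problems with VirtualBox

Q1: Nested virtualization

What is nested virtualization?

On Intel processors, VirtualBox uses Intel's VMX (Virtual Machine Extensions), a hardware-assisted virtualization technology, to improve virtual machine performance.

Suppose we need several hosts with "vmx" support but do not have many physical servers available. If a virtual machine could support "vmx" just like a physical machine, the problem would be solved.

Normally, though, a virtual machine cannot act as a hypervisor and run further virtual machines on top of itself, because the virtual machine does not expose "vmx". This is where nested virtualization comes in.

Nested virtualization is a feature that can be enabled through a kernel parameter. It gives a virtual machine the CPU features of a physical machine, with support for vmx or svm (AMD) hardware virtualization.

Enabling nested VT-x/AMD-V for the virtual machine

Nested virtualization is not enabled by default for VirtualBox virtual machines (Settings > System > Processor):

Open Windows PowerShell, go to the VirtualBox installation directory, and enable nested VT-x/AMD-V for the virtual machine where minikube will be installed.

# Go to the installation directory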

cd 'C:\Program Files\Oracle\VirtualBox\'

# List all virtual machines

C:\Program Files\Oracle\VirtualBox>.\VBoxManage.exe list vms

"cdh1" {309cd81a-248c-4184-9f99-8fe72d01c1f0}

# Enable nested virtualization

.\VBoxManage.exe modifyvm "cdh1" --nested-hw-virt on

Once enabled, the option appears checked in the settings dialog:

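Q2: The conntrack dependency

Install conntrack (starting with --driver=none later depends on this package):

yum install conntrack -y

Start minikube with the following command:

minikube start --registry-mirror="https://na8xypxe.mirror.aliyuncs.com" --driver=none

The advantage of --driver=none is that minikube can run directly as root, with no need to configure another user.

The drawbacks are reduced security and stability, a risk of data loss, and no way to limit resources with --cpus or --memory.

These are not a concern here, because minikube is installed only for testing and learning.

For details on choosing a driver, see: none | minikube (k8s.io)

On startup we see the following error: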

stderr:

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR FileContent--proc-sys-net-bridge-bridge-nf-call-iptables]: /proc/sys/net/bridge/bridge-nf-call-iptables contents are not set to 1

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

Follow the hint to fix it:

echo '1' > /proc/sys/net/bridge/bridge-nf-call-iptables

Try starting again; this time it succeeds:

[root@test1 ~]# minikube start --registry-mirror="https://na8xypxe.mirror.aliyuncs.com" --driver=none

* minikube v1.18.1 on Centos 7.6.1810

* Using the none driver based on existing profile

* Starting control plane node minikube in cluster minikube

* Restarting existing none bare metal machine for "minikube" ...

* OS release is CentOS Linux 7 (Core)

* Preparing Kubernetes v1.20.2 on Docker 1.13.1 ...

- Generating certificates and keys ...

- Booting up control plane ...

- Configuring RBAC rules ...

* Configuring local host environment ...

*

! The 'none' driver is designed for experts who need to integrate with an existing VM

* Most users should use the newer 'docker' driver instead, which does not require root!

* For more information, see: https://minikube.sigs.k8s.io/docs/reference/drivers/none/

*

! kubectl and minikube configuration will be stored in /root

! To use kubectl or minikube commands as your own user, you may need to relocate them. For example, to overwrite your own settings, run:

*

- sudo mv /root/.kube /root/.minikube $HOME

- sudo chown -R $USER $HOME/.kube $HOME/.minikube

*

* This can also be done automatically by setting the env var CHANGE_MINIKUBE_NONE_USER=true

* Verifying Kubernetes components...

- Using image registry.cn-hangzhou.aliyuncs.com/google_containers/storage-provisioner:v4 (global image repository)

* Enabled addons: storage-provisioner, default-storageclass

* Done! kubectl is now configured to use "minikube" cluster and "default" namespace by default

Q3: The kubectl and kubelet dependencies

Add the Aliyun Kubernetes yum repository:

# /etc/yum.repos.d/kubenetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

Build the metadata cache:

yum makecache

Install kubectl and kubelet:

yum install kubectl -y

yum install kubelet -y

systemctl enable kubelet

Installing a specific version

yum list available kubectl

kubectl version

yum remove kubectl

yum install -y kubelet-1.23.1 kubectl-1.23.1 kubeadm-1.23.1

Check the version:

$ kubectl version

Client Version: version.Info{Major:"1", Minor:"23", GitVersion:"v1.23.1", GitCommit:"86ec240af8cbd1b60bcc4c03c20da9b98005b92e", GitTreeState:"clean", BuildDate:"2021-12-16T11:41:01Z", GoVersion:"go1.17.5", Compiler:"gc", Platform:"linux/amd64"}

The connection to the server localhost:8080 was refused - did you specify the right host or port?

Q4: Bridge problem

On startup we see the following error:

stderr:

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR FileContent--proc-sys-net-bridge-bridge-nf-call-iptables]: /proc/sys/net/bridge/bridge-nf-call-iptables contents are not set to 1

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

Follow the hint to fix it:

echo '1' > /proc/sys/net/bridge/bridge-nf-call-iptables
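Writing to /proc only lasts until the next reboot. One way to make the setting persistent is a sysctl drop-in file; the sketch below assumes the conventional /etc/sysctl.d directory, and the file name k8s.conf and the function name `write_bridge_sysctl` are hypothetical choices. On many kernels the /proc/sys/net/bridge path only exists once the br_netfilter module is loaded.

```shell
#!/bin/sh
# Hypothetical helper: write the bridge setting to a sysctl drop-in file.
# The target path is an argument so the function can be tried on a scratch file.
write_bridge_sysctl() {
  conf="${1:-/etc/sysctl.d/k8s.conf}"
  cat > "$conf" <<'EOF'
net.bridge.bridge-nf-call-iptables = 1
EOF
}

# Example (as root):
#   modprobe br_netfilter    # provides /proc/sys/net/bridge on many kernels
#   write_bridge_sysctl      # persist the setting
#   sysctl --system          # reload all sysctl configuration files
```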

Q5: Initialization fails; upgrade the kernel

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR SystemVerification]: unexpected kernel config: CONFIG_CGROUP_PIDS

[ERROR SystemVerification]: missing required cgroups: pids

[preflight] If you know what you are doing, you can make a check non-fatal with --ignore-preflight-errors=...

To see the stack trace of this error execute with --v=5 or higher

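First, check the running kernel's configuration with cat /boot/config-$(uname -r) | grep CGROUP; the kernel needs to be built with CONFIG_CGROUP_PIDS=y.

If the option is missing, upgrading the kernel resolves the problem.

Kernel upgrade reference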
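Before and after the upgrade, it can help to confirm whether the running kernel exposes the pids controller that the error complains about. A minimal sketch, assuming the standard /proc/cgroups layout (columns subsys_name, hierarchy, num_cgroups, enabled); the function name `has_pids_cgroup` is hypothetical.

```shell
#!/bin/sh
# Hypothetical check: exit 0 if the "pids" controller is listed and enabled,
# exit 1 otherwise. The file path defaults to /proc/cgroups.
has_pids_cgroup() {
  awk '$1 == "pids" && $4 == 1 { found = 1 } END { exit !found }' "${1:-/proc/cgroups}"
}

# Example:
#   if has_pids_cgroup; then echo "pids cgroup available"; else echo "pids cgroup missing"; fi
```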

rpm --import https://www.elrepo.org/RPM-GPG-KEY-elrepo.org

rpm -Uvh http://www.elrepo.org/elrepo-release-7.0-2.el7.elrepo.noarch.rpm

yum --disablerepo="*" --enablerepo="elrepo-kernel" list available

yum --enablerepo=elrepo-kernel install kernel-ml

cp /etc/default/grub /etc/default/grub_bak

vi /etc/default/grub

grub2-mkconfig -o /boot/grub2/grub.cfg

systemctl enable docker.service

re

List the installed kernels (GRUB menu entries)

[root@cdh1 ~]# awk -F\' ' $1=="menuentry " {print i++ " : " $2}' /etc/grub2.cfg

0 : CentOS Linux (6.1.8-1.el7.elrepo.x86_64) 7 (Core)

1 : CentOS Linux (3.10.0-327.4.5.el7.x86_64) 7 (Core)

2 : CentOS Linux (3.10.0-327.el7.x86_64) 7 (Core)

3 : CentOS Linux (0-rescue-e147b422673549a3b4fda77127bd4bcd) 7 (Core)

Edit the /etc/default/grub file

Set GRUB_DEFAULT=0 so that the kernel numbered 0 in the listing above becomes the default:

GRUB_DISTRIBUTOR="$(sed 's, release .*$,,g' /etc/system-release)"

GRUB_DEFAULT=0

GRUB_DISABLE_SUBMENU=true

GRUB_TERMINAL_OUTPUT="console"

GRUB_CMDLINE_LINUX="crashkernel=auto rd.lvm.lv=cl/root rhgb quiet"

GRUB_DISABLE_RECOVERY="true"

Generate the grub configuration file and reboot

$ grub2-mkconfig -o /boot/grub2/grub.cfg

Generating grub configuration file …

Found linux image: /boot/vmlinuz-5.12.1-1.el7.elrepo.x86_64

Found initrd image: /boot/initramfs-5.12.1-1.el7.elrepo.x86_64.img

Found linux image: /boot/vmlinuz-3.10.0-1160.25.1.el7.x86_64

Found initrd image: /boot/initramfs-3.10.0-1160.25.1.el7.x86_64.img

Found linux image: /boot/vmlinuz-3.10.0-1160.el7.x86_64

Found initrd image: /boot/initramfs-3.10.0-1160.el7.x86_64.img

Found linux image: /boot/vmlinuz-0-rescue-16ba4d58b7b74338bfd60f5ddb0c8483

Found initrd image: /boot/initramfs-0-rescue-16ba4d58b7b74338bfd60f5ddb0c8483.img

done

$ reboot

Check the running kernel version

$ uname -sr

Linux 6.1.8-1.el7.elrepo.x86_64

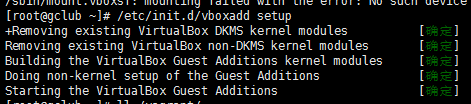

Q6: The vboxsf shared filesystem is missing

After upgrading the CentOS kernel, the following error appears:

Vagrant was unable to mount VirtualBox shared folders. This is usually

because the filesystem “vboxsf” is not available. This filesystem is

made available via the VirtualBox Guest Additions and kernel module.

Please verify that these guest additions are properly installed in the

guest. This is not a bug in Vagrant and is usually caused by a faulty

Vagrant box. For context, the command attempted was:

mount -t vboxsf -o uid=1000,gid=1000 vagrant /vagrant

The error output from the command was:

/sbin/mount.vboxsf: mounting failed with the error: No such device

Solution 1:

vagrant plugin install vagrant-vbguest

vagrant reload --provision

This did not seem to work.

Solution 2:

Upgrade kernel-devel as well:

# yum --enablerepo=elrepo-kernel install kernel-lt-devel

yum install kernel

yum install kernel-headers

yum install kernel-devel

yum install gcc*

yum install make

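After the installation completes, run:

/etc/init.d/vboxadd setup

Success!

Mount the shared directory:

mount -t vboxsf -o uid=0,gid=0,_netdev vagrant /vagrant

Installing the matching kernel-devel

What is the kernel-devel package on Linux for?

If a program needs functionality provided by the kernel, it needs the kernel's C headers to compile, and that is where the contents of kernel-devel come in.

kernel-devel contains more than C header files: it also includes the kernel configuration files and other development material.

The difference: the kernel-devel package contains only the kernel headers and Makefiles needed for a kernel development environment, while kernel-source contains the full kernel source code.

If you are only developing your own modules, the devel package is enough, because you only need to reference the kernel headers. If you want to modify the existing kernel source and recompile it, you need kernel-source.

Since certain Red Hat releases, kernel-source is no longer shipped with the distribution; you have to build it yourself from kernel-XXX.src.rpm.

In short, kernel-devel is for general kernel-related development such as writing kernel modules, which in principle does not need the kernel source, while kernel-source is for developing the kernel itself and therefore needs the full source.

To find the rpm packages:

https://pkgs.org/download/kernel(list_del) for kernel-xxx.rpm

https://pkgs.org/download/kernel-devel for kernel-devel-xxxx.rpm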

[root@cdh1 ~]# rpm -qa | grep kernel

kernel-devel-3.10.0-327.el7.x86_64

kernel-ml-6.1.8-1.el7.elrepo.x86_64

kernel-3.10.0-1160.83.1.el7.x86_64

kernel-tools-3.10.0-327.4.5.el7.x86_64

kernel-lt-devel-5.4.230-1.el7.elrepo.x86_64

kernel-tools-libs-3.10.0-327.4.5.el7.x86_64

kernel-3.10.0-327.4.5.el7.x86_64

kernel-devel-3.10.0-327.4.5.el7.x86_64

kernel-headers-3.10.0-1160.83.1.el7.x86_64

kernel-3.10.0-327.el7.x86_64

kernel-devel-3.10.0-1160.83.1.el7.x86_64

[root@cdh1 ~]# uname -sr

Linux 6.1.8-1.el7.elrepo.x86_64

[root@cdh1 ~]# awk -F\' ' $1=="menuentry " {print i++ " : " $2}' /etc/grub2.cfg

0 : CentOS Linux (6.1.8-1.el7.elrepo.x86_64) 7 (Core)

1 : CentOS Linux (3.10.0-327.4.5.el7.x86_64) 7 (Core)

2 : CentOS Linux (3.10.0-327.el7.x86_64) 7 (Core)

3 : CentOS Linux (0-rescue-e147b422673549a3b4fda77127bd4bcd) 7 (Core)

[root@cdh1 ~]# cat /etc/redhat-release

CentOS Linux release 7.2.1511 (Core)

Find kernel-devel-xxxx.rpm at https://pkgs.org/download/kernel-devel, for example:

kernel-devel-6.1.8-1.el7.elrepo.x86_64.rpm

1. Download the rpm from:

https://pkgs.org/download/kernel-devel

Check the Install Howto section and go to the listed repository URL, for example:

http://mirror.rackspace.com/elrepo/elrepo/el6/i386/RPMS/

Download the matching elrepo-release*rpm.

kernel-ml-devel-6.1.8-1.el7.elrepo.x86_64.rpm CentOS 7, RHEL 7, Rocky Linux 7, AlmaLinux 7 Download (pkgs.org)

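Pod and container problems

Common K8s commands

kubectl get pods -A   # list pods in all namespaces

kubectl describe node   # show CPU and memory usage of all nodes

kubectl describe node <node-name> | grep Taints   # check whether the node is schedulable and can take workloads; there are generally three cases

kubectl -n <namespace> logs -f --tail 200 <pod-name>   # follow a pod's logs, starting from the last 200 lines (logs exist only once the pod has run)

kubectl exec -it -n <namespace> <pod-name> -- sh   # open a shell in the pod

kubectl get services,pods -o wide   # list pods and services; -o sets the output format (wide or yaml)

kubectl describe pod <pod-name> -n <namespace>   # show the pod's description and status

kubectl describe <job|ds|deployment> <name> -n <namespace>   # describe one of the three controller types and its pods

kubectl exec -it <pod-name> -c <container-name> -- /bin/bash

kubectl get pod <pod-name> -n <namespace> -o yaml | kubectl replace --force -f -   # restart a pod

kubectl get pods -n <namespace>

kubectl get pods <pod-name> -o yaml -n <namespace>

kubectl get ds -n <namespace>   # list DaemonSets in a namespace

kubectl get ds <ds-name> -o yaml -n <namespace>

kubectl get deployment -n <namespace>

kubectl get deployment <deployment-name> -o yaml -n <namespace>

Append --force --grace-period=0 to the delete commands below to force immediate deletion.

Delete the current application: kubectl delete ds <daemonset-name> -n <namespace> or kubectl delete deployment <deployment-name> -n <namespace>. (Note: if the ds/deployment/job is not deleted and you only delete its pod with kubectl delete pod <pod-name> -n <namespace>, the pod keeps being recreated.)

View the latest 10 log lines of a container in real time: docker logs -f -t --tail 10 <container-name>

kubectl delete job <job-name> -n <namespace> (the same applies to Jobs)

List all pods to see which ones have problems:

kubectl get pods -A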

[root@cdh1 ~]# kubectl get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-6d8c4cb4d-grphf 1/1 Running 0 8m16s

kube-system etcd-cdh1 1/1 Running 1 8m29s

kube-system kube-apiserver-cdh1 1/1 Running 1 8m31s

kube-system kube-controller-manager-cdh1 1/1 Running 1 8m29s

kube-system kube-proxy-78trt 1/1 Running 0 8m16s

kube-system kube-scheduler-cdh1 1/1 Running 1 8m29s

kube-system storage-provisioner 0/1 ImagePullBackOff 0 8m28s

kubernetes-dashboard dashboard-metrics-scraper-5496b5d99f-llh9t 0/1 ImagePullBackOff 0 6m28s

kubernetes-dashboard kubernetes-dashboard-58b48666f8-hsn8g 0/1 ImagePullBackOff 0 6m28s
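When the pod list is long, the unhealthy entries can be filtered out mechanically. The following is a minimal sketch, assuming the plain-text table printed by kubectl get pods -A is available as a string; the function name find_unhealthy_pods and the choice to treat only Running and Completed as healthy are illustrative assumptions, not part of kubectl.

```python
def find_unhealthy_pods(table: str) -> list[tuple[str, str, str]]:
    """Return (namespace, name, status) for pods that are not Running or Completed."""
    healthy = {"Running", "Completed"}  # assumption: everything else needs a look
    unhealthy = []
    for line in table.splitlines():
        fields = line.split()
        # Skip blank lines and the header row.
        if len(fields) < 4 or fields[0] == "NAMESPACE":
            continue
        namespace, name, _ready, status = fields[:4]
        if status not in healthy:
            unhealthy.append((namespace, name, status))
    return unhealthy


# A few rows copied from the output above.
SAMPLE = """\
NAMESPACE NAME READY STATUS RESTARTS AGE
kube-system coredns-6d8c4cb4d-grphf 1/1 Running 0 8m16s
kube-system storage-provisioner 0/1 ImagePullBackOff 0 8m28s
kubernetes-dashboard kubernetes-dashboard-58b48666f8-hsn8g 0/1 ImagePullBackOff 0 6m28s
"""

for ns, name, status in find_unhealthy_pods(SAMPLE):
    print(f"{ns}/{name}: {status}")
```

This sketch looks only at the STATUS column; a pod that is Running but not fully READY would need an extra check.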

storage-provisioner stuck in ImagePullBackOff

kubectl get pods -A

kubectl describe pod XXX -n kube-system

Use kubectl describe to inspect the storage-provisioner pod in detail:

[root@cdh1 ~]# kubectl describe pod storage-provisioner -n kube-system

Name: storage-provisioner

Namespace: kube-system

Priority: 0

Node: cdh1/10.0.2.15

Start Time: Sun, 29 Jan 2023 05:17:14 +0800

Labels: addonmanager.kubernetes.io/mode=Reconcile

integration-test=storage-provisioner

Annotations:

Status: Pending

IP: 10.0.2.15

IPs:

IP: 10.0.2.15

Containers:

storage-provisioner:

Container ID:

Image: registry.aliyuncs.com/google_containers/k8s-minikube/storage-provisioner:v5

Image ID:

Port:

Host Port:

Command:

/storage-provisioner

State: Waiting

Reason: ImagePullBackOff

Ready: False

Restart Count: 0

Environment:

Mounts:

/tmp from tmp (rw)

/var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-l5h2b (ro)

Conditions:

Type Status

Initialized True

Ready False

ContainersReady False

PodScheduled True

Volumes:

tmp:

Type: HostPath (bare host directory volume)

Path: /tmp

HostPathType: Directory

kube-api-access-l5h2b:

Type: Projected (a volume that contains injected data from multiple sources)

TokenExpirationSeconds: 3607

ConfigMapName: kube-root-ca.crt

ConfigMapOptional:

DownwardAPI: true

QoS Class: BestEffort

Node-Selectors:

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Warning FailedScheduling 27m default-scheduler 0/1 nodes are available: 1 node(s) had taint {node.kubernetes.io/not-ready: }, that the pod didn't tolerate.

Normal Scheduled 27m default-scheduler Successfully assigned kube-system/storage-provisioner to cdh1

Normal Pulling 26m (x4 over 27m) kubelet Pulling image "registry.aliyuncs.com/google_containers/k8s-minikube/storage-provisioner:v5"

Warning Failed 26m (x4 over 27m) kubelet Failed to pull image "registry.aliyuncs.com/google_containers/k8s-minikube/storage-provisioner:v5": rpc error: code = Unknown desc = Error response from daemon: pull access denied for registry.aliyuncs.com/google_containers/k8s-minikube/storage-provisioner, repository does not exist or may require 'docker login': denied: requested access to the resource is denied

Warning Failed 26m (x4 over 27m) kubelet Error: ErrImagePull

Warning Failed 25m (x6 over 27m) kubelet Error: ImagePullBackOff

Normal BackOff 2m39s (x109 over 27m) kubelet Back-off pulling image "registry.aliyuncs.com/google_containers/k8s-minikube/storage-provisioner:v5"

Let's check the imagePullPolicy of the storage-provisioner pod:

# kubectl get pod storage-provisioner -n kube-system -o yaml

... ...

spec:

containers:

- command:

- /storage-provisioner

image: registry.cn-hangzhou.aliyuncs.com/google_containers/k8s-minikube/storage-provisioner:v5

imagePullPolicy: IfNotPresent

name: storage-provisioner

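The imagePullPolicy of storage-provisioner is IfNotPresent, which means that if the storage-provisioner:v5 image already exists locally, Kubernetes will not pull it from the remote registry again. So we can first download storage-provisioner:v5 to the local machine and retag it as registry.cn-hangzhou.aliyuncs.com/google_containers/k8s-minikube/storage-provisioner:v5.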
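The pull-and-retag workaround boils down to two docker commands. The sketch below only builds and prints them; the helper name retag_commands and the SOURCE mirror address are hypothetical placeholders, since the article does not say which reachable registry the image was pulled from.

```python
import shlex


def retag_commands(source_image: str, target_image: str) -> list[list[str]]:
    """Build the docker commands that pull source_image and tag it as target_image."""
    return [
        ["docker", "pull", source_image],
        ["docker", "tag", source_image, target_image],
    ]


# Target: the image name taken from the pod spec shown above.
TARGET = "registry.cn-hangzhou.aliyuncs.com/google_containers/k8s-minikube/storage-provisioner:v5"
# Hypothetical mirror that is reachable from your network; replace it with a real one.
SOURCE = "mirror.example.com/k8s-minikube/storage-provisioner:v5"

for cmd in retag_commands(SOURCE, TARGET):
    print(shlex.join(cmd))
```

To actually run the commands, each list can be passed to subprocess.run(cmd, check=True). This only helps when the cluster uses the same Docker daemon as the shell you run it in; otherwise the retagged image still has to be loaded into minikube, for example with minikube image load.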

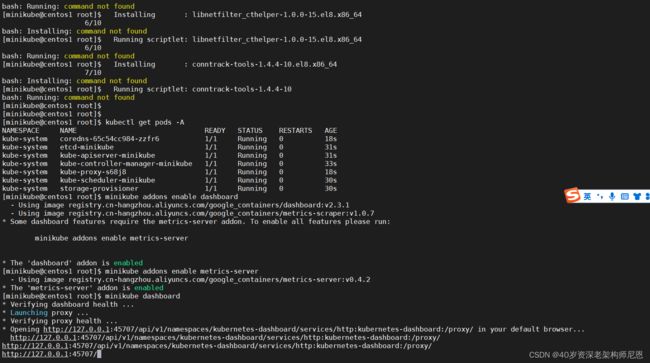

Starting the Dashboard

Start the dashboard with the following commands:

$ minikube addons enable dashboard

▪ Using image kubernetesui/dashboard:v2.3.1

▪ Using image kubernetesui/metrics-scraper:v1.0.7

Some dashboard features require the metrics-server addon. To enable all features please run:

minikube addons enable metrics-server

The 'dashboard' addon is enabled

$ minikube addons enable metrics-server

▪ Using image k8s.gcr.io/metrics-server/metrics-server:v0.4.2

The 'metrics-server' addon is enabled

$ minikube dashboard

Verifying dashboard health ...

Launching proxy ...

Verifying proxy health ...

Opening http://127.0.0.1:39887/api/v1/namespaces/kubernetes-dashboard/services/http:kubernetes-dashboard:/proxy/ in your default browser...


When the Dashboard is enabled through minikube addons, the output notes that some features also require metrics-server, so enable both.

It finally started:

[minikube@cdh1 root]$ kubectl get pod -A

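Accessing the dashboard from the host (our Windows machine)

As the output above shows, the dashboard is bound to the loopback address 127.0.0.1, which means it can only be reached from inside the VM itself.

The VM has no GUI, so how do we open the dashboard from the host, that is, from Windows?

One way is the following:

Put a reverse proxy such as nginx or openresty in front of it, so that the first address below is exposed as the second: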

http://127.0.0.1:39887/api/v1/namespaces/kubernetes-dashboard/services/http:kubernetes-dashboard:/proxy/

http://k8s:80/api/v1/namespaces/kubernetes-dashboard/services/http:kubernetes-dashboard:/proxy/
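The mapping between the two addresses is just a swap of host and port; the path stays the same. A minimal sketch of that mapping, assuming the hostname k8s resolves to the VM from Windows and that the proxy listens on port 80 and forwards to 127.0.0.1:39887 inside the VM (the function name proxied_url is illustrative):

```python
from urllib.parse import urlsplit, urlunsplit


def proxied_url(local_url: str, proxy_host: str, proxy_port: int = 80) -> str:
    """Replace the host:port of local_url with the reverse proxy's address."""
    parts = urlsplit(local_url)
    # Keep scheme, path, and query untouched; only the network location changes.
    return urlunsplit(parts._replace(netloc=f"{proxy_host}:{proxy_port}"))


LOCAL = ("http://127.0.0.1:39887/api/v1/namespaces/kubernetes-dashboard"
         "/services/http:kubernetes-dashboard:/proxy/")

print(proxied_url(LOCAL, "k8s"))
```

The port printed by minikube dashboard (39887 here) can change between runs, so the proxy's upstream address may need updating after each start.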

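Using kubectl directly

At this point minikube kubectl -- is equivalent to the kubectl command in k8s. In practice nobody wants to type it that way,

so we can alias the minikube command:

$ alias kubectl="minikube kubectl --"

Now run kubectl directly: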

$ kubectl

kubectl controls the Kubernetes cluster manager.

Find more information at: https://kubernetes.io/docs/reference/kubectl/overview/

Basic Commands (Beginner):

create Create a resource from a file or from stdin

expose Take a replication controller, service, deployment or pod and expose it as a new

Kubernetes service

run Run a particular image on the cluster

set Set specific features on objects

Basic Commands (Intermediate):

explain Get documentation for a resource

get Display one or many resources

edit Edit a resource on the server

delete Delete resources by file names, stdin, resources and names, or by resources and

label selector

...

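That's it; now you can happily play with k8s.

Rebuilding minikube

If the environment gets messed up and you want to redeploy, it is easy to do:

$ minikube delete

$ minikube start --driver=docker   # explicitly selects the docker driver; if omitted, minikube auto-detects one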

docker-compose to minikube

The docker-compose.yaml has to be converted into a k8s Deployment, Service, and ConfigMap. Take swagger-ui as an example.

docker-compose.yaml

version: "3.0"

services:

  swagger-ui:

    image: swaggerapi/swagger-ui

    container_name: swagger_ui_container

    ports:

      - "9092:8080"

    volumes:

      - ../docs/openapi:/usr/share/nginx/html/doc

    environment:

      API_URL: ./doc/api.yaml

k8s Deployment, Service, and ConfigMap

apiVersion: apps/v1

kind: Deployment

metadata:

  labels:

    io.minikube.service: swagger-ui

  name: swagger-ui

spec:

  replicas: 1

  selector:

    matchLabels:

      io.minikube.service: swagger-ui

  template:

    metadata:

      labels:

        io.minikube.service: swagger-ui

    spec:

      containers:

        - env:

            - name: SWAGGER_JSON

              value: /openapi/api.yaml

          image: swaggerapi/swagger-ui

          name: swagger-ui

          ports:

            - containerPort: 8080

          imagePullPolicy: IfNotPresent

          volumeMounts:

            - mountPath: /openapi

              name: swagger-ui-cm

      volumes:

        - name: swagger-ui-cm

          configMap:

            name: swagger-ui-cm

---

apiVersion: v1

kind: Service

metadata:

  name: swagger-ui

  labels:

    io.minikube.service: swagger-ui

spec:

  ports:

    - port: 8080

      protocol: TCP

      targetPort: 8080

  selector:

    io.minikube.service: swagger-ui

---

apiVersion: v1

kind: ConfigMap

metadata:

  name: swagger-ui-cm

data:

  api.yaml: |

    openapi: 3.0.0

    version: "1.0"

    ...
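The three objects only work together if their cross-references line up: the Service selector must match the pod template labels, the Service targetPort should point at a port the container listens on, and the configMap volume must name the ConfigMap. A minimal sketch of such a consistency check, with the manifests written as trimmed Python dicts rather than parsed from YAML; the function name check_wiring is an illustrative assumption, and the check handles only numeric targetPort values:

```python
def check_wiring(deployment: dict, service: dict, configmap: dict) -> list[str]:
    """Return a list of problems in how the three objects reference each other."""
    problems = []
    pod = deployment["spec"]["template"]
    pod_labels = pod["metadata"]["labels"]
    # A Service routes to pods whose labels include every selector entry.
    for key, value in service["spec"]["selector"].items():
        if pod_labels.get(key) != value:
            problems.append(f"selector {key}={value} does not match pod labels")
    # By convention each targetPort is one of the declared containerPorts.
    container_ports = {
        p["containerPort"]
        for c in pod["spec"]["containers"]
        for p in c.get("ports", [])
    }
    for p in service["spec"]["ports"]:
        if p["targetPort"] not in container_ports:
            problems.append(f"targetPort {p['targetPort']} is not a containerPort")
    # Every configMap volume must name the ConfigMap that is being created.
    for v in pod["spec"].get("volumes", []):
        cm = v.get("configMap")
        if cm and cm["name"] != configmap["metadata"]["name"]:
            problems.append(f"volume {v['name']} references unknown ConfigMap {cm['name']}")
    return problems


# Trimmed-down versions of the manifests above.
LABELS = {"io.minikube.service": "swagger-ui"}
DEPLOYMENT = {
    "spec": {"template": {
        "metadata": {"labels": dict(LABELS)},
        "spec": {
            "containers": [{"name": "swagger-ui",
                            "ports": [{"containerPort": 8080}]}],
            "volumes": [{"name": "swagger-ui-cm",
                         "configMap": {"name": "swagger-ui-cm"}}],
        },
    }},
}
SERVICE = {"spec": {"selector": dict(LABELS),
                    "ports": [{"port": 8080, "targetPort": 8080}]}}
CONFIGMAP = {"metadata": {"name": "swagger-ui-cm"}}

print(check_wiring(DEPLOYMENT, SERVICE, CONFIGMAP) or "wiring looks consistent")
```

Note that the compose file published host port 9092, while the Service above exposes 8080; the external port is decided later by NodePort or port-forward rather than by the Service port itself.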

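Problems encountered

External access

The k8s network inside minikube is clearly separate from the host's: with or without type=NodePort, services cannot be reached directly and need port-forward.

From what I could find, one possible explanation is that minikube is built on docker-machine so that it can deploy onto VMs, containers, hosts, and other infrastructure. docker-machine sets up its own docker environment, distinct from the host's and on a different network plane, so even a NodePort is unreachable from the host, and you need kubectl port-forward --address=0.0.0.0 service/hello-minikube 7080:8080.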

------------ 2021-11-26 update---------------

You can get minikube's IP with the following command and then access the service through that IP plus the NodePort:

$ minikube ip

192.168.49.2

You can also get the URL of a service directly with the following command:

$ minikube service hello-minikube --url

http://192.168.49.2:30660
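The URL printed by minikube service is simply the minikube IP combined with the service's nodePort. A minimal sketch of that composition, assuming the Service JSON was obtained with kubectl get service hello-minikube -o json; the function name node_port_urls is illustrative and the sample JSON is trimmed to the fields used:

```python
import json


def node_port_urls(node_ip: str, service_json: str) -> list[str]:
    """Build one http URL per nodePort declared in a Service's JSON."""
    service = json.loads(service_json)
    return [
        f"http://{node_ip}:{port['nodePort']}"
        for port in service["spec"]["ports"]
        if "nodePort" in port  # ClusterIP-only ports have no nodePort
    ]


# Trimmed sample matching the values shown above.
SERVICE_JSON = """
{"spec": {"type": "NodePort",
          "ports": [{"port": 8080, "targetPort": 8080, "nodePort": 30660}]}}
"""

print(node_port_urls("192.168.49.2", SERVICE_JSON))
```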

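Image pull problems

The docker daemon inside minikube is separate from the host's docker daemon, and k8s does not share state with docker on the host: the host's docker images and daemon.json settings are invisible to the docker daemon inside minikube. That daemon always pulls images from Docker Hub, which can fail with: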

You have reached your pull rate limit.

You may increase the limit by authenticating and upgrading: https://www.docker.com/increase-rate-limits.

You must authenticate your pull requests.

Solution

Pull the images with docker on the host first, then load them into minikube:

$ docker pull IMAGE

$ minikube image load IMAGE

Note the default imagePullPolicy in k8s

Default image pull policy

When you (or a controller) submit a new Pod to the API server, your cluster sets the imagePullPolicy field when specific conditions are met:

- if you omit the imagePullPolicy field, and the tag for the container image is :latest, imagePullPolicy is automatically set to Always;

- if you omit the imagePullPolicy field, and you don't specify the tag for the container image, imagePullPolicy is automatically set to Always;

- if you omit the imagePullPolicy field, and you specify the tag for the container image that isn't :latest, the imagePullPolicy is automatically set to IfNotPresent.

To avoid surprises, it is best to set imagePullPolicy: IfNotPresent explicitly.
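The defaulting rules can be written down as a small function, which makes it easy to see which policy a given image reference would get when imagePullPolicy is omitted. This is a minimal sketch, not Kubernetes code; the function name is illustrative. The digest case is not in the excerpt quoted here but comes from the same Kubernetes documentation page.

```python
def default_image_pull_policy(image: str) -> str:
    """Return the imagePullPolicy Kubernetes applies when the field is omitted."""
    # A digest reference (name@sha256:...) defaults to IfNotPresent.
    if "@" in image:
        return "IfNotPresent"
    # Only the last path component can carry a tag; a colon earlier in the
    # reference belongs to a registry port such as localhost:5000.
    last = image.rsplit("/", 1)[-1]
    if ":" not in last:
        return "Always"  # no tag given
    tag = last.rsplit(":", 1)[1]
    return "Always" if tag == "latest" else "IfNotPresent"


for ref in ("swaggerapi/swagger-ui",
            "swaggerapi/swagger-ui:latest",
            "kubernetesui/dashboard:v2.3.1"):
    print(f"{ref} -> {default_image_pull_policy(ref)}")
```

The first two references resolve to Always, which is why an image loaded with minikube image load but referenced without a tag can still trigger a pull from the remote registry.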
