PyTorch notes: VAE (variational autoencoder)

1 Importing libraries

import torch
import torchvision
from torch import nn
from torch import optim
import torch.nn.functional as F
from torch.autograd import Variable
from torch.utils.data import DataLoader
from torchvision import transforms
from torchvision.utils import save_image
from torchvision.datasets import MNIST
import os
import numpy as np

2 The VAE model

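class VAE(nn.Module):
    def __init__(self, input_dim, hidden_dim, latent_dim):
        super(VAE, self).__init__()
        # Keep latent_dim on the instance so encode() does not rely on a global variable
        self.latent_dim = latent_dim
        # Encoder layers; fc2 outputs mu and log(sigma^2) concatenated
        self.fc1 = nn.Linear(input_dim, hidden_dim)
        self.fc2 = nn.Linear(hidden_dim, latent_dim * 2)
        # Decoder layers
        self.fc3 = nn.Linear(latent_dim, hidden_dim)
        self.fc4 = nn.Linear(hidden_dim, input_dim)

    def encode(self, x):
        # Two fully connected layers act as the encoder and return the mean mu
        # and the log-variance log(sigma^2)
        h = F.relu(self.fc1(x))
        mu, logvar = torch.split(self.fc2(h), self.latent_dim, dim=1)
        return mu, logvar

    def reparameterize(self, mu, logvar):
        # Compute the standard deviation from the log-variance
        std = torch.exp(0.5 * logvar)
        # Sample from N(mu, sigma^2) as mu + eps * std, with eps ~ N(0, I)
        eps = torch.randn_like(std)
        return mu + eps * std

    def decode(self, z):
        # Map the sampled z through fully connected layers to get the output
        h = F.relu(self.fc3(z))
        return torch.sigmoid(self.fc4(h))

    def forward(self, x):
        # Encoder: get the mean mu and the log-variance log(sigma^2)
        mu, logvar = self.encode(x)
        # Sample z with the reparameterization trick
        z = self.reparameterize(mu, logvar)
        # Decode the latent code into a reconstruction
        return self.decode(z), mu, logvar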
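The point of `reparameterize` is that the randomness is moved into `eps`, so `z` stays a differentiable function of `mu` and `logvar`. The following is a minimal sketch of that idea rather than part of the original model; the batch size of 4 and latent size of 3 are arbitrary values chosen for illustration.

```python
import torch

torch.manual_seed(0)  # fixed seed so the sketch is repeatable

# Hypothetical encoder outputs: batch of 4 samples, 3 latent dimensions.
mu = torch.zeros(4, 3, requires_grad=True)
logvar = torch.zeros(4, 3, requires_grad=True)

# Reparameterization: z = mu + eps * std, where eps ~ N(0, I) does not depend on the parameters.
std = torch.exp(0.5 * logvar)
eps = torch.randn_like(std)
z = mu + eps * std

# Any scalar function of z is enough to show that gradients reach mu and logvar.
z.sum().backward()

# d(z.sum())/d(mu) is 1 everywhere; d(z.sum())/d(logvar) is 0.5 * eps * std.
print(mu.grad)
print(logvar.grad)
```

Sampling `z` directly from a distribution object without this rewrite would generally leave no gradient path back to the encoder outputs, which is why the noise is drawn separately and then scaled and shifted.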

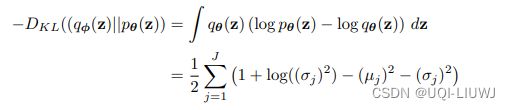

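3 Defining the loss function

The loss is the sum of a reconstruction term and a KL divergence regularizer on the latent code:

$$\mathcal{L} = \mathrm{BCE}(x, \hat{x}) + D_{KL}\big(q(z \mid x)\,\|\,\mathcal{N}(0, I)\big)$$

The KL divergence term has a closed form, which is derived in the VAE paper:

$$D_{KL} = -\frac{1}{2}\sum_{j}\left(1 + \log\sigma_j^2 - \mu_j^2 - \sigma_j^2\right)$$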
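The closed-form KL term for a diagonal Gaussian against a standard normal, `-0.5 * sum(1 + logvar - mu^2 - exp(logvar))`, can be sanity-checked against `torch.distributions`. This is a rough sketch under assumed inputs: the random `mu` and `logvar` tensors stand in for encoder outputs and are not taken from the original post.

```python
import torch
from torch.distributions import Normal, kl_divergence

torch.manual_seed(0)

# Hypothetical encoder outputs: batch of 5 samples, 4 latent dimensions.
mu = torch.randn(5, 4)
logvar = torch.randn(5, 4)

# Closed-form KL(N(mu, sigma^2) || N(0, 1)), summed over batch and latent dimensions.
kld_closed_form = -0.5 * torch.sum(1 + logvar - mu.pow(2) - logvar.exp())

# Reference value from torch.distributions, summed the same way.
q = Normal(mu, torch.exp(0.5 * logvar))
p = Normal(torch.zeros_like(mu), torch.ones_like(mu))
kld_reference = kl_divergence(q, p).sum()

print(kld_closed_form.item(), kld_reference.item())
```

The two printed values should agree up to floating-point error, and both are non-negative, as a KL divergence must be.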

# Define the loss function
def loss_function(recon_x, x, mu, logvar):
    # Reconstruction error, computed with binary cross-entropy
    BCE = F.binary_cross_entropy(recon_x, x, reduction='sum')
    # Latent code regularization loss, computed with the Kullback-Leibler divergence
    KLD = -0.5 * torch.sum(1 + logvar - mu.pow(2) - logvar.exp())
    # Return the total loss
    return BCE + KLD

4 Creating the model

# Initialize parameters
input_dim = 784
hidden_dim = 128
latent_dim = 32

model = VAE(input_dim, hidden_dim, latent_dim)
print(model)
'''
VAE(
  (fc1): Linear(in_features=784, out_features=128, bias=True)
  (fc2): Linear(in_features=128, out_features=64, bias=True)
  (fc3): Linear(in_features=32, out_features=128, bias=True)
  (fc4): Linear(in_features=128, out_features=784, bias=True)
)
'''

5 Data

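num_epochs = 50
batch_size = 128
learning_rate = 1e-3

# Keep pixel values in [0, 1]: binary_cross_entropy expects targets in that range,
# and the decoder's sigmoid output lies in (0, 1). Normalize([0.5], [0.5]) would
# map the inputs to [-1, 1], so it is dropped here.
img_transform = transforms.Compose([
    transforms.ToTensor(),
])

# download=True fetches MNIST into ./data if it is not already there
dataset = MNIST('./data', transform=img_transform, download=True)
dataloader = DataLoader(dataset, batch_size=batch_size, shuffle=True)

optimizer = optim.Adam(model.parameters(), lr=learning_rate)

6 Training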

for epoch in range(num_epochs):
    model.train()
    train_loss = 0
    for batch_idx, data in enumerate(dataloader):
        img, _ = data
        # Flatten each 28x28 image into a 784-dimensional vector
        img = img.view(img.size(0), -1)
        optimizer.zero_grad()
        recon_batch, mu, logvar = model(img)
        loss = loss_function(recon_batch, img, mu, logvar)
        loss.backward()
        # .item() returns the Python float directly; going through .data is no longer needed
        train_loss += loss.item()
        optimizer.step()