Hadoop Framework: Configuring a Highly Available HDFS Environment

I. HDFS High Availability

1. Overview

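HDFS high availability (HA) keeps the cluster serving requests when a single node, or a small number of nodes, fails. It removes the NameNode as a single point of failure by configuring two NameNodes, one Active and one Standby, so that the Standby acts as a hot backup. If the Active node fails, the NameNode role can be switched quickly to the other node.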

2. How it works

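- HA is built on two NameNodes and relies on a shared edit log (Edits) and a ZooKeeper cluster;

- each NameNode host runs a ZKFailoverController (ZKFC) process that monitors the state of the local NameNode;

- each NameNode host, through its ZKFC, maintains a persistent session with the ZooKeeper cluster;

- if the Active node crashes, its session expires and ZooKeeper notifies the ZKFC on the Standby NameNode's host;

- the ZKFC detects the failure and confirms that the failed node can no longer serve;

- the ZKFC then tells the Standby NameNode to transition to Active and continue serving.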

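ZooKeeper is central to the big-data stack: it coordinates the work of different components and maintains and propagates shared data. The automatic failover described above, for example, depends on ZooKeeper.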
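To make the election step concrete, here is a deliberately simplified shell model of the decision each ZKFC makes. It is not Hadoop's ZKFailoverController code: the labels `HEALTHY`/`UNHEALTHY` and `free`/`held-by-me`/`held-by-other` are hypothetical names for "health of the local NameNode" and "who currently holds the Active lock in ZooKeeper". In Hadoop's design that lock is an ephemeral znode, so it disappears when the Active side's ZooKeeper session expires, which is what lets the other ZKFC take over after fencing the old Active.

```shell
#!/usr/bin/env bash
# Simplified, illustrative model of a ZKFC decision (NOT the real ZKFC code).
#   $1 = health of the local NameNode: HEALTHY | UNHEALTHY   (hypothetical labels)
#   $2 = Active lock in ZooKeeper:     free | held-by-me | held-by-other
zkfc_action() {
  local health="$1" lock="$2"
  if [ "$health" != "HEALTHY" ]; then
    # An unhealthy NameNode must give up the lock and never try to take it.
    if [ "$lock" = "held-by-me" ]; then
      echo "release-lock"
    else
      echo "stay-standby"
    fi
    return 0
  fi
  case "$lock" in
    free)          echo "become-active" ;;  # grab lock, fence old Active, promote
    held-by-me)    echo "stay-active" ;;
    held-by-other) echo "stay-standby" ;;
    *)             echo "unknown lock state: $lock" >&2; return 1 ;;
  esac
}
```

For example, `zkfc_action HEALTHY free` prints `become-active`, which corresponds to the Standby taking over once the old Active's session has expired.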

II. HDFS HA Setup

1. Cluster layout

| Host | HDFS | YARN | Single-instance service | Shared edits | ZooKeeper |
|---|---|---|---|---|---|
| hop01 | DataNode | NodeManager | NameNode | JournalNode | ZK-hop01 |
| hop02 | DataNode | NodeManager | ResourceManager | JournalNode | ZK-hop02 |
| hop03 | DataNode | NodeManager | SecondaryNameNode | JournalNode | ZK-hop03 |

2. Configure the JournalNodes

Create the directory:

```shell
[root@hop01 opt]# mkdir hopHA
```

Copy the Hadoop directory:

```shell
cp -r /opt/hadoop2.7/ /opt/hopHA/
```

Configure core-site.xml:

```xml
<configuration>
  <!-- Clients address the HA nameservice, not a single host -->
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://mycluster</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/opt/hopHA/hadoop2.7/data/tmp</value>
  </property>
</configuration>
```
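Hand-editing these files is where closing tags tend to get mangled. One option is to generate them; the sketch below uses two hypothetical helpers, `xml_property` and `write_core_site` (not part of Hadoop), to emit the same two properties shown above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print one Hadoop <property> element.
xml_property() {
  printf '<property>\n  <name>%s</name>\n  <value>%s</value>\n</property>\n' "$1" "$2"
}

# Hypothetical helper: write the core-site.xml used in this article to $1.
write_core_site() {
  local out="$1"
  {
    echo '<?xml version="1.0" encoding="UTF-8"?>'
    echo '<configuration>'
    xml_property fs.defaultFS hdfs://mycluster
    xml_property hadoop.tmp.dir /opt/hopHA/hadoop2.7/data/tmp
    echo '</configuration>'
  } > "$out"
}
```

Usage: `write_core_site /opt/hopHA/hadoop2.7/etc/hadoop/core-site.xml`. If libxml2 is installed, `xmllint --noout` on the result is a quick well-formedness check.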

Configure hdfs-site.xml by adding the following properties inside `<configuration>`:

```xml
<!-- Logical name of the HA nameservice -->
<property>
  <name>dfs.nameservices</name>
  <value>mycluster</value>
</property>
<!-- IDs of the two NameNodes in the nameservice -->
<property>
  <name>dfs.ha.namenodes.mycluster</name>
  <value>nn1,nn2</value>
</property>
<!-- RPC address of each NameNode -->
<property>
  <name>dfs.namenode.rpc-address.mycluster.nn1</name>
  <value>hop01:9000</value>
</property>
<property>
  <name>dfs.namenode.rpc-address.mycluster.nn2</name>
  <value>hop02:9000</value>
</property>
<!-- Web UI address of each NameNode -->
<property>
  <name>dfs.namenode.http-address.mycluster.nn1</name>
  <value>hop01:50070</value>
</property>
<property>
  <name>dfs.namenode.http-address.mycluster.nn2</name>
  <value>hop02:50070</value>
</property>
<!-- JournalNodes that hold the shared edit log -->
<property>
  <name>dfs.namenode.shared.edits.dir</name>
  <value>qjournal://hop01:8485;hop02:8485;hop03:8485/mycluster</value>
</property>
<!-- Fence the old Active over SSH during failover -->
<property>
  <name>dfs.ha.fencing.methods</name>
  <value>sshfence</value>
</property>
<property>
  <name>dfs.ha.fencing.ssh.private-key-files</name>
  <value>/root/.ssh/id_rsa</value>
</property>
<!-- Local directory where each JournalNode stores edits -->
<property>
  <name>dfs.journalnode.edits.dir</name>
  <value>/opt/hopHA/hadoop2.7/data/jn</value>
</property>
<!-- Disable HDFS permission checking -->
<property>
  <name>dfs.permissions.enable</name>
  <value>false</value>
</property>
<!-- Class clients use to find the Active NameNode -->
<property>
  <name>dfs.client.failover.proxy.provider.mycluster</name>
  <value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>
</property>
```
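The `dfs.namenode.shared.edits.dir` value has a fixed shape: `qjournal://` followed by `host:port` pairs separated by semicolons, then `/` and the nameservice ID. Edits must reach a majority of JournalNodes, which is why an odd number (here three) is used. A minimal sketch that builds the URI from a host list; `build_qjournal_uri` and the `JN_PORT` override are hypothetical names, and 8485 matches the port used above.

```shell
#!/usr/bin/env bash
# Build the qjournal:// URI for dfs.namenode.shared.edits.dir.
#   $1   = nameservice ID (e.g. mycluster)
#   $2.. = JournalNode hosts
# JN_PORT (hypothetical override) defaults to 8485, the port used in this setup.
build_qjournal_uri() {
  local nameservice="$1"; shift
  local port="${JN_PORT:-8485}"
  local hosts="" h
  for h in "$@"; do
    # Prepend ";" only when something is already in the list.
    hosts="${hosts:+$hosts;}$h:$port"
  done
  printf 'qjournal://%s/%s\n' "$hosts" "$nameservice"
}
```

Running `build_qjournal_uri mycluster hop01 hop02 hop03` reproduces the value in the configuration above.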

Start the JournalNode service on each node in turn (hop01, hop02 and hop03). On hop01:

```shell
[root@hop01 hadoop2.7]# pwd
/opt/hopHA/hadoop2.7
[root@hop01 hadoop2.7]# sbin/hadoop-daemon.sh start journalnode
```
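Instead of logging in to each node, the JournalNodes can be started from one machine. This is a sketch assuming passwordless SSH as root (the `sshfence` setup already requires SSH keys) and the same install path on every host; `start_journalnodes`, `HADOOP_HA_HOME` and `DRY_RUN` are hypothetical names.

```shell
#!/usr/bin/env bash
# Start a JournalNode on each given host over SSH (hypothetical helper).
# Assumes passwordless SSH and an identical install path on all hosts.
# DRY_RUN=1 only prints the commands.
HADOOP_HA_HOME="${HADOOP_HA_HOME:-/opt/hopHA/hadoop2.7}"

start_journalnodes() {
  local h cmd
  cmd="cd $HADOOP_HA_HOME && sbin/hadoop-daemon.sh start journalnode"
  for h in "$@"; do
    if [ "${DRY_RUN:-0}" = "1" ]; then
      echo "ssh $h '$cmd'"
    else
      ssh "$h" "$cmd" || { echo "failed on $h" >&2; return 1; }
    fi
  done
}
```

Usage: `start_journalnodes hop01 hop02 hop03`. Note that a non-interactive SSH shell may not load your login profile, so `JAVA_HOME` should be set in `hadoop-env.sh`.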

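Delete the old data under hopHA (the data/ and logs/ directories copied from the original installation):

```shell
[root@hop01 hadoop2.7]# rm -rf data/ logs/
```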

On NN1, format and start the NameNode:

```shell
[root@hop01 hadoop2.7]# pwd
/opt/hopHA/hadoop2.7
bin/hdfs namenode -format
sbin/hadoop-daemon.sh start namenode
```

On NN2, sync the metadata from NN1:

```shell
[root@hop02 hadoop2.7]# bin/hdfs namenode -bootstrapStandby
```

Start the NameNode on NN2:

```shell
[root@hop02 hadoop2.7]# sbin/hadoop-daemon.sh start namenode
```

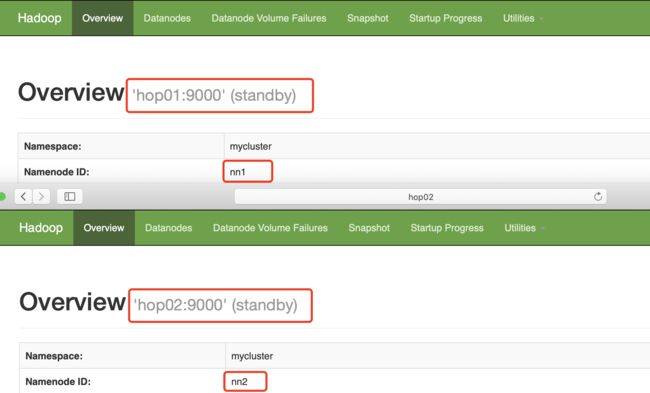

Check the current state.

Start all DataNodes from NN1:

```shell
[root@hop01 hadoop2.7]# sbin/hadoop-daemons.sh start datanode
```

Switch NN1 to Active and verify:

```shell
[root@hop01 hadoop2.7]# bin/hdfs haadmin -transitionToActive nn1
[root@hop01 hadoop2.7]# bin/hdfs haadmin -getServiceState nn1
active
```
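With two NameNodes it is handy to ask "which one is Active right now?" rather than checking each ID by hand. A minimal sketch built on `hdfs haadmin -getServiceState`; the function name `find_active_nn` and the `HDFS_BIN` override (useful for plugging in a stub) are hypothetical.

```shell
#!/usr/bin/env bash
# Print the first NameNode ID whose state is "active"; return 1 if none is.
# HDFS_BIN (hypothetical) defaults to bin/hdfs, run from /opt/hopHA/hadoop2.7.
find_active_nn() {
  local hdfs="${HDFS_BIN:-bin/hdfs}" id state
  for id in "$@"; do
    # -getServiceState prints "active" or "standby"; errors such as a
    # NameNode that is down are silenced and the loop moves on.
    state=$("$hdfs" haadmin -getServiceState "$id" 2>/dev/null)
    if [ "$state" = "active" ]; then
      echo "$id"
      return 0
    fi
  done
  return 1
}
```

Usage: `find_active_nn nn1 nn2` should print `nn1` after the transition above.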

3. Configure automatic failover

Add the following to hdfs-site.xml and sync it across the cluster:

```xml
<property>
  <name>dfs.ha.automatic-failover.enabled</name>
  <value>true</value>
</property>
```

Add the following to core-site.xml and sync it across the cluster:

```xml
<property>
  <name>ha.zookeeper.quorum</name>
  <value>hop01:2181,hop02:2181,hop03:2181</value>
</property>
```
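"Sync across the cluster" just means every node must end up with identical copies of the edited files. A sketch of one way to do it with `scp`, assuming passwordless SSH and the standard `etc/hadoop` config directory under the install path; `sync_conf`, `CONF_DIR` and `DRY_RUN` are hypothetical names.

```shell
#!/usr/bin/env bash
# Copy core-site.xml and hdfs-site.xml from this node to the given hosts.
# Assumes passwordless SSH/scp and identical paths everywhere.
# DRY_RUN=1 only prints the scp commands.
CONF_DIR="${CONF_DIR:-/opt/hopHA/hadoop2.7/etc/hadoop}"

sync_conf() {
  local h f
  for h in "$@"; do
    for f in core-site.xml hdfs-site.xml; do
      if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "scp $CONF_DIR/$f $h:$CONF_DIR/"
      else
        scp "$CONF_DIR/$f" "$h:$CONF_DIR/" || return 1
      fi
    done
  done
}
```

Run it on hop01 as `sync_conf hop02 hop03`.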

Stop all HDFS services:

```shell
[root@hop01 hadoop2.7]# sbin/stop-dfs.sh
```

Start the ZooKeeper cluster (on each ZooKeeper node):

```shell
/opt/zookeeper3.4/bin/zkServer.sh start
```

Initialize the HA state in ZooKeeper from hop01:

```shell
[root@hop01 hadoop2.7]# bin/hdfs zkfc -formatZK
```

Start HDFS from hop01:

```shell
[root@hop01 hadoop2.7]# sbin/start-dfs.sh
```

Start the ZKFailoverController (ZKFC) on the NameNode nodes.

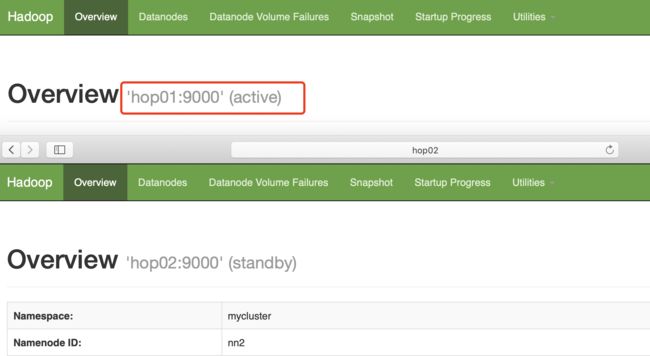

Whichever of hop01 and hop02 starts its ZKFC first becomes Active; here hop02 is started first, then hop01:

```shell
[hadoop2.7]# sbin/hadoop-daemon.sh start zkfc
```

Kill the NameNode process on hop02 (14422 was its PID in this run; look up yours with `jps`):

```shell
kill -9 14422
```
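Because the PID differs on every run, it is safer to read it from `jps`, which prints one `PID ClassName` pair per Java process. The sketch below matches the class name exactly, so `SecondaryNameNode` or `DFSZKFailoverController` lines are not picked up; `namenode_pid` and the `JPS_BIN` override are hypothetical names.

```shell
#!/usr/bin/env bash
# Print the PID of the local NameNode, or nothing if it is not running.
# JPS_BIN (hypothetical) defaults to jps and can point at a stub for testing.
namenode_pid() {
  "${JPS_BIN:-jps}" | awk '$2 == "NameNode" { print $1; exit }'
}
```

Usage on hop02: `pid=$(namenode_pid) && [ -n "$pid" ] && kill -9 "$pid"`.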

Wait a moment, then check the state of hop01:

```shell
[root@hop01 hadoop2.7]# bin/hdfs haadmin -getServiceState nn1
active
```
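"Wait a moment" can be replaced by a bounded poll, which is convenient when repeating the failover test. A sketch under the same assumptions as before (run from the Hadoop directory, `bin/hdfs haadmin` available); `wait_for_active`, `HDFS_BIN` and `POLL_INTERVAL` are hypothetical names.

```shell
#!/usr/bin/env bash
# Poll a NameNode until it reports "active", for at most $2 attempts.
# HDFS_BIN defaults to bin/hdfs; POLL_INTERVAL (seconds) defaults to 2.
wait_for_active() {
  local id="$1" attempts="${2:-30}" hdfs="${HDFS_BIN:-bin/hdfs}"
  local interval="${POLL_INTERVAL:-2}" i state
  for ((i = 1; i <= attempts; i++)); do
    state=$("$hdfs" haadmin -getServiceState "$id" 2>/dev/null)
    if [ "$state" = "active" ]; then
      echo "$id is active (attempt $i)"
      return 0
    fi
    sleep "$interval"
  done
  echo "$id not active after $attempts attempts" >&2
  return 1
}
```

Usage after killing hop02's NameNode: `wait_for_active nn1 30`.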

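III. YARN High Availability

1. Overview

The basic flow and approach are similar to HDFS HA and also rely on the ZooKeeper cluster: when the Active ResourceManager fails, the Standby switches to Active and keeps serving.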

2. Configuration

The demo again uses hop01 and hop02.

Configure yarn-site.xml and sync it to all nodes in the cluster:

```xml
<configuration>
  <!-- Auxiliary service for the MapReduce shuffle -->
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <!-- Enable ResourceManager HA -->
  <property>
    <name>yarn.resourcemanager.ha.enabled</name>
    <value>true</value>
  </property>
  <!-- Logical ID of this RM HA cluster -->
  <property>
    <name>yarn.resourcemanager.cluster-id</name>
    <value>cluster-yarn01</value>
  </property>
  <!-- IDs of the two ResourceManagers and their hosts -->
  <property>
    <name>yarn.resourcemanager.ha.rm-ids</name>
    <value>rm1,rm2</value>
  </property>
  <property>
    <name>yarn.resourcemanager.hostname.rm1</name>
    <value>hop01</value>
  </property>
  <property>
    <name>yarn.resourcemanager.hostname.rm2</name>
    <value>hop02</value>
  </property>
  <!-- ZooKeeper quorum used by the ResourceManagers -->
  <property>
    <name>yarn.resourcemanager.zk-address</name>
    <value>hop01:2181,hop02:2181,hop03:2181</value>
  </property>
  <!-- Recover RM state after a restart or failover -->
  <property>
    <name>yarn.resourcemanager.recovery.enabled</name>
    <value>true</value>
  </property>
  <!-- Keep the recoverable RM state in ZooKeeper -->
  <property>
    <name>yarn.resourcemanager.store.class</name>
    <value>org.apache.hadoop.yarn.server.resourcemanager.recovery.ZKRMStateStore</value>
  </property>
</configuration>
```
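A forgotten key is an easy mistake when the file is copied between nodes. The sketch below greps a yarn-site.xml for the RM HA keys used in this article's configuration; it is a plain text check, not an XML parser, and `check_rm_ha_keys` and `REQUIRED_RM_HA_KEYS` are hypothetical names.

```shell
#!/usr/bin/env bash
# Keys this article's RM HA configuration relies on.
REQUIRED_RM_HA_KEYS=(
  yarn.resourcemanager.ha.enabled
  yarn.resourcemanager.cluster-id
  yarn.resourcemanager.ha.rm-ids
  yarn.resourcemanager.hostname.rm1
  yarn.resourcemanager.hostname.rm2
  yarn.resourcemanager.zk-address
)

# Print "missing: <key>" for each absent key; return 1 if any is missing.
check_rm_ha_keys() {
  local file="$1" key missing=0
  for key in "${REQUIRED_RM_HA_KEYS[@]}"; do
    # -F: match the text literally (the dots are not regex wildcards).
    if ! grep -qF "<name>${key}</name>" "$file"; then
      echo "missing: $key"
      missing=1
    fi
  done
  return "$missing"
}
```

Usage: `check_rm_ha_keys etc/hadoop/yarn-site.xml` on each node after syncing.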

Start the JournalNode on each node again:

```shell
sbin/hadoop-daemon.sh start journalnode
```

Format and start the NameNode on NN1:

```shell
[root@hop01 hadoop2.7]# bin/hdfs namenode -format
[root@hop01 hadoop2.7]# sbin/hadoop-daemon.sh start namenode
```

On NN2, sync the metadata from NN1:

```shell
[root@hop02 hadoop2.7]# bin/hdfs namenode -bootstrapStandby
```

Start the DataNodes across the cluster:

```shell
[root@hop01 hadoop2.7]# sbin/hadoop-daemons.sh start datanode
```

Make NN1 Active: start the ZKFC on hop01 first, then on hop02.

```shell
[root@hop01 hadoop2.7]# sbin/hadoop-daemon.sh start zkfc
```

Start YARN from hop01:

```shell
[root@hop01 hadoop2.7]# sbin/start-yarn.sh
```

Start the ResourceManager on hop02:

```shell
[root@hop02 hadoop2.7]# sbin/yarn-daemon.sh start resourcemanager
```

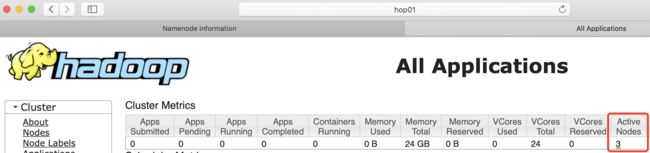

Check the status:

```shell
[root@hop01 hadoop2.7]# bin/yarn rmadmin -getServiceState rm1
```
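To see both ResourceManagers at once, the same command can be looped over the IDs from `yarn.resourcemanager.ha.rm-ids`. A sketch; `rm_states` and the `YARN_BIN` override are hypothetical names, and an ID whose query fails is reported as `unreachable`.

```shell
#!/usr/bin/env bash
# Print "<rm-id> <state>" for each ResourceManager ID.
# YARN_BIN (hypothetical) defaults to bin/yarn; override it with a stub to test.
rm_states() {
  local yarn="${YARN_BIN:-bin/yarn}" id state
  for id in "$@"; do
    # The assignment's exit status is the command's, so failures are caught.
    state=$("$yarn" rmadmin -getServiceState "$id" 2>/dev/null) || state="unreachable"
    echo "$id $state"
  done
}
```

Usage: `rm_states rm1 rm2`, expected to show one `active` and one `standby` line when both ResourceManagers are up.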