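Installing Hadoop in Pseudo-Distributed Mode on CentOS 7

Prerequisites

A CentOS 7 server edition environment

Software versions

jdk1.8

hadoop2.7.3

Preparing the virtual machine

Network connectivity

The machine must be able to reach the Internet. Test it with ping, for example:

ping baidu.com

If the ping fails, edit the following file (the interface name, ens33 here, may differ on your machine):

vi /etc/sysconfig/network-scripts/ifcfg-ens33

Change ONBOOT=no to ONBOOT=yes

Then restart the network service or reboot the machine.

Restart the network service

systemctl restart network

Reboot the machine

reboot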
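The ONBOOT edit can also be scripted instead of being done by hand in vi. The sketch below uses a hypothetical helper, set_kv (it is not part of CentOS), and runs against a scratch file. Pointing it at the real ifcfg-ens33 would require root.

```shell
# set_kv FILE KEY VALUE: set KEY=VALUE in an ifcfg-style file.
# Replaces an existing KEY= line, or appends one if the key is absent.
# Hypothetical helper for illustration; values must not contain "|".
set_kv() {
    file=$1 key=$2 value=$3
    if grep -q "^${key}=" "$file"; then
        # Rewrite the existing assignment in place, keeping a .bak backup.
        sed -i.bak "s|^${key}=.*|${key}=${value}|" "$file"
    else
        printf '%s=%s\n' "$key" "$value" >> "$file"
    fi
}

# Demo on a scratch file rather than the real interface file.
demo=$(mktemp)
printf 'TYPE=Ethernet\nONBOOT=no\n' > "$demo"
set_kv "$demo" ONBOOT yes
cat "$demo"
```

The same helper would also cover the BOOTPROTO, IPADDR, GATEWAY, and DNS1 keys used for the static IP later in this guide.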

Changing the hostname

Change the hostname from localhost to node1 as follows:

[root@localhost ~]# hostname
localhost.localdomain
[root@localhost ~]# hostnamectl set-hostname node1
[root@localhost ~]# hostname
node1
# Before the reboot, the hostname shown in the prompt has not changed yet
[root@localhost ~]# reboot
...
Connecting to 192.168.193.140:22...
Connection established.
To escape to local shell, press 'Ctrl+Alt+]'.
WARNING! The remote SSH server rejected X11 forwarding request.
Last login: Tue Mar 8 00:55:08 2022 from 192.168.193.1
[root@node1 ~]#
# After the reboot, the hostname in the prompt has changed

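Adding a regular user

The root user has unrestricted privileges, so a mistake made as root can cause real damage. For that reason Hadoop is normally not installed as root; we create a regular user for the installation instead, as follows.

Create the new user (named hadoop here) and set its password. You are asked to type the password twice, and nothing is echoed while you type. This is a Linux security measure; just type the password and press Enter.

[root@node1 ~]# adduser hadoop
[root@node1 ~]# passwd hadoop
Changing password for user hadoop.
New password:
BAD PASSWORD: The password is shorter than 8 characters
Retype new password:
passwd: all authentication tokens updated successfully.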

Granting sudo privileges to the regular user

[root@node1 ~]# chmod -v u+w /etc/sudoers
mode of ‘/etc/sudoers’ changed from 0440 (r--r-----) to 0640 (rw-r-----)
[root@node1 ~]# sudo vim /etc/sudoers
# Below the line "%wheel ALL=(ALL) ALL", add the following line:
hadoop ALL=(ALL) ALL
[root@node1 ~]# chmod -v u-w /etc/sudoers
mode of ‘/etc/sudoers’ changed from 0640 (rw-r-----) to 0440 (r--r-----)

静态IP(非不要)

因为电脑连接网络不通,CentOS的IP可能会变化,可以设置静态IP来解决。

网关查询:

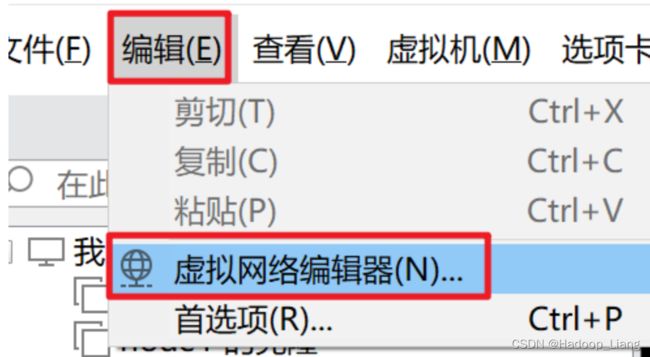

Vmware-->编辑-->虚拟机网络编辑器

注意:这里查询到网关为192.168.193.2,以自己实际查到的网关为准。

设置静态ip

[root@node1 ~]# vi /etc/sysconfig/network-scripts/ifcfg-ens33

修改或添加如下几项:

BOOTPROTO=static IPADDR=192.168.193.140 GATEWAY=192.168.193.2 DNS1=192.168.193.2

注意:

1.GATEWAY和DNS1的值都填写上一步在vmware中实际查到的网关地址。

2.IPADDR为设置的静态IP地址,要与查到的网关处于同一网络,查到的网关所处的网段为192.168.193,IP地址不要与网关一致,最后一位建议设置为128-255之间,这里IP最后一位设置为140。
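The two rules in note 2 can be checked before the interface file is saved. This is a minimal sketch with a hypothetical helper, same_net24, and it assumes a /24 netmask (255.255.255.0), which is not stated in this guide; with a different mask, comparing the first three octets is not sufficient.

```shell
# same_net24 IP GATEWAY: succeed if both addresses share the first three
# octets (a /24 network is ASSUMED) and the two addresses differ.
same_net24() {
    ip=$1 gw=$2
    # ${ip%.*} strips the last octet, leaving the network part.
    [ "$ip" != "$gw" ] && [ "${ip%.*}" = "${gw%.*}" ]
}

same_net24 192.168.193.140 192.168.193.2 && echo "ok: same network as the gateway"
same_net24 192.168.1.140 192.168.193.2 || echo "mismatch: different network"
```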

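Disabling the firewall

Check the firewall status

[root@node1 ~]# systemctl status firewalld
● firewalld.service - firewalld - dynamic firewall daemon
Loaded: loaded (/usr/lib/systemd/system/firewalld.service; disabled; vendor preset: enabled)
Active: inactive (dead)
Docs: man:firewalld(1)
[root@node1 ~]#

inactive (dead) means the firewall is stopped.

If the status is active (running), the firewall is on. Stop it with the following command:

[root@node1 ~]# systemctl stop firewalld

Stopping the service only lasts until the next reboot. To keep the firewall off after a reboot, also run systemctl disable firewalld.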

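Installing Hadoop in pseudo-distributed mode

Logging in as the regular user

Log in to CentOS 7 as the regular user, which is hadoop in this guide.

[hadoop@node1 ~]$

Mapping the IP address to the hostname

[hadoop@node1 ~]$ sudo vim /etc/hosts

Append the following line to the end of the file: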

192.168.193.140 node1
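If this step is repeated, for example after changing the IP address, the hosts file can end up with duplicate entries. The sketch below appends the mapping only when the hostname is not mapped yet. add_host is a hypothetical helper and the demo runs on a scratch file; the real /etc/hosts can only be written as root.

```shell
# add_host FILE IP NAME: append "IP NAME" unless NAME is already mapped.
# Hypothetical helper for illustration.
add_host() {
    file=$1 ip=$2 name=$3
    # Look for NAME as a whole field (not a substring) on non-comment lines.
    if awk -v n="$name" '$1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 } END { exit !found }' "$file"; then
        echo "$name is already present in $file"
    else
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
}

# Demo on a scratch file standing in for /etc/hosts.
demo=$(mktemp)
printf '127.0.0.1 localhost\n' > "$demo"
add_host "$demo" 192.168.193.140 node1
add_host "$demo" 192.168.193.140 node1   # second call changes nothing
cat "$demo"
```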

Preparing the directories

Create two directories, installfile and soft. installfile holds the installation packages and soft is where the software is installed.

[hadoop@node1 ~]$ mkdir installfile
[hadoop@node1 ~]$ mkdir soft
[hadoop@node1 ~]$ ls
installfile soft

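Installing and configuring the JDK

Download the JDK package jdk-8u271-linux-x64.tar.gz from the official website and upload it to the installfile directory on the Linux machine.

Change to the directory that holds the package

cd ~/installfile

Extract the package

[hadoop@node1 installfile]$ tar -zxvf jdk-8u271-linux-x64.tar.gz -C ~/soft/

Create a symbolic link

# Change to the extraction directory
[hadoop@node1 installfile]$ cd ~/soft/
[hadoop@node1 soft]$ ls
jdk1.8.0_271
# Create the symbolic link
[hadoop@node1 soft]$ ln -s jdk1.8.0_271 jdk
# List the symbolic link
[hadoop@node1 soft]$ ls
jdk jdk1.8.0_271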
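One benefit of the symbolic link is that a later upgrade only needs the link to be repointed, while JAVA_HOME keeps referring to the same path. The sketch below shows this in a scratch directory; link_version is a hypothetical helper, and the second version directory is made up for the demo.

```shell
# link_version DIR TARGET LINK: point DIR/LINK at DIR/TARGET, replacing any
# existing link. -f overwrites, and -n stops ln from following an existing
# link into the directory it points to.
link_version() {
    ( cd "$1" && ln -sfn "$2" "$3" )
}

demo=$(mktemp -d)
mkdir "$demo/jdk1.8.0_271" "$demo/jdk1.8.0_999"   # jdk1.8.0_999 is made up
link_version "$demo" jdk1.8.0_271 jdk
link_version "$demo" jdk1.8.0_999 jdk             # simulate an upgrade
readlink "$demo/jdk"
```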

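Installing nano (optional; I simply prefer nano for editing)

sudo yum install nano -y

nano is a text editor that serves the same purpose as vi. If you are not comfortable with nano, use vi wherever nano appears below.

Using nano: run nano followed by the file name, type your changes, press Ctrl+O and then Enter to confirm the file name and save, and press Ctrl+X to exit.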

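Configuring the environment variables

The environment variables are set in the .bashrc file here. They can also be set in /etc/profile.

[hadoop@node1 soft]$ nano ~/.bashrc

Append the following lines to the end of the file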

export JAVA_HOME=~/soft/jdk

export JRE_HOME=${JAVA_HOME}/jre

export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib

export PATH=${JAVA_HOME}/bin:$PATH

Apply the configuration immediately

[hadoop@node1 soft]$ source ~/.bashrc
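Editing .bashrc by hand more than once tends to leave duplicate export lines. A possible alternative is to append each line only if it is not there yet. This is a sketch with a hypothetical helper, append_once, shown on a scratch file instead of the real ~/.bashrc.

```shell
# append_once FILE LINE: append LINE to FILE unless an identical line exists.
# -x matches whole lines and -F treats LINE as a fixed string, so characters
# such as $ and { in the export lines are not read as a regex.
append_once() {
    grep -qxF -- "$2" "$1" 2>/dev/null || printf '%s\n' "$2" >> "$1"
}

demo=$(mktemp)
append_once "$demo" 'export JAVA_HOME=~/soft/jdk'
append_once "$demo" 'export PATH=${JAVA_HOME}/bin:$PATH'
append_once "$demo" 'export JAVA_HOME=~/soft/jdk'   # duplicate, skipped
cat "$demo"
```

The single quotes matter: they keep the shell from expanding the variables at the time the line is written, so the file receives the literal text.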

Verification

[hadoop@node1 soft]$ java -version
java version "1.8.0_271"
Java(TM) SE Runtime Environment (build 1.8.0_271-b09)
Java HotSpot(TM) 64-Bit Server VM (build 25.271-b09, mixed mode)

If the version number is printed, the JDK is installed and configured correctly.

Installing and configuring Hadoop

Download the Hadoop package and upload it to a directory on the Linux machine, for example ~/installfile

[hadoop@node1 soft]$ cd ~/installfile/

Extract the package

[hadoop@node1 installfile]$ tar -zxvf hadoop-2.7.3.tar.gz -C ~/soft/

Create a symbolic link

[hadoop@node1 installfile]$ cd ~/soft/
[hadoop@node1 soft]$ ls
hadoop-2.7.3 jdk jdk1.8.0_271
# Create the symbolic link
[hadoop@node1 soft]$ ln -s hadoop-2.7.3 hadoop
[hadoop@node1 soft]$ ls
hadoop hadoop-2.7.3 jdk jdk1.8.0_271

Configuring the environment variables

nano ~/.bashrc

Append the following to the end of the file:

export HADOOP_HOME=~/soft/hadoop
export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

Apply the environment variables immediately

source ~/.bashrc

Verification

[hadoop@node1 soft]$ hadoop version
Hadoop 2.7.3
Subversion https://git-wip-us.apache.org/repos/asf/hadoop.git -r baa91f7c6bc9cb92be5982de4719c1c8af91ccff
Compiled by root on 2016-08-18T01:41Z
Compiled with protoc 2.5.0
From source with checksum 2e4ce5f957ea4db193bce3734ff29ff4
This command was run using /home/hadoop/soft/hadoop-2.7.3/share/hadoop/common/hadoop-common-2.7.3.jar

If the Hadoop version is printed, the environment variables are set correctly.

Set up passwordless SSH login. Run ssh-keygen -t rsa, then press Enter three times in a row to accept the defaults.

[hadoop@node1 soft]$ ssh-keygen -t rsa
Generating public/private rsa key pair.
Enter file in which to save the key (/home/hadoop/.ssh/id_rsa):
Created directory '/home/hadoop/.ssh'.
Enter passphrase (empty for no passphrase):
Enter same passphrase again:
Your identification has been saved in /home/hadoop/.ssh/id_rsa.
Your public key has been saved in /home/hadoop/.ssh/id_rsa.pub.
The key fingerprint is:
SHA256:sXZw/coSYm19gGSLdOLQGeskm+vPVr6lXENvmmQ8eeo hadoop@node1
The key's randomart image is:
+---[RSA 2048]----+
| ..+o+ |
| +oB + |
| . B + o |
| * * . o |
| o S = o o |
| + +.= = |
| . o. % + |
| . ....B O |
| .oo +oE |
+----[SHA256]-----+

List the generated key pair

[hadoop@node1 soft]$ ls ~/.ssh/
id_rsa id_rsa.pub

Install the public key. After running the command, type yes when prompted and press Enter, then enter the password of the hadoop user.

[hadoop@node1 soft]$ ssh-copy-id node1
/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/home/hadoop/.ssh/id_rsa.pub"
The authenticity of host 'node1 (192.168.193.140)' can't be established.
ECDSA key fingerprint is SHA256:r4LfTp6UJiZJbbxCrCaF8ouB1XEySe9c1/5d+7a39+E.
ECDSA key fingerprint is MD5:08:e4:65:b1:9a:16:5d:fd:f8:ac:13:fc:4c:88:f4:8e.
Are you sure you want to continue connecting (yes/no)? yes
/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed
/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys
hadoop@node1's password:
Number of key(s) added: 1
Now try logging into the machine, with: "ssh 'node1'"
and check to make sure that only the key(s) you wanted were added.
[hadoop@node1 soft]$

Look at the generated authorization file, authorized_keys

[hadoop@node1 soft]$ ls ~/.ssh/
authorized_keys id_rsa id_rsa.pub known_hosts
# Show the contents of the authorization file authorized_keys
[hadoop@node1 soft]$ cat ~/.ssh/authorized_keys
ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQCuG6EHCrL93O355sr7dkLO8S75QqizvCXNQztwY0F2mrVmUZNk9BleNRE1jAirOQccFTR11SCGIhwa1klPYvGwAtY66YG+y47tsKkLc9VHoIvfpwjG3MzSwuAJhhsnv2UOIGX8kkP0/cSVT1YyfM3cJO0OzN9gB4vTE/0VUJRgGk43qO3v84kWIPTZrJlzr8wz5GSD3quteRea6Gxv7EPksWfP18sZDsynvYZ8zvXzmiv1Qf1g5Pcc52RhF0ghsZoVHB03KAPX72d4N33budSy7jnRTz81NSqnTZ8ajLOSIQVxeFk6tJwHOLW/uDQGy124PaYkiElrVyHesxJbC0Vl hadoop@node1
# Show the public key
[hadoop@node1 soft]$ cat ~/.ssh/id_rsa.pub
ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQCuG6EHCrL93O355sr7dkLO8S75QqizvCXNQztwY0F2mrVmUZNk9BleNRE1jAirOQccFTR11SCGIhwa1klPYvGwAtY66YG+y47tsKkLc9VHoIvfpwjG3MzSwuAJhhsnv2UOIGX8kkP0/cSVT1YyfM3cJO0OzN9gB4vTE/0VUJRgGk43qO3v84kWIPTZrJlzr8wz5GSD3quteRea6Gxv7EPksWfP18sZDsynvYZ8zvXzmiv1Qf1g5Pcc52RhF0ghsZoVHB03KAPX72d4N33budSy7jnRTz81NSqnTZ8ajLOSIQVxeFk6tJwHOLW/uDQGy124PaYkiElrVyHesxJbC0Vl hadoop@node1
# The contents of the authorization file match the public key.
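Comparing two long key strings by eye is error-prone, so the comparison can be left to grep. The sketch below uses a hypothetical helper, key_installed, and placeholder key text in a scratch directory; on the real machine the two arguments would be ~/.ssh/id_rsa.pub and ~/.ssh/authorized_keys.

```shell
# key_installed PUBKEY_FILE AUTH_FILE: succeed if the public key line appears
# verbatim in the authorization file. -f reads the pattern from PUBKEY_FILE,
# -F takes it literally, and -x requires a whole-line match.
key_installed() {
    grep -qxF -f "$1" "$2"
}

demo=$(mktemp -d)
# Placeholder text, not a real key.
echo 'ssh-rsa AAAAexamplekey hadoop@node1' > "$demo/id_rsa.pub"
cp "$demo/id_rsa.pub" "$demo/authorized_keys"
if key_installed "$demo/id_rsa.pub" "$demo/authorized_keys"; then
    echo "public key is authorized"
fi
```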

Verifying passwordless login

# Log in to node1 over SSH; no password is requested. Note that the directory in the prompt changes from soft to ~. Run exit to leave the SSH session.
[hadoop@node1 soft]$ ssh node1
Last login: Tue Mar 8 05:54:02 2022 from 192.168.193.1
[hadoop@node1 ~]$ exit
logout
Connection to node1 closed.
[hadoop@node1 soft]$
# Logging in by IP address works as well
[hadoop@node1 soft]$ ssh 192.168.193.140
Last login: Tue Mar 8 06:16:58 2022 from node1
[hadoop@node1 ~]$ exit
logout
Connection to 192.168.193.140 closed.

Configuring Hadoop for pseudo-distributed mode

Change to the Hadoop configuration directory

cd ${HADOOP_HOME}/etc/hadoop

[hadoop@node1 soft]$ cd ${HADOOP_HOME}/etc/hadoop

[hadoop@node1 hadoop]$ ls

capacity-scheduler.xml hadoop-policy.xml kms-log4j.properties ssl-client.xml.example

configuration.xsl hdfs-site.xml kms-site.xml ssl-server.xml.example

container-executor.cfg httpfs-env.sh log4j.properties yarn-env.cmd

core-site.xml httpfs-log4j.properties mapred-env.cmd yarn-env.sh

hadoop-env.cmd httpfs-signature.secret mapred-env.sh yarn-site.xml

hadoop-env.sh httpfs-site.xml mapred-queues.xml.template

hadoop-metrics2.properties kms-acls.xml mapred-site.xml.template

hadoop-metrics.properties kms-env.sh slaves

Configure hadoop-env.sh

[hadoop@node1 hadoop]$ nano hadoop-env.sh

Set JAVA_HOME

export JAVA_HOME=/home/hadoop/soft/jdk

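Configure core-site.xml by adding the following between <configuration> and </configuration>:

<property>
    <name>fs.defaultFS</name>
    <value>hdfs://node1:8020</value>
</property>
<property>
    <name>hadoop.tmp.dir</name>
    <value>/home/hadoop/soft/hadoop/tmp</value>
</property>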
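Every Hadoop setting in these XML files has the same shape: a property element that wraps a name and a value. The sketch below prints that shape from the shell, which may help when the settings are typed by hand. hadoop_property is a hypothetical helper, not a Hadoop tool, and it does no XML escaping, so it only suits plain values like the ones in this guide.

```shell
# hadoop_property NAME VALUE: print one <property> element.
# Hypothetical helper; NAME and VALUE are inserted without XML escaping.
hadoop_property() {
    printf '  <property>\n    <name>%s</name>\n    <value>%s</value>\n  </property>\n' "$1" "$2"
}

# Print the body of a core-site.xml with the two settings used in this guide.
echo '<configuration>'
hadoop_property fs.defaultFS hdfs://node1:8020
hadoop_property hadoop.tmp.dir /home/hadoop/soft/hadoop/tmp
echo '</configuration>'
```

The output goes to the terminal only; nothing is written to the real configuration files.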

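Configure hdfs-site.xml

Likewise, add the following between <configuration> and </configuration>:

<property>
    <name>dfs.replication</name>
    <value>1</value>
</property>

Copy the template file mapred-site.xml.template to mapred-site.xml

[hadoop@node1 hadoop]$ cp mapred-site.xml.template mapred-site.xml

Configure mapred-site.xml

Likewise, add the following between <configuration> and </configuration>:

<property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
</property>

This setting makes MapReduce run on the YARN framework.

Configure yarn-site.xml

Likewise, add the following between <configuration> and </configuration>:

<property>
    <name>yarn.resourcemanager.hostname</name>
    <value>node1</value>
</property>
<property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
</property>

Configure slaves

The slaves file lists the machines that run the slave (worker) daemons.

nano slaves

Replace localhost with the hostname, for example node1:

node1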

Formatting the file system

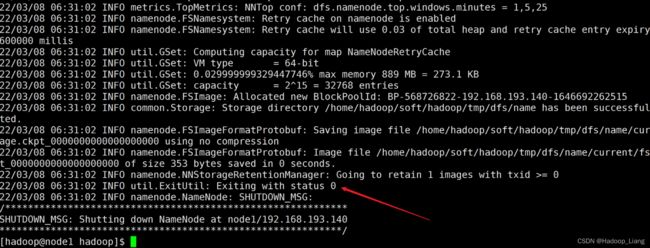

hdfs namenode -format

[hadoop@node1 hadoop]$ hdfs namenode -format
22/03/08 06:31:01 INFO namenode.NameNode: STARTUP_MSG:
/************************************************************
STARTUP_MSG: Starting NameNode
STARTUP_MSG: host = node1/192.168.193.140
STARTUP_MSG: args = [-format]
STARTUP_MSG: version = 2.7.3
STARTUP_MSG: classpath = /home/hadoop/soft/hadoop-2.7.3/etc/hadoop:/home/hadoop/soft/hadoop-2.7.3/share/hadoop/common/lib/jaxb-impl-2.2.3-1.jar:/home/hadoop/soft/hadoop- ... 2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-hs-plugins-2.7.3.jar:/home/hadoop/soft/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.3.jar:/home/hadoop/soft/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.7.3-tests.jar:/home/hadoop/soft/hadoop/contrib/capacity-scheduler/*.jar
STARTUP_MSG: build = https://git-wip-us.apache.org/repos/asf/hadoop.git -r baa91f7c6bc9cb92be5982de4719c1c8af91ccff; compiled by 'root' on 2016-08-18T01:41Z
STARTUP_MSG: java = 1.8.0_271
************************************************************/
22/03/08 06:31:01 INFO namenode.NameNode: registered UNIX signal handlers for [TERM, HUP, INT]
22/03/08 06:31:01 INFO namenode.NameNode: createNameNode [-format]
Formatting using clusterid: CID-911f3db2-a66f-4748-a2bd-bb427d00f2e4
22/03/08 06:31:01 INFO namenode.FSNamesystem: No KeyProvider found.
22/03/08 06:31:01 INFO namenode.FSNamesystem: fsLock is fair:true
22/03/08 06:31:01 INFO blockmanagement.DatanodeManager: dfs.block.invalidate.limit=1000
22/03/08 06:31:01 INFO blockmanagement.DatanodeManager: dfs.namenode.datanode.registration.ip-hostname-check=true
22/03/08 06:31:01 INFO blockmanagement.BlockManager: dfs.namenode.startup.delay.block.deletion.sec is set to 000:00:00:00.000
22/03/08 06:31:01 INFO blockmanagement.BlockManager: The block deletion will start around 2022 Mar 08 06:31:01
22/03/08 06:31:01 INFO util.GSet: Computing capacity for map BlocksMap
22/03/08 06:31:01 INFO util.GSet: VM type = 64-bit
22/03/08 06:31:01 INFO util.GSet: 2.0% max memory 889 MB = 17.8 MB
22/03/08 06:31:01 INFO util.GSet: capacity = 2^21 = 2097152 entries
22/03/08 06:31:02 INFO blockmanagement.BlockManager: dfs.block.access.token.enable=false
22/03/08 06:31:02 INFO blockmanagement.BlockManager: defaultReplication = 1
22/03/08 06:31:02 INFO blockmanagement.BlockManager: maxReplication = 512
22/03/08 06:31:02 INFO blockmanagement.BlockManager: minReplication = 1
22/03/08 06:31:02 INFO blockmanagement.BlockManager: maxReplicationStreams = 2
22/03/08 06:31:02 INFO blockmanagement.BlockManager: replicationRecheckInterval = 3000
22/03/08 06:31:02 INFO blockmanagement.BlockManager: encryptDataTransfer = false
22/03/08 06:31:02 INFO blockmanagement.BlockManager: maxNumBlocksToLog = 1000
22/03/08 06:31:02 INFO namenode.FSNamesystem: fsOwner = hadoop (auth:SIMPLE)
22/03/08 06:31:02 INFO namenode.FSNamesystem: supergroup = supergroup
22/03/08 06:31:02 INFO namenode.FSNamesystem: isPermissionEnabled = true
22/03/08 06:31:02 INFO namenode.FSNamesystem: HA Enabled: false
22/03/08 06:31:02 INFO namenode.FSNamesystem: Append Enabled: true
22/03/08 06:31:02 INFO util.GSet: Computing capacity for map INodeMap
22/03/08 06:31:02 INFO util.GSet: VM type = 64-bit
22/03/08 06:31:02 INFO util.GSet: 1.0% max memory 889 MB = 8.9 MB
22/03/08 06:31:02 INFO util.GSet: capacity = 2^20 = 1048576 entries
22/03/08 06:31:02 INFO namenode.FSDirectory: ACLs enabled? false
22/03/08 06:31:02 INFO namenode.FSDirectory: XAttrs enabled? true
22/03/08 06:31:02 INFO namenode.FSDirectory: Maximum size of an xattr: 16384
22/03/08 06:31:02 INFO namenode.NameNode: Caching file names occuring more than 10 times
22/03/08 06:31:02 INFO util.GSet: Computing capacity for map cachedBlocks
22/03/08 06:31:02 INFO util.GSet: VM type = 64-bit
22/03/08 06:31:02 INFO util.GSet: 0.25% max memory 889 MB = 2.2 MB
22/03/08 06:31:02 INFO util.GSet: capacity = 2^18 = 262144 entries
22/03/08 06:31:02 INFO namenode.FSNamesystem: dfs.namenode.safemode.threshold-pct = 0.9990000128746033
22/03/08 06:31:02 INFO namenode.FSNamesystem: dfs.namenode.safemode.min.datanodes = 0
22/03/08 06:31:02 INFO namenode.FSNamesystem: dfs.namenode.safemode.extension = 30000
22/03/08 06:31:02 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.window.num.buckets = 10
22/03/08 06:31:02 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.num.users = 10
22/03/08 06:31:02 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.windows.minutes = 1,5,25
22/03/08 06:31:02 INFO namenode.FSNamesystem: Retry cache on namenode is enabled
22/03/08 06:31:02 INFO namenode.FSNamesystem: Retry cache will use 0.03 of total heap and retry cache entry expiry time is 600000 millis
22/03/08 06:31:02 INFO util.GSet: Computing capacity for map NameNodeRetryCache
22/03/08 06:31:02 INFO util.GSet: VM type = 64-bit
22/03/08 06:31:02 INFO util.GSet: 0.029999999329447746% max memory 889 MB = 273.1 KB
22/03/08 06:31:02 INFO util.GSet: capacity = 2^15 = 32768 entries
22/03/08 06:31:02 INFO namenode.FSImage: Allocated new BlockPoolId: BP-568726822-192.168.193.140-1646692262515
22/03/08 06:31:02 INFO common.Storage: Storage directory /home/hadoop/soft/hadoop/tmp/dfs/name has been successfully formatted.
22/03/08 06:31:02 INFO namenode.FSImageFormatProtobuf: Saving image file /home/hadoop/soft/hadoop/tmp/dfs/name/current/fsimage.ckpt_0000000000000000000 using no compression
22/03/08 06:31:02 INFO namenode.FSImageFormatProtobuf: Image file /home/hadoop/soft/hadoop/tmp/dfs/name/current/fsimage.ckpt_0000000000000000000 of size 353 bytes saved in 0 seconds.
22/03/08 06:31:02 INFO namenode.NNStorageRetentionManager: Going to retain 1 images with txid >= 0
22/03/08 06:31:02 INFO util.ExitUtil: Exiting with status 0
22/03/08 06:31:02 INFO namenode.NameNode: SHUTDOWN_MSG:
/************************************************************
SHUTDOWN_MSG: Shutting down NameNode at node1/192.168.193.140
************************************************************/
[hadoop@node1 hadoop]$

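If the output contains Exiting with status 0, the format succeeded.

Note: formatting is done only once. After a successful format, do not format again: a second format wipes the NameNode metadata and generates a new cluster ID, which no longer matches the data already held by the DataNode.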
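To avoid formatting twice by accident, a script can check for existing NameNode metadata first. This is a minimal sketch: is_formatted is a hypothetical helper, the directory is the storage directory reported in the format log, and the check relies on a formatted NameNode directory containing a current/VERSION file. The script only prints advice and never runs the format itself.

```shell
# is_formatted NAME_DIR: succeed if the NameNode storage directory already
# holds metadata, judged by the presence of current/VERSION.
is_formatted() {
    [ -f "$1/current/VERSION" ]
}

# Storage directory as reported by the format log in this guide.
name_dir=/home/hadoop/soft/hadoop/tmp/dfs/name
if is_formatted "$name_dir"; then
    echo "NameNode already formatted; skip hdfs namenode -format"
else
    echo "NameNode not formatted yet; hdfs namenode -format can be run once"
fi
```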

Starting HDFS

start-dfs.sh

Verification

Use jps to list the HDFS processes. HDFS runs three of them: NameNode, DataNode, and SecondaryNameNode.

[hadoop@node1 hadoop]$ jps
1989 NameNode
2424 Jps
2300 SecondaryNameNode
2125 DataNode
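Checking the jps listing by eye works for a handful of processes, but the comparison can also be scripted. The sketch below reads jps output on standard input and prints every expected daemon that is absent. missing_daemons is a hypothetical helper, and the sample input is the listing above with DataNode removed for illustration.

```shell
# missing_daemons NAME...: read jps output on stdin and print each expected
# daemon name that does not appear in it.
missing_daemons() {
    out=$(cat)
    for d in "$@"; do
        # Compare the second column exactly, so that NameNode is not
        # mistaken for SecondaryNameNode.
        printf '%s\n' "$out" | awk -v d="$d" '$2 == d { f = 1 } END { exit !f }' || echo "$d"
    done
}

# Sample input: DataNode has been removed to simulate a failed start.
printf '1989 NameNode\n2424 Jps\n2300 SecondaryNameNode\n' |
    missing_daemons NameNode DataNode SecondaryNameNode
```

On the real machine the input would come from jps itself, for example jps | missing_daemons NameNode DataNode SecondaryNameNode. An empty result means all listed daemons are running.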

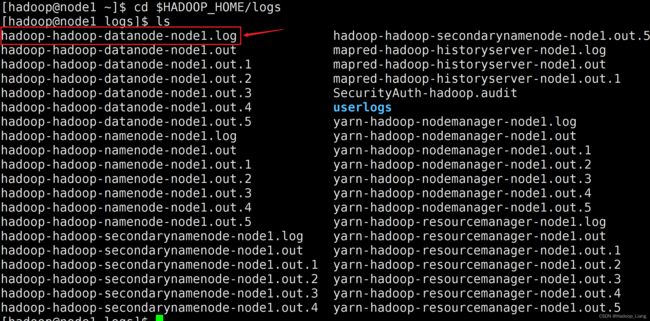

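If a process is missing, change to the $HADOOP_HOME/logs directory and read the log file of that process. For example, if DataNode is missing, open the DataNode log and look for errors, warnings, and exceptions (Error, Warning, Exception) to find out why the process did not start, then fix the cause.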
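Hadoop logs are long, so filtering them for the relevant lines saves time. The sketch below uses a hypothetical helper, scan_log, on a scratch file with made-up log lines. On the real machine the argument would be a file under $HADOOP_HOME/logs; a name such as hadoop-hadoop-datanode-node1.log is an assumption based on the usual naming scheme, so check the directory listing for the actual name.

```shell
# scan_log FILE: print the lines that mention errors, warnings, or
# exceptions, each prefixed with its line number.
scan_log() {
    grep -nE 'ERROR|WARN|Exception' "$1"
}

demo=$(mktemp)
# Made-up log lines for illustration only.
cat > "$demo" <<'EOF'
2022-03-08 06:40:01 INFO datanode.DataNode: starting
2022-03-08 06:40:02 WARN common.Storage: example warning
2022-03-08 06:40:03 ERROR datanode.DataNode: example error
EOF
scan_log "$demo"
```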

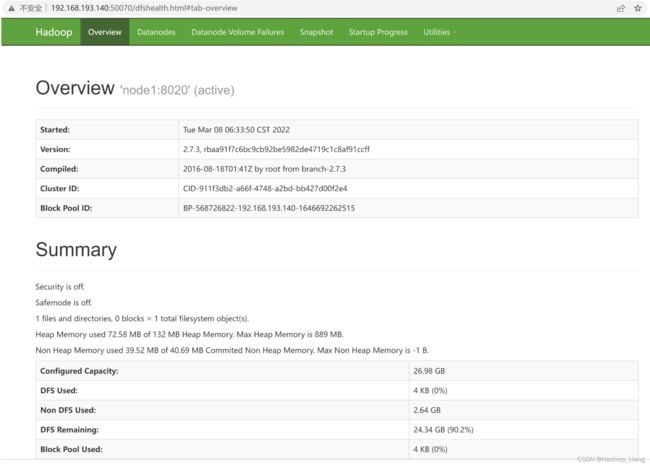

Verify in a browser at ip:50070, for example: 192.168.193.140:50070

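Starting YARN

start-yarn.sh

Verification

Use jps to list the YARN processes. YARN runs two of them: ResourceManager and NodeManager.

[hadoop@node1 hadoop]$ jps
2706 NodeManager
1989 NameNode
2885 Jps
2475 ResourceManager
2300 SecondaryNameNode
2125 DataNode

If a process is missing, check the corresponding log file in the same way.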

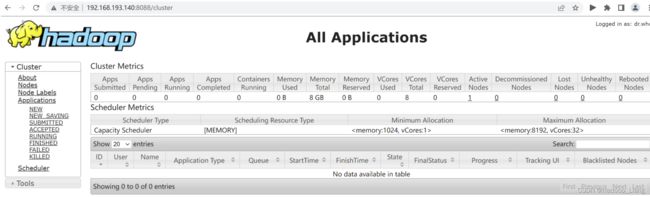

Open ip:8088 in a browser, for example: 192.168.193.140:8088

Done! Enjoy it!