A Python Implementation of the Image Fusion Quality Metric Qabf

Contents

- 1 Introduction

- 2 About Qabf

- 3 Qabf code

  - 3.1 MATLAB code

  - 3.2 Python code

  - 3.3 Comparing the results

- 4 Summary

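1 Introduction

When evaluating the quality of fused images, I ran into a practical problem: my fusion code is written in Python, but the only implementation of $Q^{AB/F}$ I could find was in MATLAB, which was very inconvenient to use. So I ported the MATLAB code for $Q^{AB/F}$ to Python.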

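2 About Qabf

$Q^{AB/F}$ is a pixel-level image fusion quality metric proposed by C. S. Xydeas and V. Petrović. It reflects the quality of the visual information obtained from fusing the input images, and it can be used to compare the performance of different image fusion algorithms. The experimental results in Objective Image Fusion Performance Measure indicate that the metric is perceptually meaningful; see the paper for the exact formulas.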
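For orientation, the quantities computed by the code in section 3.2 can be summarized as below. This is a sketch written to match that code, not a quotation from the paper: the symbols $T_g, k_g, D_g, T_a, k_a, D_a$ and $L$ are borrowed from the code's variable names, $s_x$ and $s_y$ denote the Sobel responses, and the sums run over all pixels $(n,m)$. The expressions for $B$ are the same with $A$ replaced by $B$.

```latex
\begin{aligned}
g_A &= \sqrt{s_x^2 + s_y^2}, \qquad \alpha_A = \arctan\!\left(\frac{s_y}{s_x}\right) \\
G^{AF} &= \begin{cases} g_F / g_A, & g_A > g_F \\ g_A / g_F, & \text{otherwise} \end{cases} \\
A^{AF} &= 1 - \frac{\lvert \alpha_A - \alpha_F \rvert}{\pi/2} \\
Q_g^{AF} &= \frac{T_g}{1 + e^{k_g\,(G^{AF} - D_g)}}, \qquad
Q_\alpha^{AF} = \frac{T_a}{1 + e^{k_a\,(A^{AF} - D_a)}} \\
Q^{AF} &= Q_g^{AF}\, Q_\alpha^{AF} \\
Q^{AB/F} &= \frac{\sum_{n,m} \left( Q^{AF} w^A + Q^{BF} w^B \right)}{\sum_{n,m} \left( w^A + w^B \right)}, \qquad w^A = g_A^{\,L},\; w^B = g_B^{\,L}
\end{aligned}
```

With $L = 1$, as in the code, the weights are simply the gradient strengths, so strong edges in the source images dominate the score. The code handles the case $g_A = g_F$ separately; see the listing for details.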

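3 Qabf code

3.1 MATLAB code

For the MATLAB code, see the post "图像融合质量评价方法MSSIM、MS-SSIM、FS、Qmi、Qabf与VIFF(三)" (Image Fusion Quality Evaluation Methods: MSSIM, MS-SSIM, FS, Qmi, Qabf and VIFF, Part 3).

3.2 Python code

The Python code is below; it is slightly more concise than the MATLAB version: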

import math

import numpy as np
import cv2

# Model parameters
L = 1
Tg = 0.9994
kg = -15
Dg = 0.5
Ta = 0.9879
ka = -22
Da = 0.8

# Sobel operator (h2, the diagonal kernel, is defined but not used below)
h1 = np.array([[1, 2, 1], [0, 0, 0], [-1, -2, -1]]).astype(np.float32)
h2 = np.array([[0, 1, 2], [-1, 0, 1], [-2, -1, 0]]).astype(np.float32)
h3 = np.array([[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]).astype(np.float32)

# If y is the response to h1 and x is the response to h3, then the
# strength is sqrt(x^2 + y^2) and the orientation is arctan(y/x).

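# Read the two source images and the fused image as grayscale (flag 0).
# cv2.imread returns None if a file cannot be read, so check the paths first.
strA = cv2.imread("images/MDDataset/c01_1.tif", 0).astype(np.float32)
strB = cv2.imread("images/MDDataset/c01_2.tif", 0).astype(np.float32)
strF = cv2.imread("results/our/guided_1.png", 0).astype(np.float32)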

# Rotate an array by 180 degrees
def flip180(arr):
    new_arr = arr.reshape(arr.size)
    new_arr = new_arr[::-1]
    new_arr = new_arr.reshape(arr.shape)
    return new_arr

# Equivalent to MATLAB's conv2 for a 3x3 kernel:
# zero-pad the image so the output keeps the input shape
def convolution(k, data):
    k = flip180(k)
    data = np.pad(data, ((1, 1), (1, 1)), 'constant', constant_values=(0, 0))
    n, m = data.shape
    img_new = []
    for i in range(n - 2):
        line = []
        for j in range(m - 2):
            a = data[i:i + 3, j:j + 3]
            line.append(np.sum(np.multiply(k, a)))
        img_new.append(line)
    return np.array(img_new)

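# Convolve the image with h3 (keeping its shape) to get SAx,
# then convolve it with h1 (keeping its shape) to get SAy.
# MATLAB zero-pads the image and rotates the kernel by 180 degrees.
# gA = sqrt(SAx.^2 + SAy.^2);
# aA is a zero matrix the same size as SAx that is then filled
# with the orientation values.
def getArray(img):
    SAx = convolution(h3, img)
    SAy = convolution(h1, img)
    gA = np.sqrt(np.multiply(SAx, SAx) + np.multiply(SAy, SAy))
    n, m = img.shape
    aA = np.zeros((n, m))
    for i in range(n):
        for j in range(m):
            if SAx[i, j] == 0:
                # Vertical gradient: arctan(y/x) is taken as pi/2
                aA[i, j] = math.pi / 2
            else:
                aA[i, j] = math.atan(SAy[i, j] / SAx[i, j])
    return gA, aA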

# Apply the same steps to strA, strB and strF
gA, aA = getArray(strA)
gB, aB = getArray(strB)
gF, aF = getArray(strF)

# Relative strength (GAF, GBF) and orientation (AAF, ABF) values
def getQabf(aA, gA, aF, gF):
    n, m = aA.shape
    GAF = np.zeros((n, m))
    AAF = np.zeros((n, m))
    QgAF = np.zeros((n, m))
    QaAF = np.zeros((n, m))
    QAF = np.zeros((n, m))
    for i in range(n):
        for j in range(m):
            if gA[i, j] > gF[i, j]:
                GAF[i, j] = gF[i, j] / gA[i, j]
            elif gA[i, j] == gF[i, j]:
                # Kept as in the MATLAB code; this also avoids 0/0
                # when both strengths are zero
                GAF[i, j] = gF[i, j]
            else:
                GAF[i, j] = gA[i, j] / gF[i, j]
            AAF[i, j] = 1 - np.abs(aA[i, j] - aF[i, j]) / (math.pi / 2)

            # Sigmoid edge-strength and orientation preservation values
            QgAF[i, j] = Tg / (1 + math.exp(kg * (GAF[i, j] - Dg)))
            QaAF[i, j] = Ta / (1 + math.exp(ka * (AAF[i, j] - Da)))

            QAF[i, j] = QgAF[i, j] * QaAF[i, j]
    return QAF

QAF = getQabf(aA, gA, aF, gF)
QBF = getQabf(aB, gB, aF, gF)

# Compute QABF: the average of QAF and QBF weighted by gA and gB (L = 1)
deno = np.sum(gA + gB)
nume = np.sum(np.multiply(QAF, gA) + np.multiply(QBF, gB))
output = nume / deno
print(output)
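The per-pixel loops above can be slow on large images. As a rough sketch of the same computation using only NumPy array operations, something like the following should work. The function names (`conv2_same`, `strength_and_orientation`, `edge_preservation`, `qabf`) are my own and not part of the original code, the arithmetic is done in float64 rather than float32 (so the last digits may differ slightly), and returning 0.0 for completely flat inputs is an assumption, since the listing above would divide by zero in that case.

```python
import numpy as np

# Model parameters and Sobel kernels, copied from the listing above.
TG, KG, DG = 0.9994, -15.0, 0.5
TA, KA, DA = 0.9879, -22.0, 0.8
H1 = np.array([[1, 2, 1], [0, 0, 0], [-1, -2, -1]], dtype=np.float64)
H3 = np.array([[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]], dtype=np.float64)


def conv2_same(kernel, image):
    """Zero-padded 3x3 convolution that keeps the input shape."""
    flipped = kernel[::-1, ::-1]  # rotate the kernel by 180 degrees
    padded = np.pad(image, 1, mode="constant", constant_values=0)
    n, m = image.shape
    out = np.zeros((n, m), dtype=np.float64)
    # Accumulate nine shifted copies instead of looping over pixels.
    for di in range(3):
        for dj in range(3):
            out += flipped[di, dj] * padded[di:di + n, dj:dj + m]
    return out


def strength_and_orientation(image):
    """Return gradient strength g and orientation alpha of one image."""
    image = np.asarray(image, dtype=np.float64)
    sx = conv2_same(H3, image)
    sy = conv2_same(H1, image)
    g = np.hypot(sx, sy)
    # Divide only where sx != 0; those pixels get pi/2, as in the loop version.
    ratio = np.divide(sy, sx, out=np.zeros_like(sx), where=(sx != 0))
    alpha = np.where(sx == 0, np.pi / 2, np.arctan(ratio))
    return g, alpha


def edge_preservation(a_src, g_src, a_fused, g_fused):
    """Per-pixel edge preservation value of one source image."""
    low = np.minimum(g_src, g_fused)
    high = np.maximum(g_src, g_fused)
    ratio = np.divide(low, high, out=np.zeros_like(high), where=(high != 0))
    # Equal strengths keep g_fused itself, mirroring the loop version.
    rel_g = np.where(g_src == g_fused, g_fused, ratio)
    rel_a = 1 - np.abs(a_src - a_fused) / (np.pi / 2)
    q_g = TG / (1 + np.exp(KG * (rel_g - DG)))
    q_a = TA / (1 + np.exp(KA * (rel_a - DA)))
    return q_g * q_a


def qabf(img_a, img_b, img_f):
    """Gradient-weighted fusion score for sources img_a, img_b and fused img_f."""
    g_a, a_a = strength_and_orientation(img_a)
    g_b, a_b = strength_and_orientation(img_b)
    g_f, a_f = strength_and_orientation(img_f)
    q_af = edge_preservation(a_a, g_a, a_f, g_f)
    q_bf = edge_preservation(a_b, g_b, a_f, g_f)
    deno = np.sum(g_a + g_b)
    if deno == 0:
        # Assumption: flat inputs carry no edges, so report 0.0.
        return 0.0
    return float(np.sum(q_af * g_a + q_bf * g_b) / deno)
```

To try it on the images used above, pass the arrays loaded with `cv2.imread(..., 0)` directly, for example `print(qabf(strA, strB, strF))`. Before relying on it, it is worth comparing its output with the loop version on a small image.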

3.3 Comparing the results

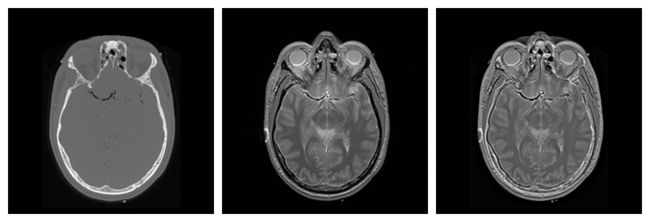

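I took one registered MRI image and one registered CT image and fused them, with the following result:

The MATLAB code gives the following result:

The Python code gives the following result:

As you can see, the two implementations produce the same result.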

4 Summary

That's all for this post for now.