Setting Up the Hadoop Runtime Environment

Virtual machine requirements

Disable the firewall

Disable firewall autostart at boot

The VM has working Internet access

Install vim
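The requirements above list the firewall steps without commands. They could be run as root with a small helper like the hypothetical disable_firewall below. This assumes a systemd-based distribution such as CentOS 7; the guide uses yum and crond, which points to a RHEL-family system. The first call stops the running firewall, and the second keeps it from starting at boot.

```bash
#!/usr/bin/env bash
# Hypothetical helper: stop firewalld now and keep it from starting at boot.
# Assumes a systemd-based system (e.g. CentOS 7); must be run as root.
disable_firewall() {
  systemctl stop firewalld      # "Disable the firewall" for the current session
  systemctl disable firewalld   # "Disable firewall autostart at boot"
  # Show the resulting state; is-active exits non-zero for an inactive unit.
  systemctl is-active firewalld || true
}

# Usage (as root):
# disable_firewall
```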

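Passwordless SSH login setup

Change the hostname (this guide uses guo147)

[root@guo147 .ssh]# vim /etc/hostname

Hostname mapping

Many of the later configuration files refer to this machine's address. If they used the IP address directly, a later IP change would mean editing every one of them. With a hostname-to-IP mapping in /etc/hosts, only that one entry has to change.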
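The mapping is one line per host in /etc/hosts, in the form IP hostname. The sketch below appends the mapping only when the name is not mapped yet. add_host_mapping is a hypothetical helper. 192.168.1.147 is a made-up address; replace it with the VM's real IP.

```bash
#!/usr/bin/env bash
# Hypothetical helper: append "IP HOSTNAME" to a hosts file (default /etc/hosts)
# unless HOSTNAME already appears there as a separate field.
add_host_mapping() {
  local ip="$1" name="$2" file="${3:-/etc/hosts}"
  # Match the name preceded by whitespace and followed by whitespace or end of line.
  # Dots in the name act as regex wildcards, which is close enough for a sanity check.
  if grep -Eq "[[:space:]]${name}([[:space:]]|\$)" "$file"; then
    echo "$name is already mapped in $file"
  else
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# Usage (as root; the IP address is a placeholder):
# add_host_mapping 192.168.1.147 guo147
```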

[root@guo147 .ssh]# vim /etc/hosts

Generate the public and private keys

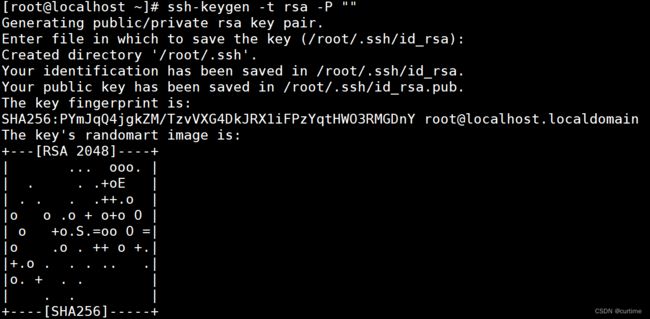

[root@localhost ~]# ssh-keygen -t rsa -P ""

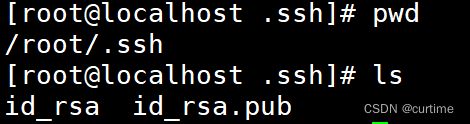

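Press Enter to run the command, then press Enter once more at the prompt to accept the default file location. Two files are generated in ~/.ssh: id_rsa (the private key) and id_rsa.pub (the public key).

Append the public key to the local authorized_keys file, and copy it to every target machine you want to log in to without a password.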

[root@localhost .ssh]# cat ./id_rsa.pub >> authorized_keys
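The key generation and local authorization above can also be done without any prompts. The sketch below assumes OpenSSH's ssh-keygen, and setup_local_ssh_key is a hypothetical helper. It also tightens permissions, because sshd's StrictModes check (on by default) ignores an authorized_keys file that group or others can write.

```bash
#!/usr/bin/env bash
# Hypothetical helper: create an RSA key pair with an empty passphrase (no prompts)
# and authorize it for logins to this same machine.
setup_local_ssh_key() {
  local ssh_dir="${1:-$HOME/.ssh}" pub
  mkdir -p "$ssh_dir" && chmod 700 "$ssh_dir" || return 1
  # -N "" sets an empty passphrase and -f names the key file, so nothing is asked.
  if [ ! -f "$ssh_dir/id_rsa" ]; then
    ssh-keygen -q -t rsa -N "" -f "$ssh_dir/id_rsa" || return 1
  fi
  pub=$(cat "$ssh_dir/id_rsa.pub") || return 1
  touch "$ssh_dir/authorized_keys"
  # Append the public key only if that exact line is not authorized yet.
  grep -qxF -- "$pub" "$ssh_dir/authorized_keys" || printf '%s\n' "$pub" >> "$ssh_dir/authorized_keys"
  # StrictModes rejects an authorized_keys file writable by group or others.
  chmod 600 "$ssh_dir/authorized_keys"
}

# Usage: set up the local key, then copy it to each other machine
# (USER@TARGET_HOST is a placeholder):
# setup_local_ssh_key
# ssh-copy-id -i ~/.ssh/id_rsa.pub -p22 USER@TARGET_HOST
```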

[root@localhost .ssh]# ssh-copy-id -i ./id_rsa.pub -p22 USER@TARGET_HOST

Remote login

[root@localhost .ssh]# ssh -p22 USER@TARGET_HOST

Synchronize the time (optional; only needed if the system clock is wrong)

// Install the ntpdate time-synchronization tool

[root@localhost .ssh]# yum install -y ntpdate

// Synchronize the time once

[root@localhost .ssh]# ntpdate time.windows.com

Schedule periodic time updates (the entry below runs every 5 minutes)

[root@localhost .ssh]# crontab -e

*/5 * * * * /usr/sbin/ntpdate -u time.windows.com
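crontab -e opens the crontab in an editor. To add the same entry from a script without creating duplicates, a text filter can sit between crontab -l and crontab - (which installs a crontab read from standard input). add_cron_line below is a hypothetical helper. It only rewrites text, so only the commented usage line touches the real crontab.

```bash
#!/usr/bin/env bash
# Hypothetical helper: read a crontab on stdin and print it with LINE appended,
# unless an identical line is already present.
add_cron_line() {
  local line="$1" existing
  existing=$(cat)
  # Re-emit the current crontab unchanged (nothing to print if it is empty).
  if [ -n "$existing" ]; then
    printf '%s\n' "$existing"
  fi
  # Append the new entry only if no identical line exists yet.
  if ! grep -qxF -- "$line" <<<"$existing"; then
    printf '%s\n' "$line"
  fi
}

# Usage (modifies the current user's real crontab):
# (crontab -l 2>/dev/null || true) | add_cron_line '*/5 * * * * /usr/sbin/ntpdate -u time.windows.com' | crontab -
```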

Start the cron service

[root@localhost .ssh]# service crond start

(The same command also accepts stop, restart, reload, and status.)

Install the JDK

Extract the archives into the target directory

[root@localhost install]# tar -zxvf ./jdk-8u321-linux-x64.tar.gz -C ../soft/
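Both archives unpack into versioned directories (jdk1.8.0_321 and hadoop-3.1.3), while the rest of this guide refers to /opt/soft/jdk180 and /opt/soft/hadoop313. The extracted directories therefore have to be renamed to match. The sketch below extracts and renames in one step. It assumes each archive holds a single top-level directory, and extract_as is a hypothetical helper.

```bash
#!/usr/bin/env bash
# Hypothetical helper: extract TARBALL into DEST, then rename the archive's
# top-level directory to NAME (e.g. jdk1.8.0_321 -> jdk180).
# Assumes every entry in the archive sits under one top-level directory.
extract_as() {
  local tarball="$1" dest="$2" name="$3" top
  # The first path component of the first entry is the top-level directory.
  top=$(tar -tzf "$tarball" 2>/dev/null | head -n 1 | cut -d/ -f1)
  if [ -z "$top" ] || [ "$top" = "." ]; then
    echo "cannot determine the top-level directory of $tarball" >&2
    return 1
  fi
  tar -zxf "$tarball" -C "$dest" || return 1
  if [ "$top" != "$name" ]; then
    mv "$dest/$top" "$dest/$name"
  fi
}

# Usage, from the install directory, in place of the plain tar commands:
# extract_as ./jdk-8u321-linux-x64.tar.gz ../soft jdk180
# extract_as ./hadoop-3.1.3.tar.gz ../soft hadoop313
```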

// Also extract the Hadoop archive now, for the later steps

[root@localhost install]# tar -zxvf ./hadoop-3.1.3.tar.gz -C ../soft/

Configure the JDK environment variables

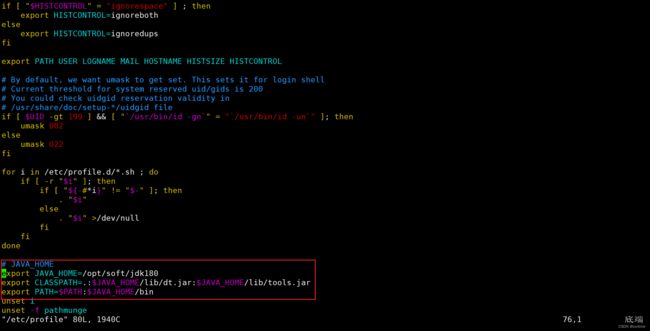

[root@localhost soft]# vim /etc/profile

# JAVA_HOME

export JAVA_HOME=/opt/soft/jdk180

export CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

export PATH=$PATH:$JAVA_HOME/bin

// Reload the profile so the new variables take effect

[root@localhost soft]# source /etc/profile

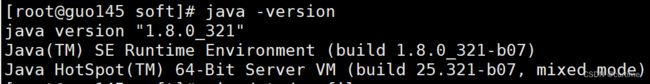

[root@localhost soft]# java -version出现以下内容配置成功

Install Hadoop

Change the owner of the hadoop313 directory and of all files and subdirectories under it

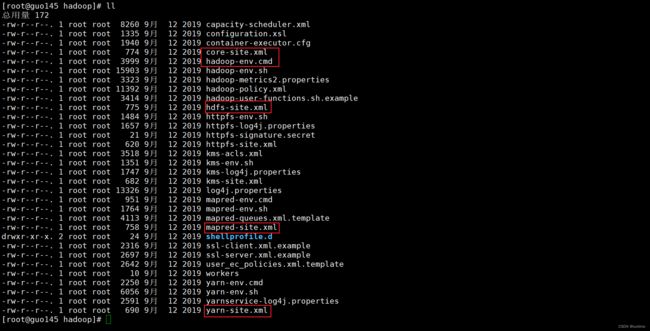

[root@guo147 hadoop313]# chown -R root:root ../hadoop313/

Edit the configuration files (located in /opt/soft/hadoop313/etc/hadoop)

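Edit core-site.xml. Add each of the following as a property inside the file's configuration element. The part before = is the property name and the part after it is the value:

fs.defaultFS = hdfs://guo147:9000

hadoop.tmp.dir = /opt/soft/hadoop313/data (local directory for Hadoop's temporary files on the NameNode)

hadoop.http.staticuser.user = root

io.file.buffer.size = 131072 (read/write buffer size: 128 KB)

hadoop.proxyuser.root.hosts = *

hadoop.proxyuser.root.groups = *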
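In the XML file, each property is stored as a name element followed by a value element. To double-check what a file actually contains before Hadoop is on the PATH, a rough grep/sed sketch works, like the hypothetical get_prop below. It is not an XML parser and assumes the value element directly follows the name element. Once Hadoop is installed and on the PATH, hdfs getconf -confKey fs.defaultFS reports the value Hadoop itself loads.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the value of property KEY from a Hadoop *-site.xml file.
# Rough text matching only; dots in KEY act as regex wildcards.
get_prop() {
  local file="$1" key="$2"
  # Join all lines so a name element and the value element on the next line match together.
  tr -d '\n' < "$file" \
    | grep -o "<name>${key}</name>[[:space:]]*<value>[^<]*</value>" \
    | sed -e 's/.*<value>\([^<]*\)<\/value>/\1/'
}

# Usage:
# get_prop /opt/soft/hadoop313/etc/hadoop/core-site.xml fs.defaultFS
```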

Edit line 54 of hadoop-env.sh so that it reads as follows (the path must match JAVA_HOME in /etc/profile):

export JAVA_HOME=/opt/soft/jdk180
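JAVA_HOME in hadoop-env.sh must name the same JDK directory as /etc/profile, and that directory must contain bin/java. A wrong path only surfaces when the first Hadoop command runs. The small check below uses check_java_home, a hypothetical helper.

```bash
#!/usr/bin/env bash
# Hypothetical helper: confirm that a JDK directory (default: $JAVA_HOME)
# contains an executable bin/java.
check_java_home() {
  local home="${1:-${JAVA_HOME:-}}"
  if [ -n "$home" ] && [ -x "$home/bin/java" ]; then
    echo "ok: $home/bin/java"
  else
    echo "no executable bin/java under '$home'" >&2
    return 1
  fi
}

# Usage: check the directory, then compare it with the line in hadoop-env.sh
# check_java_home /opt/soft/jdk180
# grep '^export JAVA_HOME' /opt/soft/hadoop313/etc/hadoop/hadoop-env.sh
```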

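Edit hdfs-site.xml and add these properties (name = value):

dfs.replication = 1 (number of replicas kept for each HDFS block; a single-node setup has only one DataNode)

dfs.namenode.name.dir = /opt/soft/hadoop313/data/dfs/name (directory on the NameNode that stores the HDFS namespace metadata)

dfs.datanode.data.dir = /opt/soft/hadoop313/data/dfs/data (directory on the DataNode where data blocks are physically stored)

dfs.permissions.enabled = false (disables permission checking)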

Edit mapred-site.xml and add these properties (name = value):

mapreduce.framework.name = yarn (job execution framework: local, classic, or yarn; this property is also marked final = true)

mapreduce.application.classpath = /opt/soft/hadoop313/etc/hadoop:/opt/soft/hadoop313/share/hadoop/common/lib/*:/opt/soft/hadoop313/share/hadoop/common/*:/opt/soft/hadoop313/share/hadoop/hdfs/*:/opt/soft/hadoop313/share/hadoop/hdfs/lib/*:/opt/soft/hadoop313/share/hadoop/mapreduce/*:/opt/soft/hadoop313/share/hadoop/mapreduce/lib/*:/opt/soft/hadoop313/share/hadoop/yarn/*:/opt/soft/hadoop313/share/hadoop/yarn/lib/*

mapreduce.jobhistory.address = guo147:10020

mapreduce.jobhistory.webapp.address = guo147:19888

mapreduce.map.memory.mb = 1024

mapreduce.reduce.memory.mb = 1024
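The mapreduce.application.classpath value consists of nine entries under the Hadoop home directory. If Hadoop lives somewhere other than /opt/soft/hadoop313, the sketch below rebuilds the string for another prefix. build_mr_classpath is a hypothetical helper. On an installed system, hadoop classpath prints Hadoop's own classpath for comparison.

```bash
#!/usr/bin/env bash
# Hypothetical helper: build the mapreduce.application.classpath value for a
# given Hadoop home directory, in the same order as the entry above.
build_mr_classpath() {
  local home="${1:?usage: build_mr_classpath HADOOP_HOME}"
  # The trailing /* must stay literal (hence the quotes): Java itself expands
  # dir/* in a classpath to the JAR files in dir.
  local entries=(
    "$home/etc/hadoop"
    "$home/share/hadoop/common/lib/*"
    "$home/share/hadoop/common/*"
    "$home/share/hadoop/hdfs/*"
    "$home/share/hadoop/hdfs/lib/*"
    "$home/share/hadoop/mapreduce/*"
    "$home/share/hadoop/mapreduce/lib/*"
    "$home/share/hadoop/yarn/*"
    "$home/share/hadoop/yarn/lib/*"
  )
  # Join the entries with ':' (the first character of IFS).
  local IFS=:
  printf '%s\n' "${entries[*]}"
}

# Usage:
# build_mr_classpath /opt/soft/hadoop313
```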

Edit yarn-site.xml and add these properties (name = value):

yarn.resourcemanager.connect.retry-interval.ms = 20000

yarn.resourcemanager.scheduler.class = org.apache.hadoop.yarn.server.resourcemanager.scheduler.fair.FairScheduler

yarn.nodemanager.localizer.address = guo147:8040

yarn.nodemanager.address = guo147:8050

yarn.nodemanager.webapp.address = guo147:8042

yarn.nodemanager.aux-services = mapreduce_shuffle

yarn.nodemanager.local-dirs = /opt/soft/hadoop313/yarndata/yarn

yarn.nodemanager.log-dirs = /opt/soft/hadoop313/yarndata/log

Add the Hadoop environment variables

[root@guo147 hadoop]# vim /etc/profile

# HADOOP_HOME

export HADOOP_HOME=/opt/soft/hadoop313

export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$HADOOP_HOME/lib

export HDFS_NAMENODE_USER=root

export HDFS_DATANODE_USER=root

export HDFS_SECONDARYNAMENODE_USER=root

export HDFS_JOURNALNODE_USER=root

export HDFS_ZKFC_USER=root

export YARN_RESOURCEMANAGER_USER=root

export YARN_NODEMANAGER_USER=root

export HADOOP_MAPRED_HOME=$HADOOP_HOME

export HADOOP_COMMON_HOME=$HADOOP_HOME

export HADOOP_HDFS_HOME=$HADOOP_HOME

export HADOOP_YARN_HOME=$HADOOP_HOME

export HADOOP_INSTALL=$HADOOP_HOME

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

export HADOOP_LIBEXEC_DIR=$HADOOP_HOME/libexec

export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop

Reload the profile

[root@guo147 hadoop]# source /etc/profile
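Hadoop 3's start scripts refuse to start a daemon as root unless the matching *_USER variable is defined. That is why /etc/profile exports HDFS_NAMENODE_USER and the others. After sourcing the profile, a quick check can confirm that the variables start-all.sh needs on a single node are present. The JournalNode and ZKFC variables only matter for HA setups. check_hadoop_users is a hypothetical helper.

```bash
#!/usr/bin/env bash
# Hypothetical helper: report which daemon-user variables used by start-all.sh
# on a single node are unset or empty. Returns non-zero if any are missing.
check_hadoop_users() {
  local missing=0 v
  for v in HDFS_NAMENODE_USER HDFS_DATANODE_USER HDFS_SECONDARYNAMENODE_USER \
           YARN_RESOURCEMANAGER_USER YARN_NODEMANAGER_USER; do
    # ${!v:-} reads the variable whose name is stored in v (empty if unset).
    if [ -z "${!v:-}" ]; then
      echo "missing: $v"
      missing=1
    fi
  done
  return "$missing"
}

# Usage, after "source /etc/profile":
# check_hadoop_users && echo "all daemon users defined"
```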

[root@guo147 hadoop]# echo $HADOOP_HOME

Format the NameNode (HDFS)

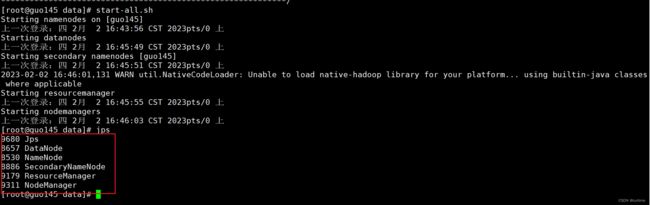

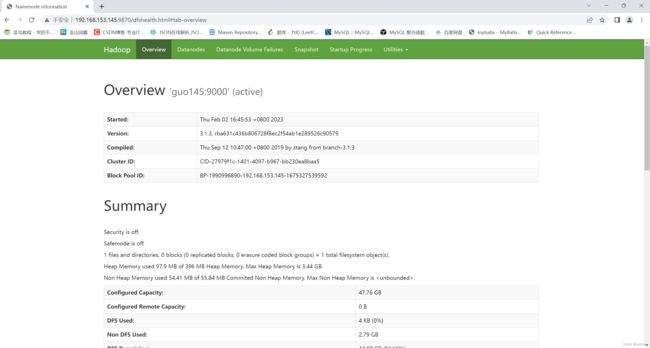
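hdfs namenode -format should run only once. Formatting again gives the NameNode a new clusterID, and a DataNode whose data directory still carries the old one fails to start (an "Incompatible clusterIDs" error in its log). The guard below skips formatting when the name directory already contains current/VERSION. format_namenode_once is a hypothetical helper, and its default path is the dfs.namenode.name.dir value from hdfs-site.xml.

```bash
#!/usr/bin/env bash
# Hypothetical guard: format the NameNode only if its name directory has not
# been formatted yet (a formatted directory contains current/VERSION).
format_namenode_once() {
  local name_dir="${1:-/opt/soft/hadoop313/data/dfs/name}"
  if [ -f "$name_dir/current/VERSION" ]; then
    echo "already formatted: $name_dir (skipping)"
    return 0
  fi
  hdfs namenode -format
}

# Usage:
# format_namenode_once
```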

[root@guo147 hadoop]# hdfs namenode -format

Start the services

[root@guo147 hadoop]# start-all.sh

[root@guo147 data]# jps
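After start-all.sh on a single node, jps should list NameNode, DataNode, SecondaryNameNode, ResourceManager, and NodeManager, plus Jps itself. The filter below reads jps output and names any of those daemons that are missing. check_daemons is a hypothetical helper.

```bash
#!/usr/bin/env bash
# Hypothetical helper: read `jps` output on stdin and report which expected
# single-node daemons are not running. Returns non-zero if any are missing.
check_daemons() {
  local out missing=0 d
  out=$(cat)
  for d in NameNode DataNode SecondaryNameNode ResourceManager NodeManager; do
    # jps prints "<pid> <MainClass>"; anchoring on the pid keeps "NameNode"
    # from matching the "SecondaryNameNode" line.
    if ! grep -qE "^[0-9]+ ${d}\$" <<<"$out"; then
      echo "not running: $d"
      missing=1
    fi
  done
  return "$missing"
}

# Usage:
# jps | check_daemons
```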