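This article is based on webpack 5.74.0.

The webpack5 core-flow series consists of 5 articles that use flowcharts to analyze how a webpack5 build works:

- "Webpack5 Source": make phase (flowchart) analysis

- "Webpack5 Source": enhanced-resolve path resolution library source analysis

- "Webpack5 Source": seal phase (flowchart) analysis (1)

- "Webpack5 Source": seal phase analysis (2), SplitChunksPlugin source

- "Webpack5 Source": seal phase analysis (3), code generation & runtime

Preface

The previous article, "Webpack5 Source": seal phase (flowchart) analysis (1), covered the logic of the seal phase, mainly:

- new ChunkGraph()

- iterating over this.entries to create each Chunk and ChunkGroup

- the overall flow of buildChunkGraph()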

seal(callback) {

const chunkGraph = new ChunkGraph(

this.moduleGraph,

this.outputOptions.hashFunction

);

this.chunkGraph = chunkGraph;

//...

this.logger.time("create chunks");

/** @type {Map<Entrypoint, Module[]>} */

const chunkGraphInit = new Map();

for (const [name, { dependencies, includeDependencies, options }] of this.entries) {

const chunk = this.addChunk(name);

const entrypoint = new Entrypoint(options);

//...

}

//...

buildChunkGraph(this, chunkGraphInit);

this.logger.timeEnd("create chunks");

this.logger.time("optimize");

//...

while (this.hooks.optimizeChunks.call(this.chunks, this.chunkGroups)) {

/* empty */

}

//...

this.logger.timeEnd("optimize");

this.logger.time("code generation");

this.codeGeneration(err => {

//...

this.logger.timeEnd("code generation");

});

}

buildChunkGraph() is the core of the previous article's analysis. It unfolds in 3 parts:

const buildChunkGraph = (compilation, inputEntrypointsAndModules) => {

// PART ONE

logger.time("visitModules");

visitModules(...);

logger.timeEnd("visitModules");

// PART TWO

logger.time("connectChunkGroups");

connectChunkGroups(...);

logger.timeEnd("connectChunkGroups");

for (const [chunkGroup, chunkGroupInfo] of chunkGroupInfoMap) {

for (const chunk of chunkGroup.chunks)

chunk.runtime = mergeRuntime(chunk.runtime, chunkGroupInfo.runtime);

}

// Cleanup work

logger.time("cleanup");

cleanupUnconnectedGroups(compilation, allCreatedChunkGroups);

logger.timeEnd("cleanup");

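};

The overall logic of visitModules() is shown below.

With the analysis of buildChunkGraph() finished in the previous article, we now turn to the logic of hooks.optimizeChunks().

Contents

Without SplitChunksPlugin, as the figure above shows, the build produces 6 chunks (4 from entry files, 2 from async imports). Several dependencies end up bundled into more than one chunk. SplitChunksPlugin addresses this: as the figure below shows, it splits out two new chunks, test2 and test3, and moves the duplicated dependencies into them, which avoids the bundle bloat caused by duplicate bundling.

This article uses the example above to analyze how SplitChunksPlugin performs chunk splitting.

1. hooks.optimizeChunks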

while (this.hooks.optimizeChunks.call(this.chunks, this.chunkGroups)) {

/* empty */

}

After visitModules() has run, hooks.optimizeChunks.call() is invoked to optimize the chunks. As the figure below shows, this triggers several plugins. The most familiar one is SplitChunksPlugin, which the rest of this article focuses on.

![]()

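2. SplitChunksPlugin source analysis

Configuring cacheGroups lets webpack further optimize the chunks that have already been formed: a large chunk is split into two or more chunks, which reduces duplicate bundling and increases code reuse.

For example, suppose the entry files are bundled into app1.js and app2.js, and both files (chunks) contain the same third-party library, js-cookie.

Can js-cookie be bundled into a new chunk of its own, so that it is extracted from app1.js and app2.js and bundled in only one place?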

module.exports = {

//...

optimization: {

splitChunks: {

chunks: 'async',

cacheGroups: {

defaultVendors: {

test: /[\\/]node_modules[\\/]/,

priority: -10,

reuseExistingChunk: true,

},

default: {

minChunks: 2,

priority: -20,

reuseExistingChunk: true,

},

},

},

},

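};

2.0 Overall flowchart and code flow overview

2.0.1 Code

Divided by the logger.time calls, the whole flow has four phases:

- prepare: initializes the data structures and helper functions used by the later phases

- modules: iterates over all modules and builds the chunksInfoMap data

- queue: iterates over chunksInfoMap and splits chunks according to minSize

- maxSize: splits chunks according to maxSize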

compilation.hooks.optimizeChunks.tap(

{

name: "SplitChunksPlugin",

stage: STAGE_ADVANCED

},

chunks => {

logger.time("prepare");

//...

logger.timeEnd("prepare");

logger.time("modules");

for (const module of compilation.modules) {

//...

}

logger.timeEnd("modules");

logger.time("queue");

for (const [key, info] of chunksInfoMap) {

//...

}

while (chunksInfoMap.size > 0) {

//...

}

logger.timeEnd("queue");

logger.time("maxSize");

for (const chunk of Array.from(compilation.chunks)) {

//...

}

logger.timeEnd("maxSize");

}

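);

The sections below walk through the source according to the configuration options involved, such as maxSize, minSize, enforce, and maxInitialRequests.

2.0.2 Flowchart

2.1 Default cacheGroups configuration

The default cacheGroups are set while the options are initialized; they are not created by code in SplitChunksPlugin.js.

As the code block below shows, initialization defines two default cacheGroups, one of which targets node_modules.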

// node_modules/webpack/lib/config/defaults.js

const { splitChunks } = optimization;

if (splitChunks) {

A(splitChunks, "defaultSizeTypes", () =>

css ? ["javascript", "css", "unknown"] : ["javascript", "unknown"]

);

D(splitChunks, "hidePathInfo", production);

D(splitChunks, "chunks", "async");

D(splitChunks, "usedExports", optimization.usedExports === true);

D(splitChunks, "minChunks", 1);

F(splitChunks, "minSize", () => (production ? 20000 : 10000));

F(splitChunks, "minRemainingSize", () => (development ? 0 : undefined));

F(splitChunks, "enforceSizeThreshold", () => (production ? 50000 : 30000));

F(splitChunks, "maxAsyncRequests", () => (production ? 30 : Infinity));

F(splitChunks, "maxInitialRequests", () => (production ? 30 : Infinity));

D(splitChunks, "automaticNameDelimiter", "-");

const { cacheGroups } = splitChunks;

F(cacheGroups, "default", () => ({

idHint: "",

reuseExistingChunk: true,

minChunks: 2,

priority: -20

}));

// const NODE_MODULES_REGEXP = /[\\/]node_modules[\\/]/i;

F(cacheGroups, "defaultVendors", () => ({

idHint: "vendors",

reuseExistingChunk: true,

test: NODE_MODULES_REGEXP,

priority: -10

}));

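}

Expressed as a webpack.config.js, the defaults above look like the code block below. There are two default cache groups:

- modules from node_modules are bundled into one chunk

- everything else is split into chunks by default according to the remaining options

splitChunks.chunks selects which chunks are considered for optimization; the default is async:

- async: new chunks are split only from async chunks

- initial: new chunks are split only from entry chunks

- all: both async and non-async chunks are considered for splitting

For details, see the analysis of cacheGroup.chunksFilter below.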

module.exports = {

//...

optimization: {

splitChunks: {

chunks: 'async',

minSize: 20000,

minRemainingSize: 0,

minChunks: 1,

maxAsyncRequests: 30,

maxInitialRequests: 30,

enforceSizeThreshold: 50000,

cacheGroups: {

defaultVendors: {

test: /[\\/]node_modules[\\/]/,

priority: -10,

reuseExistingChunk: true,

},

default: {

minChunks: 2,

priority: -20,

reuseExistingChunk: true,

},

},

},

},

};

2.2 modules phase: iterate over compilation.modules and build the chunksInfoMap data from the cacheGroups

for (const module of compilation.modules) {

let cacheGroups = this.options.getCacheGroups(module, context);

let cacheGroupIndex = 0;

for (const cacheGroupSource of cacheGroups) {

const cacheGroup = this._getCacheGroup(cacheGroupSource);

// ============Step 1============

const combs = cacheGroup.usedExports

? getCombsByUsedExports()

: getCombs();

for (const chunkCombination of combs) {

const count =

chunkCombination instanceof Chunk ? 1 : chunkCombination.size;

if (count < cacheGroup.minChunks) continue;

// ============Step 2============

const { chunks: selectedChunks, key: selectedChunksKey } =

getSelectedChunks(chunkCombination, cacheGroup.chunksFilter);

// ============Step 3============

addModuleToChunksInfoMap(

cacheGroup,

cacheGroupIndex,

selectedChunks,

selectedChunksKey,

module

);

}

cacheGroupIndex++;

}

}

2.2.1 Step 1: getCombsByUsedExports()

for (const module of compilation.modules) {

let cacheGroups = this.options.getCacheGroups(module, context);

const getCombsByUsedExports = memoize(() => {

// fill the groupedByExportsMap

getExportsChunkSetsInGraph();

/** @type {Set<Set<Chunk> | Chunk>} */

const set = new Set();

const groupedByUsedExports = groupedByExportsMap.get(module);

for (const chunks of groupedByUsedExports) {

const chunksKey = getKey(chunks);

for (const comb of getExportsCombinations(chunksKey))

set.add(comb);

}

return set;

});

for (const cacheGroupSource of cacheGroups) {

const cacheGroup = this._getCacheGroup(cacheGroupSource);

// ============Step 1============

const combs = cacheGroup.usedExports

? getCombsByUsedExports()

: getCombs();

//...

}

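}

getCombsByUsedExports() relies on several helpers that are initialized in the prepare phase. The overall flow is shown below.

While iterating over compilation.modules, groupedByExportsMap.get(module) returns the chunk collections of the current module. The resulting data structure is:

// item[0] is the chunk set obtained via the key

// item[1] is a chunk set that satisfies minChunks

// item[2] and item[3] are chunks that satisfy minChunks

[new Set(3), new Set(2), Chunk, Chunk]

moduleGraph.getExportsInfo

Returns the exports information of the given module, for example common__g.js.

The data obtained for common__g.js is shown below.

Chunk collections are gathered by exportsInfo.getUsageKey(chunk.runtime)

getUsageKey(chunk.runtime) is the key under which chunks are collected. In this example, app1, app2, app3, and app4 all produce the same getUsageKey(chunk.runtime). For how this method works, please refer to other articles.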

const groupChunksByExports = module => {

const exportsInfo = moduleGraph.getExportsInfo(module);

const groupedByUsedExports = new Map();

for (const chunk of chunkGraph.getModuleChunksIterable(module)) {

const key = exportsInfo.getUsageKey(chunk.runtime);

const list = groupedByUsedExports.get(key);

if (list !== undefined) {

list.push(chunk);

} else {

groupedByUsedExports.set(key, [chunk]);

}

}

return groupedByUsedExports.values();

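};

So for an entry file such as entry1.js, groupedByUsedExports.values() yields a single chunk list: [app1].

For a dependency such as common__g.js, which is used by all 4 entry files, groupedByUsedExports.values() yields a single chunk list: [app1, app2, app3, app4].

chunkGraph.getModuleChunksIterable

Returns the chunks that contain the given module. In the figure below, for example, common__g.js yields the chunks app1, app2, app3, and app4.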

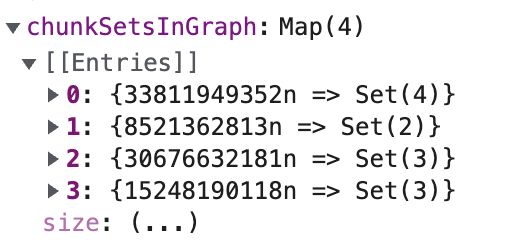

singleChunkSets, chunkSetsInGraph, and chunkSets

There are 4 distinct chunk sets:

- [app1, app2, app3, app4]

- [app1, app2, app3]

- [app1, app2]

- [app2, app3, app4]

Each corresponds to the chunk set of one of the modules above.

The modules of entry files and async files each belong to exactly one chunk, so those chunks are stored in singleChunkSets.

chunkSetsByCount

chunkSetsByCount is a regrouping of chunkSetsInGraph by the size of each item. In the example above, chunkSetsInGraph holds the same 4 chunk sets:

- [app1, app2, app3, app4]

- [app1, app2, app3]

- [app1, app2]

- [app2, app3, app4]

Converted into chunkSetsByCount:
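The regrouping described here can be sketched in a few lines. This is a minimal illustration, not the plugin's code: chunks are represented by their names only, and `groupByCount` is a hypothetical helper name.

```javascript
// Hypothetical stand-ins: each chunk is represented by its name only.
const chunkSetsInGraph = [
  new Set(["app1", "app2", "app3", "app4"]),
  new Set(["app1", "app2", "app3"]),
  new Set(["app1", "app2"]),
  new Set(["app2", "app3", "app4"])
];

// Group the chunk sets by how many chunks each one contains.
const groupByCount = chunkSets => {
  const byCount = new Map();
  for (const chunkSet of chunkSets) {
    const count = chunkSet.size;
    let list = byCount.get(count);
    if (list === undefined) {
      list = [];
      byCount.set(count, list);
    }
    list.push(chunkSet);
  }
  return byCount;
};

const chunkSetsByCount = groupByCount(chunkSetsInGraph);
for (const [count, sets] of chunkSetsByCount) {
  console.log(count, sets.map(s => [...s].join(",")));
}
```

With the four sets of the example, the key 3 holds two sets ({app1, app2, app3} and {app2, app3, app4}), while the keys 2 and 4 hold one set each.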

Summary

- groupChunksByExports(module) collects all chunk collections of a module and stores them in groupedByExportsMap, whose structure is key=module, value=[[chunk1, chunk2], chunk1].

- Every chunk collection is stored in chunkSetsInGraph via getKey(chunks); its structure is key=getKey(chunks), value=the chunk collection.

When a module is processed, groupedByExportsMap provides all chunk collections of that module, referred to as groupedByUsedExports:

const groupedByUsedExports = groupedByExportsMap.get(module);

Each chunk collection A is then visited. The chunksKey derived from A looks up the matching chunk collection in chunkSetsInGraph (which is A itself). chunkSetsByCount is additionally used to find every smaller collection B that is a subset of A (B may be the chunk collection of a different module).

const groupedByUsedExports = groupedByExportsMap.get(module);

for (const chunks of groupedByUsedExports) {

const chunksKey = getKey(chunks);

for (const comb of getExportsCombinations(chunksKey))

set.add(comb);

}

return set;

2.2.2 Step 2: getSelectedChunks() and cacheGroup.chunksFilter

for (const module of compilation.modules) {

let cacheGroups = this.options.getCacheGroups(module, context);

let cacheGroupIndex = 0;

for (const cacheGroupSource of cacheGroups) {

const cacheGroup = this._getCacheGroup(cacheGroupSource);

// ============Step 1============

const combs = cacheGroup.usedExports

? getCombsByUsedExports()

: getCombs();

for (const chunkCombination of combs) {

const count =

chunkCombination instanceof Chunk ? 1 : chunkCombination.size;

if (count < cacheGroup.minChunks) continue;

// ============Step 2============

const { chunks: selectedChunks, key: selectedChunksKey } =

getSelectedChunks(chunkCombination, cacheGroup.chunksFilter);

//...

}

cacheGroupIndex++;

}

}

cacheGroup.chunksFilter

If webpack.config.js passes "all", cacheGroup.chunksFilter is ALL_CHUNK_FILTER, defined as chunk => true.

const INITIAL_CHUNK_FILTER = chunk => chunk.canBeInitial();

const ASYNC_CHUNK_FILTER = chunk => !chunk.canBeInitial();

const ALL_CHUNK_FILTER = chunk => true;

const normalizeChunksFilter = chunks => {

if (chunks === "initial") {

return INITIAL_CHUNK_FILTER;

}

if (chunks === "async") {

return ASYNC_CHUNK_FILTER;

}

if (chunks === "all") {

return ALL_CHUNK_FILTER;

}

if (typeof chunks === "function") {

return chunks;

}

};

const createCacheGroupSource = (options, key, defaultSizeTypes) => {

//...

return {

//...

chunksFilter: normalizeChunksFilter(options.chunks),

//...

};

};

const { chunks: selectedChunks, key: selectedChunksKey } =

getSelectedChunks(chunkCombination, cacheGroup.chunksFilter);

What if webpack.config.js passes "async" or "initial"?

From the code block below we can see:

- plain ChunkGroup: chunk.canBeInitial()=false

- synchronous Entrypoint: chunk.canBeInitial()=true

- async Entrypoint: chunk.canBeInitial()=false

class ChunkGroup {

isInitial() {

return false;

}

}

class Entrypoint extends ChunkGroup {

constructor(entryOptions, initial = true) {

super(); // required before `this` can be used; arguments elided in this excerpt

this._initial = initial;

}

isInitial() {

return this._initial;

}

}

// node_modules/webpack/lib/Compilation.js

addAsyncEntrypoint(options, module, loc, request) {

const entrypoint = new Entrypoint(options, false);

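}

getSelectedChunks()

As the code block below shows, chunkFilter() filters the chunks array. Because the example uses "all", chunkFilter() always returns true, so the filter is effectively a no-op here and every chunk passes.

In essence, the splitChunks.chunks option decides whether chunkFilter() filters by _initial. The data is then filtered using the following values:

- plain ChunkGroup: _initial=false

- Entrypoint ChunkGroup: _initial=true

- AsyncEntrypoint ChunkGroup: _initial=false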
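The effect of the three modes can be illustrated with mock chunks. This is a sketch under stated assumptions: `mockChunk` and the chunk name `async-B` are hypothetical, and a real webpack Chunk derives canBeInitial() from its chunk groups rather than from a stored flag.

```javascript
// Hypothetical mock: only the canBeInitial() predicate is modeled.
const mockChunk = (name, initial) => ({ name, canBeInitial: () => initial });

// The three filter shapes described in the text.
const filters = {
  initial: chunk => chunk.canBeInitial(),
  async: chunk => !chunk.canBeInitial(),
  all: () => true
};

const chunks = [
  mockChunk("app1", true),
  mockChunk("app2", true),
  mockChunk("async-B", false)
];

// Apply one mode and return the names of the chunks that pass.
const select = mode => chunks.filter(filters[mode]).map(c => c.name);

console.log(select("initial"));
console.log(select("async"));
console.log(select("all"));
```

In this mock, "initial" keeps the two entry chunks, "async" keeps only the async chunk, and "all" keeps every chunk.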

const getSelectedChunks = (chunks, chunkFilter) => {

let entry = selectedChunksCacheByChunksSet.get(chunks);

if (entry === undefined) {

entry = new WeakMap();

selectedChunksCacheByChunksSet.set(chunks, entry);

}

let entry2 = entry.get(chunkFilter);

if (entry2 === undefined) {

const selectedChunks = [];

if (chunks instanceof Chunk) {

if (chunkFilter(chunks)) selectedChunks.push(chunks);

} else {

for (const chunk of chunks) {

if (chunkFilter(chunk)) selectedChunks.push(chunk);

}

}

entry2 = {

chunks: selectedChunks,

key: getKey(selectedChunks)

};

entry.set(chunkFilter, entry2);

}

return entry2;

};

2.2.3 Step 3: addModuleToChunksInfoMap

for (const module of compilation.modules) {

let cacheGroups = this.options.getCacheGroups(module, context);

let cacheGroupIndex = 0;

for (const cacheGroupSource of cacheGroups) {

const cacheGroup = this._getCacheGroup(cacheGroupSource);

// ============Step 1============

const combs = cacheGroup.usedExports

? getCombsByUsedExports()

: getCombs();

for (const chunkCombination of combs) {

const count =

chunkCombination instanceof Chunk ? 1 : chunkCombination.size;

if (count < cacheGroup.minChunks) continue;

// ============Step 2============

const { chunks: selectedChunks, key: selectedChunksKey } =

getSelectedChunks(chunkCombination, cacheGroup.chunksFilter);

// ============Step 3============

addModuleToChunksInfoMap(

cacheGroup,

cacheGroupIndex,

selectedChunks,

selectedChunksKey,

module

);

}

cacheGroupIndex++;

}

}

This step builds chunksInfoMap. Each key maps to an item (containing modules, chunks, chunksKeys, and so on), and each item is one element of chunksInfoMap.

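const addModuleToChunksInfoMap = (cacheGroup, cacheGroupIndex, selectedChunks, selectedChunksKey, module) => {

// `key` is derived from cacheGroup.key plus the chunk name or selectedChunksKey; its construction is shown later in this section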

let info = chunksInfoMap.get(key);

if (info === undefined) {

chunksInfoMap.set(

key,

(info = {

modules: new SortableSet(

undefined,

compareModulesByIdentifier

),

sizes: {},

chunks: new Set(),

chunksKeys: new Set()

})

);

}

const oldSize = info.modules.size;

info.modules.add(module);

if (info.modules.size !== oldSize) {

for (const type of module.getSourceTypes()) {

info.sizes[type] = (info.sizes[type] || 0) + module.size(type);

}

}

const oldChunksKeysSize = info.chunksKeys.size;

info.chunksKeys.add(selectedChunksKey);

if (oldChunksKeysSize !== info.chunksKeys.size) {

for (const chunk of selectedChunks) {

info.chunks.add(chunk);

}

}

};

2.2.4 A concrete example

The webpack.config.js configuration is as follows:

cacheGroups:{

defaultVendors: {

test: /[\\/]node_modules[\\/]/,

priority: -10,

reuseExistingChunk: true,

},

default: {

minChunks: 2,

priority: -20,

reuseExistingChunk: true,

},

test3: {

chunks: 'all',

minChunks: 3,

name: "test3",

priority: 3

},

test2: {

chunks: 'all',

minChunks: 2,

name: "test2",

priority: 2

}

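}

The example has 4 entry files:

- app1.js: uses the third-party libraries js-cookie and lodash

- app2.js: uses the third-party libraries js-cookie, lodash, and vaca

- app3.js: uses the third-party libraries js-cookie and vaca

- app4.js: uses the third-party library vaca

Recall the overall flow: the outer loop runs over modules and obtains, for each module, its chunk collections, that is, combs.

The inner loop runs over the cacheGroups (the groups configured in webpack.config.js). Each combs[i] is checked against each cacheGroup, which amounts to filtering by minChunks and chunksFilter, and whatever passes is inserted into chunksInfoMap via addModuleToChunksInfoMap().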

for (const module of compilation.modules) {

let cacheGroups = this.options.getCacheGroups(module, context);

let cacheGroupIndex = 0;

for (const cacheGroupSource of cacheGroups) {

const cacheGroup = this._getCacheGroup(cacheGroupSource);

// ============Step 1============

const combs = cacheGroup.usedExports

? getCombsByUsedExports()

: getCombs();

for (const chunkCombination of combs) {

const count =

chunkCombination instanceof Chunk ? 1 : chunkCombination.size;

if (count < cacheGroup.minChunks) continue;

// ============Step 2============

const { chunks: selectedChunks, key: selectedChunksKey } =

getSelectedChunks(chunkCombination, cacheGroup.chunksFilter);

// ============Step 3============

addModuleToChunksInfoMap(

cacheGroup,

cacheGroupIndex,

selectedChunks,

selectedChunksKey,

module

);

}

cacheGroupIndex++;

}

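}

Each entry file forms one Chunk, so there are 4 Chunks in total. Because addModuleToChunksInfoMap() is driven module by module, the relationship between each module and the Chunks that contain it can be summarized as follows:

From the code above, for NormalModule="js-cookie", getCombsByUsedExports() returns 5 chunk collections:

[Set(3){app1,app2,app3}, Set(2){app1,app2}, app1, app2, app3]

Note: the Set(2){app1,app2} in chunkSetsByCount does not come from js-cookie itself. According to the figure above it is the chunk set of lodash, but it satisfies the isSubSet() condition.

The execution logic of getCombsByUsedExports() is shown in the figure below: the matching chunk collections are fetched through chunkSetsByCount.

chunkSetsByCount maps key=number of chunks to value=the chunk sets of that size. For example, the value under key=3 includes Set(3){app1, app2, app3}.

Because the code iterates over cacheGroups, we also have to consider which cacheGroups are hit.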
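The minChunks check applied to each combination can be sketched as follows, using the 5 js-cookie combinations listed above. This is a simplified illustration: chunk names stand in for Chunk instances, and `countOf`, `filterByMinChunks`, and `describe` are hypothetical helper names.

```javascript
// A string models a single Chunk; a Set models a chunk set.
const combs = [
  new Set(["app1", "app2", "app3"]),
  new Set(["app1", "app2"]),
  "app1",
  "app2",
  "app3"
];

// Mirrors `chunkCombination instanceof Chunk ? 1 : chunkCombination.size`.
const countOf = comb => (typeof comb === "string" ? 1 : comb.size);

// Keep only the combinations that reach the cache group's minChunks.
const filterByMinChunks = (combinations, minChunks) =>
  combinations.filter(comb => countOf(comb) >= minChunks);

const describe = list =>
  list.map(c => (typeof c === "string" ? c : [...c].join("+")));

console.log(describe(filterByMinChunks(combs, 3)));
console.log(describe(filterByMinChunks(combs, 2)));
console.log(describe(filterByMinChunks(combs, 1)));
```

A threshold of 3 leaves one combination, a threshold of 2 leaves two, and a threshold of 1 leaves all five.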

NormalModule="js-cookie"

- cacheGroup=test3, the combs are

combs = [["app1","app2","app3"],["app1","app2"],"app1","app2","app3"]

Iterating over combs with cacheGroup.minChunks=3, the data that reaches addModuleToChunksInfoMap() after filtering is

["app1","app2","app3"]

- cacheGroup=test2

combs = [["app1","app2","app3"],["app1","app2"],"app1","app2","app3"]

Iterating over combs with cacheGroup.minChunks=2, the data that reaches addModuleToChunksInfoMap() after filtering is

["app1","app2","app3"]

["app1","app2"]

- cacheGroup=default

combs = [["app1","app2","app3"],["app1","app2"],"app1","app2","app3"]

Iterating over combs with cacheGroup.minChunks=2, the data that reaches addModuleToChunksInfoMap() after filtering is

["app1","app2","app3"]

["app1","app2"]

- cacheGroup=defaultVendors

combs = [["app1","app2","app3"],["app1","app2"],"app1","app2","app3"]

Iterating over combs with cacheGroup.minChunks=1 (defaultVendors sets no minChunks of its own, so it inherits splitChunks.minChunks, which defaults to 1), the data that reaches addModuleToChunksInfoMap() after filtering is

["app1","app2","app3"]

["app1","app2"]

["app1"]

["app2"]

["app3"]

The key of chunksInfoMap

A key property runs through the whole flow.

One example is chunksKey in the code below:

const getCombs = memoize(() => {

const chunks = chunkGraph.getModuleChunksIterable(module);

const chunksKey = getKey(chunks);

return getCombinations(chunksKey);

});

Another is the selectedChunksKey passed to addModuleToChunksInfoMap():

const getSelectedChunks = (chunks, chunkFilter) => {

let entry = selectedChunksCacheByChunksSet.get(chunks);

if (entry === undefined) {

entry = new WeakMap();

selectedChunksCacheByChunksSet.set(chunks, entry);

}

/** @type {SelectedChunksResult} */

let entry2 = entry.get(chunkFilter);

if (entry2 === undefined) {

/** @type {Chunk[]} */

const selectedChunks = [];

if (chunks instanceof Chunk) {

if (chunkFilter(chunks)) selectedChunks.push(chunks);

} else {

for (const chunk of chunks) {

if (chunkFilter(chunk)) selectedChunks.push(chunk);

}

}

entry2 = {

chunks: selectedChunks,

key: getKey(selectedChunks)

};

entry.set(chunkFilter, entry2);

}

return entry2;

};

const { chunks: selectedChunks, key: selectedChunksKey } =

getSelectedChunks(chunkCombination, cacheGroup.chunksFilter);

addModuleToChunksInfoMap(

cacheGroup,

cacheGroupIndex,

selectedChunks,

selectedChunksKey,

module

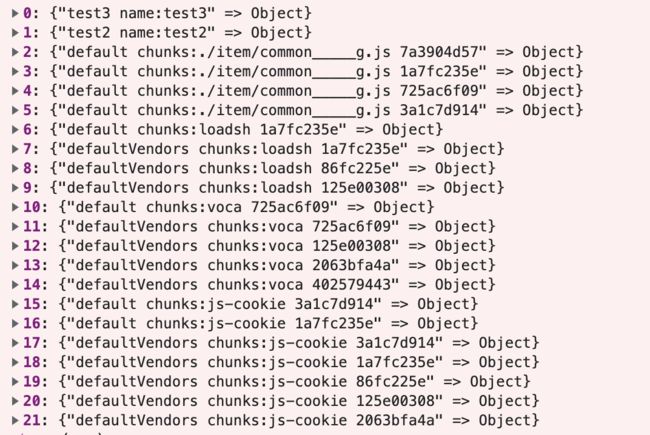

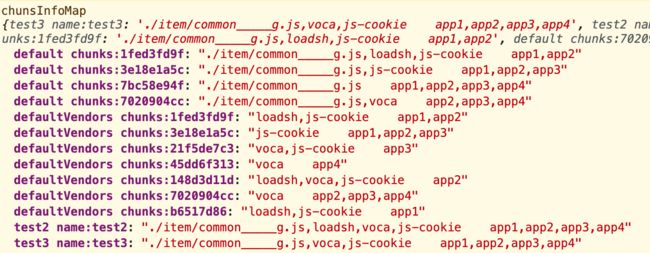

);我们将addModuleToChunksInfoMap()最终形成的数据chunksInfoMap�改造下,如下所示,将对应的selectedChunksKey换成当前module的路径

const key =

cacheGroup.key +

(name

? ` name:${name}`

: ` chunks:${keyToString(selectedChunksKey)}`);

// 如果没有name,则添加对应的module.rawRequest

const key =

cacheGroup.key +

(name

? ` name:${name}`

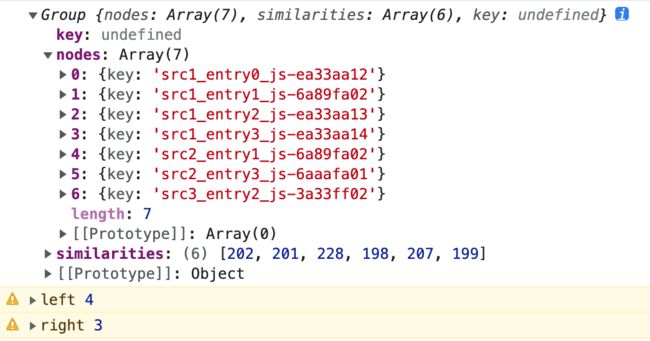

: ` chunks:${module.rawRequestkey} ${ToString(selectedChunksKey)}`);最终形成的chunksInfoMap如下所示,拿我们上面的举例js-cookie为参考,最终会根据不同的chunks集合形成不同的selectedChunksKey,最终不同chunks数据集合形成chunksInfoMap中不同key的value一部分,而不是把所有不同chunks数据集合都塞入到同一个key中

2.3 queue phase: filter the chunksInfoMap data by minSize and minSizeReduction

compilation.hooks.optimizeChunks.tap(

{

name: "SplitChunksPlugin",

stage: STAGE_ADVANCED

},

chunks => {

logger.time("prepare");

//...

logger.timeEnd("prepare");

logger.time("modules");

for (const module of compilation.modules) {

//...

}

logger.timeEnd("modules");

logger.time("queue");

for (const [key, info] of chunksInfoMap) {

//...

}

while (chunksInfoMap.size > 0) {

//...

}

logger.timeEnd("queue");

logger.time("maxSize");

for (const chunk of Array.from(compilation.chunks)) {

//...

}

logger.timeEnd("maxSize");

}

);

maxSize takes priority over maxInitialRequest/maxAsyncRequests. The actual priority order is maxInitialRequest/maxAsyncRequests < maxSize < minSize.

// Filter items were size < minSize

for (const [key, info] of chunksInfoMap) {

if (removeMinSizeViolatingModules(info)) {

chunksInfoMap.delete(key);

} else if (

!checkMinSizeReduction(

info.sizes,

info.cacheGroup.minSizeReduction,

info.chunks.size

)

) {

chunksInfoMap.delete(key);

}

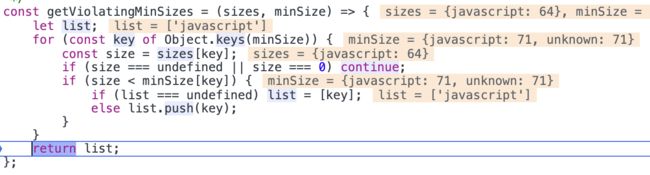

}removeMinSizeViolatingModules(): 如下面代码块和图片所示,通过cacheGroup.minSize判断目前info的module类型,比如javascript的总体大小size是否小于cacheGroup.minSize,如果小于,则剔除这些类型的modules,不形成新的chunk了

在上面拼凑info.sizes[type]时,会将同种类型的size累加

const removeMinSizeViolatingModules = info => {

const violatingSizes = getViolatingMinSizes(

info.sizes,

info.cacheGroup.minSize

);

if (violatingSizes === undefined) return false;

removeModulesWithSourceType(info, violatingSizes);

return info.modules.size === 0;

};

const removeModulesWithSourceType = (info, sourceTypes) => {

for (const module of info.modules) {

const types = module.getSourceTypes();

if (sourceTypes.some(type => types.has(type))) {

info.modules.delete(module);

for (const type of types) {

info.sizes[type] -= module.size(type);

}

}

}

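};

checkMinSizeReduction(): this involves the cacheGroup.minSizeReduction option, the minimum size reduction (in bytes) of the main chunk (bundle) required for a chunk to be generated. If splitting into a chunk does not reduce the size of the main chunk (bundle) by the given number of bytes, the split does not happen, even if splitChunks.minSize is satisfied.

To generate a chunk, both splitChunks.minSizeReduction and splitChunks.minSize must be satisfied. If extracting the modules would shrink the main chunk by less than cacheGroup.minSizeReduction, no new chunk is created.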
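A small numeric example makes the rule concrete. The function below restates the check from this section under a hypothetical name, `passesMinSizeReduction`; the byte values are made up purely for illustration.

```javascript
// The saving is approximated as (size of the split-out modules) * (number of chunks).
const passesMinSizeReduction = (sizes, minSizeReduction, chunkCount) => {
  for (const key of Object.keys(minSizeReduction)) {
    const size = sizes[key];
    if (size === undefined || size === 0) continue;
    if (size * chunkCount < minSizeReduction[key]) return false;
  }
  return true;
};

// Hypothetical numbers, chosen only for illustration.
const sizes = { javascript: 6000 };
const minSizeReduction = { javascript: 20000 };

// 6000 * 4 = 24000, which reaches the 20000 threshold.
console.log(passesMinSizeReduction(sizes, minSizeReduction, 4));
// 6000 * 3 = 18000, which stays below the 20000 threshold.
console.log(passesMinSizeReduction(sizes, minSizeReduction, 3));
```

The same modules can therefore pass or fail depending only on how many chunks they would be extracted from.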

const checkMinSizeReduction = (sizes, minSizeReduction, chunkCount) => {

// minSizeReduction has the same shape as minSize, e.g. { javascript: 200, unknown: 200 }

for (const key of Object.keys(minSizeReduction)) {

const size = sizes[key];

if (size === undefined || size === 0) continue;

if (size * chunkCount < minSizeReduction[key]) return false;

}

return true;

};

2.4 queue phase: iterate over chunksInfoMap and reorganize the chunks according to the rules

compilation.hooks.optimizeChunks.tap(

{

name: "SplitChunksPlugin",

stage: STAGE_ADVANCED

},

chunks => {

logger.time("prepare");

//...

logger.timeEnd("prepare");

logger.time("modules");

for (const module of compilation.modules) {

//...

}

logger.timeEnd("modules");

logger.time("queue");

for (const [key, info] of chunksInfoMap) {

//...

}

while (chunksInfoMap.size > 0) {

//...

}

logger.timeEnd("queue");

logger.time("maxSize");

for (const chunk of Array.from(compilation.chunks)) {

//...

}

logger.timeEnd("maxSize");

}

);

Each element info of chunksInfoMap is essentially a cacheGroup that carries its chunks and modules.

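while (chunksInfoMap.size > 0) {

// use compareEntries to find the highest-priority entry, bestEntry

// ...

}

// ...handle maxSize, covered in the next section

2.4.1 compareEntries: find the highest-priority item in chunksInfoMap

The goal is to determine which cacheGroup has the higher priority. Some chunks match several cacheGroups; the one with the higher priority is split first and produces output first.

Two infos are ordered by the properties listed below, applied from top to bottom, to obtain the highest-priority info, that is, the best item of chunksInfoMap.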

let bestEntryKey;

let bestEntry;

for (const pair of chunksInfoMap) {

const key = pair[0];

const info = pair[1];

if (

bestEntry === undefined ||

compareEntries(bestEntry, info) < 0

) {

bestEntry = info;

bestEntryKey = key;

}

}

const item = bestEntry;

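chunksInfoMap.delete(bestEntryKey);

The comparison itself lives in compareEntries(). From the code we can see:

- priority: the larger the value, the higher the priority

- chunks.size: the more chunks, the higher the priority

- size reduction: the larger totalSize(a.sizes) * (a.chunks.size - 1), the higher the priority

- cache group index: the smaller the value, the higher the priority

- number of modules: the larger the value, the higher the priority

- module identifiers: the larger the value, the higher the priority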
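The selection can be tried out with a reduced comparator. This is a sketch, not the plugin's comparator: it covers only the first four criteria, the entries are flat objects with made-up values, and `compareEntriesSketch` and `pickBest` are hypothetical names.

```javascript
const totalSize = sizes => Object.values(sizes).reduce((sum, n) => sum + n, 0);

// First four criteria only: priority, chunk count, size reduction, cache group index.
const compareEntriesSketch = (a, b) =>
  a.priority - b.priority ||
  a.chunkCount - b.chunkCount ||
  totalSize(a.sizes) * (a.chunkCount - 1) - totalSize(b.sizes) * (b.chunkCount - 1) ||
  b.cacheGroupIndex - a.cacheGroupIndex;

// Same scan as the loop in the text: keep the entry that compares highest.
const pickBest = entries => {
  let bestKey;
  let best;
  for (const [key, info] of entries) {
    if (best === undefined || compareEntriesSketch(best, info) < 0) {
      best = info;
      bestKey = key;
    }
  }
  return bestKey;
};

// Hypothetical entries; keys and sizes are illustrative only.
const entries = new Map([
  ["test2 name:test2", { priority: 2, chunkCount: 4, sizes: { javascript: 9000 }, cacheGroupIndex: 3 }],
  ["test3 name:test3", { priority: 3, chunkCount: 3, sizes: { javascript: 5000 }, cacheGroupIndex: 2 }],
  ["default", { priority: -20, chunkCount: 4, sizes: { javascript: 9000 }, cacheGroupIndex: 1 }]
]);

console.log(pickBest(entries));
```

Priority is decisive here: the test3 entry wins although it spans fewer chunks and fewer bytes than the other two.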

const compareEntries = (a, b) => {

// 1. by priority

const diffPriority = a.cacheGroup.priority - b.cacheGroup.priority;

if (diffPriority) return diffPriority;

// 2. by number of chunks

const diffCount = a.chunks.size - b.chunks.size;

if (diffCount) return diffCount;

// 3. by size reduction

const aSizeReduce = totalSize(a.sizes) * (a.chunks.size - 1);

const bSizeReduce = totalSize(b.sizes) * (b.chunks.size - 1);

const diffSizeReduce = aSizeReduce - bSizeReduce;

if (diffSizeReduce) return diffSizeReduce;

// 4. by cache group index

const indexDiff = b.cacheGroupIndex - a.cacheGroupIndex;

if (indexDiff) return indexDiff;

// 5. by number of modules (to be able to compare by identifier)

const modulesA = a.modules;

const modulesB = b.modules;

const diff = modulesA.size - modulesB.size;

if (diff) return diff;

// 6. by module identifiers

modulesA.sort();

modulesB.sort();

return compareModuleIterables(modulesA, modulesB);

};

Once bestEntry is found, it is deleted from chunksInfoMap and then processed.

let bestEntryKey;

let bestEntry;

for (const pair of chunksInfoMap) {

const key = pair[0];

const info = pair[1];

if (

bestEntry === undefined ||

compareEntries(bestEntry, info) < 0

) {

bestEntry = info;

bestEntryKey = key;

}

}

const item = bestEntry;

chunksInfoMap.delete(bestEntryKey);

2.4.2 Processing the highest-priority item taken from chunksInfoMap

The highest-priority chunksInfoMap item is called bestEntry.

isExistingChunk

The isExistingChunk check comes first: if the cacheGroup has a name and a chunk with that name already exists, that chunk is reused directly.

This relates to the webpack.config.js option reuseExistingChunk (see the reuseExistingChunk example). Its purpose is to reuse an existing chunk instead of creating a new one.

With reuseExistingChunk=false:

Chunk 1 (named one): modules A

Chunk 2 (named two): no modules (removed by optimization)

Chunk 3 (named one~two): modules B, C

With reuseExistingChunk=true:

Chunk 1 (named one): modules A

Chunk 2 (named two): modules B, C

If the cacheGroup has no name, item.chunks is searched for a chunk that can be reused.

In the end, the reusable chunk is assigned to newChunk and isExistingChunk is set to true.

let chunkName = item.name;

let newChunk;

if (chunkName) {

const chunkByName = compilation.namedChunks.get(chunkName);

if (chunkByName !== undefined) {

newChunk = chunkByName;

const oldSize = item.chunks.size;

item.chunks.delete(newChunk);

isExistingChunk = item.chunks.size !== oldSize;

}

} else if (item.cacheGroup.reuseExistingChunk) {

outer: for (const chunk of item.chunks) {

if (

chunkGraph.getNumberOfChunkModules(chunk) !==

item.modules.size

) {

continue;

}

if (

item.chunks.size > 1 &&

chunkGraph.getNumberOfEntryModules(chunk) > 0

) {

continue;

}

for (const module of item.modules) {

if (!chunkGraph.isModuleInChunk(module, chunk)) {

continue outer;

}

}

if (!newChunk || !newChunk.name) {

newChunk = chunk;

} else if (

chunk.name &&

chunk.name.length < newChunk.name.length

) {

newChunk = chunk;

} else if (

chunk.name &&

chunk.name.length === newChunk.name.length &&

chunk.name < newChunk.name

) {

newChunk = chunk;

}

}

if (newChunk) {

item.chunks.delete(newChunk);

chunkName = undefined;

isExistingChunk = true;

isReusedWithAllModules = true;

}

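}

enforceSizeThreshold

The webpack.config.js option splitChunks.enforceSizeThreshold

This is the size threshold at which splitting is enforced: once a chunk reaches enforceSizeThreshold, the other restrictions (minRemainingSize, maxAsyncRequests, maxInitialRequests) are ignored.

As the source excerpt in this section shows, if no item.sizes[i] is below enforceSizeThreshold, then enforced=true and the subsequent maxInitialRequests and maxAsyncRequests checks are skipped.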

const hasNonZeroSizes = sizes => {

for (const key of Object.keys(sizes)) {

if (sizes[key] > 0) return true;

}

return false;

};

const checkMinSize = (sizes, minSize) => {

for (const key of Object.keys(minSize)) {

const size = sizes[key];

if (size === undefined || size === 0) continue;

if (size < minSize[key]) return false;

}

return true;

};

// _conditionalEnforce: hasNonZeroSizes(enforceSizeThreshold)

const enforced =

item.cacheGroup._conditionalEnforce &&

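checkMinSize(item.sizes, item.cacheGroup.enforceSizeThreshold);

maxInitialRequests and maxAsyncRequests

- maxInitialRequests: the maximum number of parallel requests at an entry point

- maxAsyncRequests: the maximum number of parallel requests when loading on demand

maxSize takes priority over maxInitialRequest/maxAsyncRequests. The actual priority order is maxInitialRequest/maxAsyncRequests < maxSize < minSize.

For each chunk in usedChunks, the number of requests its chunkGroups already involve is compared against cacheGroup.maxInitialRequests or cacheGroup.maxAsyncRequests. If the limit has been reached, the chunk is removed from usedChunks.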
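Which limit applies to which chunk can be sketched with mock chunks. The objects below expose only the two predicates the selection needs; `pickMaxRequests`, `shouldDrop`, and the limit values are hypothetical and chosen for illustration.

```javascript
// Choose the applicable limit from the chunk's role.
const pickMaxRequests = (chunk, { maxInitialRequests, maxAsyncRequests }) => {
  if (chunk.isOnlyInitial()) return maxInitialRequests;
  if (chunk.canBeInitial()) return Math.min(maxInitialRequests, maxAsyncRequests);
  return maxAsyncRequests;
};

// A chunk is dropped once its request count has reached a finite limit.
const shouldDrop = (requests, maxRequests) =>
  Number.isFinite(maxRequests) && requests >= maxRequests;

const limits = { maxInitialRequests: 30, maxAsyncRequests: 5 }; // illustrative values

const entryChunk = { isOnlyInitial: () => true, canBeInitial: () => true };
const mixedChunk = { isOnlyInitial: () => false, canBeInitial: () => true };
const asyncChunk = { isOnlyInitial: () => false, canBeInitial: () => false };

console.log(pickMaxRequests(entryChunk, limits));
console.log(pickMaxRequests(mixedChunk, limits));
console.log(pickMaxRequests(asyncChunk, limits));
console.log(shouldDrop(5, pickMaxRequests(asyncChunk, limits)));
```

A chunk that is both initial and async is held to the stricter of the two limits, and an infinite limit never drops a chunk.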

const usedChunks = new Set(item.chunks);

if (

!enforced &&

(Number.isFinite(item.cacheGroup.maxInitialRequests) ||

Number.isFinite(item.cacheGroup.maxAsyncRequests))

) {

for (const chunk of usedChunks) {

// respect max requests

const maxRequests = chunk.isOnlyInitial()

? item.cacheGroup.maxInitialRequests

: chunk.canBeInitial()

? Math.min(

item.cacheGroup.maxInitialRequests,

item.cacheGroup.maxAsyncRequests

)

: item.cacheGroup.maxAsyncRequests;

if (

isFinite(maxRequests) &&

getRequests(chunk) >= maxRequests

) {

usedChunks.delete(chunk);

}

}

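}

chunkGraph.isModuleInChunk(module, chunk)

Some chunks are pruned here. As splitting proceeds iteratively, the modules of one bestEntry may already have been taken by another bestEntry while its chunks have not been cleaned up yet. chunkGraph.isModuleInChunk detects chunks that no longer contain any module of the bestEntry; such chunks are deleted from usedChunks.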

outer: for (const chunk of usedChunks) {

for (const module of item.modules) {

if (chunkGraph.isModuleInChunk(module, chunk)) continue outer;

}

usedChunks.delete(chunk);

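}

usedChunks.size

If some chunks were removed from usedChunks, addModuleToChunksInfoMap() is called again to create a new element in chunksInfoMap: the entry is re-added without the rejected chunks, forming a new cacheGroup entry.

The bestEntry being processed was deleted from chunksInfoMap at the start of the iteration. After this step it is re-added to chunksInfoMap, and the loop processes it again.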

// Were some (invalid) chunks removed from usedChunks?

// => readd all modules to the queue, as things could have been changed

if (usedChunks.size < item.chunks.size) {

if (isExistingChunk) usedChunks.add(newChunk);

if (usedChunks.size >= item.cacheGroup.minChunks) {

const chunksArr = Array.from(usedChunks);

for (const module of item.modules) {

addModuleToChunksInfoMap(

item.cacheGroup,

item.cacheGroupIndex,

chunksArr,

getKey(usedChunks),

module

);

}

}

continue;

}

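minRemainingSize

The webpack.config.js option splitChunks.minRemainingSize takes effect only when a single chunk remains.

It avoids zero-sized modules by ensuring that the minimum size of the chunk remaining after the split stays above a limit. In "development" mode it defaults to 0.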

const getViolatingMinSizes = (sizes, minSize) => {

let list;

for (const key of Object.keys(minSize)) {

const size = sizes[key];

if (size === undefined || size === 0) continue;

if (size < minSize[key]) {

if (list === undefined) list = [key];

else list.push(key);

}

}

return list;

};

// Validate minRemainingSize constraint when a single chunk is left over

if (

!enforced &&

item.cacheGroup._validateRemainingSize &&

usedChunks.size === 1

) {

const [chunk] = usedChunks;

let chunkSizes = Object.create(null);

for (const module of chunkGraph.getChunkModulesIterable(chunk)) {

if (!item.modules.has(module)) {

for (const type of module.getSourceTypes()) {

chunkSizes[type] =

(chunkSizes[type] || 0) + module.size(type);

}

}

}

const violatingSizes = getViolatingMinSizes(

chunkSizes,

item.cacheGroup.minRemainingSize

);

if (violatingSizes !== undefined) {

const oldModulesSize = item.modules.size;

removeModulesWithSourceType(item, violatingSizes);

if (

item.modules.size > 0 &&

item.modules.size !== oldModulesSize

) {

// queue this item again to be processed again

// without violating modules

chunksInfoMap.set(bestEntryKey, item);

}

continue;

}

}

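As the code above shows, getViolatingMinSizes() first returns the source types whose remaining size is too small. removeModulesWithSourceType() then deletes the modules of those types (see the code below) and updates the sizes property accordingly. Finally, the updated item is put back into chunksInfoMap.

At this point the chunksInfoMap entry under bestEntryKey no longer contains the undersized modules.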

const removeModulesWithSourceType = (info, sourceTypes) => {

for (const module of info.modules) {

const types = module.getSourceTypes();

if (sourceTypes.some(type => types.has(type))) {

info.modules.delete(module);

for (const type of types) {

info.sizes[type] -= module.size(type);

}

}

}

};
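The minRemainingSize check described above boils down to two steps: add up what stays behind in the chunk, then compare it with the limit per source type. The sketch below uses hypothetical module records ({ name, type, size }) and made-up sizes; unlike getViolatingMinSizes(), the simplified `violatingTypes` returns an empty array instead of undefined when nothing violates the limit.

```javascript
// Sum the sizes of the modules that stay in the chunk after the split.
const remainingSizes = (chunkModules, splitOut) => {
  const sizes = Object.create(null);
  for (const module of chunkModules) {
    if (splitOut.has(module.name)) continue;
    sizes[module.type] = (sizes[module.type] || 0) + module.size;
  }
  return sizes;
};

// Source types whose remaining size is non-zero but below the limit.
const violatingTypes = (sizes, minSize) =>
  Object.keys(minSize).filter(type => {
    const size = sizes[type];
    return size !== undefined && size !== 0 && size < minSize[type];
  });

// Hypothetical chunk content; sizes are illustrative only.
const chunkModules = [
  { name: "entry1.js", type: "javascript", size: 300 },
  { name: "js-cookie", type: "javascript", size: 4000 },
  { name: "lodash", type: "javascript", size: 70000 }
];
const splitOut = new Set(["js-cookie", "lodash"]);

const left = remainingSizes(chunkModules, splitOut);
console.log(left.javascript);
console.log(violatingTypes(left, { javascript: 1000 }));
```

Only 300 bytes of javascript would remain in the original chunk, which is below the assumed limit of 1000, so the javascript modules would be withdrawn from the split.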

Creating newChunk and chunk.split(newChunk)

If no Chunk can be reused, compilation.addChunk(chunkName) creates a new one.

// Create the new chunk if not reusing one

if (newChunk === undefined) {

newChunk = compilation.addChunk(chunkName);

}

// Walk through all chunks

for (const chunk of usedChunks) {

// Add graph connections for splitted chunk

chunk.split(newChunk);

}

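From the analysis above, isReusedWithAllModules=true means the cacheGroup has no name and a reusable Chunk was found by scanning item.chunks. In that case connectChunkAndModule() is not needed; it is enough to disconnect all item.modules from the chunks in usedChunks.

When isReusedWithAllModules=false, all item.modules are disconnected from the chunks in usedChunks and connected to newChunk.

For example, newChunk is inserted into the ChunkGroup of the entry Chunk app3 to establish their dependency. The code generated later can then express this relationship correctly: the app3 Chunk can load newChunk, which was after all split out of the 4 Chunks app1, app2, app3, and app4.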

if (!isReusedWithAllModules) {

// Add all modules to the new chunk

for (const module of item.modules) {

if (!module.chunkCondition(newChunk, compilation)) continue;

// Add module to new chunk

chunkGraph.connectChunkAndModule(newChunk, module);

// Remove module from used chunks

for (const chunk of usedChunks) {

chunkGraph.disconnectChunkAndModule(chunk, module);

}

}

} else {

// Remove all modules from used chunks

for (const module of item.modules) {

for (const chunk of usedChunks) {

chunkGraph.disconnectChunkAndModule(chunk, module);

}

}

}
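The two branches above can be sketched with a toy graph. This is only an illustration: the Map-based graph, the moveModules helper and the module names are assumptions, and the real code additionally skips modules whose chunkCondition() rejects the new chunk.

```javascript
// Toy stand-in for ChunkGraph: chunk name -> Set of module names (assumption).
const graph = new Map([
  ["app1", new Set(["lodash", "entry1"])],
  ["app2", new Set(["lodash", "entry2"])]
]);

const connectChunkAndModule = (chunk, module) => {
  if (!graph.has(chunk)) graph.set(chunk, new Set());
  graph.get(chunk).add(module);
};

const disconnectChunkAndModule = (chunk, module) => {
  graph.get(chunk).delete(module);
};

// Hypothetical helper combining both branches of the snippet above.
const moveModules = (modules, usedChunks, newChunk, isReusedWithAllModules) => {
  for (const module of modules) {
    // A reused chunk already holds every module, so a new connection is
    // only needed when the chunk is not reused.
    if (!isReusedWithAllModules) connectChunkAndModule(newChunk, module);
    for (const chunk of usedChunks) disconnectChunkAndModule(chunk, module);
  }
};

moveModules(["lodash"], ["app1", "app2"], "test2", false);
console.log(graph);
```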

The current newChunk is then recorded in maxSizeQueueMap so that the later maxSize phase can process it.

if (

Object.keys(item.cacheGroup.maxAsyncSize).length > 0 ||

Object.keys(item.cacheGroup.maxInitialSize).length > 0

) {

const oldMaxSizeSettings = maxSizeQueueMap.get(newChunk);

maxSizeQueueMap.set(newChunk, {

minSize: oldMaxSizeSettings

? combineSizes(

oldMaxSizeSettings.minSize,

item.cacheGroup._minSizeForMaxSize,

Math.max

)

: item.cacheGroup.minSize,

maxAsyncSize: oldMaxSizeSettings

? combineSizes(

oldMaxSizeSettings.maxAsyncSize,

item.cacheGroup.maxAsyncSize,

Math.min

)

: item.cacheGroup.maxAsyncSize,

maxInitialSize: oldMaxSizeSettings

? combineSizes(

oldMaxSizeSettings.maxInitialSize,

item.cacheGroup.maxInitialSize,

Math.min

)

: item.cacheGroup.maxInitialSize,

automaticNameDelimiter: item.cacheGroup.automaticNameDelimiter,

keys: oldMaxSizeSettings

? oldMaxSizeSettings.keys.concat(item.cacheGroup.key)

: [item.cacheGroup.key]

});

}
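The merging rule in the snippet above (Math.max for minSize, Math.min for the max sizes) can be illustrated with a small sketch. The combineSizes body below is an assumption about what such a helper does, written from its call sites: it merges two per-type size objects and applies the combine function to types present in both. All numbers are made up.

```javascript
// Assumed behaviour: merge two { type: size } objects key by key.
const combineSizes = (a, b, combine) => {
  const result = { ...a };
  for (const key of Object.keys(b)) {
    result[key] = Object.prototype.hasOwnProperty.call(a, key)
      ? combine(a[key], b[key])
      : b[key];
  }
  return result;
};

// Settings already queued for the chunk by an earlier cacheGroup (made up).
const oldMaxSizeSettings = {
  minSize: { javascript: 10 },
  maxAsyncSize: { javascript: 200 }
};

// Settings of the cacheGroup being processed now (made up).
const cacheGroup = {
  _minSizeForMaxSize: { javascript: 30 },
  maxAsyncSize: { javascript: 120, css: 300 }
};

// The strictest constraint wins: the largest minimum and the smallest maximum.
const minSize = combineSizes(
  oldMaxSizeSettings.minSize,
  cacheGroup._minSizeForMaxSize,
  Math.max
);
const maxAsyncSize = combineSizes(
  oldMaxSizeSettings.maxAsyncSize,
  cacheGroup.maxAsyncSize,
  Math.min
);

console.log(minSize, maxAsyncSize);
```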

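Removing info.modules[i] from the other chunksInfoMap items

The item being processed is the highest-priority entry of chunksInfoMap. Once it has been handled, every other chunksInfoMap item is checked to see whether its info.chunks contains any chunk used by this highest-priority item.

If so, info.modules is compared with item.modules, and the modules already taken by item are deleted from that other item's info.modules.

After the deletion, info.modules.size === 0 and checkMinSizeReduction() decide whether the cacheGroup entry should be removed from chunksInfoMap altogether.

checkMinSizeReduction() relates to the cacheGroup.minSizeReduction option: the minimum size reduction (in bytes) of the main chunk (bundle) that is required for a chunk to be generated. In other words, if splitting into a chunk does not reduce the size of the main chunk (bundle) by the given number of bytes, the split does not happen, even if it satisfies splitChunks.minSize.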

const isOverlap = (a, b) => {

for (const item of a) {

if (b.has(item)) return true;

}

return false;

};

// remove all modules from other entries and update size

for (const [key, info] of chunksInfoMap) {

if (isOverlap(info.chunks, usedChunks)) {

// update modules and total size

// may remove it from the map when < minSize

let updated = false;

for (const module of item.modules) {

if (info.modules.has(module)) {

// remove module

info.modules.delete(module);

// update size

for (const key of module.getSourceTypes()) {

info.sizes[key] -= module.size(key);

}

updated = true;

}

}

if (updated) {

if (info.modules.size === 0) {

chunksInfoMap.delete(key);

continue;

}

if (

removeMinSizeViolatingModules(info) ||

!checkMinSizeReduction(

info.sizes,

info.cacheGroup.minSizeReduction,

info.chunks.size

)

) {

chunksInfoMap.delete(key);

continue;

}

}

}

}
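The pruning loop above can be tried on mock data. In this sketch modules and chunks are plain strings, moduleSize is an invented lookup table, and the body of checkMinSizeReduction() is an assumption (per type, size multiplied by the chunk count must reach minSizeReduction). The removeMinSizeViolatingModules() step of the real code is left out.

```javascript
const isOverlap = (a, b) => {
  for (const x of a) if (b.has(x)) return true;
  return false;
};

// Assumed check: the total reduction across all chunks must be large enough.
const checkMinSizeReduction = (sizes, minSizeReduction, chunkCount) => {
  for (const key of Object.keys(minSizeReduction)) {
    const size = sizes[key];
    if (size === undefined || size === 0) continue;
    if (size * chunkCount < minSizeReduction[key]) return false;
  }
  return true;
};

const moduleSize = { lodash: 100, voca: 50 }; // made-up sizes
const entry = (chunks, modules, javascript) => ({
  chunks: new Set(chunks),
  modules: new Set(modules),
  sizes: { javascript }
});
const chunksInfoMap = new Map([
  ["test2", entry(["app1", "app2"], ["lodash", "voca"], 150)],
  ["default", entry(["app3"], ["voca"], 50)],
  ["other", entry(["app1"], ["voca"], 50)]
]);

// The item that was just turned into a chunk took "voca" out of app1/app2.
const item = { modules: new Set(["voca"]) };
const usedChunks = new Set(["app1", "app2"]);

for (const [key, info] of chunksInfoMap) {
  if (!isOverlap(info.chunks, usedChunks)) continue;
  let updated = false;
  for (const module of item.modules) {
    if (info.modules.delete(module)) {
      info.sizes.javascript -= moduleSize[module];
      updated = true;
    }
  }
  if (!updated) continue;
  if (
    info.modules.size === 0 ||
    !checkMinSizeReduction(info.sizes, { javascript: 1 }, info.chunks.size)
  ) {
    chunksInfoMap.delete(key);
  }
}

console.log([...chunksInfoMap.keys()]);
```

Here "other" loses its only module and is dropped, "test2" shrinks to lodash, and "default" is untouched because it shares no chunk with usedChunks.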

2.5 maxSize phase: re-splitting oversized chunks according to maxSize

compilation.hooks.optimizeChunks.tap(

{

name: "SplitChunksPlugin",

stage: STAGE_ADVANCED

},

chunks => {

logger.time("prepare");

//...

logger.timeEnd("prepare");

logger.time("modules");

for (const module of compilation.modules) {

//...

}

logger.timeEnd("modules");

logger.time("queue");

for (const [key, info] of chunksInfoMap) {

//...

}

while (chunksInfoMap.size > 0) {

//...

}

logger.timeEnd("queue");

logger.time("maxSize");

for (const chunk of Array.from(compilation.chunks)) {

//...

}

logger.timeEnd("maxSize");

}

);

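maxSize takes higher priority than maxInitialRequests/maxAsyncRequests. The actual priority is maxInitialRequests/maxAsyncRequests < maxSize < minSize.

maxSize tells webpack to try to split chunks bigger than maxSize bytes into smaller parts. These parts will be at least minSize (next to maxSize) in size.

The maxSize option is intended to be used with HTTP/2 and long-term caching. It increases the request count for better caching. It can also be used to decrease the file size for faster rebuilding.

After the chunks produced by cacheGroups have been merged in alongside the chunks formed by entries and async imports, maxSize is validated: any chunk that is still too large has to be split again.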

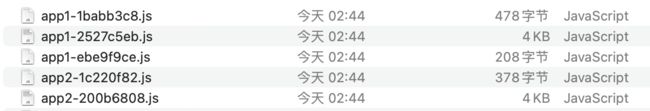

For example, the configuration below declares maxSize: 50

splitChunks: {

minSize: 1,

chunks: 'all',

maxInitialRequests: 10,

maxAsyncRequests: 10,

maxSize: 50,

cacheGroups: {

test3: {

chunks: 'all',

minChunks: 3,

name: "test3",

priority: 3

},

test2: {

chunks: 'all',

minChunks: 2,

name: "test2",

priority: 2

}

}

}

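In the final output, where there used to be only app1.js and app2.js, the maxSize limit causes them to be split into three app1-xxx.js files and two app2-xxx.js files.

Open questions

- How does maxSize split a chunk? Is NormalModule the unit of splitting?

- If maxSize is very small, is there a limit on the number of parts?

- How are the split files brought back together at run time? Through the runtime code, or through the chunkGroup, with one chunkGroup holding several chunks?

- How does maxSize handle uneven splits? For instance, when splitting into two parts, how is it ensured that both parts are larger than minSize and smaller than maxSize?

The source analysis that follows is organized around these questions.

As the code below shows, deterministicGroupingForModules splits a chunk and returns several groups in results.

If results.length <= 1, nothing needs to be split and the chunk is left alone.

If results.length > 1:

- each newPart that was split off has to be inserted into the ChunkGroup(s) of the original chunk

- each newPart has to be connected to its modules: chunkGraph.connectChunkAndModule(newPart, module)

- the original large chunk has to be disconnected from the modules that now belong to newPart: chunkGraph.disconnectChunkAndModule(chunk, module)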

//node_modules/webpack/lib/optimize/SplitChunksPlugin.js

for (const chunk of Array.from(compilation.chunks)) {

//...

const results = deterministicGroupingForModules({ ...});

if (results.length <= 1) {

continue;

}

for (let i = 0; i < results.length; i++) {

const group = results[i];

//...

if (i !== results.length - 1) {

const newPart = compilation.addChunk(name);

chunk.split(newPart);

newPart.chunkReason = chunk.chunkReason;

// Add all modules to the new chunk

for (const module of group.items) {

if (!module.chunkCondition(newPart, compilation)) {

continue;

}

// Add module to new chunk

chunkGraph.connectChunkAndModule(newPart, module);

// Remove module from used chunks

chunkGraph.disconnectChunkAndModule(chunk, module);

}

} else {

// change the chunk to be a part

chunk.name = name;

}

}

}

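2.5.1 deterministicGroupingForModules: the core splitting method

The smallest unit of splitting is the NormalModule. If a single NormalModule is larger than maxSize (and not smaller than minSize), it becomes a group of its own, that is, a new chunk.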

const nodes = Array.from(

items,

item => new Node(item, getKey(item), getSize(item))

);

for (const node of nodes) {

if (isTooBig(node.size, maxSize) && !isTooSmall(node.size, minSize)) {

result.push(new Group([node], []));

} else {

//....

}

}

Otherwise, when a single NormalModule is not larger than maxSize (or is smaller than minSize), it is added to initialNodes.

for (const node of nodes) {

if (isTooBig(node.size, maxSize) && !isTooSmall(node.size, minSize)) {

result.push(new Group([node], []));

} else {

initialNodes.push(node);

}

}
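The first pass can be sketched as follows. The isTooBig() and isTooSmall() bodies are assumptions modeled on getTooSmallTypes() quoted later in this article (per-type comparison, zero sizes skipped); the partition helper, the node keys and the sizes are made up.

```javascript
// Assumed: true if any non-zero type exceeds its configured maximum.
const isTooBig = (size, maxSize) => {
  for (const key of Object.keys(size)) {
    const s = size[key];
    if (s === 0) continue;
    const max = maxSize[key];
    if (typeof max === "number" && s > max) return true;
  }
  return false;
};

// Assumed: true if any non-zero type is below its configured minimum.
const isTooSmall = (size, minSize) => {
  for (const key of Object.keys(size)) {
    const s = size[key];
    if (s === 0) continue;
    const min = minSize[key];
    if (typeof min === "number" && s < min) return true;
  }
  return false;
};

// Hypothetical helper wrapping the loop shown above.
const partition = (nodes, minSize, maxSize) => {
  const result = [];
  const initialNodes = [];
  for (const node of nodes) {
    if (isTooBig(node.size, maxSize) && !isTooSmall(node.size, minSize)) {
      result.push([node]); // an oversized module forms a group by itself
    } else {
      initialNodes.push(node);
    }
  }
  return { result, initialNodes };
};

const nodes = [
  { key: "big_js", size: { javascript: 200 } },
  { key: "small_js", size: { javascript: 20 } }
];
const parts = partition(nodes, { javascript: 10 }, { javascript: 100 });
console.log(parts.result.length, parts.initialNodes.map(n => n.key));
```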

Next, initialNodes is processed. Each initialNodes[i] on its own is within maxSize, but several of them combined may not be, so a few cases have to be distinguished.

if (initialNodes.length > 0) {

const initialGroup = new Group(initialNodes, getSimilarities(initialNodes));

if (initialGroup.nodes.length > 0) {

const queue = [initialGroup];

while (queue.length) {

const group = queue.pop();

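// Step 1: check whether the whole group is still within maxSize

// Step 2: call removeProblematicNodes() and, if it changed something, process the group again

// Step 3: grow a left and a right area until leftSize > minSize && rightSize > minSize

// Step 3-1: check whether the two areas overlap, i.e. left - 1 > right

// Step 3-2: check whether nodes between left and right are still outside both areas, i.e. there is a gap in the middle

// Step 4: create a separate Group for the left and the right area and push both onto the queue

}

}

// Step 5: assign keys and return the final data structure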

}

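Step 1: check whether any type exceeds maxSize

If no source type in the group has a size above its maxSize, there is no need to split, and the group is pushed straight into result.

Here group.size is an object that holds, for each source type, the summed size of all nodes in the group.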

if (initialNodes.length > 0) {

const initialGroup = new Group(initialNodes, getSimilarities(initialNodes));

if (initialGroup.nodes.length > 0) {

const queue = [initialGroup];

while (queue.length) {

const group = queue.pop();

// Step 1: check whether any type exceeds maxSize

if (!isTooBig(group.size, maxSize)) {

result.push(group);

continue;

}

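// Step 2: removeProblematicNodes() checks whether any type is below minSize

// Step 3: grow a left and a right area until leftSize > minSize && rightSize > minSize

// Step 3-1: check whether the two areas overlap, i.e. left - 1 > right

// Step 3-2: check whether nodes between left and right are still outside both areas, i.e. there is a gap in the middle

// Step 4: create a separate Group for the left and the right area and push both onto the queue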

}

}

}

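Step 2: removeProblematicNodes() checks whether any type is below minSize

If some type is below minSize, the nodes in the group that contain that type are pulled out and merged into another Group, and the remaining group is processed again.

This method is used frequently and shows up again in later steps, so it is worth analysing closely, as done below.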

if (initialNodes.length > 0) {

const initialGroup = new Group(initialNodes, getSimilarities(initialNodes));

if (initialGroup.nodes.length > 0) {

const queue = [initialGroup];

while (queue.length) {

const group = queue.pop();

// Step 1: check whether any type exceeds maxSize

if (!isTooBig(group.size, maxSize)) {

result.push(group);

continue;

}

// Step 2: removeProblematicNodes() checks whether any type is below minSize

if (removeProblematicNodes(group)) {

// This changed something, so we try this group again

queue.push(group);

continue;

}

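// Step 3: grow a left and a right area until leftSize > minSize && rightSize > minSize

// Step 3-1: check whether the two areas overlap, i.e. left - 1 > right

// Step 3-2: check whether nodes between left and right are still outside both areas, i.e. there is a gap in the middle

// Step 4: create a separate Group for the left and the right area and push both onto the queue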

}

}

}

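getTooSmallTypes() takes a size object such as {javascript: 125} and a minSize object such as {javascript: 61, unknown: 61}, and returns the Set of types whose non-zero size is below the corresponding minSize. With these particular values the Set is empty because 125 is not below 61; a size of {javascript: 40} would instead yield a Set containing "javascript".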

const removeProblematicNodes = (group, consideredSize = group.size) => {

const problemTypes = getTooSmallTypes(consideredSize, minSize);

if (problemTypes.size > 0) {

//...

return true;

}

else return false;

};

const getTooSmallTypes = (size, minSize) => {

const types = new Set();

for (const key of Object.keys(size)) {

const s = size[key];

if (s === 0) continue;

const minSizeValue = minSize[key];

if (typeof minSizeValue === "number") {

if (s < minSizeValue) types.add(key);

}

}

return types;

};
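A quick way to check the behaviour of getTooSmallTypes() is to call it with a few hand-written size objects. The function body is the one quoted above; the input values are made up.

```javascript
const getTooSmallTypes = (size, minSize) => {
  const types = new Set();
  for (const key of Object.keys(size)) {
    const s = size[key];
    if (s === 0) continue; // a type with size 0 is ignored entirely
    const minSizeValue = minSize[key];
    if (typeof minSizeValue === "number") {
      if (s < minSizeValue) types.add(key);
    }
  }
  return types;
};

const minSize = { javascript: 61, unknown: 61 };

// 125 is not below 61, so nothing is reported.
const none = getTooSmallTypes({ javascript: 125 }, minSize);

// 40 is below 61; the zero-sized "unknown" type is skipped.
const some = getTooSmallTypes({ javascript: 40, unknown: 0 }, minSize);

// A type without a configured minimum is never reported.
const noLimit = getTooSmallTypes({ css: 1 }, minSize);

console.log([...none], [...some], [...noLimit]);
```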

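getTooSmallTypes() gives us problemTypes, the set of types in the current group that do not reach minSize.

getNumberOfMatchingSizeTypes() takes a node.size and problemTypes and counts how many problem types the node has a non-zero size for; a node with a count above 0 is a problem node.

group.popNodes() combined with getNumberOfMatchingSizeTypes() extracts the problem nodes problemNodes, and result.filter() combined with getNumberOfMatchingSizeTypes() yields possibleResultGroups: the groups already accepted into result (as satisfying minSize and maxSize) that also have a non-zero size for a problem type.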

const removeProblematicNodes = (group, consideredSize = group.size) => {

const problemTypes = getTooSmallTypes(consideredSize, minSize);

if (problemTypes.size > 0) {

const problemNodes = group.popNodes(

n => getNumberOfMatchingSizeTypes(n.size, problemTypes) > 0

);

if (problemNodes === undefined) return false;

// Only merge it with result nodes that have the problematic size type

const possibleResultGroups = result.filter(

n => getNumberOfMatchingSizeTypes(n.size, problemTypes) > 0

);

}

else return false;

}

const getNumberOfMatchingSizeTypes = (size, types) => {

let i = 0;

for (const key of Object.keys(size)) {

if (size[key] !== 0 && types.has(key)) i++;

}

return i;

};

// for (const node of nodes) {

// if (isTooBig(node.size, maxSize) && !isTooSmall(node.size, minSize)) {

// result.push(new Group([node], []));

// } else {

// initialNodes.push(node);

// }

// }

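Why collect possibleResultGroups, the groups that already satisfy minSize and maxSize? As the code below shows, that collection is filtered further to pick bestGroup, the group that matches the most problemTypes; ties are broken in favour of the group whose summed size for those types is smaller.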

const removeProblematicNodes = (group, consideredSize = group.size) => {

const problemTypes = getTooSmallTypes(consideredSize, minSize);

if (problemTypes.size > 0) {

const problemNodes = group.popNodes(

n => getNumberOfMatchingSizeTypes(n.size, problemTypes) > 0

);

if (problemNodes === undefined) return false;

const possibleResultGroups = result.filter(

n => getNumberOfMatchingSizeTypes(n.size, problemTypes) > 0

);

if (possibleResultGroups.length > 0) {

const bestGroup = possibleResultGroups.reduce((min, group) => {

const minMatches = getNumberOfMatchingSizeTypes(min, problemTypes);

const groupMatches = getNumberOfMatchingSizeTypes(

group,

problemTypes

);

if (minMatches !== groupMatches)

return minMatches < groupMatches ? group : min;

if (

selectiveSizeSum(min.size, problemTypes) >

selectiveSizeSum(group.size, problemTypes)

)

return group;

return min;

});

for (const node of problemNodes) bestGroup.nodes.push(node);

bestGroup.nodes.sort((a, b) => {

if (a.key < b.key) return -1;

if (a.key > b.key) return 1;

return 0;

});

} else {

//...

}

return true;

}

else return false;

}

// A method of Group

popNodes(filter) {

const newNodes = [];

const newSimilarities = [];

const resultNodes = [];

let lastNode;

for (let i = 0; i < this.nodes.length; i++) {

const node = this.nodes[i];

if (filter(node)) {

resultNodes.push(node);

} else {

if (newNodes.length > 0) {

newSimilarities.push(

lastNode === this.nodes[i - 1]

? this.similarities[i - 1]

: similarity(lastNode.key, node.key)

);

}

newNodes.push(node);

lastNode = node;

}

}

if (resultNodes.length === this.nodes.length) return undefined;

this.nodes = newNodes;

this.similarities = newSimilarities;

this.size = sumSize(newNodes);

return resultNodes;

}
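The effect of popNodes() is easiest to see on a tiny group. In the sketch below the Group class is reduced to what popNodes() needs; sumSize() is an assumed helper that adds up sizes per type, and the three nodes are made up.

```javascript
const similarity = (a, b) => {
  const l = Math.min(a.length, b.length);
  let dist = 0;
  for (let i = 0; i < l; i++) {
    dist += Math.max(0, 10 - Math.abs(a.charCodeAt(i) - b.charCodeAt(i)));
  }
  return dist;
};

// Assumed helper: per-type sum over all nodes.
const sumSize = nodes => {
  const sum = Object.create(null);
  for (const node of nodes) {
    for (const key of Object.keys(node.size)) {
      sum[key] = (sum[key] || 0) + node.size[key];
    }
  }
  return sum;
};

class Group {
  constructor(nodes, similarities) {
    this.nodes = nodes;
    this.similarities = similarities;
    this.size = sumSize(nodes);
  }
  popNodes(filter) {
    const newNodes = [];
    const newSimilarities = [];
    const resultNodes = [];
    let lastNode;
    for (let i = 0; i < this.nodes.length; i++) {
      const node = this.nodes[i];
      if (filter(node)) {
        resultNodes.push(node);
      } else {
        if (newNodes.length > 0) {
          // Reuse the stored value for neighbours, recompute across a gap.
          newSimilarities.push(
            lastNode === this.nodes[i - 1]
              ? this.similarities[i - 1]
              : similarity(lastNode.key, node.key)
          );
        }
        newNodes.push(node);
        lastNode = node;
      }
    }
    if (resultNodes.length === this.nodes.length) return undefined;
    this.nodes = newNodes;
    this.similarities = newSimilarities;
    this.size = sumSize(newNodes);
    return resultNodes;
  }
}

const group = new Group(
  [
    { key: "a", size: { javascript: 10 } },
    { key: "b", size: { css: 5 } },
    { key: "c", size: { javascript: 20 } }
  ],
  [9, 9]
);
const popped = group.popNodes(n => n.size.css !== undefined);
const everything = group.popNodes(() => true); // would empty the group
console.log(popped, group.nodes, group.similarities, group.size, everything);
```

Popping the CSS node leaves "a" and "c" as new neighbours, so their similarity is recomputed; asking for every node returns undefined and leaves the group unchanged, which is the case where removeProblematicNodes() returns false.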

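These nodes, which fail minSize, are then merged into a group that already satisfies minSize and maxSize. The key statement is the one below. Why do this?

for (const node of problemNodes) bestGroup.nodes.push(node);

The reason is that these nodes cannot satisfy minSize by themselves, that is, taken together they are too small. Merging them into the group most likely to accommodate them lets them reach the minSize they need.

It is also possible that no such group exists, in which case the only option is to create a new Group() for them.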

if (possibleResultGroups.length > 0) {

//...

} else {

// There are no other nodes with the same size types

// We create a new group and have to accept that it's smaller than minSize

result.push(new Group(problemNodes, null));

}

return true;

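Summary

removeProblematicNodes() takes group and consideredSize. consideredSize is an object that is compared with the minSize object to obtain problemTypes. The function then tries to extract from group the nodes that have those problem types, and either merges them into a group already in result or puts them into a new Group().

If the extracted nodes make up the whole group, it returns false without merging or creating anything.

If no type in group is below minSize, it returns false without merging or creating anything.

If no group in result can take the nodes of the problem types, they are placed in a new Group().

Open question

- Can bestGroup exceed maxSize after bestGroup.nodes.push(node)?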

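Step 3: grow a left and a right area until leftSize > minSize && rightSize > minSize

Step 1 checks whether the group as a whole is within maxSize and therefore needs no splitting. Step 2 checks whether some types fail minSize; if so, the affected nodes are merged into another group or placed in a new Group(), and the remaining group is pushed back onto queue to be processed again.

After steps 1 and 2, step 3 builds up a left and a right area by accumulating nodes from each end, requiring each area to be at least minSize.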

// left v v right

// [ O O O ] O O O [ O O O ]

// ^^^^^^^^^ leftSize

// rightSize ^^^^^^^^^

// leftSize > minSize

// rightSize > minSize

// r l

// Perfect split: [ O O O ] [ O O O ]

// right === left - 1

let left = 1;

let leftSize = Object.create(null);

addSizeTo(leftSize, group.nodes[0].size);

while (left < group.nodes.length && isTooSmall(leftSize, minSize)) {

addSizeTo(leftSize, group.nodes[left].size);

left++;

}

let right = group.nodes.length - 2;

let rightSize = Object.create(null);

addSizeTo(rightSize, group.nodes[group.nodes.length - 1].size);

while (right >= 0 && isTooSmall(rightSize, minSize)) {

addSizeTo(rightSize, group.nodes[right].size);

right--;

}
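The two scans above can be replayed with plain numbers. This sketch makes a simplifying assumption: every node has a single numeric size instead of a per-type size object, so isTooSmall() becomes a simple comparison. The scan helper and the sample sizes are made up; the result is classified as a perfect split, an overlap, or a gap.

```javascript
// Simplified scan: sizes is an array of numbers, minSize a single number.
const scan = (sizes, minSize) => {
  // Grow the left area from the first node until it reaches minSize.
  let left = 1;
  let leftSize = sizes[0];
  while (left < sizes.length && leftSize < minSize) {
    leftSize += sizes[left];
    left++;
  }
  // Grow the right area from the last node until it reaches minSize.
  let right = sizes.length - 2;
  let rightSize = sizes[sizes.length - 1];
  while (right >= 0 && rightSize < minSize) {
    rightSize += sizes[right];
    right--;
  }
  let kind;
  if (right === left - 1) kind = "perfect";
  else if (right < left - 1) kind = "overlap";
  else kind = "gap";
  return { left, right, leftSize, rightSize, kind };
};

const perfect = scan([30, 30, 30, 30], 50); // two nodes on each side
const overlap = scan([30, 30, 30], 50); // the middle node is needed twice
const gap = scan([60, 10, 10, 10, 60], 50); // three nodes left in the middle

console.log(perfect, overlap, gap);
```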

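After the two scans there are three possible outcomes:

- right === left - 1: a perfect split, nothing more to do

- right < left - 1: the two areas overlap

- right > left - 1: there are unused nodes between the two areas

right < left - 1

One side is chosen by comparing the positions of left and right, and the size of the node that side added last is subtracted from it. The resulting prevSize is guaranteed to fail minSize, because the loops above kept calling addSizeTo only until leftArea and rightArea each reached minSize.

removeProblematicNodes() is called with the current group and prevSize. Comparing prevSize with minSize yields problemTypes, and those types select a subset of nodes (part of group, or undefined).

If the nodes selected by problemTypes are the whole group, the subset cannot be merged into another chunk, and removeProblematicNodes() returns false.

If the subset is only part of group, it is merged into the best-fitting group among those already in result (the one matching the most problemTypes), and the rest of group, which satisfies minSize, is pushed onto queue to be processed again.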

if (left - 1 > right) {

let prevSize;

// more nodes remain to the right of left than to the left of right

// a b c d e f g

// r l

if (right < group.nodes.length - left) {

subtractSizeFrom(rightSize, group.nodes[right + 1].size);

prevSize = rightSize;

} else {

subtractSizeFrom(leftSize, group.nodes[left - 1].size);

prevSize = leftSize;

}

if (removeProblematicNodes(group, prevSize)) {

queue.push(group);

continue;

}

// can't split group while holding minSize

// because minSize is preferred of maxSize we return

// the problematic nodes as result here even while it's too big

// To avoid this make sure maxSize > minSize * 3

result.push(group);

continue;

}

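From step 1 we know that group has at least one type above maxSize. From step 2, removeProblematicNodes() either could not remove the nodes of the types below minSize, or there were no such types.

left - 1 > right indicates that the undersized data could not be separated out in step 2, so step 3 tries once more. If that also fails, then, because minSize takes priority over maxSize, maxSize is ignored and a chunk is created for the current group as is: even though group has a type above maxSize, forcing a split into leftArea and rightArea could not satisfy minSize.

A concrete example

In the example below, the chunk app1 exceeds maxSize=124 but satisfies minSize. Forcing it into two chunks would satisfy maxSize but violate minSize. Since minSize has priority over maxSize, maxSize is given up in favour of minSize.

This is only one of the more common situations and there are certainly others. The author did not dig further into this part of the code; please refer to other articles for a deeper look at how step 3 handles left - 1 > right.

right > left - 1

There are unused nodes between the two areas. similarity is used to find the best split point: the group is cut into a left and a right half at the position with the smallest similarity.

pos starts at left and is incremented up to right + 1, while rightSize starts as the total size of the nodes in [pos, nodes.length - 1].

As pos advances, leftSize keeps growing and rightSize keeps shrinking, and each candidate is checked against minSize. Among the valid candidates, group.similarities gives the smallest value, that is, the pair of adjacent nodes that are least similar (whose file paths differ the most), and the cut is made there.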

if (left <= right) {

let best = -1;

let bestSimilarity = Infinity;

let pos = left;

let rightSize = sumSize(group.nodes.slice(pos));

while (pos <= right + 1) {

const similarity = group.similarities[pos - 1];

if (

similarity < bestSimilarity &&

!isTooSmall(leftSize, minSize) &&

!isTooSmall(rightSize, minSize)

) {

best = pos;

bestSimilarity = similarity;

}

addSizeTo(leftSize, group.nodes[pos].size);

subtractSizeFrom(rightSize, group.nodes[pos].size);

pos++;

}

if (best < 0) {

result.push(group);

continue;

}

left = best;

right = best - 1;

}

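So what does group.similarities[pos - 1] mean?

similarity() compares the keys of two adjacent nodes character by character. For example:

- for two characters that are fairly close, ca - cb = 5 and 10 - Math.abs(ca - cb) = 5

- for two characters that are further apart, ca - cb = 6 and 10 - Math.abs(ca - cb) = 4

- for two characters that are very far apart, 10 - Math.abs(ca - cb) < 0, so Math.max(0, 10 - Math.abs(ca - cb)) = 0

Therefore, the closer the node.key values of two adjacent nodes are, the larger similarities[x] is.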

const initialGroup = new Group(initialNodes, getSimilarities(initialNodes))

const getSimilarities = nodes => {

// calculate similarities between lexically adjacent nodes

/** @type {number[]} */

const similarities = [];

let last = undefined;

for (const node of nodes) {

if (last !== undefined) {

similarities.push(similarity(last.key, node.key));

}

last = node;

}

return similarities;

};

const similarity = (a, b) => {

const l = Math.min(a.length, b.length);

let dist = 0;

for (let i = 0; i < l; i++) {

const ca = a.charCodeAt(i);

const cb = b.charCodeAt(i);

dist += Math.max(0, 10 - Math.abs(ca - cb));

}

return dist;

};
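A few direct calls make the scoring rule tangible. The similarity() body is the one quoted above; the sample keys are made up to resemble path-like node keys.

```javascript
const similarity = (a, b) => {
  const l = Math.min(a.length, b.length);
  let dist = 0;
  for (let i = 0; i < l; i++) {
    const ca = a.charCodeAt(i);
    const cb = b.charCodeAt(i);
    dist += Math.max(0, 10 - Math.abs(ca - cb));
  }
  return dist;
};

// Identical characters score 10 each.
const identical = similarity("aaa", "aaa");

// Same folder, neighbouring file names: only the last character differs by 1.
const sameFolder = similarity("src_a_x", "src_a_y");

// Different folder: "a" and "z" are 25 code points apart and score 0.
const otherFolder = similarity("src_a_y", "src_z_x");

// Only the common prefix length is compared.
const prefixOnly = similarity("ab", "abzzzz");

console.log(identical, sameFolder, otherFolder, prefixOnly);
```

The lower score across the folder boundary is what makes that position the preferred cut.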

The nodes were also sorted by node.key at the very beginning.

const initialNodes = [];

// lexically ordering of keys

nodes.sort((a, b) => {

if (a.key < b.key) return -1;

if (a.key > b.key) return 1;

return 0;

});

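So using similarity to find the best split point, cutting at the smallest similarity, amounts to finding the index at which two adjacent node keys differ the most.

A concrete example

How is node.key generated?

According to the getKey() code below, the relative path is obtained first, name="./src/entry1.js", and hashFilename() produces the corresponding hash. The two are joined into fullKey="./src/entry1.js-6a89fa05", which requestToId() then converts into key="src_entry1_js-6a89fa05".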

// node_modules/webpack/lib/optimize/SplitChunksPlugin.js

const results = deterministicGroupingForModules({

//...

getKey(module) {

const cache = getKeyCache.get(module);

if (cache !== undefined) return cache;

const ident = cachedMakePathsRelative(module.identifier());

const nameForCondition =

module.nameForCondition && module.nameForCondition();

const name = nameForCondition

? cachedMakePathsRelative(nameForCondition)

: ident.replace(/^.*!|\?[^?!]*$/g, "");

const fullKey =

name +

automaticNameDelimiter +

hashFilename(ident, outputOptions);

const key = requestToId(fullKey);

getKeyCache.set(module, key);

return key;

}

}
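The conversion from fullKey to key can be reproduced with a small sketch. The two regular expressions in requestToId() are an assumption modeled on webpack's id helper (strip leading "./" or "../" segments, then replace runs of disallowed characters with "_"); buildKey is a hypothetical wrapper, and the hash is simply the value from the example above rather than the output of hashFilename().

```javascript
// Assumed implementation, not copied from a verified source.
const requestToId = request =>
  request
    .replace(/^(\.\.?\/)+/, "")
    .replace(/(^[.-]|[^a-zA-Z0-9_-])+/g, "_");

// Hypothetical wrapper: name + delimiter + hash, then normalised.
const buildKey = (name, automaticNameDelimiter, hash) =>
  requestToId(name + automaticNameDelimiter + hash);

const key = buildKey("./src/entry1.js", "-", "6a89fa05");
console.log(key);
```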

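In essence, is this finding the point where the file paths differ the most? Say five modules live in folder a and three in folder b: would the cut fall exactly there, with folder a becoming leftArea and folder b becoming rightArea?

Strings are compared character by character from the first position onward; the first position where they differ decides the result, and if one string is a prefix of the other, the longer one is greater. For example:

- "Z" > "A"

- "ABC" > "ABA"

- "ABC" < "AC"

- "ABC" > "AB"

The example below mocks a series of nodes, sets left and right by hand, and drops the leftSize and rightSize checks.

size only serves to check whether the current split satisfies minSize. The example is meant to simulate the logic of finding the best split point with similarity and cutting at the smallest value, so size is ignored for now.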

class Group {

constructor(nodes, similarities, size) {

this.nodes = nodes;

this.similarities = similarities;

this.key = undefined;

}

}

const getSimilarities = nodes => {

const similarities = [];

let last = undefined;

for (const node of nodes) {

if (last !== undefined) {

similarities.push(similarity(last.key, node.key));

}

last = node;

}

return similarities;

};

const similarity = (a, b) => {

const l = Math.min(a.length, b.length);

let dist = 0;

for (let i = 0; i < l; i++) {

const ca = a.charCodeAt(i);

const cb = b.charCodeAt(i);

dist += Math.max(0, 10 - Math.abs(ca - cb));

}

return dist;

};

function test() {

const initialNodes = [

{

key: "src2_entry1_js-6a89fa02"

},

{

key: "src3_entry2_js-3a33ff02"

},

{

key: "src2_entry3_js-6aaafa01"

},

{

key: "src1_entry0_js-ea33aa12"

},

{

key: "src1_entry1_js-6a89fa02"

},

{

key: "src1_entry2_js-ea33aa13"

},

{

key: "src1_entry3_js-ea33aa14"

}

];

initialNodes.sort((a, b) => {

if (a.key < b.key) return -1;

if (a.key > b.key) return 1;

return 0;

});

const initialGroup = new Group(initialNodes, getSimilarities(initialNodes));

console.info(initialGroup);

let left = 1;

let right = 4;

if (left <= right) {

let best = -1;

let bestSimilarity = Infinity;

let pos = left;

while (pos <= right + 1) {

const similarity = initialGroup.similarities[pos - 1];

if (

similarity < bestSimilarity

) {

best = pos;

bestSimilarity = similarity;

}

pos++;

}

left = best;

right = best - 1;

}

console.warn("left", left);

console.warn("right", right);

}

test();

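The result of the run is shown below. The similarities between files in different folders are the smallest, so the nodes are divided into a left and a right area along the folder boundary.

Although the split follows the folder boundary here, that does not prove it always does. The author has not studied in depth why splitting is based on similarities; please consult other articles. This example only reflects a personal understanding of the right > left - 1 flow.

Step 4: create a separate Group for the left and the right area and push both onto the queue

Using the left and right determined in the previous steps, a new Group() is created for leftArea and for rightArea, and both are pushed onto queue so that each can be checked again for further splitting.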

const rightNodes = [group.nodes[right + 1]];

/** @type {number[]} */

const rightSimilarities = [];

for (let i = right + 2; i < group.nodes.length; i++) {

rightSimilarities.push(group.similarities[i - 1]);

rightNodes.push(group.nodes[i]);

}

queue.push(new Group(rightNodes, rightSimilarities));

const leftNodes = [group.nodes[0]];

/** @type {number[]} */

const leftSimilarities = [];

for (let i = 1; i < left; i++) {

leftSimilarities.push(group.similarities[i - 1]);

leftNodes.push(group.nodes[i]);

}

queue.push(new Group(leftNodes, leftSimilarities));
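The index arithmetic of step 4 can be checked with plain arrays. In this sketch splitGroup is a hypothetical helper that uses slice() instead of the explicit loops above; it is intended to produce the same nodes and similarities, and the sample data is made up.

```javascript
// Hypothetical helper: split a group at the boundary left / right = left - 1.
const splitGroup = (group, left, right) => ({
  left: {
    nodes: group.nodes.slice(0, left),
    // similarities[i] links nodes[i] and nodes[i + 1]
    similarities: group.similarities.slice(0, left - 1)
  },
  right: {
    nodes: group.nodes.slice(right + 1),
    similarities: group.similarities.slice(right + 1)
  }
});

const group = {
  nodes: ["a", "b", "c", "d", "e"],
  similarities: [69, 59, 69, 68] // a-b, b-c, c-d, d-e
};

// Cut between "b" and "c", where the similarity (59) is lowest.
const parts = splitGroup(group, 2, 1);
console.log(parts.left, parts.right);
```

The similarity that spans the cut (59 here) belongs to neither half, which is why each side ends up with one fewer similarity than it has nodes.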

Step 5: assign keys and return the final data structure

result.sort((a, b) => {

if (a.nodes[0].key < b.nodes[0].key) return -1;

if (a.nodes[0].key > b.nodes[0].key) return 1;

return 0;

});

// give every group a name

const usedNames = new Set();

for (let i = 0; i < result.length; i++) {

const group = result[i];

if (group.nodes.length === 1) {

group.key = group.nodes[0].key;

} else {

const first = group.nodes[0];

const last = group.nodes[group.nodes.length - 1];

const name = getName(first.key, last.key, usedNames);

group.key = name;

}

}

// return the results

return result.map(group => {

return {

key: group.key,

items: group.nodes.map(node => node.item),

size: group.size

};

});

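2.6 A concrete example

2.6.1 Forming the new chunk test3

In the example above, chunksInfoMap initially looks as shown below. compareEntries() selects the cacheGroup entry bestEntry=test3. After a series of option checks, every other chunksInfoMap[j] is checked to see whether its info.chunks contains chunks of the current highest-priority chunksInfoMap[i].

bestEntry=test3 owns the chunks app1, app2, app3 and app4, which covers every entry chunk. Every chunksInfoMap[j] therefore has its info.modules compared with item.modules, and the modules taken by test3 are deleted from it.

After the highest-priority cacheGroup test3 has been processed, the modules that satisfy minChunks=3 (common___g, js-cookie and voca) have been placed in newChunk and removed from the other cacheGroups.

The following code then runs and deletes the emptied keys from chunksInfoMap.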

if (info.modules.size === 0) {

chunksInfoMap.delete(key);

continue;

}

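In the end only 5 keys remain in chunksInfoMap, as shown below.

2.6.2 Forming the new chunk test2

With chunk test3 split off, the next loop iteration begins, and compareEntries() selects the cacheGroup entry for bestEntry=test2.

After passing through

- isExistingChunk

- maxInitialRequests and maxAsyncRequests

processing reaches the chunkGraph.isModuleInChunk stage.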

outer: for (const chunk of usedChunks) {

for (const module of item.modules) {

if (chunkGraph.isModuleInChunk(module, chunk)) continue outer;

}

usedChunks.delete(chunk);

}
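The labeled loop above can be replayed on mock data that mirrors this example. The chunkModules map and the isModuleInChunk helper are toy stand-ins for chunkGraph, and the entry module names are invented.

```javascript
// Toy stand-in for chunkGraph: which modules each chunk still contains.
const chunkModules = new Map([
  ["app1", new Set(["lodash", "entry1"])],
  ["app2", new Set(["lodash", "entry2"])],
  ["app3", new Set(["entry3"])],
  ["app4", new Set(["entry4"])]
]);
const isModuleInChunk = (module, chunk) => chunkModules.get(chunk).has(module);

// State of the test2 item after test3 has taken the shared modules.
const itemModules = new Set(["lodash"]);
const usedChunks = new Set(["app1", "app2", "app3", "app4"]);

// Keep a chunk only if it still contains at least one module of the item.
outer: for (const chunk of usedChunks) {
  for (const module of itemModules) {
    if (isModuleInChunk(module, chunk)) continue outer;
  }
  usedChunks.delete(chunk);
}

console.log([...usedChunks]);
```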

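As the figure below shows, in bestEntry=test2 the only module left is lodash, yet chunks still contains app1, app2, app3 and app4.

As the figure below shows, lodash belongs only to app1 and app2, so the code block above triggers usedChunks.delete(chunk) for app3 and app4.

Why did cacheGroup=test2 contain app3 and app4 in the first place?

Because in the modules phase (iterating compilation.modules and building chunksInfoMap from the cacheGroups), every module is visited and checked against every cacheGroup, and any module that satisfies cacheGroup.minChunks=2 is added to cacheGroup=test2.

Why does cacheGroup=test2 no longer match app3 and app4?

Because cacheGroup=test3 has a higher priority than cacheGroup=test2 and has already moved the modules common_g, js-cookie and voca into chunk=test3. That leaves cacheGroup=test2 with only the module lodash, which is needed only by the chunks app1 and app2, so app3 and app4 no longer serve any purpose and must be removed.

After the chunkGraph.isModuleInChunk stage comes the usedChunks.size stage. Two elements were just removed from usedChunks, so usedChunks.size < item.chunks.size holds, and the current bestEntry is added back into chunksInfoMap to be processed again.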

// Were some (invalid) chunks removed from usedChunks?

// => readd all modules to the queue, as things could have been changed

if (usedChunks.size < item.chunks.size) {

if (isExistingChunk) usedChunks.add(newChunk);

if (usedChunks.size >= item.cacheGroup.minChunks) {

const chunksArr = Array.from(usedChunks);

for (const module of item.modules) {

addModuleToChunksInfoMap(

item.cacheGroup,

item.cacheGroupIndex,

chunksArr,

getKey(usedChunks),

module

);

}

}

continue;

}

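Once re-added, chunksInfoMap looks as shown below: test2 is left with a single module and its two chunks.

The processing logic for the new chunk test2 is then triggered again.

2.6.3 Forming the new chunk test2, second pass

The whole flow runs again:

- isExistingChunk

- maxInitialRequests and maxAsyncRequests

- chunkGraph.isModuleInChunk

- the usedChunks.size < item.chunks.size condition is not met

- the minRemainingSize check passes

This finally triggers the logic that creates newChunk and calls chunk.split(newChunk).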

// Create the new chunk if not reusing one

if (newChunk === undefined) {

newChunk = compilation.addChunk(chunkName);

}

// Walk through all chunks

for (const chunk of usedChunks) {

// Add graph connections for splitted chunk

chunk.split(newChunk);

}

Next, the modules are removed from info.modules of the other chunksInfoMap items.

const isOverlap = (a, b) => {

for (const item of a) {

if (b.has(item)) return true;

}

return false;

};

// remove all modules from other entries and update size

for (const [key, info] of chunksInfoMap) {

if (isOverlap(info.chunks, usedChunks)) {

// update modules and total size

// may remove it from the map when < minSize

let updated = false;

for (const module of item.modules) {

if (info.modules.has(module)) {

// remove module

info.modules.delete(module);

// update size

for (const key of module.getSourceTypes()) {

info.sizes[key] -= module.size(key);

}

updated = true;

}

}

if (updated) {

if (info.modules.size === 0) {

chunksInfoMap.delete(key);

continue;

}

if (

removeMinSizeViolatingModules(info) ||

!checkMinSizeReduction(

info.sizes,

info.cacheGroup.minSizeReduction,

info.chunks.size

)

) {

chunksInfoMap.delete(key);

continue;

}

}

}

}

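As the figure below shows, the chunks involved are app1 and app2, so every other chunksInfoMap item ends up deleted. This concludes the queue phase (iterating chunksInfoMap and reorganizing chunks according to the rules), which has produced two new chunks: test3 and test2.

After the queue phase comes the maxSize phase.

2.6.4 Checking whether maxSize is configured and whether chunks need to be split

See the concrete example in the maxSize phase above; it is not repeated here.

3. codeGeneration: module transpilation

For reasons of length, the detailed analysis is left to the next article, 「Webpack5源码」seal阶段分析(三)-生成代码&runtime.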


如果usedChunks删除了一些chunk,那么重新使用addModuleToChunksInfoMap()建立新的元素到chunksInfoMap,即去除不符合条件的chunk之后的重新加入chunksInfoMap形成新的cacheGroup组

一开始我们遍历chunksInfoMap�时,会删除目前处理的bestEntry,这个时候处理完毕后重新加入到chunksInfoMap,然后再进入循环进行处理

// Were some (invalid) chunks removed from usedChunks?

// => readd all modules to the queue, as things could have been changed

if (usedChunks.size < item.chunks.size) {

if (isExistingChunk) usedChunks.add(newChunk);

if (usedChunks.size >= item.cacheGroup.minChunks) {

const chunksArr = Array.from(usedChunks);

for (const module of item.modules) {

addModuleToChunksInfoMap(

item.cacheGroup,

item.cacheGroupIndex,

chunksArr,

getKey(usedChunks),

module

);

}

}

continue;

}minRemainingSize

webpack.config.js配置参数splitChunks.minRemainingSize,仅在剩余单个 chunk 时生效

通过确保拆分后剩余的最小chunk体积超过限制来避免大小为零的模块,"development"模式中默认为0

const getViolatingMinSizes = (sizes, minSize) => {

let list;

for (const key of Object.keys(minSize)) {

const size = sizes[key];

if (size === undefined || size === 0) continue;

if (size < minSize[key]) {

if (list === undefined) list = [key];

else list.push(key);

}

}

return list;

};

// Validate minRemainingSize constraint when a single chunk is left over

if (

!enforced &&

item.cacheGroup._validateRemainingSize &&

usedChunks.size === 1

) {

const [chunk] = usedChunks;

let chunkSizes = Object.create(null);

for (const module of chunkGraph.getChunkModulesIterable(chunk)) {

if (!item.modules.has(module)) {

for (const type of module.getSourceTypes()) {

chunkSizes[type] =

(chunkSizes[type] || 0) + module.size(type);

}

}

}

const violatingSizes = getViolatingMinSizes(

chunkSizes,

item.cacheGroup.minRemainingSize

);

if (violatingSizes !== undefined) {

const oldModulesSize = item.modules.size;

removeModulesWithSourceType(item, violatingSizes);

if (

item.modules.size > 0 &&

item.modules.size !== oldModulesSize

) {

// queue this item again to be processed again

// without violating modules

chunksInfoMap.set(bestEntryKey, item);

}

continue;

}

}如上面代码所示,先使用getViolatingMinSizes()得到size太小的类型集合,然后使用removeModulesWithSourceType()删除对应的module(如下面代码所示),同时更新对应的sizes属性,最终将更新完毕的item重新放入到chunksInfoMap

此时的chunksInfoMap对应的bestEntryKey数据已经删除小的modules

const removeModulesWithSourceType = (info, sourceTypes) => {

for (const module of info.modules) {

const types = module.getSourceTypes();

if (sourceTypes.some(type => types.has(type))) {

info.modules.delete(module);

for (const type of types) {

info.sizes[type] -= module.size(type);

}

}

}

};创建newChunk以及chunk.split(newChunk)

如果没有可以复用的Chunk,就使用compilation.addChunk(chunkName)建立一个新的Chunk

// Create the new chunk if not reusing one

if (newChunk === undefined) {

newChunk = compilation.addChunk(chunkName);

}

// Walk through all chunks

for (const chunk of usedChunks) {

// Add graph connections for splitted chunk

chunk.split(newChunk);

}在上面的分析可以知道,isReusedWithAllModules=true代表的是cacheGroup没有名称,遍历所有item.chunks找到可以复用的Chunk,因此这里不用connectChunkAndModule()建立新的联系,只需要将所有的item.modules跟item.chunks解除关联

而当isReusedWithAllModules=false时,需要将所有的item.modules跟item.chunks解除关联,将所有item.modules与newChunk建立联系

比如构建出往app3这个入口Chunk的ChunkGroup插入newChunk,建立它们的依赖关系,在后面生成代码时可以正确生成对应的依赖关系,即app3-Chunk可以加载newChunk,毕竟是从app1、app2、app3、app4这4个Chunk分离出来的newChunk

if (!isReusedWithAllModules) {

// Add all modules to the new chunk

for (const module of item.modules) {

if (!module.chunkCondition(newChunk, compilation)) continue;

// Add module to new chunk

chunkGraph.connectChunkAndModule(newChunk, module);

// Remove module from used chunks

for (const chunk of usedChunks) {

chunkGraph.disconnectChunkAndModule(chunk, module);

}

}

} else {

// Remove all modules from used chunks

for (const module of item.modules) {

for (const chunk of usedChunks) {

chunkGraph.disconnectChunkAndModule(chunk, module);

}

}

}将目前newChunk更新到maxSizeQueueMap,等待后续maxSize阶段处理

if (

Object.keys(item.cacheGroup.maxAsyncSize).length > 0 ||

Object.keys(item.cacheGroup.maxInitialSize).length > 0

) {

const oldMaxSizeSettings = maxSizeQueueMap.get(newChunk);

maxSizeQueueMap.set(newChunk, {

minSize: oldMaxSizeSettings

? combineSizes(

oldMaxSizeSettings.minSize,

item.cacheGroup._minSizeForMaxSize,

Math.max

)

: item.cacheGroup.minSize,

maxAsyncSize: oldMaxSizeSettings

? combineSizes(

oldMaxSizeSettings.maxAsyncSize,

item.cacheGroup.maxAsyncSize,

Math.min

)

: item.cacheGroup.maxAsyncSize,

maxInitialSize: oldMaxSizeSettings

? combineSizes(

oldMaxSizeSettings.maxInitialSize,

item.cacheGroup.maxInitialSize,

Math.min

)

: item.cacheGroup.maxInitialSize,

automaticNameDelimiter: item.cacheGroup.automaticNameDelimiter,

keys: oldMaxSizeSettings

? oldMaxSizeSettings.keys.concat(item.cacheGroup.key)

: [item.cacheGroup.key]

});

}删除其它chunksInfoMap item的info.modules[i]

当前处理的是最高优先级的chunksInfoMap item,处理完毕后,检测其它chunksInfoMap item的info.chunks是否有目前最高优先级的chunksInfoMap item的chunks

有的话使用info.modules和item.modules比较,删除其它chunksInfoMap item的info.modules[i]

删除完成后,检测info.modules.size是否等于0以及checkMinSizeReduction(),然后决定是否要进行cacheGroup的清除工作

checkMinSizeReduction()涉及到cacheGroup.minSizeReduction配置,生成 chunk 所需的主 chunk(bundle)的最小体积(以字节为单位)缩减。这意味着如果分割成一个 chunk 并没有减少主 chunk(bundle)的给定字节数,它将不会被分割,即使它满足splitChunks.minSize

const isOverlap = (a, b) => {

for (const item of a) {

if (b.has(item)) return true;

}

return false;

};

// remove all modules from other entries and update size

for (const [key, info] of chunksInfoMap) {

if (isOverlap(info.chunks, usedChunks)) {

// update modules and total size

// may remove it from the map when < minSize

let updated = false;

for (const module of item.modules) {

if (info.modules.has(module)) {

// remove module

info.modules.delete(module);

// update size

for (const key of module.getSourceTypes()) {

info.sizes[key] -= module.size(key);

}

updated = true;

}

}

if (updated) {

if (info.modules.size === 0) {

chunksInfoMap.delete(key);

continue;

}

if (

removeMinSizeViolatingModules(info) ||

!checkMinSizeReduction(

info.sizes,

info.cacheGroup.minSizeReduction,

info.chunks.size

)

) {

chunksInfoMap.delete(key);

continue;

}

}

}

}

2.5 maxSize stage: re-splitting oversized chunks according to maxSize

compilation.hooks.optimizeChunks.tap(

{

name: "SplitChunksPlugin",

stage: STAGE_ADVANCED

},

chunks => {

logger.time("prepare");

//...

logger.timeEnd("prepare");

logger.time("modules");

for (const module of compilation.modules) {

//...

}

logger.timeEnd("modules");

logger.time("queue");

for (const [key, info] of chunksInfoMap) {

//...

}

while (chunksInfoMap.size > 0) {

//...

}

logger.timeEnd("queue");

logger.time("maxSize");

for (const chunk of Array.from(compilation.chunks)) {

//...

}

logger.timeEnd("maxSize");

}

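);

maxSize takes precedence over maxInitialRequests/maxAsyncRequests. The actual priority is maxInitialRequests/maxAsyncRequests < maxSize < minSize

maxSize tells webpack to try to split chunks larger than maxSize bytes into smaller parts. Each of these parts is at least minSize in size (minSize ranks just above maxSize in priority)

The maxSize option is intended for use with HTTP/2 and long-term caching. It increases the number of requests in exchange for better caching. It can also be used to reduce file size for faster rebuilds

Once the chunks generated from cacheGroups have been added alongside the chunks formed by entries and async imports, maxSize is checked: any chunk that is still too large has to be split again!

For example, the configuration below declares maxSize: 50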

splitChunks: {

minSize: 1,

chunks: 'all',

maxInitialRequests: 10,

maxAsyncRequests: 10,

maxSize: 50,

cacheGroups: {

test3: {

chunks: 'all',

minChunks: 3,

name: "test3",

priority: 3

},

test2: {

chunks: 'all',

minChunks: 2,

name: "test2",

priority: 2

}

}

}

In the final output, where there were originally only app1.js and app2.js, the maxSize limit splits them into three app1-xxx.js files and two app2-xxx.js files

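Questions raised

- How does maxSize split a chunk? Does it split by NormalModule?

- If maxSize is very small, is there a limit on the number of parts?

- How are the split files put back together at runtime? Through runtime code? Or through chunkGroup, with several chunks living in the same chunkGroup?

- How does maxSize handle uneven splits? For example, when a chunk is split into two parts, how is it guaranteed that both parts are larger than minSize and smaller than maxSize?

The source code analysis that follows focuses on these questions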

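As the code below shows, deterministicGroupingForModules is used to split a chunk, producing several groups in results

If results.length <= 1, no split is needed and the chunk is left as it is

If results.length > 1

- Each newPart that is split off has to be inserted into the ChunkGroup that the original chunk belongs to

- Each split-off chunk has to be connected to its modules: chunkGraph.connectChunkAndModule(newPart, module)

- The original large chunk has to be disconnected from the modules that now belong to newPart: chunkGraph.disconnectChunkAndModule(chunk, module)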
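The three steps above can be sketched with plain Maps, Sets and arrays standing in for Chunk, ChunkGroup and ChunkGraph. Every name below is hypothetical, the grouping result is hard-coded, and the original chunk keeps its name here, whereas the real plugin renames it.

```javascript
// Toy stand-ins for webpack's structures; not the real API.
const chunkModules = new Map([
  ["app1", new Set(["a.js", "b.js", "c.js", "d.js"])]
]);
const chunkGroup = ["app1"]; // chunks that belong to the same ChunkGroup

// Pretend result of the grouping step: three groups of modules.
const results = [
  { key: "part-a", items: ["a.js"] },
  { key: "part-b", items: ["b.js", "c.js"] },
  { key: "part-c", items: ["d.js"] }
];

const original = "app1";
if (results.length > 1) {
  results.forEach((group, i) => {
    // The last group stays in the original chunk.
    if (i === results.length - 1) return;
    const newPart = `${original}-${group.key}`;
    chunkGroup.push(newPart); // rough analogue of chunk.split(newPart)
    chunkModules.set(newPart, new Set());
    for (const module of group.items) {
      chunkModules.get(newPart).add(module); // analogue of connectChunkAndModule
      chunkModules.get(original).delete(module); // analogue of disconnectChunkAndModule
    }
  });
}

console.log(chunkGroup, chunkModules);
```

After the loop the ChunkGroup stand-in holds three chunks, which mirrors how the split parts stay together in one group and are loaded together.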

//node_modules/webpack/lib/optimize/SplitChunksPlugin.js

for (const chunk of Array.from(compilation.chunks)) {

//...

const results = deterministicGroupingForModules({ ...});

if (results.length <= 1) {

continue;

}

for (let i = 0; i < results.length; i++) {

const group = results[i];

//...

if (i !== results.length - 1) {

const newPart = compilation.addChunk(name);

chunk.split(newPart);

newPart.chunkReason = chunk.chunkReason;

// Add all modules to the new chunk

for (const module of group.items) {

if (!module.chunkCondition(newPart, compilation)) {

continue;

}

// Add module to new chunk

chunkGraph.connectChunkAndModule(newPart, module);

// Remove module from used chunks

chunkGraph.disconnectChunkAndModule(chunk, module);

}

} else {

// change the chunk to be a part

chunk.name = name;

}

}

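}

2.5.1 deterministicGroupingForModules: the core splitting method

The smallest unit of splitting is a NormalModule. If a single NormalModule is very large, it directly becomes a group of its own, that is, a new chunk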
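The size checks behind this rule can be sketched as follows. The two helpers are simplified illustrations of isTooBig and isTooSmall, assuming sizes are objects keyed by source type. The module names and numbers are made up.

```javascript
// Simplified sketches of the size checks; sizes look like { javascript: 120 }.
const isTooBig = (size, maxSize) => {
  for (const key of Object.keys(size)) {
    const s = size[key];
    if (s === 0) continue;
    const limit = maxSize[key];
    if (typeof limit === "number" && s > limit) return true;
  }
  return false;
};

const isTooSmall = (size, minSize) => {
  for (const key of Object.keys(size)) {
    const s = size[key];
    if (s === 0) continue;
    const limit = minSize[key];
    if (typeof limit === "number" && s < limit) return true;
  }
  return false;
};

const maxSize = { javascript: 50 };
const minSize = { javascript: 1 };
const nodes = [
  { key: "big.js", size: { javascript: 120 } },
  { key: "small-1.js", size: { javascript: 20 } },
  { key: "small-2.js", size: { javascript: 35 } }
];

const result = []; // groups that are already final
const initialNodes = []; // nodes that still need to be grouped
for (const node of nodes) {
  if (isTooBig(node.size, maxSize) && !isTooSmall(node.size, minSize)) {
    result.push([node]); // an oversized module becomes a group on its own
  } else {
    initialNodes.push(node);
  }
}

console.log(result.length, initialNodes.length); // 1 2
```

A module cannot be divided any further, so an oversized one is accepted as a single-node group even though it exceeds maxSize.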

const nodes = Array.from(

items,

item => new Node(item, getKey(item), getSize(item))

);

for (const node of nodes) {

if (isTooBig(node.size, maxSize) && !isTooSmall(node.size, minSize)) {

result.push(new Group([node], []));

} else {

//....

}

}

Otherwise, when a single NormalModule does not exceed maxSize, it is added to initialNodes

for (const node of nodes) {

if (isTooBig(node.size, maxSize) && !isTooSmall(node.size, minSize)) {

result.push(new Group([node], []));

} else {

initialNodes.push(node);

}

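}

Next, initialNodes is processed. Each initialNodes[i] on its own is smaller than maxSize, but several of them combined are not necessarily smaller than maxSize, so several cases have to be handled separately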

if (initialNodes.length > 0) {

const initialGroup = new Group(initialNodes, getSimilarities(initialNodes));

if (initialGroup.nodes.length > 0) {

const queue = [initialGroup];

while (queue.length) {

const group = queue.pop();

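// Step 1: check whether the group as a whole is still within maxSize

// Step 2: call removeProblematicNodes(), then reprocess the group

// Step 3: grow a left part and a right part so that leftSize > minSize && rightSize > minSize

// Step 3-1: check whether the two parts overlap, i.e. left - 1 > right

// Step 3-2: check whether some nodes between left and right are still unassigned, i.e. there is a gap between the two parts

// Step 4: create a separate Group for the left range and the right range, then push both onto queue for reprocessing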

}

}

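// Step 5: assign keys and return the final data structure

}

Step 1: check whether any size type exceeds maxSize

If no size type exceeds maxSize, there is no need to split, and the group is pushed directly into result

Here group.size is the accumulated size of the whole group, covering all of its size types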
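Step 1 can be sketched as follows, assuming a group's size is obtained by summing the per-type sizes of its nodes. sumSize and exceedsMaxSize are illustrative helpers written for this sketch (exceedsMaxSize stands in for the isTooBig check), and the numbers are made up.

```javascript
// Sum the per-type sizes of all nodes in a group.
const sumSize = nodes => {
  const sum = {};
  for (const node of nodes) {
    for (const key of Object.keys(node.size)) {
      sum[key] = (sum[key] || 0) + node.size[key];
    }
  }
  return sum;
};

// Stand-in for the isTooBig check: true if any type exceeds its limit.
const exceedsMaxSize = (size, maxSize) => {
  for (const key of Object.keys(size)) {
    if (typeof maxSize[key] === "number" && size[key] > maxSize[key]) return true;
  }
  return false;
};

const maxSize = { javascript: 50 };
const groupA = [{ size: { javascript: 20 } }, { size: { javascript: 25 } }];
const groupB = [{ size: { javascript: 20, css: 5 } }, { size: { javascript: 35 } }];

const result = []; // finished groups
const queue = []; // groups that still need to be split
for (const nodes of [groupA, groupB]) {
  if (!exceedsMaxSize(sumSize(nodes), maxSize)) {
    result.push(nodes); // fits as a whole, no split needed
  } else {
    queue.push(nodes); // continues with the later steps
  }
}

console.log(result.length, queue.length); // 1 1
```

groupA sums to 45 bytes of javascript and is accepted as it is, while groupB sums to 55 bytes and has to go through the remaining steps.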

if (initialNodes.length > 0) {