【深度学习】yolov7 pytorch模型转onnx,转ncnn模型和mnn模型使用细节

文章目录

- 前言

- 1.前置

-

- 1.1 安装必要的库

- 1.2 .pt 权重转ncnn 和mnn所需要的权重

- 2、编码C++项目

-

- 1.ncnn

- 2.mnn

- 总结

前言

yolov7 pytorch模型转onnx,转ncnn模型和mnn模型使用细节,记录一下

git仓库:

yolov7 https://github.com/WongKinYiu/yolov7

ncnn:https://github.com/Tencent/ncnn

mnn:https://github.com/alibaba/MNN

1.前置

1.1 安装必要的库

安装opencv, 我是编译安装的,编了一个多小时,少不更事啊

sudo apt-get update

sudo apt-get install libopencv-dev

后面会用到opencv库,等会会提到;

编译安装ncnn和mnn

ncnn

cd 到 ncnn的文件夹

cd /home/ubuntu/workplace/ncnn

209 mkdir build

210 cd build/

211 cmake ..

212 make install

213 sudo make install

cmake ,, 它会找到上一级目录的cmakelist进行编译

mnn:

套路是一样的,

但需要改一下,cmakelist文件 第41行,将off 改成on 这是将onnx转成.mnn 所需要的二进制文件。

option(MNN_BUILD_CONVERTER “Build Converter” ON)

cd /home/ubuntu/workplace/mnn

209 mkdir build

210 cd build/

211 cmake ..

212 make install

213 sudo make install

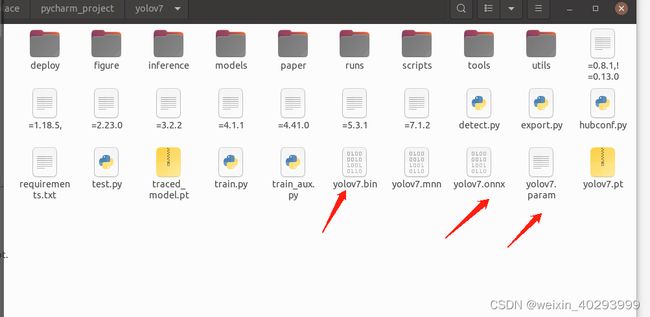

1.2 .pt 权重转ncnn 和mnn所需要的权重

其实2步走:

1, .pt 转 .onnx

cd 到yolov7的目录,转模型到onnx,不要把nms加

cd /home/ubuntu/workplace/pycharm_project/yolov7

python export.py --weights yolov7.pt --simplify --img-size 640

2.1 对ncnn .onnx 转成 .bin 和 .param 经过1已经生成了 所需要的权重

也可以

也可以

ubuntu@ubuntu:~/ncnn/build/install/bin$ ./onnx2ncnn /home/ubuntu/yolov7/yolov7.onnx /home/ubuntu/yolov7/yolov7/yolov7.param /home/ubuntu/yolov7/yolov7.bin

在使用ncnn库加载模型时,通常需要两个文件:.param文件和.bin文件。其中,.param 文件主要用於描述模型的结构和参数信息,而.bin文件则包含了模型中的权重和偏置等信息。这两个文件都是由模型训练过程中产生的。

2.2 对mnn .onnx 转 .mnn

去编译好的mnn文件夹下

./MNNConvert -f ONNX --modelFile /home/ubuntu/workplace/pycharm_project/yolov7/yolov7.onnx --MNNModel /home/ubuntu/workplace/pycharm_project/yolov7/yolov7.mnn --bizCode MNN

就会转出.mnn 的权重

2、编码C++项目

1.ncnn

cmakelist.txt

cmake_minimum_required(VERSION 3.16)

project(untitled22)

set(CMAKE_CXX_FLAGS "-std=c++11")

set(CMAKE_C_FLAGS "${CMAKE_C_FLAGS} -fopenmp ")

set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} -fopenmp")

set(CMAKE_CXX_STANDARD 11)

include_directories(${CMAKE_SOURCE_DIR})

include_directories(${CMAKE_SOURCE_DIR}/include)

include_directories(${CMAKE_SOURCE_DIR}/include/ncnn)

find_package(OpenCV REQUIRED)

#message(STATUS ${OpenCV_INCLUDE_DIRS})

#添加头文件

include_directories(${OpenCV_INCLUDE_DIRS})

#链接Opencv库

add_library(libncnn STATIC IMPORTED)

set_target_properties(libncnn PROPERTIES IMPORTED_LOCATION ${CMAKE_SOURCE_DIR}/lib/libncnn.a)

add_executable(untitled22 main.cpp)

target_link_libraries(untitled22 ${OpenCV_LIBS} libncnn )

// Tencent is pleased to support the open source community by making ncnn available.

//

// Copyright (C) 2020 THL A29 Limited, a Tencent company. All rights reserved.

//

// Licensed under the BSD 3-Clause License (the "License"); you may not use this file except

// in compliance with the License. You may obtain a copy of the License at

//

// https://opensource.org/licenses/BSD-3-Clause

//

// Unless required by applicable law or agreed to in writing, software distributed

// under the License is distributed on an "AS IS" BASIS, WITHOUT WARRANTIES OR

// CONDITIONS OF ANY KIND, either express or implied. See the License for the

// specific language governing permissions and limitations under the License.

#include "layer.h"

#include "net.h"

#if defined(USE_NCNN_SIMPLEOCV)

#include "simpleocv.h"

#else

#include

#include

#include

#endif

#include

#include

#include

#define MAX_STRIDE 32

struct Object

{

cv::Rect_ rect;

int label;

float prob;

};

static inline float intersection_area(const Object& a, const Object& b)

{

cv::Rect_ inter = a.rect & b.rect;

return inter.area();

}

static void qsort_descent_inplace(std::vector 参考源码https://github.com/Tencent/ncnn/tree/master/examples

模型需要改掉后面的param文件这三个红框改成-1,否则会出现乱框

效果图

2.mnn

cmake_minimum_required(VERSION 3.16)

project(untitled22)

set(CMAKE_CXX_FLAGS "-std=c++11")

set(CMAKE_C_FLAGS "${CMAKE_C_FLAGS} -fopenmp ")

set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} -fopenmp")

set(CMAKE_CXX_STANDARD 11)

include_directories(${CMAKE_SOURCE_DIR})

include_directories(${CMAKE_SOURCE_DIR}/include)

include_directories(${CMAKE_SOURCE_DIR}/include/MNN)

find_package(OpenCV REQUIRED)

#message(STATUS ${OpenCV_INCLUDE_DIRS})

#添加头文件

include_directories(${OpenCV_INCLUDE_DIRS})

#链接Opencv库

add_library(libmnn SHARED IMPORTED)

set_target_properties(libmnn PROPERTIES IMPORTED_LOCATION ${CMAKE_SOURCE_DIR}/libMNN.so)

add_executable(untitled22 main.cpp)

target_link_libraries(untitled22 ${OpenCV_LIBS} libmnn )

main.cpp

#include

#include

#include

#include

#include

#include

#include

#include

#include

using namespace std;

using namespace cv;

typedef struct {

int width;

int height;

} YoloSize;

typedef struct {

std::string name;

int stride;

std::vector anchors;

} YoloLayerData;

class BoxInfo

{

public:

int x1,y1,x2,y2,label,id;

float score;

};

static inline float sigmoid(float x)

{

return static_cast(1.f / (1.f + exp(-x)));

}

double GetIOU(cv::Rect_ bb_test, cv::Rect_ bb_gt)

{

float in = (bb_test & bb_gt).area();

float un = bb_test.area() + bb_gt.area() - in;

if (un < DBL_EPSILON)

return 0;

return (double)(in / un);

}

std::vector decode_infer(MNN::Tensor & data, int stride, int net_size, int num_classes,

const std::vector &anchors, float threshold)

{

std::vector result;

int batchs, channels, height, width, pred_item ;

batchs = data.shape()[0];

channels = data.shape()[1];

height = data.shape()[2];

width = data.shape()[3];

pred_item = data.shape()[4];

auto data_ptr = data.host();

for(int bi=0; bi threshold)

{

float cx = (sigmoid(width_ptr[0]) * 2.f - 0.5f + wi) * (float) stride;

float cy = (sigmoid(width_ptr[1]) * 2.f - 0.5f + hi) * (float) stride;

float w = pow(sigmoid(width_ptr[2]) * 2.f, 2) * anchors[ci].width;

float h = pow(sigmoid(width_ptr[3]) * 2.f, 2) * anchors[ci].height;

BoxInfo box;

box.x1 = std::max(0, std::min(net_size, int((cx - w / 2.f) )));

box.y1 = std::max(0, std::min(net_size, int((cy - h / 2.f) )));

box.x2 = std::max(0, std::min(net_size, int((cx + w / 2.f) )));

box.y2 = std::max(0, std::min(net_size, int((cy + h / 2.f) )));

box.score = score;

box.label = cls_id;

result.push_back(box);

}

}

}

}

}

}

return result;

}

void nms(std::vector &input_boxes, float NMS_THRESH) {

std::sort(input_boxes.begin(), input_boxes.end(), [](BoxInfo a, BoxInfo b) { return a.score > b.score; });

std::vector vArea(input_boxes.size());

for (int i = 0; i < int(input_boxes.size()); ++i) {

vArea[i] = (input_boxes.at(i).x2 - input_boxes.at(i).x1 + 1)

* (input_boxes.at(i).y2 - input_boxes.at(i).y1 + 1);

}

for (int i = 0; i < int(input_boxes.size()); ++i) {

for (int j = i + 1; j < int(input_boxes.size());) {

float xx1 = std::max(input_boxes[i].x1, input_boxes[j].x1);

float yy1 = std::max(input_boxes[i].y1, input_boxes[j].y1);

float xx2 = std::min(input_boxes[i].x2, input_boxes[j].x2);

float yy2 = std::min(input_boxes[i].y2, input_boxes[j].y2);

float w = std::max(float(0), xx2 - xx1 + 1);

float h = std::max(float(0), yy2 - yy1 + 1);

float inter = w * h;

float ovr = inter / (vArea[i] + vArea[j] - inter);

if (ovr >= NMS_THRESH) {

input_boxes.erase(input_boxes.begin() + j);

vArea.erase(vArea.begin() + j);

} else {

j++;

}

}

}

}

void scale_coords(std::vector &boxes, int w_from, int h_from, int w_to, int h_to)

{

float w_ratio = float(w_to)/float(w_from);

float h_ratio = float(h_to)/float(h_from);

for(auto &box: boxes)

{

box.x1 *= w_ratio;

box.x2 *= w_ratio;

box.y1 *= h_ratio;

box.y2 *= h_ratio;

}

return ;

}

cv::Mat draw_box(cv::Mat & cv_mat, std::vector &boxes, const std::vector &labels,unsigned char colors[][3])

{

for(auto box : boxes)

{

int width = box.x2-box.x1;

int height = box.y2-box.y1;

cv::Point p = cv::Point(box.x1, box.y1);

cv::Rect rect = cv::Rect(box.x1, box.y1, width, height);

cv::rectangle(cv_mat, rect, cv::Scalar(colors[box.label][0],colors[box.label][1],colors[box.label][2]));

string text = labels[box.label] + ":" + std::to_string(box.score) ;

cv::putText(cv_mat, text, p, cv::FONT_HERSHEY_PLAIN, 1, cv::Scalar(colors[box.label][0],colors[box.label][1],colors[box.label][2]));

}

return cv_mat;

}

int main(int argc, char **argv) {

std::vector labels = {

"person", "bicycle", "car", "motorcycle", "airplane", "bus", "train", "truck", "boat", "traffic light",

"fire hydrant", "stop sign", "parking meter", "bench", "bird", "cat", "dog", "horse", "sheep", "cow",

"elephant", "bear", "zebra", "giraffe", "backpack", "umbrella", "handbag", "tie", "suitcase", "frisbee",

"skis", "snowboard", "sports ball", "kite", "baseball bat", "baseball glove", "skateboard", "surfboard",

"tennis racket", "bottle", "wine glass", "cup", "fork", "knife", "spoon", "bowl", "banana", "apple",

"sandwich", "orange", "broccoli", "carrot", "hot dog", "pizza", "donut", "cake", "chair", "couch",

"potted plant", "bed", "dining table", "toilet", "tv", "laptop", "mouse", "remote", "keyboard", "cell phone",

"microwave", "oven", "toaster", "sink", "refrigerator", "book", "clock", "vase", "scissors", "teddy bear",

"hair drier", "toothbrush"

};

unsigned char colors[][3] = {

{255, 0, 0}

};

cv::Mat bgr = cv::imread("/home/ubuntu/workplace/ncnn/examples/bus.jpg");;// 预处理和源码不太一样,所以影响了后面的

int target_size = 640;

cv::Mat resize_img;

cv::resize(bgr, resize_img, cv::Size(target_size, target_size));

float cls_threshold = 0.25;

// MNN inference

auto mnnNet = std::shared_ptr(

MNN::Interpreter::createFromFile("/home/ubuntu/workplace/pycharm_project/yolov7/yolov7.mnn"));

auto t1 = std::chrono::steady_clock::now();

MNN::ScheduleConfig netConfig;

netConfig.type = MNN_FORWARD_CPU;

netConfig.numThread = 4;

auto session = mnnNet->createSession(netConfig);

auto input = mnnNet->getSessionInput(session, "images");

mnnNet->resizeTensor(input, {1, 3, (int) target_size, (int) target_size});

mnnNet->resizeSession(session);

MNN::CV::ImageProcess::Config config;

const float mean_vals[3] = {0, 0, 0};

const float norm_255[3] = {1.f / 255, 1.f / 255.f, 1.f / 255};

std::shared_ptr pretreat(

MNN::CV::ImageProcess::create(MNN::CV::BGR, MNN::CV::RGB, mean_vals, 3,

norm_255, 3));

pretreat->convert(resize_img.data, (int) target_size, (int) target_size, resize_img.step[0], input);

mnnNet->runSession(session);

std::vector yolov7_layers{

{"528", 32, {{142, 110}, {192, 243}, {459, 401}}},

{"516", 16, {{36, 75}, {76, 55}, {72, 146}}},

{"output", 8, {{12, 16}, {19, 36}, {40, 28}}},

};

auto output = mnnNet->getSessionOutput(session, yolov7_layers[2].name.c_str());

MNN::Tensor outputHost(output, output->getDimensionType());

output->copyToHostTensor(&outputHost);

//毫秒级

std::vector vec_scores;

std::vector vec_new_scores;

std::vector vec_labels;

int outputHost_shape_c = outputHost.channel();

int outputHost_shape_d = outputHost.dimensions();

int outputHost_shape_w = outputHost.width();

int outputHost_shape_h = outputHost.height();

printf("shape_d=%d shape_c=%d shape_h=%d shape_w=%d outputHost.elementSize()=%d\n", outputHost_shape_d,

outputHost_shape_c, outputHost_shape_h, outputHost_shape_w, outputHost.elementSize());

auto yolov7_534 = mnnNet->getSessionOutput(session, yolov7_layers[1].name.c_str());

MNN::Tensor output_534_Host(yolov7_534, yolov7_534->getDimensionType());

yolov7_534->copyToHostTensor(&output_534_Host);

outputHost_shape_c = output_534_Host.channel();

outputHost_shape_d = output_534_Host.dimensions();

outputHost_shape_w = output_534_Host.width();

outputHost_shape_h = output_534_Host.height();

printf("shape_d=%d shape_c=%d shape_h=%d shape_w=%d output_534_Host.elementSize()=%d\n", outputHost_shape_d,

outputHost_shape_c, outputHost_shape_h, outputHost_shape_w, output_534_Host.elementSize());

auto yolov7_554 = mnnNet->getSessionOutput(session, yolov7_layers[0].name.c_str());

MNN::Tensor output_544_Host(yolov7_554, yolov7_554->getDimensionType());

yolov7_554->copyToHostTensor(&output_544_Host);

outputHost_shape_c = output_544_Host.channel();

outputHost_shape_d = output_544_Host.dimensions();

outputHost_shape_w = output_544_Host.width();

outputHost_shape_h = output_544_Host.height();

printf("shape_d=%d shape_c=%d shape_h=%d shape_w=%d output_544_Host.elementSize()=%d\n", outputHost_shape_d,

outputHost_shape_c, outputHost_shape_h, outputHost_shape_w, output_544_Host.elementSize());

std::vector & layers = yolov7_layers;

std::vector result;

std::vector boxes;

float threshold = 0.5;

float nms_threshold = 0.7;

boxes = decode_infer(outputHost, layers[2].stride, target_size, labels.size(), layers[2].anchors, threshold);

result.insert(result.begin(), boxes.begin(), boxes.end());

boxes = decode_infer(output_534_Host, layers[1].stride, target_size, labels.size(), layers[1].anchors, threshold);

result.insert(result.begin(), boxes.begin(), boxes.end());

boxes = decode_infer(output_544_Host, layers[0].stride, target_size, labels.size(), layers[0].anchors, threshold);

result.insert(result.begin(), boxes.begin(), boxes.end());

nms(result, nms_threshold);

scale_coords(result, target_size, target_size, bgr.cols, bgr.rows);

cv::Mat frame_show = draw_box(bgr, result, labels,colors);

cv::imshow("out",bgr);

cv::imwrite("dp.jpg",bgr);

cv::waitKey(0);

mnnNet->releaseModel();

mnnNet->releaseSession(session);

return 0;

}

总结

前后处理是硬功夫,加油!!!