14 Lectures on Visual SLAM: ch8 Practice (Visual Odometry 2)

14 Lectures on Visual SLAM: hands-on practice for ch8 and pitfalls to avoid

- 0.实践前小知识介绍

- 1. 实践操作前的准备工作

- 2. 实践过程

-

- 2.1 LK光流

- 2.2 直接法

- 3. 遇到的问题及解决办法

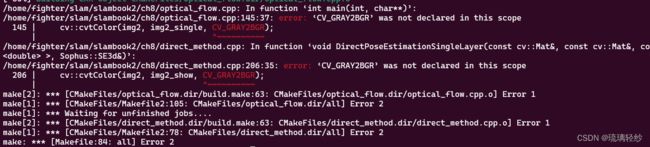

- 3.1 编译时遇到的问题

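0. Background before the practice

Where does the odometer come from?

An odometer is a device that measures the distance travelled by a vehicle or robot, usually by sensing the rotation of its wheels. Mechanical distance-measuring devices go back a long way. By the early 17th century they typically relied on a mechanical counter that accumulated the distance as the wheel turned. According to one account, an inventor named Thomas Goldsmith built a device called an "odometer" in the late 18th century that used a mechanical counter to record the distance covered by a carriage or bicycle, although this attribution is not well documented. Devices of this kind are regarded as the early form of the modern odometer.

Over time, odometers became electronic and computerized. Modern vehicles and robots typically measure wheel rotation with sensors such as optical (often infrared) or magnetic encoders and pass the readings to a computer or control system. In short, the odometer evolved from a purely mechanical device into an electronic, computer-based one. A visual odometer, the subject of this chapter, applies the same idea to a camera: it estimates motion from consecutive images instead of wheel rotation.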

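1. Preparation before the practice

- In a terminal, enter the ch8 folder and run the following commands in order to build the project.

mkdir build

cd build

cmake ..

# Note: -j8 runs 8 parallel jobs; pick a value that suits your machine (for example, its number of CPU cores)

make -j8

- Run the executables from inside the build folder.

Note: before running make, update the image paths in the source files to match your setup. Otherwise the programs fail at run time, and you have to edit the paths and run make again.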
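One way to avoid rebuilding every time a path changes is to read the paths from the command line and fall back to a built-in default. The sketch below is only an illustration of that idea: `pathFromArgs` is a hypothetical helper, not part of the book's code, and the default file names are placeholders.

```cpp
#include <cassert>
#include <string>

// Hypothetical helper: returns argv[index] if the user supplied it,
// otherwise the compiled-in default path.
std::string pathFromArgs(int argc, char** argv, int index, const std::string& fallback) {
    assert(index >= 1);  // index 0 is the program name, not a user argument
    if (index < argc && argv[index] != nullptr) {
        return std::string(argv[index]);
    }
    return fallback;
}
```

Inside `main(int argc, char** argv)` this could be used as `std::string file_1 = pathFromArgs(argc, argv, 1, "./LK1.png");`, so that running the program with an explicit image path overrides the default without another `make`.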

2. Practice

2.1 LK optical flow

Code:

//

// Created by Xiang on 2017/12/19.

//

#include

Run the following from the build folder:

./optical_flow

Results:

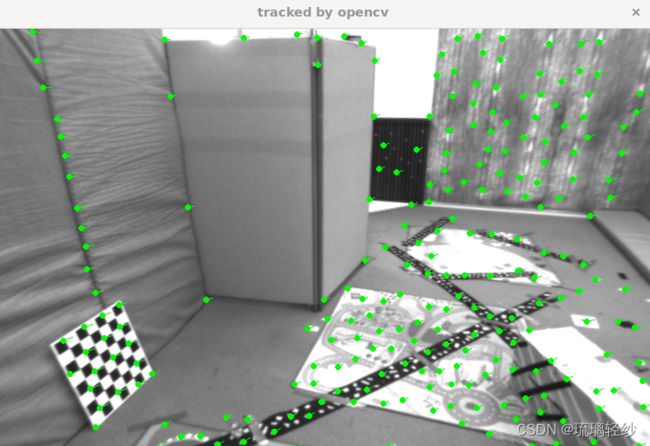

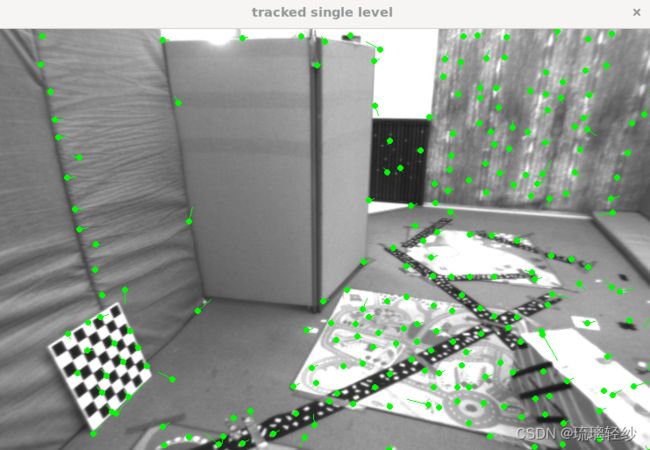

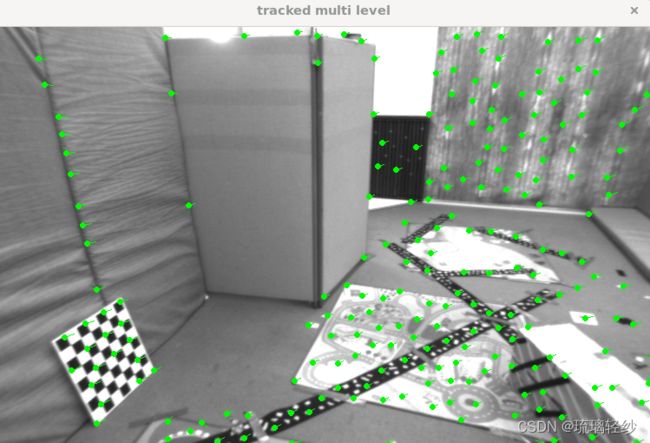

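When the program runs, it displays the tracking results obtained with OpenCV, with the single-level method, and with the multi-level (pyramid) method.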
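To make the single-level tracker less of a black box, here is a minimal Gauss-Newton sketch of the same idea. It does not use OpenCV or the book's data: the "images" are a synthetic smooth function, the second image is the first one shifted by a known amount, and the names `image1`, `image2`, `trackPatch` and the patch size and iteration count are all assumptions made for this illustration.

```cpp
#include <cassert>
#include <cmath>

struct Shift { double dx, dy; };

// Synthetic smooth texture standing in for the first image (sub-pixel sampling is exact).
double image1(double x, double y) {
    return std::sin(0.10 * x) + std::cos(0.13 * y) + std::sin(0.07 * (x + y));
}

// Second image: the first one translated by `motion` (the value we try to recover).
double image2(double x, double y, Shift motion) {
    return image1(x - motion.dx, y - motion.dy);
}

// Gauss-Newton estimate of the displacement of the patch centred at (kx, ky).
Shift trackPatch(double kx, double ky, Shift motion, int halfPatch = 8, int iterations = 20) {
    assert(halfPatch > 0 && iterations > 0);
    Shift est{0.0, 0.0};
    for (int iter = 0; iter < iterations; ++iter) {
        double H00 = 0, H01 = 0, H11 = 0, b0 = 0, b1 = 0;
        for (int u = -halfPatch; u <= halfPatch; ++u) {
            for (int v = -halfPatch; v <= halfPatch; ++v) {
                double x2 = kx + u + est.dx, y2 = ky + v + est.dy;
                // Photometric error between the reference pixel and the shifted pixel.
                double e = image1(kx + u, ky + v) - image2(x2, y2, motion);
                // Jacobian of e w.r.t. (dx, dy): minus the gradient of image 2 (central differences).
                double Jx = -0.5 * (image2(x2 + 1, y2, motion) - image2(x2 - 1, y2, motion));
                double Jy = -0.5 * (image2(x2, y2 + 1, motion) - image2(x2, y2 - 1, motion));
                H00 += Jx * Jx; H01 += Jx * Jy; H11 += Jy * Jy;
                b0 -= e * Jx;   b1 -= e * Jy;
            }
        }
        double det = H00 * H11 - H01 * H01;
        if (std::fabs(det) < 1e-12) break;           // degenerate patch, no unique solution
        double ux = (H11 * b0 - H01 * b1) / det;      // solve the 2x2 system H * update = b
        double uy = (H00 * b1 - H01 * b0) / det;
        est.dx += ux;
        est.dy += uy;
        if (ux * ux + uy * uy < 1e-16) break;         // converged
    }
    return est;
}
```

Calling `trackPatch(50.0, 60.0, Shift{1.5, -0.8})` should return a shift close to the one that was applied. A real tracker samples pixel intensities with bilinear interpolation instead of evaluating a formula, but the loop structure is the same.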

The terminal also prints timing information:

build pyramid time: 0.0072683

track pyr 3 cost time: 0.0004321

track pyr 2 cost time: 0.0002794

track pyr 1 cost time: 0.0002624

track pyr 0 cost time: 0.0003014

optical flow by gauss-newton: 0.0087955

optical flow by opencv: 0.0054821
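The lines `track pyr 3` down to `track pyr 0` show that tracking runs on four pyramid levels, from the coarsest to the finest. The sketch below illustrates only the coordinate bookkeeping behind that scheme. The scale factor of 0.5 between levels and the helper names are assumptions for this example, not values taken from the post.

```cpp
#include <cassert>
#include <vector>

struct Point2 { double x, y; };

// Coordinates of a full-resolution point on every pyramid level (level 0 = original image).
std::vector<Point2> pointOnLevels(Point2 p, int levels, double scale) {
    assert(levels > 0 && scale > 0.0 && scale < 1.0);
    std::vector<Point2> out;
    double s = 1.0;
    for (int level = 0; level < levels; ++level) {
        out.push_back(Point2{p.x * s, p.y * s});
        s *= scale;  // each level is `scale` times the size of the previous one
    }
    return out;
}

// After tracking on a coarse level, carry the estimate over to the next finer level.
Point2 toFinerLevel(Point2 p, double scale) {
    assert(scale > 0.0);
    return Point2{p.x / scale, p.y / scale};
}
```

With four levels and a scale of 0.5, a 16-pixel motion in the original image is only a 2-pixel motion on level 3. This is why the coarse levels can absorb large displacements, and the finer levels then only refine the estimate.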

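2.2 Direct method

Code:

#include

Run the following from the build folder:

./direct_method

Results:

The result windows show the tracked flow. Each window has to be closed before the next one appears, and stepping through them shows how the tracking changes from image to image.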
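The direct method estimates the pose T21 by comparing the intensity of a reference pixel with the intensity at the position where that pixel lands in the second image. The geometric part of this step can be sketched as follows. The struct and function names are hypothetical, and the intrinsics in the usage note are round numbers chosen for readability rather than the calibration used in the post.

```cpp
#include <cassert>

struct Intrinsics { double fx, fy, cx, cy; };
struct Pose { double R[3][3]; double t[3]; };  // T21 = [R | t], maps frame 1 to frame 2
struct Pixel { double u, v; };

// Back-project a pixel with known depth into a 3D point in the frame-1 camera coordinates.
void backProject(const Intrinsics& K, Pixel p, double depth, double P[3]) {
    assert(depth > 0.0);
    P[0] = (p.u - K.cx) / K.fx * depth;
    P[1] = (p.v - K.cy) / K.fy * depth;
    P[2] = depth;
}

// Transform the point into frame 2 and project it; returns false if it lies behind the camera.
bool projectToFrame2(const Intrinsics& K, const Pose& T21, const double P1[3], Pixel& out) {
    double P2[3];
    for (int i = 0; i < 3; ++i) {
        P2[i] = T21.R[i][0] * P1[0] + T21.R[i][1] * P1[1] + T21.R[i][2] * P1[2] + T21.t[i];
    }
    if (P2[2] <= 0.0) return false;
    out.u = K.fx * P2[0] / P2[2] + K.cx;
    out.v = K.fy * P2[1] / P2[2] + K.cy;
    return true;
}
```

With `Intrinsics{100, 100, 50, 40}`, an identity pose maps pixel (70, 60) at depth 5 back onto itself, while a pose whose translation is (0, 0, -1) moves it to (75, 65). The photometric error for that pixel is then the reference intensity at (70, 60) minus the second image's intensity at the projected position, and Gauss-Newton adjusts T21 to reduce the sum of these squared errors.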

The terminal prints the corresponding information:

iteration: 0, cost: 1.59797e+06

iteration: 1, cost: 651716

iteration: 2, cost: 243255

iteration: 3, cost: 176884

cost increased: 183909, 176884

T21 =

0.999991 0.00245009 0.0033858 0.00303273

-0.00245906 0.999993 0.00264927 0.000424829

-0.00337929 -0.00265757 0.999991 -0.730917

0 0 0 1

direct method for single layer: 0.0016574

iteration: 0, cost: 186361

T21 =

0.999989 0.00302157 0.00347121 0.000762356

-0.00302936 0.999993 0.00224074 0.00666315

-0.00346442 -0.00225123 0.999991 -0.728227

0 0 0 1

direct method for single layer: 0.002358

iteration: 0, cost: 247529

iteration: 1, cost: 229117

T21 =

0.999991 0.00251345 0.00346578 -0.00270253

-0.00252155 0.999994 0.00233534 0.00243076

-0.00345989 -0.00234406 0.999991 -0.734719

0 0 0 1

direct method for single layer: 0.00523089

iteration: 0, cost: 348441

T21 =

0.999991 0.00248082 0.00343389 -0.00373965

-0.00248836 0.999994 0.00219448 0.00304522

-0.00342843 -0.00220301 0.999992 -0.732343

0 0 0 1

direct method for single layer: 0.0012425

iteration: 0, cost: 1.315e+06

iteration: 1, cost: 906037

iteration: 2, cost: 603626

iteration: 3, cost: 399435

iteration: 4, cost: 280889

iteration: 5, cost: 237691

cost increased: 238395, 237691

T21 =

0.999971 0.000902974 0.00759567 0.00772499

-0.000938067 0.999989 0.00461783 0.00179863

-0.00759142 -0.00462482 0.99996 -1.46052

0 0 0 1

direct method for single layer: 0.0045787

iteration: 0, cost: 355480

iteration: 1, cost: 348267

cost increased: 348423, 348267

T21 =

0.999972 0.00120085 0.00742895 0.0085892

-0.00123022 0.999991 0.00395007 0.00531883

-0.00742414 -0.0039591 0.999965 -1.46883

0 0 0 1

direct method for single layer: 0.0009226

iteration: 0, cost: 443225

iteration: 1, cost: 435054

cost increased: 437537, 435054

T21 =

0.999971 0.000737127 0.00764046 -0.000242531

-0.000767091 0.999992 0.00391957 0.00279348

-0.00763751 -0.00392532 0.999963 -1.4818

0 0 0 1

direct method for single layer: 0.0009165

iteration: 0, cost: 501709

iteration: 1, cost: 463084

cost increased: 463953, 463084

T21 =

0.999971 0.000695392 0.00758989 -0.00249798

-0.000723685 0.999993 0.00372567 0.00395279

-0.00758725 -0.00373106 0.999964 -1.48132

0 0 0 1

direct method for single layer: 0.0008786

iteration: 0, cost: 1.37107e+06

iteration: 1, cost: 1.10683e+06

iteration: 2, cost: 921990

iteration: 3, cost: 794740

iteration: 4, cost: 601342

iteration: 5, cost: 559319

iteration: 6, cost: 394434

iteration: 7, cost: 363978

cost increased: 374118, 363978

T21 =

0.999945 0.00160897 0.0103684 0.0493737

-0.00166631 0.999983 0.00552457 0.0132374

-0.0103594 -0.00554155 0.999931 -2.18064

0 0 0 1

direct method for single layer: 0.0020563

iteration: 0, cost: 461649

iteration: 1, cost: 443603

iteration: 2, cost: 436513

iteration: 3, cost: 432080

iteration: 4, cost: 423494

cost increased: 431930, 423494

T21 =

0.999938 0.00146627 0.011054 0.0282033

-0.00152599 0.999984 0.0053958 0.00256267

-0.0110459 -0.00541233 0.999924 -2.21468

0 0 0 1

direct method for single layer: 0.0015141

iteration: 0, cost: 646880

iteration: 1, cost: 614318

iteration: 2, cost: 613113

cost increased: 620133, 613113

T21 =

0.999935 0.00152579 0.0112714 0.0183767

-0.00158773 0.999984 0.00548783 -0.00540064

-0.0112629 -0.00550537 0.999921 -2.23461

0 0 0 1

direct method for single layer: 0.0011636

iteration: 0, cost: 924370

iteration: 1, cost: 828022

iteration: 2, cost: 821445

iteration: 3, cost: 803411

cost increased: 811368, 803411

T21 =

0.999934 0.00125001 0.0114068 0.00255272

-0.00131019 0.999985 0.00527034 -0.000605904

-0.0114001 -0.00528494 0.999921 -2.24055

0 0 0 1

direct method for single layer: 0.0015292

iteration: 0, cost: 1.43709e+06

iteration: 1, cost: 1.31501e+06

iteration: 2, cost: 1.06723e+06

iteration: 3, cost: 938977

iteration: 4, cost: 788005

iteration: 5, cost: 680776

iteration: 6, cost: 605861

iteration: 7, cost: 548408

iteration: 8, cost: 516721

iteration: 9, cost: 513621

T21 =

0.999872 -0.000312873 0.0159856 0.0259369

0.000197362 0.999974 0.00722705 -0.00480823

-0.0159874 -0.00722297 0.999846 -2.96617

0 0 0 1

direct method for single layer: 0.0048151

iteration: 0, cost: 640692

iteration: 1, cost: 616653

iteration: 2, cost: 610486

cost increased: 615297, 610486

T21 =

0.999864 -0.000319108 0.0164719 0.00993795

0.000208632 0.999977 0.00670821 -0.00627072

-0.0164737 -0.00670386 0.999842 -3.005

0 0 0 1

direct method for single layer: 0.0009756

iteration: 0, cost: 848724

iteration: 1, cost: 823518

iteration: 2, cost: 780844

cost increased: 802765, 780844

T21 =

0.999865 -0.000227727 0.0164536 0.0022434

0.000124997 0.99998 0.0062444 -0.00399514

-0.0164547 -0.00624149 0.999845 -3.01734

0 0 0 1

direct method for single layer: 0.0010828

iteration: 0, cost: 1.26838e+06

iteration: 1, cost: 1.16447e+06

cost increased: 1.19957e+06, 1.16447e+06

T21 =

0.999865 0.00017071 0.0164584 -0.00906366

-0.000267333 0.999983 0.00586871 0.000576184

-0.0164571 -0.00587231 0.999847 -3.02444

0 0 0 1

direct method for single layer: 0.0008361

iteration: 0, cost: 1.64476e+06

iteration: 1, cost: 1.49383e+06

iteration: 2, cost: 1.23318e+06

iteration: 3, cost: 950472

iteration: 4, cost: 794112

iteration: 5, cost: 686345

iteration: 6, cost: 671817

iteration: 7, cost: 659908

iteration: 8, cost: 652671

iteration: 9, cost: 605440

T21 =

0.999803 0.00057056 0.0198394 0.0427397

-0.000712283 0.999974 0.00713717 0.0136135

-0.0198348 -0.00714989 0.999778 -3.76444

0 0 0 1

direct method for single layer: 0.0088727

iteration: 0, cost: 983836

iteration: 1, cost: 948750

iteration: 2, cost: 945444

iteration: 3, cost: 895561

cost increased: 898341, 895561

T21 =

0.99978 0.000643056 0.0209471 0.000477452

-0.000787155 0.999976 0.00687165 0.00707341

-0.0209422 -0.00688663 0.999757 -3.83472

0 0 0 1

direct method for single layer: 0.0012023

iteration: 0, cost: 1.27161e+06

iteration: 1, cost: 1.22543e+06

iteration: 2, cost: 1.04807e+06

cost increased: 1.2001e+06, 1.04807e+06

T21 =

0.999777 0.00108579 0.0210816 -0.00872002

-0.00121752 0.99998 0.00623637 0.0124058

-0.0210744 -0.00626065 0.999758 -3.85459

0 0 0 1

direct method for single layer: 0.0010238

iteration: 0, cost: 1.67716e+06

iteration: 1, cost: 1.64927e+06

iteration: 2, cost: 1.63771e+06

cost increased: 1.64371e+06, 1.63771e+06

T21 =

0.999786 0.00136909 0.0206569 -0.00336234

-0.00149442 0.999981 0.0060529 0.00874311

-0.0206482 -0.00608247 0.999768 -3.86001

0 0 0 1

direct method for single layer: 0.001018
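To read the log more easily, it helps to reduce each 4x4 T21 to two numbers: the length of its translation and its rotation angle. The sketch below does that with plain arrays. The function names are made up for this example, and the grouping of the log into runs of four matrices per target image (one per pyramid level) is an interpretation of the output, not something the post states.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Length of the translation part (last column) of a homogeneous transform.
double translationNorm(const double T[4][4]) {
    assert(T[3][0] == 0.0 && T[3][1] == 0.0 && T[3][2] == 0.0 && T[3][3] == 1.0);
    return std::sqrt(T[0][3] * T[0][3] + T[1][3] * T[1][3] + T[2][3] * T[2][3]);
}

// Rotation angle in radians, from trace(R) = 1 + 2 * cos(angle).
double rotationAngle(const double T[4][4]) {
    double c = (T[0][0] + T[1][1] + T[2][2] - 1.0) / 2.0;
    c = std::max(-1.0, std::min(1.0, c));  // guard against rounding in the printed values
    return std::acos(c);
}
```

Applied to the fourth matrix in the log (translation column -0.00373965, 0.00304522, -0.732343), this gives a translation of about 0.73 and a rotation of roughly 0.005 rad. Under the grouping assumed above, the z translation grows in magnitude from about 0.73 to about 3.86 over the five groups, which is what steady motion along the optical axis relative to a fixed reference image would look like.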