Configuring Spark


- YARN mode

- Standalone mode

- Local mode

YARN mode

tar -zxvf spark-3.0.0-bin-hadoop3.2.tgz -C /opt/module

cd /opt/module

mv spark-3.0.0-bin-hadoop3.2 spark-yarn

Edit the Hadoop configuration file /opt/module/hadoop-3.1.3/etc/hadoop/yarn-site.xml, then distribute it to the other nodes.

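Add the following two properties, each with the value false. They turn off the NodeManager physical-memory and virtual-memory checks, so YARN does not kill containers that exceed those limits:

yarn.nodemanager.pmem-check-enabled

yarn.nodemanager.vmem-check-enabled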
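
In yarn-site.xml each setting is written as a property element inside the configuration root. A minimal sketch that prints the two entries in that form, ready to paste into the file (the helper name `yarn_memcheck_properties` is made up for illustration):

```shell
# Print the two <property> entries to paste inside <configuration> in yarn-site.xml.
yarn_memcheck_properties() {
  for name in yarn.nodemanager.pmem-check-enabled yarn.nodemanager.vmem-check-enabled; do
    printf '<property>\n  <name>%s</name>\n  <value>false</value>\n</property>\n' "$name"
  done
}

yarn_memcheck_properties
```

After editing the file, it still has to be distributed to every node and YARN restarted for the change to take effect.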

cd /opt/module/

xsync hadoop-3.1.3

Edit conf/spark-env.sh and add the JAVA_HOME and YARN_CONF_DIR settings

export JAVA_HOME=/opt/module/jdk1.8.0_212

YARN_CONF_DIR=/opt/module/hadoop-3.1.3/etc/hadoop
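
If these notes are replayed more than once, plain appends would duplicate the lines in spark-env.sh. One possible approach, sketched here with a hypothetical helper `append_once` and a placeholder `ENV_CONF_DIR` (a temporary directory unless set), is to append each line only when it is missing:

```shell
# Append a line to a file only if that exact line is not already present.
append_once() {
  file=$1
  line=$2
  touch "$file"
  grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# ENV_CONF_DIR is a placeholder; on a real node it would be /opt/module/spark-yarn/conf.
ENV_CONF_DIR=${ENV_CONF_DIR:-$(mktemp -d)}

append_once "$ENV_CONF_DIR/spark-env.sh" 'export JAVA_HOME=/opt/module/jdk1.8.0_212'
append_once "$ENV_CONF_DIR/spark-env.sh" 'YARN_CONF_DIR=/opt/module/hadoop-3.1.3/etc/hadoop'
# Running the same call again leaves the file unchanged.
append_once "$ENV_CONF_DIR/spark-env.sh" 'YARN_CONF_DIR=/opt/module/hadoop-3.1.3/etc/hadoop'

cat "$ENV_CONF_DIR/spark-env.sh"
```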

bin/spark-submit \

--class org.apache.spark.examples.SparkPi \

--master yarn \

--deploy-mode cluster \

./examples/jars/spark-examples_2.12-3.0.0.jar \

10
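
The only part of this command that changes between a cluster-mode and a client-mode submission is the --deploy-mode value. As a rough sketch, the command can be wrapped in a small function; `submit_pi` and the `DRY_RUN` switch are names invented here, and with the default `DRY_RUN=1` the command is printed rather than run:

```shell
# Build the SparkPi submission for a given deploy mode ("cluster" or "client").
# With DRY_RUN=1 (the default here) the command is only printed, not executed.
submit_pi() {
  mode=$1
  case "$mode" in
    cluster|client) ;;
    *) echo "unknown deploy mode: $mode" >&2; return 1 ;;
  esac
  # Reuse the function's positional parameters to hold the full command line.
  set -- bin/spark-submit \
    --class org.apache.spark.examples.SparkPi \
    --master yarn \
    --deploy-mode "$mode" \
    ./examples/jars/spark-examples_2.12-3.0.0.jar 10
  if [ "${DRY_RUN:-1}" = 1 ]; then
    echo "$*"
  else
    "$@"
  fi
}

submit_pi cluster
```

Setting `DRY_RUN=0` from inside the Spark directory would run the real submission. In cluster mode the driver runs inside YARN, so the computed value of Pi appears in the application logs rather than on the submitting terminal.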

Configure the history server

mv spark-defaults.conf.template spark-defaults.conf

Edit spark-defaults.conf to enable event logging and set the log storage path

spark.eventLog.enabled true

spark.eventLog.dir hdfs://hadoop102:8020/directory

Start HDFS and create the log directory; it must exist before any application is submitted.

[root@linux1 hadoop]# sbin/start-dfs.sh

[root@linux1 hadoop]# hadoop fs -mkdir /directory

Edit spark-env.sh and add the history server settings

export SPARK_HISTORY_OPTS="

-Dspark.history.ui.port=18080

-Dspark.history.fs.logDirectory=hdfs://hadoop102:8020/directory

-Dspark.history.retainedApplications=30"
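
The history server only shows applications if spark.history.fs.logDirectory points at the same location as spark.eventLog.dir. The following is a small consistency check, not part of Spark itself; `HIST_CONF_DIR` is a placeholder, and the two sample files are written only so that the sketch runs on its own:

```shell
# Check that the directory Spark writes event logs to is the one the history server reads.
# HIST_CONF_DIR is a placeholder; on a real node it would be the Spark conf directory.
HIST_CONF_DIR=${HIST_CONF_DIR:-$(mktemp -d)}

# Sample files mirroring the settings in these notes (skip this step against real configs).
cat > "$HIST_CONF_DIR/spark-defaults.conf" <<'EOF'
spark.eventLog.enabled true
spark.eventLog.dir hdfs://hadoop102:8020/directory
EOF
cat > "$HIST_CONF_DIR/spark-env.sh" <<'EOF'
export SPARK_HISTORY_OPTS="
-Dspark.history.ui.port=18080
-Dspark.history.fs.logDirectory=hdfs://hadoop102:8020/directory
-Dspark.history.retainedApplications=30"
EOF

# Extract the value each side is configured with.
write_dir=$(awk '$1 == "spark.eventLog.dir" { print $2 }' "$HIST_CONF_DIR/spark-defaults.conf")
read_dir=$(sed -n 's/.*-Dspark\.history\.fs\.logDirectory=\([^ "]*\).*/\1/p' "$HIST_CONF_DIR/spark-env.sh")

if [ "$write_dir" = "$read_dir" ]; then
  echo "OK: both use $write_dir"
else
  echo "MISMATCH: eventLog.dir=$write_dir, history logDirectory=$read_dir" >&2
fi
```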

Edit spark-defaults.conf

spark.yarn.historyServer.address=hadoop102:18080

spark.history.ui.port=18080

Start the history server

sbin/start-history-server.sh

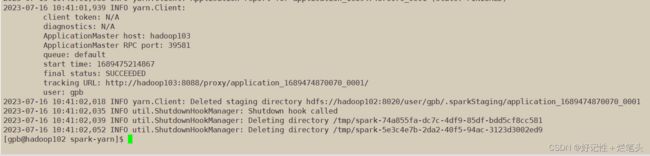

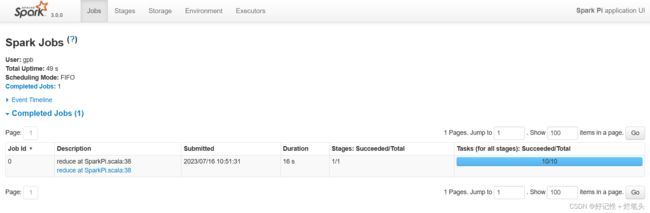

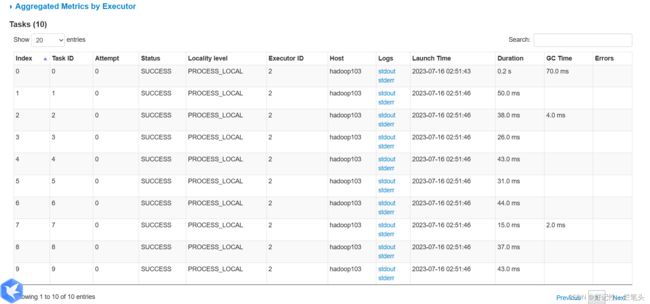

Resubmit the application

bin/spark-submit \

--class org.apache.spark.examples.SparkPi \

--master yarn \

--deploy-mode client \

./examples/jars/spark-examples_2.12-3.0.0.jar \

10

Standalone mode

tar -zxvf spark-3.0.0-bin-hadoop3.2.tgz -C /opt/module

cd /opt/module

mv spark-3.0.0-bin-hadoop3.2 spark-standalone

mv slaves.template slaves

Edit slaves and list the worker hostnames, one per line:

linux1

linux2

linux3

mv spark-env.sh.template spark-env.sh

export JAVA_HOME=/opt/module/jdk1.8.0_212

SPARK_MASTER_HOST=hadoop102

SPARK_MASTER_PORT=7077

xsync spark-standalone

sbin/start-all.sh
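
Once the cluster is up, applications are submitted to the master at a spark:// URL built from SPARK_MASTER_HOST and SPARK_MASTER_PORT. A minimal sketch that derives the URL from the values above and prints the matching SparkPi submission without running it (the variable name `master_url` is illustrative):

```shell
# Values as set in conf/spark-env.sh.
SPARK_MASTER_HOST=hadoop102
SPARK_MASTER_PORT=7077

# A standalone master is addressed as spark://host:port.
master_url="spark://${SPARK_MASTER_HOST}:${SPARK_MASTER_PORT}"

# Print the submission command; run it from the spark-standalone directory.
echo "bin/spark-submit --class org.apache.spark.examples.SparkPi --master ${master_url} ./examples/jars/spark-examples_2.12-3.0.0.jar 10"
```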

Configure the history server

mv spark-defaults.conf.template spark-defaults.conf

Edit spark-defaults.conf to enable event logging and set the log storage path

spark.eventLog.enabled true

spark.eventLog.dir hdfs://hadoop102:8020/directory

sbin/start-dfs.sh

hadoop fs -mkdir /directory

Edit spark-env.sh and add the history server settings

export SPARK_HISTORY_OPTS="

-Dspark.history.ui.port=18080

-Dspark.history.fs.logDirectory=hdfs://hadoop102:8020/directory

-Dspark.history.retainedApplications=30"

xsync conf

sbin/start-all.sh

sbin/start-history-server.sh

Configure high availability

? Configure ZooKeeper first

sbin/stop-all.sh
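
For standalone high availability, Spark masters coordinate through ZooKeeper via the spark.deploy.recoveryMode, spark.deploy.zookeeper.url and spark.deploy.zookeeper.dir properties, passed in SPARK_DAEMON_JAVA_OPTS. The notes do not record the ZooKeeper setup, so the quorum hosts and the /spark znode below are assumptions. The sketch only prints the line that would go into conf/spark-env.sh:

```shell
# Hypothetical ZooKeeper quorum; replace with the real hosts and client port.
ZK_URL="hadoop102:2181,hadoop103:2181,hadoop104:2181"

# Recovery settings for the standalone masters (the /spark znode path is an assumption).
SPARK_DAEMON_JAVA_OPTS="-Dspark.deploy.recoveryMode=ZOOKEEPER \
-Dspark.deploy.zookeeper.url=${ZK_URL} \
-Dspark.deploy.zookeeper.dir=/spark"

# Print the line to add to conf/spark-env.sh on every master candidate.
echo "export SPARK_DAEMON_JAVA_OPTS=\"${SPARK_DAEMON_JAVA_OPTS}\""
```

With several masters, a fixed SPARK_MASTER_HOST no longer identifies the active one, so that setting would likely need to be reviewed as part of the same change.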

Local mode

sudo mv spark-3.0.0-bin-hadoop3.2.tgz /opt/software/

tar -zxvf /opt/software/spark-3.0.0-bin-hadoop3.2.tgz -C /opt/module

cd /opt/module

mv spark-3.0.0-bin-hadoop3.2 spark-local
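
Local mode needs no further configuration: the master is given as local[N], where N is the number of worker threads, or local[*] to use all cores. A small sketch that builds the master URL and prints a SparkPi submission for it (the helper `local_master` is invented for illustration, and the command is only echoed):

```shell
# Build a local master URL from a thread count such as 2; defaults to "*" (all cores).
local_master() {
  printf 'local[%s]\n' "${1:-*}"
}

# Quote the master URL so the shell does not treat the brackets as a glob pattern.
echo bin/spark-submit \
  --class org.apache.spark.examples.SparkPi \
  --master "$(local_master 2)" \
  ./examples/jars/spark-examples_2.12-3.0.0.jar 10
```

Dropping the leading `echo` and running the command from /opt/module/spark-local would execute the job in a single JVM on the local machine.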