Python Web Scraping

requests

Installation

pip install -i https://pypi.tuna.tsinghua.edu.cn/simple requests

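Sending a GET request

Example: Baidu

import requests

url = "http://www.baidu.com"

# Send a plain GET request
response = requests.get(url)

# Set the encoding explicitly so the Chinese text in the page decodes correctly
response.encoding = 'utf8'

print(response.text)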

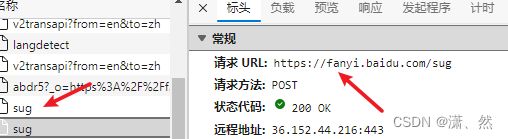

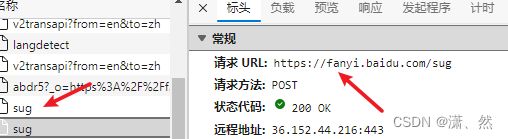

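Sending a POST request

Example: Baidu Translate

import requests

url = "https://fanyi.baidu.com/sug"

# Form fields sent in the request body; "kw" is the word to look up
data = {
    "kw": "love"
}

response = requests.post(url, data=data)

# The endpoint replies with JSON, so decode it straight into Python objects
print(response.json())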

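Spoofing the User-Agent (UA)

By default requests identifies itself as python-requests, which some sites block. Sending a browser's User-Agent header makes the request look like ordinary browser traffic.

headers = {
    "user-agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/106.0.0.0 Safari/537.36 Edg/106.0.1370.52"
}

response = requests.get(url, headers=headers)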
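The effect of the header can be checked without touching the network. The sketch below prints the default User-Agent and then builds (but does not send) a request with a browser string; the shortened `browser_ua` value and the use of `Session.prepare_request` are choices made for this illustration, not something the original example does.

```python
import requests

# Default User-Agent that requests sends when no headers are given,
# e.g. "python-requests/2.31.0" (the version part depends on the install)
print(requests.utils.default_user_agent())

browser_ua = (
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/106.0.0.0 Safari/537.36"
)

# Build the request without sending it, then inspect the outgoing headers
session = requests.Session()
request = requests.Request(
    "GET", "http://www.baidu.com", headers={"User-Agent": browser_ua}
)
prepared = session.prepare_request(request)
print(prepared.headers["User-Agent"])
```

Headers passed per request take precedence over the session defaults, which is why the browser string replaces the python-requests one.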

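Proxies

# Map a URL scheme to the proxy that should handle it.
# The proxy address needs its own scheme prefix, and https:// URLs
# only go through a proxy when the dict also has an "https" key.
proxy = {
    "http": "http://121.233.212.34:3432"
}

response = requests.get(url, proxies=proxy)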

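Logging in with cookies

Example: 17k novel site (17k.com)

import requests

# A Session stores the cookies set by the login response
# and sends them with every later request
session = requests.Session()

url = 'https://passport.17k.com/ck/user/login'

data = {
    "loginName": "15675521581",
    "password": "**"
}

res = session.post(url, data=data)

# The bookshelf endpoint needs the login cookies, which the session supplies
result = session.get(url='https://user.17k.com/ck/author/shelf?page=1&appKey=2406394919')

print(result.json())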

Hotlink protection (Referer)

Example: Pear Video

import requests

url = 'https://www.pearvideo.com/videoStatus.jsp?contId=1007270&mrd=0.2400885482825692'

refererUrl = "https://www.pearvideo.com/video_1007270"

# The video id is the part of the page URL after the underscore
contId = refererUrl.split('_')[1]

headers = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/86.0.4240.198 Safari/537.36",
    # The server checks that the request was sent from the video page
    "Referer": refererUrl
}

res = requests.get(url, headers=headers)

data = res.json()
print(data)

systemTime = data['systemTime']
srcUrl = data['videoInfo']['videos']['srcUrl']

# srcUrl contains the server timestamp where the real file name has cont-<video id>
newUrl = srcUrl.replace(systemTime, f"cont-{contId}")
print(newUrl)

with open('test.mp4', mode='wb') as f:
    f.write(requests.get(newUrl).content)
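The URL rewrite is the least obvious step, so here is a minimal offline sketch of it. The `system_time` and `src_url` values are invented placeholders with roughly the shape described above; they are not real responses from the site.

```python
# Hypothetical values, invented for illustration only
system_time = "1667000000000"
src_url = "https://video.example.com/mp4/short/20221029/1667000000000-15951234-hd.mp4"
cont_id = "1007270"

# Swap the timestamp segment for "cont-<video id>" to get the downloadable address
new_url = src_url.replace(system_time, f"cont-{cont_id}")
print(new_url)
# https://video.example.com/mp4/short/20221029/cont-1007270-15951234-hd.mp4
```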

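The re module

findall

import re

# findall returns every non-overlapping match as a list of strings
result = re.findall(r"\d+", "Wrote 2 lines of code today and earned 200 yuan.")

print(result)  # ['2', '200']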

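search

import re

# search stops at the first match and returns a Match object, or None if nothing matches
result = re.search(r"\d+", "Wrote 2 lines of code today and earned 200 yuan.")

print(result)  # <re.Match object; span=(6, 7), match='2'>

print(result.group())  # 2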
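Because `re.search` returns `None` when nothing matches, calling `.group()` directly fails on text without digits. A small guard avoids that; the helper name `first_number` is made up for this sketch.

```python
import re


def first_number(text):
    """Return the first run of digits in text, or None when there is none."""
    match = re.search(r"\d+", text)
    # re.search returns None on failure, so check before calling .group()
    return match.group() if match else None


print(first_number("Wrote 2 lines of code today and earned 200 yuan."))  # 2
print(first_number("No digits here."))  # None
```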

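finditer

import re

# finditer returns an iterator that yields one Match object per match
result = re.finditer(r"\d+", "Wrote 2 lines of code today and earned 200 yuan.")

print(result)

for item in result:
    print(item, item.group())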

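Precompiling

Compile the regular expression ahead of time so the same pattern object can be reused.

import re

obj = re.compile(r"\d+")

result = obj.findall("Wrote 2 lines of code today and earned 200 yuan.")

print(result)  # ['2', '200']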

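Matching across newlines with re.S

# re.S (DOTALL) makes . match newline characters as well;
# it only changes the result for patterns that contain a dot
obj = re.compile(r"\d+", re.S)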
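A short comparison makes the flag's effect visible. The `html` string below is a made-up two-line fragment chosen only because it contains a newline between the tags.

```python
import re

html = "<p>first line\nsecond line</p>"

# Without re.S the dot stops at the newline, so the pattern cannot reach </p>
without_flag = re.findall(r"<p>(.*?)</p>", html)
print(without_flag)  # []

# With re.S the dot also matches "\n"
with_flag = re.findall(r"<p>(.*?)</p>", html, re.S)
print(with_flag)  # ['first line\nsecond line']
```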

Using re on HTML

import re

s = """

"""

obj = re.compile(r'')

result = obj.finditer(s)

for item in result:
    print(item.group(1), item.group(2))
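As a runnable illustration of reading two capture groups from markup, here is a sketch with sample HTML. The markup, the class name `item`, and the links are all invented for this example and are not the sample from the original article.

```python
import re

# Hypothetical markup; the class name and links are invented for illustration
s = """
<div class="item"><a href="/book/1">Python Basics</a></div>
<div class="item"><a href="/book/2">Web Scraping</a></div>
"""

# Group 1 captures the href value, group 2 the link text
obj = re.compile(r'<div class="item"><a href="(.*?)">(.*?)</a></div>')

pairs = [(m.group(1), m.group(2)) for m in obj.finditer(s)]
for url, title in pairs:
    print(url, title)
```

The non-greedy `.*?` keeps each group from running past the next quote or closing tag.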

Named capture groups

obj = re.compile(r'<a href="(?P<url>.*?)">(?P<title>.*?)</a></div>')

result = obj.finditer(s)

for item in result:
    # Named groups are read back by name instead of by position
    print(item.group('url'), item.group('title'))
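A self-contained sketch of named groups follows. The sample markup is invented, and `groupdict()` is shown as one convenient way to collect all named groups at once.

```python
import re

# Hypothetical markup, invented for illustration
s = '<div class="item"><a href="/book/1">Python Basics</a></div>'

# (?P<name>...) gives a group a name in addition to its number
obj = re.compile(r'<a href="(?P<url>.*?)">(?P<title>.*?)</a></div>')

match = obj.search(s)
print(match.group('url'))   # /book/1
print(match.group(1))       # /book/1 (the numeric index still works)
print(match.groupdict())    # {'url': '/book/1', 'title': 'Python Basics'}
```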

<h3>Scraping Douban Movies: https://movie.douban.com/chart</h3>

<p><a href="http://img.e-com-net.com/image/info8/56cb9f754de24f00a504e3d1fb510b4f.jpg" target="_blank"><img src="http://img.e-com-net.com/image/info8/56cb9f754de24f00a504e3d1fb510b4f.jpg" alt="image 3" width="650" height="300" style="border:1px solid black;"></a></p>

<pre><code class="prism language-python">import requests
import re

url = 'https://movie.douban.com/chart'

headers = {
    "user-agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/106.0.0.0 Safari/537.36 Edg/106.0.1370.52"
}

response = requests.get(url, headers=headers)
response.encoding = 'utf-8'

# re.S lets . match newlines too, so .*? can span several lines of HTML
obj = re.compile(r'<dl class="">.*?<dd>.*?<a .*?class="">(.*?)</a>', re.S)

content = obj.finditer(response.text)

for item in content:
    # group(1) is the movie title; strip the surrounding whitespace
    print(item.group(1).strip())

# Sample output (the chart changes over time):
# 哈利·波特与凤凰社
# 茶馆
# 喜剧之王
# 萤火虫之墓
# 少年派的奇幻漂流
# 上帝之城
# 疯狂原始人
# 机器人总动员
# 泰坦尼克号
# 谍影重重
# 红辣椒
# 神偷奶爸
</code></pre>

<h2>xpath</h2>

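<h3>The error lxml.etree.XMLSyntaxError: Opening and ending tag mismatch: meta line 4 and head, line 6, column 8</h3>

<p>This error means that an opening tag and a closing tag in the HTML file being parsed do not match. etree.parse uses a strict XML parser by default, so every tag has to be closed.</p>

<p>Following the error message, go to the reported position and add the missing closing tag:<br> for a single (void) tag, add / before the closing bracket<br> for a paired tag, write the matching end tag</p>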
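<p>The cause can be reproduced in a few lines. This sketch swaps in the standard library's xml.etree.ElementTree instead of lxml, purely so it runs without extra packages; the exact error text differs from lxml's, but the failure is the same kind: an unclosed meta tag is still open when the closing head tag arrives.</p>

```python
import xml.etree.ElementTree as ET

broken = '<head><meta charset="UTF-8"><title>Title</title></head>'
fixed = '<head><meta charset="UTF-8" /><title>Title</title></head>'

try:
    ET.fromstring(broken)
    error = None
except ET.ParseError as exc:
    # The unclosed <meta> is still open when </head> arrives
    error = str(exc)

print(error)

# With the self-closing slash added, the same markup parses cleanly
title = ET.fromstring(fixed).find("title").text
print(title)  # Title
```

<p>When editing the file is not practical, lxml can also be asked to use its lenient HTML parser, for example etree.parse('index.html', etree.HTMLParser()).</p>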

<h3>lxml</h3>

<p>Installation</p>

<pre><code class="prism language-python">pip install -i https://pypi.tuna.tsinghua.edu.cn/simple lxml
</code></pre>

<h3>Parsing a local file</h3>

<pre><code class="prism language-python">from lxml import etree

# Parse an HTML file stored on disk
tree = etree.parse('index.html')
</code></pre>

<h3>Parsing a server response</h3>

<pre><code class="prism language-python">from lxml import etree

# Pass in the HTML text of the response, e.g. response.text from requests
tree = etree.HTML(response.text)
</code></pre>

<h3>Basic syntax</h3>

<p>html</p>

<pre><code class="prism language-html"><!DOCTYPE html>
<html lang="en">
<head>
    <meta charset="UTF-8" />
    <title>Title</title>
</head>
<body>
    <ul>
        <li class="zs">zs</li>
        <li>ls</li>
        <li class="xr">xr</li>
        <li id="xr" class="xr">xr2</li>
    </ul>
    <img src="icon.png" alt="" />
</body>
</html>
</code></pre>

<p>Basic syntax examples</p>

<pre><code class="prism language-python">from lxml import etree

tree = etree.parse('index.html')

# Find every li under ul
print(tree.xpath("//ul/li"))  # a list of four <Element li at 0x...> objects

# Get the text of each li under ul whose class is "xr"
print(tree.xpath("//ul/li[@class='xr']/text()"))  # ['xr', 'xr2']

# Get the text of the first li under ul (XPath positions start at 1)
print(tree.xpath("//ul/li[1]/text()"))  # ['zs']

# Get the src attribute of the img tag
print(tree.xpath("//img/@src"))  # ['icon.png']

# Find the li under ul that has both id="xr" and class="xr"
print(tree.xpath("//ul/li[@class='xr' and @id='xr']/text()"))  # ['xr2']
</code></pre>
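<p>For experimenting without an index.html file, the same list can be parsed from a string with etree.HTML. This is a sketch under that assumption; the relative "./" query and the last() predicate are additions for illustration and do not appear in the examples above.</p>

```python
from lxml import etree

# The same list as in the sample page, held in a string instead of index.html
html = """
<ul>
    <li class="zs">zs</li>
    <li>ls</li>
    <li class="xr">xr</li>
    <li id="xr" class="xr">xr2</li>
</ul>
<img src="icon.png" alt="" />
"""

# etree.HTML uses lxml's lenient HTML parser and returns the root element
tree = etree.HTML(html)

items = tree.xpath("//ul/li")
print(len(items))  # 4

# Each result is itself an element, so xpath can be applied to it again.
# A leading "./" keeps the query relative to that element.
for li in items:
    print(li.xpath("./text()")[0], li.get("class"))

last_text = tree.xpath("//ul/li[last()]/text()")
print(last_text)  # ['xr2']
```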

<h2>selenium</h2>

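<p>Installation</p>

<pre><code class="prism language-python">pip install selenium
</code></pre>

<p>Download the Chrome driver (ChromeDriver). Selenium 4.6 and later can usually find or download a matching driver on its own, so this manual step may not be needed.</p>

<pre><code>https://chromedriver.storage.googleapis.com/index.html
</code></pre>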

<p>Basic usage</p>

<pre><code class="prism language-python">from selenium import webdriver
from selenium.webdriver.chrome.service import Service
from selenium.webdriver.common.by import By
from time import sleep

url = 'https://www.lagou.com'

# path = Service('./chromedriver.exe')

# Keep the browser open after the script finishes
option = webdriver.ChromeOptions()
option.add_experimental_option("detach", True)

# Create the browser object
driver = webdriver.Chrome(options=option)
# driver = webdriver.Chrome()

# Maximize the window
# driver.maximize_window()

# Minimize the window
# driver.minimize_window()

# Open the URL
driver.get(url)

# Get the content of the page's title tag
# print(driver.title)

# Find an element by XPath
btn = driver.find_element(by=By.XPATH, value='//*[@id="cboxClose"]')
# <button type="button" id="cboxClose">close</button>

# Read its text and its type attribute; prints: close button
print(btn.text, btn.get_attribute('type'))

# Click the button
btn.click()
sleep(2)

# Find an element by id
search_btn = driver.find_element(by=By.ID, value='search_input')

# Type text into the input
search_btn.send_keys('python')
sleep(1)

# Clear the input
search_btn.clear()

# Run JavaScript in the page to remove an element (skipped if it is not present)
driver.execute_script("""
const ad = document.querySelector('.un-login-banner')
if (ad) {
    ad.parentNode.removeChild(ad)
}
""")

# Switch windows: handles are indexed from 0, and -1 is the last one
# driver.switch_to.window(driver.window_handles[-1])
# driver.switch_to.window(driver.window_handles[0])

# Close the current window
# driver.close()

# Quit the browser
# driver.quit()
</code></pre>

<p>Headless browser</p>

<pre><code class="prism language-python"># Headless mode: run Chrome without opening a visible window
option.add_argument("--headless")
option.add_argument("--disable-gpu")

# Create the browser object
driver = webdriver.Chrome(options=option)
</code></pre>

<p>iframe</p>

<pre><code class="prism language-python"># Switch into an iframe: locate the iframe element, then switch to it
# iframe = driver.find_element(by=By.ID, value='search_button')
# driver.switch_to.frame(iframe)

# Leave the iframe and return to the parent frame
# driver.switch_to.parent_frame()
</code></pre>

<pre><code class="prism language-python"># Get the page HTML as rendered in the browser (what the F12 dev tools show), not the original source
# driver.page_source
</code></pre>

</div>

</div>

</div>

</div>

</div>


</div>


</div>

</div>

</div>

<div class="container">


<div id="paradigm-article-related">

<div class="recommend-post mb30">

<ul class="widget-links">


<li><a href="/article/1943968314044772352.htm"

title="Python多版本管理与pip升级全攻略:解决冲突与高效实践" target="_blank">Python多版本管理与pip升级全攻略:解决冲突与高效实践</a>

<span class="text-muted">码界奇点</span>

<a class="tag" taget="_blank" href="/search/Python/1.htm">Python</a><a class="tag" taget="_blank" href="/search/python/1.htm">python</a><a class="tag" taget="_blank" href="/search/pip/1.htm">pip</a><a class="tag" taget="_blank" href="/search/%E5%BC%80%E5%8F%91%E8%AF%AD%E8%A8%80/1.htm">开发语言</a><a class="tag" taget="_blank" href="/search/python3.11/1.htm">python3.11</a><a class="tag" taget="_blank" href="/search/%E6%BA%90%E4%BB%A3%E7%A0%81%E7%AE%A1%E7%90%86/1.htm">源代码管理</a><a class="tag" taget="_blank" href="/search/%E8%99%9A%E6%8B%9F%E7%8E%B0%E5%AE%9E/1.htm">虚拟现实</a><a class="tag" taget="_blank" href="/search/%E4%BE%9D%E8%B5%96%E5%80%92%E7%BD%AE%E5%8E%9F%E5%88%99/1.htm">依赖倒置原则</a>

<div>引言Python作为最流行的编程语言之一,其版本迭代速度与生态碎片化给开发者带来了巨大挑战。据统计,超过60%的Python开发者需要同时维护基于Python3.6+和Python2.7的项目。本文将系统解决以下核心痛点:如何安全地在同一台机器上管理多个Python版本pip依赖冲突的根治方案符合PEP标准的生产环境最佳实践第一部分:Python多版本管理核心方案1.1系统级多版本共存方案Wind</div>

</li>

<li><a href="/article/1943967555555225600.htm"

title="基于Python的健身数据分析工具的搭建流程day1" target="_blank">基于Python的健身数据分析工具的搭建流程day1</a>

<span class="text-muted">weixin_45677320</span>

<a class="tag" taget="_blank" href="/search/python/1.htm">python</a><a class="tag" taget="_blank" href="/search/%E5%BC%80%E5%8F%91%E8%AF%AD%E8%A8%80/1.htm">开发语言</a><a class="tag" taget="_blank" href="/search/%E6%95%B0%E6%8D%AE%E6%8C%96%E6%8E%98/1.htm">数据挖掘</a><a class="tag" taget="_blank" href="/search/%E7%88%AC%E8%99%AB/1.htm">爬虫</a>

<div>基于Python的健身数据分析工具的搭建流程分数据挖掘、数据存储和数据分析三个步骤。本文主要介绍利用Python实现健身数据分析工具的数据挖掘部分。第一步:加载库加载本文需要的库,如下代码所示。若库未安装,请按照python如何安装各种库(保姆级教程)_python安装库-CSDN博客https://blog.csdn.net/aobulaien001/article/details/133298</div>

</li>

<li><a href="/article/1943952054795956224.htm"

title="seaborn又一个扩展heatmapz" target="_blank">seaborn又一个扩展heatmapz</a>

<span class="text-muted">qq_21478261</span>

<a class="tag" taget="_blank" href="/search/%23/1.htm">#</a><a class="tag" taget="_blank" href="/search/Python%E5%8F%AF%E8%A7%86%E5%8C%96/1.htm">Python可视化</a><a class="tag" taget="_blank" href="/search/matplotlib/1.htm">matplotlib</a>

<div>推荐阅读:Pythonmatplotlib保姆级教程嫌Matplotlib繁琐?试试Seaborn!</div>

</li>

<li><a href="/article/1943951549751422976.htm"

title="NGS测序基础梳理01-文库构建(Library Preparation)" target="_blank">NGS测序基础梳理01-文库构建(Library Preparation)</a>

<span class="text-muted">qq_21478261</span>

<a class="tag" taget="_blank" href="/search/%23/1.htm">#</a><a class="tag" taget="_blank" href="/search/%E7%94%9F%E7%89%A9%E4%BF%A1%E6%81%AF/1.htm">生物信息</a><a class="tag" taget="_blank" href="/search/%E7%94%9F%E7%89%A9%E5%AD%A6/1.htm">生物学</a>

<div>本文介绍Illumina测序平台文库构建(LibraryPreparation)步骤,文库结构。写作时间:2020.05。推荐阅读:10W字《Python可视化教程1.0》来了!一份由公众号「pythonic生物人」精心制作的PythonMatplotlib可视化系统教程,105页PDFhttps://mp.weixin.qq.com/s/QaSmucuVsS_DR-klfpE3-Q10W字《Rg</div>

</li>

<li><a href="/article/1943943735205228544.htm"

title="Python 常用内置函数详解(七):dir()函数——获取当前本地作用域中的名称列表或对象的有效属性列表" target="_blank">Python 常用内置函数详解(七):dir()函数——获取当前本地作用域中的名称列表或对象的有效属性列表</a>

<span class="text-muted"></span>

<div>目录一、功能二、语法和示例一、功能dir()函数获取当前本地作用域中的名称列表或对象的有效属性列表。二、语法和示例dir()函数有两种形式,如果没有实参,则返回当前本地作用域中的名称列表。如果有实参,它会尝试返回该对象的有效属性列表。如果对象有一个名为__dir__()的方法,那么该方法将被调用,并且必须返回一个属性列表。dir()函数的语法格式如下:C:\Users\amoxiang>ipyth</div>

</li>

<li><a href="/article/1943941718281875456.htm"

title="pythonjson中list操作_Python json.dumps 特殊数据类型的自定义序列化操作" target="_blank">pythonjson中list操作_Python json.dumps 特殊数据类型的自定义序列化操作</a>

<span class="text-muted"></span>

<div>场景描述:Python标准库中的json模块,集成了将数据序列化处理的功能;在使用json.dumps()方法序列化数据时候,如果目标数据中存在datetime数据类型,执行操作时,会抛出异常:TypeError:datetime.datetime(2016,12,10,11,04,21)isnotJSONserializable那么遇到json.dumps序列化不支持的数据类型,该怎么办!首先,</div>

</li>

<li><a href="/article/1943940206172368896.htm"

title="Python 日期格式转json.dumps的解决方法" target="_blank">Python 日期格式转json.dumps的解决方法</a>

<span class="text-muted">douyaoxin</span>

<a class="tag" taget="_blank" href="/search/python/1.htm">python</a><a class="tag" taget="_blank" href="/search/json/1.htm">json</a><a class="tag" taget="_blank" href="/search/%E5%BC%80%E5%8F%91%E8%AF%AD%E8%A8%80/1.htm">开发语言</a>

<div>classDateEncoder(json.JSONEncoder):defdefault(self,obj):ifisinstance(obj,datetime.datetime):returnobj.strftime('%Y-%m-%d%H:%M:%S')elifisinstance(obj,datetime.date):returnobj.strftime("%Y-%m-%d")json.d</div>

</li>

<li><a href="/article/1943934034132398080.htm"

title="Python 爬虫实战:视频平台播放量实时监控(含反爬对抗与数据趋势预测)" target="_blank">Python 爬虫实战:视频平台播放量实时监控(含反爬对抗与数据趋势预测)</a>

<span class="text-muted">西攻城狮北</span>

<a class="tag" taget="_blank" href="/search/python/1.htm">python</a><a class="tag" taget="_blank" href="/search/%E7%88%AC%E8%99%AB/1.htm">爬虫</a><a class="tag" taget="_blank" href="/search/%E9%9F%B3%E8%A7%86%E9%A2%91/1.htm">音视频</a>

<div>一、引言在数字内容蓬勃发展的当下,视频平台的播放量数据已成为内容创作者、营销人员以及行业分析师手中极为关键的情报资源。它不仅能够实时反映内容的受欢迎程度,更能在竞争分析、营销策略制定以及内容优化等方面发挥不可估量的作用。然而,视频平台为了保护自身数据和用户隐私,往往会设置一系列反爬虫机制,对数据爬取行为进行限制。这就向我们发起了挑战:如何巧妙地突破这些限制,同时精准地捕捉并预测播放量的动态变化趋势</div>

</li>

<li><a href="/article/1943933655697125376.htm"

title="Python技能手册 - 模块module" target="_blank">Python技能手册 - 模块module</a>

<span class="text-muted">金色牛神</span>

<a class="tag" taget="_blank" href="/search/Python/1.htm">Python</a><a class="tag" taget="_blank" href="/search/python/1.htm">python</a><a class="tag" taget="_blank" href="/search/windows/1.htm">windows</a><a class="tag" taget="_blank" href="/search/%E5%BC%80%E5%8F%91%E8%AF%AD%E8%A8%80/1.htm">开发语言</a>

<div>系列Python常用技能手册-基础语法Python常用技能手册-模块modulePython常用技能手册-包package目录module模块指什么typing数据类型int整数float浮点数str字符串bool布尔值TypeVar类型变量functools高阶函数工具functools.partial()函数偏置functools.lru_cache()函数缓存sorted排序列表排序元组排序</div>

</li>

<li><a href="/article/1943930629099941888.htm"

title="Ubuntu基础(Python虚拟环境和Vue)" target="_blank">Ubuntu基础(Python虚拟环境和Vue)</a>

<span class="text-muted">aaiier</span>

<a class="tag" taget="_blank" href="/search/ubuntu/1.htm">ubuntu</a><a class="tag" taget="_blank" href="/search/python/1.htm">python</a><a class="tag" taget="_blank" href="/search/linux/1.htm">linux</a>

<div>Python虚拟环境sudoaptinstallpython3python3-venv进入项目目录cdXXX创建虚拟环境python3-mvenvvenv激活虚拟环境sourcevenv/bin/activate退出虚拟环境deactivateVue安装Node.js和npm#安装Node.js和npm(Ubuntu默认仓库可能版本较旧,适合入门)sudoaptinstallnodejsnpm#验</div>

</li>

<li><a href="/article/1943930421968433152.htm"

title="苦练Python第9天:if-else分支九剑" target="_blank">苦练Python第9天:if-else分支九剑</a>

<span class="text-muted"></span>

<a class="tag" taget="_blank" href="/search/python%E5%90%8E%E7%AB%AF%E5%89%8D%E7%AB%AF%E4%BA%BA%E5%B7%A5%E6%99%BA%E8%83%BD/1.htm">python后端前端人工智能</a>

<div>苦练Python第9天:if-else分支九剑前言大家好,我是倔强青铜三。是一名热情的软件工程师,我热衷于分享和传播IT技术,致力于通过我的知识和技能推动技术交流与创新,欢迎关注我,微信公众号:倔强青铜三。欢迎点赞、收藏、关注,一键三连!!!欢迎来到100天Python挑战第9天!今天我们不练循环,改磨“分支剑法”——ifelse三式:单分支、双分支、多分支,以及嵌套和三元运算符,全部实战演练,让</div>

</li>

<li><a href="/article/1943930420689170432.htm"

title="苦练Python第8天:while 循环之妙用" target="_blank">苦练Python第8天:while 循环之妙用</a>

<span class="text-muted"></span>

<a class="tag" taget="_blank" href="/search/python%E5%90%8E%E7%AB%AF%E5%89%8D%E7%AB%AF%E4%BA%BA%E5%B7%A5%E6%99%BA%E8%83%BD/1.htm">python后端前端人工智能</a>

<div>苦练Python第8天:while循环之妙用原文链接:https://dev.to/therahul_gupta/day-9100-while-loops-with-real-world-examples-528f作者:RahulGupta译者:倔强青铜三前言大家好,我是倔强青铜三。是一名热情的软件工程师,我热衷于分享和传播IT技术,致力于通过我的知识和技能推动技术交流与创新,欢迎关注我,微信公众</div>

</li>

<li><a href="/article/93.htm"

title="java工厂模式" target="_blank">java工厂模式</a>

<span class="text-muted">3213213333332132</span>

<a class="tag" taget="_blank" href="/search/java/1.htm">java</a><a class="tag" taget="_blank" href="/search/%E6%8A%BD%E8%B1%A1%E5%B7%A5%E5%8E%82/1.htm">抽象工厂</a>

<div>工厂模式有

1、工厂方法

2、抽象工厂方法。

下面我的实现是抽象工厂方法,

给所有具体的产品类定一个通用的接口。

package 工厂模式;

/**

* 航天飞行接口

*

* @Description

* @author FuJianyong

* 2015-7-14下午02:42:05

*/

public interface SpaceF</div>

</li>

<li><a href="/article/220.htm"

title="nginx频率限制+python测试" target="_blank">nginx频率限制+python测试</a>

<span class="text-muted">ronin47</span>

<a class="tag" taget="_blank" href="/search/nginx+%E9%A2%91%E7%8E%87+python/1.htm">nginx 频率 python</a>

<div>

部分内容参考:http://www.abc3210.com/2013/web_04/82.shtml

首先说一下遇到这个问题是因为网站被攻击,阿里云报警,想到要限制一下访问频率,而不是限制ip(限制ip的方案稍后给出)。nginx连接资源被吃空返回状态码是502,添加本方案限制后返回599,与正常状态码区别开。步骤如下:</div>

</li>

<li><a href="/article/347.htm"

title="java线程和线程池的使用" target="_blank">java线程和线程池的使用</a>

<span class="text-muted">dyy_gusi</span>

<a class="tag" taget="_blank" href="/search/ThreadPool/1.htm">ThreadPool</a><a class="tag" taget="_blank" href="/search/thread/1.htm">thread</a><a class="tag" taget="_blank" href="/search/Runnable/1.htm">Runnable</a><a class="tag" taget="_blank" href="/search/timer/1.htm">timer</a>

<div>java线程和线程池

一、创建多线程的方式

java多线程很常见,如何使用多线程,如何创建线程,java中有两种方式,第一种是让自己的类实现Runnable接口,第二种是让自己的类继承Thread类。其实Thread类自己也是实现了Runnable接口。具体使用实例如下:

1、通过实现Runnable接口方式 1 2 </div>

</li>

<li><a href="/article/474.htm"

title="Linux" target="_blank">Linux</a>

<span class="text-muted">171815164</span>

<a class="tag" taget="_blank" href="/search/linux/1.htm">linux</a>

<div>ubuntu kernel

http://kernel.ubuntu.com/~kernel-ppa/mainline/v4.1.2-unstable/

安卓sdk代理

mirrors.neusoft.edu.cn 80

输入法和jdk

sudo apt-get install fcitx

su</div>

</li>

<li><a href="/article/601.htm"

title="Tomcat JDBC Connection Pool" target="_blank">Tomcat JDBC Connection Pool</a>

<span class="text-muted">g21121</span>

<a class="tag" taget="_blank" href="/search/Connection/1.htm">Connection</a>

<div> Tomcat7 抛弃了以往的DBCP 采用了新的Tomcat Jdbc Pool 作为数据库连接组件,事实上DBCP已经被Hibernate 所抛弃,因为他存在很多问题,诸如:更新缓慢,bug较多,编译问题,代码复杂等等。

Tomcat Jdbc P</div>

</li>

<li><a href="/article/728.htm"

title="敲代码的一点想法" target="_blank">敲代码的一点想法</a>

<span class="text-muted">永夜-极光</span>

<a class="tag" taget="_blank" href="/search/java/1.htm">java</a><a class="tag" taget="_blank" href="/search/%E9%9A%8F%E7%AC%94/1.htm">随笔</a><a class="tag" taget="_blank" href="/search/%E6%84%9F%E6%83%B3/1.htm">感想</a>

<div> 入门学习java编程已经半年了,一路敲代码下来,现在也才1w+行代码量,也就菜鸟水准吧,但是在整个学习过程中,我一直在想,为什么很多培训老师,网上的文章都是要我们背一些代码?比如学习Arraylist的时候,教师就让我们先参考源代码写一遍,然</div>

</li>

<li><a href="/article/855.htm"

title="jvm指令集" target="_blank">jvm指令集</a>

<span class="text-muted">程序员是怎么炼成的</span>

<a class="tag" taget="_blank" href="/search/jvm+%E6%8C%87%E4%BB%A4%E9%9B%86/1.htm">jvm 指令集</a>

<div>转自:http://blog.csdn.net/hudashi/article/details/7062675#comments

将值推送至栈顶时 const ldc push load指令

const系列

该系列命令主要负责把简单的数值类型送到栈顶。(从常量池或者局部变量push到栈顶时均使用)

0x02 &nbs</div>

</li>

<li><a href="/article/982.htm"

title="Oracle字符集的查看查询和Oracle字符集的设置修改" target="_blank">Oracle字符集的查看查询和Oracle字符集的设置修改</a>

<span class="text-muted">aijuans</span>

<a class="tag" taget="_blank" href="/search/oracle/1.htm">oracle</a>

<div> 本文主要讨论以下几个部分:如何查看查询oracle字符集、 修改设置字符集以及常见的oracle utf8字符集和oracle exp 字符集问题。

一、什么是Oracle字符集

Oracle字符集是一个字节数据的解释的符号集合,有大小之分,有相互的包容关系。ORACLE 支持国家语言的体系结构允许你使用本地化语言来存储,处理,检索数据。它使数据库工具,错误消息,排序次序,日期,时间,货</div>

</li>

<li><a href="/article/1109.htm"

title="png在Ie6下透明度处理方法" target="_blank">png在Ie6下透明度处理方法</a>

<span class="text-muted">antonyup_2006</span>

<a class="tag" taget="_blank" href="/search/css/1.htm">css</a><a class="tag" taget="_blank" href="/search/%E6%B5%8F%E8%A7%88%E5%99%A8/1.htm">浏览器</a><a class="tag" taget="_blank" href="/search/Firebug/1.htm">Firebug</a><a class="tag" taget="_blank" href="/search/IE/1.htm">IE</a>

<div>由于之前到深圳现场支撑上线,当时为了解决个控件下载,我机器上的IE8老报个错,不得以把ie8卸载掉,换个Ie6,问题解决了,今天出差回来,用ie6登入另一个正在开发的系统,遇到了Png图片的问题,当然升级到ie8(ie8自带的开发人员工具调试前端页面JS之类的还是比较方便的,和FireBug一样,呵呵),这个问题就解决了,但稍微做了下这个问题的处理。

我们知道PNG是图像文件存储格式,查询资</div>

</li>

<li><a href="/article/1236.htm"

title="表查询常用命令高级查询方法(二)" target="_blank">表查询常用命令高级查询方法(二)</a>

<span class="text-muted">百合不是茶</span>

<a class="tag" taget="_blank" href="/search/oracle/1.htm">oracle</a><a class="tag" taget="_blank" href="/search/%E5%88%86%E9%A1%B5%E6%9F%A5%E8%AF%A2/1.htm">分页查询</a><a class="tag" taget="_blank" href="/search/%E5%88%86%E7%BB%84%E6%9F%A5%E8%AF%A2/1.htm">分组查询</a><a class="tag" taget="_blank" href="/search/%E8%81%94%E5%90%88%E6%9F%A5%E8%AF%A2/1.htm">联合查询</a>

<div>----------------------------------------------------分组查询 group by having --平均工资和最高工资 select avg(sal)平均工资,max(sal) from emp ; --每个部门的平均工资和最高工资</div>

</li>

<li><a href="/article/1363.htm"

title="uploadify3.1版本参数使用详解" target="_blank">uploadify3.1版本参数使用详解</a>

<span class="text-muted">bijian1013</span>

<a class="tag" taget="_blank" href="/search/JavaScript/1.htm">JavaScript</a><a class="tag" taget="_blank" href="/search/uploadify3.1/1.htm">uploadify3.1</a>

<div>使用:

绑定的界面元素<input id='gallery'type='file'/>$("#gallery").uploadify({设置参数,参数如下});

设置的属性:

id: jQuery(this).attr('id'),//绑定的input的ID

langFile: 'http://ww</div>

</li>

<li><a href="/article/1490.htm"

title="精通Oracle10编程SQL(17)使用ORACLE系统包" target="_blank">精通Oracle10编程SQL(17)使用ORACLE系统包</a>

<span class="text-muted">bijian1013</span>

<a class="tag" taget="_blank" href="/search/oracle/1.htm">oracle</a><a class="tag" taget="_blank" href="/search/%E6%95%B0%E6%8D%AE%E5%BA%93/1.htm">数据库</a><a class="tag" taget="_blank" href="/search/plsql/1.htm">plsql</a>

<div>/*

*使用ORACLE系统包

*/

--1.DBMS_OUTPUT

--ENABLE:用于激活过程PUT,PUT_LINE,NEW_LINE,GET_LINE和GET_LINES的调用

--语法:DBMS_OUTPUT.enable(buffer_size in integer default 20000);

--DISABLE:用于禁止对过程PUT,PUT_LINE,NEW</div>

</li>

<li><a href="/article/1617.htm"

title="【JVM一】JVM垃圾回收日志" target="_blank">【JVM一】JVM垃圾回收日志</a>

<span class="text-muted">bit1129</span>

<a class="tag" taget="_blank" href="/search/%E5%9E%83%E5%9C%BE%E5%9B%9E%E6%94%B6/1.htm">垃圾回收</a>

<div>将JVM垃圾回收的日志记录下来,对于分析垃圾回收的运行状态,进而调整内存分配(年轻代,老年代,永久代的内存分配)等是很有意义的。JVM与垃圾回收日志相关的参数包括:

-XX:+PrintGC

-XX:+PrintGCDetails

-XX:+PrintGCTimeStamps

-XX:+PrintGCDateStamps

-Xloggc

-XX:+PrintGC

通</div>

</li>

<li><a href="/article/1744.htm"

title="Toast使用" target="_blank">Toast使用</a>

<span class="text-muted">白糖_</span>

<a class="tag" taget="_blank" href="/search/toast/1.htm">toast</a>

<div>Android中的Toast是一种简易的消息提示框,toast提示框不能被用户点击,toast会根据用户设置的显示时间后自动消失。

创建Toast

两个方法创建Toast

makeText(Context context, int resId, int duration)

参数:context是toast显示在</div>

</li>

<li><a href="/article/1871.htm"

title="angular.identity" target="_blank">angular.identity</a>

<span class="text-muted">boyitech</span>

<a class="tag" taget="_blank" href="/search/AngularJS/1.htm">AngularJS</a><a class="tag" taget="_blank" href="/search/AngularJS+API/1.htm">AngularJS API</a>

<div>angular.identiy 描述: 返回它第一参数的函数. 此函数多用于函数是编程. 使用方法: angular.identity(value); 参数详解: Param Type Details value

*

to be returned. 返回值: 传入的value 实例代码:

<!DOCTYPE HTML>

</div>

</li>

<li><a href="/article/1998.htm"

title="java-两整数相除,求循环节" target="_blank">java-两整数相除,求循环节</a>

<span class="text-muted">bylijinnan</span>

<a class="tag" taget="_blank" href="/search/java/1.htm">java</a>

<div>

import java.util.ArrayList;

import java.util.List;

public class CircleDigitsInDivision {

/**

* 题目:求循环节,若整除则返回NULL,否则返回char*指向循环节。先写思路。函数原型:char*get_circle_digits(unsigned k,unsigned j)

</div>

</li>

<li><a href="/article/2125.htm"

title="Java 日期 周 年" target="_blank">Java 日期 周 年</a>

<span class="text-muted">Chen.H</span>

<a class="tag" taget="_blank" href="/search/java/1.htm">java</a><a class="tag" taget="_blank" href="/search/C%2B%2B/1.htm">C++</a><a class="tag" taget="_blank" href="/search/c/1.htm">c</a><a class="tag" taget="_blank" href="/search/C%23/1.htm">C#</a>

<div>

/**

* java日期操作(月末、周末等的日期操作)

*

* @author

*

*/

public class DateUtil {

/** */

/**

* 取得某天相加(减)後的那一天

*

* @param date

* @param num

* </div>

</li>

<li><a href="/article/2252.htm"

title="[高考与专业]欢迎广大高中毕业生加入自动控制与计算机应用专业" target="_blank">[高考与专业]欢迎广大高中毕业生加入自动控制与计算机应用专业</a>

<span class="text-muted">comsci</span>

<a class="tag" taget="_blank" href="/search/%E8%AE%A1%E7%AE%97%E6%9C%BA/1.htm">计算机</a>

<div>

不知道现在的高校还设置这个宽口径专业没有,自动控制与计算机应用专业,我就是这个专业毕业的,这个专业的课程非常多,既要学习自动控制方面的课程,也要学习计算机专业的课程,对数学也要求比较高.....如果有这个专业,欢迎大家报考...毕业出来之后,就业的途径非常广.....

以后</div>

</li>

<li><a href="/article/2379.htm"

title="分层查询(Hierarchical Queries)" target="_blank">分层查询(Hierarchical Queries)</a>

<span class="text-muted">daizj</span>

<a class="tag" taget="_blank" href="/search/oracle/1.htm">oracle</a><a class="tag" taget="_blank" href="/search/%E9%80%92%E5%BD%92%E6%9F%A5%E8%AF%A2/1.htm">递归查询</a><a class="tag" taget="_blank" href="/search/%E5%B1%82%E6%AC%A1%E6%9F%A5%E8%AF%A2/1.htm">层次查询</a>

<div>Hierarchical Queries

If a table contains hierarchical data, then you can select rows in a hierarchical order using the hierarchical query clause:

hierarchical_query_clause::=

start with condi</div>

</li>

<li><a href="/article/2506.htm"

title="数据迁移" target="_blank">数据迁移</a>

<span class="text-muted">daysinsun</span>

<a class="tag" taget="_blank" href="/search/%E6%95%B0%E6%8D%AE%E8%BF%81%E7%A7%BB/1.htm">数据迁移</a>

<div>最近公司在重构一个医疗系统,原来的系统是两个.Net系统,现需要重构到java中。数据库分别为SQL Server和Mysql,现需要将数据库统一为Hana数据库,发现了几个问题,但最后通过努力都解决了。

1、原本通过Hana的数据迁移工具把数据是可以迁移过去的,在MySQl里面的字段为TEXT类型的到Hana里面就存储不了了,最后不得不更改为clob。

2、在数据插入的时候有些字段特别长</div>

</li>

<li><a href="/article/2633.htm"

title="C语言学习二进制的表示示例" target="_blank">C语言学习二进制的表示示例</a>

<span class="text-muted">dcj3sjt126com</span>

<a class="tag" taget="_blank" href="/search/c/1.htm">c</a><a class="tag" taget="_blank" href="/search/basic/1.htm">basic</a>

<div>进制的表示示例

# include <stdio.h>

int main(void)

{

int i = 0x32C;

printf("i = %d\n", i);

/*

printf的用法

%d表示以十进制输出

%x或%X表示以十六进制的输出

%o表示以八进制输出

*/

return 0;

}

</div>

</li>

<li><a href="/article/2760.htm"

title="NsTimer 和 UITableViewCell 之间的控制" target="_blank">NsTimer 和 UITableViewCell 之间的控制</a>

<span class="text-muted">dcj3sjt126com</span>

<a class="tag" taget="_blank" href="/search/ios/1.htm">ios</a>

<div>情况是这样的:

一个UITableView, 每个Cell的内容是我自定义的 viewA viewA上面有很多的动画, 我需要添加NSTimer来做动画, 由于TableView的复用机制, 我添加的动画会不断开启, 没有停止, 动画会执行越来越多.

解决办法:

在配置cell的时候开始动画, 然后在cell结束显示的时候停止动画

查找cell结束显示的代理</div>

</li>

<li><a href="/article/2887.htm"

title="MySql中case when then 的使用" target="_blank">MySql中case when then 的使用</a>

<span class="text-muted">fanxiaolong</span>

<a class="tag" taget="_blank" href="/search/casewhenthenend/1.htm">casewhenthenend</a>

<div>select "主键", "项目编号", "项目名称","项目创建时间", "项目状态","部门名称","创建人"

union

(select

pp.id as "主键",

pp.project_number as &</div>

</li>

<li><a href="/article/3014.htm"

title="Ehcache(01)——简介、基本操作" target="_blank">Ehcache(01)——简介、基本操作</a>

<span class="text-muted">234390216</span>

<a class="tag" taget="_blank" href="/search/cache/1.htm">cache</a><a class="tag" taget="_blank" href="/search/ehcache/1.htm">ehcache</a><a class="tag" taget="_blank" href="/search/%E7%AE%80%E4%BB%8B/1.htm">简介</a><a class="tag" taget="_blank" href="/search/CacheManager/1.htm">CacheManager</a><a class="tag" taget="_blank" href="/search/crud/1.htm">crud</a>

<div>Ehcache简介

目录

1 CacheManager

1.1 构造方法构建

1.2 静态方法构建

2 Cache

2.1&</div>

</li>

<li><a href="/article/3141.htm"

title="最容易懂的javascript闭包学习入门" target="_blank">最容易懂的javascript闭包学习入门</a>

<span class="text-muted">jackyrong</span>

<a class="tag" taget="_blank" href="/search/JavaScript/1.htm">JavaScript</a>

<div>http://www.ruanyifeng.com/blog/2009/08/learning_javascript_closures.html

闭包(closure)是Javascript语言的一个难点,也是它的特色,很多高级应用都要依靠闭包实现。

下面就是我的学习笔记,对于Javascript初学者应该是很有用的。

一、变量的作用域

要理解闭包,首先必须理解Javascript特殊</div>

</li>

<li><a href="/article/3268.htm"

title="提升网站转化率的四步优化方案" target="_blank">提升网站转化率的四步优化方案</a>

<span class="text-muted">php教程分享</span>

<a class="tag" taget="_blank" href="/search/%E6%95%B0%E6%8D%AE%E7%BB%93%E6%9E%84/1.htm">数据结构</a><a class="tag" taget="_blank" href="/search/PHP/1.htm">PHP</a><a class="tag" taget="_blank" href="/search/%E6%95%B0%E6%8D%AE%E6%8C%96%E6%8E%98/1.htm">数据挖掘</a><a class="tag" taget="_blank" href="/search/Google/1.htm">Google</a><a class="tag" taget="_blank" href="/search/%E6%B4%BB%E5%8A%A8/1.htm">活动</a>

<div>网站开发完成后,我们在进行网站优化最关键的问题就是如何提高整体的转化率,这也是营销策略里最最重要的方面之一,并且也是网站综合运营实例的结果。文中分享了四大优化策略:调查、研究、优化、评估,这四大策略可以很好地帮助用户设计出高效的优化方案。

PHP开发的网站优化一个网站最关键和棘手的是,如何提高整体的转化率,这是任何营销策略里最重要的方面之一,而提升网站转化率是网站综合运营实力的结果。今天,我就分</div>

</li>

<li><a href="/article/3395.htm"

title="web开发里什么是HTML5的WebSocket?" target="_blank">web开发里什么是HTML5的WebSocket?</a>

<span class="text-muted">naruto1990</span>

<a class="tag" taget="_blank" href="/search/Web/1.htm">Web</a><a class="tag" taget="_blank" href="/search/html5/1.htm">html5</a><a class="tag" taget="_blank" href="/search/%E6%B5%8F%E8%A7%88%E5%99%A8/1.htm">浏览器</a><a class="tag" taget="_blank" href="/search/socket/1.htm">socket</a>

<div>当前火起来的HTML5语言里面,很多学者们都还没有完全了解这语言的效果情况,我最喜欢的Web开发技术就是正迅速变得流行的 WebSocket API。WebSocket 提供了一个受欢迎的技术,以替代我们过去几年一直在用的Ajax技术。这个新的API提供了一个方法,从客户端使用简单的语法有效地推动消息到服务器。让我们看一看6个HTML5教程介绍里 的 WebSocket API:它可用于客户端、服</div>

</li>

<li><a href="/article/3522.htm"

title="Socket初步编程——简单实现群聊" target="_blank">Socket初步编程——简单实现群聊</a>

<span class="text-muted">Everyday都不同</span>

<a class="tag" taget="_blank" href="/search/socket/1.htm">socket</a><a class="tag" taget="_blank" href="/search/%E7%BD%91%E7%BB%9C%E7%BC%96%E7%A8%8B/1.htm">网络编程</a><a class="tag" taget="_blank" href="/search/%E5%88%9D%E6%AD%A5%E8%AE%A4%E8%AF%86/1.htm">初步认识</a>

<div>初次接触到socket网络编程,也参考了网络上众前辈的文章。尝试自己也写了一下,记录下过程吧:

服务端:(接收客户端消息并把它们打印出来)

public class SocketServer {

private List<Socket> socketList = new ArrayList<Socket>();

public s</div>

</li>

<li><a href="/article/3649.htm"

title="面试:Hashtable与HashMap的区别(结合线程)" target="_blank">面试:Hashtable与HashMap的区别(结合线程)</a>

<span class="text-muted">toknowme</span>

<div>昨天去了某钱公司面试,面试过程中被问道

Hashtable与HashMap的区别?当时就是回答了一点,Hashtable是线程安全的,HashMap是线程不安全的,说白了,就是Hashtable是的同步的,HashMap不是同步的,需要额外的处理一下。

今天就动手写了一个例子,直接看代码吧

package com.learn.lesson001;

import java</div>

</li>

<li><a href="/article/3776.htm"

title="MVC设计模式的总结" target="_blank">MVC设计模式的总结</a>

<span class="text-muted">xp9802</span>

<a class="tag" taget="_blank" href="/search/%E8%AE%BE%E8%AE%A1%E6%A8%A1%E5%BC%8F/1.htm">设计模式</a><a class="tag" taget="_blank" href="/search/mvc/1.htm">mvc</a><a class="tag" taget="_blank" href="/search/%E6%A1%86%E6%9E%B6/1.htm">框架</a><a class="tag" taget="_blank" href="/search/IOC/1.htm">IOC</a>

<div>随着Web应用的商业逻辑包含逐渐复杂的公式分析计算、决策支持等,使客户机越

来越不堪重负,因此将系统的商业分离出来。单独形成一部分,这样三层结构产生了。

其中‘层’是逻辑上的划分。

三层体系结构是将整个系统划分为如图2.1所示的结构[3]

(1)表现层(Presentation layer):包含表示代码、用户交互GUI、数据验证。

该层用于向客户端用户提供GUI交互,它允许用户</div>

</li>

</ul>

</div>

</div>

</div>


<footer id="footer" class="mb30 mt30">

<div class="container">

<div class="footBglm">

<a target="_blank" href="/">Home</a> -

<a target="_blank" href="/custom/about.htm">About Us</a> -

<a target="_blank" href="/search/Java/1.htm">Site Search</a> -

<a target="_blank" href="/sitemap.txt">Sitemap</a> -

<a target="_blank" href="/custom/delete.htm">Infringement Complaints</a>

</div>

<div class="copyright">IT知识库 Copyright © 2000-2050 E-COM-NET.COM, All Rights Reserved.

<!-- <a href="https://beian.miit.gov.cn/" rel="nofollow" target="_blank">京ICP备09083238号</a><br>-->

</div>

</div>

</footer>

<!-- Code highlighting -->

<script type="text/javascript" src="/static/syntaxhighlighter/scripts/shCore.js"></script>

<script type="text/javascript" src="/static/syntaxhighlighter/scripts/shLegacy.js"></script>

<script type="text/javascript" src="/static/syntaxhighlighter/scripts/shAutoloader.js"></script>

<link type="text/css" rel="stylesheet" href="/static/syntaxhighlighter/styles/shCoreDefault.css"/>

<script type="text/javascript" src="/static/syntaxhighlighter/src/my_start_1.js"></script>

</body>

</html>