Playing, Rendering, and Parsing MIDI on iOS

What Is MIDI

MIDI: Musical Instrument Digital Interface.

MIDI is sheet music that a computer can understand: a music format that both computers and electronic instruments can process.

MIDI is not an audio signal; it contains no PCM buffers.

A sequencer plays back MIDI events by pairing them with audio data or instruments (the instrument sounds come from a sound library, a SoundFont, which on iOS is usually called a sound bank).
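To make the point that MIDI carries events rather than audio concrete, here is a small plain-Swift sketch that builds raw MIDI 1.0 channel messages by hand. It assumes the standard status bytes 0x90 (Note On) and 0x80 (Note Off), with the channel number in the low four bits; `noteOn` and `noteOff` are hypothetical helper names for illustration, not an iOS API.

```swift
/// Hypothetical helper: a 3-byte MIDI 1.0 Note On message.
/// channel is 0...15; note and velocity are 0...127.
func noteOn(channel: UInt8, note: UInt8, velocity: UInt8) -> [UInt8] {
    precondition(channel < 16 && note < 128 && velocity < 128)
    // Status byte: high nibble 0x9 means Note On, low nibble is the channel
    return [0x90 | channel, note, velocity]
}

/// Hypothetical helper: the matching Note Off message (status high nibble 0x8).
func noteOff(channel: UInt8, note: UInt8, velocity: UInt8 = 0) -> [UInt8] {
    precondition(channel < 16 && note < 128 && velocity < 128)
    return [0x80 | channel, note, velocity]
}

// Middle C (note 60) on channel 0 at velocity 100: three bytes, no audio samples
let message = noteOn(channel: 0, note: 60, velocity: 100)
print(message.map { String($0, radix: 16, uppercase: true) }.joined(separator: " "))
// prints "90 3C 64"
```

A sequencer such as AVAudioSequencer delivers messages like these to an instrument (for example an AVAudioUnitSampler), and the instrument uses its sound bank to turn them into PCM audio.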

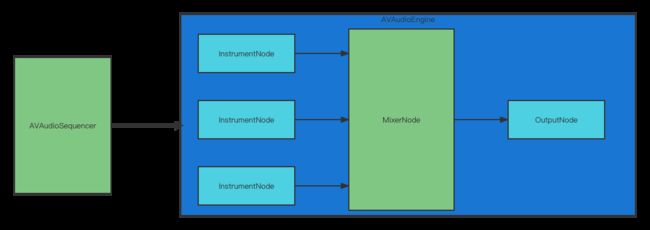

Playing with AVAudioEngine/AVAudioSequencer

Connect AVAudioEngine's input to its output:

input AVAudioUnitMIDIInstrument → mixer engine.mainMixerNode → output engine.outputNode

Once the engine is wired up, create an AVAudioSequencer on it and MIDI can be played.

Configuring the AVAudioEngine Input and Output

```swift
import AVFoundation
import AudioToolbox

let engine = AVAudioEngine()
let sampler = AVAudioUnitSampler() // a subclass of AVAudioUnitMIDIInstrument
engine.attach(sampler)

// An AVAudioNode numbers its input buses and its output buses separately, each from 0.
// Output bus 0 of outputNode reports the hardware output format.
let outputHWFormat = engine.outputNode.outputFormat(forBus: 0)
engine.connect(sampler, to: engine.mainMixerNode, format: outputHWFormat)

// soundFontMuseCoreName: name of the .sf2 sound bank bundled with the app
guard let bankURL = Bundle.main.url(forResource: soundFontMuseCoreName, withExtension: "sf2") else {
    fatalError("\(soundFontMuseCoreName).sf2 file not found.")
}

// Load one instrument (program 0 of the default melodic bank), then start the engine
do {
    try sampler.loadSoundBankInstrument(at: bankURL,
                                        program: 0,
                                        bankMSB: UInt8(kAUSampler_DefaultMelodicBankMSB),
                                        bankLSB: UInt8(kAUSampler_DefaultBankLSB))
    try engine.start()
} catch {
    print(error)
}
```

engine.mainMixerNode is a node that AVAudioEngine provides out of the box. It does the mixing: it has multiple inputs and a single output.

Playing MIDI with AVAudioSequencer

AVAudioSequencer can place different instrument sounds on different tracks, and each element of tracks[index] can be pointed at a different sound-generating node.

```swift
// Keep a strong reference (e.g. a stored property) so the sequencer survives playback
let sequencer = AVAudioSequencer(audioEngine: engine)

guard let fileURL = Bundle.main.url(forResource: "sibeliusGMajor", withExtension: "mid") else {
    fatalError("\"sibeliusGMajor.mid\" file not found.")
}

do {
    // .smfChannelsToTracks: the events of each MIDI channel go onto a separate track
    try sequencer.load(from: fileURL, options: .smfChannelsToTracks)
    print("loaded \(fileURL)")
} catch {
    fatalError("Failed to load the MIDI file:\n\(error)")
}

// Set the destination AudioUnit of every track
for track in sequencer.tracks {
    track.destinationAudioUnit = sampler
}

// The engine must already be running (engine.start() above)
sequencer.prepareToPlay()
do {
    try sequencer.start()
} catch {
    print("\(error)")
}
```

Loading Multiple Sound Banks

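Consider this case: as the product evolves, the sound banks need updates that add new instruments. Shipping the full sound bank with every update costs a lot of bandwidth, so the banks are updated incrementally instead. The client then ends up with several sound banks, yet the code above loads only one bank per playback. Is there a way to play a single MIDI file using several sound banks?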

Yes, there is.

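Look at the data-flow diagram again: AVAudioEngine's mainMixerNode has multiple inputs, so it should be able to accept several input instruments. The code looks something like this: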

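```swift
// Every sampler must be attached to the engine before it can be connected
for synth in [midiSynth0, midiSynth1, midiSynth2, midiSynth3, midiSynth4] {
    engine.attach(synth)
}
// When the destination is a mixer, connect(_:to:format:) uses the mixer's next free input bus
engine.connect(midiSynth0, to: engine.mainMixerNode, format: nil)
engine.connect(midiSynth1, to: engine.mainMixerNode, format: nil)
engine.connect(midiSynth2, to: engine.mainMixerNode, format: nil)
engine.connect(midiSynth3, to: engine.mainMixerNode, format: nil)
engine.connect(midiSynth4, to: engine.mainMixerNode, format: nil)
engine.connect(engine.mainMixerNode, to: engine.outputNode, format: nil)
```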

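Here, assume midiSynth0 to midiSynth4 are five different AVAudioUnitSampler instances, each loaded from a different sound bank (soundBank0 to soundBank4), and we use them to play one MIDI file. We would expect every track's instrument described in the MIDI file to be heard. In practice, only the instruments from the bank loaded into midiSynth0 are rendered. Why?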

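The answer is hidden in the data-flow diagram. Although we created several AVAudioUnitSamplers as input nodes, we never set destinationAudioUnit for the MIDI tracks, so by default every track is sent to midiSynth0. midiSynth0 holds only the sounds from soundBank0, so only soundBank0 gets rendered.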

So how do we fix it? The earlier code already contains the answer, in these lines:

```swift
for track in sequencer.tracks {
    track.destinationAudioUnit = sampler
}
```

Set each track's destinationAudioUnit to the AVAudioUnitSampler that has loaded the sound bank containing that track's instrument. The events on each track then pass through the matching sampler, and all instruments are rendered.
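A sketch of this fix, assuming the caller already knows which bank and which preset each track needs (that mapping, and the names `InstrumentSpec`, `makeSamplers` and `routeTracks`, are hypothetical). Because `loadSoundBankInstrument` loads a single instrument from a bank, the sketch creates one AVAudioUnitSampler per (bank, program) pair and points each track's `destinationAudioUnit` at the matching one.

```swift
import AVFoundation
import AudioToolbox

/// Hypothetical description of one instrument: which bank file and which preset to load.
struct InstrumentSpec {
    let bankURL: URL
    let program: UInt8
    let bankMSB: UInt8
}

/// Attaches one sampler per spec, connects it to the main mixer, and loads its preset.
func makeSamplers(engine: AVAudioEngine, specs: [InstrumentSpec]) throws -> [AVAudioUnitSampler] {
    var samplers: [AVAudioUnitSampler] = []
    for spec in specs {
        let sampler = AVAudioUnitSampler()
        engine.attach(sampler)
        // With a mixer as destination, each connect uses the mixer's next free input bus
        engine.connect(sampler, to: engine.mainMixerNode, format: nil)
        try sampler.loadSoundBankInstrument(at: spec.bankURL,
                                            program: spec.program,
                                            bankMSB: spec.bankMSB,
                                            bankLSB: UInt8(kAUSampler_DefaultBankLSB))
        samplers.append(sampler)
    }
    return samplers
}

/// Routes track i to samplers[trackToSampler[i]]; unmapped or invalid entries use samplers[0].
func routeTracks(of sequencer: AVAudioSequencer,
                 to samplers: [AVAudioUnitSampler],
                 trackToSampler: [Int: Int]) {
    guard !samplers.isEmpty else { return }
    for (index, track) in sequencer.tracks.enumerated() {
        let wanted = trackToSampler[index] ?? 0
        let samplerIndex = samplers.indices.contains(wanted) ? wanted : 0
        track.destinationAudioUnit = samplers[samplerIndex]
    }
}
```

The tracks exist only after `sequencer.load(from:options:)`, so `routeTracks` belongs between loading the file and calling `prepareToPlay()`/`start()`. For a drum track the spec would typically use `UInt8(kAUSampler_DefaultPercussionBankMSB)` as `bankMSB` instead of the melodic bank.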

Rendering a MIDI File to an Audio File

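A MIDI file can be rendered to an audio file such as WAV or CAF. This requires switching AVAudioEngine into offline (manual) rendering mode. The code is as follows: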

```swift
import AVFoundation
import AudioToolbox

/**
 * Renders a MIDI file to an audio file (WAV). This is a time-consuming operation.
 * @param midiPath      path of the MIDI file to render
 * @param audioPath     path of the output audio file
 * @param soundFontPath path of the .sf2 sound bank to render with
 */
@available(iOS 11.0, *)
public func render(midiPath: String, audioPath: String, soundFontPath: String) {
    // Rendering gets its own engine and sequencer, separate from the playback ones
    let renderEngine = AVAudioEngine()
    let renderSampler = AVAudioUnitSampler()
    renderEngine.attach(renderSampler)
    renderEngine.connect(renderSampler, to: renderEngine.mainMixerNode, format: nil)

    let sampleRate = 44100.0
    let maxFrames: AVAudioFrameCount = 4096
    let format = AVAudioFormat(standardFormatWithSampleRate: sampleRate, channels: 2)!
    do {
        try renderSampler.loadSoundBankInstrument(at: URL(fileURLWithPath: soundFontPath),
                                                  program: 0,
                                                  bankMSB: UInt8(kAUSampler_DefaultMelodicBankMSB),
                                                  bankLSB: UInt8(kAUSampler_DefaultBankLSB))
        // Manual rendering must be enabled while the engine is stopped
        try renderEngine.enableManualRenderingMode(.offline, format: format,
                                                   maximumFrameCount: maxFrames)
        try renderEngine.start()
    } catch {
        fatalError("Setting up offline rendering failed: \(error).")
    }

    let renderSequencer = AVAudioSequencer(audioEngine: renderEngine)
    do {
        try renderSequencer.load(from: URL(fileURLWithPath: midiPath), options: .smfChannelsToTracks)
        for track in renderSequencer.tracks {
            track.destinationAudioUnit = renderSampler
        }
        renderSequencer.currentPositionInBeats = 0
        renderSequencer.prepareToPlay()
        try renderSequencer.start()
    } catch {
        fatalError("Cannot load or start the MIDI file: \(error).")
    }

    // The output buffer to which the engine renders the processed data
    let buffer = AVAudioPCMBuffer(pcmFormat: renderEngine.manualRenderingFormat,
                                  frameCapacity: renderEngine.manualRenderingMaximumFrameCount)!

    // Serialized AudioChannelLayout: stereo tag, Left|Right bitmap, no channel descriptions
    let avChannelLayoutKey: [UInt8] = [0x02, 0x00, 0x65, 0x00, 0x03, 0x00, 0x00, 0x00, 0x00, 0x00, 0x00, 0x00]
    // 16-bit interleaved linear PCM ('lpcm' == 1819304813); WAV stores samples little-endian
    let settings: [String: Any] = [
        AVFormatIDKey: kAudioFormatLinearPCM,
        AVSampleRateKey: sampleRate,
        AVNumberOfChannelsKey: 2,
        AVLinearPCMBitDepthKey: 16,
        AVLinearPCMIsFloatKey: false,
        AVLinearPCMIsNonInterleaved: false,
        AVLinearPCMIsBigEndianKey: false,
        AVChannelLayoutKey: Data(avChannelLayoutKey)
    ]
    let outputFile: AVAudioFile
    do {
        outputFile = try AVAudioFile(forWriting: URL(fileURLWithPath: audioPath), settings: settings)
    } catch {
        fatalError("Unable to open output audio file: \(error).")
    }

    // Total frames = length of the longest track in seconds x sample rate
    let longestSeconds = renderSequencer.tracks.map { $0.lengthInSeconds }.max() ?? 0
    let totalFrames = AVAudioFramePosition(longestSeconds * sampleRate)

    while renderEngine.manualRenderingSampleTime < totalFrames {
        let remaining = totalFrames - renderEngine.manualRenderingSampleTime
        let framesToRender = AVAudioFrameCount(min(remaining, AVAudioFramePosition(buffer.frameCapacity)))
        do {
            let status = try renderEngine.renderOffline(framesToRender, to: buffer)
            switch status {
            case .success:
                try outputFile.write(from: buffer)
            case .insufficientDataFromInputNode, .cannotDoInCurrentContext:
                break // not expected here (offline mode, no input node); the loop retries
            case .error:
                fatalError("The manual rendering failed.")
            @unknown default:
                fatalError("Unexpected manual rendering status.")
            }
        } catch {
            fatalError("The manual rendering failed: \(error).")
        }
    }

    renderSequencer.stop()
    renderEngine.stop()
}
```

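Rendering follows the same flow as playback, except that it gets its own AVAudioEngine and AVAudioSequencer instances, and AVAudioEngine's enableManualRenderingMode switches on offline rendering. settings in the code is a dictionary holding the parameters of the output file; the meaning of each key is documented on Apple's developer site. For example, AVSampleRateKey is the sample rate and AVLinearPCMBitDepthKey is the bit depth (bits per sample).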
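The 12-byte avChannelLayoutKey array in the render code is less mysterious than it looks: it is an AudioChannelLayout header laid out as three little-endian 32-bit fields (mChannelLayoutTag, mChannelBitmap, mNumberChannelDescriptions). The plain-Swift sketch below decodes it; `readUInt32LE` is a hypothetical helper. The tag comes out as (101 << 16) | 2, which is the value of kAudioChannelLayoutTag_Stereo, and the bitmap 3 sets the Left (1) and Right (2) channel bits.

```swift
/// Hypothetical helper: reads a little-endian UInt32 starting at `offset`.
func readUInt32LE(_ bytes: [UInt8], at offset: Int) -> UInt32 {
    var value: UInt32 = 0
    for i in 0..<4 {
        value |= UInt32(bytes[offset + i]) << (8 * i)
    }
    return value
}

// The same 12 bytes as avChannelLayoutKey in the render code
let avChannelLayoutKey: [UInt8] = [0x02, 0x00, 0x65, 0x00, 0x03, 0x00, 0x00, 0x00, 0x00, 0x00, 0x00, 0x00]

let tag = readUInt32LE(avChannelLayoutKey, at: 0)              // mChannelLayoutTag
let bitmap = readUInt32LE(avChannelLayoutKey, at: 4)           // mChannelBitmap
let descriptionCount = readUInt32LE(avChannelLayoutKey, at: 8) // mNumberChannelDescriptions

// Core Audio layout tags keep the channel count in the low 16 bits
print(tag >> 16, tag & 0xFFFF, bitmap, descriptionCount)
// prints "101 2 3 0"
```

On Apple platforms the same stereo layout can also be described with `AVAudioChannelLayout(layoutTag: kAudioChannelLayoutTag_Stereo)` instead of hand-written bytes; for a two-channel file, a layout is generally optional.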

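Loop over all the MIDI tracks to find the total frame count, taken from the longest track. Seconds convert to frames as frames = track.lengthInSeconds × sample rate (44100 here); an expression such as track.lengthInSeconds * 1000 * 44100 / 1024 yields only about 97.7% of that and cuts off the end of the piece. Then render all the frames into the audio file, one buffer at a time.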
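The seconds-to-frames arithmetic, and the number of renderOffline calls it implies, can be checked in plain Swift. A sketch: `frameCount` and `renderCalls` are hypothetical helpers, the track lengths are made-up sample values, and 4096 matches the maximumFrameCount passed to enableManualRenderingMode.

```swift
/// Frames needed to cover `seconds` of audio at `sampleRate`, rounded up.
func frameCount(seconds: Double, sampleRate: Double) -> Int64 {
    Int64((seconds * sampleRate).rounded(.up))
}

/// Number of renderOffline calls needed when each call renders at most `maxFrames` frames.
func renderCalls(totalFrames: Int64, maxFrames: Int64) -> Int64 {
    (totalFrames + maxFrames - 1) / maxFrames
}

// Hypothetical track lengths in seconds; the longest track decides the total
let trackLengths = [12.5, 30.0, 29.75]
let total = frameCount(seconds: trackLengths.max() ?? 0, sampleRate: 44100)
print(total, renderCalls(totalFrames: total, maxFrames: 4096))
// prints "1323000 323"
```

The last call renders fewer than 4096 frames, which is why the render loop clamps framesToRender to the frames that remain.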

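Beyond playback and rendering, a MIDI file can also be parsed to read each track and the data that belongs to it, edited, and written back out as a new MIDI file.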
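Parsing does not require AVFoundation. As a starting point, the sketch below reads the container structure defined by the Standard MIDI File format: the 14-byte MThd header (format, number of tracks, time division), followed by MTrk chunks that each carry a 4-byte big-endian length. All names are hypothetical, and decoding the events inside each MTrk (delta times, running status, meta events) is left out.

```swift
struct SMFHeader: Equatable {
    let format: UInt16      // 0, 1 or 2
    let trackCount: UInt16  // number of MTrk chunks declared by the header
    let division: UInt16    // time division, e.g. ticks per quarter note
}

enum SMFError: Error {
    case truncated
    case missingHeader
}

/// Reads `count` bytes starting at `i` as a big-endian unsigned integer.
func readBE(_ bytes: [UInt8], _ i: Int, count: Int) -> UInt32 {
    var value: UInt32 = 0
    for k in 0..<count {
        value = value << 8 | UInt32(bytes[i + k])
    }
    return value
}

/// Parses the MThd header and returns the data length of every MTrk chunk.
func parseSMF(_ bytes: [UInt8]) throws -> (header: SMFHeader, trackLengths: [Int]) {
    guard bytes.count >= 14 else { throw SMFError.truncated }
    guard Array(bytes[0..<4]) == Array("MThd".utf8) else { throw SMFError.missingHeader }
    let headerLength = Int(readBE(bytes, 4, count: 4))   // 6 in standard files
    guard headerLength >= 6, 8 + headerLength <= bytes.count else { throw SMFError.truncated }
    let header = SMFHeader(format: UInt16(readBE(bytes, 8, count: 2)),
                           trackCount: UInt16(readBE(bytes, 10, count: 2)),
                           division: UInt16(readBE(bytes, 12, count: 2)))

    var offset = 8 + headerLength
    var trackLengths: [Int] = []
    while offset + 8 <= bytes.count {
        let chunkID = Array(bytes[offset..<offset + 4])
        let length = Int(readBE(bytes, offset + 4, count: 4))
        guard offset + 8 + length <= bytes.count else { throw SMFError.truncated }
        if chunkID == Array("MTrk".utf8) {
            trackLengths.append(length)   // chunks with other IDs are skipped
        }
        offset += 8 + length
    }
    return (header, trackLengths)
}

// A minimal format-0 file: one track holding only an End of Track meta event
var sample: [UInt8] = Array("MThd".utf8)
sample += [0, 0, 0, 6, 0, 0, 0, 1, 0x01, 0xE0]
sample += Array("MTrk".utf8)
sample += [0, 0, 0, 4, 0x00, 0xFF, 0x2F, 0x00]

let parsed = try! parseSMF(sample)
print(parsed.header.format, parsed.header.trackCount, parsed.header.division, parsed.trackLengths)
// prints "0 1 480 [4]"
```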

The source code is available here: iOS MIDI Playback