[Big Data] Installing Kafka + MySQL + Canal with Docker on M1 Mac and Windows

Contents

- kafka
  - Creating Kafka with docker-compose
  - After the containers start, enter the container and send a test message
  - Problems
    - Exception in thread "main" kafka.zookeeper.ZooKeeperClientTimeoutException: Timed out waiting for connection while in state: CONNECTING
- mysql
  - Creating MySQL with docker-compose
  - Modifying the MySQL configuration
  - Entering the container
  - Problems
    - ERROR 1045 (28000): Access denied for user 'root'@'localhost' (using password: NO)
- canal
  - Creating the canal user in MySQL
  - Creating canal with docker-compose
    - Problem: creating canal with Docker on Windows fails with "canal-server exited 139" because the canal image is based on CentOS 6, which is too old, so I had to give up on it
  - Creating canal with docker

kafka

Creating Kafka with docker-compose

docker-compose.yml

version: "2.2"
services:
  zookeeper:
    image: 'bitnami/zookeeper:latest'
    ports:
      - '2181:2181'
    environment:
      # Anonymous login; must be enabled
      - ALLOW_ANONYMOUS_LOGIN=yes
    #volumes:
      #- ./zookeeper:/bitnami/zookeeper
  # For the configuration options of this image, see https://github.com/bitnami/bitnami-docker-kafka/blob/master/README.md
  kafka:
    image: 'bitnami/kafka:2.8.0'
    container_name: 'small-kafka'
    ports:
      - '9092:9092'
      - '9999:9095'
    environment:
      - KAFKA_BROKER_ID=1
      - KAFKA_CFG_LISTENERS=PLAINTEXT://:9092
      # Address that clients connect to; replace it with your own
      - KAFKA_CFG_ADVERTISED_LISTENERS=PLAINTEXT://192.168.1.24:9092
      - KAFKA_CFG_ZOOKEEPER_CONNECT=zookeeper:2181
      # Allow the PLAINTEXT protocol (disabled by default in this image, must be enabled manually)
      - ALLOW_PLAINTEXT_LISTENER=yes
      # Disable automatic topic creation
      - KAFKA_CFG_AUTO_CREATE_TOPICS_ENABLE=false
      # Global message retention of 6 hours (can be set shorter for testing)
      - KAFKA_CFG_LOG_RETENTION_HOURS=6
      # Enable JMX monitoring
      # - JMX_PORT=9999
    #volumes:
      #- ./kafka:/bitnami/kafka
    depends_on:
      - zookeeper
  # Web management UI. Monitoring with exporter + prometheus + grafana is another option: https://github.com/danielqsj/kafka_exporter
  kafka_manager:
    image: 'hlebalbau/kafka-manager:latest'
    ports:
      - "9000:9000"
    environment:
      ZK_HOSTS: "zookeeper:2181"
      APPLICATION_SECRET: letmein
    depends_on:
      - zookeeper
      - kafka

Run the following in cmd:

cd C:\wxy\docker-test\kafka

docker-compose up -d

After the containers start, enter the container and send a test message

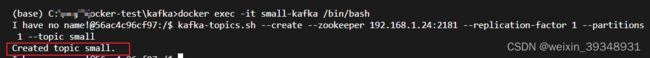

docker exec -it small-kafka /bin/bash

kafka-topics.sh --create --zookeeper XXX.XXX.XXX.XXX:2181 --replication-factor 1 --partitions 1 --topic canaltopic

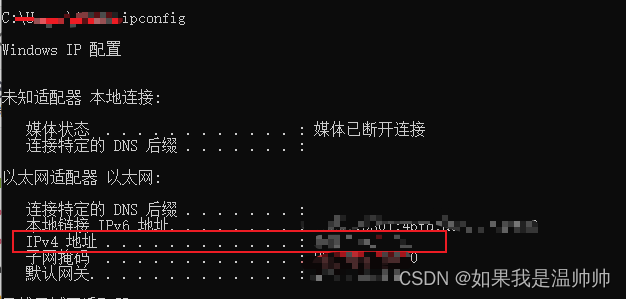

XXX.XXX.XXX.XXX is your machine's IP address. To find it, open cmd and run:

ipconfig

Open the Kafka management UI in a browser:

http://localhost:9000/

Problems

Exception in thread "main" kafka.zookeeper.ZooKeeperClientTimeoutException: Timed out waiting for connection while in state: CONNECTING

Exception in thread "main" kafka.zookeeper.ZooKeeperClientTimeoutException: Timed out waiting for connection while in state: CONNECTING

The fix is the IP address change described above: pass your machine's actual IP address to --zookeeper instead of the placeholder.
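Before retrying kafka-topics.sh, it can help to confirm that the ZooKeeper address is reachable at all. The following is a minimal sketch, assuming Python 3 is available on the machine; `can_connect` is a hypothetical helper name, not part of Kafka or ZooKeeper, and it only tests that a TCP connection can be opened.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port opens within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, unreachable hosts, and timeouts.
        return False
```

For example, `can_connect("192.168.1.24", 2181)` checks the ZooKeeper port published in docker-compose.yml; replace the address with the one reported by ipconfig. A False result suggests the IP or port is wrong, which matches the CONNECTING timeout above.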

mysql

Creating MySQL with docker-compose

version: '3.3'
services:
  mysql:
    image: mysql:latest  # image to use
    restart: always
    hostname: mysql
    container_name: mysql
    privileged: true
    ports:
      - 3306:3306
    environment:
      MYSQL_ROOT_PASSWORD: 123456
    volumes:
      - /docker-compose/data/:/var/lib/mysql/
    command: --default-authentication-plugin=mysql_native_password --lower-case-table-names=1

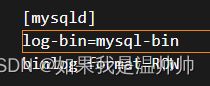

Modifying the MySQL configuration

Edit the configuration file in VS:

# Enable binlog
log-bin=mysql-bin
# Use ROW format
binlog-format=ROW
# Required for MySQL replication; must not be the same as canal's slaveId
server_id=1
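A quick way to double-check these three settings is to parse the configuration text and report what is missing. This is only a sketch under assumptions: it assumes the options sit under a `[mysqld]` section (the snippet above does not show the section header), and `check_binlog_config` is a hypothetical helper, not a MySQL or canal tool.

```python
import configparser


def check_binlog_config(cnf_text: str) -> dict:
    """Report whether the [mysqld] section contains the settings canal relies on."""
    parser = configparser.ConfigParser(
        allow_no_value=True, strict=False, interpolation=None
    )
    parser.read_string(cnf_text)
    options = {}
    if parser.has_section("mysqld"):
        for key, value in parser.items("mysqld"):
            # MySQL accepts '-' and '_' interchangeably in option names.
            options[key.replace("_", "-")] = value
    return {
        "log-bin": "log-bin" in options,
        "binlog-format": (options.get("binlog-format") or "").upper() == "ROW",
        "server-id": (options.get("server-id") or "").isdigit(),
    }


sample = "[mysqld]\nlog-bin=mysql-bin\nbinlog-format=ROW\nserver_id=1\n"
print(check_binlog_config(sample))
```

Every value in the returned dictionary should be True before moving on to canal.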

Entering the container

docker exec -it mysql /bin/bash

mysql -u root

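Problems

ERROR 1045 (28000): Access denied for user 'root'@'localhost' (using password: NO)

ERROR 1045 (28000): Access denied for user 'root'@'localhost' (using password: NO)

Solution: add the following line to the MySQL configuration file and restart the container, so that the login below works without a password:

skip-grant-tables

docker exec -it mysql /bin/bash

mysql -u root

mysql --version

The version is 8.0: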

bash-4.4# mysql --version

mysql Ver 8.0.31 for Linux on x86_64 (MySQL Community Server - GPL)

On MySQL 8.0, change the password as follows:

mysql -u root

This opens the mysql prompt:

mysql> use mysql

Reading table information for completion of table and column names

You can turn off this feature to get a quicker startup with -A

Database changed

mysql> update user set authentication_string ='' where user = 'root';

Query OK, 2 rows affected (0.03 sec)

Rows matched: 2 Changed: 2 Warnings: 0

mysql> alter user 'root'@'localhost' identified by '123456';

ERROR 1290 (HY000): The MySQL server is running with the --skip-grant-tables option so it cannot execute this statement

mysql> FLUSH PRIVILEGES

-> ;

Query OK, 0 rows affected (0.02 sec)

mysql> alter user 'root'@'localhost' identified by '123456';

Query OK, 0 rows affected (0.01 sec)

mysql> FLUSH PRIVILEGES

-> ;

Query OK, 0 rows affected (0.01 sec)

Remove skip-grant-tables, restart the container, and log in again:

(base) C:\wxy\docker-test\mysql>docker exec -it mysql /bin/bash\

OCI runtime exec failed: exec failed: container_linux.go:380: starting container process caused: exec: "/bin/bash\\": stat /bin/bash\: no such file or directory: unknown

(base) C:\wxy\docker-test\mysql>docker exec -it mysql /bin/bash

bash-4.4# mysql -u root

ERROR 1045 (28000): Access denied for user 'root'@'localhost' (using password: NO)

bash-4.4# mysql -u root -p

Enter password:

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 9

Server version: 8.0.31 MySQL Community Server - GPL

Copyright (c) 2000, 2022, Oracle and/or its affiliates.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql>

canal

Creating the canal user in MySQL

mysql> CREATE USER canal IDENTIFIED BY '123456';

Query OK, 0 rows affected (0.06 sec)

mysql> GRANT SELECT , REPLICATION SLAVE, REPLICATION CLIENT ON *.* to 'canal'@'%';

Query OK, 0 rows affected (0.01 sec)

mysql> FLUSH PRIVILEGES;

Query OK, 0 rows affected (0.00 sec)

mysql> drop database if exists data_pipe

-> ;

Query OK, 0 rows affected, 1 warning (0.01 sec)

mysql> create database if not exists data_pipe default character set utf8mb4 collate utf8mb4_unicode_ci;

Query OK, 1 row affected (0.00 sec)

mysql> user data_pipe

-> ;

ERROR 1064 (42000): You have an error in your SQL syntax; check the manual that corresponds to your MySQL server version for the right syntax to use near 'user data_pipe' at line 1

mysql> use data_pipe;

Database changed

mysql> create table job_info (a,b,c)

-> ;

ERROR 1064 (42000): You have an error in your SQL syntax; check the manual that corresponds to your MySQL server version for the right syntax to use near ',b,c)' at line 1

mysql> create table job_info (id int(11),name varchar(25))

-> ;

Query OK, 0 rows affected, 1 warning (0.02 sec)

mysql> insert into job_info(id,name) values(1,1);

Query OK, 1 row affected (0.02 sec)

mysql> insert into job_info(id,name) values(2,2);

Query OK, 1 row affected (0.00 sec)

mysql> show master status;
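The File and Position columns reported by `show master status` are what go into `canal.instance.master.journal.name` and `canal.instance.master.position` in instance.properties. As a rough sketch, the table printed by the mysql client can be turned into those two lines as follows. The helper names are hypothetical, and the sample table is hand-written from the values used later in this post (mysql-bin.000003, 2147), not captured output.

```python
def parse_master_status(table_text: str) -> dict:
    """Map column names to the first data row of a mysql CLI result table."""
    rows = [
        [cell.strip() for cell in line.strip().strip("|").split("|")]
        for line in table_text.splitlines()
        if line.strip().startswith("|")  # skip the +----+ border lines
    ]
    header, first_row = rows[0], rows[1]
    return dict(zip(header, first_row))


def position_properties(status: dict) -> str:
    """Format the two position lines for canal's instance.properties."""
    return (
        f"canal.instance.master.journal.name={status['File']}\n"
        f"canal.instance.master.position={status['Position']}"
    )


# Hand-written sample table (assumed layout of the mysql client output).
sample = """
+------------------+----------+--------------+------------------+-------------------+
| File             | Position | Binlog_Do_DB | Binlog_Ignore_DB | Executed_Gtid_Set |
+------------------+----------+--------------+------------------+-------------------+
| mysql-bin.000003 |     2147 |              |                  |                   |
+------------------+----------+--------------+------------------+-------------------+
"""
print(position_properties(parse_master_status(sample)))
```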

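Creating canal with docker-compose

Problem: creating canal with Docker on Windows fails with "canal-server exited 139". The cause is that the canal image is based on CentOS 6, which is too old, so I had to give up on this approach.

Workaround: set up canal on a separate Mac and connect it to the MySQL and Kafka instances running on the Windows machine. A roundabout route, QAQ.

Creating canal with docker

docker run canal/canal-server:v1.1.5

docker exec -it <your-container-name> /bin/bash

Edit canal.properties: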

#################################################

######### common argument #############

#################################################

# tcp bind ip

canal.ip =

# register ip to zookeeper

canal.register.ip =

canal.port = 11111

canal.metrics.pull.port = 11112

# canal instance user/passwd

# canal.user = canal

# canal.passwd = E3619321C1A937C46A0D8BD1DAC39F93B27D4458

# canal admin config

#canal.admin.manager = 127.0.0.1:8089

canal.admin.port = 11110

canal.admin.user = admin

canal.admin.passwd = 4ACFE3202A5FF5CF467898FC58AAB1D615029441

# admin auto register

#canal.admin.register.auto = true

#canal.admin.register.cluster =

#canal.admin.register.name =

canal.zkServers =

# flush data to zk

canal.zookeeper.flush.period = 1000

canal.withoutNetty = false

# tcp, kafka, rocketMQ, rabbitMQ

canal.serverMode = kafka

# flush meta cursor/parse position to file

canal.file.data.dir = ${canal.conf.dir}

canal.file.flush.period = 1000

## memory store RingBuffer size, should be Math.pow(2,n)

canal.instance.memory.buffer.size = 16384

## memory store RingBuffer used memory unit size , default 1kb

canal.instance.memory.buffer.memunit = 1024

## meory store gets mode used MEMSIZE or ITEMSIZE

canal.instance.memory.batch.mode = MEMSIZE

canal.instance.memory.rawEntry = true

## detecing config

canal.instance.detecting.enable = false

#canal.instance.detecting.sql = insert into retl.xdual values(1,now()) on duplicate key update x=now()

canal.instance.detecting.sql = select 1

canal.instance.detecting.interval.time = 3

canal.instance.detecting.retry.threshold = 3

canal.instance.detecting.heartbeatHaEnable = false

# support maximum transaction size, more than the size of the transaction will be cut into multiple transactions delivery

canal.instance.transaction.size = 1024

# mysql fallback connected to new master should fallback times

canal.instance.fallbackIntervalInSeconds = 60

# network config

canal.instance.network.receiveBufferSize = 16384

canal.instance.network.sendBufferSize = 16384

canal.instance.network.soTimeout = 30

# binlog filter config

canal.instance.filter.druid.ddl = true

canal.instance.filter.query.dcl = false

canal.instance.filter.query.dml = false

canal.instance.filter.query.ddl = false

canal.instance.filter.table.error = false

canal.instance.filter.rows = false

canal.instance.filter.transaction.entry = false

canal.instance.filter.dml.insert = false

canal.instance.filter.dml.update = false

canal.instance.filter.dml.delete = false

# binlog format/image check

canal.instance.binlog.format = ROW,STATEMENT,MIXED

canal.instance.binlog.image = FULL,MINIMAL,NOBLOB

# binlog ddl isolation

canal.instance.get.ddl.isolation = false

# parallel parser config

canal.instance.parser.parallel = true

## concurrent thread number, default 60% available processors, suggest not to exceed Runtime.getRuntime().availableProcessors()

#canal.instance.parser.parallelThreadSize = 16

## disruptor ringbuffer size, must be power of 2

canal.instance.parser.parallelBufferSize = 256

# table meta tsdb info

canal.instance.tsdb.enable = true

canal.instance.tsdb.dir = ${canal.file.data.dir:../conf}/${canal.instance.destination:}

canal.instance.tsdb.url = jdbc:h2:${canal.instance.tsdb.dir}/h2;CACHE_SIZE=1000;MODE=MYSQL;

canal.instance.tsdb.dbUsername = canal

canal.instance.tsdb.dbPassword = canal

# dump snapshot interval, default 24 hour

canal.instance.tsdb.snapshot.interval = 24

# purge snapshot expire , default 360 hour(15 days)

canal.instance.tsdb.snapshot.expire = 360

#################################################

######### destinations #############

#################################################

canal.destinations = data_pipe

# conf root dir

canal.conf.dir = ../conf

# auto scan instance dir add/remove and start/stop instance

canal.auto.scan = true

canal.auto.scan.interval = 5

# set this value to 'true' means that when binlog pos not found, skip to latest.

# WARN: pls keep 'false' in production env, or if you know what you want.

canal.auto.reset.latest.pos.mode = false

canal.instance.tsdb.spring.xml = classpath:spring/tsdb/h2-tsdb.xml

#canal.instance.tsdb.spring.xml = classpath:spring/tsdb/mysql-tsdb.xml

canal.instance.global.mode = spring

canal.instance.global.lazy = false

canal.instance.global.manager.address = ${canal.admin.manager}

#canal.instance.global.spring.xml = classpath:spring/memory-instance.xml

canal.instance.global.spring.xml = classpath:spring/file-instance.xml

#canal.instance.global.spring.xml = classpath:spring/default-instance.xml

##################################################

######### MQ Properties #############

##################################################

# aliyun ak/sk , support rds/mq

canal.aliyun.accessKey =

canal.aliyun.secretKey =

canal.aliyun.uid=

canal.mq.flatMessage = true

canal.mq.canalBatchSize = 50

canal.mq.canalGetTimeout = 100

# Set this value to "cloud", if you want open message trace feature in aliyun.

canal.mq.accessChannel = local

canal.mq.database.hash = true

canal.mq.send.thread.size = 30

canal.mq.build.thread.size = 8

##################################################

######### Kafka #############

##################################################

kafka.bootstrap.servers = 192.168.1.24:9092

kafka.acks = all

kafka.compression.type = none

kafka.batch.size = 16384

kafka.linger.ms = 1

kafka.max.request.size = 1048576

kafka.buffer.memory = 33554432

kafka.max.in.flight.requests.per.connection = 1

kafka.retries = 0

kafka.kerberos.enable = false

kafka.kerberos.krb5.file = "../conf/kerberos/krb5.conf"

kafka.kerberos.jaas.file = "../conf/kerberos/jaas.conf"
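With `canal.mq.flatMessage = true`, canal publishes each change to Kafka as a JSON text message, which a consumer can decode with any JSON library. The sketch below shows one way to pull the change type, table, and rows out of such a message. The sample payload is hand-written for illustration and contains only a few fields, so treat its exact shape as an assumption; `summarize_flat_message` is a hypothetical helper.

```python
import json


def summarize_flat_message(raw: str) -> list:
    """Turn one flat-message JSON string into (type, 'db.table', row) tuples."""
    message = json.loads(raw)
    target = f"{message['database']}.{message['table']}"
    # 'data' can be null (for example on DDL events), so fall back to an empty list.
    return [(message["type"], target, row) for row in message.get("data") or []]


# Hand-written sample, NOT captured from a real canal run.
sample = json.dumps({
    "database": "data_pipe",
    "table": "job_info",
    "type": "INSERT",
    "isDdl": False,
    "data": [{"id": "1", "name": "1"}],
})
print(summarize_flat_message(sample))
```

In a real consumer, `raw` would be the value of a record read from the `canaltopic` topic.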

Edit instance.properties:

#################################################

## mysql serverId , v1.0.26+ will autoGen

# canal.instance.mysql.slaveId=0

# enable gtid use true/false

canal.instance.gtidon=false

# position info

canal.instance.master.address=192.168.1.24:3306

canal.instance.master.journal.name=mysql-bin.000003

canal.instance.master.position=2147

canal.instance.master.timestamp=

canal.instance.master.gtid=

# rds oss binlog

canal.instance.rds.accesskey=

canal.instance.rds.secretkey=

canal.instance.rds.instanceId=

# table meta tsdb info

canal.instance.tsdb.enable=true

#canal.instance.tsdb.url=jdbc:mysql://127.0.0.1:3306/canal_tsdb

#canal.instance.tsdb.dbUsername=canal

#canal.instance.tsdb.dbPassword=canal

#canal.instance.standby.address =

#canal.instance.standby.journal.name =

#canal.instance.standby.position =

#canal.instance.standby.timestamp =

#canal.instance.standby.gtid=

# username/password

canal.instance.dbUsername=canal

# Must match the password given in CREATE USER canal IDENTIFIED BY '123456' above
canal.instance.dbPassword=123456

canal.instance.connectionCharset = UTF-8

# enable druid Decrypt database password

canal.instance.enableDruid=false

#canal.instance.pwdPublicKey=MFwwDQYJKoZIhvcNAQEBBQADSwAwSAJBALK4BUxdDltRRE5/zXpVEVPUgunvscYFtEip3pmLlhrWpacX7y7GCMo2/JM6LeHmiiNdH1FWgGCpUfircSwlWKUCAwEAAQ==

# table regex

canal.instance.filter.regex=.*\\..*

# table black regex

canal.instance.filter.black.regex=mysql\\.slave_.*

# table field filter(format: schema1.tableName1:field1/field2,schema2.tableName2:field1/field2)

#canal.instance.filter.field=test1.t_product:id/subject/keywords,test2.t_company:id/name/contact/ch

# table field black filter(format: schema1.tableName1:field1/field2,schema2.tableName2:field1/field2)

#canal.instance.filter.black.field=test1.t_product:subject/product_image,test2.t_company:id/name/contact/ch

# mq config

canal.mq.topic=canaltopic

# dynamic topic route by schema or table regex

#canal.mq.dynamicTopic=mytest1.user,mytest2\\..*,.*\\..*

canal.mq.partition=0

# hash partition config

#canal.mq.partitionsNum=3

#canal.mq.partitionHash=test.table:id^name,.*\\..*

#canal.mq.dynamicTopicPartitionNum=test.*:4,mycanal:6

#################################################
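Since the Kafka-related settings are split across canal.properties and instance.properties, a small cross-check can catch an empty or mistyped value before starting canal. This is a sketch only: `load_properties` is a simplified parser (no line continuations, escapes, or `:` separators), and both function names are hypothetical rather than part of canal.

```python
def load_properties(text: str) -> dict:
    """Parse simple key=value lines; lines starting with '#' or '!' are comments."""
    props = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line[0] in "#!":
            continue
        # Split on the first '=' only, so values such as JDBC URLs stay intact.
        key, _, value = line.partition("=")
        props[key.strip()] = value.strip()
    return props


def kafka_mode_problems(canal: dict, instance: dict) -> list:
    """List the settings this walkthrough relies on that are missing or wrong."""
    problems = []
    if canal.get("canal.serverMode") != "kafka":
        problems.append("canal.serverMode is not kafka")
    if not canal.get("kafka.bootstrap.servers"):
        problems.append("kafka.bootstrap.servers is empty")
    if not instance.get("canal.instance.master.address"):
        problems.append("canal.instance.master.address is empty")
    if not instance.get("canal.mq.topic"):
        problems.append("canal.mq.topic is empty")
    return problems


canal_props = load_properties(
    "canal.serverMode = kafka\nkafka.bootstrap.servers = 192.168.1.24:9092\n"
)
instance_props = load_properties(
    "canal.instance.master.address=192.168.1.24:3306\ncanal.mq.topic=canaltopic\n"
)
print(kafka_mode_problems(canal_props, instance_props))
```

An empty list means the four values checked here are present; it does not prove that the addresses are reachable.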