A Step-by-Step Guide to Setting Up a Spark SQL Development Environment in IDEA

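Contents

1. How to choose the Spark and Scala versions

1.1 Check the official site

1.2 How to get the pom dependency information

2. Create a Maven project, add the Scala plugin and the Scala SDK

3. Configure pom.xml and add the required jar dependencies

3.1 pom.xml example (Spark version: 3.3.2, Scala version: 2.12)

4. Run the official example

5. Setting the log level

5.1 Setting the log level when submitting a job

5.2 Changing the environment's default log level

6. FAQ

6.1 Errors caused by mismatched Spark and Scala versions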

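1. How to choose the Spark and Scala versions

When setting up a Spark SQL development environment in IDEA, the most important thing to get right is that the dependency artifacts match the Scala version of your project.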

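1.1 Check the official site

The release information published on the official site tells us the following:

The latest Spark release version

The Hadoop version it is built for

The Scala version it is compiled with

The pom information for the dependency jars

Official site: Downloads | Apache Spark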

1.2 How to get the pom dependency information

Option 1: the official site

Option 2: https://mvnrepository.com

Reference: https://www.cnblogs.com/bajiaotai/p/16270971.html#_label0
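
As a rough illustration of how the artifact names on either site are put together, the sketch below builds a Maven coordinate from a module name, a Scala binary version, and a Spark version. The names `SparkCoordinates` and `coordinate` are hypothetical and exist only for this example; the versions are the ones used in this article.

```scala
// Hypothetical helper, for illustration only: shows how a Spark artifact's
// Maven coordinate encodes both the Scala binary version and the Spark version.
object SparkCoordinates {
  // Spark artifacts are published under this groupId.
  val GroupId = "org.apache.spark"

  // e.g. module = "spark-sql", scalaBinary = "2.12", sparkVersion = "3.3.2"
  def coordinate(module: String, scalaBinary: String, sparkVersion: String): String =
    s"$GroupId:${module}_$scalaBinary:$sparkVersion"
}
```

For instance, `SparkCoordinates.coordinate("spark-sql", "2.12", "3.3.2")` gives `org.apache.spark:spark-sql_2.12:3.3.2`. The `_2.12` suffix is the part that has to agree with the Scala SDK of the project.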

2. Create a Maven project, add the Scala plugin and the Scala SDK

Reference: https://www.cnblogs.com/bajiaotai/p/15381309.html

3. Configure pom.xml and add the required jar dependencies

3.1 pom.xml example (Spark version: 3.3.2, Scala version: 2.12)

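<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>

    <groupId>com.spark</groupId>
    <artifactId>sparkAPI</artifactId>
    <version>1.0-SNAPSHOT</version>

    <properties>
        <spark.version>3.3.2</spark.version>
        <scala.version>2.12</scala.version>
    </properties>

    <dependencies>
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-core_${scala.version}</artifactId>
            <version>${spark.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-yarn_${scala.version}</artifactId>
            <version>${spark.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-sql_${scala.version}</artifactId>
            <version>${spark.version}</version>
        </dependency>
        <dependency>
            <groupId>mysql</groupId>
            <artifactId>mysql-connector-java</artifactId>
            <version>5.1.27</version>
        </dependency>
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-hive_${scala.version}</artifactId>
            <version>${spark.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.hive</groupId>
            <artifactId>hive-exec</artifactId>
            <version>1.2.1</version>
        </dependency>
    </dependencies>
</project>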

4. Run the official example

Official guide: https://spark.apache.org/docs/latest/sql-getting-started.html

test("Run the official example") {
  /*
   * TODO Run the example from the official guide
   */

  // 1. Initialize the SparkSession (DataFrame is imported too, since it is used below)
  import org.apache.spark.sql.{DataFrame, SparkSession}

  val spark: SparkSession = SparkSession
    .builder()
    .master("local")
    .appName("Spark SQL basic example")
    .config("spark.some.config.option", "some-value")
    .getOrCreate()

  // 2. Create a DataFrame
  val df: DataFrame = spark.read.json("/usr/local/lib/mavne01/sparkAPI/src/main/resources/person.json")

  // Print the DataFrame
  df.show()
  // +----+----+
  // | age|name|
  // +----+----+
  // |null|刘备|
  // |  30|关羽|
  // |  19|张飞|
  // +----+----+

  // 3. Release resources
  spark.stop()
}
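
The article does not show the contents of person.json. Judging only from the printed table, a file along the following lines would produce it; the exact contents are an assumption. By default Spark's JSON reader expects one JSON object per line, and a field missing from a record (here `age` in the first one) shows up as null. The sketch below writes such a file to a temporary location using only the JDK; `PersonJsonFixture` and `writeSample` are hypothetical names.

```scala
import java.nio.charset.StandardCharsets
import java.nio.file.{Files, Path}

object PersonJsonFixture {
  // Assumed contents, inferred from the df.show() output in the article.
  val lines: Seq[String] = Seq(
    """{"name":"刘备"}""",
    """{"name":"关羽","age":30}""",
    """{"name":"张飞","age":19}"""
  )

  // Writes the sample records to a temp file, one JSON object per line,
  // and returns the path of the file that was written.
  def writeSample(): Path = {
    val path = Files.createTempFile("person", ".json")
    Files.write(path, lines.mkString("\n").getBytes(StandardCharsets.UTF_8))
    path
  }
}
```

The returned path can then be passed to the reader, for example `spark.read.json(PersonJsonFixture.writeSample().toString)`, instead of the hard-coded location.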

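5. Setting the log level

There are two places in a project where the log level can be changed:

1. In code, when submitting the job

2. Through the environment's default log level (log4j2.properties)

Priority: the level set when submitting the job > the environment default

5.1 Setting the log level when submitting a job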

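/*
 * TODO Set the log level
 * Notes:
 *   The level set here has the highest priority (it overrides settings made elsewhere),
 *   but it only applies to the current job.
 * Valid levels:
 *   ALL, DEBUG, ERROR, FATAL, INFO, OFF, TRACE, WARN
 */
// Set the log level
spark.sparkContext.setLogLevel("ERROR")

5.2 Changing the environment's default log level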

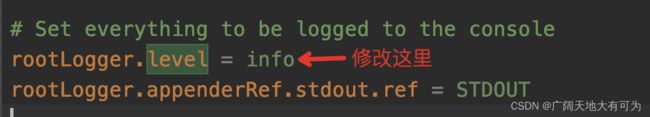

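You can change the environment's default log level by adding a log4j2.properties file to the resources directory.

If no such file is added, Spark falls back to its built-in defaults and prints:

Using Spark's default log4j profile: org/apache/spark/log4j2-defaults.properties

log4j2.properties template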

#
# Licensed to the Apache Software Foundation (ASF) under one or more
# contributor license agreements. See the NOTICE file distributed with
# this work for additional information regarding copyright ownership.
# The ASF licenses this file to You under the Apache License, Version 2.0
# (the "License"); you may not use this file except in compliance with
# the License. You may obtain a copy of the License at
#
# http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
#

# Set everything to be logged to the console
rootLogger.level = info
rootLogger.appenderRef.stdout.ref = STDOUT

appender.console.type = Console
appender.console.name = STDOUT
appender.console.target = SYSTEM_OUT
appender.console.layout.type = PatternLayout
appender.console.layout.pattern = %d{yy/MM/dd HH:mm:ss} %p %c{1}: %m%n%ex

# Settings to quiet third party logs that are too verbose
logger.jetty.name = org.sparkproject.jetty
logger.jetty.level = warn
logger.jetty2.name = org.sparkproject.jetty.util.component.AbstractLifeCycle
logger.jetty2.level = error
logger.repl1.name = org.apache.spark.repl.SparkIMain$exprTyper
logger.repl1.level = info
logger.repl2.name = org.apache.spark.repl.SparkILoop$SparkILoopInterpreter
logger.repl2.level = info

# Set the default spark-shell log level to WARN. When running the spark-shell, the
# log level for this class is used to overwrite the root logger's log level, so that
# the user can have different defaults for the shell and regular Spark apps.
logger.repl.name = org.apache.spark.repl.Main
logger.repl.level = warn

# SPARK-9183: Settings to avoid annoying messages when looking up nonexistent UDFs
# in SparkSQL with Hive support
logger.metastore.name = org.apache.hadoop.hive.metastore.RetryingHMSHandler
logger.metastore.level = fatal
logger.hive_functionregistry.name = org.apache.hadoop.hive.ql.exec.FunctionRegistry
logger.hive_functionregistry.level = error

# Parquet related logging
logger.parquet.name = org.apache.parquet.CorruptStatistics
logger.parquet.level = error
logger.parquet2.name = parquet.CorruptStatistics
logger.parquet2.level = error

6. FAQ

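6.1 Errors caused by mismatched Spark and Scala versions

Error message:

scalac: Error: illegal cyclic inheritance involving trait Iterable

scala.reflect.internal.Types$TypeError: illegal cyclic inheritance involving trait Iterable

The exact error message may differ, depending on the Spark and Scala versions you are using.

You can use the following line to check the Scala version of the current environment:

println(scala.util.Properties.versionString)

// version 2.12.15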
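
To compare that version against the `_2.12` suffix of the Spark artifacts, one option is to reduce the full version to its binary version (major.minor). This is a minimal sketch, assuming a Scala 2.x style version where the binary version is the first two components; `ScalaVersionCheck`, `binaryVersion`, and `matches` are hypothetical names introduced only for this example.

```scala
object ScalaVersionCheck {
  // Reduces a full version such as "2.12.15" to its binary version "2.12".
  // Assumes a Scala 2.x style version where binary version = major.minor.
  def binaryVersion(full: String): String =
    full.split('.').take(2).mkString(".")

  // True when the given Scala version's binary version equals the suffix used
  // in the Spark artifact names (e.g. "2.12" in spark-sql_2.12).
  // By default the version of the running Scala library is used.
  def matches(artifactSuffix: String,
              runtime: String = scala.util.Properties.versionNumberString): Boolean =
    binaryVersion(runtime) == artifactSuffix
}
```

Calling `ScalaVersionCheck.matches("2.12")` inside the project returns true only when the Scala SDK in use is a 2.12.x release, which is a quick way to rule out the mismatch described here.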