Kubernetes StorageClass (NFS)

I. Preface

1. Environment: Kubernetes v1.23.5; the servers run CentOS 7.9

192.168.164.20 k8s-master1

192.168.164.30 k8s-node1

192.168.164.40 k8s-node2

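2. StorageClass itself was not removed in Kubernetes v1.20.

What changed in v1.20 is that the API server stopped populating the selfLink field by default, which broke the older nfs-client-provisioner image that relied on it. Using a different provisioner image gets around the problem, and that is what this post does.

3. The StorageClass in this post is backed by NFS, so an NFS server is required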

II. Set up the NFS service

1. Install nfs-utils on every node

yum install -y nfs-utils

2. Set up the NFS server on the master node

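2-1. Create the NFS shared directory

mkdir -p /data/k8s-nfs/nfs-provisioner

Note: every PV that Kubernetes provisions from now on is created automatically under this directory.

2-2. Export the directory over NFS

echo "/data/k8s-nfs/nfs-provisioner 192.168.164.0/24(rw,no_root_squash)" >> /etc/exports

Note: 192.168.164.0/24 specifies which clients may access this NFS export. It is usually the private subnet shared by the Kubernetes hosts.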
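Because the echo above appends with >>, running it a second time leaves a duplicate line in /etc/exports. The following is a minimal sketch of an idempotent variant, not part of the original steps; the function name add_export is hypothetical.

```shell
# add_export FILE DIR CLIENTS OPTIONS
# Appends the line "DIR CLIENTS(OPTIONS)" to FILE unless that exact line is already there.
add_export() {
    export_file=$1
    export_entry="$2 $3($4)"
    # -q: quiet, -x: match the whole line, -F: treat the entry as a fixed string
    grep -qxF -- "$export_entry" "$export_file" 2>/dev/null \
        || printf '%s\n' "$export_entry" >> "$export_file"
}
```

Usage would look like add_export /etc/exports /data/k8s-nfs/nfs-provisioner 192.168.164.0/24 rw,no_root_squash. If the NFS server is already running when the file changes, exportfs -ra should make it re-read the exports.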

2-3. Start the NFS service

systemctl start nfs-server.service

systemctl enable nfs-server.service

3. From any worker node, check that the NFS export is visible

[root@k8s-node1 ~]# showmount -e 192.168.164.20
Export list for 192.168.164.20:
/data/k8s-nfs/nfs-provisioner 192.168.164.0/24

Note: 192.168.164.20 is the NFS server, which is also the IP of the k8s-master node.
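The check above is done by eye. As a rough sketch for scripting it, the helper below reads showmount -e output on standard input and succeeds only if the given directory appears in the export list. The name has_export is hypothetical, and the parsing assumes the two-column output format shown above.

```shell
# has_export DIR
# Reads `showmount -e` output on stdin; exit status 0 if DIR is listed, 1 otherwise.
has_export() {
    # NR > 1 skips the "Export list for ...:" header line
    awk -v dir="$1" 'NR > 1 && $1 == dir { found = 1 } END { exit !found }'
}
```

On a worker node this could be used as: showmount -e 192.168.164.20 | has_export /data/k8s-nfs/nfs-provisioner && echo "export visible"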

III. Create the manifests for the StorageClass and the example resources

1. Create the RBAC resources used by the provisioner

vim init-sc-serviceaccount.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: default
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: nfs-client-provisioner-runner
rules:
  - apiGroups: [""]
    resources: ["nodes"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["create", "update", "patch"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: run-nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    # replace with namespace where provisioner is deployed
    namespace: default
roleRef:
  kind: ClusterRole
  name: nfs-client-provisioner-runner
  apiGroup: rbac.authorization.k8s.io
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: default
rules:
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]
---
kind: RoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: default
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    # replace with namespace where provisioner is deployed
    namespace: default
roleRef:
  kind: Role
  name: leader-locking-nfs-client-provisioner
  apiGroup: rbac.authorization.k8s.io

2. Deploy NFS-Subdir-External-Provisioner

vim init-sc-deployment.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nfs-client-provisioner
  labels:
    app: nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: default
spec:
  replicas: 1
  strategy:
    type: Recreate
  selector:
    matchLabels:
      app: nfs-client-provisioner
  template:
    metadata:
      labels:
        app: nfs-client-provisioner
    spec:
      nodeName: k8s-master1 # hostname of your k8s-master node; pins the pod to the master
      tolerations: # tolerate the master node taint
        - key: node-role.kubernetes.io/master
          operator: Exists # match on the key alone; the default kubeadm master taint carries no value, so Equal with "true" would not match it
      serviceAccountName: nfs-client-provisioner
      containers:
        - name: nfs-client-provisioner
          image: registry.cn-beijing.aliyuncs.com/mydlq/nfs-subdir-external-provisioner:v4.0.0
          imagePullPolicy: IfNotPresent
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
          env:
            - name: PROVISIONER_NAME
              value: k8s/nfs-subdir-external-provisioner
            - name: NFS_SERVER
              value: 192.168.164.20 # IP of your k8s-master node, which is also the NFS server
            - name: NFS_PATH
              value: /data/k8s-nfs/nfs-provisioner # path exported by the NFS server
      volumes:
        - name: nfs-client-root
          nfs:
            server: 192.168.164.20 # IP of your k8s-master node, which is also the NFS server
            path: /data/k8s-nfs/nfs-provisioner # path exported by the NFS server

The image can be replaced with either of:

37213690/nfs-subdir-external-provisioner:v4.0.0

registry.cn-hangzhou.aliyuncs.com/kahnxiao/nfs-subdir-external-provisioner:v4.0.0
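The PROVISIONER_NAME value in this Deployment has to equal the provisioner field of the StorageClass defined in step 3, otherwise PVCs stay Pending. The sketch below compares the two files before anything is applied. It is a naive text match with awk rather than a real YAML parser, it assumes unquoted values laid out as in this post, and all three function names are hypothetical.

```shell
# provisioner_name_in_deployment FILE
# Prints the value that follows the "- name: PROVISIONER_NAME" env entry.
provisioner_name_in_deployment() {
    awk '
        $1 == "-" && $2 == "name:" && $3 == "PROVISIONER_NAME" { want = 1; next }
        want && $1 == "value:" { print $2; exit }
    ' "$1"
}

# provisioner_in_storageclass FILE
# Prints the value of the "provisioner:" field (a trailing comment is ignored).
provisioner_in_storageclass() {
    awk '$1 == "provisioner:" { print $2; exit }' "$1"
}

# check_provisioner_match DEPLOYMENT_FILE STORAGECLASS_FILE
# Succeeds only if both names were found and are identical.
check_provisioner_match() {
    dep_name=$(provisioner_name_in_deployment "$1")
    sc_name=$(provisioner_in_storageclass "$2")
    [ -n "$dep_name" ] && [ "$dep_name" = "$sc_name" ]
}
```

With the file names used in this post: check_provisioner_match init-sc-deployment.yaml init-sc-storage.yaml || echo "provisioner names differ"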

3. Create the NFS StorageClass

vim init-sc-storage.yaml

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: managed-nfs-storage
  annotations:
    storageclass.kubernetes.io/is-default-class: "false" # whether this is the default StorageClass
provisioner: k8s/nfs-subdir-external-provisioner # or choose another name; must match the Deployment's PROVISIONER_NAME env
allowVolumeExpansion: true
parameters:
  archiveOnDelete: "false" # "false": the data is not kept when the PVC is deleted; "true": the data is kept

4. Create the PVC

vim init-sc-pvc.yaml

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: nfs-claim
spec:
  storageClassName: managed-nfs-storage
  accessModes: ["ReadWriteMany","ReadOnlyMany"]
  resources:
    requests:
      storage: 100Mi

5. Create a Pod that mounts the PVC

vim eg-sc-busybox.yaml

apiVersion: v1
kind: Pod
metadata:
  name: test-pod
spec:
  containers:
    - name: test-pod
      image: busybox:1.28
      imagePullPolicy: IfNotPresent
      command:
        - "/bin/sh"
      args:
        - "-c"
        - "sleep 3600"
      volumeMounts:
        - name: nfs-pvc
          mountPath: "/mnt/busybox"
  restartPolicy: "Never"
  volumes:
    - name: nfs-pvc
      persistentVolumeClaim:
        claimName: nfs-claim

IV. Apply the manifests to create the StorageClass and the example resources

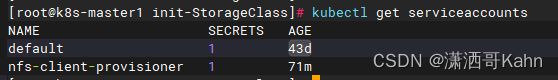

1. Create the ServiceAccount

[root@k8s-master1 init-StorageClass]# kubectl apply -f init-sc-serviceaccount.yaml
serviceaccount/nfs-client-provisioner created
clusterrole.rbac.authorization.k8s.io/nfs-client-provisioner-runner created
clusterrolebinding.rbac.authorization.k8s.io/run-nfs-client-provisioner created
role.rbac.authorization.k8s.io/leader-locking-nfs-client-provisioner created
rolebinding.rbac.authorization.k8s.io/leader-locking-nfs-client-provisioner created
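The "created" lines only confirm that the objects exist, not that the ServiceAccount ended up with the intended permissions. One way to spot-check this is kubectl auth can-i with impersonation, sketched below. The function names are hypothetical, and the sketch assumes your own kubectl user is allowed to impersonate ServiceAccounts (a cluster admin normally is).

```shell
# sa_identity NAMESPACE NAME
# Prints the user name under which the API server knows a ServiceAccount.
sa_identity() {
    printf 'system:serviceaccount:%s:%s\n' "$1" "$2"
}

# sa_can NAMESPACE NAME VERB RESOURCE
# Asks the API server whether the ServiceAccount may perform VERB on RESOURCE.
# kubectl prints yes or no and sets its exit status accordingly.
sa_can() {
    kubectl auth can-i "$3" "$4" --as="$(sa_identity "$1" "$2")"
}
```

For example, sa_can default nfs-client-provisioner create persistentvolumes checks one of the cluster-wide rules granted by the ClusterRole above.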

2. Create NFS-Subdir-External-Provisioner

[root@k8s-master1 init-StorageClass]# kubectl apply -f init-sc-deployment.yaml
deployment.apps/nfs-client-provisioner created

3. Create the NFS StorageClass

[root@k8s-master1 init-StorageClass]# kubectl apply -f init-sc-storage.yaml

4. Create the PVC

[root@k8s-master1 init-StorageClass]# kubectl apply -f init-sc-pvc.yaml

5. Create the example pod

[root@k8s-master1 init-StorageClass]# kubectl apply -f eg-sc-busybox.yaml
pod/test-pod created

V. Test the dynamically provisioned NFS storage

[root@k8s-master1 init-StorageClass]# kubectl exec -it test-pod -- ls /mnt/busybox/
[root@k8s-master1 init-StorageClass]# kubectl exec -it test-pod -- touch /mnt/busybox/test.txt
[root@k8s-master1 init-StorageClass]# ls /data/k8s-nfs/nfs-provisioner/
default-nfs-claim-pvc-3d0d0a1f-3ba1-4935-a420-d5b8aba38ab0
[root@k8s-master1 init-StorageClass]# echo "haha" > /data/k8s-nfs/nfs-provisioner/default-nfs-claim-pvc-3d0d0a1f-3ba1-4935-a420-d5b8aba38ab0/test.txt
# Look at the mount directory inside the container again: the content just written on the NFS server shows up there
[root@k8s-master1 init-StorageClass]# kubectl exec -it test-pod -- cat /mnt/busybox/test.txt
haha
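The directory name in the listing above follows the ${namespace}-${pvcName}-${pvName} pattern that nfs-subdir-external-provisioner uses by default for the subdirectories it creates. As a small sketch, the hypothetical helper pv_dir rebuilds that path so the backing directory of a claim can be located on the NFS server without listing the export by hand.

```shell
# pv_dir NFS_ROOT NAMESPACE PVC_NAME PV_NAME
# Prints the directory on the NFS server that backs the given claim,
# assuming the provisioner's default naming pattern.
pv_dir() {
    printf '%s/%s-%s-%s\n' "$1" "$2" "$3" "$4"
}
```

The PV name can be read from the bound claim, for example with kubectl get pvc nfs-claim -o jsonpath='{.spec.volumeName}', and then passed in as pv_dir /data/k8s-nfs/nfs-provisioner default nfs-claim followed by that name.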