基于metrics-server弹性伸缩

目录

Kubernetes部署方式

基于kubeadm部署K8S集群

一、环境准备

1.1、主机初始化配置

1.2、部署docker环境

二、部署kubernetes集群

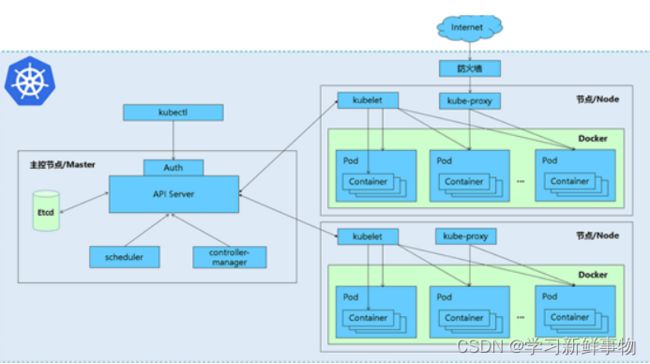

2.1、组件介绍

2.2、配置阿里云yum源

2.3、安装kubelet kubeadm kubectl

2.4、配置init-config.yaml

2.5、安装master节点

2.6、安装node节点

2.7、安装flannel

三、安装Dashboard UI

3.1、部署Dashboard

3.2、开放端口设置

3.3、权限配置

四、metrics-server服务部署

4.1、在Node节点上下载镜像

4.2、修改 Kubernetes apiserver 启动参数

4.3、Master上进行部署

五、弹性伸缩

5.1、弹性伸缩介绍

5.2、弹性伸缩工作原理

5.3、弹性伸缩实战

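Kubernetes deployment methods

There are three officially supported ways to deploy Kubernetes.

minikube

Minikube is a tool that quickly runs a single-node Kubernetes cluster locally. It is meant for users who want to try Kubernetes or use it for day-to-day development, and it must not be used in production.

Official documentation: Install Tools | Kubernetes

Binary packages

Download the release binaries from the official site and deploy each component by hand to assemble a Kubernetes cluster. This is currently the main approach in enterprise production environments.

Download: https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG-1.11.md#v1113

Kubeadm

Kubeadm is the Kubernetes project's official tool for quickly deploying a Kubernetes cluster. During deployment, kubeadm init initializes the master node and kubeadm join adds the other nodes to the cluster.

With a small amount of configuration, kubeadm brings up a minimal usable cluster. It was designed to install a cluster and get it running quickly, not to prepare each node's environment step by step. Likewise, add-ons used while operating a cluster, such as the Kubernetes web Dashboard or Prometheus monitoring, are outside kubeadm's scope. Kubeadm is intended to be the foundation that other deployment tooling builds on, and to make deploying a Kubernetes cluster easier.

Kubeadm's quick and simple deployment is useful in three situations:

- New users can start with kubeadm to set up Kubernetes quickly and get to know it.

- Users familiar with Kubernetes can use kubeadm to build a cluster quickly and test their applications.

- Large projects can combine kubeadm with other installation tools to build a more complex system.

Official documentation:

Kubeadm | Kubernetes

Installing kubeadm | Kubernetes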

基于kubeadm部署K8S集群

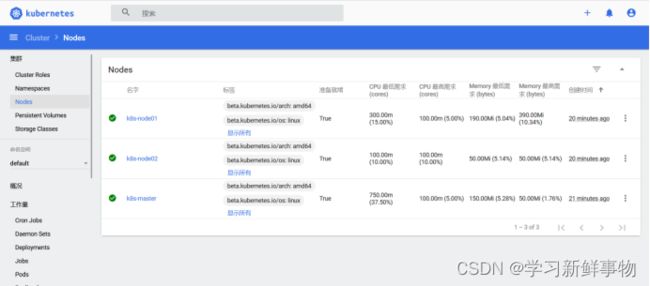

一、环境准备

| 操作系统 |

IP地址 |

主机名 |

组件 |

| CentOS7.5 |

192.168.50.53 |

k8s-master |

kubeadm、kubelet、kubectl、docker-ce |

| CentOS7.5 |

192.168.50.51 |

k8s-node01 |

kubeadm、kubelet、kubectl、docker-ce |

| CentOS7.5 |

192.168.50.50 |

k8s-node02 |

kubeadm、kubelet、kubectl、docker-ce |

注意:所有主机配置推荐CPU:2C+ Memory:2G+

1.1 Host initialization

Run on all hosts:

[root@localhost ~]# setenforce 0

[root@localhost ~]# iptables -F

[root@localhost ~]# systemctl stop firewalld

[root@localhost ~]# systemctl disable firewalld

Removed symlink /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed symlink /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

[root@localhost ~]# systemctl stop NetworkManager

[root@localhost ~]# systemctl disable NetworkManager

Removed symlink /etc/systemd/system/multi-user.target.wants/NetworkManager.service.

Removed symlink /etc/systemd/system/dbus-org.freedesktop.nm-dispatcher.service.

Removed symlink /etc/systemd/system/network-online.target.wants/NetworkManager-wait-online.service.

[root@localhost ~]# sed -i '/^SELINUX=/s/enforcing/disabled/' /etc/selinux/config

Set the hostname on each host (every host gets a different name) and add all three hosts to /etc/hosts, using the addresses from the table above:

[root@localhost ~]# hostname k8s-master

[root@localhost ~]# bash

[root@k8s-master ~]# cat << EOF >> /etc/hosts

> 192.168.50.53 k8s-master

> 192.168.50.51 k8s-node01

> 192.168.50.50 k8s-node02

> EOF

[root@k8s-master ~]# scp /etc/hosts 192.168.50.51:/etc

[root@k8s-master ~]# scp /etc/hosts 192.168.50.50:/etc

On the other two hosts, run hostname k8s-node01 and hostname k8s-node02 respectively, followed by bash.

Initialize all hosts:

[root@k8s-master ~]# yum -y install vim wget net-tools lrzsz

[root@k8s-master ~]# swapoff -a

[root@k8s-master ~]# sed -i '/swap/s/^/#/' /etc/fstab

[root@k8s-master ~]# cat << EOF >> /etc/sysctl.conf

> net.bridge.bridge-nf-call-ip6tables = 1

> net.bridge.bridge-nf-call-iptables = 1

> EOF

[root@k8s-master ~]# modprobe br_netfilter

[root@k8s-master ~]# sysctl -p

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1
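The sed expression used above on /etc/fstab can be tried safely on sample text first. The following is only a sketch: the fstab entries and the function names are made up for illustration, and the second variant (which skips lines that are already commented) is an assumption about what a rerunnable setup script might want, not part of the original steps.

```shell
#!/bin/sh
# Same expression as the step above: prefix every line mentioning swap with '#'.
comment_swap() {
  sed '/swap/s/^/#/'
}

# Variant that leaves already-commented lines alone, so it can be run twice.
comment_swap_once() {
  sed '/^[^#].*swap/s/^/#/'
}

# Hypothetical fstab content used only for this demonstration.
sample_fstab() {
  printf '%s\n' \
    '/dev/mapper/centos-root / xfs defaults 0 0' \
    '/dev/mapper/centos-swap swap swap defaults 0 0'
}

# Prints the root entry unchanged and the swap entry with a leading '#'.
sample_fstab | comment_swap
```

Running the original expression a second time adds a second '#', which is harmless but untidy; the variant avoids that.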

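1.2 Deploying the Docker environment

Deploy Docker on each of the three hosts, because Kubernetes needs a container runtime to orchestrate containers and this setup uses Docker.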

[root@k8s-master ~]# wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

[root@k8s-master ~]# yum install -y yum-utils device-mapper-persistent-data lvm2

When installing Docker with YUM, the Alibaba Cloud YUM mirror is recommended. Alibaba's open-source mirror site is https://developer.aliyun.com/mirror/, where the Docker repository address can be found.

[root@k8s-master ~]# yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

Loaded plugins: fastestmirror

adding repo from: https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

grabbing file https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo to /etc/yum.repos.d/docker-ce.repo

repo saved to /etc/yum.repos.d/docker-ce.repo

[root@k8s-node02 ~]# yum clean all && yum makecache fast

[root@k8s-node02 ~]# yum -y install docker-ce

[root@k8s-node02 ~]# systemctl start docker

[root@k8s-node02 ~]# systemctl enable docker

Created symlink from /etc/systemd/system/multi-user.target.wants/docker.service to /usr/lib/systemd/system/docker.service.

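Registry mirror (configure on all hosts)

Many images are hosted on servers outside China, and network problems often cause image pulls to fail, so it is best to pull from a mirror inside China. Many public cloud providers in China offer a registry mirror service. The mirror is configured as follows.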

[root@k8s-node02 ~]# cat << END > /etc/docker/daemon.json

> {

> "registry-mirrors":[ "https://nyakyfun.mirror.aliyuncs.com" ]

> }

> END

[root@k8s-node02 ~]# systemctl daemon-reload

[root@k8s-node02 ~]# systemctl restart docker

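The mirror address is written directly to /etc/docker/daemon.json; if the file does not exist, create it. As the extension suggests, the content of daemon.json must be valid JSON, so take care when editing it. Because a single mirror service can become unavailable, configure two or more mirror addresses for redundancy.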
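As a sketch of the multi-mirror recommendation, the helper below prints a daemon.json body for any number of mirror URLs. The function name and the example.com URLs are placeholders introduced for this illustration, not real mirror addresses; redirect the output to /etc/docker/daemon.json only after substituting real ones.

```shell
#!/bin/sh
# Print a daemon.json body that lists every mirror URL given as an argument.
make_daemon_json() (
  sep=''
  printf '{\n  "registry-mirrors": ['
  for url in "$@"; do
    printf '%s"%s"' "$sep" "$url"
    sep=', '
  done
  printf ']\n}\n'
)

# Placeholder mirror URLs; substitute real mirror addresses.
make_daemon_json 'https://mirror-a.example.com' 'https://mirror-b.example.com'
```

Generating the file this way keeps the commas and quotes consistent, which is where hand-edited JSON usually breaks.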

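2. Deploying the Kubernetes cluster

2.1 Component overview

All three nodes need the following three components:

- kubeadm: the installation tool; it sets the cluster up so that all components run as containers.

- kubectl: the command-line client for the K8S API.

- kubelet: runs on each node and is responsible for starting containers.

2.2 Configuring the Alibaba Cloud yum repository

Installing from the Alibaba Cloud yum repository is recommended: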

[root@k8s-node02 ~]# cat << EOF > /etc/yum.repos.d/kubernetes.repo

> [kubernetes]

> name=Kubernetes

> baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

> enabled=1

> gpgcheck=1

> repo_gpgcheck=1

> gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg

> https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

> EOF

2.3 Installing kubelet, kubeadm, and kubectl

Run on all hosts:

[root@k8s-node02 ~]# yum install -y kubelet-1.20.0 kubeadm-1.20.0 kubectl-1.20.0

[root@k8s-master ~]# systemctl enable kubelet

Created symlink from /etc/systemd/system/multi-user.target.wants/kubelet.service to /usr/lib/systemd/system/kubelet.service.

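Right after installation, kubelet cannot be started with systemctl start kubelet. It starts successfully only after the host has joined a cluster or has been initialized as the master.

If the GPG check of the repository index fails during installation, install with yum install -y --nogpgcheck kubelet-1.20.0 kubeadm-1.20.0 kubectl-1.20.0 instead.

2.4 Configuring init-config.yaml

Kubeadm has many configuration options. In a Kubernetes cluster the kubeadm configuration is stored in a ConfigMap; the options can also be written to a configuration file, which makes complex settings easier to manage. The kubeadm config command writes the configuration to a file.

Run this on the master node, which is 192.168.50.53. The following command creates the default init-config.yaml: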

[root@k8s-master ~]# kubeadm config print init-defaults > init-config.yaml

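Besides writing the configuration to a file, kubeadm config provides several other commonly used subcommands:

- kubeadm config view: shows the configuration values of the current cluster.

- kubeadm config print join-defaults: prints the default configuration for kubeadm join.

- kubeadm config images list: lists the required images.

- kubeadm config images pull: pulls the images to the local host.

- kubeadm config upload from-flags: generates the ConfigMap from command-line flags.

Configuring init-config.yaml: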

[root@k8s-master ~]# vim init-config.yaml

[root@k8s-master ~]# cat init-config.yaml

apiVersion: kubeadm.k8s.io/v1beta2

bootstrapTokens:

- groups:

  - system:bootstrappers:kubeadm:default-node-token

  token: abcdef.0123456789abcdef

  ttl: 24h0m0s

  usages:

  - signing

  - authentication

kind: InitConfiguration

localAPIEndpoint:

  advertiseAddress: 192.168.50.53  # IP address of the master node

  bindPort: 6443

nodeRegistration:

  criSocket: /var/run/dockershim.sock

  name: k8s-master

  taints:

  - effect: NoSchedule

    key: node-role.kubernetes.io/master

---

apiServer:

  timeoutForControlPlane: 4m0s

apiVersion: kubeadm.k8s.io/v1beta2

certificatesDir: /etc/kubernetes/pki

clusterName: kubernetes

controllerManager: {}

dns:

  type: CoreDNS

etcd:

  local:

    dataDir: /var/lib/etcd

imageRepository: registry.aliyuncs.com/google_containers  # changed to a registry inside China

kind: ClusterConfiguration

kubernetesVersion: v1.20.0

networking:

  dnsDomain: cluster.local

  serviceSubnet: 10.96.0.0/12

  podSubnet: 10.244.0.0/16  # added: Pod network CIDR

scheduler: {}

2.5 Installing the master node

Pull the required images:

[root@k8s-master ~]# kubeadm config images list --config init-config.yaml

registry.aliyuncs.com/google_containers/kube-apiserver:v1.20.0

registry.aliyuncs.com/google_containers/kube-controller-manager:v1.20.0

registry.aliyuncs.com/google_containers/kube-scheduler:v1.20.0

registry.aliyuncs.com/google_containers/kube-proxy:v1.20.0

registry.aliyuncs.com/google_containers/pause:3.2

registry.aliyuncs.com/google_containers/etcd:3.4.13-0

registry.aliyuncs.com/google_containers/coredns:1.7.0

[root@k8s-master ~]# kubeadm config images pull --config=init-config.yaml

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-apiserver:v1.20.0

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-controller-manager:v1.20.0

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-scheduler:v1.20.0

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-proxy:v1.20.0

[config/images] Pulled registry.aliyuncs.com/google_containers/pause:3.2

[config/images] Pulled registry.aliyuncs.com/google_containers/etcd:3.4.13-0

[config/images] Pulled registry.aliyuncs.com/google_containers/coredns:1.7.0

Install the master node by initializing Kubernetes:

[root@k8s-master ~]# kubeadm init --config=init-config.yaml

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.50.53:6443 --token abcdef.0123456789abcdef \

--discovery-token-ca-cert-hash sha256:e5a09207519f36610659db414c77a8aeb7dff4532f49f44418cbf351d539a160

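Follow the instructions in the output.

By default, kubectl looks for a file named config in the .kube directory under the home directory of the user running it. The commands below copy admin.conf, generated during the [kubeconfig] step of initialization, to .kube/config.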

[root@k8s-master ~]# mkdir -p $HOME/.kube

[root@k8s-master ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@k8s-master ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

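kubeadm init mainly performs the following steps:

- [init]: initializes the cluster with the specified version.

- [preflight]: runs pre-initialization checks and downloads the required Docker images.

- [kubelet-start]: generates the kubelet configuration file /var/lib/kubelet/config.yaml. The kubelet cannot start without this file, which is why the kubelet fails to start before initialization.

- [certificates]: generates the certificates Kubernetes uses and stores them in /etc/kubernetes/pki.

- [kubeconfig]: generates the kubeconfig files in /etc/kubernetes; the components use them to communicate with each other.

- [control-plane]: installs the Master components from the YAML files in /etc/kubernetes/manifests.

- [etcd]: installs the etcd service from /etc/kubernetes/manifests/etcd.yaml.

- [wait-control-plane]: waits for the Master components deployed by the control plane to start.

- [apiclient]: checks the status of the Master component services.

- [uploadconfig]: updates the configuration.

- [kubelet]: configures the kubelet through a ConfigMap.

- [patchnode]: records CNI information on the Node as annotations.

- [mark-control-plane]: labels the current node with the master role and marks it unschedulable, so that by default Pods are not run on the Master node.

- [bootstrap-token]: generates the token. Record it, because kubeadm join needs it later to add nodes to the cluster.

- [addons]: installs the CoreDNS and kube-proxy add-ons.

Initialization with kubeadm does not include a network plugin, so the cluster has no working Pod network afterwards. For example, the nodes listed on k8s-master all show "NotReady", and the CoreDNS Pods cannot provide service.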

2.6 Installing the worker nodes

Use the join command printed when the master was installed:

[root@k8s-node01 ~]# kubeadm join 192.168.50.53:6443 --token abcdef.0123456789abcdef \

> --discovery-token-ca-cert-hash sha256:e5a09207519f36610659db414c77a8aeb7dff4532f49f44418cbf351d539a160

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 24.0.5. Latest validated version: 19.03

[WARNING Hostname]: hostname "k8s-node01" could not be reached

[WARNING Hostname]: hostname "k8s-node01": lookup k8s-node01 on 223.5.5.5:53: no such host

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

[root@k8s-node02 ~]# kubeadm join 192.168.50.53:6443 --token abcdef.0123456789abcdef \

> --discovery-token-ca-cert-hash sha256:e5a09207519f36610659db414c77a8aeb7dff4532f49f44418cbf351d539a160

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 24.0.5. Latest validated version: 19.03

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

[root@k8s-master ~]# kubectl get nodes

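NAME STATUS ROLES AGE VERSION

k8s-master NotReady control-plane,master 112s v1.20.0

k8s-node01 NotReady <none> 43s v1.20.0

k8s-node02 NotReady <none> 48s v1.20.0

As mentioned earlier, no network was configured when k8s-master was initialized, so it cannot communicate with the worker nodes and every node shows "NotReady". The nodes added with kubeadm join are nevertheless already visible on k8s-master.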
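When the node list grows, it can help to filter for nodes that are not yet Ready. The following is a minimal sketch, assuming the default column layout of kubectl get nodes shown above; the function name is made up for this example, and the sample input stands in for a live cluster.

```shell
#!/bin/sh
# Print the names of nodes whose STATUS column is not exactly "Ready".
# Reads `kubectl get nodes` output on stdin and skips the header row.
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Sample input modelled on the output above; on a live cluster you would run
#   kubectl get nodes | not_ready_nodes
printf '%s\n' \
  'NAME STATUS ROLES AGE VERSION' \
  'k8s-master NotReady control-plane,master 112s v1.20.0' \
  'k8s-node01 NotReady <none> 43s v1.20.0' \
  'k8s-node02 Ready <none> 48s v1.20.0' | not_ready_nodes
```

Note that a combined status such as Ready,SchedulingDisabled is also reported by this simple comparison, which may or may not be what you want.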

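2.7 Installing flannel

The nodes are NotReady because no network plugin has been installed, so the connection between the Nodes and the Master is not yet working. The most popular Kubernetes network plugins are Flannel, Calico, Canal, and Weave; this guide uses Flannel.

Upload kube-flannel.yml to the master and flannel_v0.12.0-amd64.tar to all hosts, then load the image on every host: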

[root@k8s-master ~]# docker load < flannel_v0.12.0-amd64.tar

256a7af3acb1: Loading layer 5.844MB/5.844MB

d572e5d9d39b: Loading layer 10.37MB/10.37MB

57c10be5852f: Loading layer 2.249MB/2.249MB

7412f8eefb77: Loading layer 35.26MB/35.26MB

05116c9ff7bf: Loading layer 5.12kB/5.12kB

Loaded image: quay.io/coreos/flannel:v0.12.0-amd64

On all hosts:

[root@k8s-master ~]# tar xf cni-plugins-linux-amd64-v0.8.6.tgz

[root@k8s-master ~]# cp flannel /opt/cni/bin

On the master:

[root@k8s-master ~]# kubectl apply -f kube-flannel.yml

podsecuritypolicy.policy/psp.flannel.unprivileged created

Warning: rbac.authorization.k8s.io/v1beta1 ClusterRole is deprecated in v1.17+, unavailable in v1.22+; use rbac.authorization.k8s.io/v1 ClusterRole

clusterrole.rbac.authorization.k8s.io/flannel created

Warning: rbac.authorization.k8s.io/v1beta1 ClusterRoleBinding is deprecated in v1.17+, unavailable in v1.22+; use rbac.authorization.k8s.io/v1 ClusterRoleBinding

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds-amd64 created

daemonset.apps/kube-flannel-ds-arm64 created

daemonset.apps/kube-flannel-ds-arm created

daemonset.apps/kube-flannel-ds-ppc64le created

daemonset.apps/kube-flannel-ds-s390x created

Check the result:

[root@k8s-master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master Ready control-plane,master 5m16s v1.20.0

k8s-node01 Ready <none> 4m7s v1.20.0

k8s-node02 Ready <none> 4m12s v1.20.0

[root@k8s-master ~]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-7f89b7bc75-9v7m4 1/1 Running 0 5m36s

coredns-7f89b7bc75-s57lh 1/1 Running 0 5m36s

etcd-k8s-master 1/1 Running 0 5m45s

kube-apiserver-k8s-master 1/1 Running 0 5m45s

kube-controller-manager-k8s-master 1/1 Running 0 5m45s

kube-flannel-ds-amd64-crdzl 1/1 Running 0 54s

kube-flannel-ds-amd64-n7df4 1/1 Running 0 54s

kube-flannel-ds-amd64-xcbq8 1/1 Running 0 54s

kube-proxy-fngm4 1/1 Running 0 4m50s

kube-proxy-h2f4c 1/1 Running 0 4m45s

kube-proxy-m5tht 1/1 Running 0 5m37s

kube-scheduler-k8s-master 1/1 Running 0 5m45s

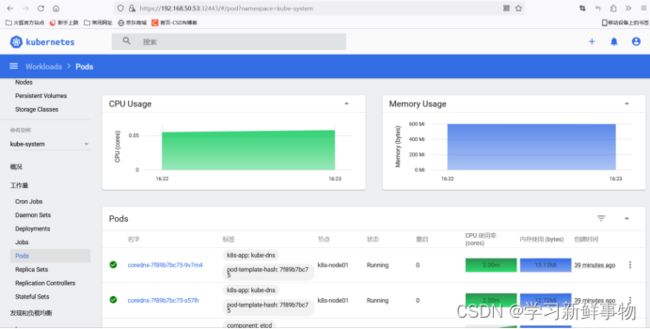

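3. Installing the Dashboard UI

3.1 Deploying the Dashboard

The Dashboard GitHub repository is at https://github.com/kubernetes/dashboard

The repository includes example deployment manifests that can be fetched and applied directly. Alternatively, use a copy of the file downloaded in advance.

[root@k8s-master ~]# wget https://raw.githubusercontent.com/kubernetes/dashboard/master/aio/deploy/recommended.yaml

3.2 Exposing the port

By default the Dashboard does not expose a port for external access. To keep things simple, expose it with a NodePort by editing the Service definition (the leading numbers below are line numbers in recommended.yaml):

[root@k8s-master ~]# vim recommended.yaml

40 type: NodePort

44 nodePort: 32443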

[root@k8s-master ~]# docker pull kubernetesui/dashboard:v2.0.0

v2.0.0: Pulling from kubernetesui/dashboard

2a43ce254c7f: Pull complete

Digest: sha256:06868692fb9a7f2ede1a06de1b7b32afabc40ec739c1181d83b5ed3eb147ec6e

Status: Downloaded newer image for kubernetesui/dashboard:v2.0.0

docker.io/kubernetesui/dashboard:v2.0.0

[root@k8s-master ~]# docker pull kubernetesui/metrics-scraper:v1.0.4

v1.0.4: Pulling from kubernetesui/metrics-scraper

07008dc53a3e: Pull complete

1f8ea7f93b39: Pull complete

04d0e0aeff30: Pull complete

Digest: sha256:555981a24f184420f3be0c79d4efb6c948a85cfce84034f85a563f4151a81cbf

Status: Downloaded newer image for kubernetesui/metrics-scraper:v1.0.4

docker.io/kubernetesui/metrics-scraper:v1.0.4

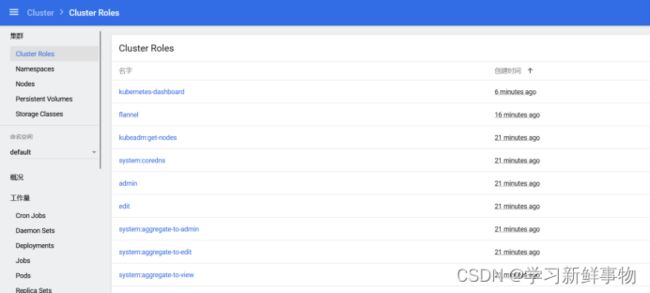

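3.3 Permission configuration

Grant super-administrator (cluster-admin) privileges by editing recommended.yaml (the leading number is the line number in the file):

164 name: cluster-admin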

[root@k8s-master ~]# kubectl apply -f recommended.yaml

namespace/kubernetes-dashboard created

serviceaccount/kubernetes-dashboard created

service/kubernetes-dashboard created

secret/kubernetes-dashboard-certs created

secret/kubernetes-dashboard-csrf created

secret/kubernetes-dashboard-key-holder created

configmap/kubernetes-dashboard-settings created

role.rbac.authorization.k8s.io/kubernetes-dashboard created

clusterrole.rbac.authorization.k8s.io/kubernetes-dashboard created

rolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created

clusterrolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created

deployment.apps/kubernetes-dashboard created

service/dashboard-metrics-scraper created

deployment.apps/dashboard-metrics-scraper created

[root@k8s-master ~]# kubectl get pods -A -o wide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

kube-system coredns-7f89b7bc75-9v7m4 1/1 Running 0 15m 10.244.2.2 k8s-node01 <none> <none>

kube-system coredns-7f89b7bc75-s57lh 1/1 Running 0 15m 10.244.2.3 k8s-node01 <none> <none>

kube-system etcd-k8s-master 1/1 Running 0 16m 192.168.50.53 k8s-master <none> <none>

kube-system kube-apiserver-k8s-master 1/1 Running 0 16m 192.168.50.53 k8s-master <none> <none>

kube-system kube-controller-manager-k8s-master 1/1 Running 0 16m 192.168.50.53 k8s-master <none> <none>

kube-system kube-flannel-ds-amd64-crdzl 1/1 Running 0 11m 192.168.50.51 k8s-node01 <none> <none>

kube-system kube-flannel-ds-amd64-n7df4 1/1 Running 0 11m 192.168.50.53 k8s-master <none> <none>

kube-system kube-flannel-ds-amd64-xcbq8 1/1 Running 0 11m 192.168.50.50 k8s-node02 <none> <none>

kube-system kube-proxy-fngm4 1/1 Running 0 15m 192.168.50.50 k8s-node02 <none> <none>

kube-system kube-proxy-h2f4c 1/1 Running 0 15m 192.168.50.51 k8s-node01 <none> <none>

kube-system kube-proxy-m5tht 1/1 Running 0 15m 192.168.50.53 k8s-master <none> <none>

kube-system kube-scheduler-k8s-master 1/1 Running 0 16m 192.168.50.53 k8s-master <none> <none>

kubernetes-dashboard dashboard-metrics-scraper-7b59f7d4df-vrkgl 1/1 Running 0 107s 10.244.2.5 k8s-node01 <none> <none>

kubernetes-dashboard kubernetes-dashboard-74d688b6bc-fbtk8 1/1 Running 0 107s 10.244.2.4 k8s-node01 <none> <none>

[root@k8s-master ~]# kubectl get pods -n kubernetes-dashboard

NAME READY STATUS RESTARTS AGE

dashboard-metrics-scraper-7b59f7d4df-vrkgl 1/1 Running 0 109s

kubernetes-dashboard-74d688b6bc-fbtk8 1/1 Running 0 109s

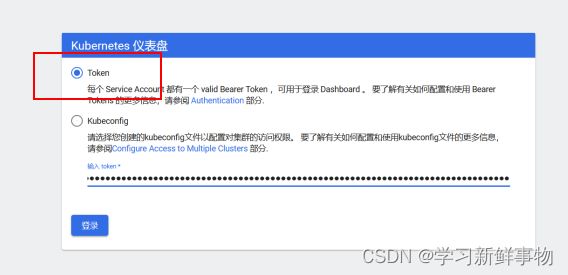

3.4、访问Token配置

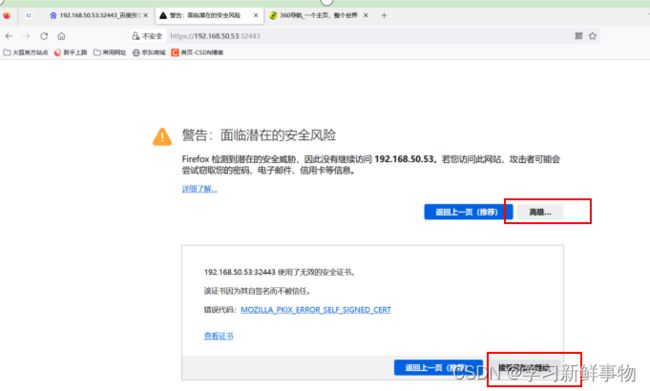

使用浏览器测试访问 https://192.168.50.53:32443

可以看到出现如上图画面,需要我们输入一个kubeconfig文件或者一个token。事实上在安装dashboard时,也为我们默认创建好了一个serviceaccount,为kubernetes-dashboard,并为其生成好了token,我们可以通过如下指令获取该sa的token:

[root@k8s-master ~]# kubectl describe secret -n kubernetes-dashboard $(kubectl get secret -n kubernetes-dashboard |grep kubernetes-dashboard-token | awk '{print $1}') |grep token | awk '{print $2}'

kubernetes-dashboard-token-rkbt7

kubernetes.io/service-account-token

eyJhbGciOiJSUzI1NiIsImtpZCI6InpaZjBUd1kzQ3pFOFJLZElNSlhVZWlyTEhzcnRIU2MxZkhaR3hFYVR2eFUifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZC10b2tlbi1ya2J0NyIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50LnVpZCI6IjE3OWVhZTcwLWY2YWQtNDY4Ny1iZTI5LTkxMmE2ODQ5NzI3MCIsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDprdWJlcm5ldGVzLWRhc2hib2FyZDprdWJlcm5ldGVzLWRhc2hib2FyZCJ9.wL32PeNumXPwonxUfYIxNfySh_r8Wf4_k_ay0avtwZvhP-PZCvBHizeagDKoTtUhEJRQhMbCH7dza9zrR_vPPJgmxXeOJjbnB4oUQXDGdfZIKhsBGUhX8zzuZ94gBueR3fNWPxHikH4rFvWs8mmgWi2UvMCkHPAUBOp0xhkUfe8k8jhlwOQLfsFvbrohTAyfKGcrKVK5EGpxeD1TbbaxzP17bPHNXsaZ78c44Srgf6WBj3L3hT2RYZMMeZP1XbB7T3CqiQi-6lLoGmgUVfQ9CIIlZ2_VUyplfA3vQQcv5hFdxkIDjVT630rdjr2Hkj0Kp5XQ3TUUDRUfZWXY6kDqCg
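The command above prints three lines because grep token also matches the Name and Type lines of the secret. A stricter filter prints only the token value. This is a sketch under the assumption that the describe output labels the field exactly as token:; the function name and the shortened sample text are made up for illustration.

```shell
#!/bin/sh
# Print only the value of the "token:" field from `kubectl describe secret`
# output read on stdin. Matching the whole first field skips the Name and
# Type lines, which merely contain the word "token".
extract_token() {
  awk '$1 == "token:" { print $2 }'
}

# Shortened, made-up sample of the describe output.
printf '%s\n' \
  'Name:         kubernetes-dashboard-token-xxxxx' \
  'Type:  kubernetes.io/service-account-token' \
  'token:      aaa.bbb.ccc' | extract_token
```

On the cluster, the same filter could replace the trailing grep token | awk '{print $2}' stage of the original pipeline.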

4. Deploying the metrics-server service

4.1 Pulling the image on the Node hosts

Heapster has been replaced by metrics-server, the tool that collects resource metrics in K8S.

On all node hosts:

[root@k8s-node01 ~]# docker pull bluersw/metrics-server-amd64:v0.3.6

v0.3.6: Pulling from bluersw/metrics-server-amd64

Digest: sha256:c9c4e95068b51d6b33a9dccc61875df07dc650abbf4ac1a19d58b4628f89288b

Status: Image is up to date for bluersw/metrics-server-amd64:v0.3.6

docker.io/bluersw/metrics-server-amd64:v0.3.6

Usage: docker tag SOURCE_IMAGE[:TAG] TARGET_IMAGE[:TAG]

Create a tag TARGET_IMAGE that refers to SOURCE_IMAGE

[root@k8s-node01 ~]# docker tag bluersw/metrics-server-amd64:v0.3.6 k8s.gcr.io/metrics-server-amd64:v0.3.6

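4.2 Modifying the Kubernetes apiserver startup flags

Add the following option to the kube-apiserver command. The apiserver restarts automatically after the file is changed.

Add one line below line 39: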

[root@k8s-master ~]# vim /etc/kubernetes/manifests/kube-apiserver.yaml

40 - --enable-aggregator-routing=true

4.3 Deploying from the Master

[root@k8s-master manifests]# wget https://github.com/kubernetes-sigs/metrics-server/releases/download/v0.3.6/components.yaml

Connecting to objects.githubusercontent.com (objects.githubusercontent.com)|185.199.110.133|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 3335 (3.3K) [application/octet-stream]

Saving to: 'components.yaml'

100%[=============================================================>] 3,335 --.-K/s in 0s

2023-08-17 16:18:16 (58.9 MB/s) - 'components.yaml' saved [3335/3335]

Edit the manifest (the leading numbers are line numbers in components.yaml):

[root@k8s-master ~]# vim components.yaml

91 - --kubelet-preferred-address-types=InternalIP

92 - --kubelet-insecure-tls

[root@k8s-master ~]# kubectl create -f components.yaml

clusterrole.rbac.authorization.k8s.io/system:aggregated-metrics-reader created

clusterrolebinding.rbac.authorization.k8s.io/metrics-server:system:auth-delegator created

rolebinding.rbac.authorization.k8s.io/metrics-server-auth-reader created

Warning: apiregistration.k8s.io/v1beta1 APIService is deprecated in v1.19+, unavailable in v1.22+; use apiregistration.k8s.io/v1 APIService

apiservice.apiregistration.k8s.io/v1beta1.metrics.k8s.io created

serviceaccount/metrics-server created

deployment.apps/metrics-server created

service/metrics-server created

clusterrole.rbac.authorization.k8s.io/system:metrics-server created

clusterrolebinding.rbac.authorization.k8s.io/system:metrics-server created

[root@k8s-master ~]# kubectl top nodes

error: metrics not available yet

Wait one to two minutes, then check again:

[root@k8s-master ~]# kubectl top nodes

NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%

k8s-master 84m 4% 1475Mi 53%

k8s-node01 32m 1% 1071Mi 29%

k8s-node02 20m 2% 425Mi 48%

五、弹性伸缩

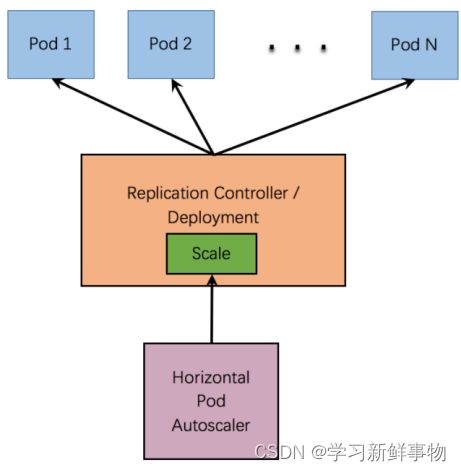

5.1、弹性伸缩介绍

HPA(Horizontal Pod Autoscaler,Pod水平自动伸缩)的操作对象是replication controller, deployment, replica set, stateful set 中的pod数量。注意,Horizontal Pod Autoscaling不适用于无法伸缩的对象,例如DaemonSets。

HPA根据观察到的CPU使用量与用户的阈值进行比对,做出是否需要增减实例(Pods)数量的决策。控制器会定期调整副本控制器或部署中副本的数量,以使观察到的平均CPU利用率与用户指定的目标相匹配。

5.2、弹性伸缩工作原理

Horizontal Pod Autoscaler 会实现为一个控制循环,其周期由--horizontal-pod-autoscaler-sync-period选项指定(默认15秒)。

在每个周期内,controller manager都会根据每个HorizontalPodAutoscaler定义的指定的指标去查询资源利用率。 controller manager从资源指标API(针对每个pod资源指标)或自定义指标API(针对所有其他指标)获取指标。

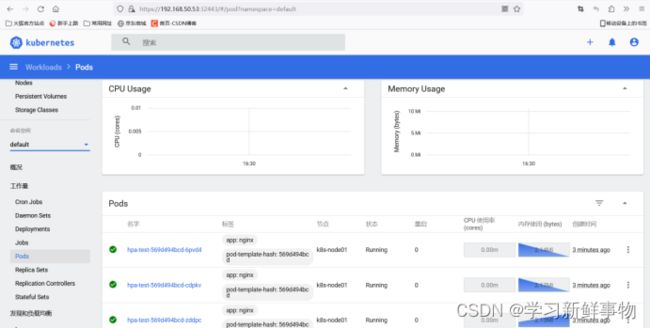
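The replica count the control loop aims for follows the formula in the Kubernetes documentation: desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue), bounded by the configured minimum and maximum. The sketch below reproduces only that arithmetic with integer percentages. It deliberately ignores the controller's tolerance band, stabilization windows, and handling of unready Pods, and the function name is made up for this example.

```shell
#!/bin/sh
# desired_replicas CURRENT_REPLICAS CURRENT_UTIL TARGET_UTIL MIN MAX
# Integer form of ceil(current_replicas * current_util / target_util),
# clamped to the [MIN, MAX] range configured on the HPA.
desired_replicas() (
  want=$(( ($1 * $2 + $3 - 1) / $3 ))
  if [ "$want" -lt "$4" ]; then want=$4; fi
  if [ "$want" -gt "$5" ]; then want=$5; fi
  echo "$want"
)

desired_replicas 3 11 5 1 10    # 3 Pods at 11% against a 5% target
desired_replicas 10 11 5 1 10   # already at the maximum
desired_replicas 10 0 5 1 10    # idle: falls back to the minimum
```

With a 5% target, a minimum of 1, and a maximum of 10, these three calls print 7, 10, and 1.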

5.3 Elastic scaling in practice

[root@k8s-master ~]# mkdir hpa

[root@k8s-master ~]# cd hpa/

Create the Deployment for the HPA test application:

[root@k8s-master hpa]# vim nginx.yaml

[root@k8s-master hpa]# cat nginx.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

  name: hpa-test

  labels:

    app: hpa

spec:

  replicas: 3

  selector:

    matchLabels:

      app: nginx

  template:

    metadata:

      labels:

        app: nginx

    spec:

      containers:

      - name: nginx

        image: nginx:1.19.6

        ports:

        - containerPort: 80

        resources:

          requests:

            cpu: 0.010

            memory: 100Mi

          limits:

[root@k8s-master hpa]# kubectl apply -f nginx.yaml

deployment.apps/hpa-test created

[root@k8s-master hpa]# kubectl get pod

NAME READY STATUS RESTARTS AGE

hpa-test-569d494bcd-6pvd4 0/1 ContainerCreating 0 2m14s

hpa-test-569d494bcd-cdpkv 1/1 Running 0 2m14s

hpa-test-569d494bcd-zddpc 1/1 Running 0 2m14s

Create the HPA policy:

[root@k8s-master hpa]# kubectl autoscale --max=10 --min=1 --cpu-percent=5 deployment hpa-test

horizontalpodautoscaler.autoscaling/hpa-test autoscaled

[root@k8s-master hpa]# kubectl get hpa

NAME REFERENCE TARGETS MINPODS MAXPODS REPLICAS AGE

hpa-test Deployment/hpa-test 0%/5% 1 10 3 46s

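The output shows a HorizontalPodAutoscaler object named hpa-test that is bound to the Deployment named hpa-test. Current CPU utilization is 0% against a target of 5%, the minimum and maximum replica counts are 1 and 10, and there are currently 3 Pod replicas.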
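CPU utilization for the HPA is measured relative to each container's CPU request, so it helps to work out what the 5% target means in absolute terms. The sketch below converts the request from nginx.yaml to millicores and computes the corresponding per-Pod threshold. Both function names are made up for this illustration.

```shell
#!/bin/sh
# Convert a Kubernetes CPU quantity ("0.010", "250m", "2") to millicores.
cpu_to_millicores() {
  awk -v q="$1" 'BEGIN {
    if (q ~ /m$/) { sub(/m$/, "", q); printf "%.0f\n", q }
    else          { printf "%.0f\n", q * 1000 }
  }'
}

# Average per-Pod CPU usage (in millicores) that corresponds to a given
# utilization percentage of the request.
target_millicores() {
  awk -v r="$(cpu_to_millicores "$1")" -v p="$2" 'BEGIN { printf "%.1f\n", r * p / 100 }'
}

cpu_to_millicores 0.010     # request from nginx.yaml
target_millicores 0.010 5   # target set by --cpu-percent=5
```

The request of 0.010 CPU is 10 millicores, so the 5% target corresponds to an average of about half a millicore per Pod. That is why even a simple request loop is enough to trigger scaling in this exercise.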

[root@k8s-master hpa]# kubectl get pod

NAME READY STATUS RESTARTS AGE

hpa-test-569d494bcd-6pvd4 1/1 Running 0 2m36s

hpa-test-569d494bcd-cdpkv 1/1 Running 0 2m36s

hpa-test-569d494bcd-zddpc 1/1 Running 0 2m36s

The command lists the Pods in the default namespace with their name, number of ready containers, status, restart count, and age. In this output, the three Pods hpa-test-569d494bcd-6pvd4, hpa-test-569d494bcd-cdpkv, and hpa-test-569d494bcd-zddpc are all ready and Running, each has a single container, and none has restarted.

Simulate load on the application:

[root@k8s-master hpa]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

hpa-test-569d494bcd-6pvd4 1/1 Running 0 4m7s 10.244.2.9 k8s-node01 <none> <none>

hpa-test-569d494bcd-cdpkv 1/1 Running 0 4m7s 10.244.2.8 k8s-node01 <none> <none>

hpa-test-569d494bcd-zddpc 1/1 Running 0 4m7s 10.244.2.7 k8s-node01 <none> <none>

[root@k8s-master hpa]# while true;do curl -l 10.244.2.8 ;done

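This loop keeps running curl against the Pod at 10.244.2.8 until it is stopped manually (for example with Ctrl+C). while true loops forever, and curl is a command-line tool that sends an HTTP request and prints the response. Here the loop serves to generate load on the Pod so that its CPU usage rises above the HPA target.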
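An endless loop has to be interrupted by hand. As an alternative sketch, the helper below runs a command a fixed number of times and reports how many invocations succeeded. The function name is made up for this example, and true is used as a stand-in command so the sketch runs without a cluster.

```shell
#!/bin/sh
# run_load COUNT COMMAND [ARGS...]
# Run COMMAND COUNT times and report how many invocations succeeded.
run_load() (
  count=$1; shift
  ok=0; i=0
  while [ "$i" -lt "$count" ]; do
    if "$@" > /dev/null 2>&1; then ok=$((ok + 1)); fi
    i=$((i + 1))
  done
  echo "$ok/$count succeeded"
)

# Stand-in command so the sketch runs anywhere; against the cluster this
# could be: run_load 10000 curl -s 10.244.2.8
run_load 5 true
```

A bounded run makes the test repeatable and gives a rough success count, at the cost of having to pick a request count large enough to keep the load up for several HPA sync periods.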

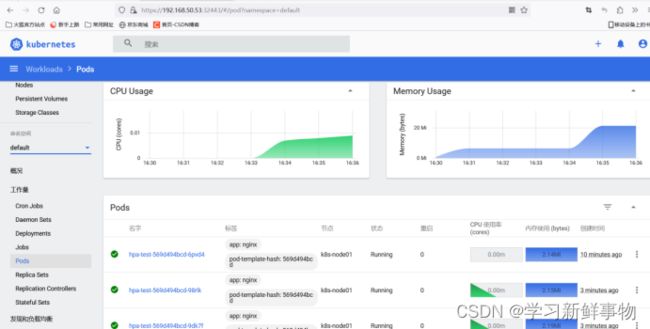

Observe resource usage and the scaling behavior:

[root@k8s-master hpa]# kubectl get hpa

NAME REFERENCE TARGETS MINPODS MAXPODS REPLICAS AGE

hpa-test Deployment/hpa-test 11%/5% 1 10 10 12m

[root@k8s-master hpa]# kubectl get pod

NAME READY STATUS RESTARTS AGE

hpa-test-569d494bcd-6pvd4 1/1 Running 0 8m8s

hpa-test-569d494bcd-98rlk 1/1 Running 0 92s

hpa-test-569d494bcd-9dk7f 1/1 Running 0 92s

hpa-test-569d494bcd-cdpkv 1/1 Running 0 8m8s

hpa-test-569d494bcd-jm4lw 1/1 Running 0 107s

hpa-test-569d494bcd-lghrq 1/1 Running 0 92s

hpa-test-569d494bcd-rn9nq 1/1 Running 0 107s

hpa-test-569d494bcd-rsr4g 1/1 Running 0 92s

hpa-test-569d494bcd-zddpc 1/1 Running 0 8m8s

hpa-test-569d494bcd-zm6bx 1/1 Running 0 107s

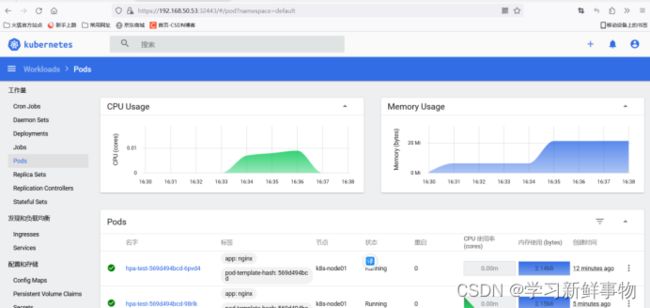

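After the load test is stopped, the number of Pods shrinks automatically after a short wait. Scale-down is not immediate: by default the HPA applies a five-minute stabilization window, which is why the output below still shows 10 replicas shortly after utilization has dropped to 0%.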

[root@k8s-master hpa]# kubectl get hpa

NAME REFERENCE TARGETS MINPODS MAXPODS REPLICAS AGE

hpa-test Deployment/hpa-test 0%/5% 1 10 10 16m

Delete the HPA policy:

[root@k8s-master ~]# kubectl delete hpa hpa-test

horizontalpodautoscaler.autoscaling "hpa-test" deleted

[root@k8s-master ~]# kubectl get hpa

No resources found in default namespace.
