Quickly Deploying a K8s Cluster with kubeadm

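Contents

1. Environment preparation

2. Environment initialization

3. Installing the required software on all hosts

3.1 Installing Docker

3.2 Configuring the Kubernetes yum repository

3.3 Installing kubelet, kubeadm, and kubectl

4. Deploying the Kubernetes master

5. Joining the Kubernetes nodes

6. Deploying the CNI network plugin

7. Testing the cluster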

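1. Environment preparation

I use CentOS 7 and three virtual machines: one master and two worker nodes, node1 and node2.

The hostnames and IP addresses I assigned are as follows:

| Hostname | IP address |
| --- | --- |
| k8smaster | 192.168.198.150 |
| k8snode1 | 192.168.198.151 |
| k8snode2 | 192.168.198.152 |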

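2. Environment initialization

Once the virtual machines are ready, the firewall, SELinux, and swap must be disabled on every one of them.

Run the following on all hosts:

# Disable the firewall
# Temporarily (until the next reboot):
systemctl stop firewalld
# Permanently:
systemctl disable firewalld

# Disable SELinux
# Temporarily:
setenforce 0
# Permanently (takes effect after a reboot):
sed -i 's/^SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config

# Disable swap
# Temporarily:
swapoff -a
# Permanently (comments out every swap entry in /etc/fstab):
sed -ri 's/.*swap.*/#&/' /etc/fstab

# Pass bridged IPv4 and IPv6 traffic to iptables
# These sysctl keys only exist once the br_netfilter kernel module is loaded
modprobe br_netfilter
cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
# Apply the settings
sysctl --system

# Synchronize the system time
yum install ntpdate -y
ntpdate time.windows.com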
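
The permanent SELinux and swap edits above rewrite system files in place, so it can be worth trying them on scratch copies first. The following is a minimal sketch of that idea; the helper names `disable_selinux_in` and `comment_out_swap_in` and the sample file contents are made up for illustration, and the real `/etc/selinux/config` and `/etc/fstab` on your hosts will look different.

```shell
#!/bin/sh
# disable_selinux_in FILE: switch the SELINUX= setting from enforcing to disabled.
# Only the setting line is matched, so comment lines mentioning "enforcing" stay intact.
disable_selinux_in() {
    sed -i 's/^SELINUX=enforcing/SELINUX=disabled/' "$1"
}

# comment_out_swap_in FILE: prefix every line that mentions swap with '#'.
comment_out_swap_in() {
    sed -ri 's/.*swap.*/#&/' "$1"
}

# Try both edits on scratch copies (hypothetical sample contents).
workdir=$(mktemp -d)
printf '%s\n' '# enforcing - SELinux security policy is enforced.' \
    'SELINUX=enforcing' 'SELINUXTYPE=targeted' > "$workdir/selinux-config"
printf '%s\n' '/dev/mapper/centos-root / xfs defaults 0 0' \
    '/dev/mapper/centos-swap swap swap defaults 0 0' > "$workdir/fstab"

disable_selinux_in "$workdir/selinux-config"
comment_out_swap_in "$workdir/fstab"

# Inspect the results before touching the real files.
cat "$workdir/selinux-config" "$workdir/fstab"
```

If the scratch results look right, the same two `sed` commands can be pointed at the real files as root.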

Run the following only on the master (192.168.198.150):

# Add the cluster hosts to /etc/hosts on the master; adjust the names and IPs to match your own setup
cat >> /etc/hosts << EOF
192.168.198.150 k8smaster
192.168.198.151 k8snode1
192.168.198.152 k8snode2
EOF
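
Because the command above appends with `>>`, running it a second time duplicates the entries. One possible way to make the step repeatable is sketched below; `add_host` is a hypothetical helper, not part of any tool, and the demonstration writes to a scratch file rather than the real `/etc/hosts`.

```shell
#!/bin/sh
# add_host FILE IP NAME: append "IP NAME" to FILE unless that exact line already exists.
add_host() {
    file=$1
    ip=$2
    name=$3
    if ! grep -qxF "$ip $name" "$file" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
}

# Demonstrate on a scratch file; on a real host pass /etc/hosts and run as root.
hosts_file=$(mktemp)
for round in 1 2; do
    # The second round adds nothing because every entry is already present.
    add_host "$hosts_file" 192.168.198.150 k8smaster
    add_host "$hosts_file" 192.168.198.151 k8snode1
    add_host "$hosts_file" 192.168.198.152 k8snode2
done
cat "$hosts_file"
```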

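3. Installing the required software on all hosts

Run every installation step in this section on all hosts.

3.1 Installing Docker

# Use the Docker CE repository provided by Alibaba Cloud
curl -o /etc/yum.repos.d/docker-ce.repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# Refresh the yum cache
yum clean all && yum makecache

# List the versions available in the repository
yum list docker-ce --showduplicates | sort -r

# Install a specific version with yum
yum install -y docker-ce-20.10.6

# An older version can be installed the same way
#yum install -y docker-ce-18.09.9

# Start Docker and enable it at boot
systemctl start docker
systemctl enable docker

# Check the version; if the information is printed, Docker is installed and running
docker version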

Next, configure a registry mirror (accelerator). Log in to your own Alibaba Cloud account, open the Container Registry service, and copy the setup steps shown there.

3.2 Configuring the Kubernetes yum repository

# Configure the Alibaba Cloud Kubernetes yum repository so the packages below can be installed
cat > /etc/yum.repos.d/kubernetes.repo << EOF
[kubernetes]
name=Kubernetes
baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
       http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

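3.3 Installing kubelet, kubeadm, and kubectl

# Kubernetes is released frequently, so I pin the version here; the version can also be left unspecified
yum install -y kubelet-1.19.4 kubeadm-1.19.4 kubectl-1.19.4

# Enable kubelet at boot
systemctl enable kubelet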

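4. Deploying the Kubernetes master

Run the following only on the master (192.168.198.150):

# Initialize the control plane. The flags are:
#   --apiserver-advertise-address  the IP address of the master host
#   --image-repository             pull the images from the Alibaba Cloud registry
#   --kubernetes-version           must match the version of the packages you installed
#   --service-cidr                 any range that does not conflict with other addresses in use
#   --pod-network-cidr             likewise, any range that does not conflict with other addresses
kubeadm init \
  --apiserver-advertise-address=192.168.198.150 \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version v1.19.4 \
  --service-cidr=10.88.0.0/12 \
  --pod-network-cidr=10.240.0.0/16

# The trailing backslashes only continue the command on the next line for readability. It is one complete command, equivalent to the single line below; run one form or the other, not both
kubeadm init --apiserver-advertise-address=192.168.198.150 --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.19.4 --service-cidr=10.88.0.0/12 --pod-network-cidr=10.240.0.0/16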
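
"Does not conflict" can be checked with a little arithmetic. The sketch below, with made-up helper names, computes the address range each CIDR covers and tests the ranges for overlap. The `/24` mask for the host network is an assumption, since only the host IPs are listed in this guide. It also shows that `10.88.0.0/12` is not aligned to a /12 boundary: the block that contains it starts at `10.80.0.0`, which is consistent with the `kubernetes` service receiving `10.80.0.1` in the service listing later in this guide.

```shell
#!/bin/sh
# ip_to_int A.B.C.D: print the dotted address as a 32-bit integer.
ip_to_int() (
    IFS=.
    set -- $1
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
)

# int_to_ip N: print a 32-bit integer as a dotted address.
int_to_ip() {
    echo "$(( ($1 >> 24) & 255 )).$(( ($1 >> 16) & 255 )).$(( ($1 >> 8) & 255 )).$(( $1 & 255 ))"
}

# cidr_range CIDR: print "first last" (as integers) of the block containing CIDR.
cidr_range() {
    addr=$(ip_to_int "${1%/*}")
    bits=${1#*/}
    size=$(( 1 << (32 - $bits) ))
    first=$(( $addr / $size * $size ))   # round down to the block boundary
    echo "$first $(( $first + $size - 1 ))"
}

# cidrs_overlap A B: succeed (exit 0) when the two blocks share any address.
cidrs_overlap() {
    set -- $(cidr_range "$1") $(cidr_range "$2")
    [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
}

service_cidr=10.88.0.0/12
pod_cidr=10.240.0.0/16
host_net=192.168.198.0/24   # assumed netmask; the guide only lists host IPs

set -- $(cidr_range "$service_cidr")
echo "$service_cidr covers $(int_to_ip "$1") - $(int_to_ip "$2")"

for pair in "$service_cidr $pod_cidr" "$service_cidr $host_net" "$pod_cidr $host_net"; do
    set -- $pair
    if cidrs_overlap "$1" "$2"; then
        echo "OVERLAP: $1 and $2"
    else
        echo "ok: $1 and $2"
    fi
done
```

With the values used in this guide, none of the three pairs overlap.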

# After initialization, the following images have been pulled
[root@k8smaster ~]# docker images
REPOSITORY                                                        TAG        IMAGE ID       CREATED       SIZE
registry.aliyuncs.com/google_containers/kube-proxy                v1.19.4    635b36f4d89f   2 years ago   118MB
registry.aliyuncs.com/google_containers/kube-controller-manager   v1.19.4    4830ab618586   2 years ago   111MB
registry.aliyuncs.com/google_containers/kube-apiserver            v1.19.4    b15c6247777d   2 years ago   119MB
registry.aliyuncs.com/google_containers/kube-scheduler            v1.19.4    14cd22f7abe7   2 years ago   45.7MB
registry.aliyuncs.com/google_containers/etcd                      3.4.13-0   0369cf4303ff   2 years ago   253MB
registry.aliyuncs.com/google_containers/coredns                   1.7.0      bfe3a36ebd25   3 years ago   45.2MB
registry.aliyuncs.com/google_containers/pause                     3.2        80d28bedfe5d   3 years ago   683kB
[root@k8smaster ~]#

When kubeadm init finishes, the end of its output includes instructions for setting up kubectl.

Those three commands can be copied and run as they are:

# Run these commands so that kubectl can be used
mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

五、加入Kubernets Node

仅在两个Node节点(node1、node2)上执行:

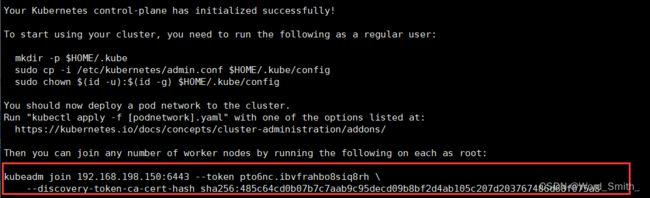

继续看到刚才在Master上执行完kubeamd init命令之后的结尾信息

#复制命令到Node节点:192.168.198.151(node1)和192.168.198.152(node2)上执行

kubeadm join 192.168.198.150:6443 --token pto6nc.ibvfrahbo8siq8rh \

--discovery-token-ca-cert-hash sha256:485c64cd0b07b7c7aab9c95decd09b8bf2d4ab105c207d203767486d68f075a8

在Master上可以看到节点信息,k8snode1和k8snode2就被加入进来了

[root@k8smaster ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8smaster NotReady master 21m v1.19.4

k8snode1 NotReady 98s v1.19.4

k8snode2 NotReady 88s v1.19.4 #默认token有效期为24小时,过期后就不可用了,需要重新创建token可以执行以下命令

kubeadm token create --print-join-command

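6. Deploying the CNI network plugin

Run the following on the master (192.168.198.150):

# Apply the flannel manifest directly from GitHub. The site is hosted overseas, so the download may fail; retry a few times if it does
kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

If it keeps failing, try this instead: run vim kube-flannel.yml, paste in the content below, save and exit, and then run kubectl apply -f kube-flannel.yml. Note that the Network value in net-conf.json should match the --pod-network-cidr passed to kubeadm init (10.240.0.0/16 in this guide); the manifest below carries flannel's default of 10.244.0.0/16, so edit it before applying.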

---
kind: Namespace
apiVersion: v1
metadata:
  name: kube-flannel
  labels:
    k8s-app: flannel
    pod-security.kubernetes.io/enforce: privileged
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: flannel
  name: flannel
rules:
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
- apiGroups:
  - networking.k8s.io
  resources:
  - clustercidrs
  verbs:
  - list
  - watch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: flannel
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-flannel
---
apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: flannel
  name: flannel
  namespace: kube-flannel
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-flannel
  labels:
    tier: node
    k8s-app: flannel
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
    k8s-app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        image: docker.io/flannel/flannel-cni-plugin:v1.2.0
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        image: docker.io/flannel/flannel:v0.22.2
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: docker.io/flannel/flannel:v0.22.2
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: EVENT_QUEUE_DEPTH
          value: "5000"
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
        - name: xtables-lock
          mountPath: /run/xtables.lock
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
      - name: xtables-lock
        hostPath:
          path: /run/xtables.lock
          type: FileOrCreate

# Apply the saved manifest

[root@k8smaster ~]# kubectl apply -f kube-flannel.yml
namespace/kube-flannel created
clusterrole.rbac.authorization.k8s.io/flannel created
clusterrolebinding.rbac.authorization.k8s.io/flannel created
serviceaccount/flannel created
configmap/kube-flannel-cfg created
daemonset.apps/kube-flannel-ds created
[root@k8smaster ~]#

# Check the pod status again; anything that is not ready yet should come up after a short wait
[root@k8smaster ~]# kubectl get pods -n kube-system
NAME                                READY   STATUS    RESTARTS   AGE
coredns-6d56c8448f-82vp9            0/1     Pending   0          64m
coredns-6d56c8448f-vdlrw            0/1     Pending   0          64m
etcd-k8smaster                      1/1     Running   0          64m
kube-apiserver-k8smaster            1/1     Running   0          64m
kube-controller-manager-k8smaster   1/1     Running   0          64m
kube-proxy-89dm9                    1/1     Running   0          64m
kube-proxy-ltrtj                    1/1     Running   0          44m
kube-proxy-ngph4                    1/1     Running   0          44m
kube-scheduler-k8smaster            1/1     Running   0          64m
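
Instead of scanning the listing by eye on every retry, the output can be filtered for pods that are not yet Running. This is a small sketch under the assumption that STATUS is the third column, as it is in the listing above; `not_running` is a hypothetical helper name, and the sample input is a shortened copy of that listing.

```shell
#!/bin/sh
# not_running: read `kubectl get pods` output on stdin and print the name and
# status of every pod whose STATUS column (third field) is not "Running".
not_running() {
    awk 'NR > 1 && $3 != "Running" { print $1, $3 }'
}

# Shortened sample taken from the listing above. On a real cluster, use:
#   kubectl get pods -n kube-system | not_running
sample='NAME READY STATUS RESTARTS AGE
coredns-6d56c8448f-82vp9 0/1 Pending 0 64m
coredns-6d56c8448f-vdlrw 0/1 Pending 0 64m
etcd-k8smaster 1/1 Running 0 64m
kube-proxy-89dm9 1/1 Running 0 64m'

printf '%s\n' "$sample" | not_running
```

An empty result means every listed pod is Running. The manifest above creates the flannel pods in the kube-flannel namespace, so the same filter can be applied to `kubectl get pods -n kube-flannel` to check the network plugin itself.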

# Check the node status; every node should be Ready
[root@k8smaster ~]# kubectl get nodes
NAME        STATUS   ROLES    AGE   VERSION
k8smaster   Ready    master   91m   v1.19.4
k8snode1    Ready    <none>   71m   v1.19.4
k8snode2    Ready    <none>   71m   v1.19.4

7. Testing the cluster

Run the following on the master (192.168.198.150).

Create a pod in the Kubernetes cluster to verify that it runs normally:

# Create an nginx deployment (this pulls the nginx image)
[root@k8smaster ~]# kubectl create deployment nginx --image=nginx
deployment.apps/nginx created

# Wait for the status to become Running
[root@k8smaster ~]# kubectl get pod
NAME                     READY   STATUS              RESTARTS   AGE
nginx-6799fc88d8-s2pt9   0/1     ContainerCreating   0          67s

# Expose a port so the service can be reached from outside the cluster
[root@k8smaster ~]# kubectl expose deployment nginx --port=80 --type=NodePort
service/nginx exposed

# Check the exposed port. My pod has not started yet, so its status still shows ContainerCreating
[root@k8smaster ~]# kubectl get pod,svc
NAME                         READY   STATUS              RESTARTS   AGE
pod/nginx-6799fc88d8-s2pt9   0/1     ContainerCreating   0          11m

NAME                 TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)        AGE
service/kubernetes   ClusterIP   10.80.0.1       <none>        443/TCP        109m
service/nginx        NodePort    10.81.133.109   <none>        80:32061/TCP   48s
[root@k8smaster ~]#

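As shown, port 80 is mapped to NodePort 32061. My pod was still stuck in ContainerCreating and very slow; I had finished two cups of goji berry tea and it still was not ready (I am not sure whether the cause was network speed or too few resources given to the VMs), so I cannot demonstrate the result here. Once the status is Running, you can test by taking the IP of any node, appending port 32061, and opening it in a browser, which should show the nginx welcome page.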
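
The test URL can also be assembled in the shell from the PORT(S) value in the service listing. This is only a sketch: `node_port` is a made-up helper, the node IP is one of the two worker addresses from the table at the top, and the `curl` call is left commented out because it needs a running cluster.

```shell
#!/bin/sh
# node_port PORTS: extract the NodePort from a PORT(S) value such as "80:32061/TCP".
node_port() {
    after_colon=${1#*:}          # drop the service port and the colon
    echo "${after_colon%%/*}"    # drop the protocol suffix
}

node_ip=192.168.198.151            # any node IP should work
port=$(node_port "80:32061/TCP")   # value copied from the service listing above
url="http://$node_ip:$port"
echo "$url"

# Once the pod is Running, fetch the welcome page:
#   curl -s "$url" | grep -i '<title>'
```

On a live cluster the port can be read directly with `kubectl get svc nginx -o jsonpath='{.spec.ports[0].nodePort}'` instead of parsing the table.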