[Ops] Hadoop 3.0.3 Cluster Installation (Part 1): Multi-Node Installation

Contents

- I. Purpose
- II. Prerequisites
- III. Installation
  - 1. Node planning
  - 2. Configuring Hadoop in Non-Secure Mode
  - 3. Preparation
  - 4. Configuration
    - core-site.xml
    - hdfs-site.xml
    - yarn-site.xml
    - mapred-site.xml
    - workers
  - 4. Distribute the configuration and create directories
  - 5. Format the NameNode
  - 6. Managing the daemons
    - 6.1. HDFS
      - Start
      - Stop
    - 6.2. YARN
      - Start
      - Stop
  - 7. Web access

I. Purpose

This document describes how to install and configure Hadoop clusters ranging from a few nodes to extremely large clusters with thousands of nodes.

This document does not cover advanced topics such as Security or High Availability.

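In short, this article covers installing a multi-node Hadoop cluster (anywhere from a few nodes to thousands) and leaves out high availability and security.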

II. Prerequisites

- Java 8

- A stable Hadoop release from a download mirror; this article uses Hadoop 3.0.3.
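Before going further it can help to confirm the Java requirement on each node. The sketch below is only an illustration: the function names `java_major_version` and `installed_java_version` are made up for this article, and it assumes `java -version` prints a quoted version string such as `"1.8.0_292"`.

```shell
#!/bin/sh
# Extract the major Java version from a version string such as
# "1.8.0_292" (Java 8 and older) or "11.0.2" (Java 9 and newer).
java_major_version() {
    case "$1" in
        1.*) echo "$1" | cut -d. -f2 ;;   # legacy scheme: 1.8.x -> 8
        *)   echo "$1" | cut -d. -f1 ;;   # newer scheme: 11.x -> 11
    esac
}

# Read the quoted version from `java -version`, which writes to stderr.
installed_java_version() {
    java -version 2>&1 | sed -n 's/.*version "\([^"]*\)".*/\1/p' | head -n 1
}

# Usage (needs java on PATH):
#   v=$(installed_java_version)
#   [ "$(java_major_version "$v")" = "8" ] || echo "expected Java 8, found: $v"
```

Keeping the parsing in its own function makes it easy to try against a version string without Java installed.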

III. Installation

Typically one machine in the cluster is designated as the NameNode and another machine as the ResourceManager, exclusively. These are the masters.

Other services (such as Web App Proxy Server and MapReduce Job History server) are usually run either on dedicated hardware or on shared infrastructure, depending upon the load.

The rest of the machines in the cluster act as both DataNode and NodeManager. These are the slaves.

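- Master nodes: typically one machine in the cluster is designated as the NameNode and another as the ResourceManager.

- Worker nodes: the remaining machines in the cluster act as both DataNode and NodeManager.

- Other services (such as the Web App Proxy Server and the MapReduce Job History Server) usually run on dedicated hardware or on shared infrastructure, depending on the load. Here I placed them on a node other than the master node.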

1. Node planning

Following the recommendations above, I planned the components across two nodes:

| Node | HDFS components | YARN components |
|---|---|---|
| 10.xxx (node1) | NameNode, DataNode | ResourceManager, NodeManager |
| 10.xxx (node2) | SecondaryNameNode, DataNode | NodeManager, JobHistoryServer |

2. Configuring Hadoop in Non-Secure Mode

HDFS daemons are NameNode, SecondaryNameNode, and DataNode. YARN daemons are ResourceManager, NodeManager, and WebAppProxy. If MapReduce is to be used, then the MapReduce Job History Server will also be running. For large installations, these are generally running on separate hosts.

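HDFS daemons: NameNode, SecondaryNameNode, and DataNode.

YARN daemons: ResourceManager, NodeManager, and WebAppProxy (not planned in this setup for now).

If MapReduce jobs are run, the MapReduce Job History Server is needed as well.

Note:

In large installations, these daemons generally run on separate hosts.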

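3. Preparation

On every node (node1 and node2), create the install directory, unpack the release tarball there, and link it to a version-independent path. This assumes hadoop.tar.gz has already been copied into /home/user/hadoop: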

mkdir -p /home/user/hadoop

cd /home/user/hadoop

tar -zxvf hadoop.tar.gz

ln -s hadoop-3.0.3 hadoop

Set the environment variables:

vim ~/.bashrc

# Add the following lines

export HADOOP_HOME=/home/user/hadoop/hadoop

export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH

export HADOOP_CONF_DIR=/home/user/hadoop/hadoop/etc/hadoop

# Then reload the shell configuration

source ~/.bashrc
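After reloading the shell, a quick sanity check of the three variables can save time later. This is a minimal sketch; `path_contains` and `check_hadoop_env` are hypothetical helper names, not part of Hadoop.

```shell
#!/bin/sh
# Succeed if directory $1 is one of the colon-separated entries of $PATH.
path_contains() {
    case ":$PATH:" in
        *":$1:"*) return 0 ;;
        *)        return 1 ;;
    esac
}

# Print one line per check; nothing here modifies the environment.
check_hadoop_env() {
    for d in "$HADOOP_HOME/bin" "$HADOOP_HOME/sbin"; do
        if path_contains "$d"; then
            echo "ok: $d is on PATH"
        else
            echo "missing from PATH: $d"
        fi
    done
    if [ -d "$HADOOP_CONF_DIR" ]; then
        echo "ok: HADOOP_CONF_DIR exists"
    else
        echo "HADOOP_CONF_DIR is not a directory: $HADOOP_CONF_DIR"
    fi
}

# Usage:
#   check_hadoop_env
#   hadoop version    # should report 3.0.3 if the link and PATH are right
```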

4. Configuration

All of the files below are in /{user_home}/hadoop/hadoop/etc/hadoop/.

core-site.xml

<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://namenodeIp:9000</value>
    <description>
      Replace namenodeIp with the IP address of the host running the NameNode.
    </description>
  </property>
</configuration>
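Once core-site.xml is saved, the value Hadoop actually resolves can be compared with the intended one using `hdfs getconf -confKey`. The sketch below assumes the `hdfs` command is on PATH and HADOOP_CONF_DIR points at the edited files; `default_fs_uri` and `check_default_fs` are hypothetical helper names.

```shell
#!/bin/sh
# Build the fs.defaultFS URI for a NameNode host ($1) and RPC port ($2).
default_fs_uri() {
    echo "hdfs://$1:$2"
}

# Compare the value Hadoop resolves from its configuration with the expected one.
check_default_fs() {
    expected=$(default_fs_uri "$1" "$2")
    actual=$(hdfs getconf -confKey fs.defaultFS)
    if [ "$actual" = "$expected" ]; then
        echo "fs.defaultFS ok: $actual"
    else
        echo "fs.defaultFS mismatch: expected $expected, got $actual"
        return 1
    fi
}

# Usage (host and port are whatever was written into core-site.xml):
#   check_default_fs node1 9000
```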

hdfs-site.xml

<configuration>
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>/opt/data/hdfs/namenode,/opt/data02/hdfs/namenode</value>
    <description>
      Path on the local filesystem where the NameNode stores the namespace and transaction logs persistently.
      If this is a comma-delimited list of directories, then the name table is replicated in all of the directories, for redundancy.
    </description>
  </property>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>/opt/data/hdfs/data,/opt/data02/hdfs/data</value>
    <description>
      If this is a comma-delimited list of directories, then data will be stored in all named directories, typically on different devices.
    </description>
  </property>
</configuration>

yarn-site.xml

<configuration>
  <property>
    <name>yarn.resourcemanager.address</name>
    <value>node1:8832</value>
  </property>
  <property>
    <name>yarn.resourcemanager.scheduler.address</name>
    <value>node1:8830</value>
  </property>
  <property>
    <name>yarn.resourcemanager.resource-tracker.address</name>
    <value>node1:8831</value>
  </property>
  <property>
    <name>yarn.resourcemanager.admin.address</name>
    <value>node1:8833</value>
  </property>
  <property>
    <name>yarn.resourcemanager.webapp.address</name>
    <value>node1:8888</value>
  </property>
  <property>
    <name>yarn.resourcemanager.hostname</name>
    <value>node1</value>
    <description>Host name of the ResourceManager, which runs on node1 in this plan.</description>
  </property>
  <property>
    <name>yarn.nodemanager.local-dirs</name>
    <value>/data/yarn/nm-local-dir,/data02/yarn/nm-local-dir</value>
  </property>
  <property>
    <name>yarn.nodemanager.log-dirs</name>
    <value>/home/user/hadoop/yarn/userlogs</value>
  </property>
  <property>
    <name>yarn.nodemanager.remote-app-log-dir</name>
    <value>/home/user/hadoop/yarn/containerlogs</value>
    <description>
      Directory on the default file system (HDFS here) into which application logs are aggregated.
      It is used only when yarn.log-aggregation-enable is true.
    </description>
  </property>
  <property>
    <name>yarn.nodemanager.resource.memory-mb</name>
    <value>61440</value>
    <description>Set this according to the machine's physical memory (check it with free -h).</description>
  </property>
</configuration>
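The memory-mb value is typically the machine's physical memory minus some headroom for the operating system and the Hadoop daemons. The sketch below shows that arithmetic; the 4096 MB reserve is purely an assumed figure for illustration (it happens to give 61440 on a 64 GB host), and `nm_memory_mb` and `total_memory_mb` are hypothetical helper names.

```shell
#!/bin/sh
# Memory to hand to YARN containers: total RAM ($1) minus a reserve ($2)
# for the OS and the Hadoop daemons. Both arguments are in MB.
nm_memory_mb() {
    echo $(( $1 - $2 ))
}

# Total physical memory in MB, read from /proc/meminfo (Linux only).
total_memory_mb() {
    awk '/^MemTotal:/ { printf "%d\n", $2 / 1024 }' /proc/meminfo
}

# Usage, reserving an assumed 4096 MB on the current machine:
#   nm_memory_mb "$(total_memory_mb)" 4096
```

How much to reserve depends on what else runs on the node, so treat the result as a starting point rather than a rule.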

mapred-site.xml

<configuration>
  <property>
    <name>mapreduce.jobhistory.address</name>
    <value>node2:10020</value>
  </property>
  <property>
    <name>mapreduce.jobhistory.webapp.address</name>
    <value>node2:19888</value>
  </property>
</configuration>

workers

List the worker nodes, one host name per line:

node1

node2
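Since later steps copy files to the other node over SSH, it can be useful to check that every host in the workers file is reachable. The sketch below is an illustration only: it assumes key-based SSH login is already set up (something this article does not cover), skips blank and `#` lines as a defensive measure, and uses the hypothetical names `list_workers` and `check_workers_ssh`.

```shell
#!/bin/sh
# Print the host names listed in a workers file ($1), skipping blank
# lines and lines that start with '#'.
list_workers() {
    grep -v -e '^[[:space:]]*$' -e '^[[:space:]]*#' "$1"
}

# Check that each worker answers over SSH without a password prompt.
# BatchMode makes ssh fail instead of asking for a password, and -n
# stops ssh from consuming the host list on stdin.
check_workers_ssh() {
    list_workers "$1" | while read -r host; do
        if ssh -n -o BatchMode=yes -o ConnectTimeout=5 "$host" true 2>/dev/null; then
            echo "ok: $host"
        else
            echo "unreachable: $host"
        fi
    done
}

# Usage:
#   check_workers_ssh "$HADOOP_CONF_DIR/workers"
```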

4. Distribute the configuration and create directories

Copy the configuration to the other node:

scp -r \
  /home/user/hadoop/hadoop/etc/hadoop/ \
  root@node2hostname:/home/user/hadoop/hadoop/etc/
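With more than two nodes, the same copy has to be repeated for each worker. The sketch below only prints the scp commands so they can be reviewed before running; `plan_config_copy` is a hypothetical helper, and the remote user and paths are passed in rather than assumed.

```shell
#!/bin/sh
# Print (not run) one scp command per worker, excluding the local host.
# $1 = workers file, $2 = local host name,
# $3 = local config directory, $4 = remote user
plan_config_copy() {
    parent=$(dirname "$3")
    grep -v -e '^[[:space:]]*$' "$1" | grep -v -x -F "$2" | while read -r host; do
        echo "scp -r $3 $4@$host:$parent/"
    done
}

# Usage: review the plan, then pipe it to sh to execute it
#   plan_config_copy "$HADOOP_CONF_DIR/workers" node1 /home/user/hadoop/hadoop/etc/hadoop root
#   plan_config_copy "$HADOOP_CONF_DIR/workers" node1 /home/user/hadoop/hadoop/etc/hadoop root | sh
```

Printing first and executing second is a deliberate choice here: a typo in a path shows up in the plan instead of on a remote disk.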

Create the directories on all nodes:

mkdir -p /data/yarn/nm-local-dir /data02/yarn/nm-local-dir

chown -R user:user /data/yarn /data02/yarn

mkdir -p /opt/data/hdfs/namenode /opt/data02/hdfs/namenode /opt/data/hdfs/data /opt/data02/hdfs/data

chown -R user:user /opt/data /opt/data02

5. Format the NameNode

Run on the node that hosts the NameNode:

hdfs namenode -format

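If the output contains messages like the following, formatting succeeded (the paths in this sample output will differ on your cluster):

2022-08-12 17:43:11,039 INFO common.Storage: Storage directory /Users/lianggao/MyWorkSpace/002install/hadoop-3.3.1/hadoop_repo/dfs/name has been successfully formatted.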

2022-08-12 17:43:11,069 INFO namenode.FSImageFormatProtobuf: Saving image file /Users/lianggao/MyWorkSpace/002install/hadoop-3.3.1/hadoop_repo/dfs/name/current/fsimage.ckpt_0000000000000000000 using no compression

2022-08-12 17:43:11,200 INFO namenode.FSImageFormatProtobuf: Image file /Users/lianggao/MyWorkSpace/002install/hadoop-3.3.1/hadoop_repo/dfs/name/current/fsimage.ckpt_0000000000000000000 of size 403 bytes saved in 0 seconds .

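If formatting fails, delete the NameNode's storage directories first and then format again. Formatting is what creates the NameNode storage directory, as this log line shows: common.Storage: Storage directory /data/hadoopdata/name has been successfully formatted.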
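Before deleting anything, it is worth looking at what the configured name directories currently hold. The sketch below splits a comma-separated dfs.namenode.name.dir value and reports whether each directory already has a `current` subdirectory (the one visible in the log paths above). It deletes nothing; `split_dirs` and `show_name_dirs` are hypothetical helper names.

```shell
#!/bin/sh
# Split a comma-separated dfs.namenode.name.dir value into one path per line.
split_dirs() {
    echo "$1" | tr ',' '\n'
}

# Report the state of each name directory. Removal is intentionally left
# as a separate, manual step.
show_name_dirs() {
    split_dirs "$1" | while read -r dir; do
        if [ -d "$dir/current" ]; then
            echo "$dir: contains metadata from an earlier format"
        else
            echo "$dir: empty or not yet formatted"
        fi
    done
}

# Usage (needs the hdfs command on PATH):
#   show_name_dirs "$(hdfs getconf -confKey dfs.namenode.name.dir)"
```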

6. Managing the daemons

6.1. HDFS

Start

On node1:

hdfs --daemon start namenode

hdfs --daemon start datanode

On node2:

hdfs --daemon start secondarynamenode

hdfs --daemon start datanode

Stop

Run each command on the node where that daemon is running:

hdfs --daemon stop namenode

hdfs --daemon stop secondarynamenode

hdfs --daemon stop datanode

6.2. YARN

Start

On node1:

yarn --daemon start resourcemanager

yarn --daemon start nodemanager

On node2:

mapred --daemon start historyserver

yarn --daemon start nodemanager

Stop

Run each command on the node where that daemon is running:

yarn --daemon stop resourcemanager

yarn --daemon stop nodemanager

mapred --daemon stop historyserver

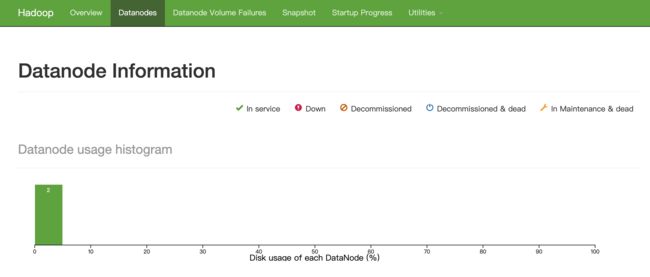

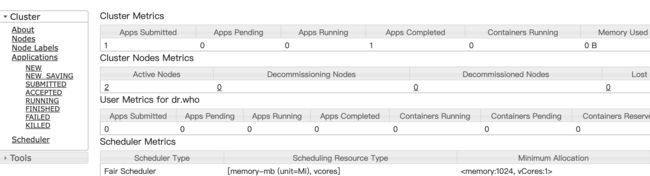
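To confirm that the daemons from the node plan are actually up, the JDK's `jps` tool lists running Java processes as `<pid> <MainClass>` pairs. The sketch below filters that output for the expected names; `missing_daemons` is a hypothetical helper, and the daemon names are the main-class names that jps normally reports for these processes.

```shell
#!/bin/sh
# Read jps output on stdin and print every expected daemon name
# (given as arguments) that is not running.
missing_daemons() {
    running=$(awk '{ print $2 }')
    for name in "$@"; do
        echo "$running" | grep -q -x -F "$name" || echo "$name"
    done
}

# Usage on node1 (needs jps on PATH):
#   jps | missing_daemons NameNode DataNode ResourceManager NodeManager
# Usage on node2:
#   jps | missing_daemons SecondaryNameNode DataNode NodeManager JobHistoryServer
```

Empty output means every expected daemon was found.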

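7. Web access

HDFS NameNode web UI: http://node1:9870/

YARN ResourceManager web UI (the address set in yarn.resourcemanager.webapp.address): http://node1:8888/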
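The web UIs can also be probed from the command line, which is handy on a server without a browser. This is a minimal sketch assuming `curl` is installed; `http_status` and `check_web_uis` are hypothetical helper names, and the URLs in the usage comment are the NameNode default port plus the addresses configured in yarn-site.xml and mapred-site.xml above.

```shell
#!/bin/sh
# Print the HTTP status code returned by a URL; curl reports "000"
# when no HTTP response was received (for example, connection refused).
http_status() {
    curl -s -o /dev/null -m 5 -w '%{http_code}' "$1"
}

# Print "<url> -> <status>" for every URL given as an argument.
check_web_uis() {
    for url in "$@"; do
        echo "$url -> $(http_status "$url")"
    done
}

# Usage:
#   check_web_uis http://node1:9870/ http://node1:8888/ http://node2:19888/
```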