注意力机制讲解与代码解析

一、SEBlock(通道注意力机制)

先在H*W维度进行压缩,全局平均池化将每个通道平均为一个值。

(B, C, H, W)---- (B, C, 1, 1)

利用各channel维度的相关性计算权重

(B, C, 1, 1) --- (B, C//K, 1, 1) --- (B, C, 1, 1) --- sigmoid

import torch

import torch.nn as nn

class SELayer(nn.Module):

def __init__(self, channel, reduction = 4):

super(SELayer, self).__init__()

self.avg_pool = nn.AdaptiveAvgPool2d(1) //自适应全局池化,只需要给出池化后特征图大小

self.fc1 = nn.Sequential(

nn.Conv2d(channel, channel//reduction, 1, bias = False),

nn.ReLu(implace = True),

nn.Conv2d(channel//reduction, channel, 1, bias = False),

nn.sigmoid()

)

def forward(self, x):

y = self.avg_pool(x)

y_out = self.fc1(y)

return x * y二、CBAM(通道注意力+空间注意力机制)

CBAM里面既有通道注意力机制,也有空间注意力机制。

通道注意力同SE的大致相同,但额外加入了全局最大池化与全局平均池化并行。

空间注意力机制:先在channel维度进行最大池化和均值池化,然后在channel维度合并,MLP进行特征交融。最终和原始特征相乘。

import torch

import torch.nn as nn

class ChannelAttention(nn.Module):

def __init__(self, channel, rate = 4):

super(ChannelAttention, self).__init__()

self.avg_pool = nn.AdaptiveAvgPool2d(1)

self.max_pool = nn.AdaptiveMaxPool2d(1)

self.fc1 = nn.Sequential(

nn.Conv2d(channel, channel//rate, 1, bias = False)

nn.ReLu(implace = True)

nn.Conv2d(channel//rate, channel, 1, bias = False)

)

self.sig = nn.sigmoid()

def forward(self, x):

avg = sefl.avg_pool(x)

avg_feature = self.fc1(avg)

max = self.max_pool(x)

max_feature = self.fc1(max)

out = max_feature + avg_feature

out = self.sig(out)

return x * out

import torch

import torch.nn as nn

class SpatialAttention(nn.Module):

def __init__(self):

super(SpatialAttention, self).__init__()

//(B,C,H,W)---(B,1,H,W)---(B,2,H,W)---(B,1,H,W)

self.conv1 = nn.Conv2d(2, 1, kernel_size = 3, padding = 1, bias = False)

self.sigmoid = nn.sigmoid()

def forward(self, x):

mean_f = torch.mean(x, dim = 1, keepdim = True)

max_f = torch.max(x, dim = 1, keepdim = True)

cat = torch.cat([mean_f, max_f], dim = 1)

out = self.conv1(cat)

return x*self.sigmod(out)三、transformer里的注意力机制

Scaled Dot-Product Attention

该注意力机制的输入是QKV。

1.先Q,K相乘。

2.scale

3.softmax

4.求output

import torch

import torch.nn as nn

class ScaledDotProductAttention(nn.Module):

def __init__(self, scale):

super(ScaledDotProductAttention, self)

self.scale = scale

self.softmax = nn.softmax(dim = 2)

def forward(self, q, k, v):

u = torch.bmm(q, k.transpose(1, 2))

u = u / scale

attn = self.softmax(u)

output = torch.bmm(attn, v)

return output

scale = np.power(d_k, 0.5) //缩放系数为K维度的根号。

//Q (B, n_q, d_q) , K (B, n_k, d_k) V (B, n_v, d_v),Q与K的特征维度一定要一样。KV的个数一定要一样。MultiHeadAttention

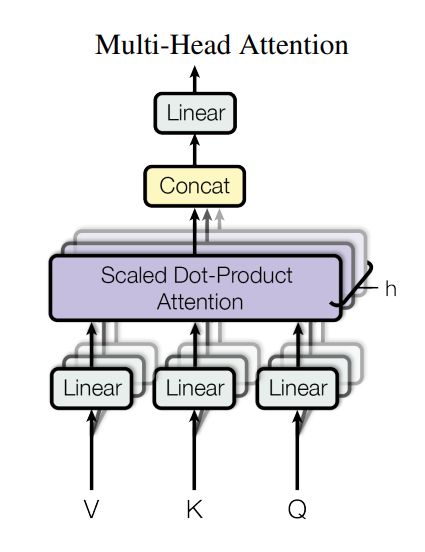

将QKVchannel维度转换为n*C的形式,相当于分成n份,分别做注意力机制。

1.QKV单头变多头 channel ----- n * new_channel通过linear变换,然后把head和batch先合并

2.求单头注意力机制输出

3.维度拆分 将最终的head和channel合并。

4.linear得到最终输出维度

import torch

import torch.nn as nn

class MultiHeadAttention(nn.Module):

def __init__(self, n_head, d_k, d_k_, d_v, d_v_, d_o):

super(MultiHeadAttention, self)

self.n_head = n_head

self.d_k = d_k

self.d_v = d_v

self.fc_k = nn.Linear(d_k_, n_head * d_k)

self.fc_v = nn.Linear(d_v_, n_head * d_v)

self.fc_q = nn.Linear(d_k_, n_head * d_k)

self.attention = ScaledDotProductAttention(scale=np.power(d_k, 0.5))

self.fc_o = nn.Linear(n_head * d_v, d_0)

def forward(self, q, k, v):

batch, n_q, d_q_ = q.size()

batch, n_k, d_k_ = k.size()

batch, n_v, d_v_ = v.size()

q = self.fc_q(q)

k = self.fc_k(k)

v = self.fc_v(v)

q = q.view(batch, n_q, n_head, d_q).permute(2, 0, 1, 3).contiguous().view(-1, n_q, d_q)

k = k.view(batch, n_k, n_head, d_k).permute(2, 0, 1, 3).contiguous().view(-1, n_k, d_k)

v = v.view(batch, n_v, n_head, d_v).permute(2, 0, 1, 3).contiguous().view(-1. n_v, d_v)

output = self.attention(q, k, v)

output = output.view(n_head, batch, n_q, d_v).permute(1, 2, 0, 3).contiguous().view(batch, n_q, -1)

output = self.fc_0(output)

return output