Recurrent Neural Networks, Part 2 [Deep Learning] [PyTorch] [d2l]

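Contents

- 6. Recurrent Neural Networks
  - 6.4. Recurrent Neural Networks (`RNN`)
    - 6.4.1. Theory
    - 6.4.2. Code implementation
  - 6.5. Long Short-Term Memory (`LSTM`)
    - 6.5.1. Theory
    - 6.5.2. Code implementation
  - 6.6. Gated Recurrent Units (`GRU`)
    - 6.6.1. Theory
    - 6.6.2. Code implementation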

6. Recurrent Neural Networks

6.4. Recurrent Neural Networks (RNN)

6.4.1. Theory

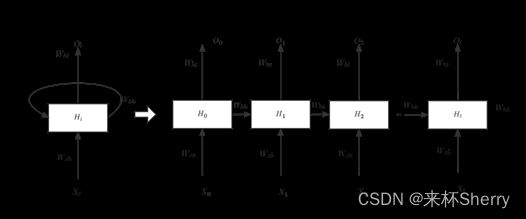

Schematic

Updating the hidden state

$$H_t = \Phi(W_{hh} H_{t-1} + W_{hx} X_t + b_h)$$

$H_t$: the current hidden state; $X_t$: the input

$W_{hh}$: the weight applied to the hidden state of the previous time step

$W_{hx}$: the weight applied to the input

$b_h$: the bias

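What does "recurrent" refer to?

The hidden state at the current time step is defined in exactly the same way as the hidden state at the previous time step, so the computation above is recurrent. A neural network whose hidden state is computed recurrently is therefore called a recurrent neural network, and the layer that performs this computation is called a recurrent layer.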
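
To make the recurrence concrete, here is a minimal sketch that unrolls the hidden-state update over a few time steps. The sizes, the random weights, and the choice of tanh as the activation are assumptions made for illustration. The code uses the row-vector convention (`X @ W`), which is the transpose of the notation in the formula above.

```python
import torch

torch.manual_seed(0)
batch_size, num_inputs, num_hiddens, num_steps = 2, 4, 3, 5

# Hypothetical parameters, named after the symbols in the formula.
W_hx = torch.randn(num_inputs, num_hiddens) * 0.1
W_hh = torch.randn(num_hiddens, num_hiddens) * 0.1
b_h = torch.zeros(num_hiddens)

def rnn_step(X_t, H_prev):
    """One hidden-state update: H_t = tanh(X_t W_hx + H_{t-1} W_hh + b_h)."""
    return torch.tanh(X_t @ W_hx + H_prev @ W_hh + b_h)

X = torch.randn(num_steps, batch_size, num_inputs)  # one slice per time step
H = torch.zeros(batch_size, num_hiddens)            # initial hidden state
for X_t in X:
    # The same function and the same weights are reused at every step.
    H = rnn_step(X_t, H)
```

After the loop, `H` summarizes the whole sequence in a tensor of shape `(batch_size, num_hiddens)`.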

Output

$$O_t = \Phi(W_{ho} H_t + b_o)$$

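Text prediction

Perplexity

The quality of a language model can be measured by the average cross-entropy over a sequence:

$$\Pi = \frac{1}{n}\sum_{t=1}^{n} -\log p(x_t \mid x_{t-1}, \ldots, x_1)$$

where $p$ is the predicted probability and $x_t$ is the true token.

For historical reasons, NLP reports the perplexity $\exp(\Pi)$ instead. It can be read as the average number of candidate tokens the model is choosing between at each step: 1 is perfect, and the worst case is $\infty$.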
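
The following small sketch computes perplexity from the probabilities a model assigned to the true tokens. The function name `perplexity` and the probability values are made up for illustration.

```python
import math

def perplexity(true_token_probs):
    """exp of the average negative log-probability of the true tokens."""
    n = len(true_token_probs)
    avg_cross_entropy = sum(-math.log(p) for p in true_token_probs) / n
    return math.exp(avg_cross_entropy)

# A model that always puts probability 1 on the true token is perfect.
perfect = perplexity([1.0, 1.0, 1.0])

# A model that spreads probability uniformly over 4 tokens has a
# perplexity of about 4: it is effectively choosing among 4 options.
uniform = perplexity([0.25, 0.25, 0.25, 0.25])
```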

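Gradient clipping

Gradient clipping is used to curb exploding gradients in RNNs. If the norm of the gradient $g$ exceeds $\Theta$, $g$ is rescaled so that its norm is pulled back to $\Theta$; otherwise $g$ is left unchanged.

$$g \leftarrow \min\left(1, \frac{\Theta}{\|g\|}\right) g$$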
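
As a rough illustration of the clipping rule, the sketch below applies it to two hand-picked gradient vectors. The helper `clip_gradient` is hypothetical and only mirrors the formula for a single tensor.

```python
import torch

def clip_gradient(g, theta):
    """Return min(1, theta / ||g||) * g."""
    norm = torch.norm(g).item()
    scale = min(1.0, theta / norm) if norm > 0 else 1.0
    return g * scale

g_large = torch.tensor([3.0, 4.0])  # norm 5, above the threshold
g_small = torch.tensor([0.3, 0.4])  # norm 0.5, below the threshold

clipped_large = clip_gradient(g_large, theta=1.0)  # rescaled to norm 1
clipped_small = clip_gradient(g_small, theta=1.0)  # left unchanged
```

The direction of the gradient is preserved; only its length changes. For a whole model, PyTorch provides `torch.nn.utils.clip_grad_norm_`, which clips the combined norm of all parameter gradients in place.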

6.4.2. Code implementation

    import torch
    from torch import nn
    from torch.nn import functional as F
    from d2l import torch as d2l

    batch_size, num_steps = 32, 35
    train_iter, vocab = d2l.load_data_time_machine(batch_size, num_steps)

Define the model

    num_hiddens = 256
    rnn_layer = nn.RNN(len(vocab), num_hiddens)

Hidden state shape: (number of hidden layers, batch size, number of hidden units)

    state = torch.zeros((1, batch_size, num_hiddens))
    state.shape

    torch.Size([1, 32, 256])
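
To see what the recurrent layer returns, the sketch below feeds it a random input. It is self-contained, so it hard-codes `vocab_size = 28` as an assumption in place of `len(vocab)`, and uses random data instead of the time machine dataset.

```python
import torch
from torch import nn

vocab_size = 28  # assumption: stands in for len(vocab)
batch_size, num_steps, num_hiddens = 32, 35, 256

rnn_layer = nn.RNN(vocab_size, num_hiddens)
state = torch.zeros((1, batch_size, num_hiddens))

# Input layout expected by nn.RNN by default: (time steps, batch, features).
X = torch.rand(size=(num_steps, batch_size, vocab_size))
Y, state_new = rnn_layer(X, state)

# Y holds the hidden state at every time step;
# state_new holds only the hidden state after the last step.
print(Y.shape, state_new.shape)
```

Note that `Y` is the sequence of hidden states, not the final prediction over the vocabulary; an output layer still has to be applied on top of it.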

    #@save
    class RNNModel(nn.Module):
        """Recurrent neural network model."""
        def __init__(self, rnn_layer, vocab_size, **kwargs):
            super(RNNModel, self).__init__(**kwargs)
            self.rnn = rnn_layer
            self.vocab_size = vocab_size
            self.num_hiddens = self.rnn.hidden_size
            # If the RNN is bidirectional (introduced later), num_directions
            # should be 2; otherwise it should be 1
            if not self.rnn.bidirectional:
                self.num_directions = 1
                self.linear = nn.Linear(self.num_hiddens, self.vocab_size)
            else:
                self.num_directions = 2
                self.linear = nn.Linear(self.num_hiddens * 2, self.vocab_size)

        def forward(self, inputs, state):
            # inputs: (batch size, time steps) -> one-hot X:
            # (time steps, batch size, vocabulary size)
            X = F.one_hot(inputs.T.long(), self.vocab_size)
            X = X.to(torch.float32)
            Y, state = self.rnn(X, state)
            # The fully connected layer first reshapes Y to
            # (time steps * batch size, number of hidden units).
            # Its output has shape (time steps * batch size, vocabulary size).
            output = self.linear(Y.reshape((-1, Y.shape[-1])))
            return output, state

        def begin_state(self, device, batch_size=1):
            if not isinstance(self.rnn, nn.LSTM):
                # nn.RNN and nn.GRU take a single tensor as the hidden state
                return torch.zeros((self.num_directions * self.rnn.num_layers,
                                    batch_size, self.num_hiddens),
                                   device=device)
            else:
                # nn.LSTM takes a tuple (H, C) as the hidden state
                return (torch.zeros((
                    self.num_directions * self.rnn.num_layers,
                    batch_size, self.num_hiddens), device=device),
                        torch.zeros((
                    self.num_directions * self.rnn.num_layers,
                    batch_size, self.num_hiddens), device=device))
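
The two branches of `begin_state` exist because the built-in layers expect different state types. The following sketch, with arbitrary assumed sizes and an all-zero input, shows the difference between `nn.GRU` and `nn.LSTM` directly.

```python
import torch
from torch import nn

# Assumed sizes, chosen only to keep the example small.
num_steps, batch_size, vocab_size, num_hiddens = 5, 2, 28, 16
X = torch.zeros(num_steps, batch_size, vocab_size)

gru = nn.GRU(vocab_size, num_hiddens)
lstm = nn.LSTM(vocab_size, num_hiddens)

# nn.GRU: the state is a single tensor.
gru_state = torch.zeros(1, batch_size, num_hiddens)
# nn.LSTM: the state is a tuple (hidden state H, memory cell C).
lstm_state = (torch.zeros(1, batch_size, num_hiddens),
              torch.zeros(1, batch_size, num_hiddens))

Y_gru, gru_state = gru(X, gru_state)
Y_lstm, lstm_state = lstm(X, lstm_state)
```

Both layers return outputs of the same shape, so the rest of `RNNModel` can stay unchanged when the recurrent layer is swapped.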

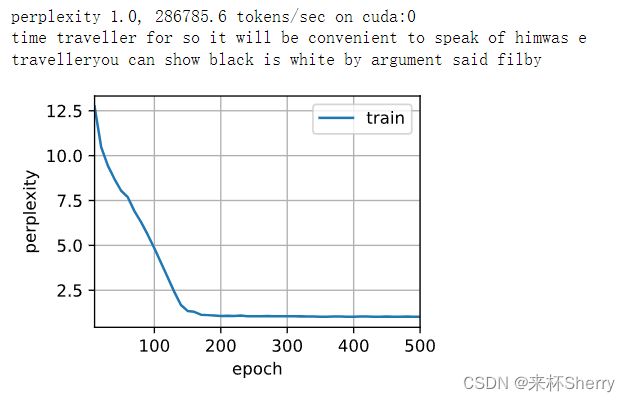

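Training and prediction

Prediction with randomly initialized weights (the result is poor):

    device = d2l.try_gpu()
    net = RNNModel(rnn_layer, vocab_size=len(vocab))
    net = net.to(device)
    d2l.predict_ch8('time traveller', 10, net, vocab, device)

    'time travellermkkkkkkkkk'

Training and prediction with the high-level API

    num_epochs, lr = 500, 1
    d2l.train_ch8(net, train_iter, vocab, lr, num_epochs, device)

This call raised an error during debugging; the issue is still unresolved.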

6.5、长短期记忆网络(LSTM)

6.5.1、理论部分

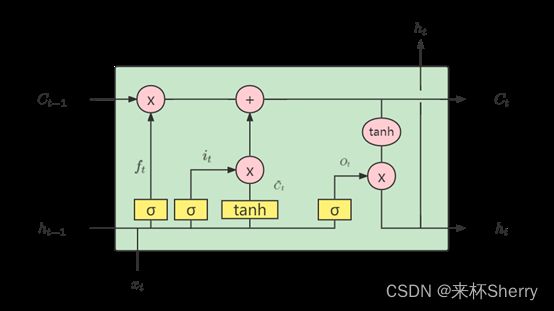

原理图

遗忘门

将值朝0减少。

F t = σ ( X t W x f + H t − 1 W h f + b f ) F_t = σ(X_tW_{xf} + H_{t-1}W_{hf}+b_f) Ft=σ(XtWxf+Ht−1Whf+bf)

输入门

决定是否忽略输入数据。

I t = σ ( X t W x i + H t − 1 W h i + b i ) I_t = σ(X_tW_{xi} + H_{t-1}W_{hi}+b_i) It=σ(XtWxi+Ht−1Whi+bi)

输出门

决定是否使用隐状态。

O t = σ ( X t W x o + H t − 1 W h o + b o ) O_t = σ(X_tW_{xo} + H_{t-1}W_{ho}+b_o) Ot=σ(XtWxo+Ht−1Who+bo)

候选记忆单元

C ~ = t a n h ( X t W x c + H t − 1 W h c + b c ) \tilde{C} = tanh(X_tW_{xc}+H_{t-1}W_{hc}+b_c) C~=tanh(XtWxc+Ht−1Whc+bc)

记忆单元

C t = F t ⊚ C t − 1 + I t ⊚ C ~ t C_t = F_t ⊚ C_{t-1}+I_t ⊚ \tilde{C}_t Ct=Ft⊚Ct−1+It⊚C~t

隐状态

H t = O t ⊚ t a n h ( C ~ ) H_t = O_t ⊚ tanh(\tilde{C}) Ht=Ot⊚tanh(C~)
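
As a sanity check on the gate equations, the sketch below computes one LSTM step by hand and compares it with PyTorch's `nn.LSTMCell`. The sizes and random inputs are arbitrary assumptions. `nn.LSTMCell` stores its weights transposed, stacked in the order input gate, forget gate, candidate cell, output gate, and keeps two bias vectors, so the code slices and sums them to recover per-gate parameters. The hidden state is computed from the updated memory cell.

```python
import torch
from torch import nn

torch.manual_seed(0)
batch_size, num_inputs, num_hiddens = 2, 4, 3
cell = nn.LSTMCell(num_inputs, num_hiddens)

# Split the stacked parameters into the four blocks (order: i, f, c, o).
W_xi, W_xf, W_xc, W_xo = cell.weight_ih.chunk(4, dim=0)
W_hi, W_hf, W_hc, W_ho = cell.weight_hh.chunk(4, dim=0)
b_i, b_f, b_c, b_o = (cell.bias_ih + cell.bias_hh).chunk(4, dim=0)

X = torch.randn(batch_size, num_inputs)
H = torch.randn(batch_size, num_hiddens)
C = torch.randn(batch_size, num_hiddens)

with torch.no_grad():
    I_t = torch.sigmoid(X @ W_xi.T + H @ W_hi.T + b_i)      # input gate
    F_t = torch.sigmoid(X @ W_xf.T + H @ W_hf.T + b_f)      # forget gate
    O_t = torch.sigmoid(X @ W_xo.T + H @ W_ho.T + b_o)      # output gate
    C_tilde = torch.tanh(X @ W_xc.T + H @ W_hc.T + b_c)     # candidate cell
    C_new = F_t * C + I_t * C_tilde                         # memory cell
    H_new = O_t * torch.tanh(C_new)                         # hidden state
    H_ref, C_ref = cell(X, (H, C))                          # reference result

print(torch.allclose(H_new, H_ref, atol=1e-5),
      torch.allclose(C_new, C_ref, atol=1e-5))
```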

6.5.2. Code implementation

1) Implementation from scratch

    import torch
    from torch import nn
    from d2l import torch as d2l

    batch_size, num_steps = 32, 35
    train_iter, vocab = d2l.load_data_time_machine(batch_size, num_steps)

Initialize the model parameters

    def get_lstm_params(vocab_size, num_hiddens, device):
        num_inputs = num_outputs = vocab_size

        def normal(shape):
            return torch.randn(size=shape, device=device)*0.01

        def three():
            return (normal((num_inputs, num_hiddens)),
                    normal((num_hiddens, num_hiddens)),
                    torch.zeros(num_hiddens, device=device))

        W_xi, W_hi, b_i = three()  # Input gate parameters
        W_xf, W_hf, b_f = three()  # Forget gate parameters
        W_xo, W_ho, b_o = three()  # Output gate parameters
        W_xc, W_hc, b_c = three()  # Candidate memory cell parameters
        # Output layer parameters
        W_hq = normal((num_hiddens, num_outputs))
        b_q = torch.zeros(num_outputs, device=device)
        # Attach gradients
        params = [W_xi, W_hi, b_i, W_xf, W_hf, b_f, W_xo, W_ho, b_o, W_xc, W_hc,
                  b_c, W_hq, b_q]
        for param in params:
            param.requires_grad_(True)
        return params

Define the model

    def init_lstm_state(batch_size, num_hiddens, device):
        # Initial state: (hidden state H, memory cell C), both zeros
        return (torch.zeros((batch_size, num_hiddens), device=device),
                torch.zeros((batch_size, num_hiddens), device=device))

    def lstm(inputs, state, params):
        [W_xi, W_hi, b_i, W_xf, W_hf, b_f, W_xo, W_ho, b_o, W_xc, W_hc, b_c,
         W_hq, b_q] = params
        (H, C) = state
        outputs = []
        # inputs has shape (time steps, batch size, vocabulary size)
        for X in inputs:
            I = torch.sigmoid((X @ W_xi) + (H @ W_hi) + b_i)
            F = torch.sigmoid((X @ W_xf) + (H @ W_hf) + b_f)
            O = torch.sigmoid((X @ W_xo) + (H @ W_ho) + b_o)
            C_tilda = torch.tanh((X @ W_xc) + (H @ W_hc) + b_c)
            C = F * C + I * C_tilda
            H = O * torch.tanh(C)
            Y = (H @ W_hq) + b_q
            outputs.append(Y)
        return torch.cat(outputs, dim=0), (H, C)

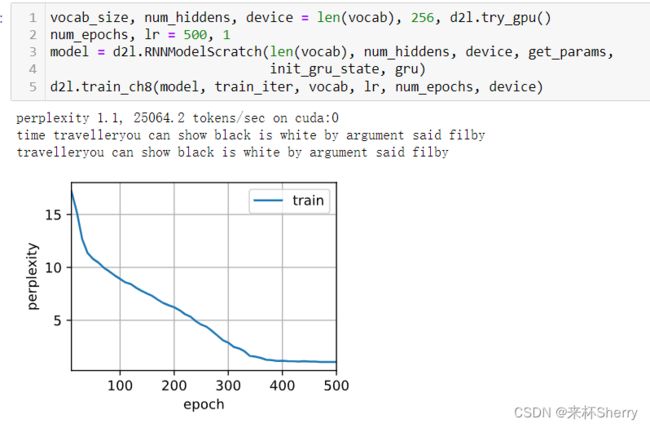

Training and prediction

    vocab_size, num_hiddens, device = len(vocab), 256, d2l.try_gpu()
    num_epochs, lr = 500, 1
    model = d2l.RNNModelScratch(len(vocab), num_hiddens, device, get_lstm_params,
                                init_lstm_state, lstm)
    d2l.train_ch8(model, train_iter, vocab, lr, num_epochs, device)

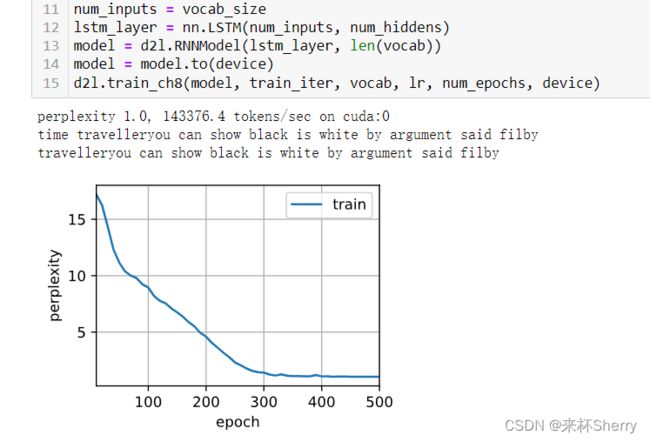

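2) Concise implementation

    num_inputs = vocab_size
    lstm_layer = nn.LSTM(num_inputs, num_hiddens)
    model = d2l.RNNModel(lstm_layer, len(vocab))
    model = model.to(device)
    d2l.train_ch8(model, train_iter, vocab, lr, num_epochs, device)

Why is this faster in testing than the from-scratch implementation, and even slightly more accurate?

The high-level API of the deep learning framework is more heavily optimized: the recurrence runs in compiled library code rather than as a sequence of separate Python-level operations per time step. As a result, the model reaches a low perplexity in less time.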

6.6. Gated Recurrent Units (GRU)

6.6.1. Theory

Intuition: not every observation is equally important. The update gate provides a mechanism for paying attention, and the reset gate provides a mechanism for forgetting.

Schematic

Reset gate

$$R_t = \sigma(X_t W_{xr} + H_{t-1} W_{hr} + b_r)$$

Update gate

$$Z_t = \sigma(X_t W_{xz} + H_{t-1} W_{hz} + b_z)$$

Candidate hidden state

$$\tilde{H}_t = \tanh(X_t W_{xh} + (R_t \odot H_{t-1}) W_{hh} + b_h)$$

Hidden state

$$H_t = Z_t \odot H_{t-1} + (1 - Z_t) \odot \tilde{H}_t$$
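
The role of the update gate is easiest to see at its extremes. The sketch below plugs hand-picked, hypothetical values into the hidden-state formula; the helper name `update` is only for illustration.

```python
import torch

H_prev = torch.tensor([[0.5, -0.2, 0.1]])   # previous hidden state
H_tilde = torch.tensor([[-0.9, 0.7, 0.3]])  # candidate hidden state

def update(Z):
    """H_t = Z * H_{t-1} + (1 - Z) * H_tilde, elementwise."""
    return Z * H_prev + (1 - Z) * H_tilde

keep = update(torch.ones_like(H_prev))        # Z = 1: old state is kept
replace = update(torch.zeros_like(H_prev))    # Z = 0: candidate takes over
blend = update(torch.full_like(H_prev, 0.5))  # Z = 0.5: average of the two
```

With `Z` close to 1 the current input is effectively skipped, which is how a GRU can carry information across many time steps.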

6.6.2. Code implementation

1) Implementation from scratch

    import torch
    from torch import nn
    from d2l import torch as d2l

    batch_size, num_steps = 32, 35
    train_iter, vocab = d2l.load_data_time_machine(batch_size, num_steps)

Initialize the model parameters

    def get_params(vocab_size, num_hiddens, device):
        num_inputs = num_outputs = vocab_size

        def normal(shape):
            return torch.randn(size=shape, device=device)*0.01

        def three():
            return (normal((num_inputs, num_hiddens)),
                    normal((num_hiddens, num_hiddens)),
                    torch.zeros(num_hiddens, device=device))

        W_xz, W_hz, b_z = three()  # Update gate parameters
        W_xr, W_hr, b_r = three()  # Reset gate parameters
        W_xh, W_hh, b_h = three()  # Candidate hidden state parameters
        # Output layer parameters
        W_hq = normal((num_hiddens, num_outputs))
        b_q = torch.zeros(num_outputs, device=device)
        # Attach gradients
        params = [W_xz, W_hz, b_z, W_xr, W_hr, b_r, W_xh, W_hh, b_h, W_hq, b_q]
        for param in params:
            param.requires_grad_(True)
        return params

Define the model

    def init_gru_state(batch_size, num_hiddens, device):
        # The state is a one-element tuple holding the hidden state H
        return (torch.zeros((batch_size, num_hiddens), device=device), )

    def gru(inputs, state, params):
        W_xz, W_hz, b_z, W_xr, W_hr, b_r, W_xh, W_hh, b_h, W_hq, b_q = params
        H, = state
        outputs = []
        # inputs has shape (time steps, batch size, vocabulary size)
        for X in inputs:
            Z = torch.sigmoid((X @ W_xz) + (H @ W_hz) + b_z)
            R = torch.sigmoid((X @ W_xr) + (H @ W_hr) + b_r)
            H_tilda = torch.tanh((X @ W_xh) + ((R * H) @ W_hh) + b_h)
            H = Z * H + (1 - Z) * H_tilda
            Y = H @ W_hq + b_q
            outputs.append(Y)
        return torch.cat(outputs, dim=0), (H,)

Training and prediction

    vocab_size, num_hiddens, device = len(vocab), 256, d2l.try_gpu()
    num_epochs, lr = 500, 1
    model = d2l.RNNModelScratch(len(vocab), num_hiddens, device, get_params,
                                init_gru_state, gru)
    d2l.train_ch8(model, train_iter, vocab, lr, num_epochs, device)

2) Concise implementation

    num_inputs = vocab_size
    gru_layer = nn.GRU(num_inputs, num_hiddens)
    model = d2l.RNNModel(gru_layer, len(vocab))
    model = model.to(device)
    d2l.train_ch8(model, train_iter, vocab, lr, num_epochs, device)