12. How kube-proxy Is Implemented in Kubernetes: iptables and ipvs

Contents

1. Overview

2. iptables proxy mode

3. iptables case study

4. ipvs case study

5. iptables vs ipvs

1. Overview

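Both iptables and ipvs rely on the same Linux kernel framework: Netfilter. Netfilter is a subsystem introduced in Linux 2.4.x. As a generic, abstract framework, it provides a complete mechanism for managing hook functions, which makes packet filtering, network address translation (NAT), and protocol-aware connection tracking possible.

Netfilter places checkpoints (hooks) at several points along the packet-processing path, and processing functions are registered at each hook.

After a packet enters the Linux host through a network interface, it may pass through up to five hook points: PREROUTING, INPUT, FORWARD, OUTPUT, and POSTROUTING. You can write your own hook function and register it at any of these points, and this is exactly the foundation on which both iptables and ipvs are built.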
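To make the hook order concrete, here is a small Python model (a simplified sketch, not kernel code) of which hooks a packet visits, depending on whether it was generated on this host and whether routing delivers it locally. Because DNAT in PREROUTING happens before the routing decision, a packet addressed to a ClusterIP can be rewritten to a pod IP and then forwarded to that pod. The sketch also checks an observation used in section 3: kube-proxy hooks its KUBE-SERVICES chain into PREROUTING and OUTPUT, so every path passes through at least one of them. All function and constant names are made up for illustration.

```python
from enum import Enum
from typing import List


class Hook(Enum):
    """The five Netfilter hook points used by iptables."""
    PREROUTING = "PREROUTING"
    INPUT = "INPUT"
    FORWARD = "FORWARD"
    OUTPUT = "OUTPUT"
    POSTROUTING = "POSTROUTING"


def hooks_traversed(locally_generated: bool, destined_for_this_host: bool) -> List[Hook]:
    """Return the hooks a packet passes through, in order (simplified model).

    Simplification: a locally generated packet is only followed on its way
    out (OUTPUT -> POSTROUTING); re-entry via loopback is ignored.
    """
    if locally_generated:
        # e.g. a process on the node connecting to a ClusterIP
        return [Hook.OUTPUT, Hook.POSTROUTING]
    if destined_for_this_host:
        # the routing decision after PREROUTING says "deliver locally"
        return [Hook.PREROUTING, Hook.INPUT]
    # the routing decision says "forward", e.g. a pod's packet routed via the node
    return [Hook.PREROUTING, Hook.FORWARD, Hook.POSTROUTING]


# kube-proxy jumps to KUBE-SERVICES from these two hooks
# (the "kubernetes service portals" rules in the nat table).
KUBE_SERVICES_HOOKS = {Hook.PREROUTING, Hook.OUTPUT}


def reaches_kube_services(locally_generated: bool, destined_for_this_host: bool) -> bool:
    path = hooks_traversed(locally_generated, destined_for_this_host)
    return any(hook in KUBE_SERVICES_HOOKS for hook in path)


if __name__ == "__main__":
    for local in (False, True):
        for to_host in (False, True):
            path = [h.value for h in hooks_traversed(local, to_host)]
            print(local, to_host, path, reaches_kube_services(local, to_host))
```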

2. iptables proxy mode

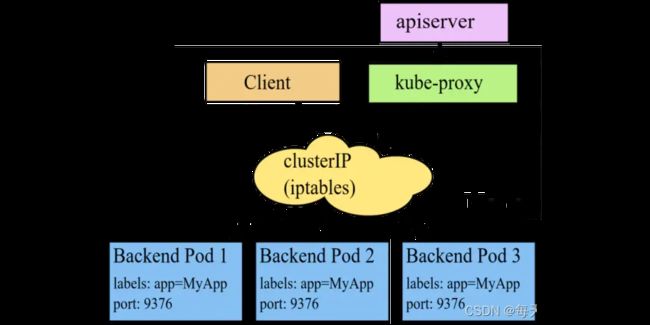

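In iptables mode, kube-proxy creates iptables rules for every Service and each of its backend pods (endpoints), and requests sent to a ClusterIP are forwarded by the kernel directly to a backend pod.

In this mode kube-proxy does not act as the load balancer itself; it only creates the corresponding forwarding rules. The advantage of this mode is high efficiency, but it cannot offer flexible load-balancing policies, and if the selected backend pod is unavailable, the request cannot be retried on another pod.

iptables attaches specific rules, organized as "chains", to each hook point. These chains are fixed and fairly general-purpose, and their rules can be added and removed dynamically from user space with the iptables command. The rules in a chain are evaluated sequentially: if a rule does not match, evaluation continues with the next rule; if it matches, then depending on its target, evaluation may continue downward, jump to another chain, or skip all remaining rules.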
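The sequential matching described above can be sketched in Python. This is a simplified model that assumes only a few kinds of targets: a jump to a user-defined chain, RETURN, the non-terminating MARK, and terminating targets (ACCEPT, DROP, DNAT). A user chain that runs out of rules returns to its caller, and a built-in chain that ends without a verdict falls back to its policy. The chain names KUBE-SVC-NGINX and KUBE-SVC-DNS and all helper names are hypothetical, loosely modeled on the nat table in section 3.

```python
import ipaddress
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple

POD_CIDR = ipaddress.ip_network("192.168.0.0/16")  # pod range of the example cluster


@dataclass
class Rule:
    match: Callable[[dict], bool]  # predicate over a packet dict
    target: str                    # a user-defined chain name or a built-in target


def traverse(chains: Dict[str, List[Rule]], chain: str, pkt: dict, trace: List[str]) -> Optional[str]:
    """Evaluate `chain` rule by rule.

    Returns a terminating verdict (ACCEPT, DROP or "DNAT ..."), or None when the
    chain hits RETURN or runs out of rules, so the caller resumes after its jump.
    """
    for rule in chains[chain]:
        if not rule.match(pkt):
            continue  # no match: fall through to the next rule
        trace.append(f"{chain} -> {rule.target}")
        if rule.target == "RETURN":
            return None
        if rule.target in ("ACCEPT", "DROP") or rule.target.startswith("DNAT"):
            return rule.target  # terminating target: stop evaluating
        if rule.target == "MARK":
            pkt["masq"] = True  # non-terminating: keep going in this chain
            continue
        verdict = traverse(chains, rule.target, pkt, trace)  # jump into a user chain
        if verdict is not None:
            return verdict
        # the sub-chain fell off its end: continue with our next rule
    return None


def run(chains: Dict[str, List[Rule]], builtin: str, pkt: dict, policy: str = "ACCEPT") -> Tuple[str, List[str]]:
    trace: List[str] = []
    verdict = traverse(chains, builtin, pkt, trace)
    # a built-in chain that ends without a verdict applies its policy
    return (verdict if verdict is not None else policy), trace


# Hypothetical, heavily trimmed rules (single nginx endpoint, no random choice).
CHAINS: Dict[str, List[Rule]] = {
    "PREROUTING": [Rule(lambda p: True, "KUBE-SERVICES")],
    "KUBE-SERVICES": [
        Rule(lambda p: p["dst"] == "10.96.0.10" and p["dport"] == 53, "KUBE-SVC-DNS"),
        Rule(lambda p: p["dst"] == "10.105.250.129" and p["dport"] == 80, "KUBE-SVC-NGINX"),
    ],
    "KUBE-SVC-NGINX": [
        # like `! -s 192.168.0.0/16 ... -j KUBE-MARK-MASQ`
        Rule(lambda p: ipaddress.ip_address(p["src"]) not in POD_CIDR, "KUBE-MARK-MASQ"),
        Rule(lambda p: True, "DNAT 192.168.1.4:80"),
    ],
    "KUBE-SVC-DNS": [Rule(lambda p: True, "DNAT 192.168.0.6:53")],
    "KUBE-MARK-MASQ": [Rule(lambda p: True, "MARK")],
}
```

For example, `run(CHAINS, "PREROUTING", {"src": "172.30.1.2", "dst": "10.105.250.129", "dport": 80})` returns the verdict `"DNAT 192.168.1.4:80"`, and the trace shows the detour through KUBE-MARK-MASQ: MARK does not stop evaluation, so the packet falls back into KUBE-SVC-NGINX and reaches the DNAT rule.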

3. iptables case study

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
spec:
  replicas: 3
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: nginx
---
apiVersion: v1
kind: Service
metadata:
  name: nginx-basic
spec:
  type: ClusterIP
  ports:
  - port: 80
    protocol: TCP
    name: http
  selector:
    app: nginx
```

This creates an nginx Deployment with 3 replicas and a matching Service of type ClusterIP that exposes it inside the cluster.

After creation, the resources look like this:

```shell
$ kubectl get pod -o wide
NAME                                READY   STATUS    RESTARTS   AGE   IP            NODE           NOMINATED NODE   READINESS GATES
nginx-deployment-748c667d99-dtr82   1/1     Running   0          38m   192.168.1.3   node01
nginx-deployment-748c667d99-jzpqf   1/1     Running   0          38m   192.168.0.7   controlplane
nginx-deployment-748c667d99-whgr5   1/1     Running   0          38m   192.168.1.4   node01

$ kubectl get svc nginx-basic -o wide
NAME          TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)   AGE   SELECTOR
nginx-basic   ClusterIP   10.105.250.129                 80/TCP    39m   app=nginx
```

The three pods have the IPs 192.168.1.3, 192.168.0.7, and 192.168.1.4, and the Service's ClusterIP is 10.105.250.129.

Next, we print the nat table with `iptables-save -t nat` to see how iptables implements the forwarding.

```shell
$ iptables-save -t nat
# Generated by iptables-save v1.8.4 on Mon Feb 20 01:52:41 2023
*nat
:PREROUTING ACCEPT [11:660]
:INPUT ACCEPT [11:660]
:OUTPUT ACCEPT [4706:282406]
:POSTROUTING ACCEPT [4706:282406]
:DOCKER - [0:0]
:KUBE-KUBELET-CANARY - [0:0]
:KUBE-MARK-DROP - [0:0]
:KUBE-MARK-MASQ - [0:0]
:KUBE-NODEPORTS - [0:0]
:KUBE-POSTROUTING - [0:0]
:KUBE-PROXY-CANARY - [0:0]
:KUBE-SEP-ABG426TTTCGMOND7 - [0:0]
:KUBE-SEP-BEMSLTMJA26ZCLYL - [0:0]
:KUBE-SEP-D23ODROEH5R4FSGE - [0:0]
:KUBE-SEP-G44RJ25JKAEOKO42 - [0:0]
:KUBE-SEP-GHNVWKROTCCBFQKZ - [0:0]
:KUBE-SEP-NGZXZA7GIJDM26Z3 - [0:0]
:KUBE-SEP-NH2YM7NDCNPLOCTP - [0:0]
:KUBE-SEP-UF65ZV4QBOPNRP6O - [0:0]
:KUBE-SEP-UG4OSZR4VSWZ27C5 - [0:0]
:KUBE-SEP-WQR67ZLSTPDXXME4 - [0:0]
:KUBE-SERVICES - [0:0]
:KUBE-SVC-ERIFXISQEP7F7OF4 - [0:0]
:KUBE-SVC-JD5MR3NA4I4DYORP - [0:0]
:KUBE-SVC-NPX46M4PTMTKRN6Y - [0:0]
:KUBE-SVC-TCOU7JCQXEZGVUNU - [0:0]
:KUBE-SVC-WWRFY3PZ7W3FGMQW - [0:0]
:cali-OUTPUT - [0:0]
:cali-POSTROUTING - [0:0]
:cali-PREROUTING - [0:0]
:cali-fip-dnat - [0:0]
:cali-fip-snat - [0:0]
:cali-nat-outgoing - [0:0]
-A PREROUTING -m comment --comment "cali:6gwbT8clXdHdC1b1" -j cali-PREROUTING
-A PREROUTING -m comment --comment "kubernetes service portals" -j KUBE-SERVICES
-A PREROUTING -m addrtype --dst-type LOCAL -j DOCKER
-A OUTPUT -m comment --comment "cali:tVnHkvAo15HuiPy0" -j cali-OUTPUT
-A OUTPUT -m comment --comment "kubernetes service portals" -j KUBE-SERVICES
-A OUTPUT ! -d 127.0.0.0/8 -m addrtype --dst-type LOCAL -j DOCKER
-A POSTROUTING -m comment --comment "cali:O3lYWMrLQYEMJtB5" -j cali-POSTROUTING
-A POSTROUTING -m comment --comment "kubernetes postrouting rules" -j KUBE-POSTROUTING
-A POSTROUTING -s 172.17.0.0/16 ! -o docker0 -j MASQUERADE
-A POSTROUTING -s 192.168.0.0/16 -d 192.168.0.0/16 -j RETURN
-A POSTROUTING -s 192.168.0.0/16 ! -d 224.0.0.0/4 -j MASQUERADE --random-fully
-A POSTROUTING ! -s 192.168.0.0/16 -d 192.168.0.0/24 -j RETURN
-A POSTROUTING ! -s 192.168.0.0/16 -d 192.168.0.0/16 -j MASQUERADE --random-fully
-A DOCKER -i docker0 -j RETURN
-A KUBE-MARK-DROP -j MARK --set-xmark 0x8000/0x8000
-A KUBE-MARK-MASQ -j MARK --set-xmark 0x4000/0x4000
-A KUBE-POSTROUTING -m mark ! --mark 0x4000/0x4000 -j RETURN
-A KUBE-POSTROUTING -j MARK --set-xmark 0x4000/0x0
-A KUBE-POSTROUTING -m comment --comment "kubernetes service traffic requiring SNAT" -j MASQUERADE --random-fully
-A KUBE-SEP-ABG426TTTCGMOND7 -s 192.168.1.2/32 -m comment --comment "kube-system/kube-dns:dns-tcp" -j KUBE-MARK-MASQ
-A KUBE-SEP-ABG426TTTCGMOND7 -p tcp -m comment --comment "kube-system/kube-dns:dns-tcp" -m tcp -j DNAT --to-destination 192.168.1.2:53
-A KUBE-SEP-BEMSLTMJA26ZCLYL -s 192.168.0.7/32 -m comment --comment "default/nginx-basic:http" -j KUBE-MARK-MASQ
-A KUBE-SEP-BEMSLTMJA26ZCLYL -p tcp -m comment --comment "default/nginx-basic:http" -m tcp -j DNAT --to-destination 192.168.0.7:80
-A KUBE-SEP-D23ODROEH5R4FSGE -s 172.30.1.2/32 -m comment --comment "default/kubernetes:https" -j KUBE-MARK-MASQ
-A KUBE-SEP-D23ODROEH5R4FSGE -p tcp -m comment --comment "default/kubernetes:https" -m tcp -j DNAT --to-destination 172.30.1.2:6443
-A KUBE-SEP-G44RJ25JKAEOKO42 -s 192.168.0.6/32 -m comment --comment "kube-system/kube-dns:dns-tcp" -j KUBE-MARK-MASQ
-A KUBE-SEP-G44RJ25JKAEOKO42 -p tcp -m comment --comment "kube-system/kube-dns:dns-tcp" -m tcp -j DNAT --to-destination 192.168.0.6:53
-A KUBE-SEP-GHNVWKROTCCBFQKZ -s 192.168.1.2/32 -m comment --comment "kube-system/kube-dns:dns" -j KUBE-MARK-MASQ
-A KUBE-SEP-GHNVWKROTCCBFQKZ -p udp -m comment --comment "kube-system/kube-dns:dns" -m udp -j DNAT --to-destination 192.168.1.2:53
-A KUBE-SEP-NGZXZA7GIJDM26Z3 -s 192.168.0.6/32 -m comment --comment "kube-system/kube-dns:dns" -j KUBE-MARK-MASQ
-A KUBE-SEP-NGZXZA7GIJDM26Z3 -p udp -m comment --comment "kube-system/kube-dns:dns" -m udp -j DNAT --to-destination 192.168.0.6:53
-A KUBE-SEP-NH2YM7NDCNPLOCTP -s 192.168.0.6/32 -m comment --comment "kube-system/kube-dns:metrics" -j KUBE-MARK-MASQ
-A KUBE-SEP-NH2YM7NDCNPLOCTP -p tcp -m comment --comment "kube-system/kube-dns:metrics" -m tcp -j DNAT --to-destination 192.168.0.6:9153
-A KUBE-SEP-UF65ZV4QBOPNRP6O -s 192.168.1.3/32 -m comment --comment "default/nginx-basic:http" -j KUBE-MARK-MASQ
-A KUBE-SEP-UF65ZV4QBOPNRP6O -p tcp -m comment --comment "default/nginx-basic:http" -m tcp -j DNAT --to-destination 192.168.1.3:80
-A KUBE-SEP-UG4OSZR4VSWZ27C5 -s 192.168.1.2/32 -m comment --comment "kube-system/kube-dns:metrics" -j KUBE-MARK-MASQ
-A KUBE-SEP-UG4OSZR4VSWZ27C5 -p tcp -m comment --comment "kube-system/kube-dns:metrics" -m tcp -j DNAT --to-destination 192.168.1.2:9153
-A KUBE-SEP-WQR67ZLSTPDXXME4 -s 192.168.1.4/32 -m comment --comment "default/nginx-basic:http" -j KUBE-MARK-MASQ
-A KUBE-SEP-WQR67ZLSTPDXXME4 -p tcp -m comment --comment "default/nginx-basic:http" -m tcp -j DNAT --to-destination 192.168.1.4:80
-A KUBE-SERVICES -d 10.96.0.10/32 -p tcp -m comment --comment "kube-system/kube-dns:dns-tcp cluster IP" -m tcp --dport 53 -j KUBE-SVC-ERIFXISQEP7F7OF4
-A KUBE-SERVICES -d 10.96.0.10/32 -p tcp -m comment --comment "kube-system/kube-dns:metrics cluster IP" -m tcp --dport 9153 -j KUBE-SVC-JD5MR3NA4I4DYORP
-A KUBE-SERVICES -d 10.96.0.10/32 -p udp -m comment --comment "kube-system/kube-dns:dns cluster IP" -m udp --dport 53 -j KUBE-SVC-TCOU7JCQXEZGVUNU
-A KUBE-SERVICES -d 10.105.250.129/32 -p tcp -m comment --comment "default/nginx-basic:http cluster IP" -m tcp --dport 80 -j KUBE-SVC-WWRFY3PZ7W3FGMQW
-A KUBE-SERVICES -d 10.96.0.1/32 -p tcp -m comment --comment "default/kubernetes:https cluster IP" -m tcp --dport 443 -j KUBE-SVC-NPX46M4PTMTKRN6Y
-A KUBE-SERVICES -m comment --comment "kubernetes service nodeports; NOTE: this must be the last rule in this chain" -m addrtype --dst-type LOCAL -j KUBE-NODEPORTS
-A KUBE-SVC-ERIFXISQEP7F7OF4 ! -s 192.168.0.0/16 -d 10.96.0.10/32 -p tcp -m comment --comment "kube-system/kube-dns:dns-tcp cluster IP" -m tcp --dport 53 -j KUBE-MARK-MASQ
-A KUBE-SVC-ERIFXISQEP7F7OF4 -m comment --comment "kube-system/kube-dns:dns-tcp -> 192.168.0.6:53" -m statistic --mode random --probability 0.50000000000 -j KUBE-SEP-G44RJ25JKAEOKO42
-A KUBE-SVC-ERIFXISQEP7F7OF4 -m comment --comment "kube-system/kube-dns:dns-tcp -> 192.168.1.2:53" -j KUBE-SEP-ABG426TTTCGMOND7
-A KUBE-SVC-JD5MR3NA4I4DYORP ! -s 192.168.0.0/16 -d 10.96.0.10/32 -p tcp -m comment --comment "kube-system/kube-dns:metrics cluster IP" -m tcp --dport 9153 -j KUBE-MARK-MASQ
-A KUBE-SVC-JD5MR3NA4I4DYORP -m comment --comment "kube-system/kube-dns:metrics -> 192.168.0.6:9153" -m statistic --mode random --probability 0.50000000000 -j KUBE-SEP-NH2YM7NDCNPLOCTP
-A KUBE-SVC-JD5MR3NA4I4DYORP -m comment --comment "kube-system/kube-dns:metrics -> 192.168.1.2:9153" -j KUBE-SEP-UG4OSZR4VSWZ27C5
-A KUBE-SVC-NPX46M4PTMTKRN6Y ! -s 192.168.0.0/16 -d 10.96.0.1/32 -p tcp -m comment --comment "default/kubernetes:https cluster IP" -m tcp --dport 443 -j KUBE-MARK-MASQ
-A KUBE-SVC-NPX46M4PTMTKRN6Y -m comment --comment "default/kubernetes:https -> 172.30.1.2:6443" -j KUBE-SEP-D23ODROEH5R4FSGE
-A KUBE-SVC-TCOU7JCQXEZGVUNU ! -s 192.168.0.0/16 -d 10.96.0.10/32 -p udp -m comment --comment "kube-system/kube-dns:dns cluster IP" -m udp --dport 53 -j KUBE-MARK-MASQ
-A KUBE-SVC-TCOU7JCQXEZGVUNU -m comment --comment "kube-system/kube-dns:dns -> 192.168.0.6:53" -m statistic --mode random --probability 0.50000000000 -j KUBE-SEP-NGZXZA7GIJDM26Z3
-A KUBE-SVC-TCOU7JCQXEZGVUNU -m comment --comment "kube-system/kube-dns:dns -> 192.168.1.2:53" -j KUBE-SEP-GHNVWKROTCCBFQKZ
-A KUBE-SVC-WWRFY3PZ7W3FGMQW ! -s 192.168.0.0/16 -d 10.105.250.129/32 -p tcp -m comment --comment "default/nginx-basic:http cluster IP" -m tcp --dport 80 -j KUBE-MARK-MASQ
-A KUBE-SVC-WWRFY3PZ7W3FGMQW -m comment --comment "default/nginx-basic:http -> 192.168.0.7:80" -m statistic --mode random --probability 0.33333333349 -j KUBE-SEP-BEMSLTMJA26ZCLYL
-A KUBE-SVC-WWRFY3PZ7W3FGMQW -m comment --comment "default/nginx-basic:http -> 192.168.1.3:80" -m statistic --mode random --probability 0.50000000000 -j KUBE-SEP-UF65ZV4QBOPNRP6O
-A KUBE-SVC-WWRFY3PZ7W3FGMQW -m comment --comment "default/nginx-basic:http -> 192.168.1.4:80" -j KUBE-SEP-WQR67ZLSTPDXXME4
-A cali-OUTPUT -m comment --comment "cali:GBTAv2p5CwevEyJm" -j cali-fip-dnat
-A cali-POSTROUTING -m comment --comment "cali:Z-c7XtVd2Bq7s_hA" -j cali-fip-snat
-A cali-POSTROUTING -m comment --comment "cali:nYKhEzDlr11Jccal" -j cali-nat-outgoing
-A cali-PREROUTING -m comment --comment "cali:r6XmIziWUJsdOK6Z" -j cali-fip-dnat
-A cali-nat-outgoing -m comment --comment "cali:flqWnvo8yq4ULQLa" -m set --match-set cali40masq-ipam-pools src -m set ! --match-set cali40all-ipam-pools dst -j MASQUERADE --random-fully
COMMIT
```

`iptables-save` prints all current iptables rules. Kubernetes adds many of them, on almost every chain, including SNAT rules that translate addresses when a pod accesses something outside the cluster. Here we only look at the rules involved in Service load balancing.

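First, both PREROUTING and OUTPUT contain a rule that jumps to KUBE-SERVICES, so packets arriving at the node (PREROUTING) and packets generated on the node itself (OUTPUT) all pass through the KUBE-SERVICES chain.

```
-A PREROUTING -m comment --comment "kubernetes service portals" -j KUBE-SERVICES
-A OUTPUT -m comment --comment "kubernetes service portals" -j KUBE-SERVICES
```

Next, since the Service's ClusterIP is 10.105.250.129, we pick out the nat rules related to 10.105.250.129:

```
-A KUBE-SERVICES -d 10.105.250.129/32 -p tcp -m comment --comment "default/nginx-basic:http cluster IP" -m tcp --dport 80 -j KUBE-SVC-WWRFY3PZ7W3FGMQW
```

When the destination address is 10.105.250.129/32 and the destination port is 80, that is, when the traffic targets this Service's ClusterIP, the packet jumps to the KUBE-SVC-WWRFY3PZ7W3FGMQW chain. This is the per-Service chain that kube-proxy generated for nginx-basic, and it effectively acts as kube-proxy's load balancer.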

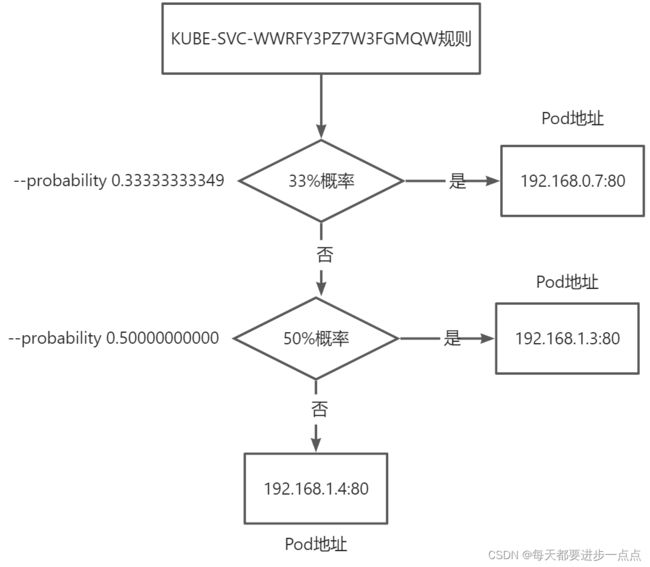

Now let's look at the KUBE-SVC-WWRFY3PZ7W3FGMQW chain:

```
-A KUBE-SVC-WWRFY3PZ7W3FGMQW -m comment --comment "default/nginx-basic:http -> 192.168.0.7:80" -m statistic --mode random --probability 0.33333333349 -j KUBE-SEP-BEMSLTMJA26ZCLYL
-A KUBE-SVC-WWRFY3PZ7W3FGMQW -m comment --comment "default/nginx-basic:http -> 192.168.1.3:80" -m statistic --mode random --probability 0.50000000000 -j KUBE-SEP-UF65ZV4QBOPNRP6O
-A KUBE-SVC-WWRFY3PZ7W3FGMQW -m comment --comment "default/nginx-basic:http -> 192.168.1.4:80" -j KUBE-SEP-WQR67ZLSTPDXXME4
```

KUBE-SVC-WWRFY3PZ7W3FGMQW contains three rules. Let's go through them one by one.

1) First rule

```
-A KUBE-SVC-WWRFY3PZ7W3FGMQW -m comment --comment "default/nginx-basic:http -> 192.168.0.7:80" -m statistic --mode random --probability 0.33333333349 -j KUBE-SEP-BEMSLTMJA26ZCLYL
# This rule jumps to the KUBE-SEP-BEMSLTMJA26ZCLYL chain:
-A KUBE-SEP-BEMSLTMJA26ZCLYL -p tcp -m comment --comment "default/nginx-basic:http" -m tcp -j DNAT --to-destination 192.168.0.7:80
# So about 1/3 (33%) of new connections are DNATed to the pod at 192.168.0.7:80
```

2) Second rule

```
-A KUBE-SVC-WWRFY3PZ7W3FGMQW -m comment --comment "default/nginx-basic:http -> 192.168.1.3:80" -m statistic --mode random --probability 0.50000000000 -j KUBE-SEP-UF65ZV4QBOPNRP6O
# This rule jumps to the KUBE-SEP-UF65ZV4QBOPNRP6O chain:
-A KUBE-SEP-UF65ZV4QBOPNRP6O -p tcp -m comment --comment "default/nginx-basic:http" -m tcp -j DNAT --to-destination 192.168.1.3:80
# The 50% only applies to connections that did not match the first rule (the remaining 2/3),
# so the pod at 192.168.1.3:80 also receives 1/2 × 2/3 = 1/3 of new connections
```

3) Third rule

```
-A KUBE-SVC-WWRFY3PZ7W3FGMQW -m comment --comment "default/nginx-basic:http -> 192.168.1.4:80" -j KUBE-SEP-WQR67ZLSTPDXXME4
# This rule jumps to the KUBE-SEP-WQR67ZLSTPDXXME4 chain:
-A KUBE-SEP-WQR67ZLSTPDXXME4 -p tcp -m comment --comment "default/nginx-basic:http" -m tcp -j DNAT --to-destination 192.168.1.4:80
# This rule has no --probability, so every connection that reaches it (the last 1/3) is DNATed to 192.168.1.4:80
```

The key piece is the KUBE-SVC-WWRFY3PZ7W3FGMQW chain: it jumps to different KUBE-SEP chains with different probabilities, each KUBE-SEP chain DNATs the packet to one pod, and the net effect is that each of the three pods receives about one third of new connections. This is how the load-balancing policy is implemented.
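Because the per-rule probabilities are conditional, they are easy to misread. The short Python check below assumes that the pattern seen in this dump generalizes (with n endpoints, rule i matches with probability 1/(n-i), and the last rule has no statistic match) and shows that every endpoint then receives the same overall share. The printed 0.33333333349 above is approximately 1/3; the sketch uses exact fractions. The function names are illustrative only.

```python
from fractions import Fraction
from typing import List


def rule_probabilities(n: int) -> List[Fraction]:
    """--probability of each rule in a KUBE-SVC-* chain with n endpoints.

    Rule i (0-based) matches with probability 1/(n - i); for the last rule this
    is 1, which corresponds to the final rule having no statistic match at all.
    """
    return [Fraction(1, n - i) for i in range(n)]


def overall_shares(probabilities: List[Fraction]) -> List[Fraction]:
    """Fraction of all new connections that end up at each endpoint."""
    shares = []
    remaining = Fraction(1)  # share of connections not yet claimed by earlier rules
    for p in probabilities:
        share = remaining * p
        shares.append(share)
        remaining -= share
    return shares


if __name__ == "__main__":
    probs = rule_probabilities(3)
    print([str(p) for p in probs])                  # ['1/3', '1/2', '1']
    print([str(s) for s in overall_shares(probs)])  # ['1/3', '1/3', '1/3']
```

In general, after i rules the remaining share is (n-i)/n, and rule i takes 1/(n-i) of it, which is exactly 1/n.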

Drawn as a diagram, the load balancing looks roughly like this:

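The example above uses a ClusterIP Service. If you change it to a NodePort Service, the iptables rules (entered through the KUBE-NODEPORTS chain) still end up at the same KUBE-SVC-WWRFY3PZ7W3FGMQW chain. In short, ClusterIP and NodePort traffic both converge on the same rules.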

4. ipvs case study

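In IPVS mode, forwarding is done in kernel space. This mode performs better when synchronizing proxy rules and delivers higher network throughput, which gives large clusters better scalability, and it offers a wide range of load-balancing algorithms.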

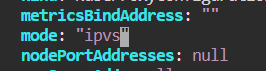

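Again, we use an example to show how ipvs forwards traffic. First, switch kube-proxy's proxy mode to ipvs by editing its ConfigMap:

```shell
$ kubectl edit configmap kube-proxy -n kube-system
configmap/kube-proxy edited
```

Change `mode` to `ipvs`, as shown in the figure. If the running kube-proxy pods do not pick up the change on their own, restart them, for example with `kubectl -n kube-system rollout restart daemonset kube-proxy` (assuming the DaemonSet is named `kube-proxy`, as in kubeadm clusters).

We then reuse the same manifest as in the iptables example and save it as ipvs.yaml: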

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
spec:
  replicas: 3
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: nginx
---
apiVersion: v1
kind: Service
metadata:
  name: nginx-basic
spec:
  type: ClusterIP
  ports:
  - port: 80
    protocol: TCP
    name: http
  selector:
    app: nginx
```

Create the Deployment and Service:

```shell
$ vim ipvs.yaml
controlplane $ kubectl create -f ipvs.yaml
deployment.apps/nginx-deployment created
service/nginx-basic created

$ kubectl get pods -o wide
NAME                                READY   STATUS    RESTARTS   AGE   IP            NODE           NOMINATED NODE   READINESS GATES
nginx-deployment-748c667d99-fpsh6   1/1     Running   0          12s   192.168.1.3   node01
nginx-deployment-748c667d99-hsnzw   1/1     Running   0          12s   192.168.0.7   controlplane
nginx-deployment-748c667d99-sb9b2   1/1     Running   0          12s   192.168.1.4   node01

$ kubectl get svc -o wide
NAME          TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)   AGE   SELECTOR
nginx-basic   ClusterIP   10.96.115.68                 80/TCP    36s   app=nginx
```

Now view the ipvs forwarding rules with `ipvsadm -Ln` (`-n` prints addresses and ports numerically):

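```shell
$ ipvsadm -Ln
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
...(omitted)...
TCP  10.96.115.68:80 rr
  -> 192.168.0.7:80               Masq    1      0          0
  -> 192.168.1.3:80               Masq    1      0          0
  -> 192.168.1.4:80               Masq    1      0          0
...(omitted)...
```

Traffic to ClusterIP:Port (10.96.115.68:80) is distributed across the 3 backend pods using the rr (round robin) scheduler. `Masq` in the Forward column means the packets are forwarded using NAT (masquerading).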
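For comparison with the iptables probability cascade, here is a minimal Python model of the `rr` scheduler, assuming the textbook behavior: real servers are chosen in turn, all non-zero weights are treated equally, and a server with weight 0 is skipped. The class name is made up. As with iptables, the choice is made when a new connection arrives; later packets of the same connection follow IPVS's connection table instead of being rescheduled.

```python
from typing import List, Tuple


class RoundRobin:
    """Simplified model of the IPVS `rr` scheduler (illustrative, not kernel code)."""

    def __init__(self, real_servers: List[Tuple[str, int]]):
        self.real_servers = real_servers  # (address:port, weight)
        self.last = -1                    # index of the previously chosen server

    def schedule(self) -> str:
        """Return the next real server after the last one, skipping weight-0 servers."""
        n = len(self.real_servers)
        for step in range(1, n + 1):
            idx = (self.last + step) % n
            address, weight = self.real_servers[idx]
            if weight > 0:
                self.last = idx
                return address
        raise RuntimeError("no real server available")


if __name__ == "__main__":
    rr = RoundRobin([("192.168.0.7:80", 1), ("192.168.1.3:80", 1), ("192.168.1.4:80", 1)])
    print([rr.schedule() for _ in range(4)])
    # ['192.168.0.7:80', '192.168.1.3:80', '192.168.1.4:80', '192.168.0.7:80']
```

Unlike the random choice in iptables, round robin spreads connections evenly in a deterministic order rather than only on average.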

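5. iptables vs ipvs

● 1. iptables is more general-purpose: it is mainly used as a firewall, but it can also be used for routing and forwarding. ipvs is more specialized: it only does load balancing and cannot provide other functions such as a firewall.

● 2. iptables evaluates rules one by one along a chain, so performance degrades as the number of rules grows; matching is O(n). ipvs performs lookups in a dedicated kernel module using hash tables, so finding the matching rule is O(1).

● 3. iptables can implement load balancing, but its policy is simple: it can only forward by random probability. ipvs supports multiple scheduling algorithms, such as rr (round robin), lc (least connection), and sh (source hashing).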
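Point 2 can be illustrated with a toy Python comparison: a list scanned entry by entry, similar to how a packet walks a chain like KUBE-SERVICES, versus a dictionary keyed by (protocol, virtual IP, port), standing in for the hash table IPVS uses to look up virtual services. The service addresses and function names are hypothetical, and the sketch ignores everything else that affects real performance, such as the cost of updating rules.

```python
from typing import Dict, List, Optional, Tuple

Key = Tuple[str, str, int]  # (protocol, virtual IP, port)


def linear_lookup(rules: List[Tuple[Key, str]], key: Key) -> Tuple[Optional[str], int]:
    """iptables-style: compare rules one by one; also return how many were checked."""
    checked = 0
    for rule_key, target in rules:
        checked += 1
        if rule_key == key:
            return target, checked
    return None, checked


def hashed_lookup(table: Dict[Key, str], key: Key) -> Optional[str]:
    """IPVS-style: one hash-table probe, independent of the number of services."""
    return table.get(key)


def make_services(n: int) -> List[Tuple[Key, str]]:
    # hypothetical ClusterIPs 10.96.x.y, one TCP port-80 service each
    return [(("tcp", f"10.96.{i // 256}.{i % 256}", 80), f"svc-{i}") for i in range(n)]


if __name__ == "__main__":
    rules = make_services(10000)
    table = dict(rules)
    last_key = rules[-1][0]
    print(linear_lookup(rules, last_key))   # ('svc-9999', 10000)
    print(hashed_lookup(table, last_key))   # svc-9999
```

With 10,000 services, `linear_lookup` checks all 10,000 entries to find the last service, while `hashed_lookup` does a single dictionary probe regardless of how many services exist.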