Ultralytics(YoloV8)开发环境配置,训练,模型转换,部署全流程测试记录

关键词:windows docker tensorRT Ultralytics YoloV8

配置开发环境的方法:

1.Windows的虚拟机上配置:

Python3.10

使用Ultralytics 可以得到pt onnx,但无法转为engine,找不到GPU,手动转也不行,找不到GPU。这个应该是需要可以支持硬件虚拟化的GPU,才能在虚拟机中使用GPU。

2.Windows 上配置:

Python3.10

Cuda 12.1

Cudnn 8.9.4

TensorRT-8.6.1.6

使用Ultralytics 可以得到pt onnx,但无法转为engine,需要手动转换。这个实际上是跑通了的。

3.Docker中的配置(推荐)

Windows上的docker

使用的是Nvidia配置好环境的docker,包括tensorflow,nvcc,等。

启动镜像:

docker run --shm-size 8G --gpus all -it --rm tensorflow/tensorflow:latest-gpu

在docker上安装libgl,Ultralytics等。

apt-get update && apt-get install libgl1

pip install ultralytics

pip install nvidia-tensorrt

然后进行提交,重新生成一个新的镜像文件:

如果不进行提交,则刚才安装的所有软件包,在重启以后就会丢失,需要重新再装一遍。

在docker desktop中可以看到所有的镜像

docker run --shm-size 8G --gpus all -it --rm yolov8:2.0

–shm-size 8G 一定要有,否则在dataloader阶段会报错,如下所示:

![]()

为了搜索引擎可以识别到这篇文章,将内容打出来:

RuntimeError: DataLoader worker (pid 181032) is killed by signal: Bus error. It is possible that dataloader’s workers are out of shared memory. Please try to raise your shared memory limit

更加详细的介绍,可以参考:https://blog.csdn.net/zywvvd/article/details/110647825

新生成的镜像,可以进行打包,在离线环境中使用。

docker save yolov8:2.0 |gzip > yolov8.tar.gz

将生成的镜像拷贝到离线环境,

docker load < yolov8.tar.gz

ultralytics 快速上手

参考:https://docs.ultralytics.com/modes/

官网的介绍很详细,按照指引,基本上可以配置成功。

模型训练:

def train():

#model = YOLO("yolov8n.yaml") # build a new model from scratch

model = YOLO("yolov8n.pt") # load a pretrained model (recommended for training)

model.train(data="coco128.yaml", epochs=3,batch=8) # train the model

metrics = model.val() # evaluate model performance on the validation set

#results = model("https://ultralytics.com/images/bus.jpg") # predict on an image

path = model.export(format="onnx") # export the model to ONNX format

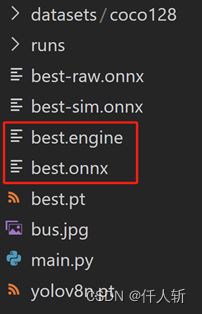

模型转换:

def eval():

model = YOLO("best.pt") # load a pretrained model (recommended for training)

model.export(format="engine",device=0,simplify=True)

model.export(format="onnx", simplify=True) # export the model to onnx format

当使用Ultralytics无法导出engine格式的文件时,需要使用tensorRT提供的trtexec进行转换。

事实上,在笔者的测试过程中,即使Ultralytics可以导出engine格式的模型,c++API的tensorrt也无法加载使用。即使python中和c++中使用的tensorRT的版本一致。

在windows平台下,我们可以使用如下的方法进行转换,可以写一个.bat脚本

@echo off

trtexec.exe --onnx=best.onnx --saveEngine=best.engine --fp16 --workspace=2048

:end

PAUSE

对于可变尺寸,需要

@echo off

trtexec.exe --onnx=best.onnx --saveEngine=best.engine --minShapes=images:1x3x640x640 --optShapes=images:8x3x640x640 --maxShapes=images:8x3x640x640 --fp16 --workspace=2048

:end

PAUSE

使用tensorrt加载engine文件进行推理

方法1:python

Python,需要安装pycuda

直接使用

pip install pycuda

进行安装。

def engineeval():

# 创建logger:日志记录器

logger = trt.Logger(trt.Logger.WARNING)

# 创建runtime并反序列化生成engine

with open("best.engine", "rb") as f, trt.Runtime(logger) as runtime:

engine = runtime.deserialize_cuda_engine(f.read())

# 创建cuda流

stream = cuda.Stream()

# 创建context并进行推理

with engine.create_execution_context() as context:

# 分配CPU锁页内存和GPU显存

h_input = cuda.pagelocked_empty(trt.volume(context.get_binding_shape(0)), dtype=np.float32)

h_output = cuda.pagelocked_empty(trt.volume(context.get_binding_shape(1)), dtype=np.float32)

d_input = cuda.mem_alloc(h_input.nbytes)

d_output = cuda.mem_alloc(h_output.nbytes)

# Transfer input data to the GPU.

cuda.memcpy_htod_async(d_input, h_input, stream)

# Run inference.

context.execute_async_v2(bindings=[int(d_input), int(d_output)], stream_handle=stream.handle)

# Transfer predictions back from the GPU.

cuda.memcpy_dtoh_async(h_output, d_output, stream)

# Synchronize the stream

stream.synchronize()

# Return the host output. 该数据等同于原始模型的输出数据

在调试界面,可以看到输入矩阵维度是1228800=13640*640

至于推理的精度,还需要传入实际的图像进行测试。这里就不在python环境下测试了。

方法2:c++

生产环境一般是c++,使用tensorrt c++ API进行engine文件的加载与推理,

参考:https://docs.nvidia.com/deeplearning/tensorrt/developer-guide/index.html#perform_inference_c

代码实现:

#include 然后可以做一些工程化的工作,比如对c++代码封装成为一个dll。后面还需要加一些前处理和后处理的步骤,将模型的结果进行解析。