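Installing Hadoop and Configuring Hue

0. Overview

When I first started learning big data, I tried building the corresponding cluster environments myself. Setting them up helped me understand each component's features, configuration, and principles.

In day-to-day study, though, I mostly use Docker to spin up environments quickly.

This post records how I set up Hadoop.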

1. Download Hadoop

Download page: Apache Hadoop

wget https://mirrors.bfsu.edu.cn/apache/hadoop/common/hadoop-2.10.1/hadoop-2.10.1.tar.gz

# Extract the archive

tar -zxvf hadoop-2.10.1.tar.gz

# Copy to /usr/hadoop/ (commented out here; HADOOP_HOME below points to /home/airwalk/bigdata/soft/hadoop-2.10.1)

# sudo mkdir /usr/hadoop/

# cp -r hadoop-2.10.1 /usr/hadoop/

# Add HADOOP_HOME

sudo vim /etc/profile

# Add the following lines, then save and exit

#HADOOP_HOME

export HADOOP_HOME=/home/airwalk/bigdata/soft/hadoop-2.10.1

export PATH=$HADOOP_HOME/bin:$PATH

export PATH=$HADOOP_HOME/sbin:$PATH

# Apply the changes

source /etc/profile
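Editing /etc/profile by hand is easy to repeat by accident. As a minimal sketch, the same lines could be appended only when they are missing. The helper name `add_hadoop_env` is hypothetical, and the demonstration writes to a scratch file rather than the real /etc/profile.

```shell
# add_hadoop_env (hypothetical helper): append the Hadoop variables to a
# profile file unless an "export HADOOP_HOME=" line is already present.
add_hadoop_env() {
    local profile="$1" hadoop_home="$2"
    if grep -q '^export HADOOP_HOME=' "$profile" 2>/dev/null; then
        echo "HADOOP_HOME already set in $profile"
        return 0
    fi
    {
        echo '#HADOOP_HOME'
        echo "export HADOOP_HOME=$hadoop_home"
        # Single quotes keep the variables unexpanded until the profile is sourced.
        echo 'export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH'
    } >> "$profile"
}

# Demonstration against a scratch file; the second call changes nothing.
demo_profile="$(mktemp)"
add_hadoop_env "$demo_profile" /home/airwalk/bigdata/soft/hadoop-2.10.1
add_hadoop_env "$demo_profile" /home/airwalk/bigdata/soft/hadoop-2.10.1
cat "$demo_profile"
```

To use it for real, the first argument would be /etc/profile and the call would need root privileges.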

# Verify the installation

hdfs version

# Output

airwalk@svr43:/usr/hadoop/hadoop-2.10.1$ hdfs version

Hadoop 2.10.1

Subversion https://github.com/apache/hadoop -r 1827467c9a56f133025f28557bfc2c562d78e816

Compiled by centos on 2020-09-14T13:17Z

Compiled with protoc 2.5.0

From source with checksum 3114edef868f1f3824e7d0f68be03650

This command was run using /home/airwalk/bigdata/soft/hadoop-2.10.1/share/hadoop/common/hadoop-common-2.10.1.jar

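# Run the bundled MapReduce grep example (the output directory must not exist beforehand)

cd ~/bigdata/soft/hadoop-2.10.1

mkdir input

cp etc/hadoop/* input

hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.10.1.jar grep input/ output '[a-z.]+'

At this point, Hadoop is installed and working on a single machine.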
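The grep example counts how often each match of the regular expression occurs in the input files. To get a feel for what it computes, here is a rough local approximation with standard tools. It is only a sketch: `local_grep_count` is a hypothetical helper, and the exact formatting of Hadoop's own output differs.

```shell
# local_grep_count (hypothetical helper): count every match of a regex across
# the files of a directory and list the most frequent matches first.
local_grep_count() {
    local dir="$1" regex="$2"
    # -o prints each match on its own line, -h drops file names, -E enables ERE.
    LC_ALL=C grep -ohE "$regex" "$dir"/* | LC_ALL=C sort | uniq -c | sort -rn
}

# Demonstration on a scratch directory with one small file.
demo_dir="$(mktemp -d)"
printf 'dfs.replication\nyarn dfs.replication\n' > "$demo_dir/sample.txt"
local_grep_count "$demo_dir" '[a-z.]+'
```

The result of the real job can be inspected with `cat output/*` from the Hadoop directory.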

2. Configuration

| | svr43 | server42 | server37 |
|---|---|---|---|
| HDFS | NameNode, DataNode | DataNode | SecondaryNameNode, DataNode |
| YARN | NodeManager | ResourceManager, NodeManager | NodeManager |

0: Passwordless SSH login

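- Generate a key pair on svr43 (the HDFS NameNode)

cd ~/.ssh

## Just press Enter at every prompt

ssh-keygen -t rsa

## Then, from the same directory, run

ssh-copy-id server42

ssh-copy-id server37

# The node also needs passwordless login to itself

ssh-copy-id svr43

- Generate a key pair on server42 and set up passwordless login to the other nodes, because this node is the YARN ResourceManager

cd ~/.ssh

## Just press Enter at every prompt

ssh-keygen -t rsa

## Then, from the same directory, run

# The node also needs passwordless login to itself

ssh-copy-id server42

ssh-copy-id server37

ssh-copy-id svr43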

!! Note: the following warning may appear

airwalk@server42:~/.ssh$ ssh svr43

Warning: the ECDSA host key for 'svr43' differs from the key for the IP address '192.168.0.43'

Offending key for IP in /home/airwalk/.ssh/known_hosts:3

Matching host key in /home/airwalk/.ssh/known_hosts:11

Are you sure you want to continue connecting (yes/no)? yes

Welcome to Ubuntu 16.04.6 LTS (GNU/Linux 4.4.0-142-generic x86_64)

# Fix: remove the stale known_hosts entry for that IP address

ssh-keygen -R 192.168.0.43

- Generate a key pair for root on svr43 (the HDFS NameNode)

# Switch to the root account

sudo su root

cd /root/.ssh

ssh-keygen -t rsa

ssh-copy-id server42

ssh-copy-id server37

ssh-copy-id svr43
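Before moving on, it helps to confirm that every node can really be reached without a password. The following is a minimal sketch; `check_passwordless_ssh` is a hypothetical helper, and the 5-second timeout is an arbitrary choice.

```shell
# check_passwordless_ssh (hypothetical helper): try a no-op command on each
# host. BatchMode=yes makes ssh fail instead of prompting for a password, so
# a host that would ask for one is reported as not configured.
check_passwordless_ssh() {
    local host failed=0
    for host in "$@"; do
        if ssh -o BatchMode=yes -o ConnectTimeout=5 "$host" true 2>/dev/null; then
            echo "$host: ok"
        else
            echo "$host: passwordless login NOT working"
            failed=1
        fi
    done
    return "$failed"
}
```

On svr43 or server42 it would be called as `check_passwordless_ssh svr43 server42 server37`; a non-zero exit status means at least one host still prompts or is unreachable.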

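1: Configure core-site.xml

hadoop.tmp.dir sets the location of temporary files. Do not put it on a disk that is too small; this setup uses the following directory

/home/airwalk/bigdata/soft/hadoop-2.10.1/data/tmp

<property>

<name>fs.defaultFS</name>

<value>hdfs://192.168.0.43:9000</value>

<!-- Alternatively, use the hostname: <value>hdfs://svr43:9000</value> -->

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/home/airwalk/bigdata/soft/hadoop-2.10.1/data/tmp</value>

</property>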
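The two properties above have to sit inside the file's `<configuration>` root element. As a sketch of what the complete file could look like, the snippet below generates it from a here-document. `write_core_site` is a hypothetical helper, and the demonstration writes to a scratch file instead of the live etc/hadoop/core-site.xml.

```shell
# write_core_site (hypothetical helper): write a complete core-site.xml with
# the NameNode address and the temporary directory filled in.
write_core_site() {
    local target="$1" namenode="$2" tmp_dir="$3"
    cat > "$target" <<EOF
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://${namenode}:9000</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>${tmp_dir}</value>
  </property>
</configuration>
EOF
}

# Demonstration: write to a scratch file and print the result.
demo_conf="$(mktemp)"
write_core_site "$demo_conf" 192.168.0.43 /home/airwalk/bigdata/soft/hadoop-2.10.1/data/tmp
cat "$demo_conf"
```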

2: HDFS configuration files

Configure hadoop-env.sh

echo $JAVA_HOME

vim hadoop-env.sh

export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64

Configure hdfs-site.xml

The cluster has three nodes, so the replication factor is set to 3

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>server37:50090</value>

</property>

3: YARN configuration

Configure yarn-env.sh

vim yarn-env.sh

export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64

Configure yarn-site.xml

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>192.168.0.42</value>

</property>

4: MapReduce configuration

Configure mapred-env.sh

vim mapred-env.sh

export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64

Configure mapred-site.xml

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>
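Hadoop 2.x ships only mapred-site.xml.template, so mapred-site.xml usually has to be created from it before the property can be added. A minimal sketch follows; `ensure_mapred_site` is a hypothetical helper, and the demonstration uses a scratch directory with a stand-in template.

```shell
# ensure_mapred_site (hypothetical helper): create mapred-site.xml from the
# shipped template if it does not exist yet; never overwrite an existing file.
ensure_mapred_site() {
    local conf_dir="$1"
    if [ -f "$conf_dir/mapred-site.xml" ]; then
        echo "mapred-site.xml already exists"
    elif [ -f "$conf_dir/mapred-site.xml.template" ]; then
        cp "$conf_dir/mapred-site.xml.template" "$conf_dir/mapred-site.xml"
        echo "created mapred-site.xml from template"
    else
        echo "no template found in $conf_dir" >&2
        return 1
    fi
}

# Demonstration in a scratch directory with a stand-in template file.
demo_conf_dir="$(mktemp -d)"
printf '<configuration>\n</configuration>\n' > "$demo_conf_dir/mapred-site.xml.template"
ensure_mapred_site "$demo_conf_dir"
ensure_mapred_site "$demo_conf_dir"
```

In this setup the argument would be /home/airwalk/bigdata/soft/hadoop-2.10.1/etc/hadoop.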

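3. Starting the daemons individually

- On the NameNode, format the file system

hdfs namenode -format

- Start the NameNode

# Either run the script from inside $HADOOP_HOME/sbin

cd sbin

./hadoop-daemon.sh start namenode

# or, equivalently, run it from $HADOOP_HOME

./sbin/hadoop-daemon.sh start namenode

- Start the DataNodes

# Run on all three machines

./sbin/hadoop-daemon.sh start datanode

- Stop

./sbin/hadoop-daemon.sh stop namenode

# Run on all three machines

./sbin/hadoop-daemon.sh stop datanode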
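After starting the daemons, the JDK's `jps` tool lists the running Java processes, which gives a quick way to confirm that the expected daemons are up on each node. The sketch below wraps that check; `missing_daemons` is a hypothetical helper.

```shell
# missing_daemons (hypothetical helper): print every expected daemon name
# that does not appear in the output of jps.
missing_daemons() {
    local running name
    running="$(jps)"
    for name in "$@"; do
        # -w matches whole words, so "NameNode" does not match "SecondaryNameNode".
        echo "$running" | grep -qw "$name" || echo "$name"
    done
}
```

On svr43 it could be called as `missing_daemons NameNode DataNode`; empty output means both daemons are running.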

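4. Starting the cluster

1: Configure slaves (edit the file on every node)

cd /home/airwalk/bigdata/soft/hadoop-2.10.1/etc/hadoop

vim slaves

# Add the hostnames of the worker nodes; spaces and blank lines are not allowed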

svr43

server42

server37
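Because the slaves file must not contain spaces or blank lines, a quick check before starting the cluster can save a confusing failure later. This is a minimal sketch; `check_slaves_file` is a hypothetical helper, and the demonstration uses scratch files.

```shell
# check_slaves_file (hypothetical helper): fail if any line is blank or
# contains whitespace; grep -n prints the offending lines with their numbers.
check_slaves_file() {
    local file="$1"
    if grep -nE '^$|[[:space:]]' "$file"; then
        echo "invalid: the lines listed above are blank or contain whitespace" >&2
        return 1
    fi
    echo "ok"
}

# Demonstration: one valid file and one with a trailing space and a blank line.
good_slaves="$(mktemp)"
printf 'svr43\nserver42\nserver37\n' > "$good_slaves"
bad_slaves="$(mktemp)"
printf 'svr43 \n\nserver37\n' > "$bad_slaves"
check_slaves_file "$good_slaves"
check_slaves_file "$bad_slaves" || echo "bad file rejected"
```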

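2: Start the HDFS cluster

This starts the NameNode and all DataNodes in the cluster automatically

# Run the following command on the HDFS NameNode

./sbin/start-dfs.sh

3: Start YARN

# This must be run on the ResourceManager node (server42)

./sbin/start-yarn.sh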

starting yarn daemons

resourcemanager running as process 10206. Stop it first.

server42: nodemanager running as process 10550. Stop it first.

svr43: starting nodemanager, logging to /home/airwalk/bigdata/soft/hadoop-2.10.1/logs/yarn-airwalk-nodemanager-svr43.out

server37: starting nodemanager, logging to /home/airwalk/bigdata/soft/hadoop-2.10.1/logs/yarn-airwalk-nodemanager-server37.out

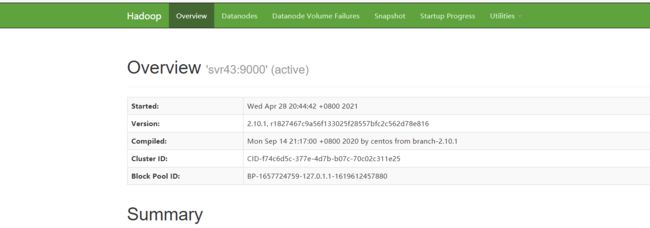

5. Viewing the web UI

Web UI of the NameNode

http://192.168.0.43:50070/

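6. Configuring Hue

Changes to the Hadoop configuration files

hdfs-site.xml

<property>

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>

core-site.xml

<property>

<name>hadoop.proxyuser.airwalk.hosts</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.airwalk.groups</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.root.hosts</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.root.groups</name>

<value>*</value>

</property>

httpfs-site.xml

<property>

<name>httpfs.proxyuser.airwalk.hosts</name>

<value>*</value>

</property>

<property>

<name>httpfs.proxyuser.airwalk.groups</name>

<value>*</value>

</property>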

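Changes to the Hue configuration file (hue.ini)

# The values below are sample values; replace them with those of your own cluster (in this setup, fs_defaultfs would be hdfs://192.168.0.43:9000 and the Hadoop paths would be under /home/airwalk/bigdata/soft/hadoop-2.10.1)

[[hdfs_clusters]]

[[[default]]]

fs_defaultfs=hdfs://mycluster

webhdfs_url=http://node1:50070/webhdfs/v1

hadoop_bin=/usr/hadoop-2.5.1/bin

hadoop_conf_dir=/usr/hadoop-2.5.1/etc/hadoop

Start HDFS, then restart Hue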
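Hue talks to HDFS through the WebHDFS REST interface, so it can be worth checking that endpoint directly once HDFS is running. The sketch below only builds the request URL; `webhdfs_url` is a hypothetical helper, port 50070 is taken from the NameNode address used earlier, and the `user.name` parameter assumes a cluster without Kerberos.

```shell
# webhdfs_url (hypothetical helper): build a WebHDFS REST URL for a given
# NameNode host, HDFS path, operation and user.
webhdfs_url() {
    local host="$1" path="$2" op="$3" user="$4"
    echo "http://${host}:50070/webhdfs/v1${path}?op=${op}&user.name=${user}"
}

url="$(webhdfs_url 192.168.0.43 / LISTSTATUS airwalk)"
echo "$url"
# Against a running cluster, the request itself would be:
#   curl -s "$url"
```

A JSON listing of the HDFS root directory in the response indicates that WebHDFS is enabled and reachable.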

Workaround for HDFS permission errors:

1. Disable HDFS permission checking

hdfs-site.xml

<property>

<name>dfs.permissions.enabled</name>

<value>false</value>

</property>

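# Run Hue in Docker, mounting the Hadoop configuration directory into the container

docker run -tid --name hue88 -p 8888:8888 -v /home/airwalk/bigdata/soft/hadoop-2.10.1/etc/hadoop:/etc/hadoop gethue/hue:latest

# Copy the edited hue.ini into the container, then restart it

docker cp hue.ini hue88:/usr/share/hue/desktop/conf/

docker restart hue88

# Open a root shell inside the container

docker exec -it --user root <container id> /bin/bash

# Install the dependencies for building Hue from source. Note: most names below (the -devel packages, gcc-c++) are RPM-style; on Ubuntu, apt-get needs the Debian package names listed in the Hue installation documentation

sudo apt-get install ant asciidoc cyrus-sasl-devel cyrus-sasl-gssapi cyrus-sasl-plain gcc gcc-c++ krb5-devel libffi-devel libxml2-devel libxslt-devel make mysql mysql-devel openldap-devel python-devel sqlite-devel gmp-devel rsync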

# Author: 一拳超疼

# Link: https://www.jianshu.com/p/a80ec32afb27

# Source: Jianshu

# Reference documentation

[Install :: Hue SQL Assistant Documentation (gethue.com)](https://docs.gethue.com/administrator/installation/install/)

# After all dependencies are installed, run the build

# /home/airwalk/bigdata/soft/hue is the target installation directory

sudo PREFIX=/home/airwalk/bigdata/soft/hue make install

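- Install Python 3.8

https://blog.csdn.net/qq_39779233/article/details/106875184

- Install npm

sudo apt install npm

npm install --unsafe-perm=true --allow-root

- Install Node.js

# Choose the package source and version. 10.x is used here; for another version, change it accordingly, e.g. 12.x (the suffix is always a literal x)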

curl -sL https://deb.nodesource.com/setup_10.x | sudo -E bash -

# Install the corresponding Node.js package

sudo apt-get install -y nodejs

# Check the installed version

node --version

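- Troubleshooting

# Fix for: gyp ERR! stack Error: EACCES: permission denied, mkdir

# npm refuses to run some scripts as root and automatically drops from root to an unprivileged user; --unsafe-perm makes it run them as the current user instead

sudo npm i --unsafe-perm

# Then, as root, run the following command

PREFIX=/home/airwalk/bigdata/soft/hue make install

The build and installation succeeded!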