K8S: Exposing Services Externally with Ingress


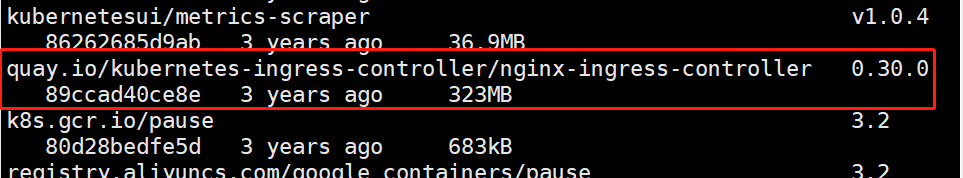


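I. Ingress Basics

1. Ingress concepts

(1) A Service plays two roles:

① Inside the cluster, it continuously tracks Pod changes and updates the Pod entries in its Endpoints object, providing service discovery for Pods whose IPs keep changing.

② Toward the outside of the cluster, it acts like a load balancer, so Pods can be reached from both inside and outside the cluster.

(2) Example of using Ingress

A common setup places a load balancer (LB) in front of Ingress to serve external traffic.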

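2. Main ways to expose a Service outside a K8S cluster

(1) NodePort

① Exposes the Service on the node network. NodePort is implemented by kube-proxy, which bridges the Service network, the Pod network, and the node network.

② It is acceptable for test environments, but once dozens or hundreds of services run in the cluster, managing NodePort ports becomes a nightmare: each port can serve only one Service, and by default the port must fall within 30000-32767 (the range can be changed with the kube-apiserver flag --service-node-port-range).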
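The port-range constraint can be checked before a manifest is written. The sketch below is a minimal illustration that assumes the default range; the function name `is_valid_nodeport` and the two variables are made up for this example and are not part of kubectl.

```shell
#!/bin/sh
# Assumption: the cluster keeps the default NodePort range (30000-32767).
NODEPORT_MIN=30000
NODEPORT_MAX=32767

# is_valid_nodeport PORT
# Exit status 0 if PORT is an integer inside the range, non-zero otherwise.
is_valid_nodeport() {
  case "$1" in
    ''|*[!0-9]*) return 1 ;;   # reject empty or non-numeric input
  esac
  [ "$1" -ge "$NODEPORT_MIN" ] && [ "$1" -le "$NODEPORT_MAX" ]
}

# Example:
#   is_valid_nodeport 30977 && echo "usable as a nodePort"
```

If the API server was started with a different --service-node-port-range, adjust the two variables to match.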

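(2) LoadBalancer

Setting the Service type to LoadBalancer maps it to a load balancer address provided by the cloud vendor. This only applies when the Service runs on a public cloud platform; it is tied to that platform, and deploying a load balancer there usually costs extra.

After the Service is submitted, Kubernetes calls the CloudProvider to create a load balancer on the public cloud and registers the IP addresses of the proxied Pods as its backends.

(3) externalIPs

A Service can be assigned external IPs. If those external IPs route to one or more Nodes in the cluster, the Service is exposed on them. Traffic entering the cluster through an external IP is routed to the Service's Endpoints.

(4) Ingress

With only one or a few public IPs and load balancers, multiple HTTP services can be exposed to the outside at once through a layer-7 reverse proxy.

Ingress can be thought of as a "Service of Services": it is a set of rules, based on domain names and URL paths, that forward user requests to one or more Services.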

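3. Components of Ingress

Ingress-Nginx GitHub repository: https://github.com/kubernetes/ingress-nginx

Ingress-Nginx official site: https://kubernetes.github.io/ingress-nginx/

(1) ingress (comparable to an nginx configuration file)

An Ingress is an API object configured through a YAML file. It defines the rules for how requests are forwarded to Services and can be thought of as a configuration template.

An Ingress exposes Services inside the cluster over HTTP or HTTPS, giving them externally reachable URLs, load balancing, SSL/TLS, and name-based reverse proxying. It relies on an ingress-controller to actually implement these features.

(2) ingress-controller (acts as the reverse proxy or forwarder)

The ingress-controller is the program that actually performs reverse proxying and load balancing. It parses the rules defined in Ingress objects and forwards requests accordingly.

The ingress-controller is not a built-in k8s component. "ingress-controller" is really an umbrella term, and users can choose among different implementations. At the time of writing, the only ones maintained by the k8s project were Google Cloud's GCE controller and ingress-nginx; many others are maintained by third parties (see the official documentation). Whichever implementation is chosen, the mechanism is much the same and only the configuration details differ.

Typically an ingress-controller runs as a Pod containing a daemon and a reverse proxy. The daemon continuously watches the cluster, generates configuration from the Ingress objects, and applies it to the reverse proxy. ingress-nginx, for example, dynamically generates the nginx configuration, dynamically updates the upstreams, and reloads nginx when needed to apply the new configuration. For convenience, the examples below all use ingress-nginx, which is maintained by the k8s project.

Summary:

The ingress-controller is the component that actually forwards traffic. It is exposed at the cluster entry point in one of several ways, and external requests to the cluster reach it first. The Ingress object tells the ingress-controller how to forward those requests, for example which domain names and URLs go to which Services.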

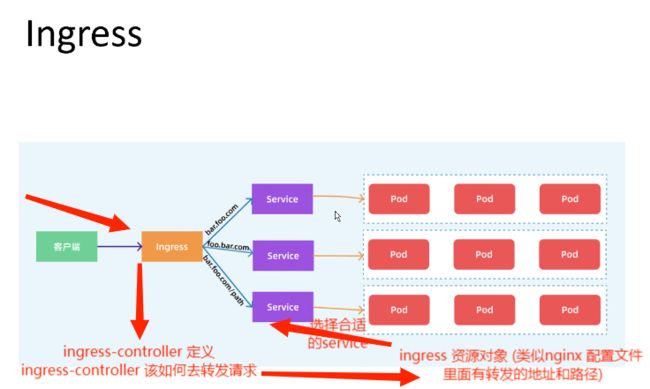

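(3) Request flow through the Ingress components

A client request first reaches the ingress-controller. The controller cannot choose a Service on its own, so it consults the rules defined in the Ingress resource objects, selects the matching Service, and the request is then passed on to a Pod.

ingress: layer 7

service: layer 4 (IP + port)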


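4. How the Ingress Controller works

(1) Diagram of the working principle

The configuration (the Ingress objects) is stored in etcd.

(2) Overview of the workflow

① The ingress-controller talks to the Kubernetes APIServer and dynamically detects changes to Ingress rules in the cluster.

② It reads those rules, which state which domain name maps to which Service, and generates a piece of nginx configuration from them.

③ The configuration is written into the nginx-ingress-controller Pod. That Pod runs an Nginx service, and the controller writes the generated configuration to /etc/nginx/nginx.conf.

④ It then reloads Nginx so the configuration takes effect. This is how per-domain configuration and dynamic updates are achieved.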
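To make step ② more concrete, the sketch below renders a heavily simplified nginx server block from one rule (host, Service name, port). It is only an illustration: `render_server_block` is a hypothetical helper, and the real ingress-nginx template is far larger and balances across Pod endpoints instead of proxying to the Service name.

```shell
#!/bin/sh
# render_server_block HOST SERVICE PORT
# Prints a simplified nginx server block for a single Ingress rule.
# Hypothetical illustration only, not the template ingress-nginx uses.
render_server_block() {
  host=$1
  service=$2
  port=$3
  cat <<EOF
## start server $host
server {
    server_name $host;
    listen 80;
    location / {
        proxy_set_header Host \$host;
        proxy_pass http://$service:$port;
    }
}
## end server $host
EOF
}

# Example:
#   render_server_block www.blue.com nginx-app-svc 80
```

The "start server" and "end server" markers mirror the comments that delimit each domain in the controller's generated nginx.conf.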

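5. Ways for Ingress to expose services

(1) Option 1: Deployment + a Service of type LoadBalancer

This option suits deployments on a public cloud. Deploy the ingress-controller with a Deployment and create a Service of type LoadBalancer that selects those Pods. Most public clouds automatically create a load balancer for a LoadBalancer Service, usually with a public address bound to it. Pointing DNS at that address is enough to expose the cluster's services externally.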

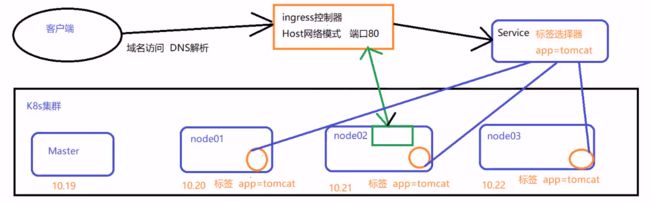

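(2) Option 2: DaemonSet + HostNetwork + nodeSelector

Use a DaemonSet together with a nodeSelector to deploy the ingress-controller onto specific nodes, then use HostNetwork to attach the Pod directly to the host node's network, so services are reachable on the host's ports 80/443. The node running the ingress-controller then resembles an edge node in a traditional architecture, such as the nginx server at a data center's entrance. This option has the simplest request path and performs better than NodePort mode. The drawback is that, because it uses the host node's network and ports directly, each node can run only one ingress-controller Pod. It is well suited to high-concurrency production environments.

Example: ingress + tomcat

Deploying the ingress-controller in DaemonSet + hostNetwork mode

Data flow in DaemonSet + hostNetwork mode:

client -> ingress-controller (the Pod shares the host's IP and ports) -> application Service -> application Pod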

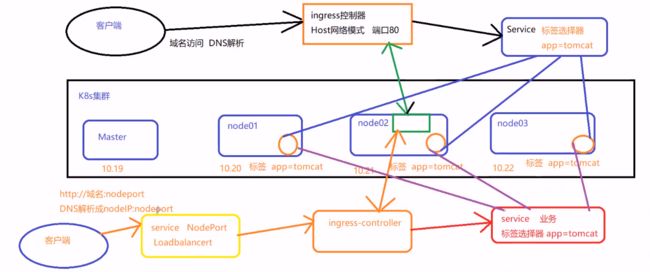

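(3) Option 3: Deployment + a Service of type NodePort

The ingress-controller is again deployed with a Deployment and a matching Service is created, but of type NodePort. The ingress is then exposed on a specific port of the cluster nodes' IPs. Because the NodePort is randomly assigned, a load balancer is usually placed in front to forward requests. This option is generally used where the hosts are relatively fixed and their IP addresses do not change. (It effectively adds one more Service hop.)

Exposing ingress through NodePort is simple and convenient, but NodePort adds a layer of NAT, which may affect performance at very high request volumes.

Example: ingress + tomcat

Deploying the ingress-controller in Deployment + NodePort mode

Data flow in Deployment + Service (NodePort or LoadBalancer) mode:

client -> ingress Service -> ingress-controller (Pod) -> application Service -> application Pod

A common problem: after an extra nginx load balancer is added in front, requests forwarded to ingress never reach the Service.

Solution: if ingress is exposed directly, terminate HTTPS at ingress. If an nginx reverse proxy sits in front, configure the SSL certificate for HTTPS on that nginx host. Paid SSL certificates typically cost three to five thousand per year.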

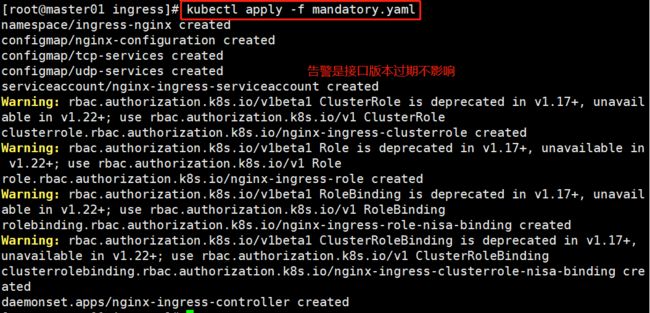

II. Deploying nginx-ingress-controller

1. Deploy the ingress-controller Pod and related resources

On the master node:

mkdir /opt/ingress

cd /opt/ingress

Official download URL:

wget https://raw.githubusercontent.com/kubernetes/ingress-nginx/nginx-0.25.0/deploy/static/mandatory.yaml

If the URL above cannot be reached, use the gitee mirror in China:

wget https://gitee.com/mirrors/ingress-nginx/raw/nginx-0.25.0/deploy/static/mandatory.yaml

wget https://gitee.com/mirrors/ingress-nginx/raw/nginx-0.30.0/deploy/static/mandatory.yaml

# mandatory.yaml creates many resources, including the Namespace, ConfigMaps, Roles, and ServiceAccount: everything needed to deploy the ingress-controller.

2. Modify the ClusterRole resource

vim mandatory.yaml

    ......
    apiVersion: rbac.authorization.k8s.io/v1beta1
    # rbac.authorization.k8s.io/v1beta1 has been deprecated since 1.17 and is removed in 1.22;
    # on newer clusters use rbac.authorization.k8s.io/v1 instead
    kind: ClusterRole
    metadata:
      name: nginx-ingress-clusterrole
      labels:
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/part-of: ingress-nginx
    rules:
      - apiGroups:
          - ""
        resources:
          - configmaps
          - endpoints
          - nodes
          - pods
          - secrets
        verbs:
          - list
          - watch
      - apiGroups:
          - ""
        resources:
          - nodes
        verbs:
          - get
      - apiGroups:
          - ""
        resources:
          - services
        verbs:
          - get
          - list
          - watch
      - apiGroups:
          - "extensions"
          - "networking.k8s.io"    # (version 0.25) add the networking.k8s.io API group for Ingress resources
        resources:
          - ingresses
        verbs:
          - get
          - list
          - watch
      - apiGroups:
          - ""
        resources:
          - events
        verbs:
          - create
          - patch
      - apiGroups:
          - "extensions"
          - "networking.k8s.io"    # (version 0.25) add the networking.k8s.io/v1 API group for Ingress resources
        resources:
          - ingresses/status
        verbs:
          - update

3. Run nginx-ingress-controller on node02 (Option 2: DaemonSet + HostNetwork + nodeSelector)

# Check whether any node already carries the ingress=true label

kubectl get node --show-labels

kubectl label node node02 ingress=true

kubectl get nodes --show-labels

4. Change the Deployment to a DaemonSet, pin it to the labeled node, and enable hostNetwork

vim mandatory.yaml

    ...
    apiVersion: apps/v1
    # Change kind
    # kind: Deployment
    kind: DaemonSet
    metadata:
      name: nginx-ingress-controller
      namespace: ingress-nginx
      labels:
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/part-of: ingress-nginx
    spec:
      # Remove replicas
      # replicas: 1
      selector:
        matchLabels:
          app.kubernetes.io/name: ingress-nginx
          app.kubernetes.io/part-of: ingress-nginx
      template:
        metadata:
          labels:
            app.kubernetes.io/name: ingress-nginx
            app.kubernetes.io/part-of: ingress-nginx
          annotations:
            prometheus.io/port: "10254"
            prometheus.io/scrape: "true"
        spec:
          # Add hostNetwork and nodeSelector below
          # Use the host network
          hostNetwork: true
          # Run only on nodes labeled ingress=true
          nodeSelector:
            ingress: "true"
          serviceAccountName: nginx-ingress-serviceaccount
    ......

5. Upload the nginx-ingress-controller image archive ingree.contro.tar.gz to the /opt/ingress directory on every node, then extract it and load the image

On node02:

cd /opt

tar zxvf ingree.contro.tar.gz

docker load -i ingree.contro.tar

scp ingree.contro.tar node01:/opt/

On node01:

cd /opt

docker load -i ingree.contro.tar

docker images

6. Start nginx-ingress-controller

On the master node:

# Warnings printed here can be ignored

cd /opt/ingress/

kubectl apply -f mandatory.yaml

# nginx-ingress-controller is now running on node02

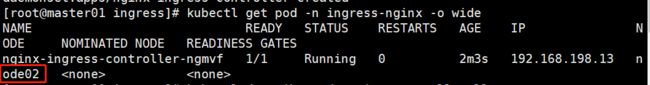

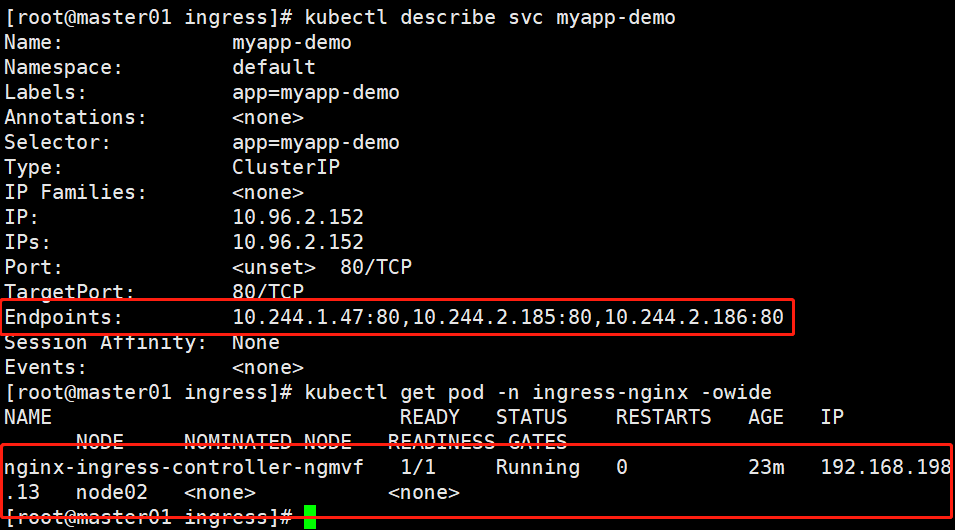

kubectl get pod -n ingress-nginx -o wide

    NAME                             READY   STATUS    RESTARTS   AGE    IP               NODE     NOMINATED NODE   READINESS GATES
    nginx-ingress-controller-ngmvf   1/1     Running   0          2m3s   192.168.198.13   node02   <none>           <none>

kubectl describe pod -n ingress-nginx

If this reports an error such as "bind() to 0.0.0.0:80 failed", inspect the logs with kubectl logs -n ingress-nginx nginx-ingress-controller-ngmvf

Then check on the node whether port 80 is already in use, and stop whatever is occupying it:

lsof -i:80

kubectl get cm,daemonset -n ingress-nginx -o wide

    NAME                                        DATA   AGE
    configmap/ingress-controller-leader-nginx   0      12m
    configmap/kube-root-ca.crt                  1      12m
    configmap/nginx-configuration               0      12m
    configmap/tcp-services                      0      12m
    configmap/udp-services                      0      12m

    NAME                                      DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR   AGE   CONTAINERS                 IMAGES                                                                  SELECTOR
    daemonset.apps/nginx-ingress-controller   1         1         1       1            1           ingress=true    12m   nginx-ingress-controller   quay.io/kubernetes-ingress-controller/nginx-ingress-controller:0.25.0   app.kubernetes.io/name=ingress-nginx,app.kubernetes.io/part-of=ingress-nginx

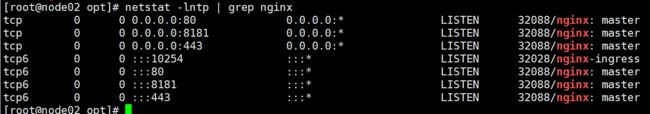

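On node02:

netstat -lntp | grep nginx

Because hostNetwork is enabled, nginx now listens on ports 80/443/8181 directly on the node. Port 8181 is the default backend configured by nginx-controller (traffic is directed there when no rule in any Ingress resource matches). So as long as the node has a public IP, a domain name can be pointed at it to expose services to the internet. For a highly available nginx, deploy it on multiple nodes and put LVS + keepalived in front as a load balancer.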

7. Create Ingress rules

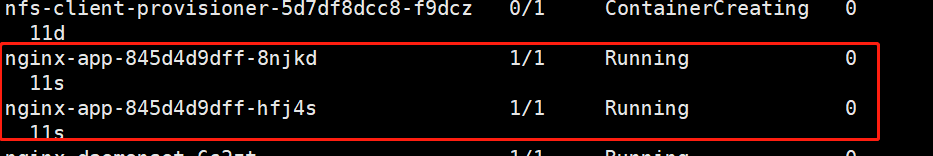

(1) Create a Deployment and a Service

On the master node:

# Dry run

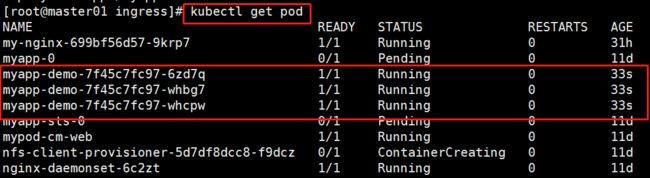

kubectl create deployment myapp-demo --image=soscscs/myapp:v1 --replicas=3 --port=80

kubectl get pod

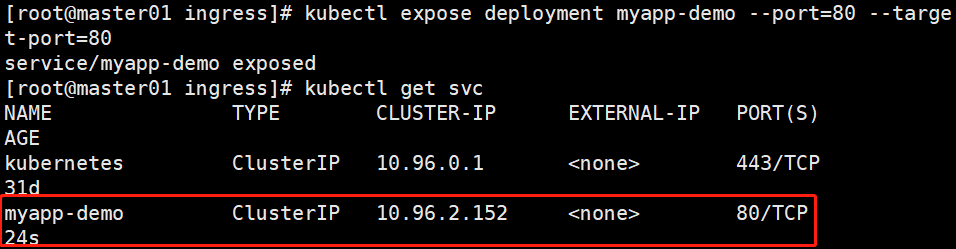

kubectl expose deployment myapp-demo --port=80 --target-port=80

kubectl get svc

kubectl describe svc myapp-demo

kubectl get pod -n ingress-nginx -owide

vim service-nginx.yaml

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx-app
    spec:
      replicas: 2
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
          - name: nginx
            image: nginx
            imagePullPolicy: IfNotPresent
            ports:
            - containerPort: 80
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: nginx-app-svc
    spec:
      type: ClusterIP
      ports:
      - protocol: TCP
        port: 80
        targetPort: 80
      selector:
        app: nginx

(2) Create the Ingress manifest

Method 2 is the one used here.

# Method 1: (extensions/v1beta1 Ingress has been deprecated since 1.14 and is removed in 1.22)

vim ingress-app.yaml

    apiVersion: extensions/v1beta1
    kind: Ingress
    metadata:
      name: nginx-app-ingress
    spec:
      rules:
      - host: www.blue.com
        http:
          paths:
          - path: /
            backend:
              serviceName: nginx-app-svc
              servicePort: 80

# Method 2:

vim ingress-app.yaml

    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: nginx-app-ingress
    spec:
      rules:
      - host: www.blue.com
        http:
          paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: nginx-app-svc
                port:
                  number: 80

kubectl apply -f service-nginx.yaml

kubectl apply -f ingress-app.yaml

kubectl get pods

kubectl get svc

kubectl get ingress

8. Test access

Add the domain to the local hosts file

vim /etc/hosts

# Add the node02 address and the domain, in the form: IP-address www.blue.com

192.168.198.13 www.blue.com

curl www.blue.com
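For a quick check that leaves /etc/hosts untouched, curl's --resolve option can pin a host name to the node IP for a single request. The helper below only prints the command; `curl_via_ingress` is a made-up name for this sketch, and the default port of 80 assumes the hostNetwork deployment described in this section.

```shell
#!/bin/sh
# curl_via_ingress HOST NODE_IP [PORT]
# Prints a curl command that maps HOST to NODE_IP for this request only.
# PORT defaults to 80 (assumption: the controller runs with hostNetwork).
curl_via_ingress() {
  host=$1
  node_ip=$2
  port=${3:-80}
  printf 'curl --resolve %s:%s:%s http://%s:%s/\n' \
    "$host" "$port" "$node_ip" "$host" "$port"
}

# Example with the values from this walkthrough:
#   eval "$(curl_via_ingress www.blue.com 192.168.198.13)"
```

Because the Host header still carries the domain name, the controller matches the same Ingress rule as it would with a hosts-file entry.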

# To add another service, repeat the same steps

kubectl create deployment myapp-sun --image=soscscs/myapp:v2 --replicas=3 --port=80

kubectl get pod

# Expose it as well

kubectl expose deployment myapp-sun --port=8080 --target-port=80

# If the following error appears, the Service already exists:

Error from server (AlreadyExists): services "myapp-sun" already exists

# Fix:

① Check whether it already exists

kubectl get svc

② If it does, delete it (duplicate names should not occur in production)

kubectl delete svc myapp-sun

③ Expose the Deployment again

kubectl expose deployment myapp-sun --port=8080 --target-port=80

# Check again

kubectl get svc

# Edit the manifest

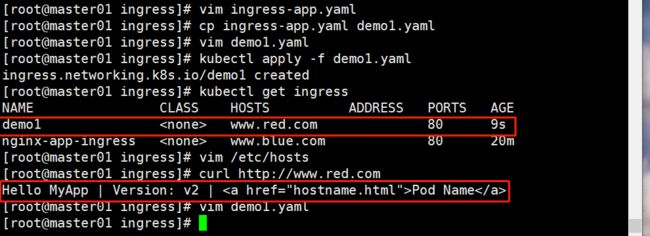

cp ingress-app.yaml demo1.yaml

vim demo1.yaml

    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: demo1
    spec:
      rules:
      - host: www.red.com
        http:
          paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: myapp-sun
                port:
                  number: 8080    # must match the Service port exposed above (--port=8080)

kubectl apply -f demo1.yaml

kubectl get ingress

vim /etc/hosts

192.168.198.13 www.red.com

curl http://www.red.com

External access only requires mapping the host name to the node IP.

9. Inspect nginx-ingress-controller

kubectl get pod -n ingress-nginx -o wide

    NAME                             READY   STATUS    RESTARTS   AGE   IP               NODE     NOMINATED NODE   READINESS GATES
    nginx-ingress-controller-ngmvf   1/1     Running   0          50m   192.168.198.13   node02   <none>           <none>

kubectl exec -it nginx-ingress-controller-ngmvf -n ingress-nginx -- /bin/bash

# more /etc/nginx/nginx.conf

// The section between "start server www.blue.com" and "end server www.blue.com" holds the reverse proxy configuration for this domain

III. Option 3: Deployment + a Service of type NodePort

1. Download the nginx-ingress-controller manifest and the ingress-nginx port-exposure manifest

mkdir /opt/ingress-nodeport

cd /opt/ingress-nodeport

Official download URLs:

wget https://raw.githubusercontent.com/kubernetes/ingress-nginx/nginx-0.30.0/deploy/static/mandatory.yaml

wget https://raw.githubusercontent.com/kubernetes/ingress-nginx/nginx-0.30.0/deploy/static/provider/baremetal/service-nodeport.yaml

gitee mirror URLs in China:

wget https://gitee.com/mirrors/ingress-nginx/raw/nginx-0.30.0/deploy/static/mandatory.yaml

wget https://gitee.com/mirrors/ingress-nginx/raw/nginx-0.30.0/deploy/static/provider/baremetal/service-nodeport.yaml

2. Upload the image archive ingress-controller-0.30.0.tar to the /opt/ingress-nodeport directory on every node and load the image

tar zxvf ingree.contro-0.30.0.tar.gz

docker load -i ingree.contro-0.30.0.tar

docker images

3. Start nginx-ingress-controller

kubectl apply -f mandatory.yaml

kubectl apply -f service-nodeport.yaml

If Pod scheduling fails and kubectl describe pod shows:

Warning FailedScheduling 18s (x2 over 18s) default-scheduler 0/2 nodes are available: 2 node(s) didn't match node selector

Solutions:

1. Add the required label to the node that should run the Pod. For the YAML file above, for example:

kubectl label nodes node_name kubernetes.io/os=linux

2. If there is no requirement on which node is used, simply delete the nodeSelector from the YAML file.
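The scheduler's nodeSelector check is a plain "every key=value pair must be present" test, which the following sketch imitates on comma-separated label strings such as the LABELS column of kubectl get nodes --show-labels. `selector_matches` is a hypothetical helper written for this example, not a kubectl feature.

```shell
#!/bin/sh
# selector_matches LABELS SELECTOR
# LABELS and SELECTOR are comma-separated key=value lists.
# Succeeds only if every selector pair appears among the node labels.
selector_matches() {
  labels=",$1,"
  old_ifs=$IFS
  IFS=,
  for pair in $2; do
    case "$labels" in
      *",$pair,"*) ;;                       # this pair is present, keep going
      *) IFS=$old_ifs; return 1 ;;          # missing pair: the node is rejected
    esac
  done
  IFS=$old_ifs
  return 0
}

# Example:
#   selector_matches 'kubernetes.io/hostname=node01' 'kubernetes.io/os=linux' \
#     || echo "node would be rejected: label kubernetes.io/os=linux is missing"
```

A node that fails this test for every candidate is what produces the "didn't match node selector" event.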

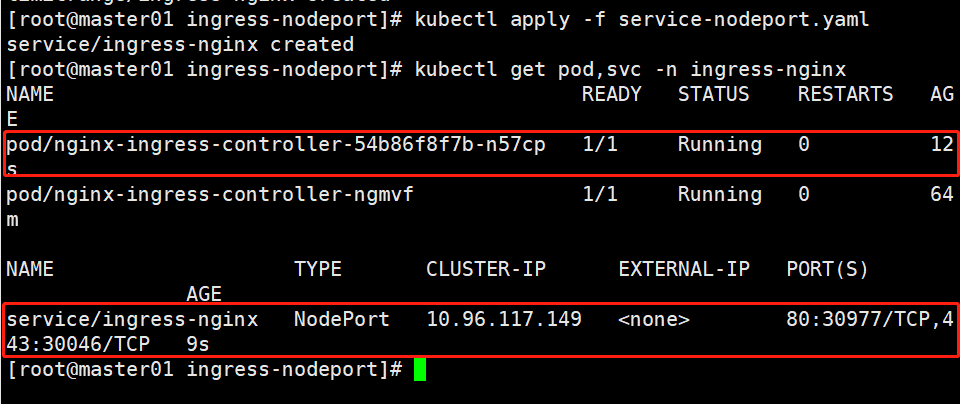

kubectl get pod,svc -n ingress-nginx

    NAME                                            READY   STATUS    RESTARTS   AGE
    pod/nginx-ingress-controller-54b86f8f7b-n57cp   1/1     Running   0          12s

    NAME                    TYPE       CLUSTER-IP      EXTERNAL-IP   PORT(S)                      AGE
    service/ingress-nginx   NodePort   10.96.117.149   <none>        80:30977/TCP,443:30046/TCP   9s

4. Ingress HTTP proxy access

Create the Deployment, Service, and Ingress YAML resources

cd /opt/ingress-nodeport

vim ingress-nginx.yaml

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx-myapp
    spec:
      replicas: 2
      selector:
        matchLabels:
          name: nginx
      template:
        metadata:
          labels:
            name: nginx
        spec:
          containers:
          - name: nginx
            image: nginx
            imagePullPolicy: IfNotPresent
            ports:
            - containerPort: 80
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: nginx-svc
    spec:
      ports:
      - port: 80
        targetPort: 80
        protocol: TCP
      selector:
        name: nginx
    ---
    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: nginx-test
    spec:
      rules:
      - host: www.long.com
        http:
          paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: nginx-svc
                port:
                  number: 80

kubectl apply -f ingress-nginx.yaml

kubectl get pods,svc -o wide

    pod/nginx-myapp-57dd86f5cc-wggmp   1/1   Running   0   5s   10.244.2.190   node01   <none>   <none>
    pod/nginx-myapp-57dd86f5cc-znt7n   1/1   Running   0   5s   10.244.1.51    node02   <none>   <none>

    service/nginx-svc   ClusterIP   10.96.54.120   <none>   80/TCP   5s   name=nginx

kubectl exec -it pod/nginx-myapp-57dd86f5cc-wggmp -- bash

# cd /usr/share/nginx/html/

# echo 'this is web1' >> index.html

kubectl exec -it pod/nginx-myapp-57dd86f5cc-znt7n -- bash

# cd /usr/share/nginx/html/

# echo 'this is web2' >> index.html

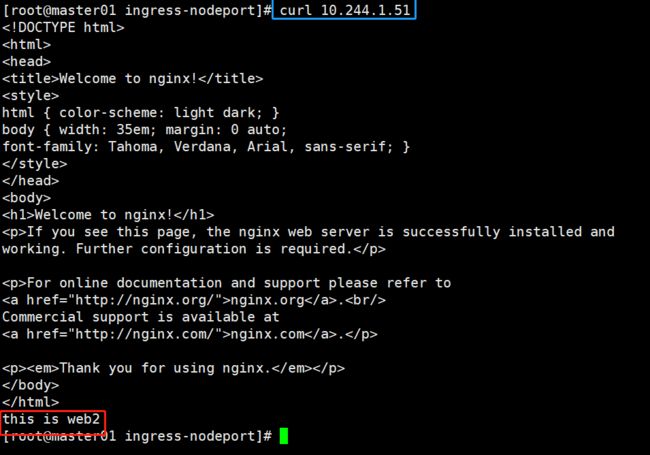

5. Test access

curl 10.244.2.190

curl 10.244.1.51

kubectl get svc -n ingress-nginx

    NAME            TYPE       CLUSTER-IP      EXTERNAL-IP   PORT(S)                      AGE
    ingress-nginx   NodePort   10.96.117.149   <none>        80:30977/TCP,443:30046/TCP   7m36s

# Add the domain to the local hosts file

vim /etc/hosts

# Add the domain mapping

192.168.198.13 www.red.com www.long.com

# External access (plain HTTP goes through the NodePort mapped to port 80)

curl http://www.long.com:30977
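Picking the wrong NodePort is an easy mistake here: in the PORT(S) column, 80:30977/TCP is the plain-HTTP mapping and 443:30046/TCP is the HTTPS one. The sketch below extracts the NodePort for a given Service port from that column; `nodeport_for` is a hypothetical helper name used only in this example.

```shell
#!/bin/sh
# nodeport_for PORTS SERVICE_PORT
# PORTS is the PORT(S) column of `kubectl get svc`,
# for example "80:30977/TCP,443:30046/TCP".
# Prints the NodePort mapped to SERVICE_PORT; fails if there is none.
nodeport_for() {
  old_ifs=$IFS
  IFS=,
  # After this line $1 is the wanted Service port, the rest are the mappings.
  set -- "$2" $1
  IFS=$old_ifs
  wanted=$1
  shift
  for mapping in "$@"; do
    case "$mapping" in
      "$wanted":*)
        nodeport=${mapping#*:}             # e.g. "30977/TCP"
        printf '%s\n' "${nodeport%%/*}"    # strip the protocol suffix
        return 0
        ;;
    esac
  done
  return 1
}

# Example:
#   curl "http://www.long.com:$(nodeport_for '80:30977/TCP,443:30046/TCP' 80)"
```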

6. Ingress HTTP proxy access to virtual hosts

mkdir /opt/ingress-nodeport/vhost

cd /opt/ingress-nodeport/vhost

# Create the resources for virtual host 1

vim deployment1.yaml

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: deployment1
    spec:
      replicas: 2
      selector:
        matchLabels:
          name: nginx1
      template:
        metadata:
          labels:
            name: nginx1
        spec:
          containers:
          - name: nginx1
            image: soscscs/myapp:v1
            imagePullPolicy: IfNotPresent
            ports:
            - containerPort: 80
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: svc-1
    spec:
      ports:
      - port: 80
        targetPort: 80
        protocol: TCP
      selector:
        name: nginx1

kubectl apply -f deployment1.yaml

# Create the resources for virtual host 2

vim deployment2.yaml

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: deployment2
    spec:
      replicas: 2
      selector:
        matchLabels:
          name: nginx2
      template:
        metadata:
          labels:
            name: nginx2
        spec:
          containers:
          - name: nginx2
            image: soscscs/myapp:v2
            imagePullPolicy: IfNotPresent
            ports:
            - containerPort: 80
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: svc-2
    spec:
      ports:
      - port: 80
        targetPort: 80
        protocol: TCP
      selector:
        name: nginx2

kubectl apply -f deployment2.yaml

# Create the Ingress resources

vim ingress-nginx.yaml

    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: ingress1
    spec:
      rules:
      - host: www1.mcl.com
        http:
          paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: svc-1
                port:
                  number: 80
    ---
    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: ingress2
    spec:
      rules:
      - host: www2.mcl.com
        http:
          paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: svc-2
                port:
                  number: 80

kubectl apply -f ingress-nginx.yaml

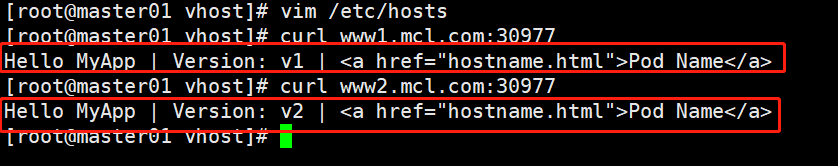

7. Test access

kubectl get svc -n ingress-nginx

    NAME            TYPE       CLUSTER-IP      EXTERNAL-IP   PORT(S)                      AGE
    ingress-nginx   NodePort   10.96.117.149   <none>        80:30977/TCP,443:30046/TCP   11m

# Map the host names

vim /etc/hosts

192.168.198.13 www1.mcl.com www2.mcl.com

curl www1.mcl.com:30977

curl www2.mcl.com:30977

8. Ingress HTTPS proxy access

mkdir /opt/ingress-nodeport/https

cd /opt/ingress-nodeport/https

(1) Create an SSL certificate

openssl req -x509 -sha256 -nodes -days 365 -newkey rsa:2048 -keyout tls.key -out tls.crt -subj "/CN=nginxsvc/O=nginxsvc"

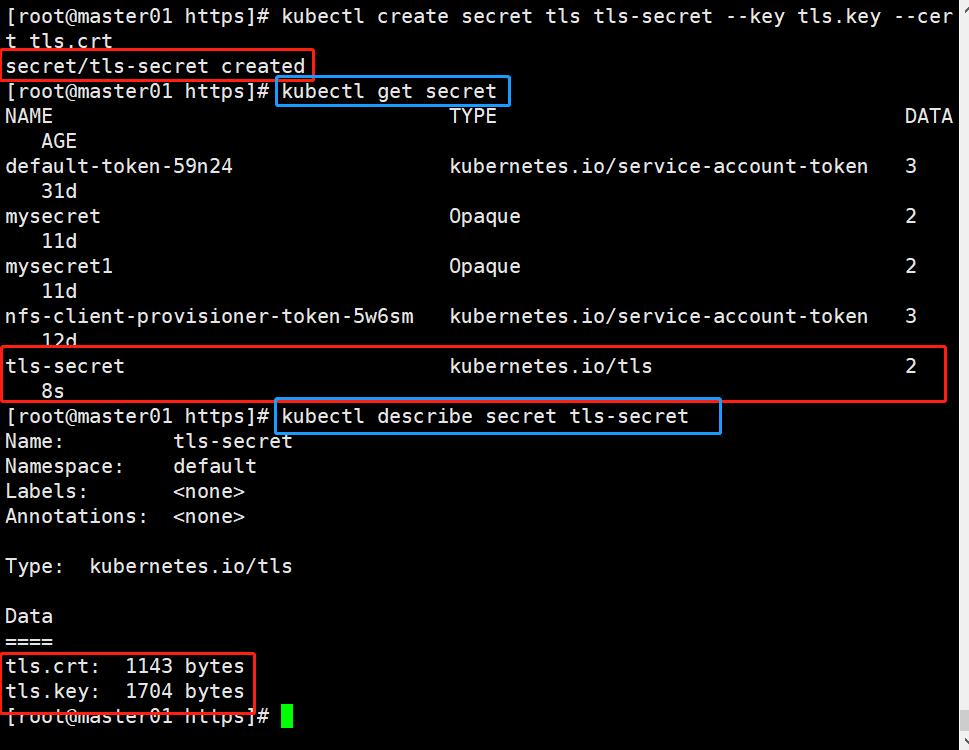
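The certificate above uses CN=nginxsvc, which does not match the host served by the Ingress, so clients will flag a name mismatch in addition to the self-signed warning. As a hedged alternative, the sketch below builds a subject whose CN is the Ingress host and adds a matching subjectAltName. The helper names `cert_subject` and `make_ingress_cert` are made up for this example, and the -addext option assumes OpenSSL 1.1.1 or newer.

```shell
#!/bin/sh
# cert_subject HOST
# Prints a subject string whose CN matches the Ingress host.
cert_subject() {
  printf '/CN=%s/O=%s' "$1" "$1"
}

# make_ingress_cert HOST
# Writes tls.key and tls.crt into the current directory.
# Assumption: the local openssl is 1.1.1+ (needed for -addext).
make_ingress_cert() {
  openssl req -x509 -sha256 -nodes -days 365 -newkey rsa:2048 \
    -keyout tls.key -out tls.crt \
    -subj "$(cert_subject "$1")" \
    -addext "subjectAltName=DNS:$1"
}

# Example:
#   make_ingress_cert www3.blue.com
```

The certificate is still self-signed, so browsers keep warning about the issuer; only the host-name mismatch goes away.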

(2) Create a Secret to store the certificate

kubectl create secret tls tls-secret --key tls.key --cert tls.crt

kubectl get secret

kubectl describe secret tls-secret

(3) Create the Deployment, Service, and Ingress YAML resources

vim ingress-https.yaml

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx-app
    spec:
      replicas: 2
      selector:
        matchLabels:
          name: nginx
      template:
        metadata:
          labels:
            name: nginx
        spec:
          containers:
          - name: nginx
            image: nginx
            imagePullPolicy: IfNotPresent
            ports:
            - containerPort: 80
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: nginx-svc
    spec:
      ports:
      - port: 80
        targetPort: 80
        protocol: TCP
      selector:
        name: nginx
    ---
    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: nginx-https
    spec:
      tls:
        - hosts:
          - www3.blue.com    # must match the host in the rule below
          secretName: tls-secret
      rules:
        - host: www3.blue.com
          http:
            paths:
            - path: /
              pathType: Prefix
              backend:
                service:
                  name: nginx-svc
                  port:
                    number: 80

kubectl apply -f ingress-https.yaml

kubectl get svc -n ingress-nginx

    NAME            TYPE       CLUSTER-IP      EXTERNAL-IP   PORT(S)                      AGE
    ingress-nginx   NodePort   10.96.117.149   <none>        80:30977/TCP,443:30046/TCP   22m

kubectl get ingress

nginx-https www3.blue.com 80, 443 14m

# Test access

On the host machine, add the record 192.168.198.13 www3.blue.com to C:\Windows\System32\drivers\etc\hosts.

Open https://www3.blue.com:30046 in Google Chrome

9. BasicAuth with Nginx

mkdir /opt/ingress-nodeport/basic-auth

cd /opt/ingress-nodeport/basic-auth

(1) Generate the user/password file and store it in a Secret

yum -y install httpd

htpasswd -c auth mcl # the file must be named auth

kubectl create secret generic basic-auth --from-file=auth

kubectl get secrets

kubectl describe secrets basic-auth
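Because --from-file uses the file name as the Secret key, a misnamed or malformed file only shows up later as failed logins. The sketch below checks both points before the Secret is created; `check_auth_file` is a hypothetical helper, and it only validates the user:hash shape of each line, not the hash itself.

```shell
#!/bin/sh
# check_auth_file PATH
# Succeeds if PATH is named "auth", is non-empty, and every non-blank
# line has the form user:hash.
check_auth_file() {
  [ "$(basename "$1")" = auth ] || return 1
  [ -s "$1" ] || return 1
  # grep -v selects lines that are neither blank nor "user:hash";
  # if any such line exists, the check fails.
  ! grep -Ev '^([^:]+:.+)?$' "$1" >/dev/null
}

# Example:
#   check_auth_file ./auth && kubectl create secret generic basic-auth --from-file=auth
```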

(2) Create the Ingress resource

vim ingress-auth.yaml

    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: ingress-auth
      annotations:
        # Set the authentication type to basic
        nginx.ingress.kubernetes.io/auth-type: basic
        # Name of the Secret that holds the credentials: basic-auth
        nginx.ingress.kubernetes.io/auth-secret: basic-auth
        # Message shown in the authentication prompt
        nginx.ingress.kubernetes.io/auth-realm: 'Authentication Required - mcl'
    spec:
      rules:
      - host: auth.mcl.com
        http:
          paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: nginx-svc
                port:
                  number: 80

// For the full set of options, see the official site: https://kubernetes.github.io/ingress-nginx/examples/auth/basic/

kubectl apply -f ingress-auth.yaml

(3) Test access

kubectl get svc -n ingress-nginx

    ingress-nginx   NodePort   10.96.117.149   <none>   80:30977/TCP,443:30046/TCP   64m

echo '192.168.198.13 auth.mcl.com' >> /etc/hosts

Open in a browser: http://auth.mcl.com:30977

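10. Rewrites with Nginx

(1) metadata.annotations reference

nginx.ingress.kubernetes.io/rewrite-target: <string> # target URI to which traffic must be redirected

nginx.ingress.kubernetes.io/ssl-redirect: <boolean> # whether the location is accessible only over SSL (defaults to true when the Ingress contains a certificate)

nginx.ingress.kubernetes.io/force-ssl-redirect: <boolean> # force a redirect to HTTPS even when TLS is not enabled on the Ingress

nginx.ingress.kubernetes.io/app-root: <string> # application root the Controller must redirect to when the request is for the '/' context

nginx.ingress.kubernetes.io/use-regex: <boolean> # whether the paths defined on the Ingress use regular expressions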
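Besides redirecting to a full URL, rewrite-target is often combined with a regex path and capture groups, for example path /app(/|$)(.*) with rewrite-target /$2. The sketch below imitates that substitution with sed so the effect on a URI can be seen without a cluster. The /app prefix and the helper name `rewrite_path` are hypothetical examples, and sed only approximates what nginx does.

```shell
#!/bin/sh
# rewrite_path URI
# Mimics an Ingress with path "/app(/|$)(.*)" and rewrite-target "/$2":
# the /app prefix is stripped and the rest of the path is kept.
rewrite_path() {
  printf '%s\n' "$1" | sed -E 's#^/app(/|$)(.*)#/\2#'
}

# Example:
#   rewrite_path /app/users/1
```

A URI that does not start with /app is printed unchanged here; in a real cluster such a request would simply not match this rule.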

(2) Write ingress-rewrite.yaml

vim ingress-rewrite.yaml

    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: nginx-rewrite
      annotations:
        # Redirect target: www1.mcl.com on the HTTP NodePort of the ingress-nginx Service
        nginx.ingress.kubernetes.io/rewrite-target: http://www1.mcl.com:30977
    spec:
      rules:
      - host: re.mcl.com
        http:
          paths:
          - path: /
            pathType: Prefix
            backend:
              # re.mcl.com is only used for the redirect and needs no real site behind it, so the Service name can be arbitrary
              service:
                name: nginx-svc
                port:
                  number: 80

(3) Test access

kubectl apply -f ingress-rewrite.yaml

echo '192.168.198.13 re.mcl.com' >> /etc/hosts

Open in a browser: http://re.mcl.com:30977

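Summary

Ingress is the request entry point of a k8s cluster and can be seen as a further abstraction over multiple Services.

"Ingress" usually refers to two parts: the Ingress resource object and the ingress-controller.

There are many ingress-controller implementations; the community-native one is ingress-nginx. Choose according to your needs.

Ingress itself can be exposed in several ways; pick the one that fits the infrastructure and the type of workload.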