TiDB 5.4 Distributed Installation and Deployment (Part 1)

| Host | Components |
| --- | --- |
| 192.168.31.28 | pd, tidb, tikv, tiflash, monitoring, grafana, alertmanager |
| 192.168.31.196 | pd, tidb, tikv, tiflash |
| 192.168.31.112 | pd, tidb, tikv |

一、安装

1、执行如下命令安装 TiUP 工具:

curl --proto '=https' --tlsv1.2 -sSf https://tiup-mirrors.pingcap.com/install.sh | sh2、设置 TiUP 环境变量:

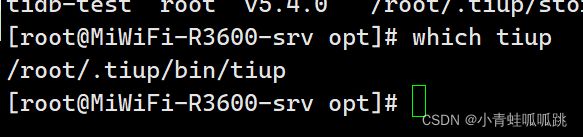

/root/.tiup/bin3、安装 TiUP cluster 组件:

tiup cluster4、如果已经安装,则更新 TiUP cluster 组件至最新版本

tiup update --self && tiup update cluster预期输出 “Update successfully!” 字样。
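Steps 3 and 4 only work if the shell can find the tiup binary. The sketch below is one way to check that the directory from step 2 is on PATH. `path_contains` is a hypothetical helper, not part of TiUP, and `/root/.tiup/bin` is assumed because the installation here was done as root.

```shell
#!/bin/sh
# path_contains DIR: succeed if DIR is one of the colon-separated PATH entries.
# (hypothetical helper for illustration, not a TiUP command)
path_contains() {
    case ":$PATH:" in
        *":$1:"*) return 0 ;;
        *) return 1 ;;
    esac
}

# Assumed install location for a root install, as shown in step 2.
TIUP_BIN_DIR="${TIUP_BIN_DIR:-/root/.tiup/bin}"

if path_contains "$TIUP_BIN_DIR"; then
    echo "PATH already contains $TIUP_BIN_DIR"
else
    echo "PATH is missing $TIUP_BIN_DIR; add it with: export PATH=$TIUP_BIN_DIR:\$PATH"
fi
```

If the second message appears, reloading the shell profile that the installer modified, or exporting PATH as printed, should make `tiup` resolvable.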

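5. Verify the current TiUP cluster version. Run the following command to view the version of the TiUP cluster component:

tiup --binary cluster

II. Initialize the cluster topology file

Generate the template with one of the following commands:

tiup cluster template > topology.yaml

#Deploy multiple instances on a single machine

tiup cluster template --full > topology.yaml

#Deploy a TiDB cluster across data centers

tiup cluster template --multi-dc > topology.yaml

Run vi topology.yaml and edit the file as follows: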

# # Global variables are applied to all deployments and used as the default value of
# # the deployments if a specific deployment value is missing.
global:
  # # The user who runs the tidb cluster.
  user: "root"
  # # group is used to specify the group name the user belong to if it's not the same as user.
  # group: "tidb"
  # # SSH port of servers in the managed cluster.
  ssh_port: 22
  # # Storage directory for cluster deployment files, startup scripts, and configuration files.
  deploy_dir: "/tidb-deploy"
  # # TiDB Cluster data storage directory
  data_dir: "/tidb-data"
  # # Supported values: "amd64", "arm64" (default: "amd64")
  arch: "amd64"
  # # Resource Control is used to limit the resource of an instance.
  # # See: https://www.freedesktop.org/software/systemd/man/systemd.resource-control.html
  # # Supports using instance-level `resource_control` to override global `resource_control`.
  # resource_control:
  #   # See: https://www.freedesktop.org/software/systemd/man/systemd.resource-control.html#MemoryLimit=bytes
  #   memory_limit: "2G"
  #   # See: https://www.freedesktop.org/software/systemd/man/systemd.resource-control.html#CPUQuota=
  #   # The percentage specifies how much CPU time the unit shall get at maximum, relative to the total CPU time available on one CPU. Use values > 100% for allotting CPU time on more than one CPU.
  #   # Example: CPUQuota=200% ensures that the executed processes will never get more than two CPU time.
  #   cpu_quota: "200%"
  #   # See: https://www.freedesktop.org/software/systemd/man/systemd.resource-control.html#IOReadBandwidthMax=device%20bytes
  #   io_read_bandwidth_max: "/dev/disk/by-path/pci-0000:00:1f.2-scsi-0:0:0:0 100M"
  #   io_write_bandwidth_max: "/dev/disk/by-path/pci-0000:00:1f.2-scsi-0:0:0:0 100M"

# # Monitored variables are applied to all the machines.
monitored:
  # # The communication port for reporting system information of each node in the TiDB cluster.
  node_exporter_port: 9100
  # # Blackbox_exporter communication port, used for TiDB cluster port monitoring.
  blackbox_exporter_port: 9115
  # # Storage directory for deployment files, startup scripts, and configuration files of monitoring components.
  # deploy_dir: "/tidb-deploy/monitored-9100"
  # # Data storage directory of monitoring components.
  # data_dir: "/tidb-data/monitored-9100"
  # # Log storage directory of the monitoring component.
  # log_dir: "/tidb-deploy/monitored-9100/log"

# # Server configs are used to specify the runtime configuration of TiDB components.
# # All configuration items can be found in TiDB docs:
# # - TiDB: https://pingcap.com/docs/stable/reference/configuration/tidb-server/configuration-file/
# # - TiKV: https://pingcap.com/docs/stable/reference/configuration/tikv-server/configuration-file/
# # - PD: https://pingcap.com/docs/stable/reference/configuration/pd-server/configuration-file/
# # - TiFlash: https://docs.pingcap.com/tidb/stable/tiflash-configuration
# #
# # All configuration items use points to represent the hierarchy, e.g:
# #   readpool.storage.use-unified-pool
# #           ^       ^
# # - example: https://github.com/pingcap/tiup/blob/master/examples/topology.example.yaml.
# # You can overwrite this configuration via the instance-level `config` field.
# server_configs:
  # tidb:
  # tikv:
  # pd:
  # tiflash:
  # tiflash-learner:

# # Server configs are used to specify the configuration of PD Servers.
pd_servers:
  # # The ip address of the PD Server.
  - host: 192.168.31.28
  - host: 192.168.31.196
  - host: 192.168.31.112

# # Server configs are used to specify the configuration of TiDB Servers.
tidb_servers:
  # # The ip address of the TiDB Server.
  - host: 192.168.31.28
  - host: 192.168.31.196
  - host: 192.168.31.112
    # # SSH port of the server.
    # ssh_port: 22
    # # The port for clients to access the TiDB cluster.
    # port: 4000
    # # TiDB Server status API port.
    # status_port: 10080
    # # TiDB Server deployment file, startup script, configuration file storage directory.
    # deploy_dir: "/tidb-deploy/tidb-4000"
    # # TiDB Server log file storage directory.
    # log_dir: "/tidb-deploy/tidb-4000/log"
  # # The ip address of the TiDB Server.

# # Server configs are used to specify the configuration of TiKV Servers.
tikv_servers:
  # # The ip address of the TiKV Server.
  - host: 192.168.31.28
    deploy_dir: "/tidb-deploy/tikv-20160"
    data_dir: "/tidb-data/tikv-20160"
  - host: 192.168.31.196
    deploy_dir: "/tidb-deploy/tikv-20160"
    data_dir: "/tidb-data/tikv-20160"
  - host: 192.168.31.112
    deploy_dir: "/tidb-deploy/tikv-20160"
    data_dir: "/tidb-data/tikv-20160"
    # # SSH port of the server.
    # ssh_port: 22
    # # TiKV Server communication port.
    # port: 20160
    # # TiKV Server status API port.
    # status_port: 20180
    # # TiKV Server deployment file, startup script, configuration file storage directory.
    # deploy_dir: "/tidb-deploy/tikv-20160"
    # # TiKV Server data storage directory.
    # data_dir: "/tidb-data/tikv-20160"
    # # TiKV Server log file storage directory.
    # log_dir: "/tidb-deploy/tikv-20160/log"
    # # The following configs are used to overwrite the `server_configs.tikv` values.
    # config:
    #   log.level: warn
  # # The ip address of the TiKV Server.

# # Server configs are used to specify the configuration of TiFlash Servers.
tiflash_servers:
  # # The ip address of the TiFlash Server.
  - host: 192.168.31.28
    data_dir: /tidb-data/tiflash-9000
    log_dir: /tidb-deploy/tiflash-9000/log
  - host: 192.168.31.196
    data_dir: /tidb-data/tiflash-9000
    log_dir: /tidb-deploy/tiflash-9000/log
    # # SSH port of the server.
    # ssh_port: 22
    # # TiFlash TCP Service port.
    # tcp_port: 9000
    # # TiFlash HTTP Service port.
    # http_port: 8123
    # # TiFlash raft service and coprocessor service listening address.
    # flash_service_port: 3930
    # # TiFlash Proxy service port.
    # flash_proxy_port: 20170
    # # TiFlash Proxy metrics port.
    # flash_proxy_status_port: 20292
    # # TiFlash metrics port.
    # metrics_port: 8234
    # # TiFlash Server deployment file, startup script, configuration file storage directory.
    # deploy_dir: /tidb-deploy/tiflash-9000
    ## With cluster version >= v4.0.9 and you want to deploy a multi-disk TiFlash node, it is recommended to
    ## check config.storage.* for details. The data_dir will be ignored if you defined those configurations.
    ## Setting data_dir to a ','-joined string is still supported but deprecated.
    ## Check https://docs.pingcap.com/tidb/stable/tiflash-configuration#multi-disk-deployment for more details.
    # # TiFlash Server data storage directory.
    # data_dir: /tidb-data/tiflash-9000
    # # TiFlash Server log file storage directory.
    # log_dir: /tidb-deploy/tiflash-9000/log
  # # The ip address of the TiKV Server.

# # Server configs are used to specify the configuration of Prometheus Server.
monitoring_servers:
  # # The ip address of the Monitoring Server.
  - host: 192.168.31.28
    # # SSH port of the server.
    # ssh_port: 22
    # # Prometheus Service communication port.
    # port: 9090
    # # ng-monitoring service communication port
    # ng_port: 12020
    # # Prometheus deployment file, startup script, configuration file storage directory.
    # deploy_dir: "/tidb-deploy/prometheus-8249"
    # # Prometheus data storage directory.
    # data_dir: "/tidb-data/prometheus-8249"
    # # Prometheus log file storage directory.
    # log_dir: "/tidb-deploy/prometheus-8249/log"

# # Server configs are used to specify the configuration of Grafana Servers.
grafana_servers:
  # # The ip address of the Grafana Server.
  - host: 192.168.31.28
    # # Grafana web port (browser access)
    # port: 3000
    # # Grafana deployment file, startup script, configuration file storage directory.
    # deploy_dir: /tidb-deploy/grafana-3000

# # Server configs are used to specify the configuration of Alertmanager Servers.
alertmanager_servers:
  # # The ip address of the Alertmanager Server.
  - host: 192.168.31.28
    # # SSH port of the server.
    # ssh_port: 22
    # # Alertmanager web service port.
    # web_port: 9093
    # # Alertmanager communication port.
    # cluster_port: 9094
    # # Alertmanager deployment file, startup script, configuration file storage directory.
    # deploy_dir: "/tidb-deploy/alertmanager-9093"
    # # Alertmanager data storage directory.
    # data_dir: "/tidb-data/alertmanager-9093"
    # # Alertmanager log file storage directory.
    # log_dir: "/tidb-deploy/alertmanager-9093/log"
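Before deploying, it can be useful to confirm which machines the topology file actually references. The sketch below is a rough text filter rather than a YAML parser. `list_hosts` is a hypothetical helper, and it assumes every active host entry is written on its own line in the form `- host: <address>`.

```shell
#!/bin/sh
# list_hosts FILE: print the unique addresses found on active "- host:" lines.
# Commented-out entries (lines starting with '#') are skipped.
# (hypothetical helper; a plain text filter, not a YAML parser)
list_hosts() {
    grep -E '^[[:space:]]*-[[:space:]]*host:' "$1" \
        | sed -E 's/^[[:space:]]*-[[:space:]]*host:[[:space:]]*//; s/[[:space:]]+$//' \
        | LC_ALL=C sort -u
}

# Only run against the file if it exists in the current directory.
if [ -f topology.yaml ]; then
    list_hosts topology.yaml
fi
```

For the topology in this article the list should contain the three addresses from the table at the top; any other address would point to a typo in the file.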

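III. Run the deployment commands

- Check the cluster for potential risks:

tiup cluster check ./topology.yaml --user root [-p] [-i /home/root/.ssh/gcp_rsa]

- Automatically fix potential risks in the cluster:

tiup cluster check ./topology.yaml --apply --user root [-p] [-i /home/root/.ssh/gcp_rsa]

- Deploy the TiDB cluster:

tiup cluster deploy tidb-test v5.4.0 ./topology.yaml --user root [-p] [-i /home/root/.ssh/gc

If the log ends with Deployed cluster `tidb-test` successfully, the deployment succeeded.

In the deployment example above:

- tidb-test is the name of the cluster being deployed.
- v5.4.0 is the cluster version. Run tiup list tidb to see the latest versions that TiUP supports.
- The initialization configuration file is topology.yaml.
- --user root means logging in to the target hosts as root to complete the deployment. This user needs SSH access to the target machines and sudo privileges on them. Any other user with SSH and sudo privileges can be used instead.
- [-i] and [-p] are optional. If passwordless login to the target machines is already configured, neither is needed. Otherwise choose one of them: [-i] takes the private key of the root user (or of the user given by --user) that can log in to the target machines, while [-p] prompts for that user's password interactively.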
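The choice between [-i] and [-p] described above can be sketched as a small wrapper that prints the deploy command for review instead of running it. `deploy_cmd` is a hypothetical helper, and the key path `$HOME/.ssh/id_rsa` is only an assumed example location.

```shell
#!/bin/sh
# deploy_cmd CLUSTER VERSION TOPOLOGY USER [KEY_FILE]
# Prints a `tiup cluster deploy` command line. Uses -i KEY_FILE when a key is
# given, otherwise -p so that tiup prompts for the password.
# (hypothetical helper; it only prints the command, it does not run tiup)
deploy_cmd() {
    cluster=$1
    version=$2
    topology=$3
    user=$4
    key=${5:-}
    if [ -n "$key" ]; then
        auth="-i $key"
    else
        auth="-p"
    fi
    echo "tiup cluster deploy $cluster $version $topology --user $user $auth"
}

# Password login:
deploy_cmd tidb-test v5.4.0 ./topology.yaml root
# Key login (the key path is an assumed example):
deploy_cmd tidb-test v5.4.0 ./topology.yaml root "$HOME/.ssh/id_rsa"
```

When passwordless SSH is already configured, neither flag is needed and the command can be run without them.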

IV. View the clusters managed by TiUP

tiup cluster list

V. Start the cluster

Method 1: secure start

tiup cluster start tidb-test --init

The following output indicates a successful start.

Started cluster `tidb-test` successfully.

The root password of TiDB database has been changed.

The new password is: 'y_+3Hwp=*AWz8971s6'.

Copy and record it to somewhere safe, it is only displayed once, and will not be stored.

The generated password can NOT be got again in future.

Method 2: normal start

tiup cluster start tidb-test

The output Started cluster `tidb-test` successfully indicates a successful start. After a normal start, you can log in to the database as the root user without a password.
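Because the password generated by the secure start (method 1) is displayed only once, it may help to pull it out of the output as soon as it appears. The sketch below assumes the output line has exactly the form shown above, `The new password is: '...'.`. `extract_root_password` is a hypothetical helper.

```shell
#!/bin/sh
# extract_root_password: read `tiup cluster start --init` output on stdin and
# print only the generated password.
# Assumes the line format "The new password is: '<password>'." shown above.
# (hypothetical helper, not part of TiUP)
extract_root_password() {
    sed -n "s/^The new password is: '\(.*\)'\.\$/\1/p"
}

# Possible usage (requires a deployed cluster, so it is left commented out):
#   tiup cluster start tidb-test --init 2>&1 | extract_root_password
```

Note that piping hides the rest of the start log, so this is best used on saved output or together with `tee` if the full log is still wanted.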

Verify the cluster status

tiup cluster display tidb-test

Expected output: a Status of Up for every node means the cluster is healthy.
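Scanning the Status column by eye gets tedious with many nodes. The sketch below filters for rows that are not Up. It rests on an assumption about the display layout: that node rows start with an `ip:port` ID and that Status is the sixth whitespace-separated column (after ID, Role, Host, Ports, OS/Arch). `nodes_not_up` is a hypothetical helper, so check the column position against your own output first.

```shell
#!/bin/sh
# nodes_not_up: read `tiup cluster display` output on stdin and print
# "ID STATUS" for every node row whose status does not start with "Up".
# Assumed layout: ID Role Host Ports OS/Arch Status ... with ID as ip:port.
# (hypothetical helper, not part of TiUP)
nodes_not_up() {
    awk '$1 ~ /^[0-9.]+:[0-9]+$/ && $6 !~ /^Up/ { print $1, $6 }'
}

# Possible usage (requires a deployed cluster, so it is left commented out):
#   tiup cluster display tidb-test | nodes_not_up
```

Empty output means every node row reported a status beginning with Up, which also covers variants such as a PD leader marker appended to Up.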

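VI. Problems encountered

The check in step III reports potential risks in the cluster. If any risk remains unresolved, the deployment fails.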
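To see only the items that need fixing, the check output can be filtered. The sketch below assumes the check prints one row per item with the columns Node, Check, Result and Message, where Result is a word such as Pass, Warn or Fail. `failed_checks` is a hypothetical helper, and the sample rows used to describe it are illustrative, not captured output.

```shell
#!/bin/sh
# failed_checks: read `tiup cluster check` output on stdin and print only the
# rows whose third column is "Fail".
# Assumed layout: Node Check Result Message.
# (hypothetical helper, not part of TiUP)
failed_checks() {
    awk '$3 == "Fail" { print }'
}

# Possible usage (requires reachable target hosts, so it is left commented out):
#   tiup cluster check ./topology.yaml --user root | failed_checks
```

Each remaining row then names the host and the check to fix, such as the items covered in the numbered list that follows.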

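1. Disable Transparent Huge Pages (THP)

Run the following command to check whether THP is enabled.

cat /sys/kernel/mm/transparent_hugepage/enabled

[always] madvise never means THP is enabled and needs to be disabled.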
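The active THP mode is the word inside the square brackets. A minimal sketch for extracting it, so the state can be tested in a script, is shown below. `thp_mode` is a hypothetical helper name.

```shell
#!/bin/sh
# thp_mode: read the contents of the THP "enabled" file on stdin and print the
# active mode, which is the word enclosed in square brackets.
# (hypothetical helper for illustration)
thp_mode() {
    sed -n 's/.*\[\([a-z]*\)\].*/\1/p'
}

THP_FILE=/sys/kernel/mm/transparent_hugepage/enabled
# Only read the file when it exists on this machine.
if [ -r "$THP_FILE" ]; then
    echo "current THP mode: $(thp_mode < "$THP_FILE")"
fi
```

A printed mode of `never` means THP is already off. Any other value means it still needs to be disabled.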

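Disable THP:

echo never > /sys/kernel/mm/transparent_hugepage/enabled

echo never > /sys/kernel/mm/transparent_hugepage/defrag

2. Check and disable system swap

echo "vm.swappiness = 0">> /etc/sysctl.conf

swapoff -a

sysctl -p

3. Install numactl

Install the numactl tool. In production, machine hardware often exceeds what a single instance needs, so to plan resources more sensibly you may deploy multiple TiDB or TiKV instances on one machine. Binding instances to cores with the NUMA tool mainly prevents contention for CPU resources, which would otherwise degrade performance.

yum -y install numactl

4. Disable the firewall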

#centos

systemctl status firewalld.service

systemctl stop firewalld

systemctl disable firewalld

#ubuntu

sudo ufw status

sudo ufw disable

#to turn the firewall back on later

sudo ufw enable

VII. Common commands

#stop

tiup cluster stop tidb-test

#clean up data

tiup cluster clean tidb-test --all

#uninstall

tiup cluster destroy tidb-test

tiup cluster list

tiup cluster start tidb-test --init

tiup cluster display tidb-test

#create a user

create user test identified by '123456';

#change the password

alter user test identified by '123789';

#create a database

create database test1 char set utf8;

#grant privileges

grant all privileges on test1.* to test identified by '123456';

#revoke privileges

revoke all on test1.* from 'test';

#show grants

show grants for 'test';

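#grant privileges (a bare name after ON is parsed as a table in the current database, so this targets a table named root)

GRANT ALL PRIVILEGES ON root TO 'test'@'%';

flush privileges;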
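The SQL statements above are run from a MySQL-compatible client connected to one of the TiDB servers. The sketch below prints such a connection command. It assumes the `mysql` command-line client is installed and that TiDB listens on port 4000, the default shown in the topology template. `tidb_client_cmd` is a hypothetical helper.

```shell
#!/bin/sh
# tidb_client_cmd HOST [PORT] [USER]: print a mysql client command line for
# connecting to a TiDB server.
# (hypothetical helper; it only prints the command, it does not connect)
tidb_client_cmd() {
    host=$1
    port=${2:-4000}   # 4000 is the default client port in the topology template
    user=${3:-root}
    echo "mysql -h $host -P $port -u $user -p"
}

tidb_client_cmd 192.168.31.28
```

After a normal start the root password is empty, so pressing Enter at the password prompt is enough. After a secure start, use the generated password.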