Installing Kubernetes 1.22 with kubeadm

Lab environment

All nodes run CentOS 7.

| IP address | Hostname |
|---|---|
| 192.168.0.2 | k8s-master01 |
| 192.168.0.3 | k8s-node01 |
| 192.168.0.4 | k8s-node02 |

Add the hostname mappings on all nodes

cat << EOF >> /etc/hosts

192.168.0.2 k8s-master01

192.168.0.3 k8s-node01

192.168.0.4 k8s-node02

EOF
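Appending to /etc/hosts is not idempotent: running the step a second time duplicates the entries. The following is a minimal sketch of a guarded variant. The helper name `add_host_entry` and its optional file argument are illustrative assumptions, not part of the original procedure.

```shell
# add_host_entry IP NAME [FILE]
# Hypothetical helper: appends "IP NAME" to FILE (default /etc/hosts)
# only when NAME is not already mapped on an uncommented line.
add_host_entry() {
    ip=$1
    name=$2
    file=${3:-/etc/hosts}
    # Look for NAME as a whole field after the address column.
    if awk -v n="$name" '
        !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }' "$file" 2>/dev/null; then
        return 0
    fi
    printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Example (run as root on every node):
# add_host_entry 192.168.0.2 k8s-master01
# add_host_entry 192.168.0.3 k8s-node01
# add_host_entry 192.168.0.4 k8s-node02
```

Calling the helper repeatedly with the same hostname leaves the file unchanged after the first call.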

Configure the Aliyun mirror repositories on all nodes

curl -o /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-7.repo # configure the CentOS 7 base repo

yum install -y yum-utils device-mapper-persistent-data lvm2 # install dependencies needed by later steps

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

sed -i 's#http://#https://#g' /etc/yum.repos.d/CentOS-Base.repo

cat << EOF > /etc/yum.repos.d/kubernetes.repo # configure the Aliyun Kubernetes repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

sed -i -e '/mirrors.cloud.aliyuncs.com/d' -e '/mirrors.aliyuncs.com/d' /etc/yum.repos.d/CentOS-Base.repo

Install the required tools on all nodes

yum install wget jq psmisc vim net-tools telnet yum-utils device-mapper-persistent-data lvm2 git -y

Disable the firewall on all nodes. On cloud servers, remember to open the required ports in the security group.

systemctl disable --now firewalld

systemctl disable --now dnsmasq

systemctl disable --now NetworkManager

setenforce 0

sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/sysconfig/selinux

sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/selinux/config

Disable swap

swapoff -a && sysctl -w vm.swappiness=0

sed -ri '/^[^#]*swap/s@^@#@' /etc/fstab
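By default the kubelet refuses to start while swap is active, so it can be worth confirming that both the runtime state and /etc/fstab are clean. The sketch below is one way to check. The function names and their optional file arguments are assumptions made for illustration.

```shell
# swap_is_off [FILE]
# Succeeds when FILE (default /proc/swaps) lists no active swap area.
# /proc/swaps always starts with a header line; any further line is active swap.
swap_is_off() {
    awk 'NR > 1 && NF > 0 { n++ } END { exit (n > 0) }' "${1:-/proc/swaps}"
}

# fstab_swap_entries [FILE]
# Prints uncommented lines of FILE (default /etc/fstab) whose
# filesystem type (third field) is swap.
fstab_swap_entries() {
    awk '!/^[[:space:]]*#/ && $3 == "swap"' "${1:-/etc/fstab}"
}

# Example:
# swap_is_off && echo "swap is off" || echo "swap is still active"
# fstab_swap_entries   # should print nothing after the sed command above
```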

Configure resource limits on all nodes

ulimit -SHn 65535

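-a Show all current resource limits.

-c Maximum size of core files, in blocks.

-d <data segment size> Maximum size of a process's data segment, in KB.

-f <file size> Maximum size of files the shell can create, in KB.

-H Set the hard limit for a resource, that is, the ceiling set by the administrator.

-m <memory size> Upper limit on usable memory, in KB.

-n <file count> Maximum number of files that can be open at the same time.

-p <buffer size> Size of the pipe buffer, in 512-byte units.

-s <stack size> Upper limit on the stack size, in KB.

-S Set the soft limit for a resource.

-t Upper limit on CPU time, in seconds.

-u <process count> Maximum number of processes a user can start.

vim /etc/security/limits.conf

# Append the following to the end of the file to make the limits permanent (the heredoc below does the same without an editor)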

cat << EOF >> /etc/security/limits.conf

* soft nofile 65536

* hard nofile 131072

* soft nproc 65535

* hard nproc 655350

* soft memlock unlimited

* hard memlock unlimited

EOF

Set up passwordless SSH for convenience

ssh-keygen -t rsa

for i in k8s-master01 k8s-node01 k8s-node02; do ssh-copy-id -i ~/.ssh/id_rsa.pub "$i"; done

Upgrade the kernel

cd /root

wget http://193.49.22.109/elrepo/kernel/el7/x86_64/RPMS/kernel-ml-devel-4.19.12-1.el7.elrepo.x86_64.rpm

wget http://193.49.22.109/elrepo/kernel/el7/x86_64/RPMS/kernel-ml-4.19.12-1.el7.elrepo.x86_64.rpm

yum update -y --exclude=kernel* && reboot

cd /root && yum localinstall -y kernel-ml*

Change the default boot kernel on all nodes

grub2-set-default 0 && grub2-mkconfig -o /etc/grub2.cfg

grubby --args="user_namespace.enable=1" --update-kernel="$(grubby --default-kernel)"

reboot

[root@k8s-master01 ~]# uname -a # check the running kernel

Linux k8s-master02 4.19.12-1.el7.elrepo.x86_64 #1 SMP Fri Dec 21 11:06:36 EST 2018 x86_64 x86_64 x86_64 GNU/Linux

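Download and install the required packages

Install ipvsadm on all nodes (ipvsadm is the command-line tool for managing LVS/IPVS)

yum install ipvsadm ipset sysstat conntrack libseccomp -y

Configure the IPVS modules on all nodes. In kernel 4.19 and later, nf_conntrack_ipv4 has been renamed to nf_conntrack; on 4.18 and earlier, use nf_conntrack_ipv4.

# Configure the kernel modules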

cat << EOF >> /etc/modules-load.d/ipvs.conf

# Add the following modules

ip_vs

ip_vs_lc

ip_vs_wlc

ip_vs_rr

ip_vs_wrr

ip_vs_lblc

ip_vs_lblcr

ip_vs_dh

ip_vs_sh

ip_vs_fo

ip_vs_nq

ip_vs_sed

ip_vs_ftp

nf_conntrack

ip_tables

ip_set

xt_set

ipt_set

ipt_rpfilter

ipt_REJECT

ipip

EOF

Then run systemctl enable --now systemd-modules-load.service so that the modules are loaded at boot.

Enable the kernel parameters that a Kubernetes cluster requires. Configure them on all nodes:

cat << EOF > /etc/sysctl.d/k8s.conf
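Since the conntrack module name depends on the kernel version, the choice can be derived from the kernel release string rather than made by hand. The following is a rough sketch. The function name `conntrack_module` is hypothetical, and the 4.19 threshold is taken from the note above.

```shell
# conntrack_module [KERNEL_RELEASE]
# Prints the conntrack module name for a release string such as
# "4.19.12-1.el7.elrepo.x86_64" (default: the output of `uname -r`).
conntrack_module() {
    release=${1:-$(uname -r)}
    major=${release%%.*}        # text before the first dot
    rest=${release#*.}          # text after the first dot
    minor=${rest%%[!0-9]*}      # leading digits of the remainder
    if [ "$major" -gt 4 ] || { [ "$major" -eq 4 ] && [ "$minor" -ge 19 ]; }; then
        echo nf_conntrack
    else
        echo nf_conntrack_ipv4
    fi
}

# Example: confirm that the modules are actually loaded after the service starts.
# lsmod | grep -e ip_vs -e "$(conntrack_module)"
```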

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

fs.may_detach_mounts = 1

net.ipv4.conf.all.route_localnet = 1

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.netfilter.nf_conntrack_max=2310720

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl =15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16384

net.ipv4.ip_conntrack_max = 65536

net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16384

EOF

sysctl --system
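Some of these keys only exist when the matching kernel module is loaded. In particular, the `net.bridge.*` keys appear only after `br_netfilter` is loaded, and that module is not in the module list above. The sketch below lists the keys from a sysctl file that the running kernel does not expose. The helper name `missing_sysctl_keys` and its optional root argument are assumptions for illustration.

```shell
# missing_sysctl_keys CONF [PROC_ROOT]
# Prints every key in CONF that has no matching file under PROC_ROOT
# (default /proc/sys). A key such as net.ipv4.ip_forward maps to
# PROC_ROOT/net/ipv4/ip_forward.
missing_sysctl_keys() {
    conf=$1
    root=${2:-/proc/sys}
    # Drop comments and blank lines, keep the text before "=", strip spaces.
    sed -e 's/[#;].*//' -e '/^[[:space:]]*$/d' -e 's/=.*//' -e 's/[[:space:]]//g' "$conf" |
    while IFS= read -r key; do
        path=$root/$(printf '%s' "$key" | tr . /)
        [ -e "$path" ] || printf '%s\n' "$key"
    done
}

# Example:
# missing_sysctl_keys /etc/sysctl.d/k8s.conf
# modprobe br_netfilter && sysctl --system   # if net.bridge.* keys are reported
```

Any key that is still reported after the modules are loaded is probably not supported by the running kernel.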

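Install Docker and the other components:

yum install docker-ce-19.03.* docker-ce-cli-19.03.* -y

# Newer kubelet versions recommend the systemd cgroup driver, so switch Docker's CgroupDriver to systemd

# "live-restore": true keeps running containers alive while the Docker daemon restarts

mkdir -p /etc/docker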

cat > /etc/docker/daemon.json <<EOF

{

"registry-mirrors": [

"https://registry.docker-cn.com",

"http://hub-mirror.c.163.com",

"https://docker.mirrors.ustc.edu.cn"

],

"exec-opts": ["native.cgroupdriver=systemd"],

"max-concurrent-downloads": 10,

"max-concurrent-uploads": 5,

"log-opts": {

"max-size": "300m",

"max-file": "2"

},

"live-restore": true

}

EOF

# Enable Docker at boot on all nodes:

systemctl daemon-reload && systemctl enable --now docker
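The kubelet and Docker need to agree on the cgroup driver, so it helps to confirm what daemon.json actually configures. The following is a small sketch. The function name `configured_cgroup_driver` is hypothetical, and it reads the setting with a simple text match rather than a JSON parser.

```shell
# configured_cgroup_driver [FILE]
# Prints the value of native.cgroupdriver from a Docker daemon.json
# (default /etc/docker/daemon.json); prints nothing if it is not set.
configured_cgroup_driver() {
    file=${1:-/etc/docker/daemon.json}
    sed -n 's/.*native\.cgroupdriver=\([a-z]*\).*/\1/p' "$file"
}

# Example: compare the file with what the running daemon reports.
# configured_cgroup_driver
# docker info --format '{{.CgroupDriver}}'
```

Both commands should print `systemd` once Docker has been restarted with the configuration above.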

Install kubeadm, kubelet, and kubectl

yum install -y --nogpgcheck kubeadm-1.22* kubelet-1.22* kubectl-1.22*

# Use the Aliyun mirror for the pause image

cat >/etc/sysconfig/kubelet<

Once the components are installed, initialize the cluster

Run on k8s-master01

kubeadm init --apiserver-advertise-address=192.168.0.2 --pod-network-cidr=172.16.0.0/12 --image-repository=registry.aliyuncs.com/google_containers

# See the official documentation

https://kubernetes.io/zh/docs/setup/production-environment/tools/kubeadm/create-cluster-kubeadm/
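After `kubeadm init` succeeds, kubectl needs a kubeconfig before the later commands will work. The sketch below wraps the usual copy step in a function. The function name `setup_kubeconfig` and the `chmod 600` line are additions for illustration, while the default paths follow what kubeadm prints at the end of initialization.

```shell
# setup_kubeconfig [SRC] [DEST]
# Copies the admin kubeconfig written by kubeadm init to the current
# user's kubeconfig path and makes it readable by that user only.
setup_kubeconfig() {
    src=${1:-/etc/kubernetes/admin.conf}
    dest=${2:-$HOME/.kube/config}
    mkdir -p "$(dirname "$dest")"
    cp "$src" "$dest"
    chown "$(id -u):$(id -g)" "$dest"
    chmod 600 "$dest"   # assumption: tighten permissions; not part of kubeadm's printed steps
}

# Example (on k8s-master01, as the user who will run kubectl):
# setup_kubeconfig
# kubeadm token create --print-join-command   # prints the join command to run on each worker
```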

# Enable persistent kubectl command completion

yum install bash-completion -y

source /usr/share/bash-completion/bash_completion

source <(kubectl completion bash)

echo "source <(kubectl completion bash)" >> ~/.bashrc

Install the Kubernetes network plugin

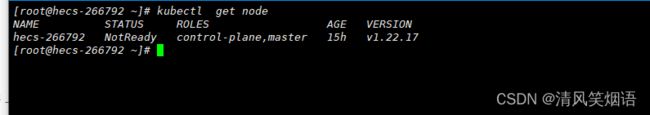

Check whether a network plugin is already installed

Install the network plugin

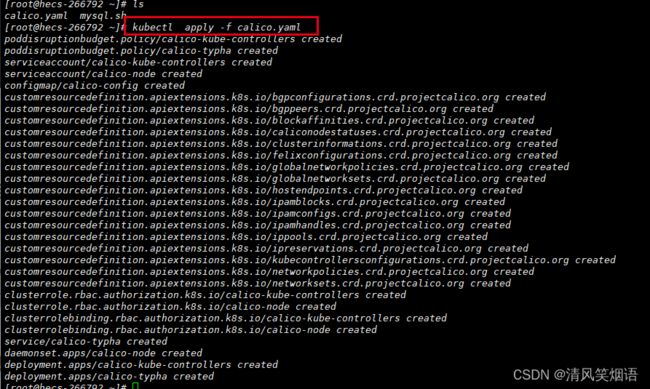

curl https://raw.githubusercontent.com/projectcalico/calico/v3.25.0/manifests/calico-typha.yaml -o calico.yaml # Note: this is the upstream GitHub URL, so the download may fail on restricted networks

kubectl apply -f calico.yaml # apply the manifest

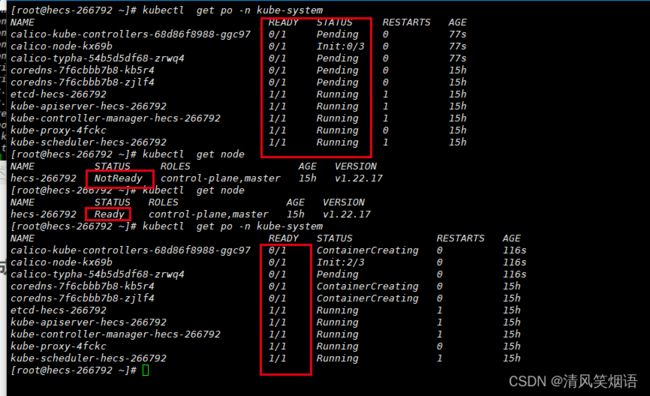

Verify the installation

[root@hecs-266792 ~]# kubectl get po -n kube-system

NAME READY STATUS RESTARTS AGE

calico-kube-controllers-68d86f8988-ggc97 0/1 Pending 0 77s

calico-node-kx69b 0/1 Init:0/3 0 77s

calico-typha-54b5d5df68-zrwq4 0/1 Pending 0 77s

coredns-7f6cbbb7b8-kb5r4 0/1 Pending 0 15h

coredns-7f6cbbb7b8-zjlf4 0/1 Pending 0 15h

etcd-hecs-266792 1/1 Running 1 15h

kube-apiserver-hecs-266792 1/1 Running 1 15h

kube-controller-manager-hecs-266792 1/1 Running 1 15h

kube-proxy-4fckc 1/1 Running 0 15h

kube-scheduler-hecs-266792 1/1 Running 1 15h

[root@hecs-266792 ~]# kubectl get node

NAME STATUS ROLES AGE VERSION

hecs-266792 NotReady control-plane,master 15h v1.22.17

[root@hecs-266792 ~]# kubectl get node

NAME STATUS ROLES AGE VERSION

hecs-266792 Ready control-plane,master 15h v1.22.17

[root@hecs-266792 ~]# kubectl get po -n kube-system

NAME READY STATUS RESTARTS AGE

calico-kube-controllers-68d86f8988-ggc97 0/1 ContainerCreating 0 116s

calico-node-kx69b 0/1 Init:2/3 0 116s

calico-typha-54b5d5df68-zrwq4 0/1 Pending 0 116s

coredns-7f6cbbb7b8-kb5r4 0/1 ContainerCreating 0 15h

coredns-7f6cbbb7b8-zjlf4 0/1 ContainerCreating 0 15h

etcd-hecs-266792 1/1 Running 1 15h

kube-apiserver-hecs-266792 1/1 Running 1 15h

kube-controller-manager-hecs-266792 1/1 Running 1 15h

kube-proxy-4fckc 1/1 Running 0 15h

kube-scheduler-hecs-266792 1/1 Running 1 15h

[root@hecs-266792 ~]#
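Scanning the pod list by eye gets tedious while the Calico pods start up. The sketch below filters `kubectl get po` output down to the pods that are not fully ready. The function name `not_ready_pods` is hypothetical, and it treats any pod whose READY column shows fewer ready containers than total as not ready, which also flags completed jobs.

```shell
# not_ready_pods
# Reads `kubectl get po` output on stdin and prints the names of pods
# whose READY column (ready/total) shows fewer ready containers than total.
not_ready_pods() {
    awk 'NR > 1 && NF >= 3 { split($2, r, "/"); if (r[1] != r[2]) print $1 }'
}

# Example:
# kubectl get po -n kube-system | not_ready_pods
```

An empty result means every listed pod reports all of its containers as ready.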