Writing Logs to Disk and to a Database with @Slf4j

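Contents

- 1. Background
- 2. Saving to Local Disk
  - 2.1. Configuration File
  - 2.2. Usage
- 3. Saving to the Database
  - 3.1. Modifying the Configuration File
  - 3.2. Writing the Filter
  - 3.3. Preparing the Table
  - 3.4. Adding the Dependency
  - 3.5. Testing
- 4. Optimization
  - 4.1. Periodic Log Deletion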

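1. Background

I have a Spring Boot project in which I want to record logs. They fall roughly into the following categories:

- User operation logs: record which user called which endpoint at what time and what they did, which amounts to monitoring every action users take in the system.

- Login/logout logs: record every user login and logout in the database, including details such as the time, IP address, and IP geolocation. This is straightforward, so I won't go into it here.

- Development/debugging logs: the familiar log.info("xxxxx") calls that write diagnostic information to disk, so that when a bug shows up in production we have more information to help locate the problem quickly.

Today we will focus on development/debugging logs.

2. Saving to Local Disk

Let's first look at how to save development/debugging logs to the local disk.

2.1. Configuration File

Create a file named logback-spring.xml under the resources directory of the Spring Boot project, with the following content: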

<configuration scan="true" scanPeriod="60 seconds" debug="false">
    <jmxConfigurator />
    <property name="charset" value="UTF-8"/>
    <property name="log_dir" value="C:/LOGS/EasyJavaSE" />
    <property name="maxHistory" value="30" />
    <property name="maxFileSize" value="5MB" />
    <property name="FORMAT" value="[%X{TRACE_ID}] - %-5level %d{yyyy-MM-dd HH:mm:ss.SSS} [%thread] %c.%M:%L:%msg%n"/>

    <appender name="console" class="ch.qos.logback.core.ConsoleAppender">
        <encoder>
            <pattern>${FORMAT}</pattern>
        </encoder>
    </appender>

    <appender name="ERROR" class="ch.qos.logback.core.rolling.RollingFileAppender">
        <filter class="ch.qos.logback.classic.filter.LevelFilter">
            <level>ERROR</level>
            <onMatch>ACCEPT</onMatch>
            <onMismatch>DENY</onMismatch>
        </filter>
        <rollingPolicy class="ch.qos.logback.core.rolling.TimeBasedRollingPolicy">
            <fileNamePattern>${log_dir}/error/%d{yyyy-MM-dd}/error-%i.log</fileNamePattern>
            <maxHistory>${maxHistory}</maxHistory>
            <timeBasedFileNamingAndTriggeringPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedFNATP">
                <maxFileSize>${maxFileSize}</maxFileSize>
            </timeBasedFileNamingAndTriggeringPolicy>
        </rollingPolicy>
        <encoder>
            <pattern>${FORMAT}</pattern>
        </encoder>
    </appender>

    <appender name="WARN" class="ch.qos.logback.core.rolling.RollingFileAppender">
        <filter class="ch.qos.logback.classic.filter.LevelFilter">
            <level>WARN</level>
            <onMatch>ACCEPT</onMatch>
            <onMismatch>DENY</onMismatch>
        </filter>
        <rollingPolicy class="ch.qos.logback.core.rolling.TimeBasedRollingPolicy">
            <fileNamePattern>${log_dir}/warn/%d{yyyy-MM-dd}/warn-%i.log</fileNamePattern>
            <maxHistory>${maxHistory}</maxHistory>
            <timeBasedFileNamingAndTriggeringPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedFNATP">
                <maxFileSize>${maxFileSize}</maxFileSize>
            </timeBasedFileNamingAndTriggeringPolicy>
        </rollingPolicy>
        <encoder>
            <pattern>${FORMAT}</pattern>
        </encoder>
    </appender>

    <appender name="INFO" class="ch.qos.logback.core.rolling.RollingFileAppender">
        <filter class="ch.qos.logback.classic.filter.LevelFilter">
            <level>INFO</level>
            <onMatch>ACCEPT</onMatch>
            <onMismatch>DENY</onMismatch>
        </filter>
        <rollingPolicy class="ch.qos.logback.core.rolling.TimeBasedRollingPolicy">
            <fileNamePattern>${log_dir}/info/%d{yyyy-MM-dd}/info-%i.log</fileNamePattern>
            <maxHistory>${maxHistory}</maxHistory>
            <timeBasedFileNamingAndTriggeringPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedFNATP">
                <maxFileSize>${maxFileSize}</maxFileSize>
            </timeBasedFileNamingAndTriggeringPolicy>
        </rollingPolicy>
        <encoder>
            <pattern>${FORMAT}</pattern>
        </encoder>
    </appender>

    <appender name="DEBUG" class="ch.qos.logback.core.rolling.RollingFileAppender">
        <filter class="ch.qos.logback.classic.filter.LevelFilter">
            <level>DEBUG</level>
            <onMatch>ACCEPT</onMatch>
            <onMismatch>DENY</onMismatch>
        </filter>
        <rollingPolicy class="ch.qos.logback.core.rolling.TimeBasedRollingPolicy">
            <fileNamePattern>${log_dir}/debug/%d{yyyy-MM-dd}/debug-%i.log</fileNamePattern>
            <maxHistory>${maxHistory}</maxHistory>
            <timeBasedFileNamingAndTriggeringPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedFNATP">
                <maxFileSize>${maxFileSize}</maxFileSize>
            </timeBasedFileNamingAndTriggeringPolicy>
        </rollingPolicy>
        <encoder>
            <pattern>${FORMAT}</pattern>
        </encoder>
    </appender>

    <root>
        <level value="info" />
        <appender-ref ref="console" />
        <appender-ref ref="ERROR" />
        <appender-ref ref="INFO" />
        <appender-ref ref="WARN" />
        <appender-ref ref="DEBUG" />
    </root>
</configuration>
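The FORMAT pattern prints %X{TRACE_ID}, a value that logback looks up in the MDC (mapped diagnostic context), but nothing shown in this article populates it. In a real project this is usually done by calling org.slf4j.MDC.put("TRACE_ID", id) when a request starts and MDC.remove("TRACE_ID") when it ends. The sketch below imitates that lifecycle with a plain ThreadLocal so it runs without any dependency; the class TraceContext and its methods are hypothetical and only illustrate the idea.

```java
import java.util.UUID;
import java.util.function.Supplier;

// Hypothetical stand-in for org.slf4j.MDC, limited to a single TRACE_ID key.
// In the real project, replace set/get/remove with MDC.put / MDC.get / MDC.remove.
final class TraceContext {
    private static final ThreadLocal<String> TRACE_ID = new ThreadLocal<>();

    private TraceContext() {}

    static String get() {
        return TRACE_ID.get();
    }

    // Runs one unit of work (for example one HTTP request) with a fresh trace ID
    // and always clears it afterwards, so pooled threads do not leak old IDs.
    static <T> T withNewTraceId(Supplier<T> work) {
        TRACE_ID.set(UUID.randomUUID().toString().replace("-", ""));
        try {
            return work.get();
        } finally {
            TRACE_ID.remove();
        }
    }

    // Mirrors the "[%X{TRACE_ID}] - " prefix of the FORMAT pattern;
    // logback prints an empty string when the key is absent.
    static String prefix() {
        String id = get();
        return "[" + (id == null ? "" : id) + "] - ";
    }
}
```

In a Spring MVC application the natural place for the put/remove pair would be a servlet filter or a HandlerInterceptor, so that every log line written while handling one request carries the same ID.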

2.2. Usage

Using it is simple. First add the following dependency to pom.xml:

<dependency>
    <groupId>org.projectlombok</groupId>
    <artifactId>lombok</artifactId>
</dependency>

Then put the following annotation on any class that needs to log:

@Slf4j

public class TestController{

//........

}

After that the log object can be used directly, like this:

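import lombok.extern.slf4j.Slf4j;
import org.springframework.web.bind.annotation.GetMapping;
import org.springframework.web.bind.annotation.PathVariable;
import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.RestController;

// JSONResult is the project's own response wrapper class
@Slf4j
@RestController
@RequestMapping("/test")
public class TestController {

    @GetMapping("/test002/{name}")
    public JSONResult test002(@PathVariable("name") String name) {
        log.info("This is an info-level log");
        log.warn("This is a warn-level log");
        log.error("This is an error-level log");
        log.debug("This is a debug-level log");
        return JSONResult.success(name);
    }
}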

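Start the project and open http://localhost:8008/test/test002/tom in a browser. The log entries then appear in the corresponding directories on disk. Note that because the root level is set to info, the log.debug call produces no output; lower the root level to debug if you want the debug file to be populated.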
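To make the routing in the configuration concrete: an event first has to pass the logger's effective level (the root level, info), and only then does each file appender's LevelFilter decide whether to accept it (exact match) or deny it. The following is a simplified model of that two-step decision, not logback's actual implementation; the class LevelRouting and its Level enum are hypothetical names.

```java
import java.util.ArrayList;
import java.util.List;

// Simplified model of the configuration: a root threshold plus four
// file appenders that each accept exactly one level.
final class LevelRouting {
    // Ordered from least to most severe, like logback's levels.
    enum Level { DEBUG, INFO, WARN, ERROR }

    private LevelRouting() {}

    // Returns the names of the file appenders that would write an event.
    static List<String> targets(Level rootLevel, Level eventLevel) {
        List<String> result = new ArrayList<>();
        // Step 1: the logger's effective level drops anything less severe.
        if (eventLevel.compareTo(rootLevel) < 0) {
            return result;
        }
        // Step 2: each appender's LevelFilter accepts only an exact match
        // (onMatch=ACCEPT, onMismatch=DENY).
        for (Level appenderLevel : Level.values()) {
            if (appenderLevel == eventLevel) {
                result.add(appenderLevel.name());
            }
        }
        return result;
    }
}
```

With the root level at INFO, targets(Level.INFO, Level.DEBUG) is empty, which is why the debug directory stays empty in this setup, while an ERROR event goes only to the ERROR appender. The console appender has no filter, so it prints everything that passes step 1.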

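3. Saving to the Database

The approach above does write development/debugging logs to disk, but it has a drawback: every time you want to read the logs, you have to connect to the production Linux server and pull the log files down before you can analyze them, which is time-consuming. We can improve on this by storing the log records in a database and building an admin page that displays them directly, which makes viewing production logs much easier. The steps are as follows:

3.1. Modifying the Configuration File

Change logback-spring.xml to the following: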

<configuration scan="true" scanPeriod="60 seconds" debug="false">
    <jmxConfigurator />
    <property name="charset" value="UTF-8"/>
    <property name="log_dir" value="C:/LOGS/EasyJavaSE" />
    <property name="maxHistory" value="30" />
    <property name="maxFileSize" value="5MB" />
    <property name="FORMAT" value="[%X{TRACE_ID}] - %-5level %d{yyyy-MM-dd HH:mm:ss.SSS} [%thread] %c.%M:%L:%msg%n"/>

    <appender name="console" class="ch.qos.logback.core.ConsoleAppender">
        <encoder>
            <pattern>${FORMAT}</pattern>
        </encoder>
    </appender>

    <appender name="ERROR" class="ch.qos.logback.core.rolling.RollingFileAppender">
        <filter class="ch.qos.logback.classic.filter.LevelFilter">
            <level>ERROR</level>
            <onMatch>ACCEPT</onMatch>
            <onMismatch>DENY</onMismatch>
        </filter>
        <rollingPolicy class="ch.qos.logback.core.rolling.TimeBasedRollingPolicy">
            <fileNamePattern>${log_dir}/error/%d{yyyy-MM-dd}/error-%i.log</fileNamePattern>
            <maxHistory>${maxHistory}</maxHistory>
            <timeBasedFileNamingAndTriggeringPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedFNATP">
                <maxFileSize>${maxFileSize}</maxFileSize>
            </timeBasedFileNamingAndTriggeringPolicy>
        </rollingPolicy>
        <encoder>
            <pattern>${FORMAT}</pattern>
        </encoder>
    </appender>

    <appender name="WARN" class="ch.qos.logback.core.rolling.RollingFileAppender">
        <filter class="ch.qos.logback.classic.filter.LevelFilter">
            <level>WARN</level>
            <onMatch>ACCEPT</onMatch>
            <onMismatch>DENY</onMismatch>
        </filter>
        <rollingPolicy class="ch.qos.logback.core.rolling.TimeBasedRollingPolicy">
            <fileNamePattern>${log_dir}/warn/%d{yyyy-MM-dd}/warn-%i.log</fileNamePattern>
            <maxHistory>${maxHistory}</maxHistory>
            <timeBasedFileNamingAndTriggeringPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedFNATP">
                <maxFileSize>${maxFileSize}</maxFileSize>
            </timeBasedFileNamingAndTriggeringPolicy>
        </rollingPolicy>
        <encoder>
            <pattern>${FORMAT}</pattern>
        </encoder>
    </appender>

    <appender name="INFO" class="ch.qos.logback.core.rolling.RollingFileAppender">
        <filter class="ch.qos.logback.classic.filter.LevelFilter">
            <level>INFO</level>
            <onMatch>ACCEPT</onMatch>
            <onMismatch>DENY</onMismatch>
        </filter>
        <rollingPolicy class="ch.qos.logback.core.rolling.TimeBasedRollingPolicy">
            <fileNamePattern>${log_dir}/info/%d{yyyy-MM-dd}/info-%i.log</fileNamePattern>
            <maxHistory>${maxHistory}</maxHistory>
            <timeBasedFileNamingAndTriggeringPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedFNATP">
                <maxFileSize>${maxFileSize}</maxFileSize>
            </timeBasedFileNamingAndTriggeringPolicy>
        </rollingPolicy>
        <encoder>
            <pattern>${FORMAT}</pattern>
        </encoder>
    </appender>

    <appender name="DEBUG" class="ch.qos.logback.core.rolling.RollingFileAppender">
        <filter class="ch.qos.logback.classic.filter.LevelFilter">
            <level>DEBUG</level>
            <onMatch>ACCEPT</onMatch>
            <onMismatch>DENY</onMismatch>
        </filter>
        <rollingPolicy class="ch.qos.logback.core.rolling.TimeBasedRollingPolicy">
            <fileNamePattern>${log_dir}/debug/%d{yyyy-MM-dd}/debug-%i.log</fileNamePattern>
            <maxHistory>${maxHistory}</maxHistory>
            <timeBasedFileNamingAndTriggeringPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedFNATP">
                <maxFileSize>${maxFileSize}</maxFileSize>
            </timeBasedFileNamingAndTriggeringPolicy>
        </rollingPolicy>
        <encoder>
            <pattern>${FORMAT}</pattern>
        </encoder>
    </appender>

    <appender name="DB" class="ch.qos.logback.classic.db.DBAppender">
        <connectionSource class="ch.qos.logback.core.db.DriverManagerConnectionSource">
            <driverClass>com.mysql.cj.jdbc.Driver</driverClass>
            <url>jdbc:mysql://127.0.0.1:3306/easyjavase?useSSL=false&amp;useUnicode=true&amp;characterEncoding=UTF-8&amp;serverTimezone=UTC</url>
            <user>root</user>
            <password>123456</password>
        </connectionSource>
        <filter class="cn.wujiangbo.filter.LogFilter">
        </filter>
    </appender>

    <root>
        <level value="info" />
        <appender-ref ref="console" />
        <appender-ref ref="ERROR" />
        <appender-ref ref="INFO" />
        <appender-ref ref="WARN" />
        <appender-ref ref="DEBUG" />
        <appender-ref ref="DB" />
    </root>
</configuration>

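3.2. Writing the Filter

Create a filter that processes log events and decides which ones are written to the database and which are not. The code is as follows: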

package cn.wujiangbo.filter;

import ch.qos.logback.classic.spi.ILoggingEvent;
import ch.qos.logback.core.filter.Filter;
import ch.qos.logback.core.spi.FilterReply;
import cn.hutool.extra.spring.SpringUtil;
import cn.wujiangbo.domain.system.EasySlf4jLogging;
import cn.wujiangbo.service.system.EasySlf4jLoggingService;
import cn.wujiangbo.utils.DateUtils;

/**
 * Filter that writes Slf4j logs to the database
 *
 * @author 波波老师 (WeChat: javabobo0513)
 */
public class LogFilter extends Filter<ILoggingEvent> {

    public EasySlf4jLoggingService easySlf4jLoggingService = null;

    @Override
    public FilterReply decide(ILoggingEvent event) {
        String loggerName = event.getLoggerName();
        if (loggerName.startsWith("cn.wujiangbo")) {
            // Only the project's own logs are written to the database
            EasySlf4jLogging log = new EasySlf4jLogging();
            log.setLogTime(DateUtils.getCurrentLocalDateTime());
            log.setLogThread(event.getThreadName());
            log.setLogClass(loggerName);
            log.setLogLevel(event.getLevel().toString());
            log.setTrackId(event.getMDCPropertyMap().get("TRACE_ID"));
            log.setLogContent(event.getFormattedMessage()); // log message text
            // The filter is created by logback, not Spring, so look the bean up manually
            easySlf4jLoggingService = SpringUtil.getBean(EasySlf4jLoggingService.class);
            // Write the log record to the database
            easySlf4jLoggingService.asyncSave(log);
            return FilterReply.ACCEPT;
        } else {
            // Logs from outside the project are not written to the database
            return FilterReply.DENY;
        }
    }
}
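The filter hands each record to easySlf4jLoggingService.asyncSave, whose implementation is not shown in the article. One plausible shape, sketched here purely as an assumption, is a single background worker fed by a bounded queue, so that a slow database can never block the thread that is logging. The class AsyncLogWriter, the queue size of 1000, and the in-memory list standing in for the table are all hypothetical.

```java
import java.util.List;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.ThreadPoolExecutor;
import java.util.concurrent.TimeUnit;

// Hypothetical sketch of an asynchronous log writer; not the article's service.
final class AsyncLogWriter {
    // One worker thread and a bounded queue: when the queue is full the
    // record is silently dropped, so logging never blocks the caller.
    private final ExecutorService worker = new ThreadPoolExecutor(
            1, 1, 0L, TimeUnit.MILLISECONDS,
            new LinkedBlockingQueue<>(1000),
            new ThreadPoolExecutor.DiscardPolicy());

    // Stand-in for the database table; a real service would call a mapper here.
    private final List<String> saved = new CopyOnWriteArrayList<>();

    // Queues the record and returns immediately.
    void asyncSave(String logContent) {
        worker.execute(() -> saved.add(logContent));
    }

    // Stops accepting new records and waits for queued ones to be written.
    boolean shutdownAndWait(long timeoutMillis) {
        worker.shutdown();
        try {
            return worker.awaitTermination(timeoutMillis, TimeUnit.MILLISECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return false;
        }
    }

    List<String> saved() {
        return saved;
    }
}
```

In a Spring project the same effect is often achieved by annotating the service method with @Async. Whichever approach is used, the saving code should avoid logging through a cn.wujiangbo logger at info level or above, otherwise each save would trigger the filter again.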

3.3. Preparing the Table

Create a table in the database to store the development log records. The DDL is as follows:

DROP TABLE IF EXISTS `easy_slf4j_logging`;

CREATE TABLE `easy_slf4j_logging` (
  `id` bigint(20) NOT NULL AUTO_INCREMENT COMMENT 'Primary key ID',
  `log_level` varchar(20) CHARACTER SET utf8 COLLATE utf8_general_ci NOT NULL COMMENT 'Log level',
  `log_content` text CHARACTER SET utf8 COLLATE utf8_general_ci NULL COMMENT 'Log content',
  `log_time` datetime NULL DEFAULT NULL COMMENT 'Log time',
  `log_class` varchar(100) CHARACTER SET utf8 COLLATE utf8_general_ci NULL DEFAULT NULL COMMENT 'Logging class path',
  `log_thread` varchar(50) CHARACTER SET utf8 COLLATE utf8_general_ci NULL DEFAULT NULL COMMENT 'Logging thread',
  `track_id` varchar(50) CHARACTER SET utf8 COLLATE utf8_general_ci NULL DEFAULT NULL COMMENT 'Global trace ID',
  PRIMARY KEY (`id`) USING BTREE
) ENGINE = InnoDB AUTO_INCREMENT = 1 CHARACTER SET = utf8 COLLATE = utf8_general_ci COMMENT = 'Slf4j log table' ROW_FORMAT = Compact;
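The entity class EasySlf4jLogging that the filter fills in is not shown in the article. A minimal sketch of what it might look like follows, with field names inferred from the setters used in LogFilter and the columns above. The class name EasySlf4jLoggingSketch and the fit helper are hypothetical; the helper truncates values to the column width, on the assumption that an over-long logger or thread name would otherwise make the INSERT fail when MySQL runs in strict mode.

```java
import java.time.LocalDateTime;

// Hypothetical shape of the entity, inferred from LogFilter and the table DDL.
class EasySlf4jLoggingSketch {
    private Long id;                // id, auto-increment primary key
    private String logLevel;        // log_level varchar(20)
    private String logContent;      // log_content text
    private LocalDateTime logTime;  // log_time datetime
    private String logClass;        // log_class varchar(100)
    private String logThread;       // log_thread varchar(50)
    private String trackId;         // track_id varchar(50)

    // Cuts a value down to the column width; null and short values pass through.
    // This guard is an addition for illustration, not part of the article.
    static String fit(String value, int maxLength) {
        if (value == null || value.length() <= maxLength) {
            return value;
        }
        return value.substring(0, maxLength);
    }

    public Long getId() { return id; }
    public void setId(Long id) { this.id = id; }

    public String getLogLevel() { return logLevel; }
    public void setLogLevel(String logLevel) { this.logLevel = fit(logLevel, 20); }

    public String getLogContent() { return logContent; }
    public void setLogContent(String logContent) { this.logContent = logContent; }

    public LocalDateTime getLogTime() { return logTime; }
    public void setLogTime(LocalDateTime logTime) { this.logTime = logTime; }

    public String getLogClass() { return logClass; }
    public void setLogClass(String logClass) { this.logClass = fit(logClass, 100); }

    public String getLogThread() { return logThread; }
    public void setLogThread(String logThread) { this.logThread = fit(logThread, 50); }

    public String getTrackId() { return trackId; }
    public void setTrackId(String trackId) { this.trackId = fit(trackId, 50); }
}
```

In the actual project the getters and setters are probably generated by Lombok, since Lombok is already on the classpath; they are written out here so the sketch compiles on its own.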

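3.4. Adding the Dependency

Add the following dependency to pom.xml. The version matters: the JDBC-related classes such as DBAppender were removed from logback starting with 1.2.8, so this setup depends on staying at 1.2.7 or earlier.

<dependency>
    <groupId>ch.qos.logback</groupId>
    <artifactId>logback-core</artifactId>
    <version>1.2.7</version>
</dependency>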

3.5. Testing

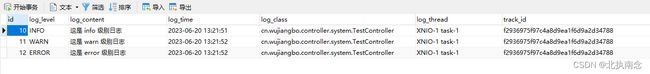

Start the project and open http://localhost:8008/test/test002/tom in a browser. The table now contains the log rows.

The admin page can then query them directly.

Nice.

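4. Optimization

4.1. Periodic Log Deletion

A system accumulates all kinds of logs. To keep the data from growing too large, we can keep only the last 6 months and delete anything older. A scheduled task that runs once a day at 11:30 PM does the job; adjust the time to suit your own situation. For @Scheduled to fire, scheduling must be enabled with @EnableScheduling on a configuration class. My scheduled task looks like this: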

package cn.wujiangbo.task;

import cn.wujiangbo.mapper.system.EasySlf4jLoggingMapper;
import cn.wujiangbo.utils.DateUtils;
import lombok.extern.slf4j.Slf4j;
import org.springframework.scheduling.annotation.Scheduled;
import org.springframework.stereotype.Component;

import javax.annotation.Resource;

/**
 * Scheduled task class
 *
 * @author 波波老师 (WeChat: javabobo0513)
 */
@Component
@Slf4j
public class CommonTask {

    @Resource
    public EasySlf4jLoggingMapper easySlf4jLoggingMapper;

    /**
     * Deletes old rows from the log table
     */
    @Scheduled(cron = "0 30 23 ? * *") // fires every day at 11:30 PM
    public void task001() {
        log.info("--------[Scheduled task: delete database logs, runs nightly at 11:30 PM]-------------started, time: {}", DateUtils.getCurrentDateString());
        easySlf4jLoggingMapper.deleteLog();
        log.info("--------[Scheduled task: delete database logs, runs nightly at 11:30 PM]-------------finished, time: {}", DateUtils.getCurrentDateString());
    }
}
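The cron expression 0 30 23 ? * * reads as second 0, minute 30, hour 23, on any day, that is, once per day at 23:30:00. For readers who want to see the same schedule without Spring, the sketch below computes how long to wait until the next 23:30. This is a swapped-in illustration using only the JDK, not what @Scheduled does internally, and the class NightlySchedule is a hypothetical name.

```java
import java.time.Duration;
import java.time.LocalDateTime;
import java.time.LocalTime;

// Hypothetical helper that mirrors the "every day at 23:30" schedule.
final class NightlySchedule {
    private static final LocalTime RUN_AT = LocalTime.of(23, 30);

    private NightlySchedule() {}

    // Time to wait from 'now' until the next 23:30:00. If 'now' is exactly
    // 23:30:00 or later, the next run is tomorrow.
    static Duration delayUntilNextRun(LocalDateTime now) {
        LocalDateTime next = now.toLocalDate().atTime(RUN_AT);
        if (!next.isAfter(now)) {
            next = next.plusDays(1);
        }
        return Duration.between(now, next);
    }
}
```

The result could be passed as the initial delay to ScheduledExecutorService.scheduleAtFixedRate with a period of one day, although in a Spring Boot project the @Scheduled annotation shown above is the simpler choice.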

The SQL statement is as follows:

delete from easy_slf4j_logging where log_time <= DATE_SUB(CURDATE(), INTERVAL 6 MONTH)
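To check which rows this statement removes, it helps to compute the cutoff by hand. DATE_SUB(CURDATE(), INTERVAL 6 MONTH) yields a date six calendar months before today, and when that date is compared with the datetime column it is treated as midnight of that day. The sketch below reproduces the same rule in Java; the class LogRetention and its method names are hypothetical.

```java
import java.time.LocalDate;
import java.time.LocalDateTime;

// Hypothetical Java mirror of the retention rule in the DELETE statement.
final class LogRetention {
    private LogRetention() {}

    // Equivalent of DATE_SUB(CURDATE(), INTERVAL 6 MONTH), taken at midnight.
    // Like MySQL, minusMonths clamps to the last valid day of the month.
    static LocalDateTime cutoff(LocalDate today) {
        return today.minusMonths(6).atStartOfDay();
    }

    // Mirrors "log_time <= cutoff": rows at or before the cutoff are deleted.
    static boolean isExpired(LocalDateTime logTime, LocalDate today) {
        return !logTime.isAfter(cutoff(today));
    }
}
```

For example, when the task runs on 2024-08-31 the cutoff is 2024-02-29 00:00:00, so a row logged at that instant is deleted while a row logged one second later is kept.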