Smart ID Photo Maker: Easy Personal ID Photos with Face Detection and Automatic Portrait Segmentation (C++ Implementation)

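Preface

1. There are many ways to make an ID photo. The most common are editing the image in Photoshop or downloading one of the many ID photo apps that do it in one tap. The basic steps are the same: crop the image to a suitable size using the face as the reference, cut out the portrait, retouch it, and then replace the background with the required color, such as blue or red.

2. Here I follow the same steps in code: face detection first, then cropping the photo, then replacing the background color. I have not had time to finish the beautification and face retouching parts yet.

3. Because I plan to port this to mobile (Android and iOS), I use ncnn as the inference acceleration library. I have chosen ncnn for several apps before, and its performance has been good on both Android and iOS.

4. My development environment is Windows 10, VS2019, OpenCV 4.5, and ncnn. The Vulkan SDK is also needed if you want to enable GPU acceleration. The implementation language is C++.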

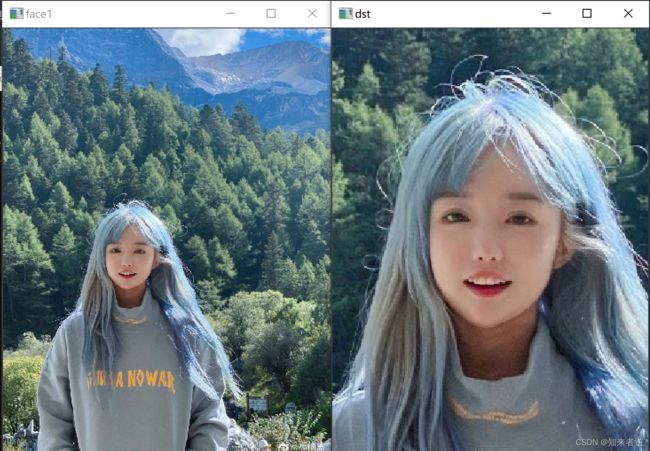

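5. Results first. The method does not require a clean background: even when the scene behind the subject is cluttered, the portrait is still cut out cleanly.

Original image:

Automatically cropped ID photo: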

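I. Project Setup

1. Create a new C++ project in VS2019 and add the OpenCV and ncnn libraries. You can download the official prebuilt ncnn binaries. I will also upload the libraries, source code, and models I used later in this post.

2. To enable GPU inference, install the Vulkan SDK. The installation steps are covered in one of my earlier blog posts.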

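II. Face Detection

1. Face detection uses SCRFD here. It returns the coordinates of five keypoints (the eyes, the nose, and the mouth corners), which serve as reference points for the ID photo. libfacedetection would also work as the face detection library, and the results are about the same, but SCRFD is the better choice on mobile.

Implementation:

Inference code: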

#include "scrfd.h"

2. Draw the detection results.

int SCRFD::draw(cv::Mat& rgb, const std::vector<FaceObject>& faceobjects)
{
    for (size_t i = 0; i < faceobjects.size(); i++)
    {
        const FaceObject& obj = faceobjects[i];

        // Face bounding box
        cv::rectangle(rgb, obj.rect, cv::Scalar(0, 255, 0));

        // Five keypoints (eyes, nose, mouth corners), drawn only if the model provides them
        if (has_kps)
        {
            cv::circle(rgb, obj.landmark[0], 2, cv::Scalar(0, 255, 255), -1);
            cv::circle(rgb, obj.landmark[1], 2, cv::Scalar(0, 0, 255), -1);
            cv::circle(rgb, obj.landmark[2], 2, cv::Scalar(255, 255, 0), -1);
            cv::circle(rgb, obj.landmark[3], 2, cv::Scalar(255, 255, 0), -1);
            cv::circle(rgb, obj.landmark[4], 2, cv::Scalar(255, 255, 0), -1);
        }

        // Confidence label as a percentage
        char text[256];
        snprintf(text, sizeof(text), "%.1f%%", obj.prob * 100);

        int baseLine = 0;
        cv::Size label_size = cv::getTextSize(text, cv::FONT_HERSHEY_SIMPLEX, 0.5, 1, &baseLine);

        // Place the label above the box and keep it inside the image
        int x = obj.rect.x;
        int y = obj.rect.y - label_size.height - baseLine;
        if (y < 0)
            y = 0;
        if (x + label_size.width > rgb.cols)
            x = rgb.cols - label_size.width;

        cv::rectangle(rgb, cv::Rect(cv::Point(x, y), cv::Size(label_size.width, label_size.height + baseLine)), cv::Scalar(255, 255, 255), -1);
        cv::putText(rgb, text, cv::Point(x, y + label_size.height), cv::FONT_HERSHEY_SIMPLEX, 0.5, cv::Scalar(0, 0, 0), 1);
    }

    return 0;
}

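III. Cropping the ID Photo

1. Select the face. If an image contains several faces, take the coordinates of the largest, most frontal face as the reference. The code below approximates this by taking the largest face box with a confidence of at least 0.7.

Code: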

int faceFind(const cv::Mat& cv_src, std::vector<FaceObject> &face_object, cv::Rect& cv_rect, std::vector<cv::Point> &five_point)
{
    // Only one face detected
    if (face_object.size() == 1)
    {
        if (face_object[0].prob > 0.7)
        {
            for (int i = 0; i < 5; ++i)
            {
                five_point.push_back(face_object[0].landmark[i]);
            }
            cv_rect = face_object[0].rect;
            return 0;
        }
    }
    // Multiple faces detected: use the largest one that passes the confidence threshold
    else if (face_object.size() >= 2)
    {
        // First pass: find the largest qualifying box
        cv::Rect max_rect;
        for (size_t i = 0; i < face_object.size(); ++i)
        {
            if (face_object[i].prob >= 0.7)
            {
                cv::Rect rect = face_object[i].rect;
                if (max_rect.area() <= rect.area())
                {
                    max_rect = rect;
                }
            }
        }
        // Second pass: return the box and landmarks of that face
        for (size_t i = 0; i < face_object.size(); ++i)
        {
            if (face_object[i].prob >= 0.7)
            {
                cv::Rect rect = face_object[i].rect;
                if (max_rect.area() == rect.area())
                {
                    // Copy the five landmarks of the selected face i
                    for (int j = 0; j < 5; ++j)
                    {
                        five_point.push_back(face_object[i].landmark[j]);
                    }
                    cv_rect = rect;
                    // Stop at the first match so five_point holds exactly five points
                    return 0;
                }
            }
        }
    }
    // No face, or no face above the confidence threshold
    return 1;
}

Result:
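The selection rule from step 1 (keep faces with a confidence of at least 0.7, then take the one with the largest box) can also be written as a single pass. The sketch below is a minimal, OpenCV-free illustration of that idea. The `Face` struct and `pickLargestFace` are hypothetical names that are not part of the project; only the 0.7 threshold comes from the code above.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical stand-in for FaceObject: only the fields needed for selection.
struct Face {
    int x, y, width, height; // bounding box in pixels
    float prob;              // detection confidence
};

// Returns the index of the largest face with prob >= min_prob,
// or -1 if no face qualifies.
int pickLargestFace(const std::vector<Face>& faces, float min_prob = 0.7f)
{
    int best = -1;
    long best_area = -1;
    for (std::size_t i = 0; i < faces.size(); ++i) {
        if (faces[i].prob < min_prob) continue; // skip low-confidence detections
        long area = static_cast<long>(faces[i].width) * faces[i].height;
        if (area > best_area) {
            best_area = area;
            best = static_cast<int>(i);
        }
    }
    return best;
}
```

Returning an index rather than copying the rectangle keeps the box and the five landmarks tied to the same detection, so the caller reads both from one element.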

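2. The selection above is deliberately simple. If you have the compute budget or need higher accuracy, you can add more keypoints and head pose estimation, and then use the estimated pose to decide whether the face in the image or camera feed is facing the camera squarely.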
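Full head pose estimation needs a dedicated model, but the in-plane tilt (roll) can already be read off the two eye keypoints. The following is a rough sketch of that lighter check, not a head pose estimator. `Pt`, `eyeRollDegrees`, and `isUpright` are hypothetical names, and the 5 degree tolerance is an assumed value that would need tuning.

```cpp
#include <cmath>

// Hypothetical 2D point type standing in for a landmark.
struct Pt { float x, y; };

// Angle in degrees of the line through both eyes relative to horizontal.
// 0 means the eyes are level. Assumes image coordinates (y grows downward)
// and that left_eye is the eye with the smaller x in the image.
float eyeRollDegrees(Pt left_eye, Pt right_eye)
{
    const float kRadToDeg = 180.0f / 3.14159265358979f;
    return std::atan2(right_eye.y - left_eye.y, right_eye.x - left_eye.x) * kRadToDeg;
}

// True if the tilt is within max_roll_deg (assumed tolerance).
bool isUpright(Pt left_eye, Pt right_eye, float max_roll_deg = 5.0f)
{
    return std::fabs(eyeRollDegrees(left_eye, right_eye)) <= max_roll_deg;
}
```

Unlike a fixed pixel difference between the eye heights, an angle does not depend on how large the face appears in the image. It says nothing about yaw or pitch, which is where a real pose model would come in.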

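3. Crop the image to ID photo proportions using the face as the reference: center on the face, work out the margins above, below, left, and right of it, and then crop to a suitable ID photo size by proportion.

Code: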

int faceLocation(const cv::Mat cv_src, cv::Mat& cv_dst, std::vector<cv::Point>& five_point, cv::Rect &cv_rect)
{
    float w_block = cv_rect.width / 5.5;
    float h_block = cv_rect.height / 8;

    // Head box: the detected face box enlarged to cover the ears and the top of the head
    cv::Rect face_rect;
    face_rect.x = cv_rect.x - (w_block * 0.8); // add room for both ears
    face_rect.y = cv_rect.y - (h_block * 2);
    face_rect.width = cv_rect.width + (w_block * 1.6);
    face_rect.height = cv_rect.height + (h_block * 2);

    // Distances from the head box to the left, right, top, and bottom image borders
    int tl_face_w = face_rect.tl().x;
    int tr_face_w = cv_src.cols - (face_rect.width + face_rect.tl().x);
    int t_face_h = face_rect.tl().y;
    int b_face_h = cv_src.rows - face_rect.br().y;

    // Units used to place the head inside the ID photo
    int w_scale = face_rect.width / 7;
    int h_scale = face_rect.height / 10;

    cv::Rect id_rect;

    // Check that there is enough margin around the head
    if (tl_face_w >= (w_scale * 2) && tr_face_w >= (w_scale * 2) && t_face_h >= (h_scale * 0.5) && b_face_h > (h_scale * 5))
    {
        // Check the eye positions: the two eyes must be nearly level (less than 8 pixels apart vertically)
        std::cout << five_point.size() << std::endl;
        if (abs(five_point.at(0).y - five_point.at(1).y) < 8)
        {
            // Crop window of 13 x 19 units, clamped to the image bounds
            id_rect.x = ((face_rect.x - w_scale * 3) <= 0) ? 0 : (face_rect.x - w_scale * 3);
            id_rect.y = ((face_rect.y - h_scale * 3) < 0) ? 0 : (face_rect.y - h_scale * 3);
            id_rect.width = (w_scale * 13) + id_rect.x > cv_src.size().width ? cv_src.size().width - id_rect.x : w_scale * 13;
            id_rect.height = (h_scale * 19) + id_rect.y > cv_src.size().height ? cv_src.size().height - id_rect.y : h_scale * 19;

            cv_dst = cv_src(id_rect);
            return 0;
        }
    }

    return -1;
}
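The last part of `faceLocation` keeps the crop window inside the image by clamping its origin and clipping its size. A stand-alone sketch of that clamping might look like the following, with a hypothetical `Box` struct and `clampToImage` helper standing in for `cv::Rect` (neither name comes from the project):

```cpp
#include <algorithm>

// Hypothetical stand-in for cv::Rect.
struct Box { int x, y, width, height; };

// Clamp a requested crop window to an image of img_w x img_h pixels.
// The origin is moved inside the image and the size is clipped so the
// window never extends past the right or bottom border.
Box clampToImage(int x, int y, int width, int height, int img_w, int img_h)
{
    Box b;
    b.x = std::min(std::max(0, x), img_w);
    b.y = std::min(std::max(0, y), img_h);
    b.width = std::max(0, std::min(width, img_w - b.x));
    b.height = std::max(0, std::min(height, img_h - b.y));
    return b;
}
```

Clipping changes the aspect ratio of the crop whenever the window hits a border. Depending on the use case, it may be preferable to reject such photos and ask for a retake instead of returning a clipped crop.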

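IV. Matting and Background Replacement

1. The steps above produce an image with ID photo framing. Now the portrait needs to be cut out so that the background can be replaced.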

int matting(cv::Mat &cv_src, ncnn::Net& net, ncnn::Mat &alpha)
{
    int width = cv_src.cols;
    int height = cv_src.rows;

    // Resize to the 256x256 network input. PIXEL_RGB copies the channels without swapping them.
    ncnn::Mat in_resize = ncnn::Mat::from_pixels_resize(cv_src.data, ncnn::Mat::PIXEL_RGB, width, height, 256, 256);

    // Normalize each channel: (pixel - 127.5) * 0.0078431
    const float meanVals[3] = { 127.5f, 127.5f, 127.5f };
    const float normVals[3] = { 0.0078431f, 0.0078431f, 0.0078431f };
    in_resize.substract_mean_normalize(meanVals, normVals);

    // Run the matting network
    ncnn::Mat out;
    ncnn::Extractor ex = net.create_extractor();
    ex.set_vulkan_compute(true);
    ex.input("input", in_resize);
    ex.extract("output", out);

    // Scale the alpha matte back to the size of the source image
    ncnn::resize_bilinear(out, alpha, width, height);

    return 0;
}
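The `meanVals` and `normVals` passed to `substract_mean_normalize` map each 8-bit channel value from [0, 255] to roughly [-1, 1], since 0.0078431 is approximately 1/127.5. The short sketch below reproduces that arithmetic on a single value. `normalizePixel` is a hypothetical helper for illustration and is not part of ncnn.

```cpp
// (p - mean) * norm: the per-channel operation applied to every pixel value.
float normalizePixel(unsigned char p, float mean = 127.5f, float norm = 0.0078431f)
{
    return (static_cast<float>(p) - mean) * norm;
}
```

With these constants, 0 maps to about -1 and 255 maps to about +1. If a different matting model is swapped in, the constants have to match whatever preprocessing that model was trained with.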

2. Replace the background color.

void replaceBG(const cv::Mat cv_src, ncnn::Mat &alpha, cv::Mat &cv_matting, std::vector<int> &bg_color)
{
    int width = cv_src.cols;
    int height = cv_src.rows;

    cv_matting = cv::Mat::zeros(cv::Size(width, height), CV_8UC3);

    // alpha is read as a single-channel float matte with the same size as cv_src
    float* alpha_data = (float*)alpha.data;

    for (int i = 0; i < height; i++)
    {
        for (int j = 0; j < width; j++)
        {
            float alpha_ = alpha_data[i * width + j];
            const cv::Vec3b& src_px = cv_src.at<cv::Vec3b>(i, j);
            cv::Vec3b& dst_px = cv_matting.at<cv::Vec3b>(i, j);

            // Blend foreground and background per channel; saturate_cast keeps the result in [0, 255]
            for (int c = 0; c < 3; c++)
            {
                dst_px[c] = cv::saturate_cast<uchar>(src_px[c] * alpha_ + (1 - alpha_) * bg_color[c]);
            }
        }
    }
}
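Each output channel in `replaceBG` is a standard alpha blend: out = src * alpha + bg * (1 - alpha). As a minimal sketch, the hypothetical helper `blendChannel` below shows the same formula for one channel. It also clamps alpha to [0, 1] as a precaution, which is an assumption about how out-of-range matte values should be handled rather than something the project specifies.

```cpp
#include <algorithm>
#include <cmath>

// Blend one 8-bit foreground channel over a background value.
// alpha = 1 keeps the foreground, alpha = 0 gives the background.
unsigned char blendChannel(unsigned char src, int bg, float alpha)
{
    alpha = std::min(1.0f, std::max(0.0f, alpha));   // assumed: clamp the matte value
    float v = src * alpha + bg * (1.0f - alpha);
    v = std::min(255.0f, std::max(0.0f, v));         // keep the result in the 8-bit range
    return static_cast<unsigned char>(std::lround(v));
}
```

The soft values between 0 and 1 along hair and edges are what make the cutout blend into the new background. The channel order of `bg_color` has to match the channel order of `cv_src`.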

3. Results.

Original image:

ID photo:

Original image (with a more complex background):

ID photo:

Anime avatar:

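V. Conclusion

1. This is only a demo that shows the feature working. Commercial use would need much more detail work, such as head pose estimation, eye detection (whether the eyes are closed), skin smoothing, face slimming, and outfit replacement. I will try these and post them when I have time.

2. With some changes this demo can run on Android. I have tested it there, and both speed and accuracy were good.

3. Project and source code: https://download.csdn.net/download/matt45m/67756246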