FFmpeg

Downloading FFmpeg

FFmpeg source code: https://github.com/FFmpeg/FFmpeg

FFmpeg executables: https://ffmpeg.org/download.html

Options of ffmpeg, ffplay and ffprobe

ffmpeg.exe

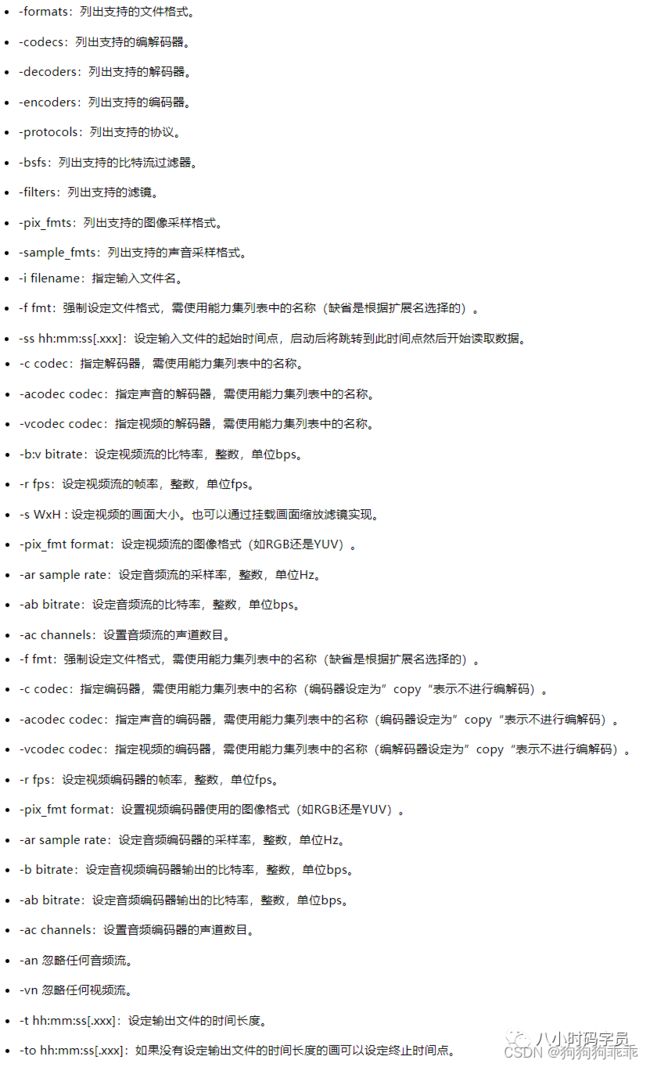

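◆ Used for audio/video transcoding; it can also take its input from a URL or from a live audio/video source. The author collected a set of commonly used ffmpeg options from the web. Options differ slightly between ffmpeg versions, which matters especially during development, so run "ffmpeg -h" in a terminal to see the options supported by the ffmpeg installed on your machine.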

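ffmpeg example

// Cut 4 seconds starting at 00:00:03 and save them to a new file (with -vcodec copy -acodec copy nothing is re-encoded, so the actual start snaps to a nearby keyframe)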

ffmpeg -ss 00:00:03 -t 00:00:04 -i test.mpg -vcodec copy -acodec copy test_cut.mpg

ffplay.exe

◆ A very simple, portable media player built on the FFmpeg libraries and the SDL library. ffplay options:

ffplay.exe example:

// Play a video

ffplay -i test.mpg

ffprobe.exe

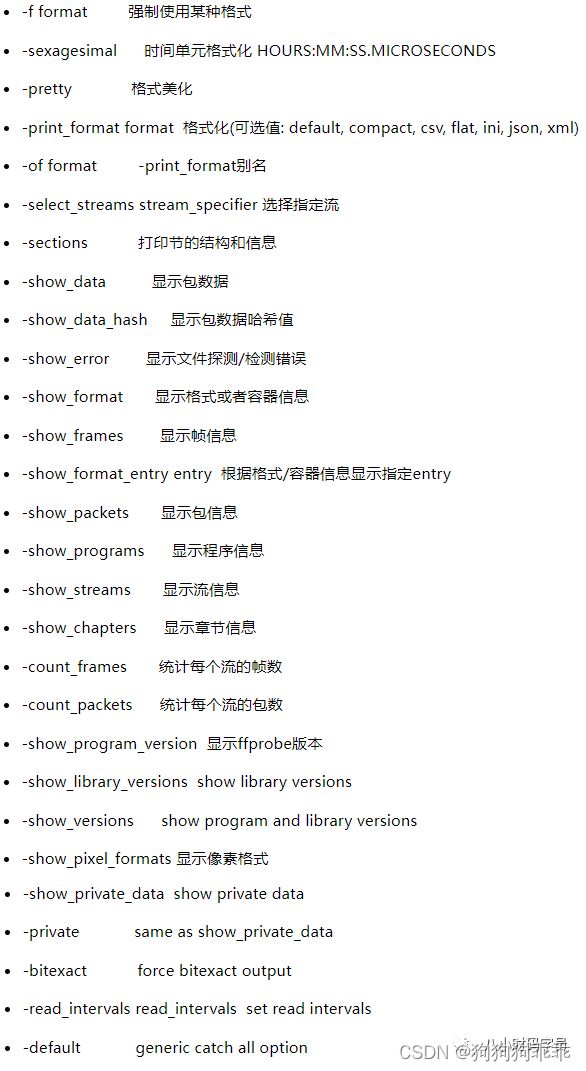

◆ Shows information about multimedia files. ffprobe options:

◆ ffprobe.exe example:

// Show the audio and video stream information of a video file

ffprobe test.mpg

FFmpeg decoding

Audio/video decoding flow:
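The core of every decode loop is the send/receive contract of avcodec_send_packet() and avcodec_receive_frame(): send a packet, then keep calling receive until it reports that more input is needed (AVERROR(EAGAIN)); at end of input, send a NULL packet and keep receiving until AVERROR_EOF. The sketch below models only that contract. It assumes a hypothetical MockDecoder that turns each packet into exactly one frame and uses stand-in status codes kAgain/kEof instead of the real AVERROR values; it does not call FFmpeg, and a real decoder may return zero or several frames per packet.

```cpp
#include <cassert>
#include <deque>
#include <vector>

// Stand-in status codes (hypothetical values, not FFmpeg's):
// kAgain plays the role of AVERROR(EAGAIN), kEof the role of AVERROR_EOF.
constexpr int kOk = 0;
constexpr int kAgain = -1;
constexpr int kEof = -2;

// Hypothetical decoder that turns every packet into exactly one frame.
class MockDecoder {
public:
    // A null packet means "flush": no more input will follow.
    int send_packet(const int* packet) {
        if (draining_) return kEof;  // input is closed after a flush
        if (packet == nullptr) {
            draining_ = true;
            return kOk;
        }
        pending_.push_back(*packet);
        return kOk;
    }

    // kOk with a frame, kAgain if more input is needed,
    // kEof once the decoder has been flushed and fully drained.
    int receive_frame(int* frame) {
        if (!pending_.empty()) {
            *frame = pending_.front();
            pending_.pop_front();
            return kOk;
        }
        return draining_ ? kEof : kAgain;
    }

private:
    std::deque<int> pending_;
    bool draining_ = false;
};

// Send every packet and drain after each one, then flush and drain the rest.
std::vector<int> decode_all(const std::vector<int>& packets) {
    MockDecoder dec;
    std::vector<int> frames;
    auto drain = [&dec, &frames]() {
        int frame = 0;
        while (dec.receive_frame(&frame) == kOk) frames.push_back(frame);
    };
    for (int pkt : packets) {
        if (dec.send_packet(&pkt) != kOk) break;
        drain();
    }
    dec.send_packet(nullptr);  // enter draining mode
    drain();
    return frames;
}
```

The same two-level loop (outer: feed input, inner: drain output, final flush) appears in the player code of this section, where each "frame" is converted and rendered instead of stored.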

Playing audio/video with SDL on Windows; the flow is as follows:
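In the player code, a refresh thread sleeps 1000 / g_frame_rate ms between frames, with g_frame_rate derived from AVStream.avg_frame_rate. That field is a rational (for example 30000/1001 for NTSC video) and can be 0/0 when unknown, so integer division num / den either truncates 29.97 fps to 29 or divides by zero. A sketch of safer timing arithmetic, assuming a hypothetical Rational struct that mirrors AVRational's num/den fields (in real code av_q2d() performs the conversion to double) and an arbitrary 25 fps fallback:

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>

// Hypothetical mirror of AVRational's num/den fields.
struct Rational {
    int num;
    int den;
};

// Same idea as av_q2d(): the rational as a double.
double q2d(Rational r) {
    assert(r.den != 0);
    return static_cast<double>(r.num) / r.den;
}

// Delay between frames in milliseconds; falls back to an assumed
// 25 fps when the frame rate is unknown (0/0) or not positive.
int frame_interval_ms(Rational avg_frame_rate) {
    if (avg_frame_rate.num <= 0 || avg_frame_rate.den <= 0)
        return 1000 / 25;
    return static_cast<int>(std::lround(1000.0 / q2d(avg_frame_rate)));
}

// pts counts ticks of time_base, so seconds = pts * time_base.
double pts_to_seconds(std::int64_t pts, Rational time_base) {
    return static_cast<double>(pts) * q2d(time_base);
}
```

For 30000/1001 this yields 33 ms, whereas 1000 / (30000 / 1001) in integer arithmetic gives 1000 / 29 = 34 ms. pts_to_seconds() is the same formula as pt = av_q2d(time_base) * pts used for AVStream later in this article.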

Code:

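/*
author: 八小时码字员
file: vPlayer_sdl2.cpp
*/
// must come before the FFmpeg headers so that C++ gets UINT64_C and friends
#define __STDC_CONSTANT_MACROS
#include "vPlayer_sdl2.h"
#include "output_log.h"
#include <cstdio>
#include <cstring>
extern "C"
{
#include <libavcodec/avcodec.h>
#include <libavformat/avformat.h>
#include <libavutil/imgutils.h>
#include <libswscale/swscale.h>
#include "SDL.h"
}

// frame rate (fps) that paces the refresh thread; overwritten from the video stream
static int g_frame_rate = 25;
// flags shared by the refresh thread and the event loop; volatile only keeps the
// compiler from caching them (an SDL_atomic_t would be stricter)
static volatile int g_sfp_refresh_thread_exit = 0;
static volatile int g_sfp_refresh_thread_pause = 0;

// custom SDL events: show the next frame / leave the event loop
#define SFM_REFRESH_EVENT (SDL_USEREVENT+1)
#define SFM_BREAK_EVENT (SDL_USEREVENT+2)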

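typedef struct FFmpeg_V_Param_T
{
    AVFormatContext *pFormatCtx;   // demuxer (container) context
    AVCodecContext *pCodecCtx;     // video decoder context
    SwsContext *pSwsCtx;           // pixel-format conversion context
    int video_index;               // index of the video stream in pFormatCtx->streams
} FFmpeg_V_Param;

typedef struct SDL_Param_T
{
    SDL_Window *p_sdl_window;
    SDL_Renderer *p_sdl_renderer;
    SDL_Texture *p_sdl_texture;    // YUV texture the decoded frames are uploaded to
    SDL_Rect sdl_rect;             // destination rectangle in the window
    SDL_Thread *p_sdl_thread;      // refresh thread (sfp_refresh_thread)
} SDL_Param;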

/*
return value: zero (success), non-zero (failure)
*/
int init_ffmpeg(FFmpeg_V_Param* p_ffmpeg_param, char* filePath)
{
    const AVCodec *pCodec = NULL;
    // global initialization of the network libraries (only needed for network URLs)
    avformat_network_init();
    // open the input; avformat_open_input allocates pFormatCtx when it is NULL
    if (avformat_open_input(&(p_ffmpeg_param->pFormatCtx), filePath, NULL, NULL) != 0)
    {
        output_log(LOG_ERROR, "avformat_open_input error");
        return -1;
    }
    // read some packets to fill in the stream information
    if (avformat_find_stream_info(p_ffmpeg_param->pFormatCtx, NULL) < 0)
    {
        output_log(LOG_ERROR, "avformat_find_stream_info error");
        return -1;
    }
    // use the first video stream: find its decoder, copy its parameters, read its frame rate
    p_ffmpeg_param->video_index = -1;
    for (unsigned int i = 0; i < p_ffmpeg_param->pFormatCtx->nb_streams; i++)
    {
        AVStream *pStream = p_ffmpeg_param->pFormatCtx->streams[i];
        if (pStream->codecpar->codec_type != AVMEDIA_TYPE_VIDEO)
            continue;
        pCodec = avcodec_find_decoder(pStream->codecpar->codec_id);
        if (!pCodec)
        {
            output_log(LOG_ERROR, "no decoder for the video stream");
            return -1;
        }
        p_ffmpeg_param->pCodecCtx = avcodec_alloc_context3(pCodec);
        if (!p_ffmpeg_param->pCodecCtx ||
            avcodec_parameters_to_context(p_ffmpeg_param->pCodecCtx, pStream->codecpar) < 0)
        {
            output_log(LOG_ERROR, "could not set up video codecCtx");
            return -1;
        }
        // avg_frame_rate is a rational such as 30000/1001 and is 0/0 when unknown,
        // so round av_q2d() instead of dividing num by den as integers
        if (pStream->avg_frame_rate.den > 0 && av_q2d(pStream->avg_frame_rate) >= 1.0)
            g_frame_rate = (int)(av_q2d(pStream->avg_frame_rate) + 0.5);
        p_ffmpeg_param->video_index = (int)i;
        break;
    }
    if (p_ffmpeg_param->video_index < 0)
    {
        output_log(LOG_ERROR, "could not find a video stream");
        return -1;
    }
    // open the decoder
    if (avcodec_open2(p_ffmpeg_param->pCodecCtx, pCodec, NULL) < 0)
    {
        output_log(LOG_ERROR, "avcodec_open2 error");
        return -1;
    }
    // conversion context: decoder pixel format -> YUV420P at the same size
    p_ffmpeg_param->pSwsCtx = sws_getContext(p_ffmpeg_param->pCodecCtx->width,
        p_ffmpeg_param->pCodecCtx->height, p_ffmpeg_param->pCodecCtx->pix_fmt,
        p_ffmpeg_param->pCodecCtx->width, p_ffmpeg_param->pCodecCtx->height,
        AV_PIX_FMT_YUV420P, SWS_BICUBIC, NULL, NULL, NULL);
    if (!p_ffmpeg_param->pSwsCtx)
    {
        output_log(LOG_ERROR, "sws_getContext error");
        return -1;
    }
    av_dump_format(p_ffmpeg_param->pFormatCtx, p_ffmpeg_param->video_index, filePath, 0);
    return 0;
}

/*
return value: zero (success), non-zero (failure)
*/
int release_ffmpeg(FFmpeg_V_Param* p_ffmpeg_param)
{
    if (!p_ffmpeg_param)
        return -1;
    // release the pixel-format conversion context
    if (p_ffmpeg_param->pSwsCtx)
        sws_freeContext(p_ffmpeg_param->pSwsCtx);
    // close and free the decoder context (a separate avcodec_close() is not needed and is
    // deprecated in recent FFmpeg); safe when pCodecCtx is NULL
    avcodec_free_context(&(p_ffmpeg_param->pCodecCtx));
    // close the input and free the AVFormatContext; safe when pFormatCtx is NULL
    avformat_close_input(&(p_ffmpeg_param->pFormatCtx));
    // free FFmpeg_V_Param (allocated with new in vPlayer_sdl2)
    delete p_ffmpeg_param;
    return 0;
}

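// Refresh thread: pushes one SFM_REFRESH_EVENT per frame interval so that the event
// loop shows the next frame, then pushes SFM_BREAK_EVENT once it is told to exit
int sfp_refresh_thread(void* opaque)
{
    (void)opaque;   // unused
    g_sfp_refresh_thread_exit = 0;
    g_sfp_refresh_thread_pause = 0;
    while (!g_sfp_refresh_thread_exit)
    {
        if (!g_sfp_refresh_thread_pause)
        {
            SDL_Event sdl_event;
            SDL_zero(sdl_event);
            sdl_event.type = SFM_REFRESH_EVENT;
            SDL_PushEvent(&sdl_event);
        }
        // fall back to 40 ms (25 fps) if the frame rate is unknown
        SDL_Delay(g_frame_rate > 0 ? 1000 / g_frame_rate : 40);
    }
    g_sfp_refresh_thread_exit = 0;
    g_sfp_refresh_thread_pause = 0;
    SDL_Event sdl_event;
    SDL_zero(sdl_event);
    sdl_event.type = SFM_BREAK_EVENT;
    SDL_PushEvent(&sdl_event);
    return 0;
}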

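int init_sdl2(SDL_Param_T *p_sdl_param, int screen_w, int screen_h)
{
    if (SDL_Init(SDL_INIT_AUDIO | SDL_INIT_VIDEO | SDL_INIT_TIMER))
    {
        output_log(LOG_ERROR, "SDL_Init error: %s", SDL_GetError());
        return -1;
    }
    p_sdl_param->p_sdl_window = SDL_CreateWindow("vPlayer_sdl", SDL_WINDOWPOS_UNDEFINED,
        SDL_WINDOWPOS_UNDEFINED, screen_w, screen_h, SDL_WINDOW_OPENGL);
    if (!p_sdl_param->p_sdl_window)
    {
        output_log(LOG_ERROR, "SDL_CreateWindow error: %s", SDL_GetError());
        return -1;
    }
    p_sdl_param->p_sdl_renderer = SDL_CreateRenderer(p_sdl_param->p_sdl_window, -1, 0);
    if (!p_sdl_param->p_sdl_renderer)
    {
        output_log(LOG_ERROR, "SDL_CreateRenderer error: %s", SDL_GetError());
        return -1;
    }
    // SDL_PIXELFORMAT_IYUV is planar Y, U, V: the layout of AV_PIX_FMT_YUV420P
    p_sdl_param->p_sdl_texture = SDL_CreateTexture(p_sdl_param->p_sdl_renderer, SDL_PIXELFORMAT_IYUV,
        SDL_TEXTUREACCESS_STREAMING, screen_w, screen_h);
    if (!p_sdl_param->p_sdl_texture)
    {
        output_log(LOG_ERROR, "SDL_CreateTexture error: %s", SDL_GetError());
        return -1;
    }
    p_sdl_param->sdl_rect.x = 0;
    p_sdl_param->sdl_rect.y = 0;
    p_sdl_param->sdl_rect.w = screen_w;
    p_sdl_param->sdl_rect.h = screen_h;
    // start the thread that paces playback
    p_sdl_param->p_sdl_thread = SDL_CreateThread(sfp_refresh_thread, "sfp_refresh_thread", NULL);
    if (!p_sdl_param->p_sdl_thread)
    {
        output_log(LOG_ERROR, "SDL_CreateThread error: %s", SDL_GetError());
        return -1;
    }
    return 0;
}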

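int release_sdl2(SDL_Param_T *p_sdl_param)
{
    if (!p_sdl_param)
        return -1;
    // stop the refresh thread and wait for it before destroying the SDL objects
    if (p_sdl_param->p_sdl_thread)
    {
        g_sfp_refresh_thread_exit = 1;
        SDL_WaitThread(p_sdl_param->p_sdl_thread, NULL);
    }
    if (p_sdl_param->p_sdl_texture)
        SDL_DestroyTexture(p_sdl_param->p_sdl_texture);
    if (p_sdl_param->p_sdl_renderer)
        SDL_DestroyRenderer(p_sdl_param->p_sdl_renderer);
    if (p_sdl_param->p_sdl_window)
        SDL_DestroyWindow(p_sdl_param->p_sdl_window);
    SDL_Quit();
    // free SDL_Param_T (allocated with new in vPlayer_sdl2)
    delete p_sdl_param;
    return 0;
}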

int vPlayer_sdl2(char* filePath)
{
    // ffmpeg param
    FFmpeg_V_Param *p_ffmpeg_param = NULL;
    AVPacket *packet = NULL;
    AVFrame *pFrame = NULL, *pFrameYUV = NULL;
    int out_buffer_size = 0;
    unsigned char* out_buffer = NULL;
    // sdl param
    SDL_Param_T *p_sdl_param = NULL;
    SDL_Event sdl_event;
    int ret = 0;

    // init ffmpeg
    p_ffmpeg_param = new FFmpeg_V_Param();
    memset(p_ffmpeg_param, 0, sizeof(FFmpeg_V_Param));
    if (init_ffmpeg(p_ffmpeg_param, filePath))
    {
        ret = -1;
        goto end;
    }
    packet = av_packet_alloc();
    pFrame = av_frame_alloc();
    pFrameYUV = av_frame_alloc();
    // one YUV420P image wrapped by pFrameYUV; the pixel format given to
    // av_image_fill_arrays must be the one the buffer was sized for
    out_buffer_size = av_image_get_buffer_size(AV_PIX_FMT_YUV420P,
        p_ffmpeg_param->pCodecCtx->width, p_ffmpeg_param->pCodecCtx->height, 1);
    if (out_buffer_size > 0)
        out_buffer = (unsigned char*)av_malloc(out_buffer_size);
    if (!packet || !pFrame || !pFrameYUV || !out_buffer)
    {
        output_log(LOG_ERROR, "out of memory");
        ret = -1;
        goto end;
    }
    av_image_fill_arrays(pFrameYUV->data, pFrameYUV->linesize, out_buffer,
        AV_PIX_FMT_YUV420P,
        p_ffmpeg_param->pCodecCtx->width, p_ffmpeg_param->pCodecCtx->height, 1);

    // init sdl2
    p_sdl_param = new SDL_Param_T();
    memset(p_sdl_param, 0, sizeof(SDL_Param_T));
    if (init_sdl2(p_sdl_param, p_ffmpeg_param->pCodecCtx->width, p_ffmpeg_param->pCodecCtx->height))
    {
        ret = -1;
        goto end;
    }

    // event loop: demux, decode and show one frame per SFM_REFRESH_EVENT
    while (true)
    {
        int temp_ret = 0;
        SDL_WaitEvent(&sdl_event);
        if (sdl_event.type == SFM_REFRESH_EVENT)
        {
            while (true)
            {
                temp_ret = avcodec_receive_frame(p_ffmpeg_param->pCodecCtx, pFrame);
                if (temp_ret == 0)
                {
                    // convert to YUV420P and upload the Y, U and V planes with their own pitches
                    sws_scale(p_ffmpeg_param->pSwsCtx, (const unsigned char* const*)pFrame->data,
                        pFrame->linesize, 0, p_ffmpeg_param->pCodecCtx->height, pFrameYUV->data,
                        pFrameYUV->linesize);
                    SDL_UpdateYUVTexture(p_sdl_param->p_sdl_texture, NULL,
                        pFrameYUV->data[0], pFrameYUV->linesize[0],
                        pFrameYUV->data[1], pFrameYUV->linesize[1],
                        pFrameYUV->data[2], pFrameYUV->linesize[2]);
                    SDL_RenderClear(p_sdl_param->p_sdl_renderer);
                    SDL_RenderCopy(p_sdl_param->p_sdl_renderer, p_sdl_param->p_sdl_texture,
                        NULL, &(p_sdl_param->sdl_rect));
                    SDL_RenderPresent(p_sdl_param->p_sdl_renderer);
                    break;
                }
                if (temp_ret != AVERROR(EAGAIN))
                {
                    // AVERROR_EOF: every buffered frame has been shown; otherwise a decoding error
                    g_sfp_refresh_thread_exit = 1;
                    break;
                }
                // the decoder needs input: read packets until one belongs to the video stream
                int read_ret;
                while ((read_ret = av_read_frame(p_ffmpeg_param->pFormatCtx, packet)) >= 0 &&
                    packet->stream_index != p_ffmpeg_param->video_index)
                    av_packet_unref(packet);   // drop audio and other packets
                // at end of input, a NULL packet switches the decoder to draining mode
                temp_ret = avcodec_send_packet(p_ffmpeg_param->pCodecCtx, read_ret >= 0 ? packet : NULL);
                av_packet_unref(packet);
                if (temp_ret < 0)
                {
                    g_sfp_refresh_thread_exit = 1;
                    break;
                }
            }
        }
        else if (sdl_event.type == SFM_BREAK_EVENT)
        {
            break;
        }
        else if (sdl_event.type == SDL_KEYDOWN)
        {
            // space: pause/resume, q: quit
            if (sdl_event.key.keysym.sym == SDLK_SPACE)
                g_sfp_refresh_thread_pause = !g_sfp_refresh_thread_pause;
            if (sdl_event.key.keysym.sym == SDLK_q)
                g_sfp_refresh_thread_exit = 1;
        }
        else if (sdl_event.type == SDL_QUIT)
        {
            g_sfp_refresh_thread_exit = 1;
        }
    }

end:
    release_ffmpeg(p_ffmpeg_param);   // also deletes p_ffmpeg_param
    av_packet_free(&packet);
    av_frame_free(&pFrame);
    av_frame_free(&pFrameYUV);
    av_free(out_buffer);
    if (p_sdl_param)
        release_sdl2(p_sdl_param);
    return ret;
}

/*

author: 八小时码字员
file: output_log.cpp
*/
#include <stdio.h>
#include <stdarg.h>

#define MAX_BUF_LEN 1024

// per-level switches: non-zero means messages of that level are printed
int g_log_debug_flag = 1;
int g_log_info_flag = 1;
int g_log_warnning_flag = 1;
int g_log_error_flag = 1;

enum LOG_LEVEL
{
    LOG_DEBUG,
    LOG_INFO,
    LOG_WARNING,
    LOG_ERROR
};

void set_log_flag(int log_debug_flag, int log_info_flag, int log_warnning_flag,
    int log_error_flag)
{
    g_log_debug_flag = log_debug_flag;
    g_log_info_flag = log_info_flag;
    g_log_warnning_flag = log_warnning_flag;
    g_log_error_flag = log_error_flag;
}

// printf-style logging; messages longer than MAX_BUF_LEN - 1 characters are truncated
void output_log(LOG_LEVEL log_level, const char* fmt, ...)
{
    char buf[MAX_BUF_LEN] = { 0 };
    va_list args;
    va_start(args, fmt);
    vsnprintf(buf, sizeof(buf), fmt, args);
    va_end(args);
    switch (log_level)
    {
    case LOG_DEBUG:
        if (g_log_debug_flag)
            printf("[Log-Debug]:%s\n", buf);
        break;
    case LOG_INFO:
        if (g_log_info_flag)
            printf("[Log-Info]:%s\n", buf);
        break;
    case LOG_WARNING:
        if (g_log_warnning_flag)
            printf("[Log-Warning]:%s\n", buf);
        break;
    case LOG_ERROR:
        if (g_log_error_flag)
            printf("[Log-Error]:%s\n", buf);
        break;
    default:
        break;
    }
}

FFmpeg encoding

(Video) Encoding YUV with the FFmpeg libraries:
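Before raw YUV can be handed to a video encoder, each frame read from the file has to be split into its Y, U and V planes. For AV_PIX_FMT_YUV420P without row padding, the luma plane is width × height bytes and each chroma plane is subsampled by 2 in both directions (rounded up for odd sizes), which matches what av_image_get_buffer_size(AV_PIX_FMT_YUV420P, w, h, 1) reports. A minimal sketch with a hypothetical Yuv420Layout helper (not an FFmpeg type):

```cpp
#include <cassert>
#include <cstddef>

// Plane sizes and offsets of one tightly packed YUV420P frame.
struct Yuv420Layout {
    std::size_t y_size;
    std::size_t chroma_size;  // size of the U plane and of the V plane (each)
    std::size_t u_offset;
    std::size_t v_offset;
    std::size_t frame_size;
};

Yuv420Layout yuv420p_layout(int width, int height) {
    assert(width > 0 && height > 0);
    const std::size_t cw = (static_cast<std::size_t>(width) + 1) / 2;   // chroma width
    const std::size_t ch = (static_cast<std::size_t>(height) + 1) / 2;  // chroma height
    Yuv420Layout l{};
    l.y_size = static_cast<std::size_t>(width) * height;
    l.chroma_size = cw * ch;
    l.u_offset = l.y_size;
    l.v_offset = l.y_size + l.chroma_size;
    l.frame_size = l.y_size + 2 * l.chroma_size;
    return l;
}
```

Frame n of a raw .yuv file then starts at byte n * frame_size, and its three planes can be copied into an AVFrame allocated with av_frame_get_buffer() before it is sent to the encoder.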

Encoding audio (PCM) with FFmpeg:
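Audio encoders consume a fixed number of samples per frame (AVCodecContext.frame_size, for example 1024 for AAC or 1152 for MP2/MP3), so raw PCM has to be cut into chunks of nb_samples × channels × bytes-per-sample bytes, and with a time base of 1/sample_rate the pts advances by nb_samples per frame. A minimal sketch, assuming interleaved PCM; the helper names are hypothetical:

```cpp
#include <cassert>
#include <cstdint>

// Bytes in one encoder frame of interleaved PCM
// (bytes_per_sample is 2 for 16-bit samples).
std::int64_t pcm_frame_bytes(int nb_samples, int channels, int bytes_per_sample) {
    assert(nb_samples > 0 && channels > 0 && bytes_per_sample > 0);
    return static_cast<std::int64_t>(nb_samples) * channels * bytes_per_sample;
}

// With time_base = 1/sample_rate, frame k starts at pts = k * nb_samples.
std::int64_t audio_frame_pts(std::int64_t frame_index, int nb_samples) {
    return frame_index * nb_samples;
}

// Whole encoder frames contained in a PCM buffer; the remainder has to be
// kept until more samples arrive (or padded at the end of the stream).
std::int64_t whole_frames(std::int64_t pcm_bytes, int nb_samples, int channels,
                          int bytes_per_sample) {
    return pcm_bytes / pcm_frame_bytes(nb_samples, channels, bytes_per_sample);
}
```

For planar sample formats such as AV_SAMPLE_FMT_FLTP, the same number of bytes is split across one plane per channel instead of being interleaved.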

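FFmpeg transcoding

Transcoding is easy to understand: it chains decoding and encoding, in the order demux -> decode -> encode -> mux:

◆ Demux: strip the container format from the media file to obtain the video stream (e.g. H.264) and the audio stream (e.g. AAC)

◆ Decode: decode the video stream into raw images (YUV) and the audio stream into raw audio samples (PCM)

◆ Encode: encode the raw images (YUV) into the target video codec (e.g. MPEG-2) and the raw audio (PCM) into the target audio codec (e.g. MP3)

◆ Mux: package the encoded video and audio streams into the output file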
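The four stages form a chain in which each stage consumes the previous stage's output. The toy model below only illustrates that ordering: "packets" and "frames" are hypothetical tagged strings so it runs without FFmpeg, and every packet maps to exactly one frame, which real codecs do not guarantee. In an actual transcoder the stages correspond to av_read_frame(), avcodec_send_packet()/avcodec_receive_frame(), avcodec_send_frame()/avcodec_receive_packet() and av_interleaved_write_frame().

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Toy stand-ins (hypothetical): a tagged string such as "h264:0" or "yuv:0".
using Packet = std::string;
using Frame = std::string;

// Demux: pull the video packets out of a "container" holding n of them.
std::vector<Packet> demux(int n_packets) {
    std::vector<Packet> packets;
    for (int i = 0; i < n_packets; ++i)
        packets.push_back("h264:" + std::to_string(i));
    return packets;
}

// Decode: compressed packet -> raw frame (keeps the sequence number).
Frame decode(const Packet& p) { return "yuv:" + p.substr(p.find(':') + 1); }

// Encode: raw frame -> packet of the target codec.
Packet encode(const Frame& f) { return "mpeg2:" + f.substr(f.find(':') + 1); }

// Mux: write the encoded packets into an output "container".
std::string mux(const std::vector<Packet>& packets) {
    std::string out = "[";
    for (std::size_t i = 0; i < packets.size(); ++i)
        out += (i ? "," : "") + packets[i];
    return out + "]";
}

// demux -> decode -> encode -> mux, one packet at a time.
std::string transcode(int n_packets) {
    std::vector<Packet> encoded;
    for (const Packet& p : demux(n_packets))
        encoded.push_back(encode(decode(p)));
    return mux(encoded);
}
```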

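FFmpeg structures

FFmpeg's structures fall into three layers: the protocol layer (AVIOContext), the container layer (AVInputFormat) and the codec layer (AVStream). In detail:

There are eight key structures: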

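AVFormatContext

Describes the composition and basic information of a media file or stream; it is used throughout the whole FFmpeg workflow.

AVInputFormat *iformat, AVOutputFormat *oformat: format of the input or of the output (only one of the two is set)

AVIOContext *pb: manages the input/output data

unsigned int nb_streams: number of audio/video streams

AVStream **streams: the audio/video streams

char *url: file name (URL)

int64_t duration: duration, in AV_TIME_BASE units (microseconds)

int64_t bit_rate: total bit rate (bit/s)

AVDictionary *metadata: metadata (view it with: ffprobe filename)

AVInputFormat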

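Container format of a file

const char *name: short name of the format

const char *long_name: long, descriptive name of the format

const char *extensions: file extensions

AVIOContext -> URLContext -> URLProtocol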

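AVIOContext:

Top-level object for file (protocol) I/O

unsigned char *buffer: start of the buffer

int buffer_size: buffer size (default 32768)

unsigned char *buf_ptr: current read position in the buffer

unsigned char *buf_end: end of the data in the buffer

void *opaque: the URLContext structure

(*read_packet)(...): function pointer that reads audio/video data

(*write_packet)(...): function pointer that writes audio/video data

(*read_pause)(...): function pointer that pauses or resumes playback for network streaming protocols

URLContext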

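Each protocol has a protocol operations object and an associated protocol-private object

char *filename: the URL being accessed (the protocol's own name is prot->name)

const struct URLProtocol *prot: protocol operations object (ff_file_protocol, ff_librtmp_protocol...)

void *priv_data: protocol-private object (FileContext, LibRTMPContext)

URLProtocol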

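Protocol operations object: the protocol name plus the callbacks that implement it (url_open, url_read, url_write, url_seek, url_close...)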
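The AVIOContext -> URLContext -> URLProtocol chain amounts to buffered I/O on top of a table of function pointers: every protocol fills in the same callbacks, and the generic layer calls through the table without knowing which protocol is behind it. The sketch below imitates that design with a hypothetical in-memory protocol and a tiny buffered reader whose buffer/buf_ptr/buf_end/opaque fields play the roles of the AVIOContext members listed above; none of these types are FFmpeg's own.

```cpp
#include <algorithm>
#include <cassert>
#include <cstring>
#include <string>

// Hypothetical protocol-private state (plays the role of FileContext for file:).
struct MemContext {
    const unsigned char* data;
    int size;
    int pos;
};

// Hypothetical operations table in the spirit of URLProtocol.
struct Protocol {
    const char* name;
    int (*read)(void* priv, unsigned char* buf, int size);
};

static int mem_read(void* priv, unsigned char* buf, int size) {
    MemContext* m = static_cast<MemContext*>(priv);
    int n = std::min(size, m->size - m->pos);
    std::memcpy(buf, m->data + m->pos, n);
    m->pos += n;
    return n;  // 0 signals end of data
}

static const Protocol mem_protocol = {"mem", mem_read};

// Hypothetical buffered reader in the spirit of AVIOContext: serves bytes from
// [buf_ptr, buf_end) and refills the buffer through prot->read when it is empty.
struct BufferedReader {
    unsigned char buffer[4];  // deliberately tiny so that refills are visible
    unsigned char* buf_ptr;
    unsigned char* buf_end;
    const Protocol* prot;
    void* opaque;             // protocol-private context passed to the callback
    int refills;

    BufferedReader(const Protocol* p, void* ctx)
        : buf_ptr(buffer), buf_end(buffer), prot(p), opaque(ctx), refills(0) {}

    // Next byte (0..255), or -1 at end of stream.
    int read_byte() {
        if (buf_ptr == buf_end) {
            int n = prot->read(opaque, buffer, static_cast<int>(sizeof(buffer)));
            if (n <= 0) return -1;
            buf_ptr = buffer;
            buf_end = buffer + n;
            ++refills;
        }
        return *buf_ptr++;
    }
};

// Reads everything through the buffered layer.
std::string read_all(BufferedReader& r) {
    std::string out;
    for (int c = r.read_byte(); c >= 0; c = r.read_byte())
        out += static_cast<char>(c);
    return out;
}
```

Supporting another transport (a file, a socket) would only mean writing another read callback and table; BufferedReader stays unchanged, which is the point of the layering.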

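AVStream

Structure that stores one audio or video stream

int index: index of the stream

AVRational time_base: time unit in which pts and dts are expressed (display time in seconds: pt = av_q2d(video_stream->time_base) * frame->pts)

int64_t duration: length of the stream, in time_base units

AVRational avg_frame_rate: average frame rate (for video, frame_rate = avg_frame_rate.num / avg_frame_rate.den; compute it as av_q2d(avg_frame_rate), because integer division truncates 30000/1001 to 29)

AVCodecParameters *codecpar: codec parameters

AVCodecParameters and AVCodecContext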

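◆ In newer FFmpeg, AVStream.codecpar (struct AVCodecParameters) replaces AVStream.codec (struct AVCodecContext). AVCodecParameters was split out of AVCodecContext and holds only the stream parameters, without any function pointers. codecpar was added in FFmpeg 3.1, and AVStream.codec was removed in FFmpeg 5.0.

◆ AVCodecContext is still indispensable for encoding and decoding: avcodec_send_packet and avcodec_receive_frame operate on an AVCodecContext.

◆ How to obtain AVCodecContext and AVCodec: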

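//=============== new version code ===============
// av_register_all() is no longer needed (deprecated in FFmpeg 4.0, removed in 5.0);
// return-value checks are omitted for brevity
char filePath[] = "test.mp4";
AVFormatContext *pFormatCtx = NULL;   // allocated by avformat_open_input
AVCodecContext *pCodecCtx = NULL;
const AVCodec *pCodec = NULL;         // avcodec_find_decoder returns const AVCodec* since FFmpeg 5.0
avformat_network_init();
avformat_open_input(&pFormatCtx, filePath, NULL, NULL);
avformat_find_stream_info(pFormatCtx, NULL);
for (unsigned int i = 0; i < pFormatCtx->nb_streams; i++)
{
    AVStream *pStream = pFormatCtx->streams[i];
    if (pStream->codecpar->codec_type != AVMEDIA_TYPE_VIDEO)
        continue;                      // only the first video stream is used here
    // the parameters live in AVStream.codecpar; the decoder context is created from them
    pCodec = avcodec_find_decoder(pStream->codecpar->codec_id);
    pCodecCtx = avcodec_alloc_context3(pCodec);
    avcodec_parameters_to_context(pCodecCtx, pStream->codecpar);
    break;
}

//============ old version code ============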

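// before FFmpeg 4.0 every format and codec had to be registered first; AVStream.codec
// was deprecated in 3.1 and removed in 5.0, so this only builds against old releases
char filePath[] = "test.mp4";
AVFormatContext *pFormatCtx = NULL;
AVCodecContext *pCodecCtx = NULL;
AVCodec *pCodec = NULL;
av_register_all();
avformat_network_init();
avformat_open_input(&pFormatCtx, filePath, NULL, NULL);
avformat_find_stream_info(pFormatCtx, NULL);
for (unsigned int i = 0; i < pFormatCtx->nb_streams; i++)
{
    AVStream *pStream = pFormatCtx->streams[i];
    if (pStream->codec->codec_type != AVMEDIA_TYPE_VIDEO)
        continue;
    // the decoder context is embedded in the stream itself
    pCodecCtx = pStream->codec;
    pCodec = avcodec_find_decoder(pCodecCtx->codec_id);
    break;
}

◆ Key parameters (see the source of avcodec_parameters_to_context, which copies them from AVCodecParameters into AVCodecContext):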

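enum AVMediaType codec_type: type of the codec (video, audio...)

enum AVCodecID codec_id: identifies the specific codec

AVCodecContext only: const struct AVCodec *codec: the codec in use (H.264, MPEG-2...)

int64_t bit_rate: average bit rate

uint8_t *extradata; int extradata_size: extra codec-specific data (for an H.264 decoder, for example, the SPS and PPS)

AVCodecContext only: enum AVPixelFormat pix_fmt: pixel format (video)

int width, height: width and height (video)

AVCodecContext only: enum AVSampleFormat sample_fmt: sample format (audio)

int sample_rate: sample rate (audio)

int channels: number of channels (audio)

uint64_t channel_layout: channel layout (audio); since FFmpeg 5.1, channels and channel_layout are replaced by AVChannelLayout ch_layout

AVCodecParameters only: int format: pixel format (video) / sample format (audio)

AVCodec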

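Codec structure

◆ Each codec corresponds to one AVCodec structure, e.g. AVCodec ff_h264_decoder, AVCodec ff_jpeg2000_decoder

◆ Key members:

const char *name: short name of the codec (e.g. "h264")

const char *long_name: full name of the codec (e.g. "H.264 / AVC / MPEG-4 AVC / MPEG-4 part 10")

enum AVMediaType type: media type: video, audio or subtitle

enum AVCodecID id: identifies the specific codec

const AVRational *supported_framerates: supported frame rates (video only)

const enum AVPixelFormat *pix_fmts: supported pixel formats (video only)

const int *supported_samplerates: supported sample rates (audio only)

const enum AVSampleFormat *sample_fmts: supported sample formats (audio only)

const uint64_t *channel_layouts: supported channel layouts (audio only; replaced by const AVChannelLayout *ch_layouts since FFmpeg 5.1)

AVPacket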

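Structure that stores compressed data (before decoding)

◆ Key members

AVBufferRef *buf: reference to the buffer that holds the data pointed to by data

uint8_t *data: the compressed (encoded) data

int size: size of data in bytes

int64_t pts: presentation timestamp

int64_t dts: decoding timestamp

int stream_index: index of the audio/video stream this AVPacket belongs to

◆ Memory management of AVPacket: an AVPacket does not contain the compressed data itself; it refers to a data buffer through the data pointer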

◆ Several AVPackets can share the same data buffer (AVBufferRef, AVBuffer)

◆ An AVPacket can also own a data buffer of its own
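The sharing described above is plain reference counting: av_packet_ref() points a second AVPacket at the same AVBuffer and increments its refcount, av_packet_unref() decrements it, and the data is freed when the count reaches zero. The sketch models that with hypothetical SharedBuffer/BufferRef types; FFmpeg's real AVBuffer also uses an atomic counter but additionally carries a custom free callback and flags, so this is an illustration of the idea, not FFmpeg code.

```cpp
#include <atomic>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstdlib>
#include <cstring>

// Hypothetical analogue of AVBuffer: the payload plus a shared refcount.
struct SharedBuffer {
    std::uint8_t* data;
    std::size_t size;
    std::atomic<unsigned> refcount{1};
};

// Hypothetical analogue of AVBufferRef (what AVPacket.buf points to).
struct BufferRef {
    SharedBuffer* buffer = nullptr;
    std::uint8_t* data = nullptr;
    std::size_t size = 0;
};

// New zero-filled buffer with refcount 1 (compare av_buffer_alloc()).
BufferRef buffer_alloc(std::size_t size) {
    SharedBuffer* b = new SharedBuffer;
    b->data = static_cast<std::uint8_t*>(std::calloc(size, 1));
    b->size = size;
    BufferRef ref;
    ref.buffer = b;
    ref.data = b->data;
    ref.size = size;
    return ref;
}

// Second reference to the same data, no copy (compare av_packet_ref()
// on a reference-counted packet).
BufferRef buffer_ref(const BufferRef& src) {
    src.buffer->refcount.fetch_add(1);
    return src;
}

// Drop one reference; the last one frees the data (compare av_packet_unref()).
void buffer_unref(BufferRef& ref) {
    if (!ref.buffer) return;
    if (ref.buffer->refcount.fetch_sub(1) == 1) {
        std::free(ref.buffer->data);
        delete ref.buffer;
    }
    ref = BufferRef();
}
```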

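av_read_frame(pFormatCtx, packet); // read a packet

av_packet_ref(dst_pkt, packet); // dst_pkt and packet share the same data buffer, refcount + 1 (if packet is not reference-counted, the data is copied instead)

av_packet_unref(dst_pkt); // release dst_pkt's reference to the data buffer, refcount - 1

◆ Key members of AVBuffer

uint8_t *data: the buffered data (for a packet, the compressed data)

size_t size: data length

atomic_uint refcount: reference count; when it reaches 0 the data buffer is freed

AVFrame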

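Structure that stores decoded data

uint8_t *data[AV_NUM_DATA_POINTERS]: decoded raw data (YUV or RGB for video, PCM for audio)

int linesize[AV_NUM_DATA_POINTERS]: size in bytes of one "line" of data in each plane. Note: for video this is not necessarily the image width; it is often larger because rows are padded for alignment.

int width, height: width and height of the video frame (1920x1080, 1280x720...)

int nb_samples: number of audio samples (per channel) contained in this frame

int format: format of the decoded data: pixel format for video (YUV420P, YUV422P, RGB24...), sample format for audio

int key_frame: whether the frame is a key frame (deprecated since FFmpeg 6.1 in favour of the AV_FRAME_FLAG_KEY bit of flags)

enum AVPictureType pict_type: picture type (I, P, B...)

AVRational sample_aspect_ratio: sample (pixel) aspect ratio; the display aspect ratio (16:9, 4:3...) is sample_aspect_ratio * width / height

int64_t pts: presentation timestamp, in time_base units

int coded_picture_number: picture number in coding (bitstream) order

int display_picture_number: picture number in display order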
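Because linesize can exceed the visible width, pixel (x, y) of plane p lives at data[p][y * linesize[p] + x], and copying a decoded plane into a tightly packed buffer has to go row by row rather than with a single memcpy of width × height bytes. A sketch for one 8-bit plane; the function name pack_plane is hypothetical (libavutil provides av_image_copy_plane() for the same job):

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <vector>

// Copies one 8-bit plane whose rows are linesize bytes apart (width visible
// bytes plus padding) into a tightly packed width * height buffer.
std::vector<std::uint8_t> pack_plane(const std::uint8_t* src, int linesize,
                                     int width, int height) {
    assert(width > 0 && height > 0 && linesize >= width);
    std::vector<std::uint8_t> dst(static_cast<std::size_t>(width) * height);
    for (int y = 0; y < height; ++y) {
        // row y starts at src + y * linesize, not at src + y * width
        std::memcpy(dst.data() + static_cast<std::size_t>(y) * width,
                    src + static_cast<std::size_t>(y) * linesize,
                    static_cast<std::size_t>(width));
    }
    return dst;
}
```

For a YUV420P frame the same call is made three times: once for the Y plane with the full width and height, and once each for U and V with the halved (rounded-up) chroma dimensions and linesize[1], linesize[2].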