adaboost 预测马病的几率,最大auc取法, 测试集准确率82.09%

1. 以机器学习中的horseColicTraining 为训练样本, horseColicTest为测试样本

2. 实践中当迭代次数较大的时候会过拟合,故以最大训练次数40次, 在训练集错误率不上升的前提下,最大的auc的次数,作为最佳迭代次数

3. 每次训练都会计算auc并绘图, 迭代40次后, 依照最大auc的次数重新训练,得到3个弱分类器,此时 auc 0.526

4.进行测试, 测试集错误率17.91% (准确率82.09%)

最终roc曲线如图:

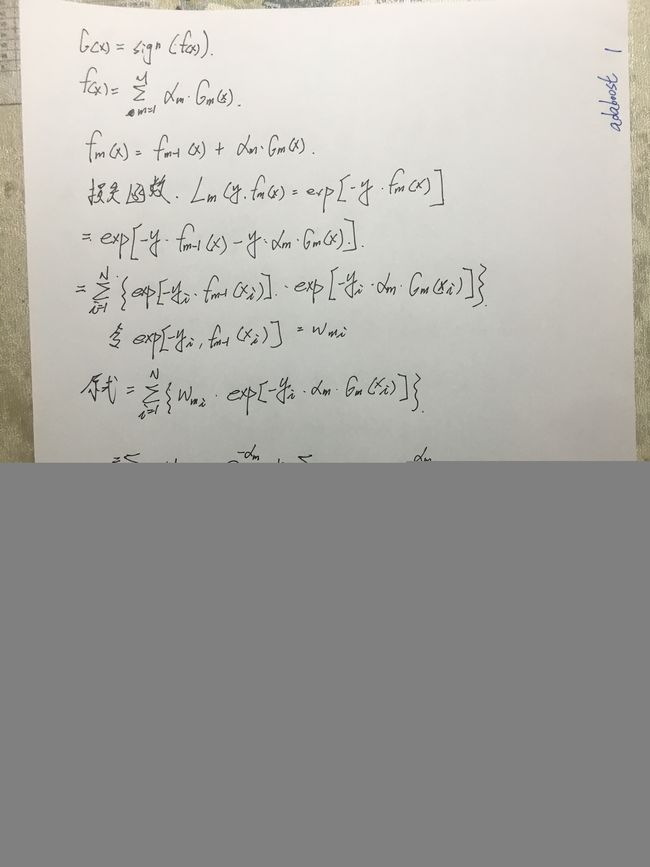

公式推导

代码如下

import numpy as np

import matplotlib.pyplot as plt

def loadData(fileName):

fp = open(fileName)

data = fp.readlines()

xSet = []

yArray = np.ndarray(len(data), dtype=int)

y_count = 0

count = 0

for oneline in data:

xData = []

oneLineList = []

oneline = oneline.split('\t')

for one in oneline:

one = one.split(' ')

for on in one:

if (on.strip().isspace()):

continue

else:

try:

t = float(on.strip())

oneLineList.append(t)

except:

raise TypeError

for i in range(len(oneLineList)):

t = oneLineList[i]

if (i == len(oneLineList) - 1):

if (t == 0):

yArray[y_count] = -1

elif (t == 1):

yArray[y_count] = 1

else:

raise TypeError

y_count += 1

else:

xData.append(t)

xSet.append(xData)

count += 1

# 因为adaboost是依照特征(列)循环的,所以要将行列互换

xMat = np.mat(xSet)

xArray = np.ndarray((xMat.shape[1], xMat.shape[0]))

for j in range(xMat.shape[1]):

array = np.ndarray(xMat.shape[0])

for i in range(xMat.shape[0]):

array[i] = xMat[i, j]

xArray[j] = array

return xArray, yArray

class adaBoost:

def __init__(self):

self.w = None

self.weakC = []

self.test_weakC_list = []

def fit(self, xArray, yArray, maxIt=40):

self.test_weakC_list = []

self.weakC = [] #alpha, threshould, sign, dim

sampleNum = xArray[0].shape[0]

dimNum = xArray.shape[0]

self.w = np.full((sampleNum, dimNum), fill_value=1 / sampleNum)

G = 0

maxAuc = 0.

maxAucIt = 0

minErr=1.0

for i in range(maxIt):

em, threshould, sign, dim = self._getBestEstimate(xArray, yArray)

if (em == 0):

# 某一个弱分类器完美分类

self.weakC.append((1, threshould, sign, dim))

print("em==0")

raise ValueError

alpha = 0.5 * np.log((1 - em) / em)

if (np.isnan(alpha)):

print("alpha is nan")

raise ValueError

Gmx = self._getGmx(xArray[dim].T, threshould, sign)

P = self.w[:, dim] * np.exp(-yArray * alpha * Gmx)

self.w[:, dim] = P

self.weakC.append((alpha, threshould, sign, dim))

# 校验是否停机

G += Gmx * alpha

err_rate = self.cal_errRate(G, yArray)

self.test_weakC_list.append(self.weakC.copy())

auc = self.getAuc(G, yArray, True)

print('第{}次训练,训练集 err rate: {:.2%},auc:{:.2%}'.format(i + 1, err_rate, auc))

if (auc> maxAuc and err_rate 1 or em < 0):

print('em:', em, "j:", j, "count:", count)

raise TypeError

elif (em > 0.5):

em = 1 - em

sign = -1

if (minEm == -1 or em < minEm):

minEm = em

threshould = startPoint

final_sign = sign

final_dim = j

startPoint += minInerval

count += 1

if (minEm == -1 or np.isnan(threshould) or final_dim == -1):

print('j={}出错'.format(j))

raise ValueError

return minEm, threshould, final_sign, final_dim

def _calculate_em(self, xArray, yArray, threshould, w, sign):

err_lt = -(yArray[xArray < threshould] - 1) * w[xArray < threshould] / 2 * sign

err_gt = (yArray[xArray >= threshould] + 1) * w[xArray >= threshould] / 2 * sign

if (len(err_lt) > 0 or len(err_gt) > 0):

t = np.sum(err_lt)

t1 = np.sum(err_gt)

t2 = np.sum(w)

return np.hstack([err_lt, err_gt]).sum() / w.sum()

else:

return 0

def _getGmx(self, xArray, threshould, sign):

result = np.zeros(xArray.shape[0], dtype=int)

result[np.where(xArray < threshould)] = sign

result[np.where(xArray >= threshould)] = -sign

return result

def test_best_trainRun(self, xArray, yArray,record_interval):

previousErr=1.

previousTrend=''

overCount=0

flag=False

for i in range(len(self.test_weakC_list)):

weakC=self.test_weakC_list[i]

result, G, err_rate=self.predit(xArray, yArray, weakC)

trend='down'

if(err_rate>previousErr):

trend = 'up'

if (trend == previousTrend and flag==False):

overCount=(i-1)*record_interval

flag=True

previousErr=err_rate

previousTrend=trend

print("{:.0f}次训练,测试集 err rate:{:.2%}, 趋势:{}".format((i+1)*record_interval,err_rate,trend))

return overCount

def predit(self, xArray, yArray,weakC=None):

if(weakC==None):

weakC=self.weakC

result_sum = 0

G = 0

for i in range(len(weakC)):

alpha = weakC[i][0]

threshould = weakC[i][1]

sign = weakC[i][2]

dim = weakC[i][3]

G += self._getGmx(xArray[dim], threshould, sign) * alpha

result = np.sign(G).astype(int)

err_rate = self.cal_errRate(G, yArray)

return result, G, err_rate

def getAuc(self, predictArray, labelArray, plotROC):

sampleNum = len(labelArray)

pNum = sum(np.array(labelArray) == 1)

nNum = sampleNum - pNum

xStep = 1 / nNum

yStep = 1 / pNum

sortedIndices = predictArray.argsort()

flg = plt.figure()

flg.clf()

ax = plt.subplot(111)

sortedLabels = []

for i in range(sampleNum):

for j in range(sampleNum):

if (sortedIndices[j] == i):

sortedLabels.append(labelArray[j])

point = (0., 0.)

ySum = 0.

for i in range(sampleNum):

if (sortedLabels[i] == 1):

xAdd = 0

yAdd = yStep

if (i == sampleNum - 1):

ySum += point[1] + yAdd

else:

xAdd = xStep

yAdd = 0

ySum += point[1]

if (plotROC):

ax.plot([point[0], point[0] + xAdd], [point[1], point[1] + yAdd], c='r')

point = (point[0] + xAdd, point[1] + yAdd)

aumAdd = point[1] * xStep

auc = ySum * xStep

if (plotROC):

ax.plot([0, 1], [0, 1], 'b--')

plt.xlabel('fpr')

plt.ylabel('tpr')

plt.title('roc')

plt.show()

# print('auc:',auc)

return auc

def run():

xArray_train, yArray_train = loadData('horseColicTraining.txt')

xArray_test, yArray_test = loadData('horseColicTest.txt')

ada = adaBoost()

maxAucIt, minErr, maxAuc=ada.fit(xArray_train, yArray_train)

print("\n",'{:.0f}次训练取得最佳的auc,err rate:{:.2%},auc:{:.2%}'.format(maxAucIt, minErr, maxAuc), "\n")

ada.fit(xArray_train, yArray_train, maxAucIt)

print("\n",'最终的分类器 alpha, threshould, sign, dim', ada.weakC, "\n")

result, G, err_rate = ada.predit(xArray_test, yArray_test)

print("\n",'测试,err rate: {:.2%}'.format(err_rate), "\n")

if __name__ == '__main__':

import sys

run()

sys.exit(0)

运行结果

第1次训练,训练集 err rate: 28.43%,auc:47.80%

第2次训练,训练集 err rate: 28.43%,auc:52.19%

第3次训练,训练集 err rate: 27.42%,auc:52.60%

第4次训练,训练集 err rate: 27.42%,auc:52.28%

第5次训练,训练集 err rate: 25.75%,auc:46.15%

第6次训练,训练集 err rate: 26.09%,auc:49.06%

第7次训练,训练集 err rate: 24.41%,auc:51.86%

第8次训练,训练集 err rate: 24.08%,auc:48.99%

第9次训练,训练集 err rate: 26.09%,auc:50.00%

第10次训练,训练集 err rate: 24.75%,auc:48.60%

第11次训练,训练集 err rate: 27.09%,auc:44.35%

第12次训练,训练集 err rate: 25.08%,auc:52.57%

第13次训练,训练集 err rate: 27.09%,auc:52.31%

第14次训练,训练集 err rate: 25.75%,auc:46.04%

第15次训练,训练集 err rate: 28.43%,auc:47.88%

第16次训练,训练集 err rate: 27.76%,auc:45.22%

第17次训练,训练集 err rate: 29.10%,auc:48.69%

第18次训练,训练集 err rate: 29.43%,auc:55.53%

第19次训练,训练集 err rate: 30.10%,auc:47.07%

第20次训练,训练集 err rate: 31.10%,auc:52.49%

第21次训练,训练集 err rate: 32.11%,auc:43.26%

第22次训练,训练集 err rate: 33.44%,auc:44.85%

第23次训练,训练集 err rate: 34.45%,auc:44.85%

第24次训练,训练集 err rate: 36.79%,auc:44.85%

第25次训练,训练集 err rate: 36.79%,auc:48.53%

第26次训练,训练集 err rate: 36.45%,auc:46.17%

第27次训练,训练集 err rate: 36.79%,auc:50.14%

第28次训练,训练集 err rate: 37.12%,auc:52.33%

第29次训练,训练集 err rate: 35.79%,auc:57.30%

第30次训练,训练集 err rate: 36.79%,auc:44.35%

第31次训练,训练集 err rate: 36.12%,auc:51.44%

第32次训练,训练集 err rate: 36.45%,auc:54.94%

第33次训练,训练集 err rate: 36.12%,auc:47.16%

第34次训练,训练集 err rate: 36.45%,auc:48.71%

第35次训练,训练集 err rate: 36.12%,auc:47.96%

第36次训练,训练集 err rate: 36.45%,auc:48.60%

第37次训练,训练集 err rate: 35.79%,auc:47.03%

第38次训练,训练集 err rate: 36.45%,auc:49.80%

第39次训练,训练集 err rate: 36.45%,auc:53.62%

第40次训练,训练集 err rate: 37.12%,auc:51.69%

3次训练取得最佳的auc,err rate:27.42%,auc:52.60%

第1次训练,训练集 err rate: 28.43%,auc:47.80%

第2次训练,训练集 err rate: 28.43%,auc:52.19%

第3次训练,训练集 err rate: 27.42%,auc:52.60%

最终的分类器 alpha, threshould, sign, dim [(0.4616623792657675, 4.0, 1, 9), (0.4616623792657675, 50.5, 1, 17), (0.4292355804926644, 4.0, 1, 7)]

测试,err rate: 17.91%