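Python Development and Operations: Connecting Celery to Redis

Contents

I. Theory

1. Celery

II. Experiments

1. Installing Redis on Windows 11

2. Configuring Celery in a Python 3.8 environment

3. Asynchronous execution with a multi-directory Celery layout

III. Problems

1. Celery command error

2. Error when running the Celery command

3. ValueError when starting Celery on Windows 11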

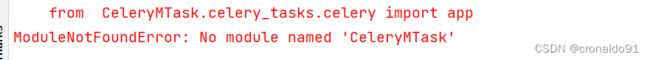

4.Pycharm 无法 import 同目录下的 .py 文件或自定义模块

一、理论

1.Celery

(1) 概念

Celery是一个基于python开发的分布式系统,它是简单、灵活且可靠的,处理大量消息,专注于实时处理的异步任务队列,同时也支持任务调度。

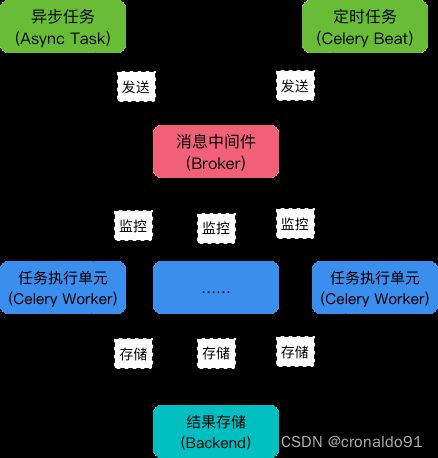

(2) 架构

Celery的架构由三部分组成,消息中间件(message broker),任务执行单元(worker)和任务执行结果存储(task result store)组成。
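To make the three roles concrete, here is a minimal single-process sketch that uses only the Python standard library, not Celery itself. The names `broker`, `result_store`, `worker`, and `submit` are illustrative assumptions: a `queue.Queue` plays the broker, a thread plays the worker, and a dict plays the result store.

```python
import queue
import threading
import uuid

broker = queue.Queue()   # stands in for the message broker
result_store = {}        # stands in for the task result store


def worker():
    """Take tasks off the broker, run them, and save each result."""
    while True:
        task_id, func, args = broker.get()
        if func is None:            # sentinel: stop the worker
            broker.task_done()
            break
        result_store[task_id] = func(*args)
        broker.task_done()


def submit(func, *args):
    """Queue a task and return its id right away (roughly what delay() does)."""
    task_id = str(uuid.uuid4())
    broker.put((task_id, func, args))
    return task_id


thread = threading.Thread(target=worker, daemon=True)
thread.start()

task_id = submit(pow, 2, 10)
broker.join()                       # wait until the worker has drained the queue
print(result_store[task_id])        # 1024

broker.put((None, None, ()))        # ask the worker to stop
thread.join()
```

In a real deployment each role is a separate process or service, which is what lets workers run on other hosts.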

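1) Message broker

Celery does not provide a message service itself, but it integrates easily with third-party message brokers such as RabbitMQ and Redis.

2) Worker

A worker is the task execution unit provided by Celery. Workers run concurrently on the nodes of a distributed system.

3) Task result store

The task result store holds the results of the tasks that workers execute. Celery can store results in several ways, including AMQP and Redis.

(3) Features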

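1) Simple

Celery is easy to use and maintain. It does not need configuration files, and setup is fairly simple.

2) Highly available

Workers and clients automatically retry when a connection is lost or fails. A failed task is re-executed only when retries are configured for it.

3) Fast

According to the Celery documentation, a single Celery process can handle millions of tasks a minute with sub-millisecond round-trip latency (using RabbitMQ, librabbitmq, and optimized settings).

4) Flexible

Almost every part of Celery can be extended or used on its own, and each part can be customized.

(4) Use cases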

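Celery is a powerful asynchronous framework built around a distributed task queue. It lets tasks run completely detached from the main program, and even be dispatched to other hosts. It is typically used for asynchronous tasks (async task) and scheduled tasks (crontab).

1) Asynchronous tasks

Hand time-consuming operations to Celery for asynchronous execution, for example sending SMS messages or email, pushing notifications, or processing audio and video.

2) Scheduled tasks

Run something on a schedule, for example daily statistics.

II. Experiments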

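1. Installing Redis on Windows 11

(1) Download the latest Redis release

Download the Redis-x64-xxx.zip archive to drive D, extract it, and rename the folder to Redis.

(2) List the directory

D:\Redis>dir

(3) Open a cmd window, use cd to switch to D:\Redis, and run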

redis-server.exe redis.windows.conf

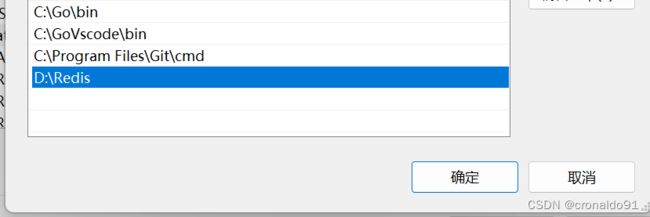

(4)把 redis 的路径加到系统的环境变量

(5)另外开启一个 cmd 窗口,原来的不要关闭,因为先前打开的是redis服务端

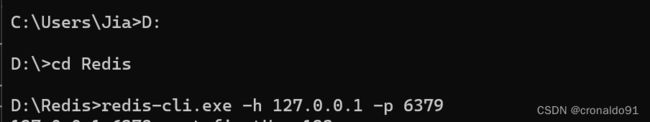

#切换到 redis 目录下运行

redis-cli.exe -h 127.0.0.1 -p 6379

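(6) Check that the connection works

# Set a key-value pair

set firstKey 123

# Read the value back

get firstKey

# Exit

exit

The Redis database is displayed.

(7) Press Ctrl+C to stop the server started earlier

(8) Register the Redis service

# In a cmd window, go to the Redis installation directory and register Redis as a Windows service with the following command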

redis-server.exe --service-install redis.windows.conf --loglevel verbose

(9) Start the Redis service

# Run the following command to start the Redis service

redis-server --service-start

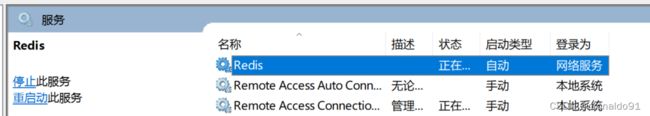

(10) Redis has now been added to the Windows services

(11) Open the Redis service and set its startup type to Automatic so that it starts at boot
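Once the service is running, the set/get check from step (6) can also be done from Python. The sketch below is written against any client object that offers `set` and `get`. `roundtrip` and `FakeClient` are hypothetical names, and the fake exists only so the example runs without a server.

```python
def roundtrip(client, key="firstKey", value="123"):
    """Write a value, read it back, and report whether it survived."""
    client.set(key, value)
    return client.get(key) == value


class FakeClient:
    """In-memory stand-in with the same set/get shape as a Redis client."""

    def __init__(self):
        self._data = {}

    def set(self, key, value):
        self._data[key] = value
        return True

    def get(self, key):
        return self._data.get(key)


print(roundtrip(FakeClient()))  # True
```

With the redis package installed and the server listening on 127.0.0.1:6379, calling `roundtrip(redis.Redis(host="127.0.0.1", port=6379, decode_responses=True))` should run the same check against the real server. `decode_responses=True` makes `get` return `str` instead of `bytes`, so the comparison works.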

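2. Configuring Celery in a Python 3.8 environment

(1) Install celery and redis in PyCharm

# Celery follows the classic producer-consumer pattern: producers create tasks and put them on a queue, and consumers take tasks off the queue and run them. It is mostly used for asynchronous or scheduled tasks.

# Option 1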

pip install celery

pip install redis

# Option 2 (install from a mirror)

pip install -i https://pypi.douban.com/simple celery

pip install -i https://pypi.douban.com/simple redis

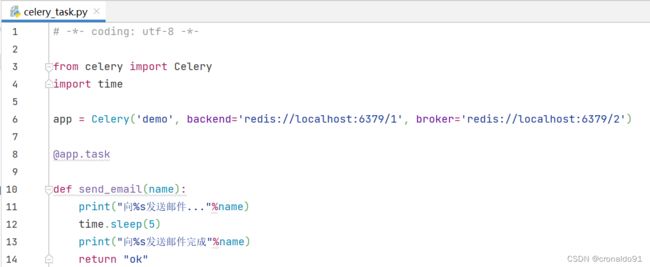

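(2) Create the asynchronous task file celery_task.py, which in effect registers the Celery app

# -*- coding: utf-8 -*-
from celery import Celery
import time

# Results are stored in Redis database 1; the task queue (broker) uses database 2
app = Celery('demo', backend='redis://localhost:6379/1', broker='redis://localhost:6379/2')


@app.task
def send_email(name):
    # Simulate a slow operation: print, wait 5 seconds, print again
    print("向%s发送邮件..." % name)      # "Sending email to <name>..."
    time.sleep(5)
    print("向%s发送邮件完成" % name)     # "Finished sending email to <name>"
    return "ok"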

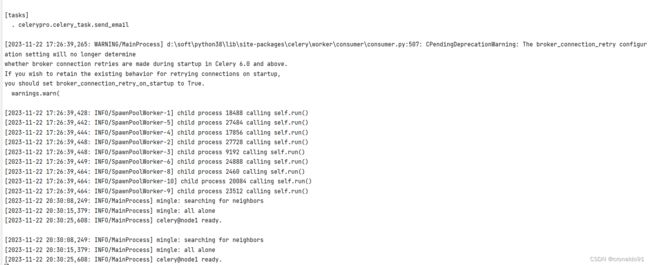
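The backend and broker URLs passed to `Celery(...)` differ only in the trailing number, which selects the Redis logical database. As a small illustration using only the standard library, the hypothetical helper `describe_redis_url` below splits such a URL into its parts. It is not how Celery parses URLs internally, just a way to read them.

```python
from urllib.parse import urlparse


def describe_redis_url(url):
    """Split a redis:// URL into host, port, and database number."""
    parts = urlparse(url)
    db = int(parts.path.lstrip("/") or 0)   # no path means database 0
    return {"host": parts.hostname, "port": parts.port or 6379, "db": db}


print(describe_redis_url("redis://localhost:6379/1"))   # result backend
print(describe_redis_url("redis://localhost:6379/2"))   # broker
```

Keeping results and queued messages in separate databases makes it easier to inspect or flush one without touching the other.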

(3) Start a worker in the project directory to consume tasks

PS D:\soft\pythonProject> celery --app=celerypro.celery_task worker -n node1 -l INFO

-------------- celery@node1 v5.3.5 (emerald-rush)

--- ***** -----

-- ******* ---- Windows-10-10.0.22621-SP0 2023-11-22 17:26:39

- *** --- * ---

- ** ---------- [config]

- ** ---------- .> app: test:0x1e6fa358550

- ** ---------- .> transport: redis://127.0.0.1:6379/2

- ** ---------- .> results: redis://127.0.0.1:6379/1

- *** --- * --- .> concurrency: 32 (prefork)

-- ******* ---- .> task events: OFF (enable -E to monitor tasks in this worker)

--- ***** -----

-------------- [queues]

.> celery exchange=celery(direct) key=celery

[tasks]

. celerypro.celery_task.send_email

[2023-11-22 17:26:39,265: WARNING/MainProcess] d:\soft\python38\lib\site-packages\celery\worker\consumer\consumer.py:507: CPendingDeprecationWarning: The broker_connection_retry configuration setting will no longer determine

[2023-11-22 20:30:08,249: INFO/MainProcess] mingle: searching for neighbors

[2023-11-22 20:30:15,379: INFO/MainProcess] mingle: all alone

[2023-11-22 20:30:25,608: INFO/MainProcess] celery@node1 ready.

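(4) Press Ctrl+C to exit

(5) Edit celery_task.py and add a second task

# -*- coding: utf-8 -*-
from celery import Celery
import time

app = Celery('demo', backend='redis://localhost:6379/1', broker='redis://localhost:6379/2')


@app.task
def send_email(name):
    print("向%s发送邮件..." % name)      # "Sending email to <name>..."
    time.sleep(5)
    print("向%s发送邮件完成" % name)     # "Finished sending email to <name>"
    return "ok"


@app.task
def send_msg(name):
    print("向%s发送短信..." % name)      # "Sending SMS to <name>..."
    time.sleep(5)
    # This text still says "邮件" (email), which is why the worker log shows it for this task
    print("向%s发送邮件完成" % name)
    return "ok"

(6) Start a worker in the project directory again to consume tasks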

PS D:\soft\pythonProject> celery --app=celerypro.celery_task worker -n node1 -l INFO

-------------- celery@node1 v5.3.5 (emerald-rush)

--- ***** -----

-- ******* ---- Windows-10-10.0.22621-SP0 2023-11-22 21:01:43

- *** --- * ---

- ** ---------- [config]

- ** ---------- .> app: demo:0x29cea446250

- ** ---------- .> transport: redis://localhost:6379/2

- ** ---------- .> results: redis://localhost:6379/1

- *** --- * --- .> concurrency: 32 (prefork)

-- ******* ---- .> task events: OFF (enable -E to monitor tasks in this worker)

--- ***** -----

-------------- [queues]

.> celery exchange=celery(direct) key=celery

[tasks]

. celerypro.celery_task.send_email

. celerypro.celery_task.send_msg

[2023-11-22 21:01:43,381: WARNING/MainProcess] d:\soft\python38\lib\site-packages\celery\worker\consumer\consumer.py:507: CPendingDeprecationWarning: The broker_connection_retry configuration setting will no longer determine

[2023-11-22 21:01:43,612: INFO/SpawnPoolWorker-23] child process 23988 calling self.run()

[2023-11-22 21:01:43,612: INFO/SpawnPoolWorker-17] child process 16184 calling self.run()

[2023-11-22 21:01:43,612: INFO/SpawnPoolWorker-21] child process 22444 calling self.run()

[2023-11-22 21:01:43,612: INFO/SpawnPoolWorker-27] child process 29480 calling self.run()

[2023-11-22 21:01:43,612: INFO/SpawnPoolWorker-24] child process 5844 calling self.run()

[2023-11-22 21:01:43,631: INFO/SpawnPoolWorker-25] child process 8896 calling self.run()

[2023-11-22 21:01:43,634: INFO/SpawnPoolWorker-29] child process 28068 calling self.run()

[2023-11-22 21:01:43,634: INFO/SpawnPoolWorker-28] child process 18952 calling self.run()

[2023-11-22 21:01:43,636: INFO/SpawnPoolWorker-26] child process 13680 calling self.run()

[2023-11-22 21:01:43,638: INFO/SpawnPoolWorker-31] child process 25472 calling self.run()

[2023-11-22 21:01:43,638: INFO/SpawnPoolWorker-30] child process 28688 calling self.run()

[2023-11-22 21:01:43,638: INFO/SpawnPoolWorker-32] child process 10072 calling self.run()

[2023-11-22 21:01:45,401: INFO/MainProcess] Connected to redis://localhost:6379/2

[2023-11-22 21:01:45,401: WARNING/MainProcess] d:\soft\python38\lib\site-packages\celery\worker\consumer\consumer.py:507: CPendingDeprecationWarning: The broker_connection_retry configuration setting will no longer determine

whether broker connection retries are made during startup in Celery 6.0 and above.

If you wish to retain the existing behavior for retrying connections on startup,

you should set broker_connection_retry_on_startup to True.

warnings.warn(

[2023-11-22 21:01:49,477: INFO/MainProcess] mingle: searching for neighbors

[2023-11-22 21:01:56,607: INFO/MainProcess] mingle: all alone

[2023-11-22 21:02:04,753: INFO/MainProcess] celery@node1 ready.

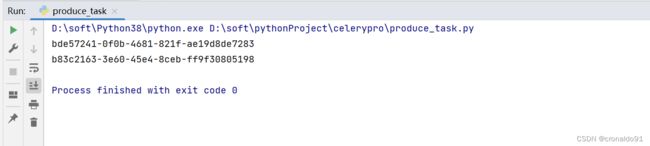
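The CPendingDeprecationWarning in the log above suggests setting `broker_connection_retry_on_startup`. A minimal configuration sketch, assuming the same app definition as in celery_task.py and that retrying the broker connection at startup is the behaviour you want to keep:

```python
from celery import Celery

app = Celery('demo', backend='redis://localhost:6379/1', broker='redis://localhost:6379/2')

# Assumption: we want the worker to keep retrying the broker connection at startup,
# which is the setting the warning message itself recommends.
app.conf.broker_connection_retry_on_startup = True
```

With this line in place the warning should no longer appear when the worker starts.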

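(6) Press Ctrl+C to exit, then create the task-submitting file produce_task.py

# -*- coding: utf-8 -*-
from celerypro.celery_task import send_email, send_msg

# delay() queues the task and returns immediately with an AsyncResult
result = send_email.delay("david")
print(result.id)

result2 = send_msg.delay("mao")
print(result2.id)

(7) Run produce_task.py

(8) Both id values are returned

(9) If an error occurs, install the eventlet package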

PS D:\soft\pythonProject> pip install eventlet

PS D:\soft\pythonProject> celery --app=celerypro.celery_task worker -n node1 -l INFO -P eventlet

-------------- celery@node1 v5.3.5 (emerald-rush)

--- ***** -----

-- ******* ---- Windows-10-10.0.22621-SP0 2023-11-22 21:29:34

- *** --- * ---

- ** ---------- [config]

- ** ---------- .> app: demo:0x141511962e0

- ** ---------- .> transport: redis://localhost:6379/2

- ** ---------- .> results: redis://localhost:6379/1

- *** --- * --- .> concurrency: 32 (eventlet)

-- ******* ---- .> task events: OFF (enable -E to monitor tasks in this worker)

--- ***** -----

-------------- [queues]

.> celery exchange=celery(direct) key=celery

[tasks]

. celerypro.celery_task.send_email

. celerypro.celery_task.send_msg

r_connection_retry configuration setting will no longer determine

whether broker connection retries are made during startup in Celery 6.0 and above.

If you wish to retain the existing behavior for retrying connections on startup,

you should set broker_connection_retry_on_startup to True.

warnings.warn(

[2023-11-22 21:29:48,022: INFO/MainProcess] pidbox: Connected to redis://localhost:6379/2.

[2023-11-22 21:29:52,117: INFO/MainProcess] celery@node1 ready.

(11) Run produce_task.py

(12) The ids are generated

(13) Check the task messages

[2023-11-22 21:30:35,194: INFO/MainProcess] Task celerypro.celery_task.send_email[c1a473d5-49ac-4468-9370-19226f377e00] received

[2023-11-22 21:30:35,195: WARNING/MainProcess] 向david发送邮件...

[2023-11-22 21:30:35,197: INFO/MainProcess] Task celerypro.celery_task.send_msg[de30d70b-9110-4dfb-bcfd-45a61403357f] received

[2023-11-22 21:30:35,198: WARNING/MainProcess] 向mao发送短信...

[2023-11-22 21:30:40,210: WARNING/MainProcess] 向david发送邮件完成

[2023-11-22 21:30:40,210: WARNING/MainProcess] 向mao发送邮件完成

[2023-11-22 21:30:42,270: INFO/MainProcess] Task celerypro.celery_task.send_msg[de30d70b-9110-4dfb-bcfd-45a61403357f] succeeded in 7.063000000001921s: 'ok'

[2023-11-22 21:30:42,270: INFO/MainProcess] Task celerypro.celery_task.send_email[c1a473d5-49ac-4468-9370-19226f377e00] succeeded in 7.063000000001921s: 'ok'

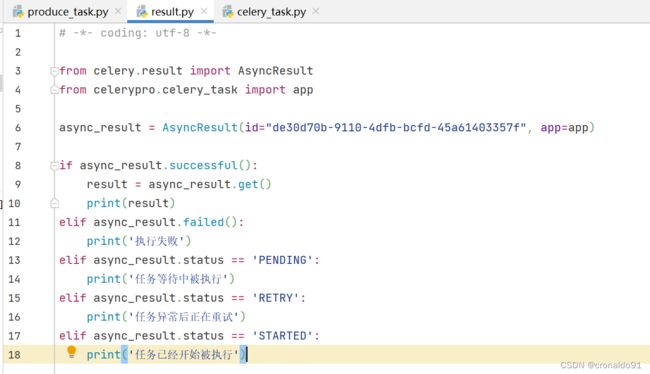

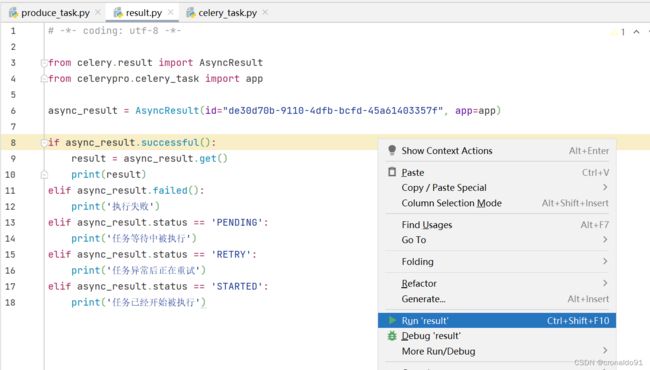
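In the log above both tasks are received at 21:30:35 and both print their completion message at 21:30:40, so the two 5-second sleeps overlapped instead of running one after the other. The sketch below reproduces that effect with a standard-library thread pool. It is an analogy only, since the worker here uses the eventlet pool, and `slow_task` is a hypothetical stand-in with a shortened sleep.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def slow_task(name, seconds=0.3):
    """Sleep to imitate a slow job, then return a marker value."""
    time.sleep(seconds)
    return "ok:" + name


start = time.perf_counter()
with ThreadPoolExecutor(max_workers=2) as pool:
    futures = [pool.submit(slow_task, n) for n in ("david", "mao")]
    results = [f.result() for f in futures]
elapsed = time.perf_counter() - start

print(results)
print("took less than two sleeps back to back:", elapsed < 0.6)
```

If the tasks ran sequentially the elapsed time would be about twice the sleep duration.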

(14)创建py文件:result.py,查看任务执行结果

取第2个id:de30d70b-9110-4dfb-bcfd-45a61403357f

# -*- coding: utf-8 -*-

from celery.result import AsyncResult

from celerypro.celery_task import app

async_result = AsyncResult(id="de30d70b-9110-4dfb-bcfd-45a61403357f", app=app)

if async_result.successful():

result = async_result.get()

print(result)

elif async_result.failed():

print('执行失败')

elif async_result.status == 'PENDING':

print('任务等待中被执行')

elif async_result.status == 'RETRY':

print('任务异常后正在重试')

elif async_result.status == 'STARTED':

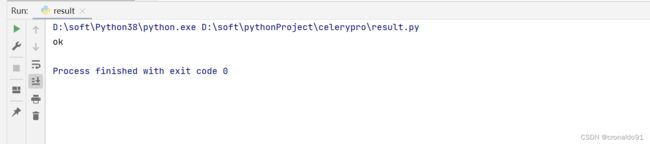

print('任务已经开始被执行')(15) 运行result.py文件

(16)输出ok

(17)Redis可视化界面查看最后2次的task

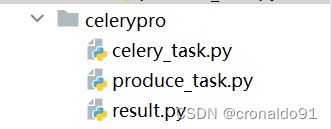
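The if/elif chain in result.py can also be written as a lookup table keyed by the state name. The sketch below is one possible arrangement, with `STATE_MESSAGES` and `describe_state` as hypothetical names. The keys are Celery's built-in state names, and the function takes a plain string so it can be tried without a running worker.

```python
STATE_MESSAGES = {
    "PENDING": "waiting to be executed",
    "STARTED": "started",
    "RETRY": "being retried after an error",
    "FAILURE": "failed",
    "SUCCESS": "finished successfully",
    "REVOKED": "revoked",
}


def describe_state(state):
    """Return a readable message for a Celery task state name."""
    return STATE_MESSAGES.get(state, "unknown state: " + state)


print(describe_state("SUCCESS"))
print(describe_state("PENDING"))
```

In result.py this would be called as `describe_state(async_result.status)`.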

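3. Asynchronous execution with a multi-directory Celery layout

(1) Original directory structure

(2) Improved directory structure

(3) Consumer: celery_main.py

# -*- coding: utf-8 -*-
# Consumer
from celery import Celery

app = Celery(
    'celery_demo',
    backend='redis://localhost:6379/1',
    broker='redis://localhost:6379/2',
    # Modules the worker imports at startup so that their tasks are registered
    include=[
        'celery_tasks.task01',
        'celery_tasks.task02',
    ],
)

# Time zone
app.conf.timezone = 'Asia/Shanghai'
# Whether to use UTC
app.conf.enable_utc = False

(2) Task one: task01.py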

# -*- coding: utf-8 -*-
# task01
import time

from celery_tasks.celery_main import app


@app.task
def send_email(res):
    print("Finished the task of sending email to %s" % res)
    time.sleep(5)
    return "Email done!"

(3) Task two: task02.py

# -*- coding: utf-8 -*-
# task02
import time

from celery_tasks.celery_main import app


@app.task
def send_msg(name):
    print("Finished the task of sending an SMS to %s" % name)
    time.sleep(5)
    return "SMS done!"

(4) Start a worker in the project directory to consume tasks

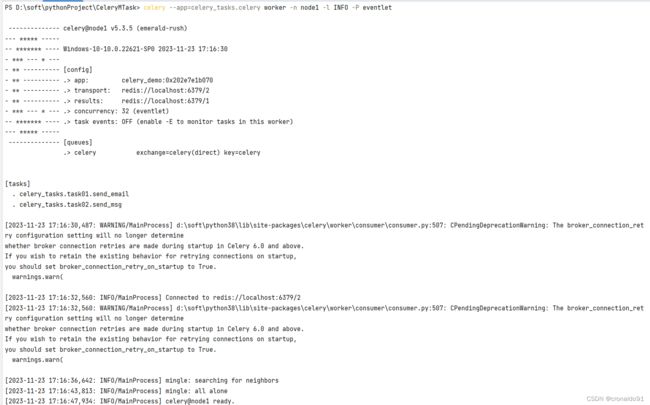

PS D:\soft\pythonProject\CeleryMTask> celery --app=celery_tasks.celery_main worker -n node1 -l INFO -P eventlet

(5) Producer: produce_task.py

# -*- coding: utf-8 -*-
# Producer
from CeleryMTask import celery_tasks
from celery_tasks.task01 import send_email
from celery_tasks.task02 import send_msg

result = send_email.delay("jack")
print(result.id)

result2 = send_msg.delay("alice")
print(result2.id)

(5) Run the producer produce_task.py

(6) Asynchronous result check: check_result

# -*- coding: utf-8 -*-
# Asynchronous result check
from celery.result import AsyncResult
from celery_tasks.celery_main import app

async_result = AsyncResult(id="bb906153-822a-4bd5-aacd-2f2172d3003a", app=app)

if async_result.successful():
    result = async_result.get()
    print(result)
elif async_result.failed():
    print('Task failed')
elif async_result.status == 'PENDING':
    print('Task is waiting to be executed')
elif async_result.status == 'RETRY':
    print('Task raised an error and is being retried')
elif async_result.status == 'STARTED':
    print('Task has started')

(7) Run the asynchronous result check check_result

(8) View the result

(9) View the Terminal output
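check_result reads the state once, so running it before the 5-second task finishes reports a pending state. One way around that is to poll until the task reaches a finished state. The sketch below is a generic helper under that assumption. `wait_for_state` is a hypothetical name, and it takes a callable so it can be demonstrated without a worker.

```python
import time


def wait_for_state(get_state, done=("SUCCESS", "FAILURE"), timeout=10.0, interval=0.5):
    """Poll get_state() until it returns a finished state or the timeout passes.

    Returns the last state seen.
    """
    deadline = time.monotonic() + timeout
    state = get_state()
    while state not in done and time.monotonic() < deadline:
        time.sleep(interval)
        state = get_state()
    return state


# Stand-in for a real task: reports PENDING twice, then SUCCESS.
fake_states = iter(["PENDING", "PENDING", "SUCCESS"])
print(wait_for_state(lambda: next(fake_states), interval=0.01))  # SUCCESS
```

Against a real task this could be called as `wait_for_state(lambda: async_result.status)`. Celery's own `async_result.get(timeout=...)` also blocks until the result is ready, so a hand-written loop is only needed when you want to report intermediate states.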

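III. Problems

1. Celery command error

(1) Error

(2) Cause

The command syntax differs between Celery versions. In Celery 5.x, global options such as -A/--app must come before the subcommand (celery --app=proj worker), whereas older releases also accepted them after it (celery worker --app=proj).

View the help output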

PS D:\soft\pythonProject> celery --help

Usage: celery [OPTIONS] COMMAND [ARGS]...

Celery command entrypoint.

Options:

-A, --app APPLICATION

-b, --broker TEXT

--result-backend TEXT

--loader TEXT

--config TEXT

--workdir PATH

-C, --no-color

-q, --quiet

--version

--skip-checks Skip Django core checks on startup. Setting the

SKIP_CHECKS environment variable to any non-empty

string will have the same effect.

--help Show this message and exit.

Commands:

amqp AMQP Administration Shell.

beat Start the beat periodic task scheduler.

call Call a task by name.

control Workers remote control.

events Event-stream utilities.

graph The ``celery graph`` command.

inspect Inspect the worker at runtime.

list Get info from broker.

logtool The ``celery logtool`` command.

migrate Migrate tasks from one broker to another.

multi Start multiple worker instances.

purge Erase all messages from all known task queues.

report Shows information useful to include in bug-reports.

result Print the return value for a given task id.

shell Start shell session with convenient access to celery symbols.

status Show list of workers that are online.

upgrade Perform upgrade between versions.

worker Start worker instance.

PS D:\soft\pythonProject> celery worker --help

Usage: celery worker [OPTIONS]

Start worker instance.

Examples

--------

$ celery --app=proj worker -l INFO

$ celery -A proj worker -l INFO -Q hipri,lopri

$ celery -A proj worker --concurrency=4

$ celery -A proj worker --concurrency=1000 -P eventlet

$ celery worker --autoscale=10,0

Worker Options:

-n, --hostname HOSTNAME Set custom hostname (e.g., 'w1@%%h').

Expands: %%h (hostname), %%n (name) and %%d,

(domain).

-D, --detach Start worker as a background process.

-S, --statedb PATH Path to the state database. The extension

'.db' may be appended to the filename.

-l, --loglevel [DEBUG|INFO|WARNING|ERROR|CRITICAL|FATAL]

Logging level.

-O, --optimization [default|fair]

Apply optimization profile.

--prefetch-multiplier

Set custom prefetch multiplier value for

this worker instance.

Pool Options:

-c, --concurrency

Number of child processes processing the

queue. The default is the number of CPUs

available on your system.

-P, --pool [prefork|eventlet|gevent|solo|processes|threads|custom]

Pool implementation.

-E, --task-events, --events Send task-related events that can be

captured by monitors like celery events,

celerymon, and others.

--time-limit FLOAT Enables a hard time limit (in seconds

int/float) for tasks.

--soft-time-limit FLOAT Enables a soft time limit (in seconds

int/float) for tasks.

--max-tasks-per-child INTEGER Maximum number of tasks a pool worker can

execute before it's terminated and replaced

by a new worker.

--max-memory-per-child INTEGER Maximum amount of resident memory, in KiB,

that may be consumed by a child process

before it will be replaced by a new one. If

a single task causes a child process to

exceed this limit, the task will be

completed and the child process will be

replaced afterwards. Default: no limit.

--scheduler TEXT

Daemonization Options:

-f, --logfile TEXT Log destination; defaults to stderr

--pidfile TEXT

--uid TEXT

--gid TEXT

--umask TEXT

--executable TEXT

Options:

--help Show this message and exit.

(3) Solution

Change the command

PS D:\soft\pythonProject> celery --app=celerypro.celery_task worker -n node1 -l INFO

The worker starts successfully.

2. Error when running the Celery command

(1) Error

AttributeError: 'NoneType' object has no attribute 'Redis'


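(2) Cause

The redis Python package is not installed in the interpreter that PyCharm uses, so Celery's Redis transport has no client library to load.

(3) Solution

Install the redis package (pip install redis)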
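Before starting the worker, it can help to confirm that the interpreter really sees the packages this tutorial relies on. A minimal sketch using only the standard library, with `is_installed` as a hypothetical helper name:

```python
import importlib.util


def is_installed(module_name):
    """Return True if the current interpreter can locate the module."""
    return importlib.util.find_spec(module_name) is not None


for name in ("celery", "redis", "eventlet"):
    print(name, "installed" if is_installed(name) else "MISSING")
```

Run it with the same interpreter that PyCharm uses for the project. A package installed into a different Python environment will still show as MISSING.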

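3. ValueError when starting Celery on Windows 11

(1) Error

On Windows, while developing Celery asynchronous tasks, the worker starts normally with celery --app=celerypro.celery_task worker -n node1 -l INFO;

but calling a task with delay() produces the following error:

Task handler raised error: ValueError('not enough values to unpack (expected 3, got 0)')

(2) Cause

The default prefork pool does not work reliably on Windows, and eventlet, which provides an alternative pool, is not installed.

(3) Solution

Install the eventlet package

pip install eventlet

Start the worker with the following command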

celery --app=celerypro.celery_task worker -n node1 -l INFO -P eventlet
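If the same project is started on both Windows and other systems, the pool option can be chosen by platform. The sketch below builds the command line as a list. `worker_command` is a hypothetical helper, and the choice of eventlet on Windows simply mirrors the fix above (the help output also lists other pools such as solo and threads).

```python
import sys


def worker_command(app_path, node="node1", platform=None):
    """Build the worker command line, adding -P eventlet on Windows only."""
    if platform is None:
        platform = sys.platform
    cmd = ["celery", "--app=" + app_path, "worker", "-n", node, "-l", "INFO"]
    if platform == "win32":
        cmd += ["-P", "eventlet"]
    return cmd


print(" ".join(worker_command("celerypro.celery_task", platform="win32")))
```

The returned list can be passed directly to `subprocess.run` to launch the worker.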

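4. PyCharm cannot import a .py file or custom module in the same directory

(1) Error

(2) Cause

By default PyCharm only searches the project root for .py files, so importing a .py file that is not in the project root fails.

(3) Solution

Add the folder that contains the .py file to the default search path:

Option 1

Right-click the folder that contains the .py file and choose: Mark Directory as -> Sources Root

Option 2

Turn the folder into a package: create an __init__.py file in it and add the following statement to __init__.py:

from [folder name].[py file name] import [module name]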
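Both options work because Python resolves imports by walking `sys.path`. The self-contained sketch below shows the effect with a throwaway package in a temporary directory. `demo_pkg` and `helper` are made-up names, and inserting into `sys.path` is only a rough equivalent of what marking a Sources Root achieves.

```python
import importlib
import sys
import tempfile
from pathlib import Path

with tempfile.TemporaryDirectory() as root:
    # Build <root>/demo_pkg/helper.py, a package outside the normal search path.
    pkg = Path(root) / "demo_pkg"
    pkg.mkdir()
    (pkg / "__init__.py").write_text("")
    (pkg / "helper.py").write_text("def greet():\n    return 'hello'\n")

    try:
        importlib.import_module("demo_pkg.helper")
        found_before = True
    except ModuleNotFoundError:
        found_before = False

    sys.path.insert(0, root)          # roughly what a Sources Root entry adds
    importlib.invalidate_caches()
    helper = importlib.import_module("demo_pkg.helper")
    greeting = helper.greet()
    sys.path.remove(root)

print(found_before, greeting)
```

The first import attempt fails because the folder is not on the search path, and the second succeeds once it is.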