Prometheus Service Discovery with kubernetes_sd_configs

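I. Service Discovery Overview

1. Why use service discovery

A Kubernetes cluster typically contains many Services, Pods, and other resources. These resources scale up and down with demand, and the IP addresses and names of the Pods are not stable either. If every monitoring job had to be created or edited by hand whenever a Kubernetes resource was created or updated, the work would quickly become tedious. Prometheus's service discovery feature solves this problem.

2. What is service discovery

Prometheus is a pull-based monitoring system, so in such an environment it can no longer rely on static_configs to define its targets statically. Its solution is to introduce an intermediary (a service registry) that knows how to reach every current monitoring target. Prometheus asks the intermediary which targets exist, obtains the list of targets to monitor, and then polls those targets for metrics. This model is called service discovery.

Prometheus supports many service discovery mechanisms: file, DNS, Consul, Kubernetes, OpenStack, EC2, and others. This article examines the Kubernetes mechanism in detail.

In Kubernetes, Prometheus integrates with the Kubernetes API and mainly supports five discovery modes (roles): node, service, pod, endpoints, and ingress. Each suits a different scenario. For example, node suits host-related monitoring, such as the state of Kubernetes components running on a node or of the containers running on it; service and ingress suit black-box monitoring, such as service availability and quality of service; endpoints and pod can both be used to collect metrics from Pod instances, such as applications deployed by users or administrators that expose Prometheus metrics.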
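As a rough sketch of the difference, the fragment below contrasts a hand-written target list with Kubernetes-based discovery. The job names and the address 10.244.1.10:9090 are made up for illustration; role accepts the five modes listed above.

```yaml
scrape_configs:
  # Static definition: every target address is written by hand and must be
  # edited whenever a Pod IP changes (the address below is hypothetical).
  - job_name: static-example
    static_configs:
      - targets: ["10.244.1.10:9090"]

  # Service discovery: Prometheus asks the Kubernetes API for the targets.
  # role can be node, service, pod, endpoints, or ingress.
  - job_name: discovered-example
    kubernetes_sd_configs:
      - role: pod
```

With discovery alone, every Pod in the cluster becomes a candidate target, which is why the relabeling rules discussed next are needed to narrow the selection.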

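II. Configuration File Analysis

1. How the configuration is written

The core of Prometheus service discovery is relabel_configs. First, source_labels selects the metadata labels (those beginning with __meta_) that describe the resources to match. Next, regex is matched against their values to find the relevant objects. Finally, an action processes the matched targets and their labels, for example by keeping, filtering out, or replacing them.

2. Example configuration

The Prometheus service itself is used as the example here; its label is app=prometheus.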

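global:
  # Default interval between scrapes
  scrape_interval: 30s
  # Default timeout of a scrape request
  scrape_timeout: 10s
  # A separate cycle, independent of scraping, on which rules (including alerting rules) are evaluated
  evaluation_interval: 30s
  # Labels attached for external systems; used to tell Prometheus instances apart
  external_labels:
    prometheus: monitoring/k8s
    prometheus_replica: prometheus-k8s-1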

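scrape_configs:
  # Job name. An endpoint that can be scraped is called an instance; a collection of instances with the same purpose is called a job.
  - job_name: "prometheus"
    # honor_labels controls how Prometheus handles conflicts between labels already present in the scraped data and labels that Prometheus would attach server-side (the "job" and "instance" labels, manually configured target labels, and labels generated by service discovery).
    # If honor_labels is "true", conflicts are resolved by keeping the label values from the scraped data and ignoring the conflicting server-side labels.
    # If honor_labels is "false", conflicts are resolved by renaming the conflicting labels in the scraped data to "exported_<original-label>" (for example "exported_instance", "exported_job").
    # Setting honor_labels to "true" is useful for use cases such as federation and scraping the Pushgateway, where all labels specified in the target should be preserved.
    honor_labels: true
    # honor_timestamps controls whether timestamps present in the scraped data are used; the default is true. If "true", the timestamps of the metrics exposed by the target are used. If "false", they are ignored.
    honor_timestamps: true
    # How often targets of this job are scraped
    scrape_interval: 30s
    # Timeout of a scrape request
    scrape_timeout: 10s
    # TLS settings for the scrape request (certificates and related options are needed when the scheme is https)
    # tls_config:
    #   [ <tls_config> ]
    # Sets the `Authorization` header on every scrape request with the configured bearer token. Mutually exclusive with `bearer_token_file`.
    # [ bearer_token: <secret> ]
    # Sets the `Authorization` header on every scrape request with the bearer token read from the configured file. Mutually exclusive with `bearer_token`.
    # [ bearer_token_file: /path/to/bearer/token/file ]
    # HTTP resource path from which metrics are fetched; the default is /metrics
    metrics_path: /metrics
    # Protocol scheme used for requests; the default is http
    scheme: http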

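    # Discover targets automatically through the Kubernetes API server
    kubernetes_sd_configs:
      # Role endpoints: targets are discovered from the Endpoints behind each Service (every Service has a corresponding Endpoints object). For targets that are kept, the labels defined on the Service and on the Pod are available as well.
      - role: endpoints
    # Relabeling rules
    relabel_configs:
      # Taking the prometheus service as the example, its Service label is app=prometheus, so source_labels uses __meta_kubernetes_service_label_app. Every label of a Service object is exposed in this form. Other commonly used metadata labels are listed below.
      # node
      #   __meta_kubernetes_node_name: name of the node object
      #   __meta_kubernetes_node_label_<labelname>: each label of the node object
      #   __meta_kubernetes_node_address_<address_type>: the first address of each node address type, if it exists
      # service
      #   __meta_kubernetes_namespace: namespace of the service object
      #   __meta_kubernetes_service_cluster_ip: cluster IP address of the service (does not apply to services of type ExternalName)
      #   __meta_kubernetes_service_external_name: DNS name of the service (applies to services of type ExternalName)
      #   __meta_kubernetes_service_label_<labelname>: each label of the service object
      #   __meta_kubernetes_service_name: name of the service object
      #   __meta_kubernetes_service_port_name: name of the service port for the target
      #   __meta_kubernetes_service_port_protocol: protocol of the service port for the target
      #   __meta_kubernetes_service_type: type of the service
      # pod
      #   __meta_kubernetes_namespace: namespace of the pod object
      #   __meta_kubernetes_pod_name: name of the pod object
      #   __meta_kubernetes_pod_ip: IP address of the pod object
      #   __meta_kubernetes_pod_label_<labelname>: each label of the pod object
      #   __meta_kubernetes_pod_container_name: name of the container the target address points to
      #   __meta_kubernetes_pod_container_port_name: name of the container port
      #   __meta_kubernetes_pod_ready: true or false, reflecting the pod's ready state
      #   __meta_kubernetes_pod_phase: Pending, Running, Succeeded, Failed, or Unknown, reflecting the lifecycle phase
      #   __meta_kubernetes_pod_node_name: name of the node the pod is scheduled onto
      #   __meta_kubernetes_pod_host_ip: current host IP of the pod object
      #   __meta_kubernetes_pod_uid: UID of the pod object
      #   __meta_kubernetes_pod_controller_kind: object kind of the pod controller
      #   __meta_kubernetes_pod_controller_name: name of the pod controller
      # endpoints
      #   __meta_kubernetes_namespace: namespace of the endpoints object
      #   __meta_kubernetes_endpoints_name: name of the endpoints object
      #   __meta_kubernetes_endpoint_hostname: hostname of the endpoint
      #   __meta_kubernetes_endpoint_node_name: name of the node hosting the endpoint
      #   __meta_kubernetes_endpoint_ready: true or false, reflecting the endpoint's ready state
      #   __meta_kubernetes_endpoint_port_name: name of the endpoint port
      #   __meta_kubernetes_endpoint_port_protocol: protocol of the endpoint port
      #   __meta_kubernetes_endpoint_address_target_kind: kind of the endpoint address target
      #   __meta_kubernetes_endpoint_address_target_name: name of the endpoint address target
      # ingress
      #   __meta_kubernetes_namespace: namespace of the ingress object
      #   __meta_kubernetes_ingress_name: name of the ingress object
      #   __meta_kubernetes_ingress_label_<labelname>: each label of the ingress object
      #   __meta_kubernetes_ingress_scheme: protocol scheme; https if TLS is configured, otherwise http
      #   __meta_kubernetes_ingress_path: path from the ingress spec; defaults to /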

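      - source_labels: [__meta_kubernetes_service_label_app]
        # Regular expression to match: selects resources whose Service label is app=prometheus
        regex: prometheus
        # Action to perform
        # keep: keep targets whose source_labels value matches regex and drop all others; used for selection
        # replace: the default when no action is configured; matches regex against the source_labels value and writes the result to target_label
        # labelmap: matches regex against label names and copies the value of each matching label to a new label named after the matched part
        # drop: drop targets whose source_labels value matches regex and keep all others; used for exclusion
        # labeldrop: matches regex against label names and removes the matching labels from the target, so they are neither collected nor stored
        # labelkeep: matches regex against label names and keeps only the matching labels; all others are removed
        action: keep
      # Add the namespace of the service object as the label "namespace"
      - source_labels: [__meta_kubernetes_namespace]
        action: replace
        target_label: namespace
      # Add the name of the service object as the label "name"
      - source_labels: [__meta_kubernetes_service_name]
        action: replace
        target_label: name
      # Add the name of the pod object as the label "pod"
      - source_labels: [__meta_kubernetes_pod_name]
        action: replace
        target_label: pod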
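To make the actions described in the comments more concrete, here is a small sketch with one rule per action. The label names env and team and the value test are assumptions chosen for illustration; they do not come from the deployment in this article.

```yaml
relabel_configs:
  # keep: only targets whose Service has the label app=prometheus survive.
  - source_labels: [__meta_kubernetes_service_label_app]
    regex: prometheus
    action: keep
  # drop: discard targets whose Pod carries the (hypothetical) label env=test.
  - source_labels: [__meta_kubernetes_pod_label_env]
    regex: test
    action: drop
  # replace: copy the namespace into a permanent label named "namespace".
  - source_labels: [__meta_kubernetes_namespace]
    target_label: namespace
    action: replace
  # labelmap: copy every Service label to a target label of the same name,
  # e.g. __meta_kubernetes_service_label_app becomes app.
  - regex: __meta_kubernetes_service_label_(.+)
    action: labelmap
  # labeldrop: remove the (hypothetical) label "team" from the target.
  - regex: team
    action: labeldrop
```

Rules run in order, so a target removed by keep or drop never reaches the later rules. Labels beginning with __ are discarded after relabeling, which is why values worth keeping are copied to ordinary label names.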

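III. Implementation Steps

This section deploys the resources for the Prometheus service to show how service discovery is configured in practice.

1. Create a ServiceAccount and bind RBAC permissions to it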

[root@tiaoban prometheus]# cat rbac.yaml

apiVersion: v1
kind: Namespace
metadata:
  name: monitoring
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: prometheus
  namespace: monitoring
---
# rbac.authorization.k8s.io/v1beta1 is no longer served as of Kubernetes 1.22; v1 also works on older clusters
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  # A ClusterRole is cluster-scoped, so it takes no namespace
  name: prometheus
rules:
  - apiGroups: [""]
    resources:
      - nodes
      - nodes/proxy
      - nodes/metrics
      - services
      - endpoints
      - pods
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["configmaps"]
    verbs: ["get"]
  - nonResourceURLs: ["/metrics"]
    verbs: ["get"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: prometheus
subjects:
  - kind: ServiceAccount
    name: prometheus
    namespace: monitoring
roleRef:
  kind: ClusterRole
  name: prometheus
  apiGroup: rbac.authorization.k8s.io

[root@tiaoban prometheus]# kubectl apply -f rbac.yaml

namespace/monitoring created

serviceaccount/prometheus created

clusterrole.rbac.authorization.k8s.io/prometheus created

clusterrolebinding.rbac.authorization.k8s.io/prometheus created

2. Create the ConfigMap

[root@tiaoban prometheus]# cat prometheus-config.yaml

apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-config
  namespace: monitoring
data:
  prometheus.yaml: |-
    global:
      scrape_interval: 5s
      evaluation_interval: 5s
      external_labels:
        cluster: prometheus-1
    rule_files:
      - /etc/prometheus/rules/*.yaml
    scrape_configs:
      - job_name: prometheus
        kubernetes_sd_configs:
          - role: endpoints
        relabel_configs:
          - source_labels: [__meta_kubernetes_service_label_app]
            regex: prometheus
            action: keep
          - source_labels: [__meta_kubernetes_endpoints_name]
            action: replace
            target_label: endpoint
          - source_labels: [__meta_kubernetes_pod_name]
            action: replace
            target_label: pod
          - source_labels: [__meta_kubernetes_service_name]
            action: replace
            target_label: service
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: namespace

[root@tiaoban prometheus]# kubectl apply -f prometheus-config.yaml

configmap/prometheus-config created

3. Create Prometheus

Prometheus is a stateful service, so it is deployed with a StatefulSet controller, and a local PV is used for storage.

[root@tiaoban prometheus]# cat prometheus.yaml

kind: Service
apiVersion: v1
metadata:
  name: prometheus-headless
  namespace: monitoring
  labels:
    app: prometheus
spec:
  type: ClusterIP
  clusterIP: None
  selector:
    app: prometheus
  ports:
    - name: web
      protocol: TCP
      port: 9090
      targetPort: web
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: prometheus
  namespace: monitoring
  labels:
    app: prometheus
spec:
  serviceName: prometheus-headless
  podManagementPolicy: Parallel
  replicas: 1
  selector:
    matchLabels:
      app: prometheus
  template:
    metadata:
      labels:
        app: prometheus
    spec:
      serviceAccountName: prometheus
      containers:
        - name: prometheus
          image: prom/prometheus:v2.30.0
          imagePullPolicy: IfNotPresent
          args:
            - --config.file=/etc/prometheus/config/prometheus.yaml
            - --storage.tsdb.path=/prometheus
            - --storage.tsdb.retention.time=10d
            - --web.route-prefix=/
            - --web.enable-lifecycle
            - --storage.tsdb.no-lockfile
            - --storage.tsdb.min-block-duration=2h
            - --storage.tsdb.max-block-duration=2h
            - --log.level=debug
          ports:
            - containerPort: 9090
              name: web
              protocol: TCP
          livenessProbe:
            httpGet:
              path: /-/healthy
              port: web
              scheme: HTTP
          readinessProbe:
            httpGet:
              path: /-/ready
              port: web
              scheme: HTTP
          volumeMounts:
            - mountPath: /etc/prometheus/config
              name: prometheus-config
              readOnly: true
            - mountPath: /prometheus
              name: data
      volumes:
        - name: prometheus-config
          configMap:
            name: prometheus-config
  volumeClaimTemplates:
    - metadata:
        name: data
      spec:
        accessModes:
          - ReadWriteOnce
        storageClassName: local-storage
        resources:
          requests:
            storage: 10Gi

[root@tiaoban prometheus]# kubectl apply -f prometheus.yaml

service/prometheus-headless created

statefulset.apps/prometheus created
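The StatefulSet requests storage from a StorageClass named local-storage, which this article does not show. A minimal sketch of what that could look like follows. The PV name prometheus-data-pv, the host path /data/prometheus, and the node name k8s-work1 are assumptions and need to be adapted to the actual cluster.

```yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: local-storage
# Local volumes are not dynamically provisioned.
provisioner: kubernetes.io/no-provisioner
# Delay binding until a Pod that uses the claim is scheduled.
volumeBindingMode: WaitForFirstConsumer
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: prometheus-data-pv        # hypothetical name
spec:
  capacity:
    storage: 10Gi
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain
  storageClassName: local-storage
  local:
    path: /data/prometheus        # hypothetical directory; must already exist on the node
  nodeAffinity:
    required:
      nodeSelectorTerms:
        - matchExpressions:
            - key: kubernetes.io/hostname
              operator: In
              values:
                - k8s-work1       # hypothetical node name
```

Without a matching PersistentVolume, the claim created from volumeClaimTemplates stays Pending and the Pod is not scheduled. The directory also has to be writable by the user the Prometheus container runs as.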

4. Create the ingress

Traefik is used as the example here.

[root@tiaoban prometheus]# cat prometheus-ingress.yaml

apiVersion: traefik.containo.us/v1alpha1
kind: IngressRoute
metadata:
  name: prometheus
  namespace: monitoring
spec:
  routes:
    - match: Host(`prometheus.local.com`)
      kind: Rule
      services:
        - name: prometheus-headless
          port: 9090

[root@tiaoban prometheus]# kubectl apply -f prometheus-ingress.yaml

ingressroute.traefik.containo.us/prometheus created

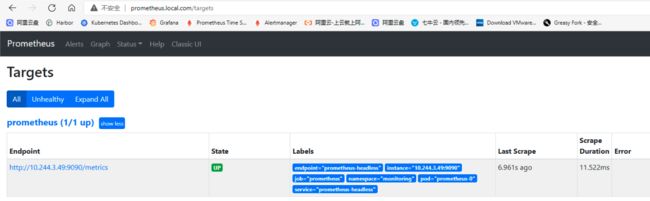

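5. Verify access

Add prometheus.local.com to the local hosts file, then open it in a browser to verify.

IV. Common Job Configuration Examples

1. apiserver

- Inspect the apiserver Service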

[root@tiaoban prometheus]# kubectl describe svc kubernetes

Name:              kubernetes
Namespace:         default
Labels:            component=apiserver
                   provider=kubernetes
Annotations:       <none>
Selector:          <none>
Type:              ClusterIP
IP:                10.96.0.1
Port:              https  443/TCP
TargetPort:        6443/TCP
Endpoints:         192.168.10.10:6443
Session Affinity:  None
Events:            <none>

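The Service output shows that the apiserver is in the default namespace, carries the label component=apiserver, is served over https, and listens on port 6443.

- Write the Prometheus configuration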

- job_name: kube-apiserver
  kubernetes_sd_configs:
    - role: endpoints
  scheme: https
  tls_config:
    ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
  bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
  relabel_configs:
    - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name]
      action: keep
      regex: default;kubernetes
    - source_labels: [__meta_kubernetes_endpoints_name]
      action: replace
      target_label: endpoint
    - source_labels: [__meta_kubernetes_pod_name]
      action: replace
      target_label: pod
    - source_labels: [__meta_kubernetes_service_name]
      action: replace
      target_label: service
    - source_labels: [__meta_kubernetes_namespace]
      action: replace
      target_label: namespace

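Note that https access requires TLS settings: either point ca_file at the CA certificate, or set insecure_skip_verify: true to skip certificate verification. In addition, bearer_token_file must be set; otherwise the scrape fails with server returned HTTP status 400 Bad Request.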
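As a sketch of the alternative mentioned above, the job below skips certificate verification instead of pointing at the CA file. It also uses the authorization block, which recent Prometheus 2.x releases offer as the successor to bearer_token_file. Whether that block is available depends on the Prometheus version in use, so treat it as an assumption to check against the deployed version. The job name is made up.

```yaml
- job_name: kube-apiserver-insecure   # hypothetical job name
  kubernetes_sd_configs:
    - role: endpoints
  scheme: https
  tls_config:
    # Skip verification of the API server certificate (less secure than ca_file).
    insecure_skip_verify: true
  authorization:
    type: Bearer
    credentials_file: /var/run/secrets/kubernetes.io/serviceaccount/token
  relabel_configs:
    - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name]
      regex: default;kubernetes
      action: keep
```

Verifying against ca_file is the safer choice whenever the CA certificate is mounted into the Pod, as it is by default for ServiceAccount credentials.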

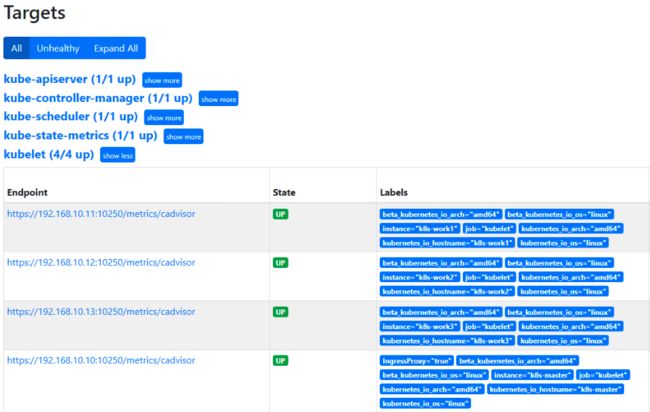

- View the results

2. controller-manager

- Inspect the controller-manager Pod

[root@tiaoban prometheus]# kubectl describe pod -n kube-system kube-controller-manager-k8s-master

Name: kube-controller-manager-k8s-master

Namespace: kube-system

……

Labels: component=kube-controller-manager

tier=control-plane

……

Containers:

kube-controller-manager:

……

Command:

kube-controller-manager

--allocate-node-cidrs=true

--authentication-kubeconfig=/etc/kubernetes/controller-manager.conf

--authorization-kubeconfig=/etc/kubernetes/controller-manager.conf

--bind-address=127.0.0.1

……

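The output shows that matching Pod objects with the label component=kube-controller-manager is sufficient. Note, however, that controller-manager only accepts connections on 127.0.0.1 by default, so its configuration has to be changed first.

- Edit /etc/kubernetes/manifests/kube-controller-manager.yaml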

[root@k8s-master manifests]# cat /etc/kubernetes/manifests/kube-controller-manager.yaml

……

  - command:
    - --bind-address=0.0.0.0   # change the bind address to 0.0.0.0
    #- --port=0                # comment out --port=0

……

- Write the Prometheus configuration. Note that the discovered target defaults to port 80, so the port has to be set to 10252 explicitly.

- job_name: kube-controller-manager
  kubernetes_sd_configs:
    - role: pod
  relabel_configs:
    - source_labels: [__meta_kubernetes_pod_label_component]
      regex: kube-controller-manager
      action: keep
    # Point the target at port 10252 on the Pod IP
    - source_labels: [__meta_kubernetes_pod_ip]
      regex: (.+)
      target_label: __address__
      replacement: ${1}:10252
    # The endpoints and service meta labels are not set for role: pod, so those two rules below add no label
    - source_labels: [__meta_kubernetes_endpoints_name]
      action: replace
      target_label: endpoint
    - source_labels: [__meta_kubernetes_pod_name]
      action: replace
      target_label: pod
    - source_labels: [__meta_kubernetes_service_name]
      action: replace
      target_label: service
    - source_labels: [__meta_kubernetes_namespace]
      action: replace
      target_label: namespace
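On clusters where the insecure port 10252 is not served, kube-controller-manager exposes its metrics on the secure port 10257 over HTTPS instead. The following is a sketch under that assumption, not a configuration tested in this article. It relies on the ServiceAccount token and the /metrics non-resource URL permission from the ClusterRole created earlier, and on the bind address having been changed as described above. The job name is hypothetical.

```yaml
- job_name: kube-controller-manager-secure   # hypothetical job name
  kubernetes_sd_configs:
    - role: pod
  scheme: https
  tls_config:
    # The component typically serves a self-signed certificate.
    insecure_skip_verify: true
  bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
  relabel_configs:
    - source_labels: [__meta_kubernetes_pod_label_component]
      regex: kube-controller-manager
      action: keep
    # Point the target at the secure port on the Pod IP.
    - source_labels: [__meta_kubernetes_pod_ip]
      regex: (.+)
      target_label: __address__
      replacement: ${1}:10257
```

The same pattern should apply to kube-scheduler, whose secure port is 10259.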

- View the results

3. scheduler

- Inspect the scheduler Pod

[root@tiaoban prometheus]# kubectl describe pod -n kube-system kube-scheduler-k8s-master

Name: kube-scheduler-k8s-master

Namespace: kube-system

……

Labels: component=kube-scheduler

tier=control-plane

……

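The output shows that matching Pod objects with the label component=kube-scheduler is sufficient. As with controller-manager, the scheduler is started with --port=0 by default, and that flag needs to be commented out.

- Write the Prometheus configuration. Note that the discovered target defaults to port 80, so the port has to be set to 10251 explicitly. A token must also be specified; otherwise the scrape fails with server returned HTTP status 400 Bad Request.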

- job_name: kube-scheduler
  kubernetes_sd_configs:
    - role: pod
  bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
  relabel_configs:
    - source_labels: [__meta_kubernetes_pod_label_component]
      regex: kube-scheduler
      action: keep
    - source_labels: [__meta_kubernetes_pod_ip]
      regex: (.+)
      target_label: __address__
      replacement: ${1}:10251
    - source_labels: [__meta_kubernetes_endpoints_name]
      action: replace
      target_label: endpoint
    - source_labels: [__meta_kubernetes_pod_name]
      action: replace
      target_label: pod
    - source_labels: [__meta_kubernetes_service_name]
      action: replace
      target_label: service
    - source_labels: [__meta_kubernetes_namespace]
      action: replace
      target_label: namespace

- View the results

4. kube-state-metrics

- Inspect the kube-state-metrics Service

[root@tiaoban prometheus]# kubectl describe svc -n kube-system kube-state-metrics

Name:              kube-state-metrics
Namespace:         kube-system
Labels:            app.kubernetes.io/component=exporter
                   app.kubernetes.io/name=kube-state-metrics
                   app.kubernetes.io/version=2.2.1
Annotations:       <none>
Selector:          app.kubernetes.io/name=kube-state-metrics
Type:              ClusterIP
IP:                None
Port:              http-metrics  8080/TCP
TargetPort:        http-metrics/TCP
Endpoints:         10.244.1.47:8080
Port:              telemetry  8081/TCP
TargetPort:        telemetry/TCP
Endpoints:         10.244.1.47:8081
Session Affinity:  None
Events:            <none>

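The output shows that matching the Service object named kube-state-metrics is sufficient.

- Write the Prometheus configuration. Note that discovery matches two ports by default, 8080 and 8081, so the port has to be set to 8080 explicitly.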

- job_name: kube-state-metrics
  kubernetes_sd_configs:
    - role: endpoints
  relabel_configs:
    - source_labels: [__meta_kubernetes_service_name]
      regex: kube-state-metrics
      action: keep
    - source_labels: [__meta_kubernetes_pod_ip]
      regex: (.+)
      target_label: __address__
      replacement: ${1}:8080
    - source_labels: [__meta_kubernetes_endpoints_name]
      action: replace
      target_label: endpoint
    - source_labels: [__meta_kubernetes_pod_name]
      action: replace
      target_label: pod
    - source_labels: [__meta_kubernetes_service_name]
      action: replace
      target_label: service
    - source_labels: [__meta_kubernetes_namespace]
      action: replace
      target_label: namespace
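An alternative to rewriting __address__ is to keep only the endpoint whose port is named http-metrics, the name shown in the Service description above. This is a sketch of that variant, not the configuration used in this article; the job name is hypothetical.

```yaml
- job_name: kube-state-metrics-by-port   # hypothetical job name
  kubernetes_sd_configs:
    - role: endpoints
  relabel_configs:
    # The values of both labels are joined with ";" before being matched
    # against regex, so only the http-metrics port (8080) of the
    # kube-state-metrics Service is kept.
    - source_labels: [__meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
      regex: kube-state-metrics;http-metrics
      action: keep
```

Selecting by port name keeps working if the port number changes, as long as the port keeps its name.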

- View the results

5. kubelet

- Inspect the kubelet

[root@k8s-work2 ~]# ss -tunlp | grep kubelet

tcp LISTEN 0 128 127.0.0.1:42735 0.0.0.0:* users:(("kubelet",pid=1064,fd=12))

tcp LISTEN 0 128 127.0.0.1:10248 0.0.0.0:* users:(("kubelet",pid=1064,fd=29))

tcp LISTEN 0 128 *:10250 *:* users:(("kubelet",pid=1064,fd=30))

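The output shows that kubelet runs on every node and listens on port 10250, so role: node is the right choice.

- Write the Prometheus configuration. Note that the metrics are served at /metrics/cadvisor and that access is over https; insecure_skip_verify: true can be set to skip certificate verification.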

- job_name: kubelet
  metrics_path: /metrics/cadvisor
  scheme: https
  tls_config:
    insecure_skip_verify: true
  bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
  kubernetes_sd_configs:
    - role: node
  relabel_configs:
    # Copy every node label onto the target
    - action: labelmap
      regex: __meta_kubernetes_node_label_(.+)
    # The endpoints, pod, and namespace meta labels are not set for role: node, so the three rules below add no label
    - source_labels: [__meta_kubernetes_endpoints_name]
      action: replace
      target_label: endpoint
    - source_labels: [__meta_kubernetes_pod_name]
      action: replace
      target_label: pod
    - source_labels: [__meta_kubernetes_namespace]
      action: replace
      target_label: namespace
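If Prometheus cannot reach port 10250 on the nodes directly, the same metrics can usually be fetched through the API server proxy, which is what the nodes/proxy permission in the ClusterRole allows. The sketch below follows the pattern of the example Kubernetes configuration shipped with Prometheus. The job name and the in-cluster address kubernetes.default.svc:443 are assumptions about the environment.

```yaml
- job_name: kubelet-cadvisor-via-proxy   # hypothetical job name
  scheme: https
  tls_config:
    ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
  bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
  kubernetes_sd_configs:
    - role: node
  relabel_configs:
    - action: labelmap
      regex: __meta_kubernetes_node_label_(.+)
    # Send every scrape to the API server instead of the node itself.
    - target_label: __address__
      replacement: kubernetes.default.svc:443
    # Build the per-node proxy path from the node name.
    - source_labels: [__meta_kubernetes_node_name]
      regex: (.+)
      target_label: __metrics_path__
      replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor
```

Because the request terminates at the API server, its certificate can be verified with the ServiceAccount CA, and insecure_skip_verify is not needed. The trade-off is extra load on the API server.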

- View the results

6. node_exporter

- Inspect the node_exporter Pod

[root@tiaoban prometheus]# kubectl describe pod -n monitoring node-exporter-9dsqr

Name: node-exporter-9dsqr

Namespace: monitoring

Priority: 0

Node: k8s-master/192.168.10.10

Start Time: Sun, 17 Oct 2021 16:42:24 +0800

Labels: app=node-exporter

controller-revision-hash=775f546bd4

pod-template-generation=1

……

Port: 9100/TCP

Host Port: 9100/TCP

……

node_exporter also runs on every node, so role: node works here too. The discovered address uses port 10250 by default; replace it with 9100.

- Write the Prometheus configuration

- job_name: node_exporter
  kubernetes_sd_configs:
    - role: node
  relabel_configs:
    - source_labels: [__address__]
      regex: '(.*):10250'
      replacement: '${1}:9100'
      target_label: __address__
      action: replace
    - action: labelmap
      regex: __meta_kubernetes_node_label_(.+)
    - source_labels: [__meta_kubernetes_endpoints_name]
      action: replace
      target_label: endpoint
    - source_labels: [__meta_kubernetes_pod_name]
      action: replace
      target_label: pod
    - source_labels: [__meta_kubernetes_namespace]
      action: replace
      target_label: namespace

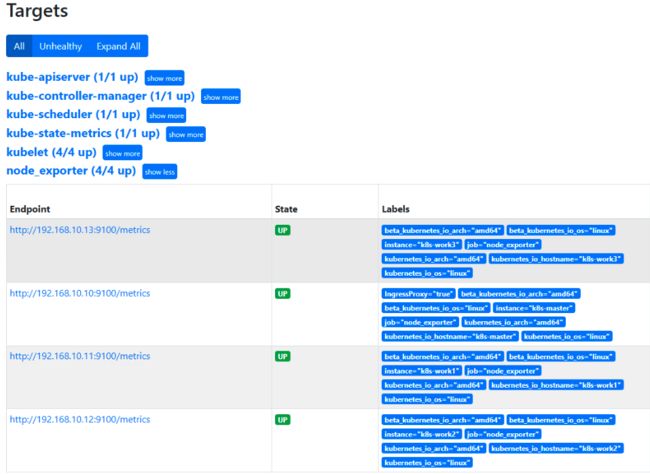

- View the results

7. coredns

- Inspect the coredns Service

[root@tiaoban prometheus]# kubectl describe svc -n kube-system kube-dns

Name: kube-dns

Namespace: kube-system

Labels: k8s-app=kube-dns

kubernetes.io/cluster-service=true

kubernetes.io/name=KubeDNS

Annotations: prometheus.io/port: 9153

prometheus.io/scrape: true

Selector: k8s-app=kube-dns

Type: ClusterIP

IP: 10.96.0.10

Port: dns 53/UDP

TargetPort: 53/UDP

Endpoints: 10.244.1.44:53,10.244.2.36:53

Port: dns-tcp 53/TCP

TargetPort: 53/TCP

Endpoints: 10.244.1.44:53,10.244.2.36:53

Port: metrics 9153/TCP

TargetPort: 9153/TCP

Endpoints: 10.244.1.44:9153,10.244.2.36:9153

Session Affinity: None

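Events: <none>

The output shows that matching the Service object with the label k8s-app=kube-dns is sufficient.

- Write the Prometheus configuration. Note that the discovered target defaults to port 53, so the port has to be set to 9153 explicitly.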

- job_name: coredns
  kubernetes_sd_configs:
    - role: endpoints
  relabel_configs:
    - source_labels:
        - __meta_kubernetes_service_label_k8s_app
      regex: kube-dns
      action: keep
    - source_labels: [__meta_kubernetes_pod_ip]
      regex: (.+)
      target_label: __address__
      replacement: ${1}:9153
    - source_labels: [__meta_kubernetes_endpoints_name]
      action: replace
      target_label: endpoint
    - source_labels: [__meta_kubernetes_pod_name]
      action: replace
      target_label: pod
    - source_labels: [__meta_kubernetes_service_name]
      action: replace
      target_label: service
    - source_labels: [__meta_kubernetes_namespace]
      action: replace
      target_label: namespace
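The kube-dns Service above carries the annotations prometheus.io/scrape: true and prometheus.io/port: 9153. A common convention, sketched below, is to drive discovery from such annotations so that the port does not have to be hard-coded per job. This is not the configuration used in this article, and the job name is hypothetical.

```yaml
- job_name: annotated-services   # hypothetical job name
  kubernetes_sd_configs:
    - role: endpoints
  relabel_configs:
    # Keep only endpoints whose Service is annotated prometheus.io/scrape=true.
    - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
      regex: "true"
      action: keep
    # Replace the discovered port with the one given in prometheus.io/port.
    - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
      regex: ([^:]+)(?::\d+)?;(\d+)
      target_label: __address__
      replacement: $1:$2
      action: replace
```

With this job, any Service that sets both annotations is picked up without further changes to the Prometheus configuration. Characters such as "." and "/" in annotation names appear as "_" in the meta label.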

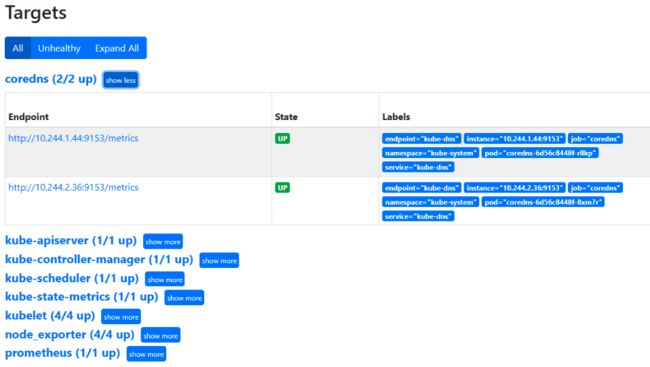

- View the results

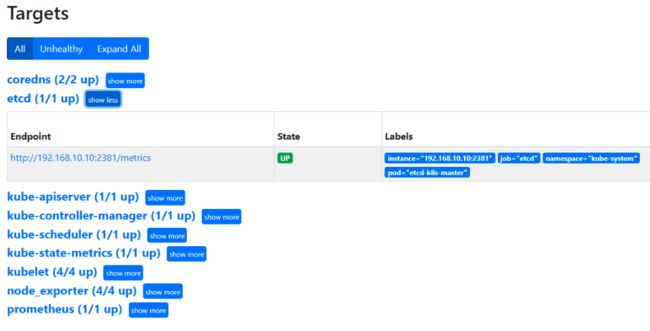

8. etcd

- Inspect the etcd Pod

[root@tiaoban prometheus]# kubectl describe pod -n kube-system etcd-k8s-master

Name: etcd-k8s-master

Namespace: kube-system

Priority: 2000001000

Priority Class Name: system-node-critical

Node: k8s-master/192.168.10.10

Start Time: Sun, 17 Oct 2021 12:31:51 +0800

Labels: component=etcd

tier=control-plane

……

Command:

etcd

--advertise-client-urls=https://192.168.10.10:2379

--cert-file=/etc/kubernetes/pki/etcd/server.crt

--client-cert-auth=true

--data-dir=/var/lib/etcd

--initial-advertise-peer-urls=https://192.168.10.10:2380

--initial-cluster=k8s-master=https://192.168.10.10:2380

--key-file=/etc/kubernetes/pki/etcd/server.key

--listen-client-urls=https://127.0.0.1:2379,https://192.168.10.10:2379

--listen-metrics-urls=http://127.0.0.1:2381

--listen-peer-urls=https://192.168.10.10:2380

--name=k8s-master

--peer-cert-file=/etc/kubernetes/pki/etcd/peer.crt

--peer-client-cert-auth=true

--peer-key-file=/etc/kubernetes/pki/etcd/peer.key

--peer-trusted-ca-file=/etc/kubernetes/pki/etcd/ca.crt

--snapshot-count=10000

--trusted-ca-file=/etc/kubernetes/pki/etcd/ca.crt

……

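The startup flags include --listen-metrics-urls=http://127.0.0.1:2381. This flag makes the metrics endpoint listen on port 2381 over plain http, so no certificates need to be configured. That is much simpler than in earlier versions, where the metrics had to be fetched over https with the corresponding certificates. The manifest still has to be edited, however, to change the listen address to 0.0.0.0.

- Write the Prometheus configuration. Note that the discovered target defaults to port 2379, so the port has to be set to 2381 explicitly.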

- job_name: etcd
  kubernetes_sd_configs:
    - role: pod
  relabel_configs:
    - source_labels:
        - __meta_kubernetes_pod_label_component
      regex: etcd
      action: keep
    - source_labels: [__meta_kubernetes_pod_ip]
      regex: (.+)
      target_label: __address__
      replacement: ${1}:2381
    - source_labels: [__meta_kubernetes_endpoints_name]
      action: replace
      target_label: endpoint
    - source_labels: [__meta_kubernetes_pod_name]
      action: replace
      target_label: pod
    - source_labels: [__meta_kubernetes_namespace]
      action: replace
      target_label: namespace

- View the results

References

- Prometheus configuration file explained: https://cloud.tencent.com/developer/article/1844101

- Official documentation for Prometheus kubernetes_sd_configs: https://prometheus.io/docs/prometheus/latest/configuration/configuration/#kubernetes_sd_config


Blog

Cui Liang's blog (崔亮的博客) focuses on DevOps and operations automation. More original articles on operations and development are available at https://www.cuiliangblog.cn