02 - Kubernetes environment deployment (production, kubelet 1.16.2)

Kubernetes environment deployment

文章目录

-

- k8s环境部署

- 环境准备

- 安装 docker / kubelet

- 初始化API Server

-

- 创建 ApiServer 的 Load Balancer(私网)

- 初始化第一个master节点

- 初始化第二、三个master节点

-

- 方式一:和第一个Master节点一起初始化(两小时内)

- 方式二:第一个Master节点初始化2个小时后再初始化

- 获得 certificate key

- 获得 join 命令

- 初始化第二、三个 master 节点

- 检查 master 初始化结果

- 初始化 worker节点

-

-

- 方式一:和第一个Master节点一起初始化(k8s-mstaer01初始化两个小时内)

-

- 初始化worker

- 方式一:和第一个Master节点一起初始化(k8s-mstaer01初始化两个小时内)

-

- 重新获取token

- 初始化worker

- 检查 worker 初始化结果

- 移除 worker 节点

- 安装 Ingress Controller

- 在 IaaS 层完成如下配置(**公网Load Balancer**)

- 配置域名解析

- 验证配置

- Metrics-Server部署

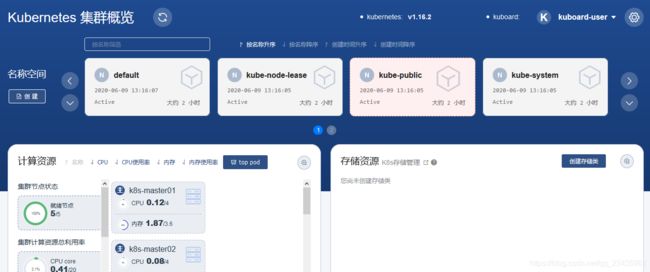

- kuboard部署

-

-

- ①获取kuboard.yaml文件

- ②部署kuboard

- ③获取token

- ④访问kuboard

- ⑤直接访问集群概览页

- ⑥直接访问终端界面

- ⑥直接访问终端界面

-

-

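Environment preparation

Check the OS version and hostname

# Run on both master and worker nodes

cat /etc/redhat-release

# The hostname printed here becomes this machine's node name in the Kubernetes cluster

hostname

# Change the hostname

hostnamectl set-hostname your-new-host-name

# Check the result

hostnamectl status

# Add a hosts entry for the hostname

echo "127.0.0.1 $(hostname)" >> /etc/hosts

Check the network

# Run on all nodes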

[root@k8s-master01 ~]# ip route show

default via 192.168.1.1 dev ens32 proto static metric 100

192.168.1.0/24 dev ens32 proto kernel scope link src 192.168.1.20 metric 100

[root@k8s-master01 ~]# ip address

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens32: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:0c:29:45:de:35 brd ff:ff:ff:ff:ff:ff

inet 192.168.1.20/24 brd 192.168.1.255 scope global noprefixroute ens32

valid_lft forever preferred_lft forever

inet6 fe80::a4dc:eb6e:1a5e:f979/64 scope link tentative noprefixroute dadfailed

valid_lft forever preferred_lft forever

inet6 fe80::3da2:dfff:2f79:82ed/64 scope link tentative noprefixroute dadfailed

valid_lft forever preferred_lft forever

inet6 fe80::5e01:56c4:a44b:ee66/64 scope link noprefixroute

valid_lft forever preferred_lft forever

[root@k8s-master01 ~]#

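IP address used by kubelet

- The output of ip route show identifies the machine's default network interface, usually eth0 or ens32, e.g. default via 192.168.1.1 dev ens32

- The output of ip address shows the IP address of that interface, e.g. 192.168.1.20; Kubernetes uses this address to communicate with the other nodes in the cluster

- The IP addresses Kubernetes uses on all nodes must be mutually reachable (no NAT mapping, no security group or firewall isolation)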
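The two lookups described above can be scripted. The following is a minimal sketch, assuming the output formats shown in the sample session; the helper names default_iface and first_ipv4 are hypothetical and not part of any tool.

```shell
#!/bin/bash
# Print the interface named on the "default via ..." line of `ip route show` (read from stdin).
default_iface() {
  awk '$1 == "default" { for (i = 1; i < NF; i++) if ($i == "dev") { print $(i + 1); exit } }'
}

# Print the first IPv4 address, without the prefix length, from `ip address` output (read from stdin).
first_ipv4() {
  awk '$1 == "inet" { sub(/\/.*/, "", $2); print $2; exit }'
}

# Usage on a real node:
#   iface=$(ip route show | default_iface)
#   ip -4 address show dev "$iface" | first_ipv4
```

Reading from stdin keeps both helpers independent of the machine they run on, so they can be checked against captured output before being used on a node.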

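Install docker / kubelet

System requirements:

1. Every node runs CentOS 7.6 or 7.7;

2. At least 2 CPU cores and at least 4 GB of memory;

3. The hostname is not localhost and contains no slashes, dots, or uppercase letters;

4. Every node has a fixed IP address and a single network interface (if you need more interfaces for a special purpose, add them after the K8S installation is complete)

5. All node IP addresses can reach each other directly (without NAT mapping), with no firewall, security group, or blacklist in between

6. Do not run containers directly with docker run or docker-compose on any node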
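The hostname rule in item 3 is easy to check before installing anything. A minimal sketch, assuming exactly the constraints listed above; the function name valid_node_hostname is hypothetical.

```shell
#!/bin/bash
# Return 0 if the given hostname satisfies rule 3 above, 1 otherwise.
valid_node_hostname() {
  local name="$1"
  [ -n "$name" ] || return 1              # must not be empty
  [ "$name" != "localhost" ] || return 1  # must not be localhost
  case "$name" in
    */*|*.*|*[[:upper:]]*) return 1 ;;    # no slashes, dots, or uppercase letters
  esac
  return 0
}

# Usage on a real node:
#   valid_node_hostname "$(hostname)" || echo "rename this host before installing"
```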

Run the following script as root on every node to install docker, nfs-utils, and kubectl/kubeadm/kubelet:

#!/bin/bash

# Run on both master and worker nodes

# Install docker
# Reference documentation:
# https://docs.docker.com/install/linux/docker-ce/centos/
# https://docs.docker.com/install/linux/linux-postinstall/

# Remove old versions
yum remove -y docker \
  docker-client \
  docker-client-latest \
  docker-common \
  docker-latest \
  docker-latest-logrotate \
  docker-logrotate \
  docker-selinux \
  docker-engine-selinux \
  docker-engine

# Set up the yum repository
yum install -y yum-utils \
  device-mapper-persistent-data \
  lvm2
yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# Install and start docker

yum install -y docker-ce-18.09.7 docker-ce-cli-18.09.7 containerd.io

systemctl enable docker

systemctl start docker

# Install nfs-utils
# nfs-utils must be installed before NFS network storage can be mounted
yum install -y nfs-utils
yum install -y wget

# Disable the firewall
systemctl stop firewalld
systemctl disable firewalld

# Disable SELinux
setenforce 0
sed -i "s/SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config

# Disable swap
swapoff -a
yes | cp /etc/fstab /etc/fstab_bak
cat /etc/fstab_bak |grep -v swap > /etc/fstab

# Edit /etc/sysctl.conf
# If the settings already exist, update them
sed -i "s#^net.ipv4.ip_forward.*#net.ipv4.ip_forward=1#g" /etc/sysctl.conf
sed -i "s#^net.bridge.bridge-nf-call-ip6tables.*#net.bridge.bridge-nf-call-ip6tables=1#g" /etc/sysctl.conf
sed -i "s#^net.bridge.bridge-nf-call-iptables.*#net.bridge.bridge-nf-call-iptables=1#g" /etc/sysctl.conf

# They may be missing, so append them as well
echo "net.ipv4.ip_forward = 1" >> /etc/sysctl.conf
echo "net.bridge.bridge-nf-call-ip6tables = 1" >> /etc/sysctl.conf
echo "net.bridge.bridge-nf-call-iptables = 1" >> /etc/sysctl.conf

# Apply the settings
sysctl -p

# Configure the K8S yum repository

cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
       http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

# Remove old versions
yum remove -y kubelet kubeadm kubectl

# Install kubelet, kubeadm, and kubectl
yum install -y kubelet-1.16.2 kubeadm-1.16.2 kubectl-1.16.2

# Change the docker Cgroup Driver to systemd
# In /usr/lib/systemd/system/docker.service, change the line
#   ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock
# to
#   ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --exec-opt native.cgroupdriver=systemd
# Without this change, adding a worker node may produce the following warning
# [WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd".
# Please follow the guide at https://kubernetes.io/docs/setup/cri/
sed -i "s#^ExecStart=/usr/bin/dockerd.*#ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --exec-opt native.cgroupdriver=systemd#g" /usr/lib/systemd/system/docker.service

# Configure a docker registry mirror to make image pulls faster and more reliable
# If your access to https://hub.docker.com is fast and stable, you can skip this step
curl -sSL https://get.daocloud.io/daotools/set_mirror.sh | sh -s http://f1361db2.m.daocloud.io

# Restart docker and start kubelet
systemctl daemon-reload
systemctl restart docker
systemctl enable kubelet && systemctl start kubelet

docker version

Note: this script installs docker-ce 18.09 and kubeadm 1.16.2. If you run systemctl status kubelet at this point, kubelet will report that it failed to start. Ignore this error: kubelet can only start normally after kubeadm init has been run in a later step.
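The sysctl step in the script above first rewrites existing keys and then appends them again, so /etc/sysctl.conf gains duplicate lines each time the script is rerun. A minimal sketch of an idempotent alternative follows; the helper name set_sysctl is hypothetical, and it assumes one "key = value" pair per line.

```shell
#!/bin/bash
# Set "key = value" in a sysctl-style file: rewrite the line if the key exists, append otherwise.
set_sysctl() {
  local file="$1" key="$2" value="$3" pattern
  # Escape the dots in the key so they match literally in the regular expression.
  pattern=$(printf '%s' "$key" | sed 's/\./\\./g')
  if grep -Eq "^[[:space:]]*${pattern}[[:space:]]*=" "$file" 2>/dev/null; then
    sed -E "s#^[[:space:]]*${pattern}[[:space:]]*=.*#${key} = ${value}#" "$file" > "${file}.tmp" \
      && mv "${file}.tmp" "$file"
  else
    printf '%s = %s\n' "$key" "$value" >> "$file"
  fi
}

# Usage on a real node (followed by `sysctl -p` to apply):
#   set_sysctl /etc/sysctl.conf net.ipv4.ip_forward 1
#   set_sysctl /etc/sysctl.conf net.bridge.bridge-nf-call-iptables 1
```

Running the helper twice with the same arguments leaves a single line per key, which keeps the file readable after repeated installs.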

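Initialize the API Server

Create the Load Balancer for the ApiServer (private network)

Listening port: 6443 / TCP

Backend resource group: k8s-master01, k8s-master02, k8s-master03

Backend port: 6443

Enable session persistence by source address

Assume that the Load Balancer's IP address is 192.168.1.10 once it has been created. This deployment uses keepalived + haproxy, with port 16443 load-balanced to port 6443; see the keepalived + haproxy deployment document.

How the LoadBalancer is implemented depends on your own environment, so this article does not describe how to build one. Options to consider include:

- nginx

- haproxy

- keepalived

- a load balancer product from your cloud provider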

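Initialize the first master node

- Run as root on the k8s-master01 machine

- If a configuration mistake in one of the steps forces you to initialize the master node again, run kubeadm reset first

About the environment variables used during initialization

- APISERVER_NAME must not be the hostname of a master

- APISERVER_NAME may contain only lowercase letters, digits, and dots; hyphens are not allowed

- The network used by POD_SUBNET must not overlap with the network of the master/worker nodes. The value is a CIDR; if you are not familiar with CIDR notation, leave the value 10.100.0.1/16 unchanged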
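The overlap rule for POD_SUBNET can be checked mechanically. Below is a minimal sketch for IPv4 CIDRs, assuming well-formed input; the function names ip_to_int, cidr_bounds, and cidrs_overlap are hypothetical helpers, not part of kubeadm.

```shell
#!/bin/bash
# Convert a dotted-quad IPv4 address to a single integer.
ip_to_int() {
  local IFS=.
  set -- $1   # split the address into its four octets
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Print "first last" (as integers) for the address range covered by a CIDR.
cidr_bounds() {
  local addr=${1%/*} bits=${1#*/} ip mask start
  ip=$(ip_to_int "$addr")
  mask=$(( (4294967295 << (32 - bits)) & 4294967295 ))
  start=$(( ip & mask ))
  echo "$start $(( start + 4294967295 - mask ))"
}

# Return 0 if the two CIDRs share at least one address, 1 otherwise.
cidrs_overlap() {
  set -- $(cidr_bounds "$1") $(cidr_bounds "$2")
  # $1..$2 is the first range, $3..$4 the second
  [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
}

# Usage: compare the pod network with the node network from `ip route show`
#   cidrs_overlap "$POD_SUBNET" 192.168.1.0/24 && echo "choose a different POD_SUBNET"
```

With the sample node network 192.168.1.0/24, a pod network of 192.168.0.0/16 would overlap, while 10.100.0.1/16 does not.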

# Run only on the first master node

# Replace apiserver.demo with the dnsName you want

export APISERVER_NAME=apiserver.demo

# The network used by Kubernetes pods; Kubernetes creates it after installation, and it does not exist in your physical network beforehand

export POD_SUBNET=10.100.0.1/16

echo "127.0.0.1 ${APISERVER_NAME}" >> /etc/hosts

#!/bin/bash

# Run only on the master node

# Abort the script on the first error
set -e

if [ ${#POD_SUBNET} -eq 0 ] || [ ${#APISERVER_NAME} -eq 0 ]; then
  echo -e "\033[31;1mMake sure the environment variables POD_SUBNET and APISERVER_NAME are set \033[0m"
  echo Current POD_SUBNET=$POD_SUBNET
  echo Current APISERVER_NAME=$APISERVER_NAME
  exit 1
fi

# Full list of configuration options: https://godoc.org/k8s.io/kubernetes/cmd/kubeadm/app/apis/kubeadm/v1beta2
rm -f ./kubeadm-config.yaml
cat <<EOF > ./kubeadm-config.yaml
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: v1.16.2
imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers
controlPlaneEndpoint: "${APISERVER_NAME}:16443"
networking:
  serviceSubnet: "10.96.0.0/16"
  podSubnet: "${POD_SUBNET}"
  dnsDomain: "cluster.local"
EOF

# kubeadm init
# Depending on your server's network speed, this takes 3 - 10 minutes
kubeadm init --config=kubeadm-config.yaml --upload-certs

# Configure kubectl
rm -rf /root/.kube/
mkdir /root/.kube/
cp -i /etc/kubernetes/admin.conf /root/.kube/config

# Install the calico network plugin
# Reference documentation: https://docs.projectcalico.org/v3.9/getting-started/kubernetes/
rm -f calico-3.9.2.yaml
wget https://kuboard.cn/install-script/calico/calico-3.9.2.yaml
sed -i "s#192\.168\.0\.0/16#${POD_SUBNET}#" calico-3.9.2.yaml
kubectl apply -f calico-3.9.2.yaml

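Result

In the output below:

- The kubeadm join command that ends with --control-plane --certificate-key is used to initialize the second and third master nodes

- The last kubeadm join command (without --control-plane) is used to initialize worker nodes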

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of the control-plane node running the following command on each as root:

kubeadm join apiserver.demo:16443 --token 4ix1k1.l8wg8pq9cnrm9fkd \

--discovery-token-ca-cert-hash sha256:cd2d7833513e58dc2eae952e674b2903d4f884d86fd47b00784d56aeeed2dcde \

--control-plane --certificate-key a549d21b53274b479e89b45d0a1d93799d70b385430290fbfccea9376b5f59ee

Please note that the certificate-key gives access to cluster sensitive data, keep it secret!

As a safeguard, uploaded-certs will be deleted in two hours; If necessary, you can use

"kubeadm init phase upload-certs --upload-certs" to reload certs afterward.

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join apiserver.demo:16443 --token 4ix1k1.l8wg8pq9cnrm9fkd \

--discovery-token-ca-cert-hash sha256:cd2d7833513e58dc2eae952e674b2903d4f884d86fd47b00784d56aeeed2dcde

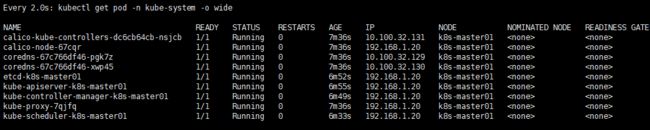

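Check the master initialization result

# Run only on the first master node

# Run the following command and wait 3-10 minutes until all pods are in the Running state

watch kubectl get pod -n kube-system -o wide

# Check the master node initialization result

[root@k8s-master01 k8s]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master01 Ready master 7m4s v1.16.2

Note: wait until all pods (about 9) are in the Running state before moving on to the next step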
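Instead of watching the pod list by eye, the wait can be scripted. A minimal sketch, assuming the default kubectl get pod column order (NAME READY STATUS RESTARTS AGE); the function name pods_not_running is hypothetical.

```shell
#!/bin/bash
# Count pods whose STATUS column is neither Running nor Completed.
# Reads `kubectl get pod` output (including the header line) from stdin.
pods_not_running() {
  awk 'NR > 1 && $3 != "Running" && $3 != "Completed" { n++ } END { print n + 0 }'
}

# Usage on the first master node: poll until nothing is pending.
#   while [ "$(kubectl get pod -n kube-system | pods_not_running)" -gt 0 ]; do
#     sleep 10
#   done
```

The check looks only at the STATUS column, so a pod that is Running but not yet Ready still counts as running here.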

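Initialize the second and third master nodes

Get the join command for master nodes

The second and third master nodes can be initialized together with the first one, or added later to a single-master cluster. In the latter case you only need to:

- Add a LoadBalancer in front of the masters

- Resolve apiserver.demo to the LoadBalancer address in /etc/hosts on all nodes

- Add the second and third master nodes

- Note that the certificate key used to join master nodes is valid for 2 hours

Option 1: initialize together with the first master node (within two hours)

In the output of the first master node's initialization, the kubeadm join command that ends with --control-plane --certificate-key is the one used to initialize the second and third master nodes. It is shown below; do not run it yet.

kubeadm join apiserver.demo:16443 --token 4ix1k1.l8wg8pq9cnrm9fkd \
--discovery-token-ca-cert-hash sha256:cd2d7833513e58dc2eae952e674b2903d4f884d86fd47b00784d56aeeed2dcde \
--control-plane --certificate-key a549d21b53274b479e89b45d0a1d93799d70b385430290fbfccea9376b5f59ee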

Initialize the second and third master nodes

Run on k8s-master02 and k8s-master03

# Run only on the second and third master nodes, k8s-master02 and k8s-master03

# Replace 192.168.1.10 with the IP address of the ApiServer LoadBalancer

[root@k8s-master02 ~]# export APISERVER_IP=192.168.1.10

# Replace apiserver.demo with the dnsName used earlier

[root@k8s-master02 ~]# export APISERVER_NAME=apiserver.demo

[root@k8s-master02 ~]# echo "${APISERVER_IP} ${APISERVER_NAME}" >> /etc/hosts

# Use the join command for the second and third master nodes obtained in the previous step

[root@k8s-master02 ~]# kubeadm join apiserver.demo:16443 --token 4ix1k1.l8wg8pq9cnrm9fkd \
--discovery-token-ca-cert-hash sha256:cd2d7833513e58dc2eae952e674b2903d4f884d86fd47b00784d56aeeed2dcde \
--control-plane --certificate-key a549d21b53274b479e89b45d0a1d93799d70b385430290fbfccea9376b5f59ee

Common problems

If the join stays stuck in the pre-flight phase, run the following check on the second and third nodes:

[root@k8s-master02 ~]# curl -ik https://apiserver.demo:16443/version

The output should look like this

HTTP/1.1 200 OK

Cache-Control: no-cache, private

Content-Type: application/json

Date: Wed, 30 Oct 2019 08:13:39 GMT

Content-Length: 263

{

"major": "1",

"minor": "16",

"gitVersion": "v1.16.2",

"gitCommit": "2bd9643cee5b3b3a5ecbd3af49d09018f0773c77",

"gitTreeState": "clean",

"buildDate": "2019-09-18T14:27:17Z",

"goVersion": "go1.12.9",

"compiler": "gc",

"platform": "linux/amd64"

}

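Otherwise, check whether your LoadBalancer is configured correctly

Check the master initialization result

# Run only on the first master node, k8s-master01

# Check the master node initialization result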

[root@k8s-master01 k8s]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master01 Ready master 12m v1.16.2

k8s-master02 Ready master 7m27s v1.16.2

k8s-master03 Ready master 2m14s v1.16.2

[root@k8s-master01 k8s]#

Option 2: initialize more than two hours after the first master node

Get the certificate key

Run on k8s-master01

# Run only on the first master node, k8s-master01

[root@k8s-master01 ~]# kubeadm init phase upload-certs --upload-certs

The output is as follows:

[root@k8s-master01 ~]# kubeadm init phase upload-certs --upload-certs

W0902 09:05:28.355623 1046 version.go:98] could not fetch a Kubernetes version from the internet: unable to get URL "https://dl.k8s.io/release/stable-1.txt": Get https://dl.k8s.io/release/stable-1.txt: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

W0902 09:05:28.355718 1046 version.go:99] falling back to the local client version: v1.16.2

[upload-certs] Storing the certificates in Secret "kubeadm-certs" in the "kube-system" Namespace

[upload-certs] Using certificate key:

70eb87e62f052d2d5de759969d5b42f372d0ad798f98df38f7fe73efdf63a13c

Get the join command

Run on k8s-master01

# Run only on the first master node, k8s-master01

[root@k8s-master01 ~]# kubeadm token create --print-join-command

The output is as follows:

[root@k8s-master01 ~]# kubeadm token create --print-join-command

kubeadm join apiserver.demo:16443 --token bl80xo.hfewon9l5jlpmjft --discovery-token-ca-cert-hash sha256:b4d2bed371fe4603b83e7504051dcfcdebcbdcacd8be27884223c4ccc13059a4

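The join command for the second and third master nodes is then as follows:

- The first two lines (endpoint, --token, and --discovery-token-ca-cert-hash) come from the join command obtained above; the value after --certificate-key is the certificate key obtained above

kubeadm join apiserver.demo:16443 --token ejwx62.vqwog6il5p83uk7y \
--discovery-token-ca-cert-hash sha256:6f7a8e40a810323672de5eee6f4d19aa2dbdb38411845a1bf5dd63485c43d303 \
--control-plane --certificate-key 70eb87e62f052d2d5de759969d5b42f372d0ad798f98df38f7fe73efdf63a13c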
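Assembling the control-plane join command by hand is error-prone, so the step can be sketched as a small helper. The function name control_plane_join is hypothetical; the usage comments assume that the certificate key is the last line printed by the upload-certs command, as in the sample output above.

```shell
#!/bin/bash
# Combine a worker join command with a certificate key into a control-plane join command.
control_plane_join() {
  local join_cmd cert_key="$2"
  # Strip trailing whitespace from the printed join command.
  join_cmd=$(printf '%s' "$1" | sed 's/[[:space:]]*$//')
  printf '%s --control-plane --certificate-key %s\n' "$join_cmd" "$cert_key"
}

# Usage on k8s-master01:
#   join_cmd=$(kubeadm token create --print-join-command)
#   cert_key=$(kubeadm init phase upload-certs --upload-certs | tail -n 1)
#   control_plane_join "$join_cmd" "$cert_key"
```

The printed command is then run on k8s-master02 and k8s-master03.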

Initialize the second and third master nodes

Run on k8s-master02 and k8s-master03

# Run only on the second and third master nodes, k8s-master02 and k8s-master03

# Replace 192.168.1.10 with the IP address of the ApiServer LoadBalancer

[root@k8s-master02 ~]# export APISERVER_IP=192.168.1.10

# Replace apiserver.demo with the dnsName used earlier

[root@k8s-master02 ~]# export APISERVER_NAME=apiserver.demo

[root@k8s-master02 ~]# echo "${APISERVER_IP} ${APISERVER_NAME}" >> /etc/hosts

# Use the join command for the second and third master nodes obtained in the previous step

[root@k8s-master02 ~]# kubeadm join apiserver.demo:16443 --token ejwx62.vqwog6il5p83uk7y \
--discovery-token-ca-cert-hash sha256:6f7a8e40a810323672de5eee6f4d19aa2dbdb38411845a1bf5dd63485c43d303 \
--control-plane --certificate-key 70eb87e62f052d2d5de759969d5b42f372d0ad798f98df38f7fe73efdf63a13c

Common problems

If the join stays stuck in the pre-flight phase, run the following check on the second and third nodes:

[root@k8s-master02 ~]# curl -ik https://apiserver.demo:16443/version

The output should look like this

HTTP/1.1 200 OK

Cache-Control: no-cache, private

Content-Type: application/json

Date: Wed, 30 Oct 2019 08:13:39 GMT

Content-Length: 263

{

"major": "1",

"minor": "16",

"gitVersion": "v1.16.2",

"gitCommit": "2bd9643cee5b3b3a5ecbd3af49d09018f0773c77",

"gitTreeState": "clean",

"buildDate": "2019-09-18T14:27:17Z",

"goVersion": "go1.12.9",

"compiler": "gc",

"platform": "linux/amd64"

}

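Otherwise, check whether your LoadBalancer is configured correctly

Check the master initialization result

# Run only on the first master node, k8s-master01

# Check the master node initialization result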

[root@k8s-master01 ~]# kubectl get nodes

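Initialize worker nodes

Option 1: initialize together with the first master node (within two hours of initializing k8s-master01)

In the output of the first master node's initialization, the last kubeadm join command (without --control-plane) is the one used to initialize worker nodes. It is shown below; do not run it yet.

kubeadm join apiserver.demo:16443 --token 4ix1k1.l8wg8pq9cnrm9fkd \
--discovery-token-ca-cert-hash sha256:cd2d7833513e58dc2eae952e674b2903d4f884d86fd47b00784d56aeeed2dcde

Validity period

While the token is valid you can use it to initialize any number of worker nodes. This guide treats the token as usable for 2 hours after initialization; kubeadm's default token TTL is 24 hours, and the two-hour limit applies to the uploaded certificates used when joining master nodes.

Initialize the worker

Run on every worker node

# Run only on worker nodes (k8s-node01, k8s-node02)

# Replace 192.168.1.10 with the IP address of the ApiServer LoadBalancer

export MASTER_IP=192.168.1.10

# Replace apiserver.demo with the APISERVER_NAME used when initializing the master node

export APISERVER_NAME=apiserver.demo

echo "${MASTER_IP} ${APISERVER_NAME}" >> /etc/hosts

# Replace with the worker join command printed by kubeadm init on the first master node

kubeadm join apiserver.demo:16443 --token 4ix1k1.l8wg8pq9cnrm9fkd \
--discovery-token-ca-cert-hash sha256:cd2d7833513e58dc2eae952e674b2903d4f884d86fd47b00784d56aeeed2dcde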

Option 2: initialize more than two hours after the first master node

Get a new token

Run on the first master node, k8s-master01

# Run only on the first master node, k8s-master01

[root@k8s-master01 ~]# kubeadm token create --print-join-command

This prints the kubeadm join command and its arguments, as shown below

kubeadm join apiserver.demo:16443 --token mpfjma.4vjjg8flqihor4vt --discovery-token-ca-cert-hash sha256:6f7a8e40a810323672de5eee6f4d19aa2dbdb38411845a1bf5dd63485c43d303

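Validity period

While the token is valid you can use it to initialize any number of worker nodes. A token created with kubeadm token create is valid for 24 hours by default; pass --ttl to choose a different lifetime.

Initialize the worker

Run on every worker node

# Run only on worker nodes

# Replace 192.168.1.10 with the IP address of the ApiServer LoadBalancer

[root@k8s-node01 ~]# export MASTER_IP=192.168.1.10

# Replace apiserver.demo with the APISERVER_NAME used when initializing the master node

[root@k8s-node01 ~]# export APISERVER_NAME=apiserver.demo

[root@k8s-node01 ~]# echo "${MASTER_IP} ${APISERVER_NAME}" >> /etc/hosts

# Replace with the output of kubeadm token create --print-join-command from the previous step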

[root@k8s-node01 ~]# kubeadm join apiserver.demo:16443 --token mpfjma.4vjjg8flqihor4vt --discovery-token-ca-cert-hash sha256:6f7a8e40a810323672de5eee6f4d19aa2dbdb38411845a1bf5dd63485c43d303

Check the worker initialization result

Run on the first master node, k8s-master01

# Run only on the first master node, k8s-master01

[root@k8s-master01 k8s]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master01 Ready master 47m v1.16.2

k8s-master02 Ready master 42m v1.16.2

k8s-master03 Ready master 37m v1.16.2

k8s-node01 Ready <none> 3m29s v1.16.2

[root@k8s-master01 k8s]#

Remove a worker node

WARNING: under normal circumstances you do not need to remove a worker node

Run on the worker node to be removed

[root@k8s-node01 ~]# kubeadm reset

Run on the first master node, k8s-master01

[root@k8s-master01 ~]# kubectl delete node k8s-nodex

- Replace k8s-nodex with the name of the worker node to remove

- Worker node names can be listed by running kubectl get nodes on the first master node, k8s-master01
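A common precaution that is not part of the steps above is to drain the node before deleting it, so that its pods are rescheduled first. The sketch below wraps the master-side commands; the helper names run and remove_worker and the DRY_RUN variable are hypothetical, and --delete-local-data is the flag name used by kubectl 1.16.

```shell
#!/bin/bash
# Execute a command, or only print it when DRY_RUN is set.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Master-side part of removing a worker: evict its pods, then delete the node object.
remove_worker() {
  local node="$1"
  run kubectl drain "$node" --ignore-daemonsets --delete-local-data
  run kubectl delete node "$node"
}

# Usage on k8s-master01 (kubeadm reset still has to be run on the worker itself):
#   DRY_RUN=1; remove_worker k8s-node01   # print the commands only
```

Printing the commands first makes it easy to confirm the node name before anything is evicted.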

Install the Ingress Controller

Kubernetes supports several Ingress Controllers (Traefik / Kong / Istio / Nginx, etc.); this article recommends https://github.com/nginxinc/kubernetes-ingress

# For production use, refer to https://github.com/nginxinc/kubernetes-ingress/blob/v1.5.5/docs/installation.md and customize it for your own situation

# Alternatively, download https://kuboard.cn/install-script/v1.16.2/nginx-ingress.yaml directly

apiVersion: v1

kind: Namespace

metadata:

name: nginx-ingress

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: nginx-ingress

namespace: nginx-ingress

---

apiVersion: v1

kind: Secret

metadata:

name: default-server-secret

namespace: nginx-ingress

type: Opaque

data:

tls.crt: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUN2akNDQWFZQ0NRREFPRjl0THNhWFhEQU5CZ2txaGtpRzl3MEJBUXNGQURBaE1SOHdIUVlEVlFRRERCWk8KUjBsT1dFbHVaM0psYzNORGIyNTBjbTlzYkdWeU1CNFhEVEU0TURreE1qRTRNRE16TlZvWERUSXpNRGt4TVRFNApNRE16TlZvd0lURWZNQjBHQTFVRUF3d1dUa2RKVGxoSmJtZHlaWE56UTI5dWRISnZiR3hsY2pDQ0FTSXdEUVlKCktvWklodmNOQVFFQkJRQURnZ0VQQURDQ0FRb0NnZ0VCQUwvN2hIUEtFWGRMdjNyaUM3QlBrMTNpWkt5eTlyQ08KR2xZUXYyK2EzUDF0azIrS3YwVGF5aGRCbDRrcnNUcTZzZm8vWUk1Y2Vhbkw4WGM3U1pyQkVRYm9EN2REbWs1Qgo4eDZLS2xHWU5IWlg0Rm5UZ0VPaStlM2ptTFFxRlBSY1kzVnNPazFFeUZBL0JnWlJVbkNHZUtGeERSN0tQdGhyCmtqSXVuektURXUyaDU4Tlp0S21ScUJHdDEwcTNRYzhZT3ExM2FnbmovUWRjc0ZYYTJnMjB1K1lYZDdoZ3krZksKWk4vVUkxQUQ0YzZyM1lma1ZWUmVHd1lxQVp1WXN2V0RKbW1GNWRwdEMzN011cDBPRUxVTExSakZJOTZXNXIwSAo1TmdPc25NWFJNV1hYVlpiNWRxT3R0SmRtS3FhZ25TZ1JQQVpQN2MwQjFQU2FqYzZjNGZRVXpNQ0F3RUFBVEFOCkJna3Foa2lHOXcwQkFRc0ZBQU9DQVFFQWpLb2tRdGRPcEsrTzhibWVPc3lySmdJSXJycVFVY2ZOUitjb0hZVUoKdGhrYnhITFMzR3VBTWI5dm15VExPY2xxeC9aYzJPblEwMEJCLzlTb0swcitFZ1U2UlVrRWtWcitTTFA3NTdUWgozZWI4dmdPdEduMS9ienM3bzNBaS9kclkrcUI5Q2k1S3lPc3FHTG1US2xFaUtOYkcyR1ZyTWxjS0ZYQU80YTY3Cklnc1hzYktNbTQwV1U3cG9mcGltU1ZmaXFSdkV5YmN3N0NYODF6cFErUyt1eHRYK2VBZ3V0NHh3VlI5d2IyVXYKelhuZk9HbWhWNThDd1dIQnNKa0kxNXhaa2VUWXdSN0diaEFMSkZUUkk3dkhvQXprTWIzbjAxQjQyWjNrN3RXNQpJUDFmTlpIOFUvOWxiUHNoT21FRFZkdjF5ZytVRVJxbStGSis2R0oxeFJGcGZnPT0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=

tls.key: LS0tLS1CRUdJTiBSU0EgUFJJVkFURSBLRVktLS0tLQpNSUlFcEFJQkFBS0NBUUVBdi91RWM4b1JkMHUvZXVJTHNFK1RYZUprckxMMnNJNGFWaEMvYjVyYy9XMlRiNHEvClJOcktGMEdYaVN1eE9ycXgrajlnamx4NXFjdnhkenRKbXNFUkJ1Z1B0ME9hVGtIekhvb3FVWmcwZGxmZ1dkT0EKUTZMNTdlT1l0Q29VOUZ4amRXdzZUVVRJVUQ4R0JsRlNjSVo0b1hFTkhzbysyR3VTTWk2Zk1wTVM3YUhudzFtMApxWkdvRWEzWFNyZEJ6eGc2clhkcUNlUDlCMXl3VmRyYURiUzc1aGQzdUdETDU4cGszOVFqVUFQaHpxdmRoK1JWClZGNGJCaW9CbTVpeTlZTW1hWVhsMm0wTGZzeTZuUTRRdFFzdEdNVWozcGJtdlFmazJBNnljeGRFeFpkZFZsdmwKMm82MjBsMllxcHFDZEtCRThCay90elFIVTlKcU56cHpoOUJUTXdJREFRQUJBb0lCQVFDZklHbXowOHhRVmorNwpLZnZJUXQwQ0YzR2MxNld6eDhVNml4MHg4Mm15d1kxUUNlL3BzWE9LZlRxT1h1SENyUlp5TnUvZ2IvUUQ4bUFOCmxOMjRZTWl0TWRJODg5TEZoTkp3QU5OODJDeTczckM5bzVvUDlkazAvYzRIbjAzSkVYNzZ5QjgzQm9rR1FvYksKMjhMNk0rdHUzUmFqNjd6Vmc2d2szaEhrU0pXSzBwV1YrSjdrUkRWYmhDYUZhNk5nMUZNRWxhTlozVDhhUUtyQgpDUDNDeEFTdjYxWTk5TEI4KzNXWVFIK3NYaTVGM01pYVNBZ1BkQUk3WEh1dXFET1lvMU5PL0JoSGt1aVg2QnRtCnorNTZud2pZMy8yUytSRmNBc3JMTnIwMDJZZi9oY0IraVlDNzVWYmcydVd6WTY3TWdOTGQ5VW9RU3BDRkYrVm4KM0cyUnhybnhBb0dCQU40U3M0ZVlPU2huMVpQQjdhTUZsY0k2RHR2S2ErTGZTTXFyY2pOZjJlSEpZNnhubmxKdgpGenpGL2RiVWVTbWxSekR0WkdlcXZXaHFISy9iTjIyeWJhOU1WMDlRQ0JFTk5jNmtWajJTVHpUWkJVbEx4QzYrCk93Z0wyZHhKendWelU0VC84ajdHalRUN05BZVpFS2FvRHFyRG5BYWkyaW5oZU1JVWZHRXFGKzJyQW9HQkFOMVAKK0tZL0lsS3RWRzRKSklQNzBjUis3RmpyeXJpY05iWCtQVzUvOXFHaWxnY2grZ3l4b25BWlBpd2NpeDN3QVpGdwpaZC96ZFB2aTBkWEppc1BSZjRMazg5b2pCUmpiRmRmc2l5UmJYbyt3TFU4NUhRU2NGMnN5aUFPaTVBRHdVU0FkCm45YWFweUNweEFkREtERHdObit3ZFhtaTZ0OHRpSFRkK3RoVDhkaVpBb0dCQUt6Wis1bG9OOTBtYlF4VVh5YUwKMjFSUm9tMGJjcndsTmVCaWNFSmlzaEhYa2xpSVVxZ3hSZklNM2hhUVRUcklKZENFaHFsV01aV0xPb2I2NTNyZgo3aFlMSXM1ZUtka3o0aFRVdnpldm9TMHVXcm9CV2xOVHlGanIrSWhKZnZUc0hpOGdsU3FkbXgySkJhZUFVWUNXCndNdlQ4NmNLclNyNkQrZG8wS05FZzFsL0FvR0FlMkFVdHVFbFNqLzBmRzgrV3hHc1RFV1JqclRNUzRSUjhRWXQKeXdjdFA4aDZxTGxKUTRCWGxQU05rMXZLTmtOUkxIb2pZT2pCQTViYjhibXNVU1BlV09NNENoaFJ4QnlHbmR2eAphYkJDRkFwY0IvbEg4d1R0alVZYlN5T294ZGt5OEp0ek90ajJhS0FiZHd6NlArWDZDODhjZmxYVFo5MWpYL3RMCjF3TmRKS2tDZ1lCbyt0UzB5TzJ2SWFmK2UwSkN5TGh
zVDQ5cTN3Zis2QWVqWGx2WDJ1VnRYejN5QTZnbXo5aCsKcDNlK2JMRUxwb3B0WFhNdUFRR0xhUkcrYlNNcjR5dERYbE5ZSndUeThXczNKY3dlSTdqZVp2b0ZpbmNvVlVIMwphdmxoTUVCRGYxSjltSDB5cDBwWUNaS2ROdHNvZEZtQktzVEtQMjJhTmtsVVhCS3gyZzR6cFE9PQotLS0tLUVORCBSU0EgUFJJVkFURSBLRVktLS0tLQo=

---

kind: ConfigMap

apiVersion: v1

metadata:

name: nginx-config

namespace: nginx-ingress

data:

server-names-hash-bucket-size: "1024"

---

kind: ClusterRole

apiVersion: rbac.authorization.k8s.io/v1beta1

metadata:

name: nginx-ingress

rules:

- apiGroups:

- ""

resources:

- services

- endpoints

verbs:

- get

- list

- watch

- apiGroups:

- ""

resources:

- secrets

verbs:

- get

- list

- watch

- apiGroups:

- ""

resources:

- configmaps

verbs:

- get

- list

- watch

- update

- create

- apiGroups:

- ""

resources:

- pods

verbs:

- list

- apiGroups:

- ""

resources:

- events

verbs:

- create

- patch

- apiGroups:

- extensions

resources:

- ingresses

verbs:

- list

- watch

- get

- apiGroups:

- "extensions"

resources:

- ingresses/status

verbs:

- update

- apiGroups:

- k8s.nginx.org

resources:

- virtualservers

- virtualserverroutes

verbs:

- list

- watch

- get

---

kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1beta1

metadata:

name: nginx-ingress

subjects:

- kind: ServiceAccount

name: nginx-ingress

namespace: nginx-ingress

roleRef:

kind: ClusterRole

name: nginx-ingress

apiGroup: rbac.authorization.k8s.io

---

apiVersion: apps/v1

kind: DaemonSet

metadata:

name: nginx-ingress

namespace: nginx-ingress

annotations:

prometheus.io/scrape: "true"

prometheus.io/port: "9113"

spec:

selector:

matchLabels:

app: nginx-ingress

template:

metadata:

labels:

app: nginx-ingress

spec:

serviceAccountName: nginx-ingress

containers:

- image: nginx/nginx-ingress:1.5.5

name: nginx-ingress

ports:

- name: http

containerPort: 80

hostPort: 80

- name: https

containerPort: 443

hostPort: 443

- name: prometheus

containerPort: 9113

env:

- name: POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

- name: POD_NAME

valueFrom:

fieldRef:

fieldPath: metadata.name

args:

- -nginx-configmaps=$(POD_NAMESPACE)/nginx-config

- -default-server-tls-secret=$(POD_NAMESPACE)/default-server-secret

#- -v=3 # Enables extensive logging. Useful for troubleshooting.

#- -report-ingress-status

#- -external-service=nginx-ingress

#- -enable-leader-election

- -enable-prometheus-metrics

#- -enable-custom-resources

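Run on the master node

# Run only on the first master node, k8s-master01

[root@k8s-master01 ~]# kubectl apply -f nginx-ingress.yaml

WARNING: if you plan to use Kubernetes in production, refer to the Installing Ingress Controller documentation and complete the Ingress configuration

Complete the following configuration at the IaaS layer (public Load Balancer)

Create a Load Balancer:

- Listener 1: 80 / TCP, SOURCE_ADDRESS session persistence

- Server pool 1: port 80 on all demo-worker-x-x nodes

- Listener 2: 443 / TCP, SOURCE_ADDRESS session persistence

- Server pool 2: port 443 on all demo-worker-x-x nodes

Assume the IP address of the newly created Load Balancer is 192.168.1.10

Configure DNS resolution

Resolve the domain *.demo.yourdomain.com to the Load Balancer's IP address, 192.168.1.10

Verify the configuration

Visiting a.demo.yourdomain.com in a browser should return a 404 Not Found error page, because no Ingress rule matches that host yet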

Deploy Metrics-Server

Kubernetes uses Metrics-server to collect system resource metrics; it exposes the CPU and memory usage of nodes and Pods through the Metrics API.

Get metrics-server.yaml

# Download from https://addons.kuboard.cn/metrics-server/0.3.6/metrics-server.yaml

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

name: system:aggregated-metrics-reader

labels:

rbac.authorization.k8s.io/aggregate-to-view: "true"

rbac.authorization.k8s.io/aggregate-to-edit: "true"

rbac.authorization.k8s.io/aggregate-to-admin: "true"

rules:

- apiGroups: ["metrics.k8s.io"]

resources: ["pods", "nodes"]

verbs: ["get", "list", "watch"]

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: metrics-server:system:auth-delegator

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:auth-delegator

subjects:

- kind: ServiceAccount

name: metrics-server

namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:

name: metrics-server-auth-reader

namespace: kube-system

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: Role

name: extension-apiserver-authentication-reader

subjects:

- kind: ServiceAccount

name: metrics-server

namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

name: system:metrics-server

rules:

- apiGroups:

- ""

resources:

- pods

- nodes

- nodes/stats

- namespaces

verbs:

- get

- list

- watch

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: system:metrics-server

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:metrics-server

subjects:

- kind: ServiceAccount

name: metrics-server

namespace: kube-system

---

apiVersion: apiregistration.k8s.io/v1beta1

kind: APIService

metadata:

name: v1beta1.metrics.k8s.io

spec:

service:

name: metrics-server

namespace: kube-system

group: metrics.k8s.io

version: v1beta1

insecureSkipTLSVerify: true

groupPriorityMinimum: 100

versionPriority: 100

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: metrics-server

namespace: kube-system

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: metrics-server

namespace: kube-system

labels:

k8s-app: metrics-server

spec:

selector:

matchLabels:

k8s-app: metrics-server

template:

metadata:

name: metrics-server

labels:

k8s-app: metrics-server

spec:

serviceAccountName: metrics-server

volumes:

# mount in tmp so we can safely use from-scratch images and/or read-only containers

- name: tmp-dir

emptyDir: {}

hostNetwork: true

containers:

- name: metrics-server

image: registry.cn-hangzhou.aliyuncs.com/google_containers/metrics-server-amd64:v0.3.6

# command:

# - /metrics-server

# - --kubelet-insecure-tls

# - --kubelet-preferred-address-types=InternalIP

args:

- --cert-dir=/tmp

- --secure-port=4443

- --kubelet-insecure-tls=true

- --kubelet-preferred-address-types=InternalIP,Hostname,InternalDNS,ExternalDNS

ports:

- name: main-port

containerPort: 4443

protocol: TCP

securityContext:

readOnlyRootFilesystem: true

runAsNonRoot: true

runAsUser: 1000

imagePullPolicy: Always

volumeMounts:

- name: tmp-dir

mountPath: /tmp

nodeSelector:

beta.kubernetes.io/os: linux

---

apiVersion: v1

kind: Service

metadata:

name: metrics-server

namespace: kube-system

labels:

kubernetes.io/name: "Metrics-server"

kubernetes.io/cluster-service: "true"

spec:

selector:

k8s-app: metrics-server

ports:

- port: 443

protocol: TCP

targetPort: main-port

Deploy metrics-server

[root@k8s-master01 k8s]# kubectl apply -f metrics-server.yaml

Check the result

[root@k8s-master01 k8s]# kubectl get pods -n kube-system

Deploy kuboard

① Get the kuboard.yaml file

# Download from https://kuboard.cn/install-script/kuboard.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: kuboard

namespace: kube-system

annotations:

k8s.kuboard.cn/displayName: kuboard

k8s.kuboard.cn/ingress: "true"

k8s.kuboard.cn/service: NodePort

k8s.kuboard.cn/workload: kuboard

labels:

k8s.kuboard.cn/layer: monitor

k8s.kuboard.cn/name: kuboard

spec:

replicas: 1

selector:

matchLabels:

k8s.kuboard.cn/layer: monitor

k8s.kuboard.cn/name: kuboard

template:

metadata:

labels:

k8s.kuboard.cn/layer: monitor

k8s.kuboard.cn/name: kuboard

spec:

containers:

- name: kuboard

image: eipwork/kuboard:latest

imagePullPolicy: Always

tolerations:

- key: node-role.kubernetes.io/master

effect: NoSchedule

---

apiVersion: v1

kind: Service

metadata:

name: kuboard

namespace: kube-system

spec:

type: NodePort

ports:

- name: http

port: 80

targetPort: 80

nodePort: 32567

selector:

k8s.kuboard.cn/layer: monitor

k8s.kuboard.cn/name: kuboard

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: kuboard-user

namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: kuboard-user

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: cluster-admin

subjects:

- kind: ServiceAccount

name: kuboard-user

namespace: kube-system

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: kuboard-viewer

namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: kuboard-viewer

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: view

subjects:

- kind: ServiceAccount

name: kuboard-viewer

namespace: kube-system

---

apiVersion: extensions/v1beta1

kind: Ingress

metadata:

name: kuboard

namespace: kube-system

annotations:

k8s.kuboard.cn/displayName: kuboard

k8s.kuboard.cn/workload: kuboard

nginx.org/websocket-services: "kuboard"

nginx.com/sticky-cookie-services: "serviceName=kuboard srv_id expires=1h path=/"

spec:

rules:

- host: kuboard.yourdomain.com

http:

paths:

- path: /

backend:

serviceName: kuboard

servicePort: http

② Deploy kuboard

[root@k8s-master01 ~]# kubectl apply -f kuboard.yaml

Check that Kuboard is running:

[root@k8s-master01 ~]# kubectl get pods -l k8s.kuboard.cn/name=kuboard -n kube-system

NAME READY STATUS RESTARTS AGE

kuboard-5ffbc8466d-pqr7g 1/1 Running 0 2m49s

[root@k8s-master01 ~]#

③ Get the token

Administrator user

Permissions:

- This token has ClusterAdmin permissions and can perform any operation

Run the following command to print the token

[root@k8s-master01 k8s]# echo $(kubectl -n kube-system get secret $(kubectl -n kube-system get secret | grep kuboard-user | awk '{print $1}') -o go-template='{{.data.token}}' | base64 -d)

eyJhbGciOiJSUzI1NiIsImtpZCI6Ik9kTk1ybDlfRUZPRHcyMUg1QWxEY2dTUlQwQlRGUDdIVVZDUFVNeWIweWsifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJrdWJvYXJkLXVzZXItdG9rZW4tcnhmZzgiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoia3Vib2FyZC11c2VyIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiNjdhMjUwYmYtMDkzNC00YjBmLThlYjQtOWZmYzA2YWViODkxIiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50Omt1YmUtc3lzdGVtOmt1Ym9hcmQtdXNlciJ9.UvcRk7BqiCvgqxFB3iAftkKbd514EGehh9KOhlSYi1o-OIUglgvYSShK3NqsWXVF48bReTv0FtvUYGujewovhsY2rpIAnfGHbO2MQOhJCaKHox3FKj0a7tVwbmmjGQrCOVxuGdybmPjMiX9AZPUO2dTH1uA4wiWRAYISgloEv4pDeOP6_C3C3OCAto6Owq3xPCcKBqelrAMi4f8023mMrMT-HAmJE5outnHysPX37xq1MXA8iZmoxakBoL77FOMz7Q92EofoqOV76CR53sT1PaQj2miChih3bgjA8veZff0lkLPrLXj16ddUaW-esYb3IsjZ_iQEwRqzyWJSb7RsVQ

[root@k8s-master01 k8s]#
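The value printed above is a JSON Web Token: three base64url-encoded parts separated by dots, the second of which is a JSON payload naming the service account. To confirm which account a token belongs to before pasting it anywhere, the payload can be decoded locally. A minimal sketch follows; the function name jwt_payload is hypothetical.

```shell
#!/bin/bash
# Print the decoded JSON payload (second dot-separated part) of a JWT.
jwt_payload() {
  local payload
  # Take the middle part and map the base64url alphabet back to standard base64.
  payload=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
  # base64url drops the "=" padding; restore it so the length is a multiple of 4.
  case $(( ${#payload} % 4 )) in
    2) payload="${payload}==" ;;
    3) payload="${payload}=" ;;
  esac
  printf '%s' "$payload" | base64 -d
}

# Usage:
#   jwt_payload "$TOKEN"    # TOKEN holds the value printed by the command above
```

Decoding only reads the token; it does not verify the signature.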

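Read-only user

Permissions:

- view: can view the contents of namespaces

- system:node: can view node information

- system:persistent-volume-provisioner: can view storage classes and persistent volume claims

When to use it

The read-only user cannot change the cluster configuration, which makes it well suited for handing out read-only Kuboard access to developers in a development environment so that they can diagnose problems easily

Command:

Run the following command to get the read-only user's token; use the token field of the output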

[root@k8s-master01 k8s]# echo $(kubectl -n kube-system get secret $(kubectl -n kube-system get secret | grep kuboard-viewer | awk '{print $1}') -o go-template='{{.data.token}}' | base64 -d)

eyJhbGciOiJSUzI1NiIsImtpZCI6Ik9kTk1ybDlfRUZPRHcyMUg1QWxEY2dTUlQwQlRGUDdIVVZDUFVNeWIweWsifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJrdWJvYXJkLXZpZXdlci10b2tlbi05eHhtciIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50Lm5hbWUiOiJrdWJvYXJkLXZpZXdlciIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50LnVpZCI6IjFjMzU2ZDE1LTU5MjgtNDJlMC04ZGVjLWJkNmNhNTllMDU3MyIsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDprdWJlLXN5c3RlbTprdWJvYXJkLXZpZXdlciJ9.AExLhMFCGOXecS4XQVENBedWVUf0TdA2qAat9qagvj9NEbuvPnsHIG6sahsNiSFPCVzLDdUCFWrwCw-iYGOkUglUgSDEMTrMtOBhq8U4aW3EuAQvY4aYNTV5h2F5Cq77JDwd0PMFVp_P0dOHFqwu05g59yCNrSf-RuqlmCYh81h-nVEEWPa96n_j59ResZgJsdl6dQEp2_n8pLVDphBA3F5LR7WhzIcwAlemSRBA2N60qPUv0KTsx_p_Y3dvgjpTFsS9RCLEAsdgugFHOaUMuSD9Ug2XpVraftE7TjaDFeCSoBIEjEhOGhpZi54HDcGMKtuO24rpOJm_lrEdA_fGtw

[root@k8s-master01 k8s]#

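④ Access kuboard

The Kuboard Service is exposed as a NodePort; in Kuboard.yaml the NodePort is 32567. You can access Kuboard as follows.

http://<IP address of any worker node>:32567/

Enter the token obtained in the previous step to open the Kuboard cluster overview page

Note:

- If you use Alibaba Cloud, Tencent Cloud, or a similar provider, allow inbound access to port 32567 on the worker nodes in the security group settings

- You can also edit Kuboard.yaml to use a NodePort of your own choosing

⑤ Go directly to the cluster overview page

To open the cluster overview page without logging in, use a URL of the following form:

http://<IP address of any worker node>:32567/dashboard?k8sToken=yourtoken

Other pages

The same applies to every other Kuboard page: add k8sToken as a query parameter to skip the token prompt

⑥ Go directly to the terminal page

To open a pod's console directly without logging in, use a URL of the following form:

http://<IP address of any worker node>:32567/console/yournamespace/yourpod?containerName=yourcontainer&shell=bash&k8sToken=yourtoken

The shell parameter accepts the following values:

bash: use /bin/bash as the shell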
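The URL formats above can be generated rather than typed. A minimal sketch, assuming the NodePort 32567 from Kuboard.yaml; the function names kuboard_dashboard_url and kuboard_console_url are hypothetical.

```shell
#!/bin/bash
# Build the login-free cluster overview URL: <node-ip> <token>
kuboard_dashboard_url() {
  printf 'http://%s:32567/dashboard?k8sToken=%s\n' "$1" "$2"
}

# Build the login-free console URL: <node-ip> <namespace> <pod> <container> <shell> <token>
kuboard_console_url() {
  printf 'http://%s:32567/console/%s/%s?containerName=%s&shell=%s&k8sToken=%s\n' \
    "$1" "$2" "$3" "$4" "$5" "$6"
}

# Usage:
#   kuboard_console_url 192.168.1.31 default mypod mycontainer bash "$TOKEN"
```

Because the token travels in the query string, URLs built this way should be treated like the token itself and not shared or logged.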
