Linux environment setup: common software

JDK

# Clean up before installing: find and remove any preinstalled JDK

rpm -qa | grep jdk

rpm -qa | grep gcj

yum -y remove java-xxx-xxx

# Install

# Option 1: tarball

mkdir /usr/local/java

# Move the JDK tarball into this directory with mv

cd /usr/local/java

# Extract

tar -xzvf jdk.file

# Configure environment variables

vi /etc/profile

JAVA_HOME=/usr/local/java/jdk1.8.0_151

CLASSPATH=$JAVA_HOME/lib/

PATH=$PATH:$JAVA_HOME/bin

export PATH JAVA_HOME CLASSPATH

# Reload the profile

source /etc/profile

# Option 2: rpm

wget --no-check-certificate --no-cookies --header "Cookie: oraclelicense=accept-securebackup-cookie" http://download.oracle.com/otn-pub/java/jdk/8u131-b11/d54c1d3a095b4ff2b6607d096fa80163/jdk-8u131-linux-x64.rpm

chmod +x jdk-8u131-linux-x64.rpm

rpm -ivh jdk-8u131-linux-x64.rpm

# Verify that the JDK installed correctly

java -version

vim /etc/profile

export JAVA_HOME=/usr/java/jdk1.8.0_131

export JRE_HOME=${JAVA_HOME}/jre

export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib:$CLASSPATH

export JAVA_PATH=${JAVA_HOME}/bin:${JRE_HOME}/bin

export PATH=$PATH:${JAVA_PATH}

source /etc/profile
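
Before writing a JAVA_HOME value into /etc/profile, it can help to confirm that the directory really contains a JDK. The sketch below is only an illustration: check_java_home is a hypothetical helper name, not part of any JDK tooling, and it only tests for an executable bin/java.

```shell
# check_java_home DIR
# Succeeds only if DIR contains an executable bin/java.
# (Hypothetical helper for illustration; not shipped with the JDK.)
check_java_home() {
    dir=$1
    if [ -z "$dir" ]; then
        echo "JAVA_HOME is empty" >&2
        return 1
    fi
    if [ ! -x "$dir/bin/java" ]; then
        echo "no executable java under $dir/bin" >&2
        return 1
    fi
    echo "JAVA_HOME looks valid: $dir"
}

# Example usage (paths taken from the two install options above):
#   check_java_home /usr/local/java/jdk1.8.0_151
#   check_java_home /usr/java/jdk1.8.0_131
```

If the check fails, the usual cause is that the extracted directory name differs from the one written in the profile.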

MySQL

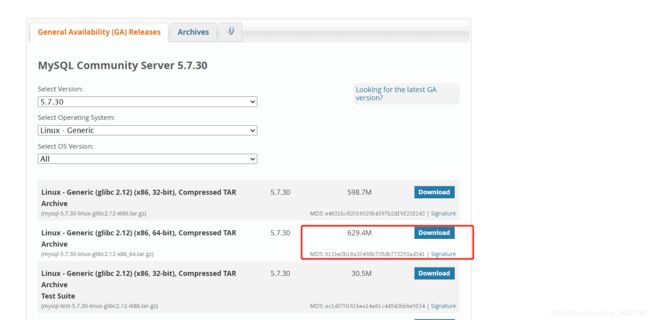

cd /usr/local/

wget https://cdn.mysql.com//Downloads/MySQL-5.7/mysql-5.7.34-linux-glibc2.12-x86_64.tar.gz

tar -zxvf mysql-5.7.34-linux-glibc2.12-x86_64.tar.gz

mv mysql-5.7.34-linux-glibc2.12-x86_64 mysql

# Create the mysql group, then create the mysql user in that group

groupadd mysql

useradd -g mysql mysql

or

useradd -r -s /sbin/nologin -g mysql -d /usr/local/mysql mysql # create the mysql user with no login shell

# Change the owner and group

chown -R mysql mysql/

chgrp -R mysql mysql/

# Create the data directory

mkdir -p /data/mysql

chown -R mysql /data/mysql

# Check whether /etc/my.cnf exists; create or edit it with the following content

[mysql]

# Default character set for the mysql client

default-character-set=utf8

[mysqld]

skip-name-resolve

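# Listen on port 3306

port = 3306

# MySQL installation directory

basedir=/usr/local/mysql

# Directory where MySQL stores its data

datadir=/data/mysql

# Maximum number of connections

max_connections=200

# Server character set (the built-in default is the 8-bit latin1)

character-set-server=utf8

# Default storage engine for new tables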

default-storage-engine=INNODB

lower_case_table_names=1

max_allowed_packet=16M
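
A mismatch between datadir in my.cnf and the directory created earlier is an easy mistake to make, so it may be worth reading the value back before initializing. The sketch below assumes the simple key=value layout shown above; cnf_get is a hypothetical helper, not a MySQL tool, and it ignores features such as !include directives.

```shell
# cnf_get FILE SECTION KEY
# Print the value of KEY inside [SECTION] of an ini-style file.
# (Hypothetical helper; handles only plain "key=value" lines.)
cnf_get() {
    awk -v section="$2" -v key="$3" '
        /^[ \t]*#/ { next }                      # skip comment lines
        /^[ \t]*\[.*\][ \t]*$/ {                 # section header such as [mysqld]
            name = $0
            gsub(/\[/, "", name)
            gsub(/\]/, "", name)
            gsub(/[ \t]/, "", name)
            in_section = (name == section)
            next
        }
        in_section {
            eq = index($0, "=")
            if (eq == 0) next                    # flags without a value
            k = substr($0, 1, eq - 1)
            v = substr($0, eq + 1)
            gsub(/^[ \t]+|[ \t]+$/, "", k)       # trim the key
            gsub(/^[ \t]+|[ \t]+$/, "", v)       # trim the value
            if (k == key) print v
        }
    ' "$1"
}

# Example usage:
#   cnf_get /etc/my.cnf mysqld datadir
#   cnf_get /etc/my.cnf mysqld port
```

The printed datadir should be the same path that was created and chown-ed to the mysql user.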

# Switch to the bin directory

cd /usr/local/mysql/bin

# Initialization prints a temporary root password; save it (ar_CgaorJ6d1)

./mysqld --initialize --user=mysql --basedir=/usr/local/mysql --datadir=/data/mysql

./mysql_ssl_rsa_setup --datadir=/data/mysql

cd /usr/local/mysql/support-files

cp mysql.server /etc/init.d/mysql

vim /etc/init.d/mysql

basedir=/usr/local/mysql

datadir=/data/mysql

/etc/init.d/mysql start

mysql -u root -p # enter the temporary password saved earlier

# If the mysql command is not found: ln -s /usr/local/mysql/bin/mysql /usr/bin

set password=password('root');

grant all privileges on *.* to root@"%" identified by "root";

flush privileges;

use mysql;

select host,user from user;

# Configure MySQL to start on boot

chmod 755 /etc/init.d/mysql

# If the init script is missing: cd /usr/local/mysql/support-files && cp mysql.server /etc/init.d/mysql

chkconfig --add mysql

chkconfig --level 345 mysql on

service mysql start

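On CentOS 7 and later, systemctl can also manage the service (the unit is mysqld.service for package installs; the /etc/init.d/mysql script above is exposed as mysql.service)

Start the service

systemctl start mysqld.service

Stop the service

systemctl stop mysqld.service

Restart the service

systemctl restart mysqld.service

Check the service status

systemctl status mysqld.service

Enable start on boot

systemctl enable mysqld.service

Disable start on boot

systemctl disable mysqld.service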

Elasticsearch

# Elasticsearch cannot be started as root, so create a dedicated user

useradd zbing

passwd zbing

wget https://artifacts.elastic.co/downloads/elasticsearch/elasticsearch-7.12.1-linux-x86_64.tar.gz

tar -zxvf elasticsearch-7.12.1-linux-x86_64.tar.gz

mv elasticsearch-7.12.1 elasticsearch

chown -R zbing elasticsearch*

cd elasticsearch/config

vim elasticsearch.yml

discovery.type: single-node

http.cors.enabled: true

http.cors.allow-origin: "*"

cd ../bin

./elasticsearch

# Start in the background

./elasticsearch -d

Common errors

#[1]: max file descriptors [4096] for elasticsearch process is too low, increase to at least [65535]

vim /etc/security/limits.conf

* soft nofile 65535

* hard nofile 65535

#[2]: max number of threads [3818] for user [admin] is too low, increase to at least [4096]

vim /etc/security/limits.conf

* soft nproc 4096

* hard nproc 4096

#[3]: max virtual memory areas vm.max_map_count [65530] is too low, increase to at least [262144]

vim /etc/sysctl.conf

vm.max_map_count=262144

sysctl -p
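
The three bootstrap errors above all compare a current system limit against a minimum. A small preflight check, sketched below, can report them before Elasticsearch is started. check_min is a hypothetical helper; the thresholds are the ones quoted in the error messages, and the limits should be read as the user that runs Elasticsearch, after logging in again so that limits.conf takes effect.

```shell
# check_min NAME CURRENT REQUIRED
# Report whether CURRENT meets the REQUIRED minimum.
# (Hypothetical helper; "unlimited" is treated as sufficient.)
check_min() {
    name=$1
    current=$2
    required=$3
    if [ "$current" = "unlimited" ] || [ "$current" -ge "$required" ]; then
        echo "ok: $name=$current (need >= $required)"
    else
        echo "low: $name=$current (need >= $required)"
        return 1
    fi
}

# Example usage, run as the Elasticsearch user:
#   check_min nofile "$(ulimit -n)" 65535
#   check_min nproc "$(ulimit -u)" 4096
#   check_min vm.max_map_count "$(sysctl -n vm.max_map_count)" 262144
```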

elasticsearch-head

https://github.com/mobz/elasticsearch-head

wget https://github.com/mobz/elasticsearch-head/archive/refs/tags/v5.0.0.tar.gz

Extract the archive and enter its directory

npm install

# If npm install fails with: Failed at the [email protected] install script

npm install [email protected] --ignore-scripts

npm install

npm run start

Node.js must be installed first

wget https://nodejs.org/dist/v14.17.0/node-v14.17.0-linux-x64.tar.gz

Create symlinks

ln -s .... node /usr/bin/node

ln -s .... npm /usr/bin/npm

npm config set registry https://registry.npm.taobao.org

# After setting the registry, verify it with the command below

npm config ls

ZooKeeper

wget http://mirrors.hust.edu.cn/apache/zookeeper/zookeeper-3.6.2/apache-zookeeper-3.6.2-bin.tar.gz

tar -zxvf apache-zookeeper-3.6.2-bin.tar.gz -C /usr/local/

cd /usr/local/apache-zookeeper-3.6.2-bin/

cp conf/zoo_sample.cfg conf/zoo.cfg

vim conf/zoo.cfg

dataDir=/tmp/zookeeper/data

dataLogDir=/tmp/zookeeper/log
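
Both directories above live under /tmp, which many systems clear on reboot, so the settings are probably only suitable for trying things out. The sketch below reads a key from zoo.cfg and flags values under /tmp; cfg_value and warn_if_tmp are hypothetical helper names, and the key=value layout is assumed from the sample file.

```shell
# cfg_value FILE KEY
# Print the value of KEY from a key=value file (the last occurrence wins).
cfg_value() {
    awk -F= -v key="$2" '
        $1 == key { v = substr($0, length(key) + 2) }
        END { if (v != "") print v }
    ' "$1"
}

# warn_if_tmp PATH
# Warn when PATH is /tmp or below it.
warn_if_tmp() {
    case $1 in
        /tmp|/tmp/*) echo "warning: $1 is under /tmp and may be cleared on reboot" ;;
        *) echo "ok: $1" ;;
    esac
}

# Example usage, from the ZooKeeper directory:
#   warn_if_tmp "$(cfg_value conf/zoo.cfg dataDir)"
#   warn_if_tmp "$(cfg_value conf/zoo.cfg dataLogDir)"
```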

./bin/zkServer.sh start

./bin/zkCli.sh -server 127.0.0.1:2181

Start on boot

In /etc/init.d, create a script named zookeeper

vim /etc/init.d/zookeeper

#!/bin/bash

# chkconfig: 2345 20 90

# description: zookeeper

# processname: zookeeper

export JAVA_HOME=/usr/local/java/jdk1.8.0_151

case "$1" in

start) su root /usr/local/apache-zookeeper-3.6.2-bin/bin/zkServer.sh start;;

stop) su root /usr/local/apache-zookeeper-3.6.2-bin/bin/zkServer.sh stop;;

status) su root /usr/local/apache-zookeeper-3.6.2-bin/bin/zkServer.sh status;;

restart) su root /usr/local/apache-zookeeper-3.6.2-bin/bin/zkServer.sh restart;;

*) echo "require start|stop|status|restart" ;;

esac

chmod +x /etc/init.d/zookeeper

# Register the service to start on boot

chkconfig --add zookeeper

chkconfig --list zookeeper

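Use service zookeeper start/stop to start and stop ZooKeeper, and service zookeeper status to check its state.

Or call the script directly: /etc/init.d/zookeeper start/stop/status

Related book: 《从Paxos到Zookeeper 分布式一致性原理实战》 (From Paxos to ZooKeeper: Distributed Consistency Principles in Practice)

A detailed guide to using ZooKeeper with the Apache Curator framework: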

https://www.cnblogs.com/erbing/p/9799098.html

http://www.throwable.club/2018/12/16/zookeeper-curator-usage/#Zookeeper%E5%AE%A2%E6%88%B7%E7%AB%AFCurator%E4%BD%BF%E7%94%A8%E8%AF%A6%E8%A7%A3

Hadoop

Standalone deployment

# Create a hadoop user

useradd -m hadoop

passwd hadoop

# Optional: grant sudo privileges

vim /etc/sudoers

# Log in to localhost

ssh localhost

# Set up passwordless login

cd ~/.ssh/

ssh-keygen -t rsa

cat ./id_rsa.pub >> ./authorized_keys

~/.ssh must have mode 700

authorized_keys must have mode 644

chmod 700 ~/.ssh

chmod 644 ~/.ssh/authorized_keys
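
sshd may ignore authorized_keys when the permissions are too open, so if ssh localhost still asks for a password it is worth reading the modes back. This is a minimal sketch: check_mode is a hypothetical helper, and it relies on GNU stat (stat -c), which is what CentOS ships.

```shell
# check_mode PATH MODE
# Compare the octal permission bits of PATH with MODE (for example 700).
# (Hypothetical helper; uses GNU coreutils stat.)
check_mode() {
    path=$1
    want=$2
    have=$(stat -c '%a' "$path") || return 1
    if [ "$have" = "$want" ]; then
        echo "ok $path $have"
    else
        echo "bad $path $have (want $want)"
        return 1
    fi
}

# Example usage:
#   check_mode ~/.ssh 700
#   check_mode ~/.ssh/authorized_keys 644
```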

# Download Hadoop

wget https://archive.apache.org/dist/hadoop/common/hadoop-3.3.0/hadoop-3.3.0.tar.gz

tar -zxvf hadoop-3.3.0.tar.gz -C /usr/local/

chown -R hadoop /usr/local/hadoop-3.3.0/

cd /usr/local/hadoop-3.3.0/bin

./hadoop version

cd /usr/local/hadoop-3.3.0

mkdir input

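cp ./etc/hadoop/*.xml ./input # copy the configuration files into input

./bin/hadoop jar ./share/hadoop/mapreduce/hadoop-mapreduce-examples-*.jar grep ./input ./output 'dfs[a-z.]+'

cat ./output/* # view the result, for example: 1 dfsadmin

On success, the grep example reads the input directory, extracts every string that matches the regular expression dfs[a-z.]+, and writes the number of occurrences of each match to /usr/local/hadoop-3.3.0/output.

Note: running the command a second time fails, because Hadoop does not overwrite an existing output directory by default. Delete the output directory before running it again.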
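
To see what the example job computes, roughly the same count can be reproduced locally with standard tools. This is only a sketch for comparison, not how Hadoop implements it; count_dfs_terms is a hypothetical helper name, and it assumes the input directory holds .xml files as above.

```shell
# count_dfs_terms DIR
# Count every string matching dfs[a-z.]+ in DIR/*.xml and print
# "count term" lines, most frequent first.
# (Hypothetical helper; a local stand-in for the MapReduce grep example.)
count_dfs_terms() {
    grep -ohE 'dfs[a-z.]+' "$1"/*.xml \
        | sort \
        | uniq -c \
        | awk '{ print $1, $2 }' \
        | sort -k1,1nr -k2,2
}

# Example usage, from /usr/local/hadoop-3.3.0:
#   count_dfs_terms ./input
```

Comparing this output with cat ./output/* is a quick way to confirm that the job ran over the files you expected.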

Pseudo-distributed deployment

Run Hadoop on a single node (one machine) in pseudo-distributed mode. This requires editing core-site.xml and hdfs-site.xml under ./etc/hadoop/.

core-site.xml

<configuration>

<property>

<name>hadoop.tmp.dir</name>

<value>file:/usr/local/hadoop-3.3.0/tmp</value>

<description>A base for other temporary directories.</description>

</property>

<property>

<name>fs.defaultFS</name>

<value>hdfs://hadoop01:9000</value>

</property>

</configuration>

hdfs-site.xml

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/usr/local/hadoop-3.3.0/tmp/dfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/usr/local/hadoop-3.3.0/tmp/dfs/data</value>

</property>

<property>

<name>dfs.http.address</name>

<value>0.0.0.0:50070</value>

</property>

</configuration>

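- dfs.replication: the number of replicas. A distributed file system normally stores several redundant copies of the data for reliability and safety, but pseudo-distributed mode has only one node, so there is only one replica.

- dfs.namenode.name.dir: the directory where the NameNode stores its metadata

- dfs.datanode.data.dir: the directory where the DataNode stores its data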
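
A typo in a property name is silently ignored by Hadoop, so reading the values back from the file can catch mistakes early. The sketch below is a deliberately naive line-based reader, not an XML parser: xml_prop is a hypothetical helper, and it assumes each name and value tag sits on one line. On a working installation, hdfs getconf -confKey reports the value Hadoop actually resolved.

```shell
# xml_prop FILE NAME
# Print the <value> that follows <name>NAME</name> in a Hadoop *-site.xml.
# (Hypothetical helper; assumes one tag per line, no nesting or comments.)
xml_prop() {
    awk -v key="$2" '
        /<name>/ {
            n = $0
            sub(/.*<name>/, "", n)
            sub(/<\/name>.*/, "", n)
        }
        /<value>/ {
            v = $0
            sub(/.*<value>/, "", v)
            sub(/<\/value>.*/, "", v)
            if (n == key) print v
        }
    ' "$1"
}

# Example usage, from the Hadoop directory:
#   xml_prop etc/hadoop/hdfs-site.xml dfs.replication
#   xml_prop etc/hadoop/core-site.xml fs.defaultFS
```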

Do not run Hadoop as root; use the hadoop user created earlier.

cd /usr/local/hadoop-3.3.0

# Format the NameNode

./bin/hdfs namenode -format

# Start HDFS

./sbin/start-dfs.sh

./sbin/start-dfs.sh

If JAVA_HOME is reported as not set:

Starting namenodes on [localhost]

localhost: Error: JAVA_HOME is not set and could not be found.

localhost: Error: JAVA_HOME is not set and could not be found.

Starting secondary namenodes [0.0.0.0]

In etc/hadoop/hadoop-env.sh under the Hadoop directory, set JAVA_HOME to an absolute path: change export JAVA_HOME=$JAVA_HOME to export JAVA_HOME=/usr/lib/jvm/default-java (use the path of your own JDK).

Use jps to check whether Hadoop started. If the DataNode, NameNode, and SecondaryNameNode processes are listed, startup succeeded.

jps

4821 Jps

4459 DataNode

4348 NameNode

4622 SecondaryNameNode
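
Reading the jps listing by eye works, but the check can also be scripted. The sketch below reads jps output on standard input and prints any of the three expected daemons that are missing; missing_daemons is a hypothetical helper name.

```shell
# missing_daemons
# Read `jps` output on stdin and print each expected HDFS daemon
# that does not appear in it. Prints nothing when all are running.
# (Hypothetical helper for illustration.)
missing_daemons() {
    out=$(cat)
    for d in NameNode DataNode SecondaryNameNode; do
        # Match the daemon name as the whole second field, so that
        # NameNode is not satisfied by SecondaryNameNode.
        if ! printf '%s\n' "$out" \
            | awk -v d="$d" '$2 == d { found = 1 } END { exit !found }'; then
            echo "$d"
        fi
    done
}

# Example usage:
#   jps | missing_daemons
```

An empty result means all three processes are up; any name printed points at the daemon whose log under the Hadoop logs directory is worth checking.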

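If something goes wrong, reset and restart:

./sbin/stop-dfs.sh # stop HDFS

rm -r ./tmp # delete the tmp directory; note that this erases all existing HDFS data

./bin/hdfs namenode -format # reformat the NameNode

./sbin/start-dfs.sh # start again

Web UI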

http://localhost:50070/dfshealth.html

vim /etc/profile

export HADOOP_HOME=/usr/local/hadoop-3.3.0

export PATH=$PATH:......:${HADOOP_HOME}/bin:${HADOOP_HOME}/sbin;

source /etc/profile

Run the pseudo-distributed example

hdfs dfs -mkdir -p /user/hadoop # create the user's home directory in HDFS

hdfs dfs -mkdir input # create the hadoop user's input directory in HDFS

hdfs dfs -put /usr/local/hadoop-3.3.0/etc/hadoop/*.xml input # copy the local files into HDFS

hdfs dfs -ls input # list the files

hadoop jar ./share/hadoop/mapreduce/hadoop-mapreduce-examples-3.3.0.jar grep input output 'dfs[a-z.]+'

hdfs dfs -cat output/* # view the result

1 dfsadmin

1 dfs.replication

1 dfs.namenode.name.dir

1 dfs.http.address

1 dfs.datanode.data.dir

# Delete the output directory before running again

./bin/hdfs dfs -rm -r output # delete the output directory

# Stop Hadoop

./sbin/stop-dfs.sh

Reference: https://www.jianshu.com/p/d2f8c7153239

Python3

# 1. Install build dependencies

yum -y install zlib-devel bzip2-devel openssl-devel ncurses-devel sqlite-devel readline-devel tk-devel gdbm-devel db4-devel libpcap-devel xz-devel

# 2. Download

wget https://www.python.org/ftp/python/3.7.1/Python-3.7.1.tgz

# 3. Build and install

mkdir -p /usr/local/python3

tar -zxvf Python-3.7.1.tgz

# Python 3.7 and later also need the libffi-devel package

yum install libffi-devel -y

cd Python-3.7.1

./configure --prefix=/usr/local/python3

make && make install

# 4. Configure the environment

vim ~/.bash_profile # or /etc/profile to apply to all users

# .bash_profile

# Get the aliases and functions

if [ -f ~/.bashrc ]; then

. ~/.bashrc

fi

# User specific environment and startup programs

PATH=$PATH:$HOME/bin:/usr/local/python3/bin

export PATH

source ~/.bash_profile

# 5. Verify

python3 -V

pip3 -V

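Cloud drives

Baidu Netdisk: bypy

Tianyi Cloud: cloudpan189-go

bypy (requires Python)

Syncs with Baidu Netdisk; the default shared folder is 百度网盘/应用文件/bypy (Baidu Netdisk / App Files / bypy).

pip3 install bypy

# The first run requires logging in

bypy info

# Common commands

Upload (with no argument, the current directory is uploaded)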

bypy syncup xxx

bypy upload xxx

Download

bypy downfile xxx

bypy syncdown

bypy downdir /

cloudpan189-go

Download it from the GitHub releases page: https://github.com/tickstep/cloudpan189-go/releases

Or from the Tianyi Cloud share link: https://cloud.189.cn/t/RzUNre7nq2Uf (access code: io7x)

# Log in

[root@zbing cloudpan189-go-v0.0.9-linux-386]# ./cloudpan189-go

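Tip: use the up/down arrow keys to browse command history.

Tip: Ctrl + A / E jumps to the start / end of the line.

Tip: type help for help.

cloudpan189-go > login

Enter username (phone number/email/alias), press Enter to submit > 15139176175

Enter password (input is not echoed; press Enter when done) >

Tianyi account login succeeded: 15139176175@189.cn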

cloudpan189-go:/ 15139176175@189.cn$

Daily sign-in

sign

Change the working directory

cd

ll

Download

download XXX

Upload

upload XXX