Kubernetes Log Collection: Approaches and Hands-On Walkthrough

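Table of Contents

- Kubernetes log collection approaches
- 1. Elasticsearch installation and configuration
  - 1.1 Installing ES
  - 1.2 Configuring ES
  - 1.3 Starting ES
- 2. Kibana installation and configuration
  - 2.1 Installing Kibana
  - 2.2 Configuring Kibana
  - 2.3 Starting Kibana
- 3. ZooKeeper installation and configuration
  - 3.1 Installing ZooKeeper
  - 3.2 Starting ZooKeeper
  - 3.3 Checking ZooKeeper status
- 4. Kafka installation and configuration
  - 4.1 Installing Kafka
  - 4.2 Configuring Kafka
  - 4.3 Starting Kafka
- 5. Logstash installation and configuration
  - 5.1 Installing Logstash
  - 5.2 Starting Logstash
- 6. Configuring log collection
  - 6.1 DaemonSet-based log collection
  - 6.2 Building the Logstash image
  - 6.3 Deploying the Logstash DaemonSet (YAML below)
  - 6.4 Updating the Logstash configuration
  - 6.5 Configuring Kibana to display logs
- 7. Sidecar-container-based log collection
  - 7.1 Building the sidecar image
  - 7.2 Building the image
  - 7.3 Deploying the Pod
  - 7.4 Configuring Logstash
- 8. Log collection with a collector process built into the container
  - 8.1 Building the image
  - 8.2 Deploying the application container
  - 8.3 Configuring Logstash
- 9. Installing elasticsearch-head
- 10. Kafka commands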

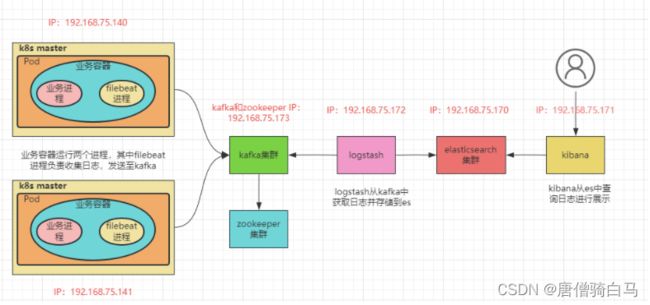

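Kubernetes log collection approaches

Pros and cons of the three collection approaches:

Log collection environment:

Kubernetes log collection architecture

Installation packages and software used for Kubernetes log collection:

Link: https://pan.baidu.com/s/1G8XB6dKP8nmgcGNdup_j6g?pwd=vpwu

Extraction code: vpwu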

1. Elasticsearch installation and configuration

1.1 Installing ES

IP: 192.168.75.170

rpm -ivh elasticsearch-7.6.2-x86_64.rpm

1.2 Configuring ES

[root@es es]# cat /etc/elasticsearch/elasticsearch.yml| grep -v "#"

cluster.name: log-cluster1

node.name: node-1

path.data: /var/lib/elasticsearch

path.logs: /var/log/elasticsearch

network.host: 192.168.75.170

http.port: 9200

discovery.seed_hosts: ["192.168.75.170"]

cluster.initial_master_nodes: ["node-1"]

action.destructive_requires_name: true

http.cors.enabled: true

http.cors.allow-origin: "*"

1.3 Starting ES

systemctl daemon-reload

systemctl enable elasticsearch

systemctl start elasticsearch

systemctl status elasticsearch
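
Once the service reports as active, it can help to confirm that ES actually answers on the configured address. The following is a minimal sketch, assuming `curl` and `sed` are installed; the helper name `es_status` is hypothetical and not part of the original setup. It queries the cluster health API and prints only the `status` field.

```shell
# es_status HOST [PORT]: print the "status" field (green/yellow/red)
# returned by the Elasticsearch cluster health API.
# The helper name is illustrative; host and port come from elasticsearch.yml above.
es_status() {
  local host="$1" port="${2:-9200}"
  curl -s "http://${host}:${port}/_cluster/health" \
    | sed -n 's/.*"status"[[:space:]]*:[[:space:]]*"\([a-z]*\)".*/\1/p'
}

# Usage (from any machine that can reach the ES node):
#   es_status 192.168.75.170 9200
```

An empty result usually means the node is not reachable yet or is still starting.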

2. Kibana installation and configuration

2.1 Installing Kibana

IP: 192.168.75.171

rpm -ivh kibana-7.6.2-x86_64.rpm

2.2 Configuring Kibana

[root@kinaba ~]# cat /etc/kibana/kibana.yml | grep -Ev "^#|^$"

server.port: 5601

server.host: "192.168.75.171"

server.name: "kibana"

elasticsearch.hosts: ["http://192.168.75.170:9200"]

2.3 Starting Kibana

systemctl enable kibana

systemctl start kibana

systemctl status kibana

3. ZooKeeper installation and configuration

3.1 Installing ZooKeeper

IP: 192.168.75.173

mkdir /usr/local/java

tar xf jdk-8u171-linux-x64.tar.gz -C /usr/local/java/

vim /etc/profile # set the environment variables by adding the lines below

export JAVA_HOME=/usr/local/java/jdk1.8.0_171

export JRE_HOME=${JAVA_HOME}/jre

export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib

export PATH=${JAVA_HOME}/bin:$PATH

source /etc/profile

java -version

Download the installation package from: https://zookeeper.apache.org/releases.html

wget https://dlcdn.apache.org/zookeeper/zookeeper-3.6.3/apache-zookeeper-3.6.3-bin.tar.gz

mkdir /apps

tar xf apache-zookeeper-3.6.3-bin.tar.gz -C /apps/ && cd /apps

ln -s apache-zookeeper-3.6.3-bin zookeeper

Edit the ZooKeeper configuration

mkdir -p /data/zookeeper/{data,logs}

cd /apps/zookeeper/

cp conf/zoo_sample.cfg conf/zoo.cfg

cat conf/zoo.cfg

tickTime=2000

initLimit=10

syncLimit=5

dataDir=/data/zookeeper/data

dataLogDir=/data/zookeeper/logs

clientPort=2181

server.0=192.168.75.173:2288:3388

echo 0 >/data/zookeeper/data/myid # on a three-node cluster, myid would be 0, 1, and 2 on the respective nodes

3.2 Starting ZooKeeper

cat /etc/systemd/system/zookeeper.service

[Unit]

Description=zookeeper.service

After=network.target

[Service]

Type=forking

Environment=JAVA_HOME=/usr/local/java/jdk1.8.0_171

ExecStart=/apps/zookeeper/bin/zkServer.sh start

ExecStop=/apps/zookeeper/bin/zkServer.sh stop

ExecReload=/apps/zookeeper/bin/zkServer.sh restart

[Install]

WantedBy=multi-user.target

systemctl daemon-reload

systemctl restart zookeeper

systemctl status zookeeper

3.3 Checking ZooKeeper status

[root@kafka ~]# /apps/zookeeper/bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /apps/zookeeper/bin/../conf/zoo.cfg

Client port found: 2181. Client address: localhost. Client SSL: false.

Mode: standalone
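
The same mode can also be read remotely over the client port with the `srvr` four-letter command, which the default ZooKeeper whitelist allows. This is a minimal sketch, assuming `nc` (netcat) and `awk` are installed; the helper name `zk_mode` is hypothetical.

```shell
# zk_mode HOST [PORT]: print the server mode (standalone/leader/follower)
# reported by ZooKeeper's "srvr" four-letter command.
# The helper name is illustrative; 2181 is the clientPort from zoo.cfg above.
zk_mode() {
  local host="$1" port="${2:-2181}"
  echo srvr | nc "$host" "$port" | awk -F': ' '/^Mode:/ {print $2}'
}

# Usage:
#   zk_mode 192.168.75.173
```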

4. Kafka installation and configuration

4.1 Installing Kafka

Kafka is deployed on the same host as ZooKeeper. Download the installation package from: https://kafka.apache.org/downloads

wget https://archive.apache.org/dist/kafka/3.2.1/kafka_2.12-3.2.1.tgz

tar xf kafka_2.12-3.2.1.tgz -C /apps/ && cd /apps

ln -s kafka_2.12-3.2.1 kafka

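4.2 Configuring Kafka

mkdir -p /data/kafka/kafka-logs

cd /apps/kafka

vim config/server.properties

# Broker id; on a three-node cluster use 0, 1, and 2 (comments must be on their own line in a properties file)
broker.id=0

# Listener address; set it to this host's address
listeners=PLAINTEXT://192.168.75.173:9092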

num.network.threads=3

num.io.threads=8

socket.send.buffer.bytes=102400

socket.receive.buffer.bytes=102400

socket.request.max.bytes=104857600

# Data directory
log.dirs=/data/kafka/kafka-logs

num.partitions=1

num.recovery.threads.per.data.dir=1

offsets.topic.replication.factor=1

transaction.state.log.replication.factor=1

transaction.state.log.min.isr=1

log.retention.hours=168

log.segment.bytes=1073741824

log.retention.check.interval.ms=300000

# ZooKeeper address
zookeeper.connect=192.168.75.173:2181

zookeeper.connection.timeout.ms=18000

group.initial.rebalance.delay.ms=0

4.3 Starting Kafka

cat /etc/systemd/system/kafka.service

[Unit]

Description=kafka.service

After=network.target remote-fs.target zookeeper.service

[Service]

Type=forking

Environment=JAVA_HOME=/usr/local/java/jdk1.8.0_171

ExecStart=/apps/kafka/bin/kafka-server-start.sh -daemon /apps/kafka/config/server.properties

ExecStop=/apps/kafka/bin/kafka-server-stop.sh

ExecReload=/bin/kill -s HUP $MAINPID

[Install]

WantedBy=multi-user.target

systemctl daemon-reload

systemctl start kafka

systemctl status kafka

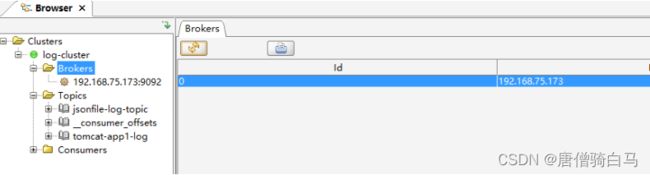

Use the Kafka client tool Offset Explorer to verify the state of the Kafka cluster. For how to use the tool, see: https://blog.csdn.net/qq_39416311/article/details/123316904

5. Logstash installation and configuration

5.1 Installing Logstash

IP: 192.168.75.172

rpm -ivh logstash-7.6.2.rpm

5.2 Starting Logstash

systemctl start logstash

systemctl enable logstash

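6. Configuring log collection

6.1 DaemonSet-based log collection

6.2 Building the Logstash image

Build the Logstash image on a Kubernetes node or on any other machine with Docker installed. The Dockerfile is as follows: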

[root@master Dockerfile]# pwd

/root/Dockerfile

[root@master Dockerfile]# cat Dockerfile

FROM logstash:7.6.2

LABEL author="[email protected]"

WORKDIR /usr/share/logstash

COPY logstash.yml /usr/share/logstash/config/logstash.yml

COPY logstash.conf /usr/share/logstash/pipeline/logstash.conf

USER root

RUN usermod -a -G root logstash

logstash.yml contains:

[root@master Dockerfile]# cat logstash.yml

http.host: "0.0.0.0"

logstash.conf contains:

[root@master Dockerfile]# cat logstash.conf

input {
  file {
    path => "/var/lib/docker/containers/*/*-json.log" # Docker
    #path => "/var/log/pods/*/*/*.log" # Pod log path when using containerd
    start_position => "beginning"
    type => "applog" # log type, user-defined
  }
  file {
    path => "/var/log/*.log" # operating system log path
    start_position => "beginning"
    type => "syslog"
  }
}
output {
  if [type] == "applog" { # Kafka topic that receives applog events
    kafka {
      bootstrap_servers => "${KAFKA_SERVER}"
      topic_id => "${TOPIC_ID}"
      batch_size => 16384 # Kafka producer batch size, in bytes
      codec => "${CODEC}" # output encoding
    }
  }
  if [type] == "syslog" { # Kafka topic that receives syslog events
    kafka {
      bootstrap_servers => "${KAFKA_SERVER}"
      topic_id => "${TOPIC_ID}"
      batch_size => 16384
      codec => "${CODEC}" # system logs are not JSON on disk
    }
  }
}

Build the image

[root@master Dockerfile]# ls

Dockerfile logstash.conf logstash.yml

docker build -t logstash-daemonset:7.6.2 .

docker save -o logstash-daemonset.tar logstash-daemonset:7.6.2

docker load -i logstash-daemonset.tar
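
The save/load pair above implies that the tarball is copied to every node that will run the DaemonSet and loaded there. The following is a minimal sketch of that step, assuming passwordless SSH as root; the helper name `distribute_image` and the node names `node1` and `node2` are hypothetical.

```shell
# distribute_image TARBALL NODE...: copy a saved image tarball to each node
# and load it into the local Docker image store there.
# Set DRY_RUN=1 to print the commands instead of running them.
distribute_image() {
  local tarball="$1"; shift
  local node
  for node in "$@"; do
    if [ "${DRY_RUN:-0}" = "1" ]; then
      echo "scp ${tarball} root@${node}:/tmp/"
      echo "ssh root@${node} docker load -i /tmp/${tarball##*/}"
    else
      scp "$tarball" "root@${node}:/tmp/" \
        && ssh "root@${node}" "docker load -i /tmp/${tarball##*/}"
    fi
  done
}

# Usage (node names are placeholders):
#   DRY_RUN=1 distribute_image logstash-daemonset.tar node1 node2
```

Pushing the image to a registry reachable by all nodes would avoid this manual copy, but that is outside the setup described here.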

6.3 Deploying the Logstash DaemonSet (YAML below)

[root@master ~]# cat logstash.yaml

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: logstash-daemonset
  namespace: log
spec:
  selector:
    matchLabels:
      app: logstash
  template:
    metadata:
      labels:
        app: logstash
    spec:
      tolerations:
      - key: node-role.kubernetes.io/master
        effect: NoSchedule
      containers:
      - name: logstash
        image: logstash-daemonset:7.6.2
        imagePullPolicy: IfNotPresent
        env:
        - name: KAFKA_SERVER
          value: "192.168.75.173:9092"
        - name: TOPIC_ID
          value: "logstash-log-test1"
        - name: CODEC
          value: "json"
        volumeMounts:
        - name: varlog
          mountPath: /var/log
          readOnly: False
        - name: varlogpods
          # mountPath: /var/log/pods
          mountPath: /var/lib/docker/containers
          readOnly: False
      volumes:
      - name: varlog
        hostPath:
          path: /var/log
      - name: varlogpods
        hostPath:
          #path: /var/log/pods
          path: /var/lib/docker/containers

[root@master ~]# kubectl apply -f logstash.yaml

daemonset.apps/logstash-daemonset created

[root@master ~]# kubectl get pods -n log

NAME READY STATUS RESTARTS AGE

logstash-daemonset-8j622 1/1 Running 0 7s

logstash-daemonset-dxnwk 1/1 Running 0 7s
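
The manifest targets the `log` namespace, which must exist before `kubectl apply` is run; the steps above do not show it being created. This is a minimal sketch, assuming the namespace may be missing; the helper name `ensure_namespace` is hypothetical.

```shell
# ensure_namespace NAME: create the namespace only if it does not exist yet.
# The helper name is illustrative; "log" is the namespace used in logstash.yaml.
ensure_namespace() {
  local ns="$1"
  kubectl get namespace "$ns" >/dev/null 2>&1 || kubectl create namespace "$ns"
}

# Usage:
#   ensure_namespace log && kubectl apply -f logstash.yaml
```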

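6.4 Updating the Logstash configuration

The Logstash here is the instance deployed earlier on the host (192.168.75.172), not the Pod. Configure it to read logs from Kafka and forward them to ES.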

[root@logstash conf.d]# cat logstash-daemonset-kafka-to-es.conf

input {

kafka {

bootstrap_servers => "192.168.75.173:9092"

topics => ["logstash-log-test1"]

codec => "json"

}

}

output {

if [type] == "applog" {

elasticsearch {

hosts => ["192.168.75.170:9200"]

index => "applog-%{+YYYY.MM.dd}"

}}

if [type] == "syslog" {

elasticsearch {

hosts => ["192.168.75.170:9200"]

index => "syslog-%{+YYYY.MM.dd}"

}}

}

Check the syntax

/usr/share/logstash/bin/logstash -f /etc/logstash/conf.d/logstash-daemonset-kafka-to-es.conf -t

Start

/usr/share/logstash/bin/logstash -f /etc/logstash/conf.d/logstash-daemonset-kafka-to-es.conf

systemctl restart logstash

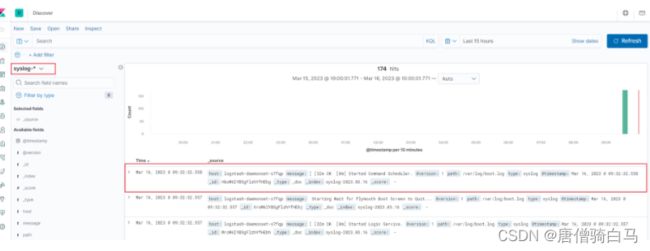
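
Before creating index patterns in Kibana, it is worth checking that the daily indices have actually appeared in ES. This is a minimal sketch, assuming `curl` is installed and ES listens on port 9200; the helper name `es_indices` is hypothetical. It uses the `_cat/indices` API with `h=index` so that only index names are printed.

```shell
# es_indices HOST PREFIX: list the names of indices that start with PREFIX.
# The helper name is illustrative; "applog-" and "syslog-" match the
# index names set in the Logstash output section above.
es_indices() {
  local host="$1" prefix="$2"
  curl -s "http://${host}:9200/_cat/indices/${prefix}*?h=index"
}

# Usage:
#   es_indices 192.168.75.170 applog-
#   es_indices 192.168.75.170 syslog-
```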

6.5 Configuring Kibana to display logs

Create one index pattern for applog and one for syslog.

Create a Pod to check whether its logs are shipped:

[root@master ~]# cat nginx1.yaml

apiVersion: v1
kind: Pod
metadata:
  name: nginx2
  namespace: log
  labels:
    app: nginx2
spec:
  containers:
  - name: nginx2
    image: nginx:latest
    imagePullPolicy: IfNotPresent
    ports:
    - containerPort: 80

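7. Sidecar-container-based log collection

With this approach, logs on the nodes themselves still need a separate service to collect them.

7.1 Building the sidecar image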

[root@master Dockerfile-sidecar]# cat Dockerfile

FROM logstash:7.6.2

LABEL author="[email protected]"

WORKDIR /usr/share/logstash

COPY logstash.yml /usr/share/logstash/config/logstash.yml

COPY logstash.conf /usr/share/logstash/pipeline/logstash.conf

USER root

RUN usermod -a -G root logstash

logstash.yml contains:

[root@master Dockerfile-sidecar]# cat logstash.yml

http.host: "0.0.0.0"

logstash.conf contains:

[root@master Dockerfile-sidecar]# cat logstash.conf

input {
  file {
    path => "/var/log/applog/catalina.*.log"
    start_position => "beginning"
    type => "tomcat-app1-catalina-log"
  }
  file {
    path => "/var/log/applog/localhost_access_log.*.txt"
    start_position => "beginning"
    type => "tomcat-app1-access-log"
  }
}
output {
  if [type] == "tomcat-app1-catalina-log" {
    kafka {
      bootstrap_servers => "${KAFKA_SERVER}"
      topic_id => "${TOPIC_ID}"
      batch_size => 16384 # Kafka producer batch size, in bytes
      codec => "${CODEC}"
    }
  }
  if [type] == "tomcat-app1-access-log" {
    kafka {
      bootstrap_servers => "${KAFKA_SERVER}"
      topic_id => "${TOPIC_ID}"
      batch_size => 16384
      codec => "${CODEC}" # access logs are not JSON on disk
    }
  }
}

7.2 Building the image

docker build -t logstash-sidecar:7.6.2 .

docker save -o logstash-sidecar.tar logstash-sidecar:7.6.2

7.3 Deploying the Pod

[root@master ~]# cat sidecar.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  namespace: log
  name: tomcat-app1
spec:
  replicas: 2
  selector:
    matchLabels:
      app: tomcat-app1
  template:
    metadata:
      labels:
        app: tomcat-app1
    spec:
      containers:
      - name: logstash-sidecar
        image: logstash-sidecar:7.6.2
        imagePullPolicy: IfNotPresent
        env:
        - name: KAFKA_SERVER
          value: "192.168.75.173:9092"
        - name: TOPIC_ID
          value: "tomcat-log1"
        - name: CODEC
          value: "json"
        volumeMounts:
        - name: applog
          mountPath: /var/log/applog
      - name: tomcat
        image: daocloud.io/library/tomcat:8.0.45
        imagePullPolicy: IfNotPresent
        volumeMounts:
        - name: applog
          mountPath: /usr/local/tomcat/logs/
      volumes:
      - name: applog
        emptyDir: {}

[root@master ~]# kubectl get pods -n log

NAME READY STATUS RESTARTS AGE

tomcat-app1-55759b549-d6tgv 2/2 Running 0 117s

tomcat-app1-55759b549-r8dfd 2/2 Running 0 117s

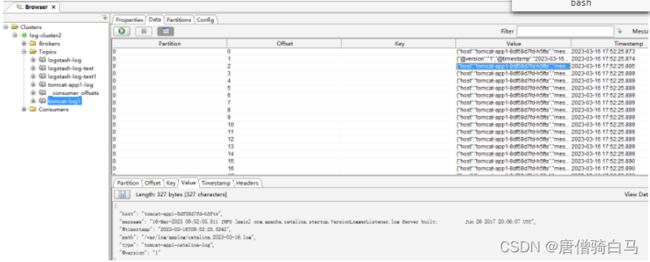

Checking in Kafka shows that the sidecar container has already sent the logs to Kafka.

7.4 Configuring Logstash

cat logstash-sidercat-kafka-to-es.conf

input {

kafka { #从kafka tomcat-app1-log topic中读取日志

bootstrap_servers => "192.168.75.173:9092"

topics => ["tomcat-app1"]

codec => "json"

}

}

output {

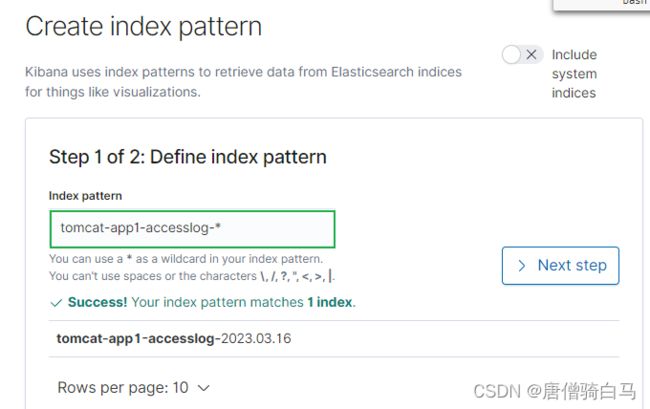

if [type] == "tomcat-app1-access-log" { #tomcat访问日志存储到es的tomcat-app1-accesslog-%{+YYYY.MM.dd}索引中

elasticsearch {

hosts => ["192.168.75.170:9200"]

index => "tomcat-app1-accesslog-%{+YYYY.MM.dd}"

}

}

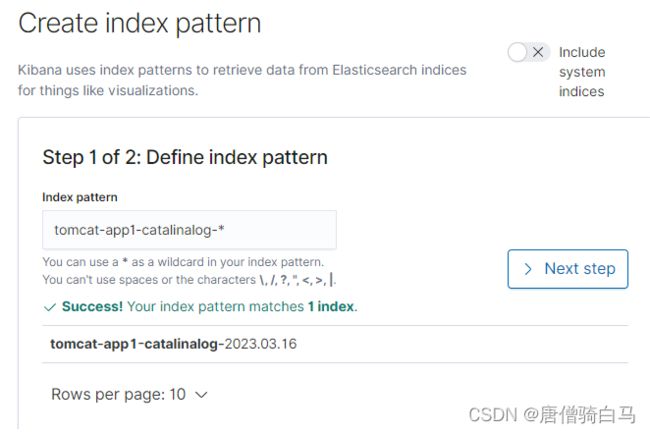

if [type] == "tomcat-app1-catalina-log" { #tomcat启动日志存储到es的tomcat-app1-catalinalog-%{+YYYY.MM.dd}索引中

elasticsearch {

hosts => ["192.168.75.170:9200"]

index => "tomcat-app1-catalinalog-%{+YYYY.MM.dd}"

}

}

}

systemctl restart logstash

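8. Log collection with a collector process built into the container

8.1 Building the image

The application image needs to run two processes: Tomcat, which serves the web application, and Filebeat, which collects the logs. The Dockerfile is as follows: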

[root@node1 Dockerfile]# cat Dockerfile

FROM centos:centos7.7.1908

WORKDIR /tmp

COPY jdk-8u171-linux-x64.tar.gz /tmp

RUN tar zxf /tmp/jdk-8u171-linux-x64.tar.gz -C /usr/local/ && rm -rf /tmp/jdk-8u171-linux-x64.tar.gz

RUN ln -s /usr/local/jdk1.8.0_171 /usr/local/jdk

ENV JAVA_HOME /usr/local/jdk

ENV JRE_HOME=${JAVA_HOME}/jre

ENV CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib

ENV PATH=${JAVA_HOME}/bin:$PATH

COPY apache-tomcat-8.5.87.tar.gz /tmp

RUN tar zxf apache-tomcat-8.5.87.tar.gz -C /usr/local && rm -rf /tmp/apache-tomcat-8.5.87.tar.gz

RUN mv /usr/local/apache-tomcat-8.5.87 /usr/local/tomcat

COPY filebeat-7.6.2-x86_64.rpm /tmp/

RUN cd /tmp/ && rpm -ivh filebeat-7.6.2-x86_64.rpm && rm -f filebeat-7.6.2-x86_64.rpm

COPY filebeat.yml /etc/filebeat/

COPY run.sh /usr/local/bin/

RUN chmod 755 /usr/local/bin/run.sh

EXPOSE 8443 8080

CMD ["/usr/local/bin/run.sh"]

filebeat.yml contains:

[root@node1 Dockerfile]# cat filebeat.yml

filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /usr/local/tomcat/logs/catalina.*.log
  fields:
    type: filebeat-tomcat-catalina
- type: log
  enabled: true
  paths:
    - /usr/local/tomcat/logs/localhost_access_log.*.txt
  fields:
    type: filebeat-tomcat-accesslog
filebeat.config.modules:
  path: ${path.config}/modules.d/*.yml
  reload.enabled: false
setup.template.settings:
  index.number_of_shards: 1
setup.kibana:
output.kafka:
  hosts: ["192.168.75.173:9092"]
  required_acks: 1
  topic: "filebeat-tomcat-app1"
  compression: gzip
  max_message_bytes: 1000000

run.sh contains:

[root@node1 Dockerfile]# cat run.sh

#!/bin/bash

/usr/share/filebeat/bin/filebeat -e -c /etc/filebeat/filebeat.yml --path.home /usr/share/filebeat --path.config /etc/filebeat --path.data /var/lib/filebeat --path.logs /var/log/filebeat &

/usr/local/tomcat/bin/startup.sh && tail -f /usr/local/tomcat/logs/catalina.out

Run the build

docker build -t filebeat-log:7.6.2 .

8.2 Deploying the application container

apiVersion: apps/v1
kind: Deployment
metadata:
  name: tomcat-myapp
  namespace: log
spec:
  replicas: 3
  selector:
    matchLabels:
      app: tomcat-myapp
  template:
    metadata:
      labels:
        app: tomcat-myapp
    spec:
      containers:
      - name: tomcat-myapp
        image: filebeat-log:7.6.2
        imagePullPolicy: IfNotPresent
        ports:
        - name: http
          containerPort: 8080
        - name: https
          containerPort: 8443

[root@master ~]# kubectl get pods -n log

NAME READY STATUS RESTARTS AGE

tomcat-myapp-78ff79cd6c-4dnsb 1/1 Running 0 3m4s

tomcat-myapp-78ff79cd6c-mpl4f 1/1 Running 0 3m4s

8.3 Configuring Logstash

cat /etc/logstash/conf.d/filebeat-process-kafka-to-es.conf

input {

kafka {

bootstrap_servers => "192.168.75.173:9092"

topics => ["filebeat-tomcat-app1"]

codec => "json"

}

}

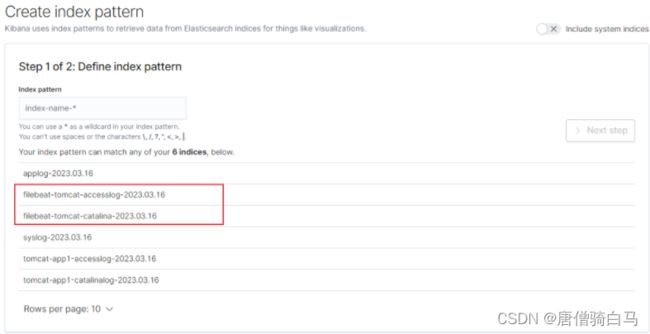

output {

if [fields][type] == "filebeat-tomcat-catalina" {

elasticsearch {

hosts => ["192.168.75.170:9200"]

index => "filebeat-tomcat-catalina-%{+YYYY.MM.dd}"

}}

if [fields][type] == "filebeat-tomcat-accesslog" {

elasticsearch {

hosts => ["192.168.75.170:9200"]

index => "filebeat-tomcat-accesslog-%{+YYYY.MM.dd}"

}}

}

systemctl restart logstash

9. Installing elasticsearch-head

yum install git npm # npm is in the EPEL repository

git clone https://github.com/mobz/elasticsearch-head.git # requires Internet access

cd elasticsearch-head # directory created by git clone

npm install

npm run start

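To query cluster health information from elasticsearch-head, CORS access must be allowed in the Elasticsearch configuration file:

vim /etc/elasticsearch/elasticsearch.yml

http.cors.enabled: true # enable CORS in Elasticsearch

http.cors.allow-origin: "*" # allowed origins; * allows requests from any origin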

10. Kafka commands

The Kafka 3.x tools no longer accept the --zookeeper option for kafka-topics.sh, so the commands below connect to the broker with --bootstrap-server.

List the topics that have been created:

./kafka-topics.sh --list --bootstrap-server 192.168.75.173:9092

Show the description of a topic:

[root@kafka bin]# ./kafka-topics.sh --describe --bootstrap-server 192.168.75.173:9092 --topic logstash-log

Topic:logstash-log PartitionCount:1 ReplicationFactor:1 Configs:

Topic: logstash-log Partition: 0 Leader: 0 Replicas: 0 Isr: 0

Consume messages:

[root@kafka bin]# ./kafka-console-consumer.sh --bootstrap-server 192.168.75.173:9092 --topic logstash-log --from-beginning

Produce messages:

[root@kafka bin]# ./kafka-console-producer.sh --bootstrap-server 192.168.75.173:9092 --topic logstash-log
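
To see whether the host-side Logstash keeps up with the producers, the consumer group offsets can be inspected as well. This is a minimal sketch, assuming Kafka is installed under /apps/kafka as above and that the Logstash kafka input uses its default consumer group id, `logstash` (no `group_id` is set in the configurations shown). The helper name `group_lag` and the `KAFKA_GROUPS_CMD` override are hypothetical.

```shell
# group_lag BOOTSTRAP [GROUP]: describe a consumer group, including the
# current offset, log end offset, and lag for each partition.
# KAFKA_GROUPS_CMD may point at a different kafka-consumer-groups.sh location.
group_lag() {
  local bootstrap="$1" group="${2:-logstash}"
  local cmd="${KAFKA_GROUPS_CMD:-/apps/kafka/bin/kafka-consumer-groups.sh}"
  "$cmd" --bootstrap-server "$bootstrap" --describe --group "$group"
}

# Usage:
#   group_lag 192.168.75.173:9092
```

A lag value that keeps growing suggests that Logstash or ES is the bottleneck rather than the collectors.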