Chapter 15: Handling Distributed Transactions with Seata

gitee:https://gitee.com/mougenan/springcloud_study.git

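1. The Distributed Transaction Problem

Example: the business logic for a user purchasing a product. Three microservices support the whole flow. The storage service deducts the stock of a given product, the order service creates an order from the purchase request, and the account service deducts the balance from the user's account.

When a monolithic application is split into microservices, its three modules become three independent applications, each with its own data source, and a single business operation has to call all three services. Local transactions still guarantee data consistency inside each service, but nothing guarantees consistency across the services as a whole.

In short, a distributed transaction problem arises whenever one business operation spans multiple data sources or makes remote calls across multiple systems.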

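2. Introduction to Seata

Seata is an open-source distributed transaction solution dedicated to providing high-performance, easy-to-use distributed transaction services for microservice architectures. Official site: Seata | Seata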

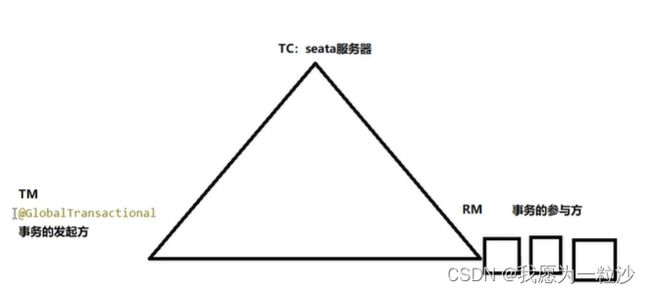

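A typical distributed transaction follows an "ID + three components" model.

The ID is the Transaction ID (XID), which is globally unique.

The three components are:

- Transaction Coordinator (TC): maintains the running state of global transactions and coordinates and drives their commit or rollback.
- Transaction Manager (TM): controls the boundary of a global transaction. It begins the global transaction and finally issues the decision to commit or roll back globally.
- Resource Manager (RM): controls branch transactions. It registers branches, reports their status, and receives instructions from the TC to drive the commit or rollback of the branch (local) transaction.

The process is:

- The TM asks the TC to begin a global transaction. The TC creates it and generates a globally unique XID.
- The XID is propagated through the context of the microservice call chain.
- Each RM registers its branch transaction with the TC, placing it under the global transaction identified by the XID.
- The TM sends the TC a global commit or rollback decision for the XID.
- The TC drives all branch transactions under the XID to complete the commit or rollback.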
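The five steps can be traced with a toy, in-memory model. This is only a sketch under stated assumptions: the class names (XidFlowSketch, Coordinator, Branch), the state strings, and the resource names are hypothetical and are not Seata's API. It shows who creates the XID, who registers under it, and who drives the final outcome.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.UUID;

// Toy in-memory model of the TC / TM / RM interaction; none of this is Seata's API.
class XidFlowSketch {

    /** Stand-in for one branch (local) transaction owned by an RM. */
    static class Branch {
        final String resource;
        String state = "REGISTERED";

        Branch(String resource) {
            this.resource = resource;
        }
    }

    /** Stand-in for the Transaction Coordinator (TC). */
    static class Coordinator {
        private final Map<String, List<Branch>> globalTransactions = new HashMap<>();

        // Step 1: the TM asks the TC to begin; the TC hands back a unique XID.
        String begin() {
            String xid = UUID.randomUUID().toString();
            globalTransactions.put(xid, new ArrayList<>());
            return xid;
        }

        // Step 3: an RM registers its branch under the XID it received.
        Branch register(String xid, String resource) {
            List<Branch> branches = globalTransactions.get(xid);
            if (branches == null) {
                throw new IllegalStateException("unknown XID: " + xid);
            }
            Branch branch = new Branch(resource);
            branches.add(branch);
            return branch;
        }

        // Steps 4 and 5: the TM sends its decision and the TC drives every branch.
        List<Branch> finish(String xid, boolean commit) {
            List<Branch> branches = globalTransactions.remove(xid);
            if (branches == null) {
                throw new IllegalStateException("unknown XID: " + xid);
            }
            for (Branch branch : branches) {
                branch.state = commit ? "COMMITTED" : "ROLLED_BACK";
            }
            return branches;
        }
    }

    public static void main(String[] args) {
        Coordinator tc = new Coordinator();
        String xid = tc.begin();
        // Step 2: in a real call chain the XID travels with each remote call.
        tc.register(xid, "order-db");
        tc.register(xid, "storage-db");
        tc.register(xid, "account-db");
        for (Branch branch : tc.finish(xid, false)) {
            System.out.println(branch.resource + " -> " + branch.state);
        }
    }
}
```

Running main with a rollback decision prints each of the three hypothetical resources followed by ROLLED_BACK, which is the point of the model: one decision from the TM reaches every branch registered under the same XID.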

Download: Releases · apache/incubator-seata · GitHub

Usage: keep @Transactional for local transactions and add @GlobalTransactional for global transactions.

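3. Seata-Server

- Official site: Seata | Seata

- Download version 0.9.0.

- Unzip seata-server-0.9.0.zip into a directory of your choice and edit file.conf in the conf directory. Three things change: the custom transaction group name, the transaction log store mode (set to db), and the database connection settings.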

service {

#vgroup->rgroup

vgroup_mapping.my_test_tx_group = "fsp_txt_group"

#only support single node

default.grouplist = "127.0.0.1:8091"

#degrade current not support

enableDegrade = false

#disable

disable = false

#unit ms,s,m,h,d represents milliseconds, seconds, minutes, hours, days, default permanent

max.commit.retry.timeout = "-1"

max.rollback.retry.timeout = "-1"

}

mode = "db"

## file store

file {

dir = "sessionStore"

# branch session size , if exceeded first try compress lockkey, still exceeded throws exceptions

max-branch-session-size = 16384

# globe session size , if exceeded throws exceptions

max-global-session-size = 512

# file buffer size , if exceeded allocate new buffer

file-write-buffer-cache-size = 16384

# when recover batch read size

session.reload.read_size = 100

# async, sync

flush-disk-mode = async

}

## database store

db {

## the implement of javax.sql.DataSource, such as DruidDataSource(druid)/BasicDataSource(dbcp) etc.

datasource = "dbcp"

## mysql/oracle/h2/oceanbase etc.

db-type = "mysql"

driver-class-name = "com.mysql.jdbc.Driver"

url = "jdbc:mysql://127.0.0.1:3306/seata"

user = "root"

password = "123456"

min-conn = 1

max-conn = 3

global.table = "global_table"

branch.table = "branch_table"

lock-table = "lock_table"

query-limit = 100

}-

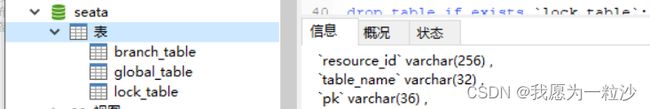

mysql5.7数据库建库seata,并在seata库中建表

- Edit registry.conf in the seata-server-0.9.0\seata\conf directory so that the server registers with Nacos:

registry {
  type = "nacos"

  nacos {
    serverAddr = "localhost:8848"
    namespace = ""
    cluster = "default"
  }
}

- Start Nacos first (port 8848).

- Then start seata-server.

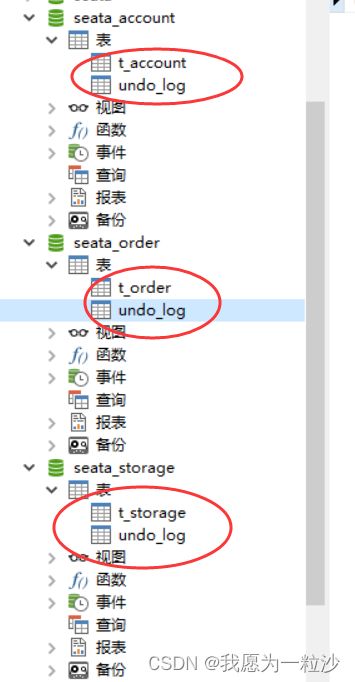

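4. Preparing the Order / Storage / Account Business Databases

Business description:

We will create three services: an order service, a storage service, and an account service. When a user places an order, the order service creates the order, remotely calls the storage service to deduct the stock of the ordered product, remotely calls the account service to deduct the balance of the user's account, and finally sets the order status to completed in the order service. The operation spans three databases and makes two remote calls, so it clearly has a distributed transaction problem.

Create the databases:

- seata_order: stores orders.
- seata_storage: stores inventory.
- seata_account: stores account information.

Create the business tables: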

Create the t_order table in the seata_order database:

CREATE TABLE t_order (
  `id` BIGINT(11) NOT NULL AUTO_INCREMENT PRIMARY KEY,
  `user_id` BIGINT(11) DEFAULT NULL COMMENT 'user id',
  `product_id` BIGINT(11) DEFAULT NULL COMMENT 'product id',
  `count` INT(11) DEFAULT NULL COMMENT 'quantity',
  `money` DECIMAL(11,0) DEFAULT NULL COMMENT 'amount',
  `status` INT(1) DEFAULT NULL COMMENT 'order status: 0 = creating, 1 = completed'
) ENGINE=INNODB AUTO_INCREMENT=7 DEFAULT CHARSET=utf8;

Create the t_storage table in the seata_storage database:

CREATE TABLE t_storage (
  `id` BIGINT(11) NOT NULL AUTO_INCREMENT PRIMARY KEY,
  `product_id` BIGINT(11) DEFAULT NULL COMMENT 'product id',
  `total` INT(11) DEFAULT NULL COMMENT 'total stock',
  `used` INT(11) DEFAULT NULL COMMENT 'used stock',
  `residue` INT(11) DEFAULT NULL COMMENT 'remaining stock'
) ENGINE=INNODB AUTO_INCREMENT=2 DEFAULT CHARSET=utf8;

INSERT INTO seata_storage.t_storage (`id`, `product_id`, `total`, `used`, `residue`)
VALUES (1, 1, 100, 0, 100);

Create the t_account table in the seata_account database:

CREATE TABLE t_account (
  `id` BIGINT(11) NOT NULL AUTO_INCREMENT PRIMARY KEY COMMENT 'id',
  `user_id` BIGINT(11) DEFAULT NULL COMMENT 'user id',
  `total` DECIMAL(10,0) DEFAULT NULL COMMENT 'total quota',
  `usedT` DECIMAL(10,0) DEFAULT NULL COMMENT 'used balance',
  `residue` DECIMAL(10,0) DEFAULT '0' COMMENT 'remaining available quota'
) ENGINE=INNODB AUTO_INCREMENT=2 DEFAULT CHARSET=utf8;

INSERT INTO seata_account.t_account (`id`, `user_id`, `total`, `usedT`, `residue`)
VALUES (1, 1, 1000, 0, 1000);

Create the rollback log table in each of the three databases by running db_undo_log.sql from the \seata-server-0.9.0\seata\conf directory in each one.

Final result:

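5. Preparing the Order / Storage / Account Microservices

5.1 Business requirements

Place order -> deduct stock -> deduct balance -> update (order) status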

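5.2 Create the Order Module

- Create the seata-order-service2001 project.

- pom file:

    <dependency>
        <groupId>com.alibaba.cloud</groupId>
        <artifactId>spring-cloud-starter-alibaba-seata</artifactId>
        <exclusions>
            <exclusion>
                <artifactId>seata-all</artifactId>
                <groupId>io.seata</groupId>
            </exclusion>
        </exclusions>
    </dependency>
    <dependency>
        <groupId>io.seata</groupId>
        <artifactId>seata-all</artifactId>
        <version>0.9.0</version>
    </dependency>

- application.yml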

server:
  port: 2001

spring:
  application:
    name: seata-order-service
  cloud:
    alibaba:
      seata:
        tx-service-group: fsp_tx_group
    nacos:
      discovery:
        server-addr: localhost:8848
  datasource:
    driver-class-name: com.mysql.jdbc.Driver
    url: jdbc:mysql://localhost:3306/seata_order
    password: 123456
    username: root

feign:
  sentinel:
    enabled: true

logging:
  level:
    io:
      seata: info

mybatis:
  mapper-locations: classpath:mapper/*.xml

- file.conf

transport {
  # tcp udt unix-domain-socket
  type = "TCP"
  #NIO NATIVE
  server = "NIO"
  #enable heartbeat
  heartbeat = true
  #thread factory for netty
  thread-factory {
    boss-thread-prefix = "NettyBoss"
    worker-thread-prefix = "NettyServerNIOWorker"
    server-executor-thread-prefix = "NettyServerBizHandler"
    share-boss-worker = false
    client-selector-thread-prefix = "NettyClientSelector"
    client-selector-thread-size = 1
    client-worker-thread-prefix = "NettyClientWorkerThread"
    # netty boss thread size,will not be used for UDT
    boss-thread-size = 1
    #auto default pin or 8
    worker-thread-size = 8
  }
  shutdown {
    # when destroy server, wait seconds
    wait = 3
  }
  serialization = "seata"
  compressor = "none"
}

service {
  #vgroup->rgroup (the key suffix must equal tx-service-group in application.yml)
  vgroup_mapping.fsp_tx_group = "default"
  #only support single node
  default.grouplist = "127.0.0.1:8091"
  #degrade current not support
  enableDegrade = false
  #disable
  disable = false
  #unit ms,s,m,h,d represents milliseconds, seconds, minutes, hours, days, default permanent
  max.commit.retry.timeout = "-1"
  max.rollback.retry.timeout = "-1"
}

client {
  async.commit.buffer.limit = 10000
  lock {
    retry.internal = 10
    retry.times = 30
  }
  report.retry.count = 5
  tm.commit.retry.count = 1
  tm.rollback.retry.count = 1
}

## transaction log store
store {
  ## store mode: file、db
  #mode = "file"
  mode = "db"

  ## file store
  file {
    dir = "sessionStore"
    # branch session size , if exceeded first try compress lockkey, still exceeded throws exceptions
    max-branch-session-size = 16384
    # globe session size , if exceeded throws exceptions
    max-global-session-size = 512
    # file buffer size , if exceeded allocate new buffer
    file-write-buffer-cache-size = 16384
    # when recover batch read size
    session.reload.read_size = 100
    # async, sync
    flush-disk-mode = async
  }

  ## database store
  db {
    ## the implement of javax.sql.DataSource, such as DruidDataSource(druid)/BasicDataSource(dbcp) etc.
    datasource = "dbcp"
    ## mysql/oracle/h2/oceanbase etc.
    db-type = "mysql"
    driver-class-name = "com.mysql.jdbc.Driver"
    url = "jdbc:mysql://127.0.0.1:3306/seata"
    user = "root"
    password = "123456"
    min-conn = 1
    max-conn = 3
    global.table = "global_table"
    branch.table = "branch_table"
    lock-table = "lock_table"
    query-limit = 100
  }
}

lock {
  ## the lock store mode: local、remote
  mode = "remote"
  local {
    ## store locks in user's database
  }
  remote {
    ## store locks in the seata's server
  }
}

recovery {
  #schedule committing retry period in milliseconds
  committing-retry-period = 1000
  #schedule asyn committing retry period in milliseconds
  asyn-committing-retry-period = 1000
  #schedule rollbacking retry period in milliseconds
  rollbacking-retry-period = 1000
  #schedule timeout retry period in milliseconds
  timeout-retry-period = 1000
}

transaction {
  undo.data.validation = true
  undo.log.serialization = "jackson"
  undo.log.save.days = 7
  #schedule delete expired undo_log in milliseconds
  undo.log.delete.period = 86400000
  undo.log.table = "undo_log"
}

## metrics settings
metrics {
  enabled = false
  registry-type = "compact"
  # multi exporters use comma divided
  exporter-list = "prometheus"
  exporter-prometheus-port = 9898
}

support {
  ## spring
  spring {
    # auto proxy the DataSource bean
    datasource.autoproxy = false
  }
}

- registry.conf

registry {
  # file 、nacos 、eureka、redis、zk、consul、etcd3、sofa
  #type = "file"
  type = "nacos"

  nacos {
    serverAddr = "localhost:8848"
    namespace = ""
    cluster = "default"
  }
  eureka {
    serviceUrl = "http://localhost:8761/eureka"
    application = "default"
    weight = "1"
  }
  redis {
    serverAddr = "localhost:6379"
    db = "0"
  }
  zk {
    cluster = "default"
    serverAddr = "127.0.0.1:2181"
    session.timeout = 6000
    connect.timeout = 2000
  }
  consul {
    cluster = "default"
    serverAddr = "127.0.0.1:8500"
  }
  etcd3 {
    cluster = "default"
    serverAddr = "http://localhost:2379"
  }
  sofa {
    serverAddr = "127.0.0.1:9603"
    application = "default"
    region = "DEFAULT_ZONE"
    datacenter = "DefaultDataCenter"
    cluster = "default"
    group = "SEATA_GROUP"
    addressWaitTime = "3000"
  }
  file {
    name = "file.conf"
  }
}

config {
  # file、nacos 、apollo、zk、consul、etcd3
  type = "file"

  nacos {
    serverAddr = "localhost"
    namespace = ""
  }
  consul {
    serverAddr = "127.0.0.1:8500"
  }
  apollo {
    app.id = "seata-server"
    apollo.meta = "http://192.168.1.204:8801"
  }
  zk {
    serverAddr = "127.0.0.1:2181"
    session.timeout = 6000
    connect.timeout = 2000
  }
  etcd3 {
    serverAddr = "http://localhost:2379"
  }
  file {
    name = "file.conf"
  }
}
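A common pitfall in these three files is a transaction group name that does not line up. The sketch below assumes, without using Seata's own configuration classes, that the client looks up a key of the form service.vgroup_mapping.<tx-service-group> and treats the value as the name of the TC cluster. The class VgroupLookupSketch, its method, and the flat map standing in for file.conf are all hypothetical.

```java
import java.util.HashMap;
import java.util.Map;

// Illustrative lookup only; Seata's real configuration classes are not used here.
class VgroupLookupSketch {

    /** Returns the TC cluster name mapped to a transaction service group. */
    static String resolveCluster(Map<String, String> fileConf, String txServiceGroup) {
        String key = "service.vgroup_mapping." + txServiceGroup;
        String cluster = fileConf.get(key);
        if (cluster == null) {
            throw new IllegalStateException("no cluster configured under " + key);
        }
        return cluster;
    }

    public static void main(String[] args) {
        // Flattened stand-in for the service block of the client's file.conf.
        Map<String, String> fileConf = new HashMap<>();
        fileConf.put("service.vgroup_mapping.fsp_tx_group", "default");

        // Same name as tx-service-group in application.yml, so the lookup succeeds.
        System.out.println(resolveCluster(fileConf, "fsp_tx_group"));

        // A one-letter difference between the two names makes the lookup fail.
        try {
            resolveCluster(fileConf, "fsp_txt_group");
        } catch (IllegalStateException e) {
            System.out.println(e.getMessage());
        }
    }
}
```

The practical check is that tx-service-group in application.yml, the suffix of the vgroup_mapping key in file.conf, and the cluster name in registry.conf ("default" here) agree with one another.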

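- domain

import java.math.BigDecimal;
import lombok.AllArgsConstructor;
import lombok.Data;
import lombok.NoArgsConstructor;

@Data
@NoArgsConstructor
@AllArgsConstructor
public class Order {
    private Long id;
    private Long userId;
    private Long productId;
    private Integer count;   // read by order.getCount() and #{count} in the mapper
    private BigDecimal money;
    private Integer status;  // 0 = creating, 1 = completed
}

@Data
@NoArgsConstructor
@AllArgsConstructor
public class CommonResult<T> {
    private Integer code;
    private String message;
    private T data;

    public CommonResult(Integer code, String message) {
        this(code, message, null);
    }
}

- dao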

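@Mapper
public interface OrderDao {
    // Insert a new order with status 0
    void create(Order order);

    // Set status to 1 for the user's orders that currently have the given status
    void update(@Param("userId") Long userId, @Param("status") Integer status);
}

Mapper statements (in the XML under classpath:mapper/):

    <insert id="create">
        insert into t_order (id, user_id, product_id, `count`, money, status)
        values (null, #{userId}, #{productId}, #{count}, #{money}, 0);
    </insert>

    <update id="update">
        update t_order set status = 1 where user_id = #{userId} and status = #{status};
    </update>

- service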

@Service
@Slf4j
public class OrderServiceImpl implements OrderService {

    @Resource
    private OrderDao orderDao;

    // Remote clients for the storage and account services (their declarations are not shown in this chapter)
    @Resource
    private StorageService storageService;

    @Resource
    private AccountService accountService;

    @Override
    public void create(Order order) {
        log.info("----------> start creating the order");
        orderDao.create(order);

        log.info("----------> order service calls the storage service to deduct count");
        storageService.decrease(order.getProductId(), order.getCount());
        log.info("----------> storage deduction finished");

        log.info("----------> order service calls the account service to deduct money");
        accountService.decrease(order.getUserId(), order.getMoney());
        log.info("----------> account deduction finished");

        log.info("----------> start updating the order status");
        orderDao.update(order.getUserId(), 0);
        log.info("----------> order status updated");

        log.info("----------> order placed");
    }
}

- controller

@RestController
@Slf4j
public class OrderController {

    @Resource
    public OrderService orderService;

    @GetMapping("/order/create")
    public CommonResult create(Order order) {
        orderService.create(order);
        return new CommonResult(200, "Order created successfully");
    }
}

- config

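import javax.sql.DataSource;
import com.alibaba.druid.pool.DruidDataSource;
import io.seata.rm.datasource.DataSourceProxy;
import org.apache.ibatis.session.SqlSessionFactory;
import org.mybatis.spring.SqlSessionFactoryBean;
import org.mybatis.spring.transaction.SpringManagedTransactionFactory;
import org.springframework.beans.factory.annotation.Value;
import org.springframework.boot.context.properties.ConfigurationProperties;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;
import org.springframework.core.io.support.PathMatchingResourcePatternResolver;

// Routes MyBatis through Seata's DataSourceProxy so that Seata can intercept the business SQL
@Configuration
public class DataSourceProxyConfig {

    @Value("${mybatis.mapper-locations}")
    private String mapperLocations;

    @Bean
    @ConfigurationProperties(prefix = "spring.datasource")
    public DataSource druidDataSource() {
        return new DruidDataSource();
    }

    @Bean
    public DataSourceProxy dataSourceProxy(DataSource dataSource) {
        return new DataSourceProxy(dataSource);
    }

    @Bean
    public SqlSessionFactory sqlSessionFactoryBean(DataSourceProxy dataSourceProxy) throws Exception {
        SqlSessionFactoryBean sqlSessionFactoryBean = new SqlSessionFactoryBean();
        sqlSessionFactoryBean.setDataSource(dataSourceProxy);
        sqlSessionFactoryBean.setMapperLocations(new PathMatchingResourcePatternResolver().getResources(mapperLocations));
        sqlSessionFactoryBean.setTransactionFactory(new SpringManagedTransactionFactory());
        return sqlSessionFactoryBean.getObject();
    }
}

- Main class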

@SpringBootApplication(exclude = DataSourceAutoConfiguration.class)
@EnableDiscoveryClient
@EnableFeignClients
public class OrderMain2001 {
    public static void main(String[] args) {
        SpringApplication.run(OrderMain2001.class, args);
    }
}

5.3 Create the Storage Module

- Create the seata-storage-service2002 project.
- pom.xml: same as 2001.
- application.yml: basically the same as 2001 (the port, service name, and datasource URL differ).
- file.conf and registry.conf: same as 2001.
- domain

@AllArgsConstructor
@NoArgsConstructor
@Data
public class Storage {
    private Long id;
    private Long productId;
    private Integer total;
    private Integer used;
    private Integer residue;
}

- dao

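@Mapper
public interface StorageDao {
    // Move count units of the product from residue to used
    void decrease(@Param("productId") Long productId, @Param("count") Integer count);
}

Mapper statement:

    <update id="decrease">
        update t_storage
        set used = used + #{count}, residue = residue - #{count}
        where product_id = #{productId};
    </update>

- service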

public interface StorageService {
    void decrease(Long productId, Integer count);
}

@Service
public class StorageServiceImpl implements StorageService {

    private static final Logger LOGGER = LoggerFactory.getLogger(StorageServiceImpl.class);

    @Resource
    private StorageDao storageDao;

    @Override
    public void decrease(Long productId, Integer count) {
        LOGGER.info("---------> storage-service: start deducting stock");
        storageDao.decrease(productId, count);
        LOGGER.info("---------> storage-service: stock deduction finished");
    }
}

- config: same as 2001.

- controller

@RestController
@Slf4j
public class StorageController {

    @Autowired
    private StorageService storageService;

    @RequestMapping("/storage/decrease")
    public CommonResult decrease(Long productId, Integer count) {
        storageService.decrease(productId, count);
        return new CommonResult(200, "Stock deducted successfully!");
    }
}

5.4 Create the Account Module

- Create the seata-account-service2003 project.
- pom.xml: same as 2001.
- application.yml: basically the same as 2001 (the port, service name, and datasource URL differ).
- file.conf and registry.conf: same as 2001.
- domain

@Data
@AllArgsConstructor
@NoArgsConstructor
public class Account {
    private Long id;
    private Long userId;
    private BigDecimal total;
    private BigDecimal usedT;
    private BigDecimal residue;
}

- dao

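@Mapper
public interface AccountDao {
    // Move the given amount from residue to usedT for the user
    void decrease(@Param("userId") Long userId, @Param("money") BigDecimal money);
}

Mapper statement:

    <update id="decrease">
        update t_account
        set residue = residue - #{money}, usedT = usedT + #{money}
        where user_id = #{userId};
    </update>

- service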

public interface AccountService {
    void decrease(Long userId, BigDecimal money);
}

@Service
public class AccountServiceImpl implements AccountService {

    private static final Logger LOGGER = LoggerFactory.getLogger(AccountServiceImpl.class);

    @Resource
    private AccountDao accountDao;

    @Override
    public void decrease(Long userId, BigDecimal money) {
        LOGGER.info("---------> account-service: start deducting balance");
        accountDao.decrease(userId, money);
        LOGGER.info("---------> account-service: balance deduction finished");
    }
}

- config: same as 2001.

- controller

@RestController
@Slf4j
public class AccountController {

    @Resource
    AccountService accountService;

    @RequestMapping("/account/decrease")
    public CommonResult decrease(@RequestParam("userId") Long userId, @RequestParam("money") BigDecimal money) {
        accountService.decrease(userId, money);
        return new CommonResult(200, "Account balance deducted successfully!");
    }
}

5.5 Testing

正常下单:

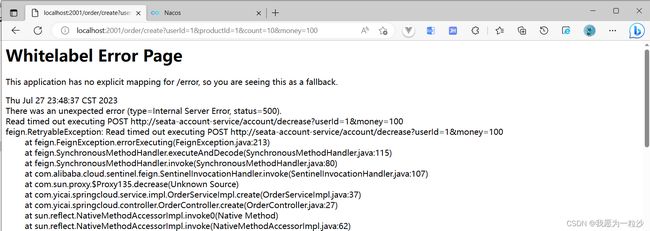

超时异常:

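@Service
public class AccountServiceImpl implements AccountService {

    private static final Logger LOGGER = LoggerFactory.getLogger(AccountServiceImpl.class);

    @Resource
    private AccountDao accountDao;

    @Override
    public void decrease(Long userId, BigDecimal money) {
        LOGGER.info("---------> account-service: start deducting balance");
        // Simulate a slow call: sleep 20 seconds so that the caller times out
        try {
            TimeUnit.SECONDS.sleep(20);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        accountDao.decrease(userId, money);
        LOGGER.info("---------> account-service: balance deduction finished");
    }
}

After the stock and the account balance have been deducted, the order status is never set to completed (it stays 0 instead of changing to 1). Because of Feign's retry mechanism, the account balance may even be deducted more than once.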
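The inconsistency can be reproduced without any infrastructure. The sketch below is a plain in-memory model, not the tutorial's services: PartialFailureSketch, its fields, and the exception standing in for the caller's read timeout are all hypothetical. It runs the same steps in the same order with no global transaction around them.

```java
// In-memory stand-ins for the three databases; no Seata, Feign, or JDBC involved.
class PartialFailureSketch {
    int orderStatus = -1;      // -1 = no order yet, 0 = creating, 1 = completed
    int stockResidue = 100;
    int balanceResidue = 1000;

    // The account service finishes its local transaction, but only after the
    // caller's read timeout has expired, so the caller still sees a failure.
    void decreaseBalanceAfterTimeout(int money) {
        balanceResidue -= money;
        throw new IllegalStateException("Read timed out");
    }

    // Same order of steps as the order service's create method, without a global transaction.
    void createOrder(int count, int money) {
        orderStatus = 0;                     // local transaction 1: insert the order
        stockResidue -= count;               // local transaction 2: deduct stock
        decreaseBalanceAfterTimeout(money);  // local transaction 3: deduct balance
        orderStatus = 1;                     // never reached when the call times out
    }

    public static void main(String[] args) {
        PartialFailureSketch db = new PartialFailureSketch();
        try {
            db.createOrder(10, 100);
        } catch (IllegalStateException e) {
            System.out.println("order failed: " + e.getMessage());
        }
        System.out.println("status=" + db.orderStatus
                + " stock=" + db.stockResidue
                + " balance=" + db.balanceResidue);
    }
}
```

The run ends with status=0 stock=90 balance=900: every local step that completed stays committed, and nothing undoes it when a later step fails. A retry of the timed-out call would subtract the balance a second time.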

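Testing with @GlobalTransactional:

// io.seata.spring.annotation.GlobalTransactional
@Override
@GlobalTransactional(name = "fsp-create-order", rollbackFor = Exception.class)
public void create(Order order) {
    log.info("----------> start creating the order");
    orderDao.create(order);

    log.info("----------> order service calls the storage service to deduct count");
    storageService.decrease(order.getProductId(), order.getCount());
    log.info("----------> storage deduction finished");

    log.info("----------> order service calls the account service to deduct money");
    accountService.decrease(order.getUserId(), order.getMoney());
    log.info("----------> account deduction finished");

    log.info("----------> start updating the order status");
    orderDao.update(order.getUserId(), 0);
    log.info("----------> order status updated");

    log.info("----------> order placed");
}

With the timeout still in place, placing an order now leaves the databases unchanged: the global transaction rolls back, so not even the order record is added.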

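5.6 How Seata Works

Seata (Simple Extensible Autonomous Transaction Architecture) is a distributed transaction solution open-sourced jointly by Ant Financial and Alibaba in January 2019.

The overall flow is:

- The TM begins the distributed transaction (the TM registers a global transaction record with the TC).
- The resources inside the transaction, such as databases and services, are orchestrated according to the business scenario (each RM reports its resource preparation status to the TC).
- The TM ends the distributed transaction and phase one finishes (the TM notifies the TC to commit or roll back the distributed transaction).
- The TC gathers the transaction information and decides whether the distributed transaction commits or rolls back.
- The TC notifies every RM to commit or roll back its resources and phase two finishes.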

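In phase one, Seata intercepts the business SQL and does the following:

- It parses the SQL, finds the business data that the statement will update, and saves that data as the "before image" before it is changed.
- It executes the business SQL to update the business data.
- It saves the updated data as the "after image" and finally generates the row lock.

All of these operations run inside a single database transaction, which guarantees that phase one is atomic.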
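As a rough illustration of the two snapshots, the sketch below applies a stock deduction to one in-memory row and keeps a copy of the row from before and after the change. It is a toy model: PhaseOneSketch and UndoRecord are hypothetical names, a map stands in for a table row, and Seata's real undo log format and row locking are not reproduced.

```java
import java.util.HashMap;
import java.util.Map;

// Toy model of phase one on a single row; Seata's real undo log format differs.
class PhaseOneSketch {

    /** Hypothetical undo record holding both snapshots of the row. */
    static class UndoRecord {
        final Map<String, Integer> beforeImage;
        final Map<String, Integer> afterImage;

        UndoRecord(Map<String, Integer> beforeImage, Map<String, Integer> afterImage) {
            this.beforeImage = beforeImage;
            this.afterImage = afterImage;
        }
    }

    /** Applies "used = used + count, residue = residue - count" and records both images. */
    static UndoRecord decrease(Map<String, Integer> row, int count) {
        Map<String, Integer> before = new HashMap<>(row);   // snapshot before the update
        row.put("used", row.get("used") + count);           // the business update itself
        row.put("residue", row.get("residue") - count);
        Map<String, Integer> after = new HashMap<>(row);    // snapshot after the update
        return new UndoRecord(before, after);
    }

    public static void main(String[] args) {
        Map<String, Integer> row = new HashMap<>();
        row.put("used", 0);
        row.put("residue", 100);
        UndoRecord undo = decrease(row, 10);
        System.out.println("before residue = " + undo.beforeImage.get("residue"));
        System.out.println("after residue  = " + undo.afterImage.get("residue"));
    }
}
```

For a row starting at used = 0 and residue = 100, deducting 10 leaves a before image with residue 100 and an after image with residue 90. In Seata both images are written in the same local transaction as the business update, which is what the atomicity claim above refers to.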

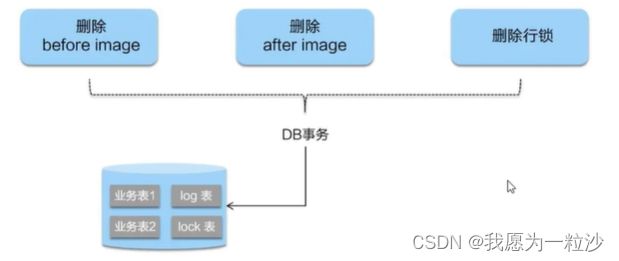

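If phase two is a commit, the business SQL was already committed to the database in phase one, so Seata only needs to delete the snapshot data and row locks saved in phase one to finish the cleanup.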

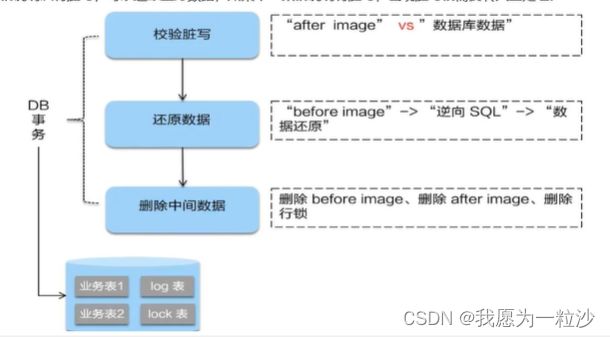

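Phase-two rollback: if phase two is a rollback, Seata has to undo the business SQL executed in phase one and restore the business data. It restores the data from the "before image", but first it must check for dirty writes by comparing the current business data in the database with the "after image". If the two are identical, there was no dirty write and the data can be restored. If they differ, a dirty write occurred and the case has to be handled manually.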
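The dirty-write check can be sketched as a comparison followed by a restore. This is a minimal in-memory model under stated assumptions: PhaseTwoRollbackSketch and its methods are hypothetical, a map stands in for a table row, and returning false stands in for escalating to manual handling.

```java
import java.util.HashMap;
import java.util.Map;

// Toy model of the phase-two rollback decision for a single row.
class PhaseTwoRollbackSketch {

    /**
     * Restores the row from the before image only if the row still equals the
     * after image. Returns false, leaving the row untouched, on a dirty write.
     */
    static boolean rollback(Map<String, Integer> currentRow,
                            Map<String, Integer> beforeImage,
                            Map<String, Integer> afterImage) {
        if (!currentRow.equals(afterImage)) {
            return false;   // someone else changed the row after phase one
        }
        currentRow.clear();
        currentRow.putAll(beforeImage);
        return true;
    }

    /** Builds a row with the two columns used in this example. */
    static Map<String, Integer> row(int used, int residue) {
        Map<String, Integer> row = new HashMap<>();
        row.put("used", used);
        row.put("residue", residue);
        return row;
    }

    public static void main(String[] args) {
        Map<String, Integer> before = row(0, 100);
        Map<String, Integer> after = row(10, 90);

        // The row still matches the after image, so it is restored.
        Map<String, Integer> clean = row(10, 90);
        System.out.println(rollback(clean, before, after) + " " + clean.get("residue"));

        // The row was modified by another writer, so it is left alone.
        Map<String, Integer> dirty = row(15, 85);
        System.out.println(rollback(dirty, before, after) + " " + dirty.get("residue"));
    }
}
```

The first call prints true 100 because the row is restored to its before image. The second prints false 85 because overwriting the row would destroy the other writer's change.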

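Summary: Seata's AT and XA modes are both built on global transactions, and under high concurrency they run into failures to acquire the global lock, so neither mode suits high-concurrency scenarios. TCC mode performs somewhat better than AT mode, but it is still a poor fit once concurrency exceeds about 100. As a rule of thumb, choose AT mode when there is almost no concurrency and TCC mode when concurrency stays below about 100.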