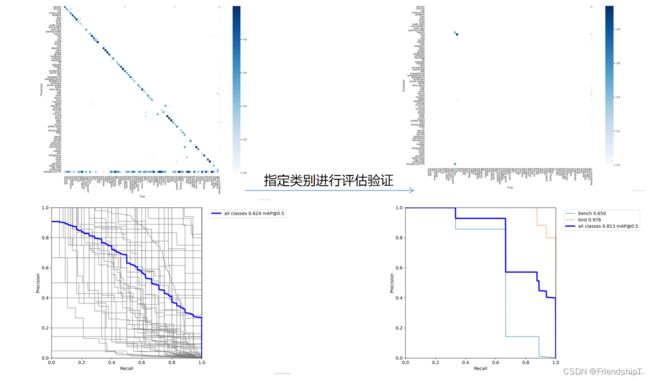

YOLOv5: Evaluation and Validation on Specified Classes

- 前言

- 前提条件

- 相关介绍

- 实验环境

- YOLOv5:指定类别进行评估验证

-

- 代码实现

- 进行验证

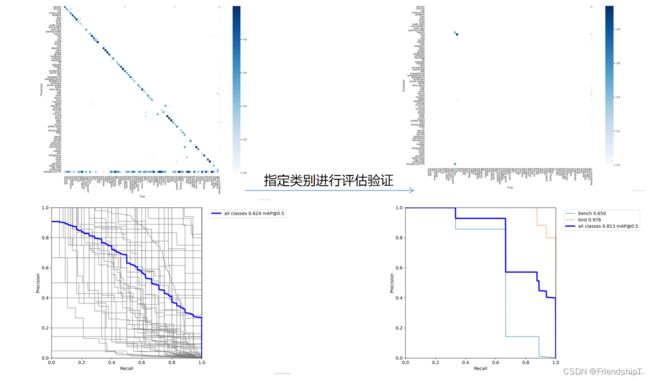

- 没有指定的结果

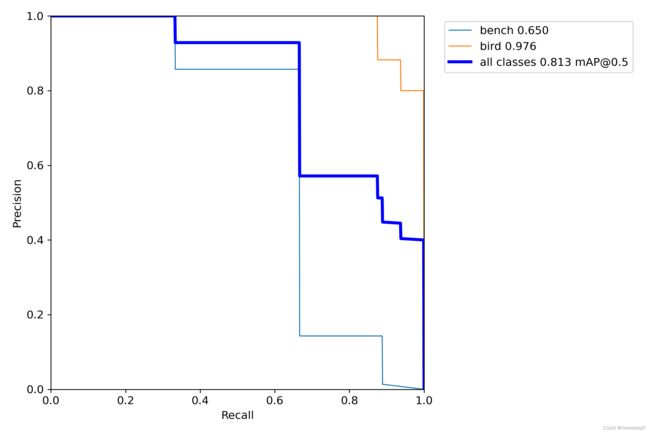

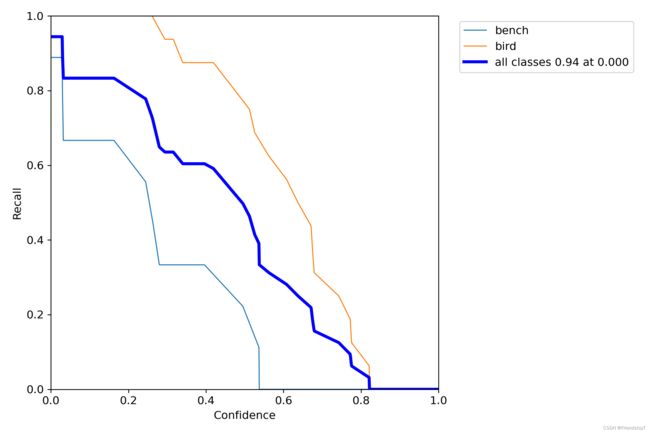

- 指定类别的结果

Preface

- My expertise is limited, so errors and omissions are likely; corrections are welcome.


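Prerequisites

Background

- Python is a cross-platform programming language: a high-level scripting language that combines interpreted, compiled, interactive, and object-oriented features. It was originally designed for writing automation scripts (shell); as new versions and language features were added, it has increasingly been used to develop standalone, large-scale projects.

- PyTorch is a deep learning framework that packages many networks and deep-learning tools so they can be called directly instead of being written from scratch. It comes in CPU and GPU builds; other frameworks include TensorFlow and Caffe. PyTorch was released by Facebook AI Research (FAIR) on the basis of Torch. It is a Python-based scientific computing package that offers two high-level features: (1) tensor computation (similar to NumPy) with strong GPU acceleration; (2) automatic differentiation for building deep neural networks.

- YOLOv5 is a single-stage object detection algorithm that adds several improvements on top of YOLOv4, raising both speed and accuracy. It is a family of object detection architectures and models pretrained on the COCO dataset, and it represents Ultralytics' open-source research into future vision AI methods, incorporating lessons learned and best practices from thousands of hours of research and development.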

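Experimental Environment

YOLOv5: Evaluation and Validation on Specified Classes

- Background: in some scenarios only certain classes matter, so evaluation and validation can be restricted to those classes.

- Example directory structure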

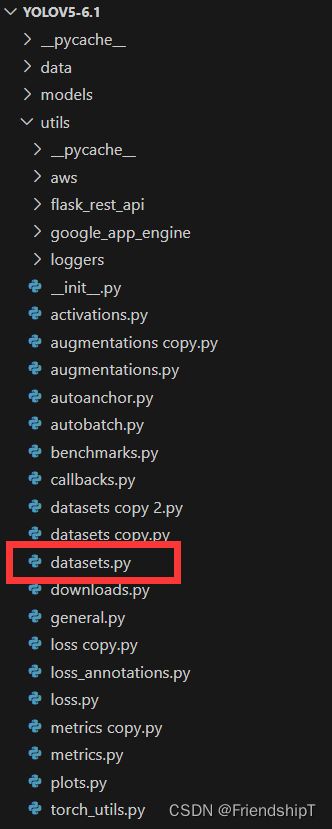

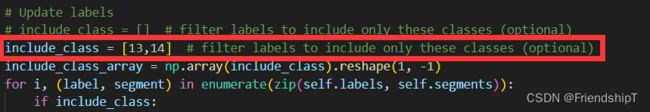
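As a rough illustration of the directory convention that the img2label_paths function in the listing further down relies on, the sketch below maps an image path to its label path by swapping the last `images` directory for `labels` and the extension for `.txt`. The dataset paths are hypothetical, and `image_to_label_path` is a simplified, forward-slash-only helper written for this example, not the library function itself.

```python
def image_to_label_path(image_path: str) -> str:
    """Simplified sketch of the images/ -> labels/ convention (forward slashes only)."""
    # Split on the LAST "/images/" component so parent folders named "images" stay untouched.
    head, sep, tail = image_path.rpartition("/images/")
    if not sep:
        # Assumption for this sketch: treat a missing images/ directory as an error.
        raise ValueError(f"no '/images/' directory in {image_path!r}")
    swapped = head + "/labels/" + tail
    # Drop the file extension (text after the last dot) and append .txt
    return swapped.rsplit(".", 1)[0] + ".txt"


# Hypothetical dataset paths, for illustration only.
example_images = [
    "datasets/demo/images/val/000001.jpg",
    "datasets/images/demo/images/val/000002.png",
]
example_labels = [image_to_label_path(p) for p in example_images]
print(example_labels)
```

Only the last `images` component is swapped, so a parent directory that happens to be called `images` is left alone.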

Code Implementation

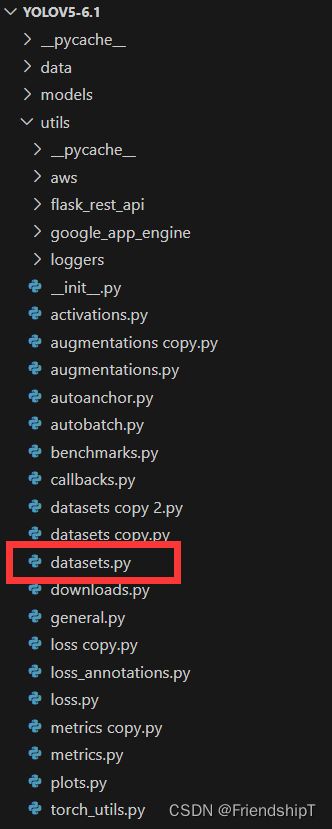

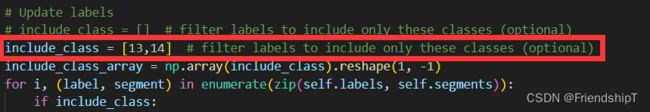

- The main change is to the include_class variable at line 552 of the official utils/datasets.py.
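As a small illustration of what include_class does, the sketch below applies the same row-selection rule to toy labels in plain Python. The listing itself does this with a NumPy boolean mask, `(label[:, 0:1] == include_class_array).any(1)`; the function name `filter_labels` and the sample rows are made up for this example.

```python
from typing import List, Sequence

Label = List[float]  # one row: [class_id, x_center, y_center, width, height], normalized


def filter_labels(labels: Sequence[Label], include_class: Sequence[int]) -> List[Label]:
    """Keep only rows whose class id is in include_class; an empty list keeps everything."""
    if not include_class:
        return [list(row) for row in labels]
    wanted = set(include_class)
    return [list(row) for row in labels if int(row[0]) in wanted]


# Toy labels for one image (hypothetical values).
image_labels = [
    [0, 0.50, 0.50, 0.20, 0.30],
    [13, 0.25, 0.40, 0.10, 0.10],
    [14, 0.70, 0.60, 0.15, 0.20],
    [2, 0.10, 0.90, 0.05, 0.05],
]

kept = filter_labels(image_labels, include_class=[13, 14])
print([int(row[0]) for row in kept])  # class ids that survive the filter
```

With an empty `include_class` every row is kept, which mirrors the `if include_class:` guard in the listing.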

"""

Dataloaders and dataset utils

"""

import glob

import hashlib

import json

import math

import os

import random

import shutil

import time

from itertools import repeat

from multiprocessing.pool import Pool, ThreadPool

from pathlib import Path

from threading import Thread

from zipfile import ZipFile

import cv2

import numpy as np

import torch

import torch.nn.functional as F

import yaml

from PIL import ExifTags, Image, ImageOps

from torch.utils.data import DataLoader, Dataset, dataloader, distributed

from tqdm import tqdm

from utils.augmentations import Albumentations, augment_hsv, copy_paste, letterbox, mixup, random_perspective

from utils.general import (DATASETS_DIR, LOGGER, NUM_THREADS, check_dataset, check_requirements, check_yaml, clean_str,

segments2boxes, xyn2xy, xywh2xyxy, xywhn2xyxy, xyxy2xywhn)

from utils.torch_utils import torch_distributed_zero_first

HELP_URL = 'https://github.com/ultralytics/yolov5/wiki/Train-Custom-Data'

IMG_FORMATS = ['bmp', 'dng', 'jpeg', 'jpg', 'mpo', 'png', 'tif', 'tiff', 'webp']

VID_FORMATS = ['asf', 'avi', 'gif', 'm4v', 'mkv', 'mov', 'mp4', 'mpeg', 'mpg', 'wmv']

for orientation in ExifTags.TAGS.keys():

if ExifTags.TAGS[orientation] == 'Orientation':

break

def get_hash(paths):

size = sum(os.path.getsize(p) for p in paths if os.path.exists(p))

h = hashlib.md5(str(size).encode())

h.update(''.join(paths).encode())

return h.hexdigest()

def exif_size(img):

s = img.size

try:

rotation = dict(img._getexif().items())[orientation]

if rotation == 6:

s = (s[1], s[0])

elif rotation == 8:

s = (s[1], s[0])

except Exception:

pass

return s

def exif_transpose(image):

"""

Transpose a PIL image accordingly if it has an EXIF Orientation tag.

Inplace version of https://github.com/python-pillow/Pillow/blob/master/src/PIL/ImageOps.py exif_transpose()

:param image: The image to transpose.

:return: An image.

"""

exif = image.getexif()

orientation = exif.get(0x0112, 1)

if orientation > 1:

method = {2: Image.FLIP_LEFT_RIGHT,

3: Image.ROTATE_180,

4: Image.FLIP_TOP_BOTTOM,

5: Image.TRANSPOSE,

6: Image.ROTATE_270,

7: Image.TRANSVERSE,

8: Image.ROTATE_90,

}.get(orientation)

if method is not None:

image = image.transpose(method)

del exif[0x0112]

image.info["exif"] = exif.tobytes()

return image

def create_dataloader(path, imgsz, batch_size, stride, single_cls=False, hyp=None, augment=False, cache=False, pad=0.0,

rect=False, rank=-1, workers=8, image_weights=False, quad=False, prefix='', shuffle=False):

'''

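Called from train.py to build the train loader, dataset, and test loader.

Custom dataloader function: uses LoadImagesAndLabels to load the dataset (including augmentation), the distributed sampler DistributedSampler,

and the custom InfiniteDataLoader to sample data continuously.

:param path: image data path for train/test, e.g. ../datasets/VOC/images/train2007

:param imgsz: train/test image size (after augmentation), e.g. 640

:param batch_size: batch size, e.g. 8/16/32

:param stride: maximum model stride, 32 (strides are [32 16 8])

:param single_cls: whether the dataset is single-class, default False

:param hyp: hyperparameter dict used in training (learning rate, etc.); here mainly the augmentation coefficients (rotation, translation, ...) are used

:param augment: whether to apply data augmentation, True

:param cache: whether to cache images, False

:param pad: padding applied when setting the shape for rectangular training, default 0.0

:param rect: whether to enable rectangular train/test; by default off for the training set and on for the validation set

:param rank: process rank in multi-GPU training; -1 with one GPU means no distributed training, -1 with several GPUs uses DataParallel; default -1

:param workers: DataLoader num_workers, the number of CPU worker processes that load data

:param image_weights: whether to pick images during training according to weights derived from the ground-truth box distribution, default False

:param quad: whether the DataLoader uses collate_fn4 instead of collate_fn, default False

:param prefix: display tag, usually train/val; also used when saving the cache file while processing labels

:param shuffle: whether to shuffle, default False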

'''

if rect and shuffle:

LOGGER.warning('WARNING: --rect is incompatible with DataLoader shuffle, setting shuffle=False')

shuffle = False

with torch_distributed_zero_first(rank):

dataset = LoadImagesAndLabels(path, imgsz, batch_size,

augment=augment,

hyp=hyp,

rect=rect,

cache_images=cache,

single_cls=single_cls,

stride=int(stride),

pad=pad,

image_weights=image_weights,

prefix=prefix)

batch_size = min(batch_size, len(dataset))

nd = torch.cuda.device_count()

nw = min([os.cpu_count() // max(nd, 1), batch_size if batch_size > 1 else 0, workers])

sampler = None if rank == -1 else distributed.DistributedSampler(dataset, shuffle=shuffle)

loader = DataLoader if image_weights else InfiniteDataLoader

return loader(dataset,

batch_size=batch_size,

shuffle=shuffle and sampler is None,

num_workers=nw,

sampler=sampler,

pin_memory=True,

collate_fn=LoadImagesAndLabels.collate_fn4 if quad else LoadImagesAndLabels.collate_fn), dataset

class InfiniteDataLoader(dataloader.DataLoader):

""" Dataloader that reuses workers

Uses same syntax as vanilla DataLoader

Used as the custom DataLoader when image_weights=False (together with _RepeatSampler below)

https://github.com/ultralytics/yolov5/pull/876

InfiniteDataLoader and _RepeatSampler wrap DataLoader and replace the plain DataLoader so that data can be sampled indefinitely

"""

def __init__(self, *args, **kwargs):

super().__init__(*args, **kwargs)

object.__setattr__(self, 'batch_sampler', _RepeatSampler(self.batch_sampler))

self.iterator = super().__iter__()

def __len__(self):

return len(self.batch_sampler.sampler)

def __iter__(self):

for i in range(len(self)):

yield next(self.iterator)

class _RepeatSampler:

""" Sampler that repeats forever

This part performs the continuous sampling

Args:

sampler (Sampler)

"""

def __init__(self, sampler):

self.sampler = sampler

def __iter__(self):

while True:

yield from iter(self.sampler)

class LoadImages:

"""Used in detect.py

Loads the images/videos in a folder

Defines the iterator used by detect.py

"""

def __init__(self, path, img_size=640, stride=32, auto=True):

p = str(Path(path).resolve())

if '*' in p:

files = sorted(glob.glob(p, recursive=True))

elif os.path.isdir(p):

files = sorted(glob.glob(os.path.join(p, '*.*')))

elif os.path.isfile(p):

files = [p]

else:

raise Exception(f'ERROR: {p} does not exist')

images = [x for x in files if x.split('.')[-1].lower() in IMG_FORMATS]

videos = [x for x in files if x.split('.')[-1].lower() in VID_FORMATS]

ni, nv = len(images), len(videos)

self.img_size = img_size

self.stride = stride

self.files = images + videos

self.nf = ni + nv

self.video_flag = [False] * ni + [True] * nv

self.mode = 'image'

self.auto = auto

if any(videos):

self.new_video(videos[0])

else:

self.cap = None

assert self.nf > 0, f'No images or videos found in {p}. ' \

f'Supported formats are:\nimages: {IMG_FORMATS}\nvideos: {VID_FORMATS}'

def __iter__(self):

self.count = 0

return self

def __next__(self):

if self.count == self.nf:

raise StopIteration

path = self.files[self.count]

if self.video_flag[self.count]:

self.mode = 'video'

ret_val, img0 = self.cap.read()

while not ret_val:

self.count += 1

self.cap.release()

if self.count == self.nf:

raise StopIteration

else:

path = self.files[self.count]

self.new_video(path)

ret_val, img0 = self.cap.read()

self.frame += 1

s = f'video {self.count + 1}/{self.nf} ({self.frame}/{self.frames}) {path}: '

else:

self.count += 1

img0 = cv2.imread(path)

assert img0 is not None, f'Image Not Found {path}'

s = f'image {self.count}/{self.nf} {path}: '

img = letterbox(img0, self.img_size, stride=self.stride, auto=self.auto)[0]

img = img.transpose((2, 0, 1))[::-1]

img = np.ascontiguousarray(img)

return path, img, img0, self.cap, s

def new_video(self, path):

self.frame = 0

self.cap = cv2.VideoCapture(path)

self.frames = int(self.cap.get(cv2.CAP_PROP_FRAME_COUNT))

def __len__(self):

return self.nf

class LoadWebcam:

def __init__(self, pipe='0', img_size=640, stride=32):

self.img_size = img_size

self.stride = stride

self.pipe = eval(pipe) if pipe.isnumeric() else pipe

self.cap = cv2.VideoCapture(self.pipe)

self.cap.set(cv2.CAP_PROP_BUFFERSIZE, 3)

def __iter__(self):

self.count = -1

return self

def __next__(self):

self.count += 1

if cv2.waitKey(1) == ord('q'):

self.cap.release()

cv2.destroyAllWindows()

raise StopIteration

ret_val, img0 = self.cap.read()

img0 = cv2.flip(img0, 1)

assert ret_val, f'Camera Error {self.pipe}'

img_path = 'webcam.jpg'

s = f'webcam {self.count}: '

img = letterbox(img0, self.img_size, stride=self.stride)[0]

img = img.transpose((2, 0, 1))[::-1]

img = np.ascontiguousarray(img)

return img_path, img, img0, None, s

def __len__(self):

return 0

class LoadStreams:

def __init__(self, sources='streams.txt', img_size=640, stride=32, auto=True):

self.mode = 'stream'

self.img_size = img_size

self.stride = stride

if os.path.isfile(sources):

with open(sources) as f:

sources = [x.strip() for x in f.read().strip().splitlines() if len(x.strip())]

else:

sources = [sources]

n = len(sources)

self.imgs, self.fps, self.frames, self.threads = [None] * n, [0] * n, [0] * n, [None] * n

self.sources = [clean_str(x) for x in sources]

self.auto = auto

for i, s in enumerate(sources):

st = f'{i + 1}/{n}: {s}... '

if 'youtube.com/' in s or 'youtu.be/' in s:

check_requirements(('pafy', 'youtube_dl==2020.12.2'))

import pafy

s = pafy.new(s).getbest(preftype="mp4").url

s = eval(s) if s.isnumeric() else s

cap = cv2.VideoCapture(s)

assert cap.isOpened(), f'{st}Failed to open {s}'

w = int(cap.get(cv2.CAP_PROP_FRAME_WIDTH))

h = int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT))

fps = cap.get(cv2.CAP_PROP_FPS)

self.frames[i] = max(int(cap.get(cv2.CAP_PROP_FRAME_COUNT)), 0) or float('inf')

self.fps[i] = max((fps if math.isfinite(fps) else 0) % 100, 0) or 30

_, self.imgs[i] = cap.read()

self.threads[i] = Thread(target=self.update, args=([i, cap, s]), daemon=True)

LOGGER.info(f"{st} Success ({self.frames[i]} frames {w}x{h} at {self.fps[i]:.2f} FPS)")

self.threads[i].start()

LOGGER.info('')

s = np.stack([letterbox(x, self.img_size, stride=self.stride, auto=self.auto)[0].shape for x in self.imgs])

self.rect = np.unique(s, axis=0).shape[0] == 1

if not self.rect:

LOGGER.warning('WARNING: Stream shapes differ. For optimal performance supply similarly-shaped streams.')

def update(self, i, cap, stream):

n, f, read = 0, self.frames[i], 1

while cap.isOpened() and n < f:

n += 1

cap.grab()

if n % read == 0:

success, im = cap.retrieve()

if success:

self.imgs[i] = im

else:

LOGGER.warning('WARNING: Video stream unresponsive, please check your IP camera connection.')

self.imgs[i] = np.zeros_like(self.imgs[i])

cap.open(stream)

time.sleep(1 / self.fps[i])

def __iter__(self):

self.count = -1

return self

def __next__(self):

self.count += 1

if not all(x.is_alive() for x in self.threads) or cv2.waitKey(1) == ord('q'):

cv2.destroyAllWindows()

raise StopIteration

img0 = self.imgs.copy()

img = [letterbox(x, self.img_size, stride=self.stride, auto=self.rect and self.auto)[0] for x in img0]

img = np.stack(img, 0)

img = img[..., ::-1].transpose((0, 3, 1, 2))

img = np.ascontiguousarray(img)

return self.sources, img, img0, None, ''

def __len__(self):

return len(self.sources)

def img2label_paths(img_paths):

sa, sb = os.sep + 'images' + os.sep, os.sep + 'labels' + os.sep

return [sb.join(x.rsplit(sa, 1)).rsplit('.', 1)[0] + '.txt' for x in img_paths]

class LoadImagesAndLabels(Dataset):

cache_version = 0.6

def __init__(self, path, img_size=640, batch_size=16, augment=False, hyp=None, rect=False, image_weights=False,

cache_images=False, single_cls=False, stride=32, pad=0.0, prefix=''):

"""

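Initialization does little real work; it mostly defines attributes on self that __getitem__() later uses for augmentation, so the key to reading this code is understanding what each self attribute means

:param path: image data path for train/test, e.g. ../datasets/VOC/images/train2007

:param img_size: train/test image size (after augmentation), e.g. 640

:param batch_size: batch size, e.g. 8/16/32

:param augment: whether to apply data augmentation, True

:param hyp: hyperparameter dict used in training (learning rate, etc.); here mainly the augmentation coefficients (rotation, translation, ...) are used

:param rect: whether to enable rectangular train/test; by default off for the training set and on for the validation set

:param image_weights: whether to pick images during training according to weights derived from the ground-truth box distribution, default False

:param cache_images: whether to cache images, False

:param single_cls: whether the dataset is single-class, default False

:param stride: maximum model stride, 32 (strides are [32 16 8])

:param pad: padding applied when setting the shape for rectangular training, default 0.0

:param prefix: display tag, usually train/val; also used when saving the cache file while processing labels

self.img_files: {list: N} relative paths of all images in the dataset

self.label_files: {list: N} relative paths of all labels in the dataset

cache label -> verify_image_label

self.labels: if no image in the dataset has a polygon label, labels holds the original labels (all ordinary box labels);

otherwise ordinary ground-truth box labels are stored as they are, and polygon ground truths are converted to box labels by segments2boxes

self.shapes: shapes of all images

self.segments: if no image in the dataset has a polygon label, self.segments=None;

otherwise it holds all original labels of every image that has polygon ground truths (polygon labels for certain, possibly ordinary box labels as well)

self.batch: the batch index each image belongs to

self.n: total number of images in the dataset

self.indices: indices of all images

when self.rect=True, self.batch_shapes holds the shape of each batch (images in the same batch share one shape)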

"""

self.img_size = img_size

self.augment = augment

self.hyp = hyp

self.image_weights = image_weights

self.rect = False if image_weights else rect

self.mosaic = self.augment and not self.rect

self.mosaic_border = [-img_size // 2, -img_size // 2]

self.stride = stride

self.path = path

self.albumentations = Albumentations() if augment else None

try:

f = []

for p in path if isinstance(path, list) else [path]:

p = Path(p)

if p.is_dir():

f += glob.glob(str(p / '**' / '*.*'), recursive=True)

elif p.is_file():

with open(p) as t:

t = t.read().strip().splitlines()

parent = str(p.parent) + os.sep

f += [x.replace('./', parent) if x.startswith('./') else x for x in t]

else:

raise Exception(f'{prefix}{p} does not exist')

self.img_files = sorted(x.replace('/', os.sep) for x in f if x.split('.')[-1].lower() in IMG_FORMATS)

assert self.img_files, f'{prefix}No images found'

except Exception as e:

raise Exception(f'{prefix}Error loading data from {path}: {e}\nSee {HELP_URL}')

self.label_files = img2label_paths(self.img_files)

cache_path = (p if p.is_file() else Path(self.label_files[0]).parent).with_suffix('.cache')

try:

cache, exists = np.load(cache_path, allow_pickle=True).item(), True

assert cache['version'] == self.cache_version

assert cache['hash'] == get_hash(self.label_files + self.img_files)

except Exception:

cache, exists = self.cache_labels(cache_path, prefix), False

nf, nm, ne, nc, n = cache.pop('results')

if exists:

d = f"Scanning '{cache_path}' images and labels... {nf} found, {nm} missing, {ne} empty, {nc} corrupt"

tqdm(None, desc=prefix + d, total=n, initial=n)

if cache['msgs']:

LOGGER.info('\n'.join(cache['msgs']))

assert nf > 0 or not augment, f'{prefix}No labels in {cache_path}. Can not train without labels. See {HELP_URL}'

[cache.pop(k) for k in ('hash', 'version', 'msgs')]

labels, shapes, self.segments = zip(*cache.values())

self.labels = list(labels)

self.shapes = np.array(shapes, dtype=np.float64)

self.img_files = list(cache.keys())

self.label_files = img2label_paths(cache.keys())

n = len(shapes)

bi = np.floor(np.arange(n) / batch_size).astype(int)  # builtin int; the np.int alias was removed in NumPy 1.24

nb = bi[-1] + 1

self.batch = bi

self.n = n

self.indices = range(n)

include_class = [13, 14]  # keep only labels of these class indices; an empty list keeps every class

include_class_array = np.array(include_class).reshape(1, -1)

for i, (label, segment) in enumerate(zip(self.labels, self.segments)):

if include_class:

j = (label[:, 0:1] == include_class_array).any(1)

self.labels[i] = label[j]

if segment:

self.segments[i] = segment[j]

if single_cls:

self.labels[i][:, 0] = 0

if segment:

self.segments[i][:, 0] = 0

if self.rect:

s = self.shapes

ar = s[:, 1] / s[:, 0]

irect = ar.argsort()

self.img_files = [self.img_files[i] for i in irect]

self.label_files = [self.label_files[i] for i in irect]

self.labels = [self.labels[i] for i in irect]

self.shapes = s[irect]

ar = ar[irect]

shapes = [[1, 1]] * nb

for i in range(nb):

ari = ar[bi == i]

mini, maxi = ari.min(), ari.max()

if maxi < 1:

shapes[i] = [maxi, 1]

elif mini > 1:

shapes[i] = [1, 1 / mini]

self.batch_shapes = np.ceil(np.array(shapes) * img_size / stride + pad).astype(int) * stride  # np.int alias removed in NumPy 1.24

self.imgs, self.img_npy = [None] * n, [None] * n

if cache_images:

if cache_images == 'disk':

self.im_cache_dir = Path(Path(self.img_files[0]).parent.as_posix() + '_npy')

self.img_npy = [self.im_cache_dir / Path(f).with_suffix('.npy').name for f in self.img_files]

self.im_cache_dir.mkdir(parents=True, exist_ok=True)

gb = 0

self.img_hw0, self.img_hw = [None] * n, [None] * n

results = ThreadPool(NUM_THREADS).imap(self.load_image, range(n))

pbar = tqdm(enumerate(results), total=n)

for i, x in pbar:

if cache_images == 'disk':

if not self.img_npy[i].exists():

np.save(self.img_npy[i].as_posix(), x[0])

gb += self.img_npy[i].stat().st_size

else:

self.imgs[i], self.img_hw0[i], self.img_hw[i] = x

gb += self.imgs[i].nbytes

pbar.desc = f'{prefix}Caching images ({gb / 1E9:.1f}GB {cache_images})'

pbar.close()

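def cache_labels(self, path=Path('./labels.cache'), prefix=''):

"""Used in __init__ to cache the dataset labels

Loads the label information and generates the cache file. Cache dataset labels, check images and read shapes

:params path: where the cache file is saved

:params prefix: log prefix (the colored, highlighted part)

:return x: the dict stored in the cache

It contains: x[im_file] = [l, shape, segments]

Each image and its label are stored in x; in the end x holds every image's relative path, ground-truth boxes, shape, and all polygon ground truths

im_file: relative path of the current image

l: labels of all ground-truth boxes in the current image (without segment polygon labels) [gt_num, cls+xywh(normalized)]

shape: shape of the current image

segments: labels of all ground truths in the current image (with segment polygon labels) [gt_num, xy1...]

hash: hash of the image and label files

results: labels found nf, labels missing nm, empty labels ne, corrupt labels nc, total images/labels len(self.img_files)

msgs: messages collected for the whole dataset

version: current cache version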

"""

x = {}

nm, nf, ne, nc, msgs = 0, 0, 0, 0, []

desc = f"{prefix}Scanning '{path.parent / path.stem}' images and labels..."

with Pool(NUM_THREADS) as pool:

pbar = tqdm(pool.imap(verify_image_label, zip(self.img_files, self.label_files, repeat(prefix))),

desc=desc, total=len(self.img_files))

for im_file, lb, shape, segments, nm_f, nf_f, ne_f, nc_f, msg in pbar:

nm += nm_f

nf += nf_f

ne += ne_f

nc += nc_f

if im_file:

x[im_file] = [lb, shape, segments]

if msg:

msgs.append(msg)

pbar.desc = f"{desc}{nf} found, {nm} missing, {ne} empty, {nc} corrupt"

pbar.close()

if msgs:

LOGGER.info('\n'.join(msgs))

if nf == 0:

LOGGER.warning(f'{prefix}WARNING: No labels found in {path}. See {HELP_URL}')

x['hash'] = get_hash(self.label_files + self.img_files)

x['results'] = nf, nm, ne, nc, len(self.img_files)

x['msgs'] = msgs

x['version'] = self.cache_version

try:

np.save(path, x)

path.with_suffix('.cache.npy').rename(path)

LOGGER.info(f'{prefix}New cache created: {path}')

except Exception as e:

LOGGER.warning(f'{prefix}WARNING: Cache directory {path.parent} is not writeable: {e}')

return x

def __len__(self):

'''

Returns the number of images in the dataset.

'''

return len(self.img_files)

def __getitem__(self, index):

"""

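This is the data-augmentation function; it is normally called batch_size times per batch.

Training augmentation: mosaic (random_perspective) + hsv + vertical and horizontal flips

Test augmentation: letterbox

:return torch.from_numpy(img): image data for this index (after augmentation) [3, 640, 640]

:return labels_out: ground-truth labels for this index, e.g. [6, 6] = [gt_num, 0+class+xywh(normalized)]

:return self.img_files[index]: path of the image at this index

:return shapes: None when mosaic is used; otherwise (h0, w0), ((h / h0, w / w0), pad) for COCO mAP rescaling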

"""

index = self.indices[index]

hyp = self.hyp

mosaic = self.mosaic and random.random() < hyp['mosaic']

if mosaic:

img, labels = self.load_mosaic(index)

shapes = None

if random.random() < hyp['mixup']:

img, labels = mixup(img, labels, *self.load_mosaic(random.randint(0, self.n - 1)))

else:

img, (h0, w0), (h, w) = self.load_image(index)

shape = self.batch_shapes[self.batch[index]] if self.rect else self.img_size

img, ratio, pad = letterbox(img, shape, auto=False, scaleup=self.augment)

shapes = (h0, w0), ((h / h0, w / w0), pad)

labels = self.labels[index].copy()

if labels.size:

labels[:, 1:] = xywhn2xyxy(labels[:, 1:], ratio[0] * w, ratio[1] * h, padw=pad[0], padh=pad[1])

if self.augment:

img, labels = random_perspective(img, labels,

degrees=hyp['degrees'],

translate=hyp['translate'],

scale=hyp['scale'],

shear=hyp['shear'],

perspective=hyp['perspective'])

nl = len(labels)

if nl:

labels[:, 1:5] = xyxy2xywhn(labels[:, 1:5], w=img.shape[1], h=img.shape[0], clip=True, eps=1E-3)

if self.augment:

img, labels = self.albumentations(img, labels)

nl = len(labels)

augment_hsv(img, hgain=hyp['hsv_h'], sgain=hyp['hsv_s'], vgain=hyp['hsv_v'])

if random.random() < hyp['flipud']:

img = np.flipud(img)

if nl:

labels[:, 2] = 1 - labels[:, 2]

if random.random() < hyp['fliplr']:

img = np.fliplr(img)

if nl:

labels[:, 1] = 1 - labels[:, 1]

labels_out = torch.zeros((nl, 6))

if nl:

labels_out[:, 1:] = torch.from_numpy(labels)

img = img.transpose((2, 0, 1))[::-1]

img = np.ascontiguousarray(img)

return torch.from_numpy(img), labels_out, self.img_files[index], shapes

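def load_image(self, i):

"""Used by __getitem__ and load_mosaic of LoadImagesAndLabels

Loads the image for the given index from self (the cache) or from its file path, and scales it so that the longer of h/w equals self.img_size, with the shorter side scaled by the same ratio

loads 1 image from dataset, returns img, original hw, resized hw

:params self: normally the LoadImagesAndLabels instance

:param i: index of the current image

:return: img: the resized image

(h0, w0): hw_original, h/w of the original image

img.shape[:2]: hw_resized, h/w after resizing (longer side scaled to self.img_size, shorter side by the same ratio)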

"""

im = self.imgs[i]

if im is None:

npy = self.img_npy[i]

if npy and npy.exists():

im = np.load(npy)

else:

f = self.img_files[i]

im = cv2.imread(f)

assert im is not None, f'Image Not Found {f}'

h0, w0 = im.shape[:2]

r = self.img_size / max(h0, w0)

if r != 1:

im = cv2.resize(im,

(int(w0 * r), int(h0 * r)),

interpolation=cv2.INTER_LINEAR if (self.augment or r > 1) else cv2.INTER_AREA)

return im, (h0, w0), im.shape[:2]

else:

return self.imgs[i], self.img_hw0[i], self.img_hw[i]

def load_mosaic(self, index):

"""Used by __getitem__ of LoadImagesAndLabels for mosaic augmentation

Stitches four images into one mosaic image. loads images in a 4-mosaic

:param index: index of the image to load

:return: img4: one image after mosaic and random perspective transform, numpy(640, 640, 3)

labels4: targets for img4 [M, cls+x1y1x2y2]

"""

labels4, segments4 = [], []

s = self.img_size

yc, xc = (int(random.uniform(-x, 2 * s + x)) for x in self.mosaic_border)

indices = [index] + random.choices(self.indices, k=3)

random.shuffle(indices)

for i, index in enumerate(indices):

img, _, (h, w) = self.load_image(index)

if i == 0:

img4 = np.full((s * 2, s * 2, img.shape[2]), 114, dtype=np.uint8)

x1a, y1a, x2a, y2a = max(xc - w, 0), max(yc - h, 0), xc, yc

x1b, y1b, x2b, y2b = w - (x2a - x1a), h - (y2a - y1a), w, h

elif i == 1:

x1a, y1a, x2a, y2a = xc, max(yc - h, 0), min(xc + w, s * 2), yc

x1b, y1b, x2b, y2b = 0, h - (y2a - y1a), min(w, x2a - x1a), h

elif i == 2:

x1a, y1a, x2a, y2a = max(xc - w, 0), yc, xc, min(s * 2, yc + h)

x1b, y1b, x2b, y2b = w - (x2a - x1a), 0, w, min(y2a - y1a, h)

elif i == 3:

x1a, y1a, x2a, y2a = xc, yc, min(xc + w, s * 2), min(s * 2, yc + h)

x1b, y1b, x2b, y2b = 0, 0, min(w, x2a - x1a), min(y2a - y1a, h)

img4[y1a:y2a, x1a:x2a] = img[y1b:y2b, x1b:x2b]

padw = x1a - x1b

padh = y1a - y1b

labels, segments = self.labels[index].copy(), self.segments[index].copy()

if labels.size:

labels[:, 1:] = xywhn2xyxy(labels[:, 1:], w, h, padw, padh)

segments = [xyn2xy(x, w, h, padw, padh) for x in segments]

labels4.append(labels)

segments4.extend(segments)

labels4 = np.concatenate(labels4, 0)

for x in (labels4[:, 1:], *segments4):

np.clip(x, 0, 2 * s, out=x)

img4, labels4, segments4 = copy_paste(img4, labels4, segments4, p=self.hyp['copy_paste'])

img4, labels4 = random_perspective(img4, labels4, segments4,

degrees=self.hyp['degrees'],

translate=self.hyp['translate'],

scale=self.hyp['scale'],

shear=self.hyp['shear'],

perspective=self.hyp['perspective'],

border=self.mosaic_border)

return img4, labels4

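def load_mosaic9(self, index):

"""Used by __getitem__ of LoadImagesAndLabels as a replacement for the 4-image mosaic augmentation

Stitches nine images into one mosaic image. loads images in a 9-mosaic

:param self:

:param index: index of the image to load

:return: img9: one image after mosaic and affine augmentation

labels9: targets for img9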

"""

labels9, segments9 = [], []

s = self.img_size

indices = [index] + random.choices(self.indices, k=8)

random.shuffle(indices)

hp, wp = -1, -1

for i, index in enumerate(indices):

img, _, (h, w) = self.load_image(index)

if i == 0:

img9 = np.full((s * 3, s * 3, img.shape[2]), 114, dtype=np.uint8)

h0, w0 = h, w

c = s, s, s + w, s + h

elif i == 1:

c = s, s - h, s + w, s

elif i == 2:

c = s + wp, s - h, s + wp + w, s

elif i == 3:

c = s + w0, s, s + w0 + w, s + h

elif i == 4:

c = s + w0, s + hp, s + w0 + w, s + hp + h

elif i == 5:

c = s + w0 - w, s + h0, s + w0, s + h0 + h

elif i == 6:

c = s + w0 - wp - w, s + h0, s + w0 - wp, s + h0 + h

elif i == 7:

c = s - w, s + h0 - h, s, s + h0

elif i == 8:

c = s - w, s + h0 - hp - h, s, s + h0 - hp

padx, pady = c[:2]

x1, y1, x2, y2 = (max(x, 0) for x in c)

labels, segments = self.labels[index].copy(), self.segments[index].copy()

if labels.size:

labels[:, 1:] = xywhn2xyxy(labels[:, 1:], w, h, padx, pady)

segments = [xyn2xy(x, w, h, padx, pady) for x in segments]

labels9.append(labels)

segments9.extend(segments)

img9[y1:y2, x1:x2] = img[y1 - pady:, x1 - padx:]

hp, wp = h, w

yc, xc = (int(random.uniform(0, s)) for _ in self.mosaic_border)

img9 = img9[yc:yc + 2 * s, xc:xc + 2 * s]

labels9 = np.concatenate(labels9, 0)

labels9[:, [1, 3]] -= xc

labels9[:, [2, 4]] -= yc

c = np.array([xc, yc])

segments9 = [x - c for x in segments9]

for x in (labels9[:, 1:], *segments9):

np.clip(x, 0, 2 * s, out=x)

img9, labels9 = random_perspective(img9, labels9, segments9,

degrees=self.hyp['degrees'],

translate=self.hyp['translate'],

scale=self.hyp['scale'],

shear=self.hyp['shear'],

perspective=self.hyp['perspective'],

border=self.mosaic_border)

return img9, labels9

@staticmethod

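def collate_fn(batch):

"""Called when create_dataloader builds the DataLoader:

Collate function that packs images and labels together

:return torch.stack(img, 0): e.g. [16, 3, 640, 640], the images of the whole batch

:return torch.cat(label, 0): e.g. [15, 6] [num_target, img_index+class_index+xywh(normalized)], the labels of the whole batch

:return path: paths of all images in the batch

:return shapes: (h0, w0), ((h / h0, w / w0), pad) for COCO mAP rescaling

PyTorch's DataLoader passes each batch through this function. Overriding it records which labels in the batch belong to which image, for example

[[0, 6, 0.5, 0.5, 0.26, 0.35],

[0, 6, 0.5, 0.5, 0.26, 0.35],

[1, 6, 0.5, 0.5, 0.26, 0.35],

[2, 6, 0.5, 0.5, 0.26, 0.35],]

The first two rows belong to the first image, the third row to the second image, and so on.

Note: this function is normally called once after __getitem__ has been called batch_size times,

and packs the batch_size images together with their labels.

It is worth stepping through this in a debugger to confirm that the returned data matches the description above.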

"""

img, label, path, shapes = zip(*batch)

for i, lb in enumerate(label):

lb[:, 0] = i

return torch.stack(img, 0), torch.cat(label, 0), path, shapes

@staticmethod

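def collate_fn4(batch):

"""Also called when create_dataloader builds the DataLoader:

An experimental quad-collate function by the YOLOv5 author; when the train.py option quad=True, collate_fn4 is used instead of collate_fn

Effect: where collate_fn returns images of shape [16, 3, 640, 640], collate_fn4 returns [4, 3, 1280, 1280]

Combines 4 mosaic images [1, 3, 640, 640] into one large mosaic image [1, 3, 1280, 1280]

Processes the batch four images at a time: with probability 0.5 the four images are stitched into one large image, and with probability 0.5 a single image is upsampled 2x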

"""

img, label, path, shapes = zip(*batch)

n = len(shapes) // 4

img4, label4, path4, shapes4 = [], [], path[:n], shapes[:n]

ho = torch.tensor([[0.0, 0, 0, 1, 0, 0]])

wo = torch.tensor([[0.0, 0, 1, 0, 0, 0]])

s = torch.tensor([[1, 1, 0.5, 0.5, 0.5, 0.5]])

for i in range(n):

i *= 4

if random.random() < 0.5:

im = F.interpolate(img[i].unsqueeze(0).float(), scale_factor=2.0, mode='bilinear', align_corners=False)[

0].type(img[i].type())

lb = label[i]

else:

im = torch.cat((torch.cat((img[i], img[i + 1]), 1), torch.cat((img[i + 2], img[i + 3]), 1)), 2)

lb = torch.cat((label[i], label[i + 1] + ho, label[i + 2] + wo, label[i + 3] + ho + wo), 0) * s

img4.append(im)

label4.append(lb)

for i, lb in enumerate(label4):

lb[:, 0] = i

return torch.stack(img4, 0), torch.cat(label4, 0), path4, shapes4

def create_folder(path='./new'):

if os.path.exists(path):

shutil.rmtree(path)

os.makedirs(path)

def flatten_recursive(path=DATASETS_DIR / 'coco128'):

new_path = Path(str(path) + '_flat')

create_folder(new_path)

for file in tqdm(glob.glob(str(Path(path)) + '/**/*.*', recursive=True)):

shutil.copyfile(file, new_path / Path(file).name)

def extract_boxes(path=DATASETS_DIR / 'coco128'):

path = Path(path)

shutil.rmtree(path / 'classifier') if (path / 'classifier').is_dir() else None

files = list(path.rglob('*.*'))

n = len(files)

for im_file in tqdm(files, total=n):

if im_file.suffix[1:] in IMG_FORMATS:

im = cv2.imread(str(im_file))[..., ::-1]

h, w = im.shape[:2]

lb_file = Path(img2label_paths([str(im_file)])[0])

if Path(lb_file).exists():

with open(lb_file) as f:

lb = np.array([x.split() for x in f.read().strip().splitlines()], dtype=np.float32)

for j, x in enumerate(lb):

c = int(x[0])

f = (path / 'classifier') / f'{c}' / f'{path.stem}_{im_file.stem}_{j}.jpg'

if not f.parent.is_dir():

f.parent.mkdir(parents=True)

b = x[1:] * [w, h, w, h]

b[2:] = b[2:] * 1.2 + 3

b = xywh2xyxy(b.reshape(-1, 4)).ravel().astype(int)  # np.int alias removed in NumPy 1.24

b[[0, 2]] = np.clip(b[[0, 2]], 0, w)

b[[1, 3]] = np.clip(b[[1, 3]], 0, h)

assert cv2.imwrite(str(f), im[b[1]:b[3], b[0]:b[2]]), f'box failure in {f}'

def autosplit(path=DATASETS_DIR / 'coco128/images', weights=(0.9, 0.1, 0.0), annotated_only=False):

""" Autosplit a dataset into train/val/test splits and save path/autosplit_*.txt files

Usage: from utils.datasets import *; autosplit()

Arguments

path: Path to images directory

weights: Train, val, test weights (list, tuple)

annotated_only: Only use images with an annotated txt file

"""

path = Path(path)

files = sorted(x for x in path.rglob('*.*') if x.suffix[1:].lower() in IMG_FORMATS)

n = len(files)

random.seed(0)

indices = random.choices([0, 1, 2], weights=weights, k=n)

txt = ['autosplit_train.txt', 'autosplit_val.txt', 'autosplit_test.txt']

[(path.parent / x).unlink(missing_ok=True) for x in txt]

print(f'Autosplitting images from {path}' + ', using *.txt labeled images only' * annotated_only)

for i, img in tqdm(zip(indices, files), total=n):

if not annotated_only or Path(img2label_paths([str(img)])[0]).exists():

with open(path.parent / txt[i], 'a') as f:

f.write('./' + img.relative_to(path.parent).as_posix() + '\n')

def verify_image_label(args):
    # Verify one image-label pair
    im_file, lb_file, prefix = args
    nm, nf, ne, nc, msg, segments = 0, 0, 0, 0, '', []  # number (missing, found, empty, corrupt), message, segments
    try:
        # Verify the image
        im = Image.open(im_file)
        im.verify()
        shape = exif_size(im)
        assert (shape[0] > 9) & (shape[1] > 9), f'image size {shape} <10 pixels'
        assert im.format.lower() in IMG_FORMATS, f'invalid image format {im.format}'
        if im.format.lower() in ('jpg', 'jpeg'):
            with open(im_file, 'rb') as f:
                f.seek(-2, 2)
                if f.read() != b'\xff\xd9':  # missing JPEG end-of-image marker
                    ImageOps.exif_transpose(Image.open(im_file)).save(im_file, 'JPEG', subsampling=0, quality=100)
                    msg = f'{prefix}WARNING: {im_file}: corrupt JPEG restored and saved'

        # Verify the labels
        if os.path.isfile(lb_file):
            nf = 1  # label found
            with open(lb_file) as f:
                lb = [x.split() for x in f.read().strip().splitlines() if len(x)]
                if any([len(x) > 8 for x in lb]):  # rows are segments, not boxes
                    classes = np.array([x[0] for x in lb], dtype=np.float32)
                    segments = [np.array(x[1:], dtype=np.float32).reshape(-1, 2) for x in lb]
                    lb = np.concatenate((classes.reshape(-1, 1), segments2boxes(segments)), 1)
                lb = np.array(lb, dtype=np.float32)
            nl = len(lb)
            if nl:
                assert lb.shape[1] == 5, f'labels require 5 columns, {lb.shape[1]} columns detected'
                assert (lb >= 0).all(), f'negative label values {lb[lb < 0]}'
                assert (lb[:, 1:] <= 1).all(), f'non-normalized or out of bounds coordinates {lb[:, 1:][lb[:, 1:] > 1]}'
                _, i = np.unique(lb, axis=0, return_index=True)
                if len(i) < nl:  # duplicate rows found
                    lb = lb[i]  # remove duplicates
                    if segments:
                        # segments is a Python list, so index it element by element
                        segments = [segments[x] for x in i]
                    msg = f'{prefix}WARNING: {im_file}: {nl - len(i)} duplicate labels removed'
            else:
                ne = 1  # label empty
                lb = np.zeros((0, 5), dtype=np.float32)
        else:
            nm = 1  # label missing
            lb = np.zeros((0, 5), dtype=np.float32)
        return im_file, lb, shape, segments, nm, nf, ne, nc, msg
    except Exception as e:
        nc = 1  # image or label corrupt
        msg = f'{prefix}WARNING: {im_file}: ignoring corrupt image/label: {e}'
        return [None, None, None, None, nm, nf, ne, nc, msg]

def dataset_stats(path='coco128.yaml', autodownload=False, verbose=False, profile=False, hub=False):
    """ Return dataset statistics dictionary with images and instances counts per split per class
    To run in parent directory: export PYTHONPATH="$PWD/yolov5"
    Usage1: from utils.datasets import *; dataset_stats('coco128.yaml', autodownload=True)
    Usage2: from utils.datasets import *; dataset_stats('path/to/coco128_with_yaml.zip')
    Arguments
        path:           Path to data.yaml or data.zip (with data.yaml inside data.zip)
        autodownload:   Attempt to download dataset if not found locally
        verbose:        Print stats dictionary
    """

    def round_labels(labels):
        return [[int(c), *(round(x, 4) for x in points)] for c, *points in labels]

    def unzip(path):
        if str(path).endswith('.zip'):
            assert Path(path).is_file(), f'Error unzipping {path}, file not found'
            ZipFile(path).extractall(path=path.parent)
            dir = path.with_suffix('')
            return True, str(dir), next(dir.rglob('*.yaml'))
        else:
            return False, None, path

    def hub_ops(f, max_dim=1920):
        f_new = im_dir / Path(f).name
        try:
            im = Image.open(f)
            r = max_dim / max(im.height, im.width)
            if r < 1.0:
                im = im.resize((int(im.width * r), int(im.height * r)))
            im.save(f_new, 'JPEG', quality=75, optimize=True)
        except Exception as e:
            print(f'WARNING: HUB ops PIL failure {f}: {e}')
            im = cv2.imread(f)
            im_height, im_width = im.shape[:2]
            r = max_dim / max(im_height, im_width)
            if r < 1.0:
                im = cv2.resize(im, (int(im_width * r), int(im_height * r)), interpolation=cv2.INTER_AREA)
            cv2.imwrite(str(f_new), im)

    zipped, data_dir, yaml_path = unzip(Path(path))
    with open(check_yaml(yaml_path), errors='ignore') as f:
        data = yaml.safe_load(f)
        if zipped:
            data['path'] = data_dir
    check_dataset(data, autodownload)
    hub_dir = Path(data['path'] + ('-hub' if hub else ''))
    stats = {'nc': data['nc'], 'names': data['names']}
    for split in 'train', 'val', 'test':
        if data.get(split) is None:
            stats[split] = None
            continue
        x = []
        dataset = LoadImagesAndLabels(data[split])
        for label in tqdm(dataset.labels, total=dataset.n, desc='Statistics'):
            x.append(np.bincount(label[:, 0].astype(int), minlength=data['nc']))
        x = np.array(x)
        stats[split] = {'instance_stats': {'total': int(x.sum()), 'per_class': x.sum(0).tolist()},
                        'image_stats': {'total': dataset.n, 'unlabelled': int(np.all(x == 0, 1).sum()),
                                        'per_class': (x > 0).sum(0).tolist()},
                        'labels': [{str(Path(k).name): round_labels(v.tolist())} for k, v in
                                   zip(dataset.img_files, dataset.labels)]}

        if hub:
            im_dir = hub_dir / 'images'
            im_dir.mkdir(parents=True, exist_ok=True)
            for _ in tqdm(ThreadPool(NUM_THREADS).imap(hub_ops, dataset.img_files), total=dataset.n, desc='HUB Ops'):
                pass

    stats_path = hub_dir / 'stats.json'
    if profile:
        for _ in range(1):
            file = stats_path.with_suffix('.npy')
            t1 = time.time()
            np.save(file, stats)
            t2 = time.time()
            x = np.load(file, allow_pickle=True)
            print(f'stats.npy times: {time.time() - t2:.3f}s read, {t2 - t1:.3f}s write')

            file = stats_path.with_suffix('.json')
            t1 = time.time()
            with open(file, 'w') as f:
                json.dump(stats, f)
            t2 = time.time()
            with open(file) as f:
                x = json.load(f)
            print(f'stats.json times: {time.time() - t2:.3f}s read, {t2 - t1:.3f}s write')

    if hub:
        print(f'Saving {stats_path.resolve()}...')
        with open(stats_path, 'w') as f:
            json.dump(stats, f)
    if verbose:
        print(json.dumps(stats, indent=2, sort_keys=False))
    return stats
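As a reading aid for `dataset_stats`: its core is a per-image class histogram (`np.bincount`) that is summed once over instances and once over images. The sketch below reproduces that counting with the standard library only, so it swaps `np.bincount` for `collections.Counter`. The helper `per_class_stats` and the toy labels are hypothetical and not part of YOLOv5.

```python
from collections import Counter


def per_class_stats(labels_per_image, nc):
    """Count instances and images per class.

    labels_per_image: one list per image; each row is [class_id, x, y, w, h].
    nc: number of classes.
    """
    instances = [0] * nc  # total boxes per class
    images = [0] * nc  # number of images that contain each class
    unlabelled = 0  # images without any box
    for rows in labels_per_image:
        counts = Counter(int(row[0]) for row in rows)  # per-image histogram
        if not counts:
            unlabelled += 1
        for cls, n in counts.items():
            instances[cls] += n
            images[cls] += 1
    return {
        'instance_stats': {'total': sum(instances), 'per_class': instances},
        'image_stats': {'total': len(labels_per_image), 'unlabelled': unlabelled, 'per_class': images},
    }


# Hypothetical labels for three images and three classes
toy_labels = [
    [[0, 0.5, 0.5, 0.2, 0.2], [0, 0.3, 0.3, 0.1, 0.1], [2, 0.7, 0.7, 0.1, 0.1]],
    [],
    [[2, 0.4, 0.4, 0.3, 0.3]],
]
stats = per_class_stats(toy_labels, nc=3)
print(stats)
```

Counts like these are a quick way to check how many instances of the classes of interest exist in the validation split before restricting the evaluation to them.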

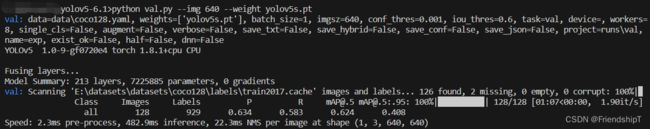

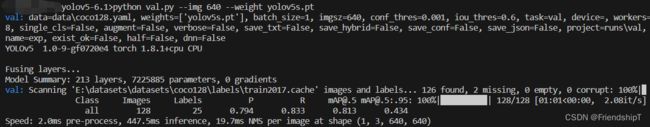

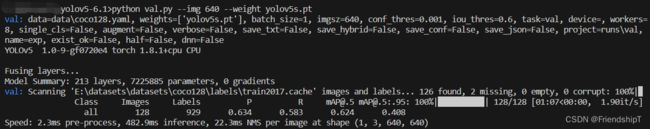

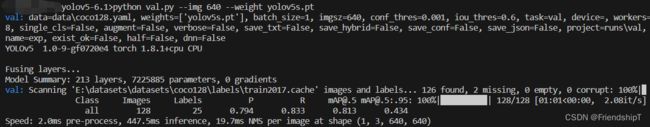

Run validation

python val.py --img 640 --weights yolov5s.pt

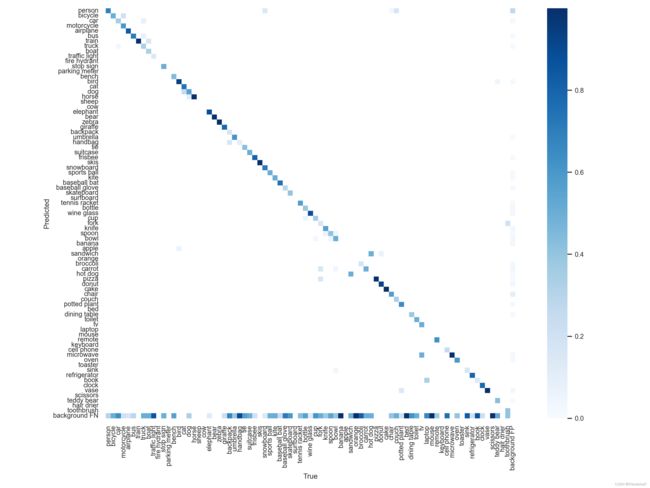

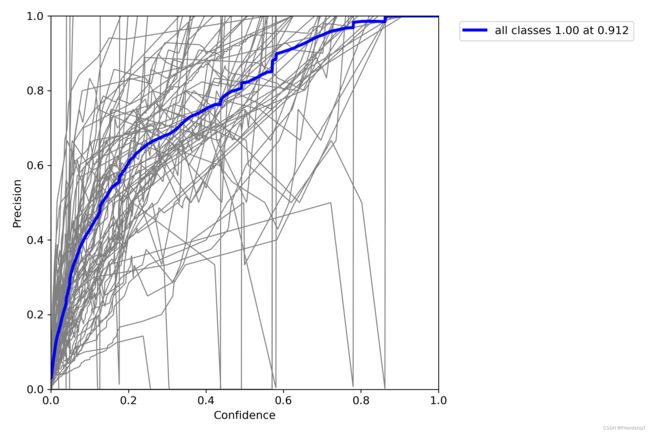

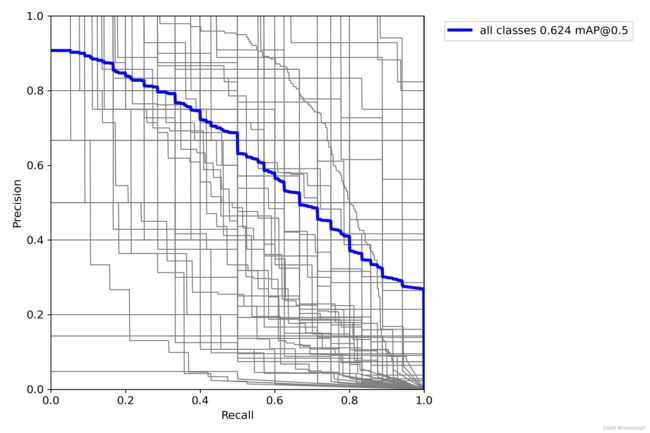

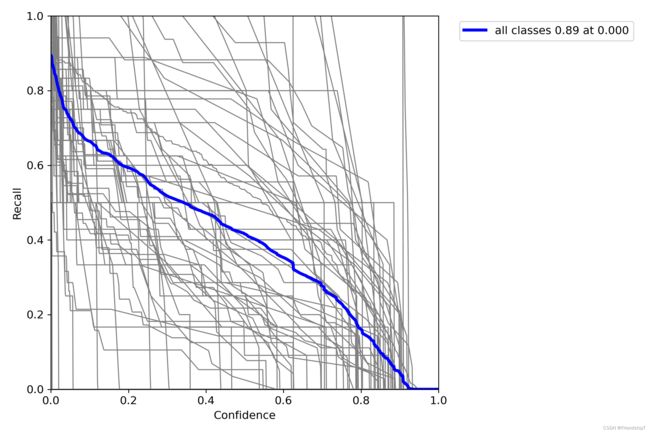

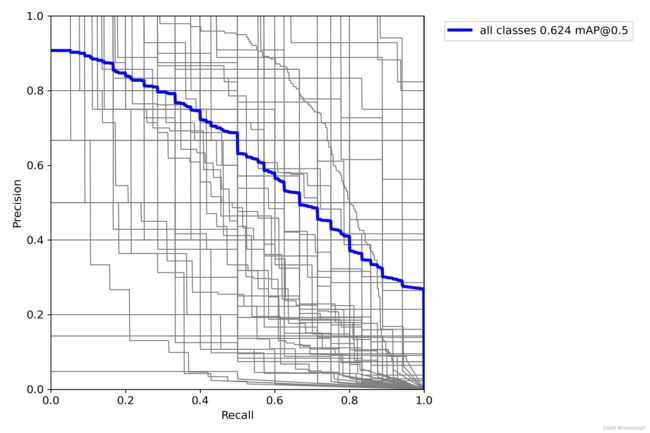

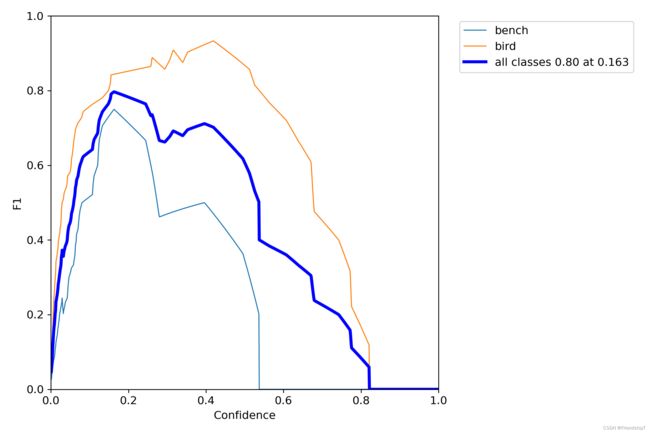

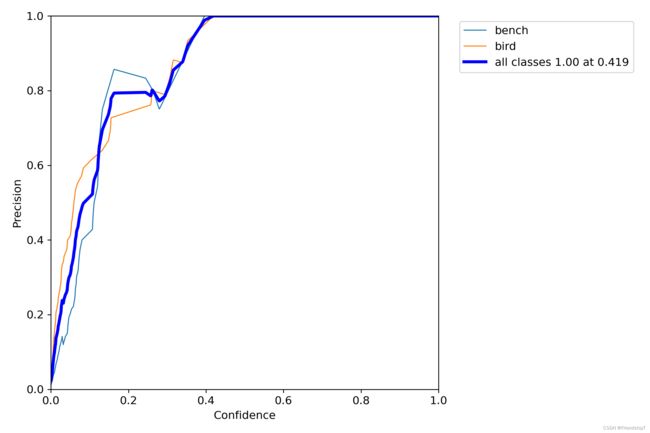

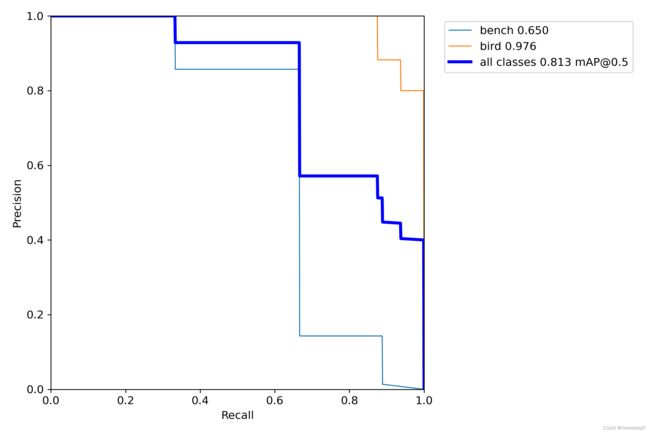

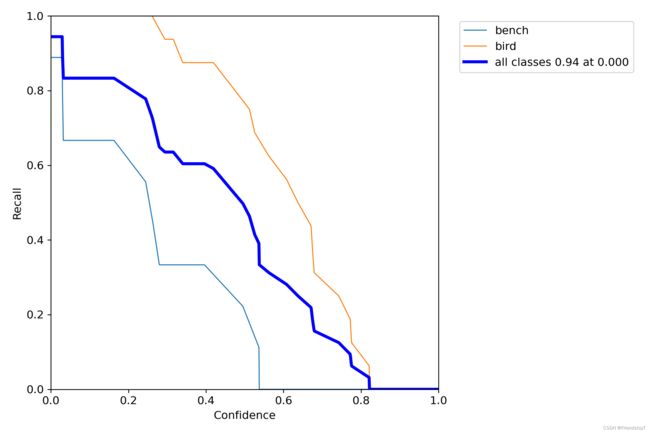

Results without specifying classes

include_class = []  # empty list: no filtering, all classes are evaluated

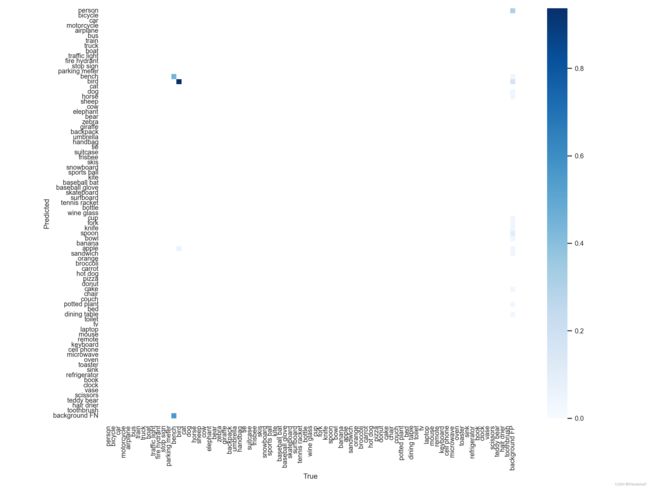

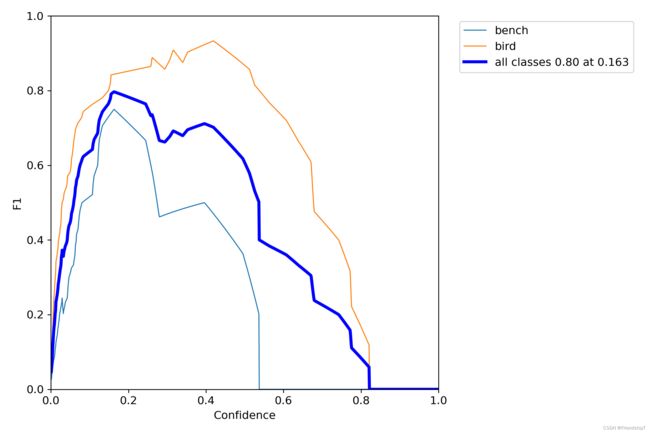

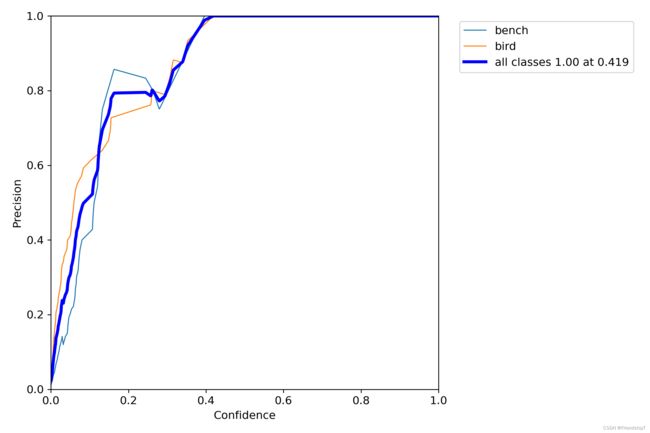

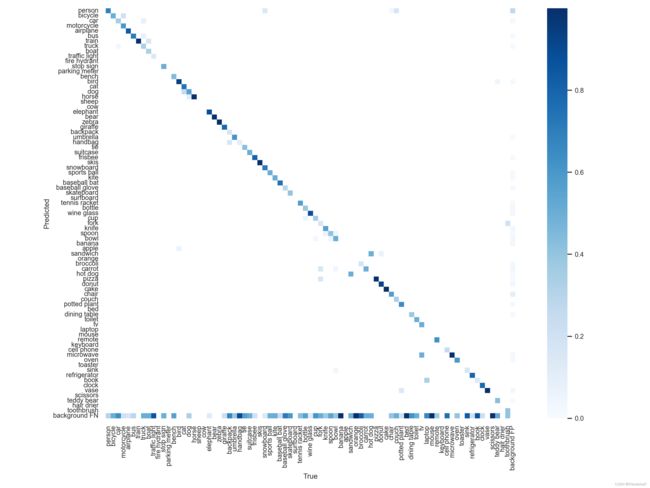

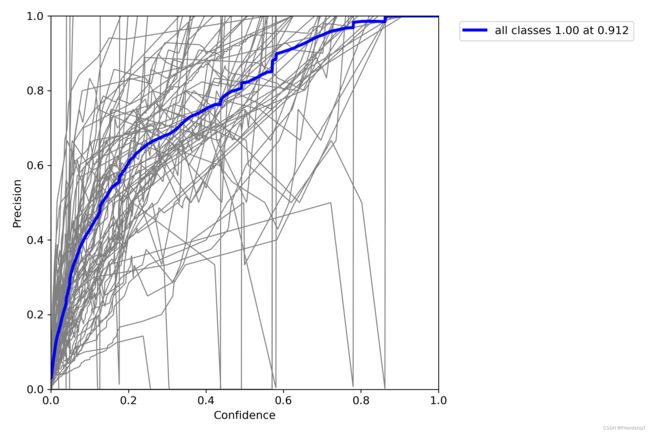

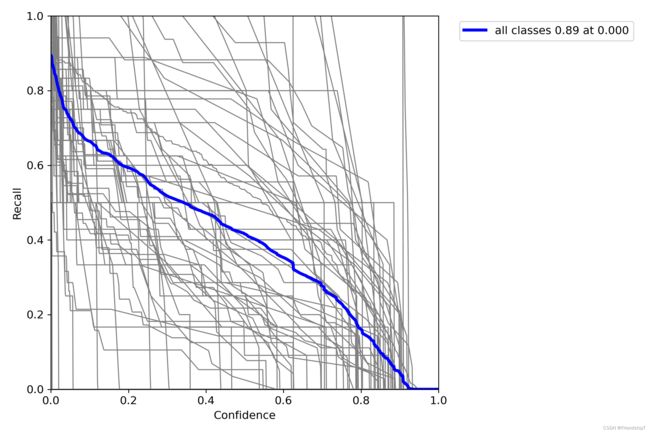

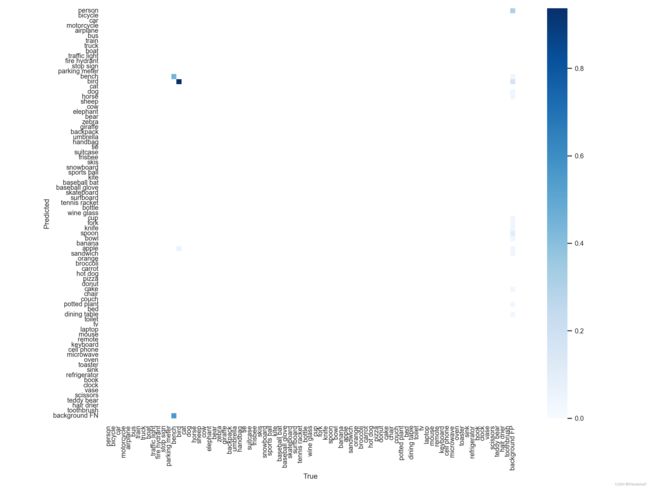

Results with specified classes

include_class = [13, 14]  # evaluate only class indices 13 and 14
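The difference between the two settings can be sketched in plain Python. This is an illustration only: `filter_labels` is a hypothetical helper, and the toy label rows are made up. In YOLOv5 itself the filter is applied to the NumPy label arrays inside the dataset loader, not through a function like this one.

```python
def filter_labels(labels, include_class):
    """Keep only label rows whose class id is listed in include_class.

    labels: rows of [class_id, x_center, y_center, width, height].
    include_class: class ids to keep; an empty list keeps every row.
    """
    if not include_class:  # same convention as include_class = []
        return labels
    keep = set(include_class)
    return [row for row in labels if int(row[0]) in keep]


# Hypothetical labels of one image
image_labels = [
    [13, 0.50, 0.50, 0.20, 0.30],
    [0, 0.25, 0.40, 0.10, 0.20],
    [14, 0.70, 0.20, 0.05, 0.05],
]
print(filter_labels(image_labels, []))  # nothing is removed
print(filter_labels(image_labels, [13, 14]))  # only rows of class 13 and 14 remain
```

With an empty list the ground-truth labels stay untouched; with `[13, 14]` every row of another class is dropped before the metrics are computed.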
