OpenMMLab AI Bootcamp Study Notes DAY 3 -- Installing and Configuring mmclassification on the Beijing Supercomputing Platform and Training a Flower Classifier with ResNet

- Beijing Supercomputing Platform
- 1. Setting up the mmclassification environment
- 2. Building and training the model
  - Dataset
  - MMCls config file
  - Submitting the job

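This session was again taught by Wang Ruohui. The Q&A in the middle was handled by Zhang Zihao (同济子豪兄 on Bilibili), and at the end Shen Pinggang from Beijing Super Cloud Computing Center demonstrated how to use the Beijing Supercomputing Platform and walked through the code. The recording was linked in the original post; you can also open Bilibili, search for OpenMMLab, and watch it on their channel page.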

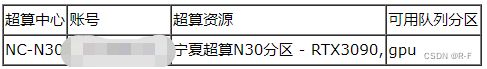

Beijing Supercomputing Platform

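Download the client and install it. Participants in the OpenMMLab course can scan the application QR code provided, fill in the information questionnaire, and after a few working days receive a reply by email with an account and password. Alternatively, search for the WeChat official account "北京超级云计算中心" (Beijing Super Cloud Computing Center), follow it, and reply "2" to get the trial application channel.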

Note: when applying for core-hours, be clear about whether you are requesting CPU or GPU resources. The GPU servers also come with CPUs.

Then enter your account and password to log in to the Beijing Supercomputing Platform, and access your account over SSH.

1. Setting up the mmclassification environment

mmclassification's environment requirements call for base modules such as anaconda, cuda and gcc. On the N30 partition, run module avail to list the available modules.

- Load anaconda and create a Python 3.8 environment.

```bash
# Load anaconda/2021.05
module load anaconda/2021.05

# Create a Python 3.8 environment
conda create --name opennmmlab_mmclassification python=3.8

# Activate the environment
source activate opennmmlab_mmclassification
```

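- Install torch, following its version requirements. Note that on RTX 3090 GPUs the CUDA version must be ≥ 11.1; the torch installed below is 1.10.0+cu111. The pip-installed torch wheel does not include the CUDA toolkit (e.g. nvcc), so load the cuda/11.1 module with module.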

```bash
# Load cuda/11.1
module load cuda/11.1

# Install torch
pip install torch==1.10.0+cu111 torchvision==0.11.0+cu111 torchaudio==0.10.0 -f https://download.pytorch.org/whl/torch_stable.html
```

- Install the mmcv-full package. The mmcv-full build must match your torch and CUDA versions; see the mmcv installation guide.

```bash
pip install mmcv-full==1.7.0 -f https://download.openmmlab.com/mmcv/dist/cu111/torch1.10/index.html
```

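- Install openmmlab/mmclassification. Installing from source (clone, then build) is recommended. This requires gcc ≥ 5, so load a gcc module with module, for example module load gcc/7.3. Note that the trailing . in pip install -e . means the current directory, and -e installs the package in editable mode.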

```bash
# Load the gcc/7.3 module
module load gcc/7.3

# Download the mmclassification source with git
git clone https://github.com/open-mmlab/mmclassification.git

# Build and install in editable mode
cd mmclassification
pip install -e .
```

- Prepare a shell script that stores the environment setup in advance.

```bash
#!/bin/bash
# Load modules
module load anaconda/2021.05
module load cuda/11.1
module load gcc/7.3

# Activate the environment
source activate opennmmlab_mmclassification
```
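The mmcv-full step above only works when the index URL matches the installed torch build. As a small sketch, assuming the URL layout seen in the pip command above (https://download.openmmlab.com/mmcv/dist/<CUDA tag>/torch<major.minor>/index.html), the helper below derives that URL from a torch version string. The name mmcv_index_url is hypothetical and not part of mmcv.

```python
def mmcv_index_url(torch_version: str) -> str:
    """Build the mmcv-full find-links URL from a torch version such as '1.10.0+cu111'.

    Assumes the URL layout used in the mmcv-full pip command:
    https://download.openmmlab.com/mmcv/dist/<cuda tag>/torch<major.minor>/index.html
    """
    base, sep, local = torch_version.partition("+")
    # A CUDA build of torch carries a local version tag like "cu111"
    if not sep or not local.startswith("cu"):
        raise ValueError(f"expected a CUDA build like '1.10.0+cu111', got {torch_version!r}")
    major, minor = base.split(".")[:2]
    return (
        "https://download.openmmlab.com/mmcv/dist/"
        f"{local}/torch{major}.{minor}/index.html"
    )


print(mmcv_index_url("1.10.0+cu111"))
```

Inside the activated environment, passing torch.__version__ (1.10.0+cu111 for the build installed above) should reproduce the URL used in the mmcv-full command.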

2. Building and training the model

Dataset

The flower dataset contains images of 5 flower classes: daisy (588 images), dandelion (556), rose (583), sunflower (536) and tulip (585).
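To see what the 8:2 split described below produces for these counts, this short sketch applies the same int() truncation per class as the split_data.py script later in this section. The helper name split_sizes is illustrative only.

```python
# Class sizes as listed above for the flower dataset
class_sizes = {
    "daisy": 588,
    "dandelion": 556,
    "rose": 583,
    "sunflower": 536,
    "tulip": 585,
}


def split_sizes(sizes, train_rate=0.8):
    """Per-class (train, val) counts, truncating with int() like split_data.py."""
    result = {}
    for name, n in sizes.items():
        n_train = int(n * train_rate)
        result[name] = (n_train, n - n_train)
    return result


sizes = split_sizes(class_sizes)
for name, (n_train, n_val) in sizes.items():
    print(f"{name}: train={n_train}, val={n_val}")

total_train = sum(t for t, _ in sizes.values())
total_val = sum(v for _, v in sizes.values())
print(f"total: train={total_train}, val={total_val}, all={total_train + total_val}")
```

With these counts the split yields 2276 training and 572 validation images, 2848 in total. Because the split is done per class, every class keeps roughly the same 8:2 proportion.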

Dataset download link: https://pan.baidu.com/s/1RJmAoxCD_aNPyTRX6w97xQ (extraction code: 9x5u)

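- Split the dataset into training and validation subsets at an 8:2 ratio, and organize it in ImageNet format.

- Put the training and validation subsets into the train and val folders.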

```
flower_dataset
|--- classes.txt
|--- train.txt
|--- val.txt
|--- train
|    |--- daisy
|    |    |--- NAME1.jpg
|    |    |--- NAME2.jpg
|    |    |--- ...
|    |--- dandelion
|    |    |--- NAME1.jpg
|    |    |--- NAME2.jpg
|    |    |--- ...
|    |--- rose
|    |    |--- NAME1.jpg
|    |    |--- NAME2.jpg
|    |    |--- ...
|    |--- sunflower
|    |    |--- NAME1.jpg
|    |    |--- NAME2.jpg
|    |    |--- ...
|    |--- tulip
|    |    |--- NAME1.jpg
|    |    |--- NAME2.jpg
|    |    |--- ...
|--- val
|    |--- daisy
|    |    |--- NAME1.jpg
|    |    |--- NAME2.jpg
|    |    |--- ...
|    |--- dandelion
|    |    |--- NAME1.jpg
|    |    |--- NAME2.jpg
|    |    |--- ...
|    |--- rose
|    |    |--- NAME1.jpg
|    |    |--- NAME2.jpg
|    |    |--- ...
|    |--- sunflower
|    |    |--- NAME1.jpg
|    |    |--- NAME2.jpg
|    |    |--- ...
|    |--- tulip
|    |    |--- NAME1.jpg
|    |    |--- NAME2.jpg
|    |    |--- ...
```

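- Create the annotation file classes.txt and write the names of all classes into it, one class per line. The line order defines the label indices (the first line is label 0, the second is label 1, and so on), so it must agree with the labels in train.txt and val.txt.

```
daisy
dandelion
rose
sunflower
tulip
```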

- Generate the annotation lists train.txt (optional) and val.txt for the training and validation subsets. Each line contains a file name and its corresponding label, as shown below. The processed dataset can then be moved into the mmclassification/data folder.

```
...
daisy/NAME**.jpg 0
daisy/NAME**.jpg 0
...
dandelion/NAME**.jpg 1
dandelion/NAME**.jpg 1
...
rose/NAME**.jpg 2
rose/NAME**.jpg 2
...
sunflower/NAME**.jpg 3
sunflower/NAME**.jpg 3
...
tulip/NAME**.jpg 4
tulip/NAME**.jpg 4
```

- The dataset split script split_data.py is shown below. Run it as:

```bash
python split_data.py [source dataset path] [target dataset path]
```

```python
import os
import sys
import shutil

import numpy as np


def load_data(data_path):
    """Collect image paths per class from a folder with one sub-folder per class."""
    count = 0
    data = {}
    # Sort so that the class order (and hence the label indices) is deterministic
    for dir_name in sorted(os.listdir(data_path)):
        dir_path = os.path.join(data_path, dir_name)
        if not os.path.isdir(dir_path):
            continue

        data[dir_name] = []
        for file_name in sorted(os.listdir(dir_path)):
            file_path = os.path.join(dir_path, file_name)
            if not os.path.isfile(file_path):
                continue
            data[dir_name].append(file_path)

        count += len(data[dir_name])
        print("{} :{}".format(dir_name, len(data[dir_name])))

    print("total of image : {}".format(count))
    return data


def copy_dataset(src_img_list, data_index, target_path):
    """Copy the selected images into target_path and return their relative paths."""
    target_img_list = []
    cls_name = os.path.basename(target_path)
    for index in data_index:
        src_img = src_img_list[index]
        img_name = os.path.split(src_img)[-1]

        shutil.copy(src_img, target_path)
        # Record "<class>/<file>", relative to train/ or val/ (i.e. data_prefix)
        target_img_list.append(os.path.join(cls_name, img_name))
    return target_img_list


def write_file(data, file_name):
    """Write a list of lines, or a {label: [paths]} dict as "path label" lines."""
    if isinstance(data, dict):
        write_data = []
        for lab, img_list in data.items():
            for img in img_list:
                write_data.append("{} {}".format(img, lab))
    else:
        write_data = data

    with open(file_name, "w") as f:
        for line in write_data:
            f.write(line + "\n")

    print("{} write over!".format(file_name))


def split_data(src_data_path, target_data_path, train_rate=0.8):
    src_data_dict = load_data(src_data_path)

    classes = []
    train_dataset, val_dataset = {}, {}
    train_count, val_count = 0, 0
    for i, (cls_name, img_list) in enumerate(src_data_dict.items()):
        img_data_size = len(img_list)
        # Random permutation of the indices, cut at train_rate
        random_index = np.random.choice(img_data_size, img_data_size, replace=False)

        train_data_size = int(img_data_size * train_rate)
        train_data_index = random_index[:train_data_size]
        val_data_index = random_index[train_data_size:]

        train_data_path = os.path.join(target_data_path, "train", cls_name)
        val_data_path = os.path.join(target_data_path, "val", cls_name)
        os.makedirs(train_data_path, exist_ok=True)
        os.makedirs(val_data_path, exist_ok=True)

        # Label i is the position of cls_name in classes.txt
        classes.append(cls_name)
        train_dataset[i] = copy_dataset(img_list, train_data_index, train_data_path)
        val_dataset[i] = copy_dataset(img_list, val_data_index, val_data_path)
        print("target {} train:{}, val:{}".format(
            cls_name, len(train_dataset[i]), len(val_dataset[i])))

        train_count += len(train_dataset[i])
        val_count += len(val_dataset[i])

    print("train size:{}, val size:{}, total:{}".format(
        train_count, val_count, train_count + val_count))

    write_file(classes, os.path.join(target_data_path, "classes.txt"))
    write_file(train_dataset, os.path.join(target_data_path, "train.txt"))
    write_file(val_dataset, os.path.join(target_data_path, "val.txt"))


def main():
    src_data_path = sys.argv[1]
    target_data_path = sys.argv[2]
    split_data(src_data_path, target_data_path, train_rate=0.8)


if __name__ == '__main__':
    main()
```
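Since the label in train.txt and val.txt is expected to be the 0-based line number of the class in classes.txt, a quick consistency check after running split_data.py can catch ordering mistakes and missing files before training. This is a sketch, not part of mmclassification: check_annotations is a hypothetical helper, and the tiny dataset it is run on below is made up in a temporary directory.

```python
import tempfile
from pathlib import Path


def check_annotations(dataset_root, split="train"):
    """Check each '<class>/<file> <label>' line of <split>.txt against classes.txt
    (label must equal the line number of the folder's class name) and check that
    the image exists under <dataset_root>/<split>/. Returns a list of problems.
    """
    root = Path(dataset_root)
    classes = [c.strip() for c in (root / "classes.txt").read_text().splitlines() if c.strip()]
    problems = []
    for line_no, line in enumerate((root / f"{split}.txt").read_text().splitlines(), 1):
        if not line.strip():
            continue
        rel_path, label = line.rsplit(maxsplit=1)
        label = int(label)
        folder = Path(rel_path).parts[0]
        if not 0 <= label < len(classes):
            problems.append(f"line {line_no}: label {label} out of range")
        elif classes[label] != folder:
            problems.append(
                f"line {line_no}: folder {folder!r} but label {label} is {classes[label]!r}")
        if not (root / split / rel_path).is_file():
            problems.append(f"line {line_no}: missing file {rel_path}")
    return problems


# Tiny made-up dataset: line 2 has a wrong label and points to a missing image
with tempfile.TemporaryDirectory() as tmp:
    demo = Path(tmp)
    (demo / "train" / "daisy").mkdir(parents=True)
    (demo / "train" / "daisy" / "a.jpg").touch()
    (demo / "classes.txt").write_text("daisy\ndandelion\n")
    (demo / "train.txt").write_text("daisy/a.jpg 0\ndaisy/b.jpg 1\n")
    demo_problems = check_annotations(demo, "train")

for problem in demo_problems:
    print(problem)
```

On the real data, calling check_annotations on the target dataset path for both "train" and "val" and getting empty lists means the annotation files agree with classes.txt and the copied images.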

MMCls config file

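- Config files can be built through inheritance: from configs/_base_ you can inherit any of the ImageNet model configs, the ImageNet dataset config, learning-rate schedules, and so on.

- Name the following file resnet18_b32_flower.py and put it in the mmclassification/configs/resnet directory, which is the path used by the training command below, so that the relative ../_base_/ paths resolve.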

```python
_base_ = [
    '../_base_/models/resnet18.py',
    '../_base_/datasets/imagenet_bs32.py',
    '../_base_/default_runtime.py'
]

# Replace the ImageNet head settings with a 5-class head
model = dict(
    head=dict(
        num_classes=5,
        topk=(1, )
    ))

data = dict(
    samples_per_gpu=32,
    workers_per_gpu=2,
    train=dict(
        data_prefix='/HOME/yourname/run/mmclassification/data/flower/train',
        ann_file='/HOME/yourname/run/mmclassification/data/flower/train.txt',
        classes='/HOME/yourname/run/mmclassification/data/flower/classes.txt'
    ),
    val=dict(
        data_prefix='/HOME/yourname/run/mmclassification/data/flower/val',
        ann_file='/HOME/yourname/run/mmclassification/data/flower/val.txt',
        classes='/HOME/yourname/run/mmclassification/data/flower/classes.txt'
    )
)

optimizer = dict(type='SGD', lr=0.001, momentum=0.9, weight_decay=0.0001)
optimizer_config = dict(grad_clip=None)
# 'step' policy: lr is multiplied by gamma (0.1 by default) from epoch 1 on,
# i.e. after the first epoch
lr_config = dict(
    policy='step',
    step=[1])
runner = dict(type='EpochBasedRunner', max_epochs=100)

# Pretrained model (ImageNet ResNet-18 checkpoint, downloaded beforehand)
load_from = '/HOME/yourname/run/mmclassification/checkpoints/resnet18_batch256_imagenet_20200708-34ab8f90.pth'
```
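With _base_ inheritance, this file only lists what differs from the inherited configs: model.head keeps the base head's other settings, and only num_classes and topk are overridden. The sketch below is a simplified, hypothetical re-implementation of that merge rule in plain Python, not mmcv's actual Config code. The base values are an assumed, trimmed stand-in for what ../_base_/models/resnet18.py defines, so check the file in your own checkout. It also shows the _delete_=True key, which replaces a base dict instead of merging into it.

```python
import copy


def merge_config(base: dict, override: dict) -> dict:
    """Simplified illustration of _base_ inheritance.

    Nested dicts are merged key by key; any other value (number, string,
    list, tuple) in the child replaces the base value; a child dict that
    contains _delete_=True replaces the base dict outright.
    """
    merged = copy.deepcopy(base)
    for key, value in override.items():
        if isinstance(value, dict):
            value = dict(value)  # shallow copy so popping _delete_ is harmless
            if value.pop("_delete_", False) or not isinstance(merged.get(key), dict):
                merged[key] = merge_config({}, value)
            else:
                merged[key] = merge_config(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


# Assumed, trimmed stand-in for the inherited model config
base_model = dict(
    type='ImageClassifier',
    backbone=dict(type='ResNet', depth=18),
    head=dict(type='LinearClsHead', num_classes=1000, in_channels=512, topk=(1, 5)),
)

# What resnet18_b32_flower.py overrides
child_model = dict(head=dict(num_classes=5, topk=(1, )))
merged = merge_config(base_model, child_model)
print(merged['head'])

# With _delete_=True the base head is dropped instead of merged
replaced = merge_config(
    base_model,
    dict(head=dict(_delete_=True, type='LinearClsHead', num_classes=5, in_channels=512)))
print(replaced['head'])
```

This is why the flower config does not need to repeat the head type or input channels: they come from the base file, while the values written in the child win.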

Submitting the job

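- Single-GPU training: once the environment, dataset and MMCls config file are ready, you can submit the job. On N30, jobs are submitted through a job script, as follows:

1. Create a job script run.sh. A job script's interpreter can be /bin/sh, /bin/bash or /bin/csh; the script below uses bash syntax (source, export). Note that the directory passed to --work-dir (here work/resnet18_b32_flower) is where the trained model is finally saved. The script content is: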

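```bash
#!/bin/bash
# Load modules
module load anaconda/2021.05
module load cuda/11.1
module load gcc/7.3

# Activate the environment
source activate opennmmlab_mmclassification

# Disable Python output buffering so the log is written immediately
export PYTHONUNBUFFERED=1

# Train the model (paths are relative to the mmclassification directory,
# so submit the job from there)
python tools/train.py \
    configs/resnet/resnet18_b32_flower.py \
    --work-dir work/resnet18_b32_flower
```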

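- Submit the job script with the sbatch command, for example:

```bash
sbatch --gpus=1 run.sh
```

- --gpus sets the number of GPUs to request. On the N30 partition you can request 1 to 8 GPUs, and by default each GPU comes with 6 CPU cores and 60 GB of memory.

- Running sbatch --gpus=1 run.sh therefore obtains 1 GPU, 6 CPU cores and 60 GB of memory.

- After a successful submission, sbatch prints the job information "Submitted batch job 279689", where 279689 is the job ID. You can use the job ID to look up the log.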

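- Use squeue or parajobs to view submitted jobs. The columns are:
  - Column 1, JOBID: the job ID, which is unique.
  - Column 2, PARTITION: the queue (partition) the job runs in.
  - Column 3, NAME: the job name.
  - Column 4, USER: the supercomputer account.
  - Column 5, ST: the job state. R (RUNNING) means running normally, PD (PENDING) means queued, CG (COMPLETING) means exiting, S means temporarily suspended by an administrator, CD (COMPLETED) means finished, and F (FAILED) means the job failed.
  - Column 6, TIME: how long the job has been running.
  - Column 7, NODES: the number of nodes the job runs on.
  - Column 8, NODELIST(REASON): for running jobs (state R), the list of nodes in use; for queued jobs, the reason they are waiting.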

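- View the job output log. By default, both standard output and standard error go to a file slurm-%j.log (where %j is the job ID), which appears in the directory the job was submitted from once the job is in state R. You can follow the output with tail and similar commands, for example: tail -f slurm-279689.log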
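For scripting around the queue, the sketch below turns sbatch's confirmation line into a job ID and the matching slurm-<jobid>.log name, and parses squeue's default eight-column table using the column and state meanings listed above. It assumes squeue's default layout; the helper names are hypothetical, and the sample table (including the partition name gpu and node name g0001) is made up for illustration.

```python
# Meanings of the ST (state) column, as listed above
STATE_MEANINGS = {
    "R": "running",
    "PD": "pending (queued)",
    "CG": "completing",
    "S": "suspended by an administrator",
    "CD": "completed",
    "F": "failed",
}

# squeue's default columns, in order
COLUMNS = ["JOBID", "PARTITION", "NAME", "USER", "ST", "TIME", "NODES", "NODELIST(REASON)"]


def job_id_from_sbatch(output: str) -> str:
    """Extract the job ID from sbatch's 'Submitted batch job <id>' message."""
    words = output.split()
    if len(words) < 4 or words[:3] != ["Submitted", "batch", "job"]:
        raise ValueError(f"unexpected sbatch output: {output!r}")
    return words[3]


def log_name(job_id: str) -> str:
    """Log file name following the slurm-%j.log pattern."""
    return f"slurm-{job_id}.log"


def parse_squeue(output: str) -> list:
    """Parse squeue's default table into one dict per job."""
    jobs = []
    for line in output.splitlines():
        # The last column may contain spaces, so split at most 7 times
        fields = line.split(maxsplit=len(COLUMNS) - 1)
        if not fields or fields[0] == "JOBID":  # skip blank lines and the header
            continue
        job = dict(zip(COLUMNS, fields))
        job["STATE"] = STATE_MEANINGS.get(job["ST"], "other")
        jobs.append(job)
    return jobs


# Made-up squeue output, for illustration only
SAMPLE = """\
  JOBID PARTITION     NAME     USER ST       TIME  NODES NODELIST(REASON)
 279689       gpu   run.sh yourname  R       5:02      1 g0001
 279690       gpu   run.sh yourname PD       0:00      1 (Priority)
"""

job_id = job_id_from_sbatch("Submitted batch job 279689")
print(log_name(job_id))
jobs = parse_squeue(SAMPLE)
for job in jobs:
    print(job["JOBID"], job["ST"], job["STATE"], job["NODELIST(REASON)"])
```

Splitting with a maxsplit keeps the last column intact, since pending reasons can contain spaces.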

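The lecture went on to cover single-node multi-GPU training, multi-node training, and logging into the compute node to check GPU utilization with nvidia-smi. For details, watch the video mentioned above and read the technical documentation on the official website.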