Deploying Atlas on k8s

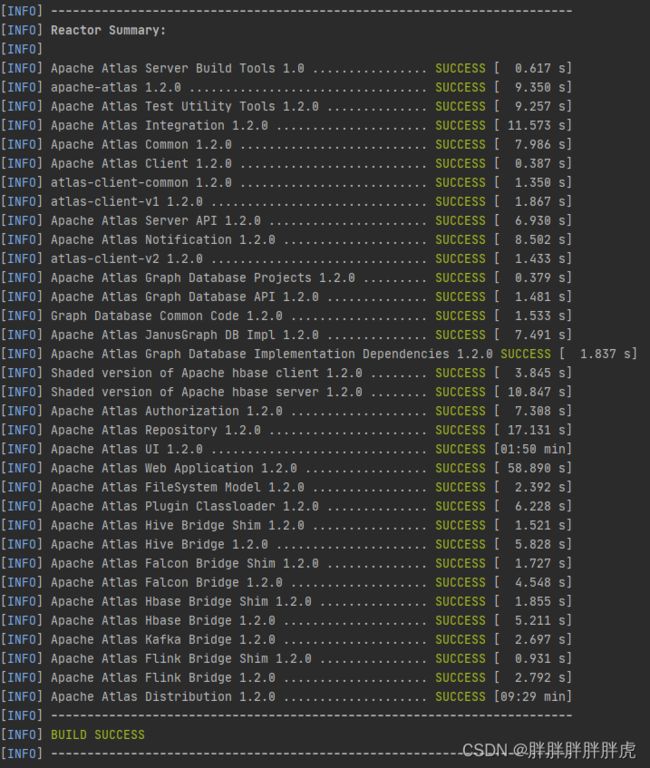

Building Atlas

mvn clean package -DskipTests -Dfast -Drat.skip=true -Pdist,embedded-hbase-solr

Atlas with embedded HBase and Solr

Start HBase and Solr first, then start Atlas.

/opt/apache-atlas-2.2.0/hbase/bin/start-hbase.sh

/opt/apache-atlas-2.2.0/solr/bin/solr start -c -z localhost:2181 -p 8983 -force

/opt/apache-atlas-2.2.0/solr/bin/solr create -c fulltext_index -force -d /opt/apache-atlas-2.2.0/conf/solr/

/opt/apache-atlas-2.2.0/solr/bin/solr create -c edge_index -force -d /opt/apache-atlas-2.2.0/conf/solr/

/opt/apache-atlas-2.2.0/solr/bin/solr create -c vertex_index -force -d /opt/apache-atlas-2.2.0/conf/solr/

/opt/apache-atlas-2.2.0/bin/atlas_start.py
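The start order above matters: HBase first, then Solr and its three collections, then Atlas. As a minimal sketch, the same sequence can be generated from the install directory so the order is written down in one place. The function name `startup_commands` and its default arguments are illustrative assumptions; the commands themselves are the ones listed above.

```python
from pathlib import PurePosixPath
from typing import List

# The three index collections created in the steps above.
COLLECTIONS = ("fulltext_index", "edge_index", "vertex_index")


def startup_commands(atlas_home: str, zk: str = "localhost:2181",
                     solr_port: int = 8983) -> List[str]:
    """Return the start commands in the order HBase -> Solr -> collections -> Atlas."""
    home = PurePosixPath(atlas_home)
    solr = home / "solr" / "bin" / "solr"
    conf = f"{home}/conf/solr/"
    cmds = [
        str(home / "hbase" / "bin" / "start-hbase.sh"),
        f"{solr} start -c -z {zk} -p {solr_port} -force",
    ]
    cmds += [f"{solr} create -c {name} -force -d {conf}" for name in COLLECTIONS]
    cmds.append(str(home / "bin" / "atlas_start.py"))
    return cmds
```

Printing `startup_commands("/opt/apache-atlas-2.2.0")` line by line reproduces the six commands above; running them is left to the operator.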

[root@docker-registry-node apache-atlas-2.2.0]# netstat -anop | grep zk

[root@docker-registry-node apache-atlas-2.2.0]# netstat -anop | grep zookeeper

### There is no standalone zookeeper process, yet port 2181 is in use

[root@docker-registry-node apache-atlas-2.2.0]# netstat -anop | grep 2181

tcp 0 0 127.0.0.1:39984 127.0.0.1:2181 ESTABLISHED 29800/java off (0.00/0/0)

tcp6 0 0 :::2181 :::* LISTEN 24397/java off (0.00/0/0)

tcp6 0 0 127.0.0.1:2181 127.0.0.1:39984 ESTABLISHED 24397/java off (0.00/0/0)

tcp6 0 0 127.0.0.1:2181 127.0.0.1:39820 ESTABLISHED 24397/java off (0.00/0/0)

tcp6 0 0 127.0.0.1:39478 127.0.0.1:2181 ESTABLISHED 24397/java off (0.00/0/0)

tcp6 0 0 ::1:2181 ::1:40142 ESTABLISHED 24397/java off (0.00/0/0)

tcp6 0 0 127.0.0.1:39474 127.0.0.1:2181 ESTABLISHED 24397/java off (0.00/0/0)

tcp6 0 0 127.0.0.1:2181 127.0.0.1:39474 ESTABLISHED 24397/java off (0.00/0/0)

tcp6 0 0 127.0.0.1:2181 127.0.0.1:39478 ESTABLISHED 24397/java off (0.00/0/0)

tcp6 0 0 127.0.0.1:2181 127.0.0.1:39486 ESTABLISHED 24397/java off (0.00/0/0)

tcp6 0 0 ::1:40142 ::1:2181 ESTABLISHED 27950/java off (0.00/0/0)

tcp6 0 0 127.0.0.1:39486 127.0.0.1:2181 ESTABLISHED 24397/java off (0.00/0/0)

tcp6 0 0 127.0.0.1:39820 127.0.0.1:2181 ESTABLISHED 27950/java off (0.00/0/0)

[root@docker-registry-node apache-atlas-2.2.0]# ps -aux | grep 24397

root 24397 1.1 7.5 3094532 293596 pts/1 Sl 10:28 2:39 /opt/module/jdk1.8.0_141/bin/java -Dproc_master -XX:OnOutOfMemoryError=kill -9 %p -XX:+UseConcMarkSweepGC -Dhbase.log.dir=/opt/module/atlas/apache-atlas-2.2.0/hbase/bin/../logs -Dhbase.log.file=hbase-nufront-master-docker-registry-node.log -Dhbase.home.dir=/opt/module/atlas/apache-atlas-2.2.0/hbase/bin/.. -Dhbase.id.str=nufront -Dhbase.root.logger=INFO,RFA -Dhbase.security.logger=INFO,RFAS org.apache.hadoop.hbase.master.HMaster start
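The output above shows that the listener on 2181 is PID 24397, which `ps` identifies as HMaster. This suggests the ZooKeeper used here runs inside the embedded HBase master rather than as its own process. A small sketch of the same lookup, assuming the column layout of `netstat -anop` shown above (the helper name `listeners_on_port` is hypothetical):

```python
from typing import List, Tuple


def listeners_on_port(netstat_output: str, port: int) -> List[Tuple[int, str]]:
    """Return (pid, program) for every LISTEN socket bound to the given port."""
    found = []
    for line in netstat_output.splitlines():
        fields = line.split()
        # Expected columns: proto recv-q send-q local foreign state pid/program ...
        if len(fields) < 7 or fields[5] != "LISTEN":
            continue
        local_port = fields[3].rsplit(":", 1)[-1]
        pid, _, program = fields[6].partition("/")
        if local_port == str(port) and pid.isdigit():
            found.append((int(pid), program))
    return found
```

The returned PID can then be passed to `ps` or `jps` to see which JVM owns the port.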

Kafka

[root@docker-registry-node conf]# vim atlas-application.properties

[root@docker-registry-node conf]# netstat -anop | grep 9027

tcp 0 0 127.0.0.1:9027 0.0.0.0:* LISTEN 29800/java off (0.00/0/0)

tcp 1 0 127.0.0.1:38910 127.0.0.1:9027 CLOSE_WAIT 29800/java keepalive (4783.83/0/0)

tcp 0 0 127.0.0.1:38942 127.0.0.1:9027 ESTABLISHED 29800/java keepalive (4800.22/0/0)

tcp 0 0 127.0.0.1:9027 127.0.0.1:38936 ESTABLISHED 29800/java keepalive (4800.22/0/0)

tcp 0 0 127.0.0.1:38936 127.0.0.1:9027 ESTABLISHED 29800/java keepalive (4800.22/0/0)

tcp 0 0 127.0.0.1:9027 127.0.0.1:38942 ESTABLISHED 29800/java keepalive (4800.22/0/0)

[root@docker-registry-node version-2]# pwd

/opt/module/atlas/apache-atlas-2.2.0/data/hbase-zookeeper-data/zookeeper_0/version-2

[root@docker-registry-node version-2]# ll

total 580

-rw-r--r--. 1 root root 102416 Oct 14 10:27 log.1

-rw-r--r--. 1 root root 614416 Oct 14 13:59 log.4f

[root@docker-registry-node version-2]# cat /opt/module/atlas/apache-atlas-2.2.0/hbase/conf/hbase-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed to the Apache Software Foundation (ASF) under one or more

contributor license agreements. See the NOTICE file distributed with

this work for additional information regarding copyright ownership.

The ASF licenses this file to You under the Apache License, Version 2.0

(the "License"); you may not use this file except in compliance with

the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

-->

<configuration>

<property>

<name>hbase.rootdir</name>

<value>file:///opt/module/atlas/apache-atlas-2.2.0/data/hbase-root</value>

</property>

<property>

<name>hbase.zookeeper.property.dataDir</name>

<value>/opt/module/atlas/apache-atlas-2.2.0/data/hbase-zookeeper-data</value>

</property>

<property>

<name>hbase.master.info.port</name>

<value>61510</value>

</property>

<property>

<name>hbase.regionserver.info.port</name>

<value>61530</value>

</property>

<property>

<name>hbase.master.port</name>

<value>61500</value>

</property>

<property>

<name>hbase.regionserver.port</name>

<value>61520</value>

</property>

<property>

<name>hbase.unsafe.stream.capability.enforce</name>

<value>false</value>

</property>

</configuration>
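To compare the ports and data directories in this file against what `netstat` reports, the properties can be read into a dictionary. This is a minimal sketch using only the Python standard library; the function name `hbase_properties` is an assumption for illustration.

```python
import xml.etree.ElementTree as ET
from typing import Dict, Optional


def hbase_properties(xml_text: str) -> Dict[str, Optional[str]]:
    """Map each <property> name in an hbase-site.xml document to its value."""
    root = ET.fromstring(xml_text)
    return {p.findtext("name"): p.findtext("value") for p in root.iter("property")}
```

For the file above, `hbase_properties(...)["hbase.zookeeper.property.dataDir"]` would give the directory where the `version-2` ZooKeeper logs were found.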

Atlas HBase | Solr data storage paths

[root@docker-registry-node data]# pwd

/opt/module/atlas/apache-atlas-2.2.0/data

[root@docker-registry-node data]# ll

total 0

drwxr-xr-x. 12 root root 188 Oct 14 10:28 hbase-root

drwxr-xr-x. 3 root root 25 Oct 14 10:25 hbase-zookeeper-data

drwxr-xr-x. 4 root root 29 Oct 14 13:47 kafka

drwxr-xr-x. 2 root root 22 Oct 14 10:25 solr

################################################

##### Create the fulltext_index | edge_index | vertex_index collections

################################################

[root@docker-registry-node bin]# ./solr create -c fulltext_index -force -d /opt/module/atlas/apache-atlas-2.2.0/conf/solr/

Created collection 'fulltext_index' with 1 shard(s), 1 replica(s) with config-set 'fulltext_index'

[root@docker-registry-node bin]# ./solr create -c edge_index -force -d /opt/module/atlas/apache-atlas-2.2.0/conf/solr/

Created collection 'edge_index' with 1 shard(s), 1 replica(s) with config-set 'edge_index'

[root@docker-registry-node bin]# ./solr create -c vertex_index -force -d /opt/module/atlas/apache-atlas-2.2.0/conf/solr/

Created collection 'vertex_index' with 1 shard(s), 1 replica(s) with config-set 'vertex_index'

[root@docker-registry-node bin]# pwd

/opt/module/atlas/apache-atlas-2.2.0/bin

[root@docker-registry-node bin]# ./atlas_start.py

Configured for local HBase.

Starting local HBase...

Local HBase started!

Configured for local Solr.

Starting local Solr...

Local Solr started!

Creating Solr collections for Atlas using config: /opt/module/atlas/apache-atlas-2.2.0/conf/solr

Starting Atlas server on host: localhost

Starting Atlas server on port: 21000

................................................................................................................................

Apache Atlas Server started!!!

[root@docker-registry-node conf]# jps

32103 Jps

29800 Atlas

24397 HMaster

27950 jar

[root@docker-registry-node bin]# curl http://localhost:21000/login.jsp

Username / password: admin / admin
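Beyond loading the login page, the REST API can be probed with the same credentials. The sketch below only builds the request with an HTTP Basic `Authorization` header; it assumes the default `admin` / `admin` account and the `/api/atlas/admin/version` endpoint, and the helper name `atlas_request` is hypothetical.

```python
import base64
import urllib.request


def atlas_request(path: str, base: str = "http://localhost:21000",
                  user: str = "admin", password: str = "admin") -> urllib.request.Request:
    """Build a request for an Atlas REST path with HTTP Basic authentication."""
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    req = urllib.request.Request(base.rstrip("/") + path)
    req.add_header("Authorization", "Basic " + token)
    return req
```

With the server running, `urllib.request.urlopen(atlas_request("/api/atlas/admin/version"))` should return a small JSON document if the credentials are accepted.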

Troubleshooting log

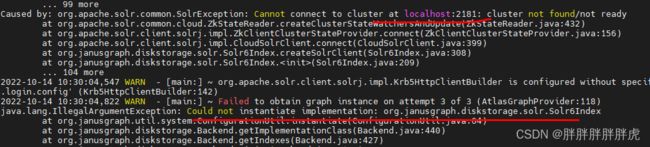

Could not instantiate implementation: org.janusgraph.diskstorage.hbase2.HBaseStoreManager

2022-10-13 17:00:55,863 INFO - [main:] ~ Logged in user root (auth:SIMPLE) (LoginProcessor:77)

2022-10-13 17:00:56,817 INFO - [main:] ~ Not running setup per configuration atlas.server.run.setup.on.start. (SetupSteps$SetupRequired:189)

2022-10-13 17:00:58,810 INFO - [main:] ~ Failed to obtain graph instance, retrying 3 times, error: java.lang.IllegalArgumentException: Could not instantiate implementation: org.janusgraph.diskstorage.hbase2.HBaseStoreManager (AtlasGraphProvider:100)

2022-10-13 17:01:28,845 WARN - [main:] ~ Failed to obtain graph instance on attempt 1 of 3 (AtlasGraphProvider:118)

java.lang.IllegalArgumentException: Could not instantiate implementation: org.janusgraph.diskstorage.hbase2.HBaseStoreManager

at org.janusgraph.util.system.ConfigurationUtil.instantiate(ConfigurationUtil.java:64)

at org.janusgraph.diskstorage.Backend.getImplementationClass(Backend.java:440)

at org.janusgraph.diskstorage.Backend.getStorageManager(Backend.java:411)

at org.janusgraph.graphdb.configuration.builder.GraphDatabaseConfigurationBuilder.build(GraphDatabaseConfigurationBuilder.java:50)

at org.janusgraph.core.JanusGraphFactory.open(JanusGraphFactory.java:161)

at org.janusgraph.core.JanusGraphFactory.open(JanusGraphFactory.java:132)

at org.janusgraph.core.JanusGraphFactory.open(JanusGraphFactory.java:112)

at org.apache.atlas.repository.graphdb.janus.AtlasJanusGraphDatabase.initJanus

Cannot connect to cluster at localhost:2181: cluster not found/not ready | Could not instantiate implementation: org.janusgraph.diskstorage.solr.Solr6Index

vim atlas-application.properties

#######

...

atlas.graph.storage.hostname=localhost:2181
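In this single-node setup, several properties point at the same embedded ZooKeeper. A quick consistency check can catch a stray value before starting Atlas. The sketch below assumes that all three keys should equal `localhost:2181`, as in the configuration used in this post; the helper names are hypothetical.

```python
from typing import Dict, Optional

# Keys that reference ZooKeeper in the atlas-application.properties used here.
ZK_KEYS = (
    "atlas.graph.storage.hostname",
    "atlas.graph.index.search.solr.zookeeper-url",
    "atlas.audit.hbase.zookeeper.quorum",
)


def parse_properties(text: str) -> Dict[str, str]:
    """Parse simple key=value lines, skipping blanks and '#' comments."""
    props = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        props[key.strip()] = value.strip()
    return props


def zookeeper_mismatches(props: Dict[str, str],
                         expected: str = "localhost:2181") -> Dict[str, Optional[str]]:
    """Return the ZooKeeper-related keys whose value differs from `expected`."""
    return {k: props.get(k) for k in ZK_KEYS if props.get(k) != expected}
```

An empty result means the three addresses agree; a missing key shows up with the value `None`.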

...

Can not find the specified config set: vertex_index

2022-10-14 13:35:02,680 ERROR - [main:] ~ GraphBackedSearchIndexer.initialize() failed (GraphBackedSearchIndexer:376)

org.apache.solr.client.solrj.impl.HttpSolrClient$RemoteSolrException: Error from server at http://172.16.51.129:8983/solr: Can not find the specified config set: vertex_index

at org.apache.solr.client.solrj.impl.HttpSolrClient.executeMethod(HttpSolrClient.java:643)

at org.apache.solr.client.solrj.impl.HttpSolrClient.request(HttpSolrClient.java:255)

at org.apache.solr.client.solrj.impl.HttpSolrClient.request(HttpSolrClient.java:244)

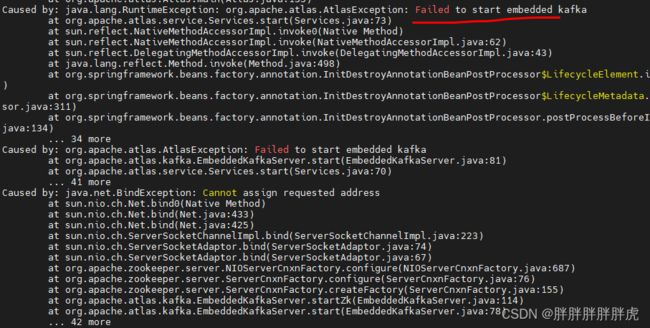
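The error above comes from Solr, so it can help to ask Solr directly which collections it knows about before starting Atlas. The sketch below assumes the Solr Collections API `LIST` action and its JSON response with a `collections` array; the helper names are hypothetical and no request is sent by this code.

```python
import json
from typing import List

# Collections Atlas expects in this setup.
REQUIRED = ("vertex_index", "edge_index", "fulltext_index")


def list_url(base: str = "http://localhost:8983/solr") -> str:
    """URL of the Collections API LIST action for a Solr base URL."""
    return base.rstrip("/") + "/admin/collections?action=LIST&wt=json"


def missing_collections(list_response_json: str) -> List[str]:
    """Return the required collections absent from a LIST response body."""
    present = set(json.loads(list_response_json).get("collections", []))
    return [name for name in REQUIRED if name not in present]
```

Any name returned by `missing_collections` would need to be created with `solr create -c <name> -d .../conf/solr/` as shown earlier in this post.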

Failed to start embedded kafka

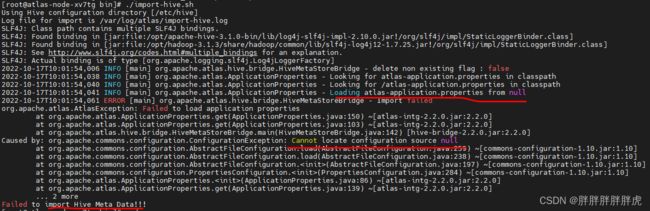

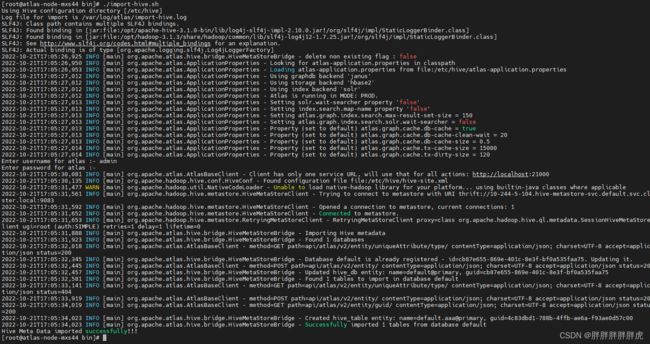

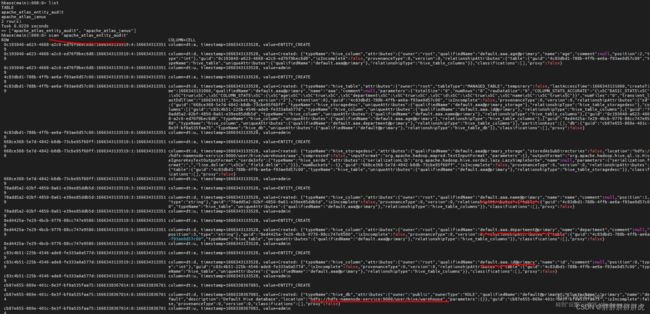

import-hive.sh

Failed to load application properties

Copy atlas-application.properties to HIVE_CONF_DIR.

java.lang.NoSuchMethodError: com.google.common.base.Preconditions.checkArgum

Replace the guava jar in Hive's lib directory with the guava version shipped with Hadoop.
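Before swapping jars it is worth confirming that the two guava versions really differ. A minimal sketch that extracts the version from jar file names and compares the two lib directories; the file names in the usage note are hypothetical examples, not the versions on this machine.

```python
import re
from typing import Iterable, Optional, Tuple

# Matches names such as guava-19.0.jar or guava-27.0-jre.jar.
_GUAVA = re.compile(r"^guava-(\d+(?:\.\d+)*)(?:-[A-Za-z]+)?\.jar$")


def guava_version(jar_name: str) -> Optional[Tuple[int, ...]]:
    """Return the numeric guava version encoded in a jar name, or None."""
    m = _GUAVA.match(jar_name)
    return tuple(int(p) for p in m.group(1).split(".")) if m else None


def guava_conflict(hive_jars: Iterable[str], hadoop_jars: Iterable[str]) -> bool:
    """True when the guava versions found in the two jar listings differ."""
    hive = {guava_version(j) for j in hive_jars} - {None}
    hadoop = {guava_version(j) for j in hadoop_jars} - {None}
    return hive != hadoop
```

For example, `guava_conflict(os.listdir(hive_lib), os.listdir(hadoop_lib))` with the two lib directories would report whether the replacement is needed at all.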

Unexpected end of file when reading from HS2 server. The root cause might be too many concurrent connections. Please ask the administrator to check the number of active connections, and adjust hive.server2.thrift.max.worker.threads if applicable.

The client and the Hive server versions do not match (a 3.1.0 client connecting to a 2.3.2 server).

Exception in thread "main" java.lang.IllegalAccessError: tried to access method com.google.common.collect.Iterators.emptyIterator()Lcom/google/common/collect/UnmodifiableIterator; from class org.apache.hadoop.hive.ql.exec.FetchOperator

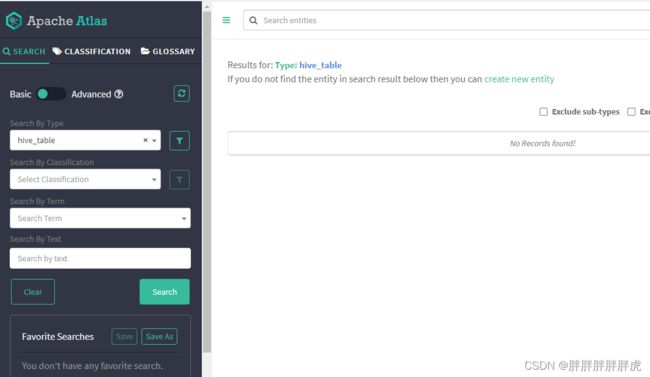

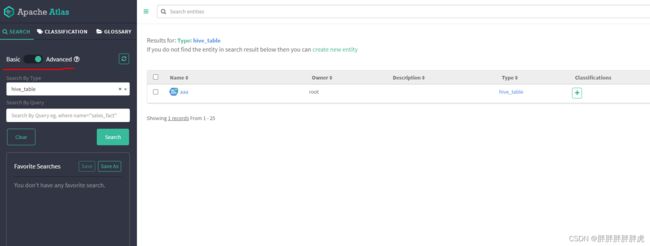

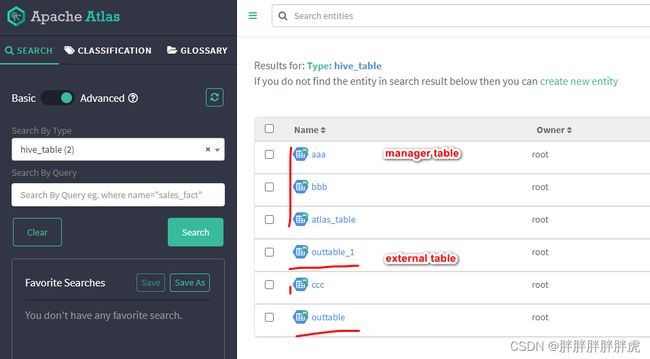

The Hive import succeeds, but the tables cannot be found in the Atlas UI

create table aaa(id int, name string, age int, department string) row format delimited fields terminated by ',' lines terminated by '\n' stored as textfile;

But the table still does not show up in the UI…

__AtlasUserProfile with unique attribute {name=admin} does not exist

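import-hive.sh imports the metadata successfully, but the navigation pane does not show the hive_table count, and lineage queries also report that there is no lineage. This may be related to logging in as the admin user.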

https://community.cloudera.com/t5/Support-Questions/Apache-atlas-data-lineage-issue/m-p/221542

##############################

### atlas application.log

##############################

2022-10-25 17:13:04,091 ERROR - [etp2008966511-516 - 6877d62d-0751-407b-abf8-6225510bd075:] ~ graph rollback due to exception AtlasBaseException:Instance __AtlasUserProfile with unique attribute {name=admin} does not exist (GraphTransactionInterceptor:202)

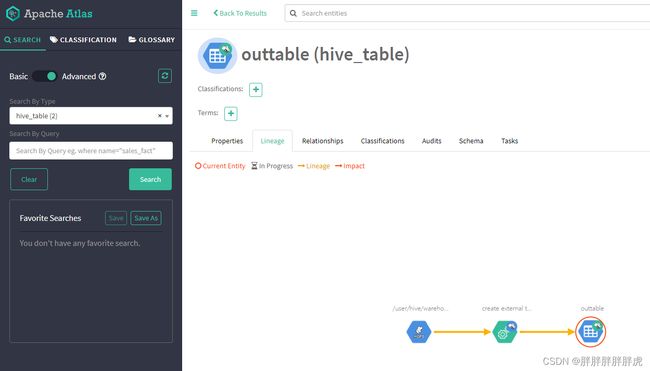

Atlas lineage does not support internal (managed) tables

https://zhidao.baidu.com/question/1802196529111502747.html

https://community.cloudera.com/t5/Support-Questions/Lineage-is-not-visible-for-Hive-Table-in-Atlas/m-p/237484

With import-hive.sh, Atlas does not support lineage for Hive internal (managed) tables; only tables declared EXTERNAL can produce lineage.

### External tables

create external table outtable(id int, name string, age int, department string) row format delimited fields terminated by ',' lines terminated by '\n' stored as textfile;

create external table outtable_1(id int, name string, age int, department string) row format delimited fields terminated by ',' lines terminated by '\n' stored as textfile;

insert into outtable select * from outtable_1;
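To check from outside the UI whether lineage was recorded for a table, the Atlas v2 lineage endpoint can be queried with the entity GUID. The sketch below only builds the URL and inspects a response that has already been parsed; it assumes the `/api/atlas/v2/lineage/{guid}` endpoint and a `relations` list in the response, and the GUID in the usage note is a placeholder.

```python
from typing import Any, Dict


def lineage_url(guid: str, base: str = "http://localhost:21000",
                direction: str = "BOTH", depth: int = 3) -> str:
    """URL of the lineage query for one entity GUID."""
    return f"{base.rstrip('/')}/api/atlas/v2/lineage/{guid}?direction={direction}&depth={depth}"


def has_lineage(lineage_info: Dict[str, Any]) -> bool:
    """True when the parsed lineage response contains at least one relation."""
    return len(lineage_info.get("relations", [])) > 0
```

Calling `lineage_url("<guid-of-outtable>")` gives the address to fetch; an empty `relations` list in the reply corresponds to the "no lineage" message in the UI.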

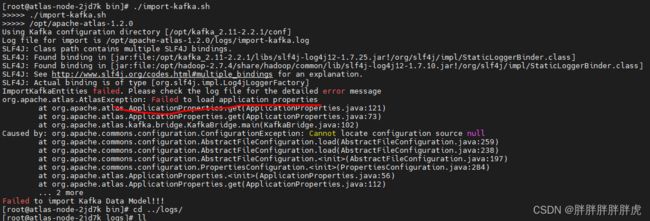

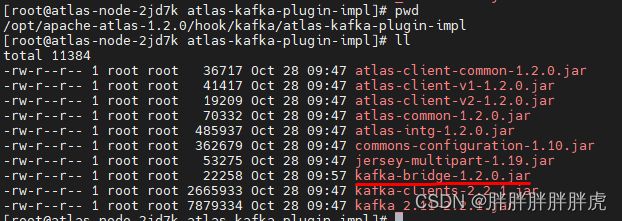

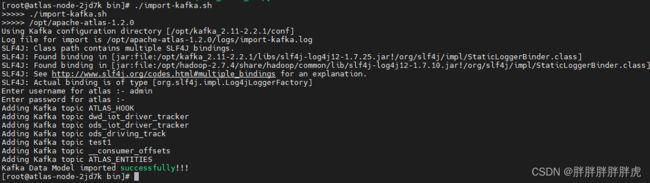

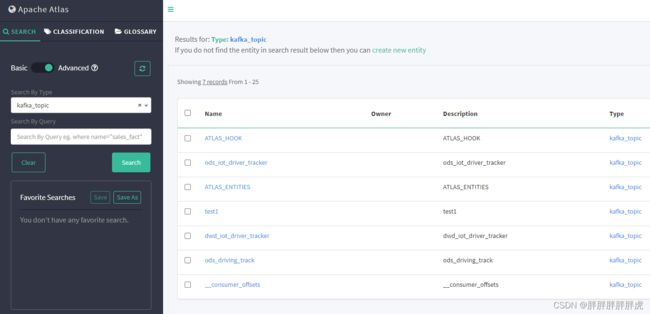

Kafka

Add atlas-application.properties into kafka-bridge-1.2.0.jar.

Logging in fails with: invalid user credentials

Replace the users-credentials.properties in atlas-configmap (which was still the Atlas 2.2.0 version and had not been updated) with the users-credentials.properties from apache-atlas-1.2.0-bin.
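One way to see why a credentials file taken from a different Atlas release might be rejected is to compare the stored digest with a hash of the password. The sketch below parses the `user=ROLE::digest` format and tests against a plain, unsalted SHA-256. That hashing scheme is an assumption made here for illustration; the actual scheme may differ between releases, which would be consistent with the login failure described above.

```python
import hashlib
from typing import Tuple


def parse_credential(line: str) -> Tuple[str, str, str]:
    """Split a 'user=ROLE::digest' line into (user, role, digest)."""
    user, _, rest = line.strip().partition("=")
    role, _, digest = rest.partition("::")
    return user, role, digest


def matches_plain_sha256(password: str, digest: str) -> bool:
    """True if digest equals the unsalted SHA-256 hex digest of the password."""
    return hashlib.sha256(password.encode("utf-8")).hexdigest() == digest.lower()
```

If `matches_plain_sha256("admin", digest)` is false for one release's file and true for the other's, the two files were produced with different hashing schemes and are not interchangeable.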

Dockerfile

FROM docker-registry-node:5000/centos:centos7.6.1810

ADD hadoop-2.7.4.tar.gz /opt

ADD kafka_2.11-2.2.1.tgz /opt

ADD apache-hive-2.3.2-bin.tar.gz /opt

ADD apache-atlas-1.2.0-bin.tar.gz /opt

ADD jdk-8u141-linux-x64.tar.gz /opt

ADD which /usr/bin/

RUN chmod +x /usr/bin/which

RUN cp /opt/apache-hive-2.3.2-bin/lib/libfb303-0.9.3.jar /opt/apache-hive-2.3.2-bin/lib/hive-exec-2.3.2.jar /opt/apache-hive-2.3.2-bin/lib/hive-jdbc-2.3.2.jar /opt/apache-hive-2.3.2-bin/lib/hive-metastore-2.3.2.jar /opt/apache-hive-2.3.2-bin/lib/jackson-core-2.6.5.jar /opt/apache-hive-2.3.2-bin/lib/jackson-databind-2.6.5.jar /opt/apache-hive-2.3.2-bin/lib/jackson-annotations-2.6.0.jar /opt/apache-atlas-1.2.0/hook/hive/atlas-hive-plugin-impl/

RUN rm -rf /opt/apache-atlas-1.2.0/hook/hive/atlas-hive-plugin-impl/jackson-annotations-2.9.9.jar /opt/apache-atlas-1.2.0/hook/hive/atlas-hive-plugin-impl/jackson-core-2.9.9.jar /opt/apache-atlas-1.2.0/hook/hive/atlas-hive-plugin-impl/jackson-databind-2.9.9.jar

ENV JAVA_HOME /opt/jdk1.8.0_141

ENV PATH ${JAVA_HOME}/bin:$PATH

EXPOSE 21000

atlas-configmap.yaml

apiVersion: v1
kind: ConfigMap
metadata:
  name: atlas-configmap
  labels:
    app: atlas-configmap
data:
  atlas-application.properties: |-
    atlas.graph.storage.backend=hbase2
    atlas.graph.storage.hbase.table=apache_atlas_janus
    ### embedded hbase solr
    atlas.graph.storage.hostname=localhost:2181
    atlas.graph.storage.hbase.regions-per-server=1
    atlas.EntityAuditRepository.impl=org.apache.atlas.repository.audit.HBaseBasedAuditRepository
    atlas.graph.index.search.backend=solr
    atlas.graph.index.search.solr.mode=cloud
    atlas.graph.index.search.solr.zookeeper-url=localhost:2181
    atlas.graph.index.search.solr.zookeeper-connect-timeout=60000
    atlas.graph.index.search.solr.zookeeper-session-timeout=60000
    atlas.graph.index.search.solr.wait-searcher=false
    atlas.graph.index.search.max-result-set-size=150
    atlas.notification.embedded=true
    ### embedded kafka
    atlas.kafka.data=${sys:atlas.home}/data/kafka
    atlas.kafka.zookeeper.connect=localhost:9026
    atlas.kafka.bootstrap.servers=localhost:9027
    atlas.kafka.zookeeper.session.timeout.ms=400
    atlas.kafka.zookeeper.connection.timeout.ms=200
    atlas.kafka.zookeeper.sync.time.ms=20
    atlas.kafka.auto.commit.interval.ms=1000
    atlas.kafka.hook.group.id=atlas
    atlas.kafka.enable.auto.commit=false
    atlas.kafka.auto.offset.reset=earliest
    atlas.kafka.session.timeout.ms=30000
    atlas.kafka.offsets.topic.replication.factor=1
    atlas.kafka.poll.timeout.ms=1000
    atlas.notification.create.topics=true
    atlas.notification.replicas=1
    atlas.notification.topics=ATLAS_HOOK,ATLAS_ENTITIES
    atlas.notification.log.failed.messages=true
    atlas.notification.consumer.retry.interval=500
    atlas.notification.hook.retry.interval=1000
    atlas.enableTLS=false
    atlas.authentication.method.kerberos=false
    atlas.authentication.method.file=true
    atlas.authentication.method.ldap.type=none
    atlas.authentication.method.file.filename=${sys:atlas.home}/conf/users-credentials.properties
    atlas.rest.address=http://localhost:21000
    atlas.audit.hbase.tablename=apache_atlas_entity_audit
    atlas.audit.zookeeper.session.timeout.ms=1000
    atlas.audit.hbase.zookeeper.quorum=localhost:2181
    atlas.server.ha.enabled=false
    atlas.hook.hive.synchronous=false
    atlas.hook.hive.numRetries=3
    atlas.hook.hive.queueSize=10000
    atlas.cluster.name=primary
    atlas.authorizer.impl=simple
    atlas.authorizer.simple.authz.policy.file=atlas-simple-authz-policy.json
    atlas.rest-csrf.enabled=true
    atlas.rest-csrf.browser-useragents-regex=^Mozilla.*,^Opera.*,^Chrome.*
    atlas.rest-csrf.methods-to-ignore=GET,OPTIONS,HEAD,TRACE
    atlas.rest-csrf.custom-header=X-XSRF-HEADER
    atlas.metric.query.cache.ttlInSecs=900
    atlas.search.gremlin.enable=false
    atlas.ui.default.version=v1
  atlas-env.sh: |-
    export MANAGE_LOCAL_HBASE=true
    export MANAGE_LOCAL_SOLR=true
    export MANAGE_EMBEDDED_CASSANDRA=false
    export MANAGE_LOCAL_ELASTICSEARCH=false
  atlas-log4j.xml: |-
    <?xml version="1.0" encoding="UTF-8" ?>
    <!--
      ~ Licensed to the Apache Software Foundation (ASF) under one
      ~ or more contributor license agreements. See the NOTICE file
      ~ distributed with this work for additional information
      ~ regarding copyright ownership. The ASF licenses this file
      ~ to you under the Apache License, Version 2.0 (the
      ~ "License"); you may not use this file except in compliance
      ~ with the License. You may obtain a copy of the License at
      ~
      ~ http://www.apache.org/licenses/LICENSE-2.0
      ~
      ~ Unless required by applicable law or agreed to in writing, software
      ~ distributed under the License is distributed on an "AS IS" BASIS,
      ~ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
      ~ See the License for the specific language governing permissions and
      ~ limitations under the License.
      -->
  atlas-simple-authz-policy.json: |-
    {
      "roles": {
        "ROLE_ADMIN": {
          "adminPermissions": [
            {
              "privileges": [ ".*" ]
            }
          ],
          "typePermissions": [
            {
              "privileges": [ ".*" ],
              "typeCategories": [ ".*" ],
              "typeNames": [ ".*" ]
            }
          ],
          "entityPermissions": [
            {
              "privileges": [ ".*" ],
              "entityTypes": [ ".*" ],
              "entityIds": [ ".*" ],
              "entityClassifications": [ ".*" ],
              "labels": [ ".*" ],
              "businessMetadata": [ ".*" ],
              "attributes": [ ".*" ],
              "classifications": [ ".*" ]
            }
          ],
          "relationshipPermissions": [
            {
              "privileges": [ ".*" ],
              "relationshipTypes": [ ".*" ],
              "end1EntityType": [ ".*" ],
              "end1EntityId": [ ".*" ],
              "end1EntityClassification": [ ".*" ],
              "end2EntityType": [ ".*" ],
              "end2EntityId": [ ".*" ],
              "end2EntityClassification": [ ".*" ]
            }
          ]
        },
        "DATA_SCIENTIST": {
          "entityPermissions": [
            {
              "privileges": [ "entity-read", "entity-read-classification" ],
              "entityTypes": [ ".*" ],
              "entityIds": [ ".*" ],
              "entityClassifications": [ ".*" ],
              "labels": [ ".*" ],
              "businessMetadata": [ ".*" ],
              "attributes": [ ".*" ]
            }
          ]
        },
        "DATA_STEWARD": {
          "entityPermissions": [
            {
              "privileges": [ "entity-read", "entity-create", "entity-update", "entity-read-classification", "entity-add-classification", "entity-update-classification", "entity-remove-classification" ],
              "entityTypes": [ ".*" ],
              "entityIds": [ ".*" ],
              "entityClassifications": [ ".*" ],
              "labels": [ ".*" ],
              "businessMetadata": [ ".*" ],
              "attributes": [ ".*" ],
              "classifications": [ ".*" ]
            }
          ],
          "relationshipPermissions": [
            {
              "privileges": [ "add-relationship", "update-relationship", "remove-relationship" ],
              "relationshipTypes": [ ".*" ],
              "end1EntityType": [ ".*" ],
              "end1EntityId": [ ".*" ],
              "end1EntityClassification": [ ".*" ],
              "end2EntityType": [ ".*" ],
              "end2EntityId": [ ".*" ],
              "end2EntityClassification": [ ".*" ]
            }
          ]
        }
      },
      "userRoles": {
        "admin": [ "ROLE_ADMIN" ],
        "rangertagsync": [ "DATA_SCIENTIST" ]
      },
      "groupRoles": {
        "ROLE_ADMIN": [ "ROLE_ADMIN" ],
        "hadoop": [ "DATA_STEWARD" ],
        "DATA_STEWARD": [ "DATA_STEWARD" ],
        "RANGER_TAG_SYNC": [ "DATA_SCIENTIST" ]
      }
    }
  cassandra.yml.template: |-
    cluster_name: 'JanusGraph'
    num_tokens: 256
    hinted_handoff_enabled: true
    hinted_handoff_throttle_in_kb: 1024
    max_hints_delivery_threads: 2
    batchlog_replay_throttle_in_kb: 1024
    authenticator: AllowAllAuthenticator
    authorizer: AllowAllAuthorizer
    permissions_validity_in_ms: 2000
    partitioner: org.apache.cassandra.dht.Murmur3Partitioner
    data_file_directories:
        - ${atlas_home}/data/cassandra/data
    commitlog_directory: ${atlas_home}/data/cassandra/commitlog
    disk_failure_policy: stop
    commit_failure_policy: stop
    key_cache_size_in_mb:
    key_cache_save_period: 14400
    row_cache_size_in_mb: 0
    row_cache_save_period: 0
    saved_caches_directory: ${atlas_home}/data/cassandra/saved_caches
    commitlog_sync: periodic
    commitlog_sync_period_in_ms: 10000
    commitlog_segment_size_in_mb: 32
    seed_provider:
        - class_name: org.apache.cassandra.locator.SimpleSeedProvider
          parameters:
              - seeds: "127.0.0.1"
    concurrent_reads: 32
    concurrent_writes: 32
    trickle_fsync: false
    trickle_fsync_interval_in_kb: 10240
    storage_port: 7000
    ssl_storage_port: 7001
    listen_address: localhost
    start_native_transport: true
    native_transport_port: 9042
    start_rpc: true
    rpc_address: localhost
    rpc_port: 9160
    rpc_keepalive: true
    rpc_server_type: sync
    thrift_framed_transport_size_in_mb: 15
    incremental_backups: false
    snapshot_before_compaction: false
    auto_snapshot: true
    tombstone_warn_threshold: 1000
    tombstone_failure_threshold: 100000
    column_index_size_in_kb: 64
    compaction_throughput_mb_per_sec: 16
    read_request_timeout_in_ms: 5000
    range_request_timeout_in_ms: 10000
    write_request_timeout_in_ms: 2000
    cas_contention_timeout_in_ms: 1000
    truncate_request_timeout_in_ms: 60000
    request_timeout_in_ms: 10000
    cross_node_timeout: false
    endpoint_snitch: SimpleSnitch
    dynamic_snitch_update_interval_in_ms: 100
    dynamic_snitch_reset_interval_in_ms: 600000
    dynamic_snitch_badness_threshold: 0.1
    request_scheduler: org.apache.cassandra.scheduler.NoScheduler
    server_encryption_options:
        internode_encryption: none
        keystore: conf/.keystore
        keystore_password: cassandra
        truststore: conf/.truststore
        truststore_password: cassandra
    client_encryption_options:
        enabled: false
        keystore: conf/.keystore
        keystore_password: cassandra
    internode_compression: all
    inter_dc_tcp_nodelay: false
  hadoop-metrics2.properties: |-
    *.period=30
    atlas-debug-metrics-context.sink.atlas-debug-metrics-context.context=atlas-debug-metrics-context
  users-credentials.properties: |-
    admin=ADMIN::a4a88c0872bf652bb9ed803ece5fd6e82354838a9bf59ab4babb1dab322154e1
    rangertagsync=RANGER_TAG_SYNC::0afe7a1968b07d4c3ff4ed8c2d809a32ffea706c66cd795ead9048e81cfaf034
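The `atlas-simple-authz-policy.json` entry in the ConfigMap above maps users and groups to roles through `userRoles` and `groupRoles`. As a rough sketch of how those two maps combine, the function below collects the roles for a user and a list of groups. It is a simplified reading of the file, not Atlas's actual authorizer logic, and the name `roles_for` is hypothetical.

```python
from typing import Any, Dict, Iterable, List


def roles_for(policy: Dict[str, Any], user: str,
              groups: Iterable[str] = ()) -> List[str]:
    """Collect roles granted directly to the user and through each group."""
    roles = list(policy.get("userRoles", {}).get(user, []))
    for group in groups:
        for role in policy.get("groupRoles", {}).get(group, []):
            if role not in roles:
                roles.append(role)
    return roles
```

With the policy above, the `admin` user resolves to `ROLE_ADMIN`, and any user in the `hadoop` group picks up `DATA_STEWARD`.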

atlas.yaml

apiVersion: v1
kind: Service
metadata:
  name: atlas-service
spec:
  type: NodePort
  selector:
    name: atlas-node
  ports:
    - name: atlas-port
      port: 21000
      targetPort: 21000
      nodePort: 32100
---
apiVersion: v1
kind: ReplicationController
metadata:
  name: atlas-node
  labels:
    name: atlas-node
spec:
  replicas: 1
  selector:
    name: atlas-node
  template:
    metadata:
      labels:
        name: atlas-node
    spec:
      containers:
        - name: atlas-node
          image: docker-registry-node:5000/atlas:0.2
          imagePullPolicy: IfNotPresent
          env:
            - name: TZ
              value: Asia/Shanghai
            - name: HIVE_HOME
              value:
            - name: HIVE_CONF_DIR
              value:
            - name: HADOOP_CLASSPATH
              value:
            - name: HBASE_CONF_DIR
              value: /opt/apache-atlas-2.2.0/hbase/conf
          volumeMounts:
            - name: atlas-configmap-volume
              mountPath: /etc/atlas
            - name: hadoop-config-volume
              mountPath: /etc/hadoop
            - name: hive-config-volume
              mountPath: /etc/hive
            - name: flink-config-volume
              mountPath: /etc/flink
            #- name: hbase-config-volume
            #  mountPath: /etc/hbase
          command: ["/bin/bash", "-c", "cd /etc/atlas; cp * /opt/apache-atlas-2.2.0/conf ; sleep infinity"]
      restartPolicy: Always
      volumes:
        - name: atlas-configmap-volume
          configMap:
            name: atlas-configmap
            items:
              - key: atlas-application.properties
                path: atlas-application.properties
              - key: atlas-env.sh
                path: atlas-env.sh
              - key: atlas-log4j.xml
                path: atlas-log4j.xml
              - key: atlas-simple-authz-policy.json
                path: atlas-simple-authz-policy.json
              - key: cassandra.yml.template
                path: cassandra.yml.template
              - key: hadoop-metrics2.properties
                path: hadoop-metrics2.properties
              - key: users-credentials.properties
                path: users-credentials.properties
        - name: hive-config-volume
          persistentVolumeClaim:
            claimName: hive-config-nfs-pvc
        - name: hadoop-config-volume
          persistentVolumeClaim:
            claimName: bde2020-hadoop-config-nfs-pvc
            #claimName: hadoop-config-nfs-pvc
        - name: flink-config-volume
          configMap:
            name: flink-config
        #- name: hbase-config-volume
        #  persistentVolumeClaim:
        #    claimName: hbase-config-nfs-pvc
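The Service in atlas.yaml reaches the pod only because its selector (`name: atlas-node`) equals the label on the pod template, and because `targetPort` 21000 is the port Atlas listens on. A tiny sketch of that matching rule on plain dictionaries (an equality-based selector, as used here; the function name is hypothetical):

```python
from typing import Dict


def selector_matches(selector: Dict[str, str], labels: Dict[str, str]) -> bool:
    """True when every selector key/value pair is present in the pod labels."""
    return all(labels.get(key) == value for key, value in selector.items())
```

If this returns false for the Service selector and the pod template labels, the NodePort 32100 would be open but no traffic would reach Atlas.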