Scraping the Fortune China 500 data with XPath, following two levels of links

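Contents

- Preface
- I. XPath positioning
- 1. Install lxml
- 2. Import etree
- 3. Code example
- 4. Reading the XPath expression
- 5. HTML structure
- II. Steps
- 1. Import the libraries
- 2. Build the URLs of the second-level pages
- III. Full code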

Preface

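This article scrapes the 2021 Fortune China 500 ranking. Its data is spread over two pages, the ranking table and a separate detail page for each company, so the scraper has to follow two levels of links.

I. XPath positioning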

The parsing uses the `etree` module of the `lxml` library.

1. Install lxml

```shell
pip install lxml
```

2. Import etree

```python
from lxml import etree
```
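A minimal sketch of what `etree.HTML` does, run on a made-up HTML string rather than the Fortune page: it parses the text into an element tree, wrapping a bare fragment in `<html><body>`, and `.xpath()` always returns a list, even when there is a single match.

```python
from lxml import etree

# A made-up fragment standing in for a downloaded page
html = "<p class='greeting'>Hello</p>"

root = etree.HTML(html)        # parse HTML text into an element tree
print(root.tag)                # the root element is <html>
print(etree.tostring(root))    # shows the <html><body> wrapper lxml added

# xpath() returns a list, even for a single match
texts = root.xpath('//p[@class="greeting"]/text()')
print(texts)
```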

3. Code example

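```python
# Extract the company names
# html is the source of the ranking page, downloaded with requests (see the full code)
req = etree.HTML(html)
companys = req.xpath('//table[@class="wt-table"]/tbody/tr/td[3]/a/text()')
```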

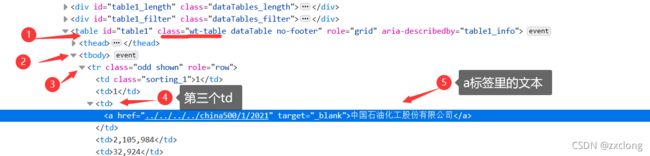
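The line above needs the downloaded page in `html`. To try the expression without network access, here is a self-contained sketch on a hypothetical two-row table shaped the way the XPath assumes (`table.wt-table > tbody > tr > td`, with the company link in the third cell). The company names and hrefs are placeholders, not real ranking data.

```python
from lxml import etree

# Hypothetical two-row table shaped like the ranking table;
# the real page has about 500 rows and real company names.
html = """
<table class="wt-table">
  <tbody>
    <tr><td>1</td><td>1</td><td><a href="../../../../example/1/2021">Company A</a></td><td>100</td><td>10</td></tr>
    <tr><td>2</td><td>3</td><td><a href="../../../../example/2/2021">Company B</a></td><td>90</td><td>9</td></tr>
  </tbody>
</table>
"""

req = etree.HTML(html)
companys = req.xpath('//table[@class="wt-table"]/tbody/tr/td[3]/a/text()')  # link text
hrefs = req.xpath('//table[@class="wt-table"]/tbody/tr/td[3]/a/@href')      # link target
print(companys)
print(hrefs)
```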

4. Reading the XPath expression

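`//table` selects every `table` element in the document, wherever it sits. `//table[@class="wt-table"]` narrows this to the `table` elements whose `class` attribute is exactly `wt-table`.

`/tbody` selects the `tbody` children of that table. This only matches if the raw HTML really contains a `<tbody>` tag: browser developer tools always display one, but lxml does not add it.

`/tr` selects the rows under `tbody`. With no index it matches every row, which is why the expression returns a list with one entry per company.

`/td[3]` selects the third `td` cell of each row. XPath positions start at 1, so `td[3]` really is the third cell.

`/a` selects the link inside that cell.

`/text()` returns the text directly inside the selected element.

Putting it together, `'//table[@class="wt-table"]/tbody/tr/td[3]/a/text()'` finds the `table` whose class is `wt-table`, goes into its `tbody`, takes every `tr` row, the third `td` of each row and the `a` link in that cell, and returns the link text, i.e. the company names.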
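A quick way to check this reading is to run variations of the expression on a small hypothetical table (placeholder values only): leaving out the index on `tr` returns every row, `tr[1]` returns only the first row, and `td[0]` matches nothing because XPath positions start at 1.

```python
from lxml import etree

# Hypothetical three-row table with placeholder values
html = """
<table class="wt-table"><tbody>
<tr><td>1</td><td>-</td><td><a>A</a></td></tr>
<tr><td>2</td><td>-</td><td><a>B</a></td></tr>
<tr><td>3</td><td>-</td><td><a>C</a></td></tr>
</tbody></table>
"""
root = etree.HTML(html)
base = '//table[@class="wt-table"]/tbody'

all_rows = root.xpath(base + '/tr/td[3]/a/text()')      # no index on tr: every row
first_row = root.xpath(base + '/tr[1]/td[3]/a/text()')  # tr[1]: first row only
first_cells = root.xpath(base + '/tr/td[1]/text()')     # td[1] is the first cell
no_cells = root.xpath(base + '/tr/td[0]/text()')        # position 0 never exists

print(all_rows, first_row, first_cells, no_cells)
```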

5. HTML structure
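One structural detail decides whether an XPath like the ones in this article matches at all: lxml keeps only the tags present in the raw HTML and does not insert a `<tbody>` the way browsers do. The ranking-page expressions use `/tbody/`, while the detail-page expressions in the full code go straight from `table` to `tr`, which presumably mirrors the raw markup of each page. The sketch below uses two hypothetical tables to show the difference, plus a `//tr` form that matches either way.

```python
from lxml import etree

# Hypothetical markup: one table with an explicit <tbody>, one without
with_tbody = "<table><tbody><tr><td>x</td></tr></tbody></table>"
without_tbody = "<table><tr><td>y</td></tr></table>"


def texts(html, path):
    """Parse html with lxml and evaluate an XPath expression on it."""
    return etree.HTML(html).xpath(path)


for label, html in (("with tbody", with_tbody), ("without tbody", without_tbody)):
    print(label,
          texts(html, '//table/tbody/tr/td/text()'),  # needs a real <tbody>
          texts(html, '//table/tr/td/text()'),        # needs <tr> directly under <table>
          texts(html, '//table//tr/td/text()'))       # matches in both cases
```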

II. Steps

1. Import the libraries

The code:

```python
import os
import random

import requests
import xlsxwriter
from lxml import etree
```

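`requests` downloads the pages, `lxml.etree` parses them, `xlsxwriter` writes the results to an Excel file, `random` picks a User-Agent for each request, and `os` locates the folder where the output file is saved.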

2. Build the URLs of the second-level pages

The code:

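```python
# req is the parsed ranking page, as in the code example in section I
hrefs = req.xpath('//table[@class="wt-table"]/tbody/tr/td[3]/a/@href')

detail_urls = []
for href in hrefs:
    href = href.replace('../', '')  # e.g. original href='../../../../global500/517/2021': strip the '../'
    href = "http://www.fortunechina.com/{}".format(href)  # prepend the site root
    detail_urls.append(href)
```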
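Stripping `../` and prepending the site root works as long as the `../` segments climb exactly to the site root. A sketch of an alternative uses the standard library's `urllib.parse.urljoin` to resolve the relative link against the page it appeared on, so it does not depend on how many `../` there are. The page URL is the one used in the full code and the href is the example from the comment above; note that the two results differ in scheme (`http` versus `https`).

```python
from urllib.parse import urljoin

# Ranking page URL (from the full code) and the example href (from the comment above)
page_url = "https://www.fortunechina.com/fortune500/c/2021-07/20/content_392708.htm"
href = "../../../../global500/517/2021"

# Approach used in this article: drop every "../" and prepend the site root
manual = "http://www.fortunechina.com/{}".format(href.replace('../', ''))

# Alternative: resolve the relative link against the page it came from
resolved = urljoin(page_url, href)

print(manual)
print(resolved)
```

Using `urljoin` in the scraper would mean passing `self.murl` as the base URL; this is a suggested variation, not what the original code does.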

III. Full code

```python
# -*- coding: UTF-8 -*-
'''
XPath-targeted scraper for the 2021 Fortune China 500 ranking
'''

import os
import random

import requests
import xlsxwriter
from lxml import etree


class Httprequest(object):
    # Pool of browser User-Agent strings; one is picked at random for each request
    ua_list = [
        'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/535.1 (KHTML, like Gecko) Chrome/14.0.835.163 Safari/535.1',
        'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/73.0.3683.103 Safari/537.36',
        'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_7_0) AppleWebKit/535.11 (KHTML, like Gecko) Chrome/17.0.963.56 Safari/535.11',
        'Mozilla/5.0 (Windows NT 6.1; WOW64; rv:6.0) Gecko/20100101 Firefox/6.0',
        'Mozilla/5.0 (Macintosh; Intel Mac OS X 10.6; rv:2.0.1) Gecko/20100101 Firefox/4.0.1',
        'Mozilla/5.0 (Macintosh; U; Intel Mac OS X 10_6_8; en-us) AppleWebKit/534.50 (KHTML, like Gecko) Version/5.1 Safari/534.50',
        'Mozilla/5.0 (Windows; U; Windows NT 6.1; en-us) AppleWebKit/534.50 (KHTML, like Gecko) Version/5.1 Safari/534.50',
        'Opera/9.80 (Windows NT 6.1; U; en) Presto/2.8.131 Version/11.11',
    ]

    @property  # decorator that lets the method be read like an attribute
    def random_headers(self):
        return {
            'User-Agent': random.choice(self.ua_list)
        }


class Get_data(Httprequest):

    def __init__(self):
        # First-level page: the ranking table
        self.murl = "https://www.fortunechina.com/fortune500/c/2021-07/20/content_392708.htm"

    def get_chinadata(self):
        html = requests.get(self.murl, headers=self.random_headers, timeout=5).content.decode('utf-8')
        # print(html)
        req = etree.HTML(html)

        # Locate the wanted elements with XPath
        rankings = req.xpath('//table[@class="wt-table"]/tbody/tr/td[1]/text()')  # rank
        last_rankings = req.xpath('//table[@class="wt-table"]/tbody/tr/td[2]/text()')  # previous year's rank
        companys = req.xpath('//table[@class="wt-table"]/tbody/tr/td[3]/a/text()')  # company name
        incomes = req.xpath('//table[@class="wt-table"]/tbody/tr/td[4]/text()')  # revenue
        profits = req.xpath('//table[@class="wt-table"]/tbody/tr/td[5]/text()')  # profit
        hrefs = req.xpath('//table[@class="wt-table"]/tbody/tr/td[3]/a/@href')  # URL of each company's detail page

        data_list = []
        for ranking, last_ranking, company, income, profit, href in zip(
            rankings, last_rankings, companys, incomes, profits, hrefs
        ):
            data = [
                ranking, last_ranking, company, income, profit  # the 5 fields available on the first page
            ]
            href = href.replace('../', '')  # e.g. original href='../../../../global500/517/2021': strip the '../'
            href = "http://www.fortunechina.com/{}".format(href)  # prepend the site root
            data.extend(self.get_datahref(href))  # add the fields from the second-level page
            print(data)
            data_list.append(data)
            print('\n')

        self.write_to_zxlsx(data_list)

    def get_datahref(self, url):  # fetch the data on a second-level page; url is the assembled href
        html = requests.get(url, headers=self.random_headers, timeout=5).content.decode('utf-8')
        req = etree.HTML(html)
        data = []

        # XPath locations of the wanted elements on the second page
        zc = req.xpath('//div[@class="top"]/div[@class="table"]/table/tr[4]/td[2]/text()')  # assets
        shizhi = req.xpath('//div[@class="top"]/div[@class="table"]/table/tr[5]/td[2]/text()')  # market value
        gdqy = req.xpath('//div[@class="top"]/div[@class="table"]/table/tr[6]/td[2]/text()')  # shareholders' equity
        # lrzb = req.xpath('//div[@class="top"]/div[@class="table"]/table/tr[7]/td[2]/text()')
        jlr = req.xpath('//div[@class="top"]/div[@class="table"]/table/tr[8]/td[3]/text()')  # net profit margin
        jzcsyl = req.xpath('//div[@class="top"]/div[@class="table"]/table/tr[9]/td[3]/text()')  # return on equity
        zcsyl = req.xpath('//div[@class="top"]/div[@class="table"]/table/tr[10]/td[3]/text()')  # return on assets

        # Append the extracted values to the data list
        data.extend(zc)
        data.extend(shizhi)
        data.extend(gdqy)
        # data.extend(lrzb)
        data.extend(jlr)
        data.extend(jzcsyl)
        data.extend(zcsyl)
        print('href.......', data, end='\n')
        return data

    def write_to_zxlsx(self, data_list):  # save the data to an .xlsx file
        mypath = os.path.dirname(os.path.abspath(__file__))  # folder containing this script
        # os.path.join is portable; the original '\{}.xlsx' only worked on Windows
        # and relied on an invalid escape sequence
        workbook = xlsxwriter.Workbook(os.path.join(mypath, '{}.xlsx'.format("2021 Fortune China 500")))  # file name
        worksheet = workbook.add_worksheet("2021 Fortune China 500")  # worksheet (sheet name)
        title = ['Rank', 'Previous rank', 'Company name (Chinese)', 'Revenue (USD millions)', 'Profit (USD millions)',
                 'Assets', 'Market value', "Shareholders' equity", 'Net profit margin', 'Return on equity',
                 'Return on assets']
        worksheet.write_row('A1', title)
        for index, data in enumerate(data_list):
            num0 = str(index + 2)  # data starts on row 2, below the header
            row = 'A' + num0
            worksheet.write_row(row, data)
        workbook.close()


if __name__ == "__main__":
    spider = Get_data()
    spider.get_chinadata()
```
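The full code pulls each column as a separate list and pairs them with `zip`. That stays aligned only while every cell contributes exactly one text node: an empty cell drops out of its column list, and `zip` then pairs values from different rows without raising any error. This is not a claim that the Fortune China table has such cells; the sketch below uses a hypothetical three-row table with placeholder values to show the effect, and a row-by-row alternative that queries each `tr` with relative paths.

```python
from lxml import etree

# Hypothetical table: row B has an empty profit cell (placeholder values only)
html = """
<table class="wt-table"><tbody>
<tr><td>1</td><td>2</td><td><a href="a">A</a></td><td>100</td><td>10</td></tr>
<tr><td>2</td><td>1</td><td><a href="b">B</a></td><td>90</td><td></td></tr>
<tr><td>3</td><td>3</td><td><a href="c">C</a></td><td>80</td><td>8</td></tr>
</tbody></table>
"""
root = etree.HTML(html)

# Column-wise, as in get_chinadata: the empty cell vanishes from the list
companys = root.xpath('//table[@class="wt-table"]/tbody/tr/td[3]/a/text()')
profits = root.xpath('//table[@class="wt-table"]/tbody/tr/td[5]/text()')
column_pairs = list(zip(companys, profits))  # B ends up with C's profit

# Row-wise: evaluate relative paths on each <tr>, so every value stays in its row
row_pairs = []
for tr in root.xpath('//table[@class="wt-table"]/tbody/tr'):
    company = tr.xpath('string(td[3]/a)')  # string() yields '' for an empty cell
    profit = tr.xpath('string(td[5])')
    row_pairs.append((company, profit))

print(column_pairs)
print(row_pairs)
```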

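Note: The main body of the code was adapted from someone else's, which had no comments and was hard for beginners to understand and modify. I have annotated it in the hope that it helps anyone who needs it.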