SAM 2: Environment Setup & Code Debugging

Introduction

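After more than half a year, SAM 2 has finally arrived. I previously wrote a post, "Segment Anything (SAM): Environment Setup & Code Debugging"; if you are interested, see that post on the CSDN blog. OK, let's get started.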

I. Model Overview

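Meta released the SAM 1 foundation model last year, which could already segment objects in images. The newly released SAM 2 works on both images and videos and supports real-time, promptable object segmentation. SAM 2 surpasses earlier models in image segmentation accuracy and outperforms existing work in video segmentation, while requiring three times less interaction time. SAM 2 can also segment any object in any video or image (commonly called zero-shot generalization), which means it can be applied to previously unseen visual content without custom adaptation.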

II. Environment Setup

1. Model download

https://github.com/facebookresearch/segment-anything-2?tab=readme-ov-file

Code download

git clone https://github.com/facebookresearch/segment-anything-2.git

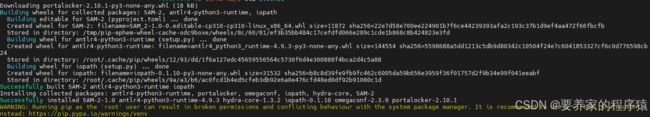

2. Environment installation

docker pull pytorch/pytorch:2.3.1-cuda12.1-cudnn8-devel

docker run -it --rm --gpus=all -v /datas/work/zzq:/workspace pytorch/pytorch:2.3.1-cuda12.1-cudnn8-devel bash

cd /workspace/SAM2/segment-anything-2-main

pip install -e .

(PS: this installation takes a very long time, so be patient.)

apt-get update && apt-get install libgl1

apt-get install libglib2.0-0
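Before moving on, it may be worth confirming that the packages used by the inference script can be found inside the container. The sketch below is my own addition, not part of the SAM 2 repo: `missing_modules` is a hypothetical helper that uses only the standard library's `importlib.util.find_spec` to report which top-level modules cannot be located.

```python
import importlib.util


def missing_modules(names):
    """Return the names in `names` that cannot be located (hypothetical helper)."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    # Top-level modules imported by the inference script in this post.
    required = ["numpy", "torch", "matplotlib", "PIL", "cv2", "sam2"]
    missing = missing_modules(required)
    print("missing:", missing if missing else "none")
```

Note that this only checks that the modules can be located. A missing system library such as libGL would still show up only when `import cv2` actually runs, which is what the `apt-get` commands above are meant to prevent.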

III. Inference Test

import numpy as np
import torch
import matplotlib.pyplot as plt
from PIL import Image
import cv2

# use float16 autocast for the entire script
torch.autocast(device_type="cuda", dtype=torch.float16).__enter__()

if torch.cuda.get_device_properties(0).major >= 8:
    # turn on tfloat32 for Ampere GPUs (https://pytorch.org/docs/stable/notes/cuda.html#tensorfloat-32-tf32-on-ampere-devices)
    torch.backends.cuda.matmul.allow_tf32 = True
    torch.backends.cudnn.allow_tf32 = True

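def apply_color_mask(image, mask, color, color_dark=0.5):
    # Blend `color` into `image` (modified in place) wherever `mask` is set;
    # color_dark is the blend weight given to the color.
    for c in range(3):
        image[:, :, c] = np.where(mask == 1, image[:, :, c] * (1 - color_dark) + color_dark * color[c], image[:, :, c])
    return image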
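To make the blend concrete, here is a small worked example on a 2x2 image. `blend_mask` is a hypothetical, non-mutating variant of `apply_color_mask` written only for illustration: each masked pixel becomes `pixel * (1 - alpha) + alpha * color`, and unmasked pixels are left unchanged.

```python
import numpy as np


def blend_mask(image, mask, color, alpha=0.5):
    """Return a copy of `image` with `color` blended in where `mask` is True.

    Hypothetical helper for illustration; unlike apply_color_mask it does not
    modify its input.
    """
    out = image.astype(np.float32)  # astype returns a new array
    color = np.asarray(color, dtype=np.float32)
    out[mask] = out[mask] * (1 - alpha) + alpha * color
    return out.astype(np.uint8)


image = np.full((2, 2, 3), 100, dtype=np.uint8)    # uniform gray image
mask = np.array([[True, False], [False, False]])    # only the top-left pixel
result = blend_mask(image, mask, color=(200, 0, 50))
print(result[0, 0])  # masked pixel: [150  50  75]
print(result[1, 1])  # unmasked pixel: [100 100 100]
```

With `alpha=0.5` the masked pixel is the midpoint between the original value and the color, which is why the underlying image stays visible through the overlay.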

def show_anns(anns, borders=True):
    if len(anns) == 0:
        return
    sorted_anns = sorted(anns, key=(lambda x: x['area']), reverse=True)
    ax = plt.gca()
    ax.set_autoscale_on(False)

    img = np.ones((sorted_anns[0]['segmentation'].shape[0], sorted_anns[0]['segmentation'].shape[1], 4))
    img[:, :, 3] = 0
    for ann in sorted_anns:
        m = ann['segmentation']
        color_mask = np.concatenate([np.random.random(3), [0.5]])
        img[m] = color_mask
        if borders:
            contours, _ = cv2.findContours(m.astype(np.uint8), cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_NONE)
            # Try to smooth contours
            contours = [cv2.approxPolyDP(contour, epsilon=0.01, closed=True) for contour in contours]
            cv2.drawContours(img, contours, -1, (0, 0, 1, 0.4), thickness=1)

    ax.imshow(img)

image = Image.open('images/cars.png')
image = np.array(image.convert("RGB"))

from sam2.build_sam import build_sam2
from sam2.automatic_mask_generator import SAM2AutomaticMaskGenerator

sam2_checkpoint = "models/sam2_hiera_large.pt"
model_cfg = "sam2_hiera_l.yaml"

sam2 = build_sam2(model_cfg, sam2_checkpoint, device='cuda', apply_postprocessing=False)
mask_generator = SAM2AutomaticMaskGenerator(sam2)

masks = mask_generator.generate(image)
print(len(masks))
print(masks[0].keys())
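Each entry in `masks` is a dictionary. As far as I know the generator follows the SAM 1 layout, with keys such as `segmentation`, `area`, `bbox`, `predicted_iou` and `stability_score`; the `print` above shows the exact set. Assuming that layout, a minimal sketch for keeping only large, confident masks could look like the following. `filter_masks` and its thresholds are hypothetical, and the sample records are stand-ins rather than real model output.

```python
def filter_masks(masks, min_area=100, min_iou=0.8):
    """Keep masks that are large and confident enough, largest first.

    Hypothetical helper; assumes each mask dict has 'area' and 'predicted_iou'.
    """
    kept = [m for m in masks if m["area"] >= min_area and m["predicted_iou"] >= min_iou]
    return sorted(kept, key=lambda m: m["area"], reverse=True)


# Stand-in records with only the fields the filter reads (not real model output).
sample = [
    {"area": 50, "predicted_iou": 0.95},
    {"area": 400, "predicted_iou": 0.90},
    {"area": 900, "predicted_iou": 0.60},
    {"area": 1200, "predicted_iou": 0.85},
]
print([m["area"] for m in filter_masks(sample)])  # [1200, 400]
```

Filtering before coloring can help when the overlay is cluttered by many tiny or low-quality masks.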

# plt.figure(figsize=(20,20))
# plt.imshow(image)
# show_anns(masks)
# plt.axis('off')
# plt.show()

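image_select = image.copy()
for i in range(len(masks)):
    color = tuple(np.random.randint(0, 256, 3).tolist())  # random RGB color for this mask
    selected_mask = masks[i]['segmentation']
    apply_color_mask(image_select, selected_mask, color)  # modifies image_select in place

# image_select is RGB (loaded via PIL), but cv2.imwrite expects BGR
cv2.imwrite("res.jpg", cv2.cvtColor(image_select, cv2.COLOR_RGB2BGR))

Test results: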