Week T7: Coffee Bean Recognition

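- This is an internal article of the 「365天深度学习训练营」 (365-Day Deep Learning Training Camp)

- If you write an article based on this one, please include the 「声明」 (statement) at the beginning of your article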

1. Set up the GPU

import tensorflow as tf

gpus = tf.config.list_physical_devices("GPU")

if gpus:
    tf.config.experimental.set_memory_growth(gpus[0], True)  # Allocate GPU memory on demand
    tf.config.set_visible_devices([gpus[0]], "GPU")

from tensorflow import keras
from tensorflow.keras import layers, models
import numpy as np
import matplotlib.pyplot as plt
import os, PIL, pathlib

data_dir = "E:/T3/kafeidao"
data_dir = pathlib.Path(data_dir)

image_count = len(list(data_dir.glob('*/*.png')))
print("Total number of images:", image_count)

Total number of images: 1200

batch_size = 32
img_height = 224
img_width = 224

# For a detailed introduction to image_dataset_from_directory(), see:
# https://mtyjkh.blog.csdn.net/article/details/117018789
train_ds = tf.keras.preprocessing.image_dataset_from_directory(
    data_dir,
    validation_split=0.2,
    subset="training",
    seed=123,
    image_size=(img_height, img_width),
    batch_size=batch_size)

Found 1200 files belonging to 4 classes.
Using 960 files for training.

# Same directory, split fraction, and seed as above, so the two subsets do not overlap
val_ds = tf.keras.preprocessing.image_dataset_from_directory(
    data_dir,
    validation_split=0.2,
    subset="validation",
    seed=123,
    image_size=(img_height, img_width),
    batch_size=batch_size)

Found 1200 files belonging to 4 classes.
Using 240 files for validation.
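The file counts reported above follow directly from `validation_split` and `batch_size`. Here is a minimal sketch of that arithmetic. The helper `split_counts` is hypothetical (it is not part of Keras), and it assumes the validation count is the total multiplied by the split fraction and truncated to an integer, which agrees with the 960/240 split shown above.

```python
import math

def split_counts(total, validation_split, batch_size):
    """Hypothetical helper: split sizes and number of batches per epoch."""
    n_val = int(total * validation_split)              # files held out for validation
    n_train = total - n_val                            # files left for training
    steps_per_epoch = math.ceil(n_train / batch_size)  # training batches per epoch
    val_steps = math.ceil(n_val / batch_size)          # validation batches per epoch
    return n_train, n_val, steps_per_epoch, val_steps

n_train, n_val, steps_per_epoch, val_steps = split_counts(1200, 0.2, 32)
print(n_train, n_val, steps_per_epoch, val_steps)
```

The 30 training batches per epoch are the `30/30` that appears in the training log further down.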

class_names = train_ds.class_names
print(class_names)

['Dark', 'Green', 'Light', 'Medium']

plt.figure(figsize=(10, 4))  # Figure width 10, height 4

for images, labels in train_ds.take(1):
    for i in range(10):
        ax = plt.subplot(2, 5, i + 1)
        plt.imshow(images[i].numpy().astype("uint8"))
        plt.title(class_names[labels[i]])
        plt.axis("off")

for image_batch, labels_batch in train_ds:
    print(image_batch.shape)
    print(labels_batch.shape)
    break

(32, 224, 224, 3)
(32,)

AUTOTUNE = tf.data.AUTOTUNE

train_ds = train_ds.cache().shuffle(1000).prefetch(buffer_size=AUTOTUNE)
val_ds = val_ds.cache().prefetch(buffer_size=AUTOTUNE)

normalization_layer = layers.experimental.preprocessing.Rescaling(1./255)

train_ds = train_ds.map(lambda x, y: (normalization_layer(x), y))
val_ds = val_ds.map(lambda x, y: (normalization_layer(x), y))

image_batch, labels_batch = next(iter(val_ds))
first_image = image_batch[0]

# Check the value range after normalization
print(np.min(first_image), np.max(first_image))

0.0 1.0
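To make the effect of the rescaling step concrete, here is a small NumPy sketch on a made-up 2x2 RGB array. The array values are an assumption chosen for illustration, and dividing by 255 stands in for the `Rescaling(1./255)` layer, which multiplies by 1/255.

```python
import numpy as np

# A made-up 2x2 RGB "image" with values in the 0..255 range,
# standing in for one image from the dataset.
image = np.array([[[0, 128, 255], [64, 64, 64]],
                  [[255, 255, 255], [0, 0, 0]]], dtype=np.float32)

# Map 0..255 to 0..1, which is what the rescaling layer does to every pixel.
scaled = image / 255.0

print(scaled.min(), scaled.max())
```

Pixel values that span the full 0..255 range end up spanning 0..1, which is the `0.0 1.0` printed above for the first validation image.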

from tensorflow.keras import layers, models, Input
from tensorflow.keras.models import Model
from tensorflow.keras.layers import Conv2D, MaxPooling2D, Dense, Flatten, Dropout

def VGG16(nb_classes, input_shape):
    """Build VGG16 from scratch: 13 conv layers in 5 blocks, then 3 dense layers."""
    input_tensor = Input(shape=input_shape)
    # 1st block
    x = Conv2D(64, (3,3), activation='relu', padding='same', name='block1_conv1')(input_tensor)
    x = Conv2D(64, (3,3), activation='relu', padding='same', name='block1_conv2')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block1_pool')(x)
    # 2nd block
    x = Conv2D(128, (3,3), activation='relu', padding='same', name='block2_conv1')(x)
    x = Conv2D(128, (3,3), activation='relu', padding='same', name='block2_conv2')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block2_pool')(x)
    # 3rd block
    x = Conv2D(256, (3,3), activation='relu', padding='same', name='block3_conv1')(x)
    x = Conv2D(256, (3,3), activation='relu', padding='same', name='block3_conv2')(x)
    x = Conv2D(256, (3,3), activation='relu', padding='same', name='block3_conv3')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block3_pool')(x)
    # 4th block
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block4_conv1')(x)
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block4_conv2')(x)
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block4_conv3')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block4_pool')(x)
    # 5th block
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block5_conv1')(x)
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block5_conv2')(x)
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block5_conv3')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block5_pool')(x)
    # Fully connected layers
    x = Flatten()(x)
    x = Dense(4096, activation='relu', name='fc1')(x)
    x = Dense(4096, activation='relu', name='fc2')(x)
    output_tensor = Dense(nb_classes, activation='softmax', name='predictions')(x)

    model = Model(input_tensor, output_tensor)
    return model

# Keras image tensors are (height, width, channels); both sides are 224 here
model = VGG16(len(class_names), (img_height, img_width, 3))

model.summary()

Model: "model"
_________________________________________________________________
 Layer (type)                Output Shape              Param #
=================================================================
 input_1 (InputLayer)        [(None, 224, 224, 3)]     0
 block1_conv1 (Conv2D)       (None, 224, 224, 64)      1792
 block1_conv2 (Conv2D)       (None, 224, 224, 64)      36928
 block1_pool (MaxPooling2D)  (None, 112, 112, 64)      0
 block2_conv1 (Conv2D)       (None, 112, 112, 128)     73856
 block2_conv2 (Conv2D)       (None, 112, 112, 128)     147584
 block2_pool (MaxPooling2D)  (None, 56, 56, 128)       0
 block3_conv1 (Conv2D)       (None, 56, 56, 256)       295168
 block3_conv2 (Conv2D)       (None, 56, 56, 256)       590080
 block3_conv3 (Conv2D)       (None, 56, 56, 256)       590080
 block3_pool (MaxPooling2D)  (None, 28, 28, 256)       0
 block4_conv1 (Conv2D)       (None, 28, 28, 512)       1180160
 block4_conv2 (Conv2D)       (None, 28, 28, 512)       2359808
 block4_conv3 (Conv2D)       (None, 28, 28, 512)       2359808
 block4_pool (MaxPooling2D)  (None, 14, 14, 512)       0
 block5_conv1 (Conv2D)       (None, 14, 14, 512)       2359808
 block5_conv2 (Conv2D)       (None, 14, 14, 512)       2359808
 block5_conv3 (Conv2D)       (None, 14, 14, 512)       2359808
 block5_pool (MaxPooling2D)  (None, 7, 7, 512)         0
 flatten (Flatten)           (None, 25088)             0
 fc1 (Dense)                 (None, 4096)              102764544
 fc2 (Dense)                 (None, 4096)              16781312
 predictions (Dense)         (None, 4)                 16388
=================================================================
Total params: 134276932 (512.23 MB)
Trainable params: 134276932 (512.23 MB)
Non-trainable params: 0 (0.00 Byte)
_________________________________________________________________
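The parameter counts in the summary can be checked by hand. The sketch below recomputes them in plain Python, assuming each 3x3 convolution has `(3*3*c_in + 1) * c_out` parameters (weights plus one bias per filter) and each dense layer has `(n_in + 1) * n_out`. The helper names `conv_params` and `dense_params` are made up for this illustration.

```python
def conv_params(kernel, c_in, c_out):
    """Weights plus biases of a kernel x kernel convolution."""
    return (kernel * kernel * c_in + 1) * c_out

def dense_params(n_in, n_out):
    """Weights plus biases of a fully connected layer."""
    return (n_in + 1) * n_out

# (input channels, output channels) of the 13 conv layers, in order
conv_channels = [
    (3, 64), (64, 64),
    (64, 128), (128, 128),
    (128, 256), (256, 256), (256, 256),
    (256, 512), (512, 512), (512, 512),
    (512, 512), (512, 512), (512, 512),
]
conv_total = sum(conv_params(3, c_in, c_out) for c_in, c_out in conv_channels)

# Each of the five 2x2 max-pooling layers halves the spatial size: 224 -> 7
side = 224
for _ in range(5):
    side //= 2
flat = side * side * 512  # size of the Flatten output

dense_total = (dense_params(flat, 4096)
               + dense_params(4096, 4096)
               + dense_params(4096, 4))
total = conv_total + dense_total

print(flat, conv_total, dense_total, total)
print(round(total * 4 / 2**20, 2), "MB at 4 bytes per float32 parameter")
```

About 89% of the parameters sit in the fully connected layers, and `fc1` alone accounts for roughly 103 million of them.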

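# Set the initial learning rate
initial_learning_rate = 1e-4

lr_schedule = tf.keras.optimizers.schedules.ExponentialDecay(
    initial_learning_rate,
    decay_steps=30,    # Note: this counts steps (batches), not epochs
    decay_rate=0.92,   # Each decay multiplies the learning rate by decay_rate
    staircase=True)

# Set up the optimizer
# Note: the optimizer is given the constant initial_learning_rate, so lr_schedule
# is defined but not used in this run; pass learning_rate=lr_schedule to enable the decay.
opt = tf.keras.optimizers.Adam(learning_rate=initial_learning_rate)

model.compile(optimizer=opt,
              loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=False),
              metrics=['accuracy'])

epochs = 20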
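To see what the `ExponentialDecay` settings above would do, here is a plain-Python sketch of the documented formula `initial_lr * decay_rate ** (step / decay_steps)`, where `staircase=True` floors the exponent to an integer. The function `exponential_decay` is a stand-in written for this illustration, not the TensorFlow class. Keep in mind that in the code above the optimizer receives `initial_learning_rate`, so the schedule only takes effect if `lr_schedule` is passed as `learning_rate`.

```python
import math

def exponential_decay(step, initial_lr=1e-4, decay_steps=30,
                      decay_rate=0.92, staircase=True):
    """Stand-in for the schedule: learning rate at a given optimizer step."""
    exponent = step / decay_steps
    if staircase:
        exponent = math.floor(exponent)  # decay in discrete jumps
    return initial_lr * decay_rate ** exponent

steps_per_epoch = 30  # 960 training images / batch size 32
for epoch in (0, 1, 10, 19):
    lr = exponential_decay(epoch * steps_per_epoch)
    print(f"start of epoch {epoch + 1}: lr = {lr:.3e}")
```

Because `decay_steps` equals the 30 batches in one epoch, the learning rate would drop by 8% once per epoch under these settings.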

history = model.fit(

train_ds,

validation_data=val_ds,

epochs=epochs

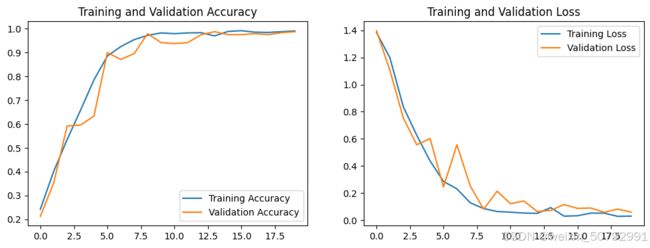

)poch 1/20 WARNING:tensorflow:From D:\dl\envs\pytorch_gpu\lib\site-packages\keras\src\utils\tf_utils.py:492: The name tf.ragged.RaggedTensorValue is deprecated. Please use tf.compat.v1.ragged.RaggedTensorValue instead. WARNING:tensorflow:From D:\dl\envs\pytorch_gpu\lib\site-packages\keras\src\engine\base_layer_utils.py:384: The name tf.executing_eagerly_outside_functions is deprecated. Please use tf.compat.v1.executing_eagerly_outside_functions instead. 30/30 [==============================] - 114s 4s/step - loss: 1.3847 - accuracy: 0.2427 - val_loss: 1.3976 - val_accuracy: 0.2125 Epoch 2/20 30/30 [==============================] - 124s 4s/step - loss: 1.1982 - accuracy: 0.4021 - val_loss: 1.1049 - val_accuracy: 0.3542 Epoch 3/20 30/30 [==============================] - 123s 4s/step - loss: 0.8367 - accuracy: 0.5354 - val_loss: 0.7545 - val_accuracy: 0.5917 Epoch 4/20 30/30 [==============================] - 121s 4s/step - loss: 0.6268 - accuracy: 0.6583 - val_loss: 0.5559 - val_accuracy: 0.5958 Epoch 5/20 30/30 [==============================] - 121s 4s/step - loss: 0.4366 - accuracy: 0.7854 - val_loss: 0.6017 - val_accuracy: 0.6333 Epoch 6/20 30/30 [==============================] - 122s 4s/step - loss: 0.2857 - accuracy: 0.8854 - val_loss: 0.2449 - val_accuracy: 0.9000 Epoch 7/20 30/30 [==============================] - 123s 4s/step - loss: 0.2309 - accuracy: 0.9250 - val_loss: 0.5561 - val_accuracy: 0.8708 Epoch 8/20 30/30 [==============================] - 126s 4s/step - loss: 0.1277 - accuracy: 0.9542 - val_loss: 0.2514 - val_accuracy: 0.8958 Epoch 9/20 30/30 [==============================] - 122s 4s/step - loss: 0.0856 - accuracy: 0.9719 - val_loss: 0.0807 - val_accuracy: 0.9792 Epoch 10/20 30/30 [==============================] - 121s 4s/step - loss: 0.0637 - accuracy: 0.9823 - val_loss: 0.2138 - val_accuracy: 0.9417 Epoch 11/20 30/30 [==============================] - 122s 4s/step - loss: 0.0589 - accuracy: 0.9792 - val_loss: 0.1214 - val_accuracy: 0.9375 
Epoch 12/20 30/30 [==============================] - 121s 4s/step - loss: 0.0522 - accuracy: 0.9823 - val_loss: 0.1414 - val_accuracy: 0.9417 Epoch 13/20 30/30 [==============================] - 122s 4s/step - loss: 0.0503 - accuracy: 0.9833 - val_loss: 0.0634 - val_accuracy: 0.9750 Epoch 14/20 30/30 [==============================] - 121s 4s/step - loss: 0.0924 - accuracy: 0.9698 - val_loss: 0.0720 - val_accuracy: 0.9875 Epoch 15/20 30/30 [==============================] - 122s 4s/step - loss: 0.0294 - accuracy: 0.9885 - val_loss: 0.1154 - val_accuracy: 0.9750 Epoch 16/20 30/30 [==============================] - 123s 4s/step - loss: 0.0326 - accuracy: 0.9917 - val_loss: 0.0866 - val_accuracy: 0.9750 Epoch 17/20 30/30 [==============================] - 122s 4s/step - loss: 0.0520 - accuracy: 0.9854 - val_loss: 0.0899 - val_accuracy: 0.9792 Epoch 18/20 30/30 [==============================] - 124s 4s/step - loss: 0.0517 - accuracy: 0.9844 - val_loss: 0.0580 - val_accuracy: 0.9750 Epoch 19/20 30/30 [==============================] - 122s 4s/step - loss: 0.0280 - accuracy: 0.9875 - val_loss: 0.0817 - val_accuracy: 0.9833 Epoch 20/20 30/30 [==============================] - 122s 4s/step - loss: 0.0299 - accuracy: 0.9906 - val_loss: 0.0590 - val_accuracy: 0.9875
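The first-epoch loss of about 1.38 has a simple interpretation. With four classes, a model that assigns probability 0.25 to every class has a cross-entropy of ln 4, so an untrained network is expected to start near that value. The sketch below shows this in plain Python. The function is a simplified single-sample version written for this illustration, not the Keras loss class, and the "confident" probabilities are made-up numbers.

```python
import math

def sparse_categorical_crossentropy(probs, label):
    """Single-sample loss: negative log of the probability given to the true class."""
    return -math.log(probs[label])

# A model that has learned nothing spreads probability evenly over the 4 classes.
uniform = [0.25, 0.25, 0.25, 0.25]
chance_loss = sparse_categorical_crossentropy(uniform, label=2)

# Made-up confident prediction for the same sample, for comparison.
confident = [0.01, 0.01, 0.97, 0.01]
confident_loss = sparse_categorical_crossentropy(confident, label=2)

print(round(chance_loss, 4), round(confident_loss, 4))
```

The chance-level value of about 1.386 is close to the 1.3847 logged for epoch 1, and the losses around 0.03 in the last epochs correspond to the network putting roughly 97% probability on the correct class on average.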

acc = history.history['accuracy']

val_acc = history.history['val_accuracy']

loss = history.history['loss']

val_loss = history.history['val_loss']

epochs_range = range(epochs)

plt.figure(figsize=(12, 4))

plt.subplot(1, 2, 1)

plt.plot(epochs_range, acc, label='Training Accuracy')

plt.plot(epochs_range, val_acc, label='Validation Accuracy')

plt.legend(loc='lower right')

plt.title('Training and Validation Accuracy')

plt.subplot(1, 2, 2)

plt.plot(epochs_range, loss, label='Training Loss')

plt.plot(epochs_range, val_loss, label='Validation Loss')

plt.legend(loc='upper right')

plt.title('Training and Validation Loss')

plt.show()
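Besides plotting the curves, it can help to read the best epochs straight from the numbers. The sketch below does this with the validation values copied from the training log above. In a live session the same lists would come from `history.history['val_accuracy']` and `history.history['val_loss']`; hard-coding them here is only to keep the example self-contained.

```python
# Validation metrics copied from the 20-epoch training log above
val_accuracy = [0.2125, 0.3542, 0.5917, 0.5958, 0.6333, 0.9000, 0.8708,
                0.8958, 0.9792, 0.9417, 0.9375, 0.9417, 0.9750, 0.9875,
                0.9750, 0.9750, 0.9792, 0.9750, 0.9833, 0.9875]
val_loss = [1.3976, 1.1049, 0.7545, 0.5559, 0.6017, 0.2449, 0.5561,
            0.2514, 0.0807, 0.2138, 0.1214, 0.1414, 0.0634, 0.0720,
            0.1154, 0.0866, 0.0899, 0.0580, 0.0817, 0.0590]

# Epochs are 1-based in the log; max/min return the first occurrence on ties.
best_acc_epoch = max(range(len(val_accuracy)), key=val_accuracy.__getitem__) + 1
best_loss_epoch = min(range(len(val_loss)), key=val_loss.__getitem__) + 1

print(f"best val_accuracy {max(val_accuracy):.4f} first reached at epoch {best_acc_epoch}")
print(f"lowest val_loss {min(val_loss):.4f} at epoch {best_loss_epoch}")
```

Validation accuracy first peaks at epoch 14 and is matched again at epoch 20, while validation loss is lowest at epoch 18, so the curves have largely flattened in the last third of training.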