- 机器学习与深度学习间关系与区别

ℒℴѵℯ心·动ꦿ໊ོ꫞

人工智能学习深度学习python

一、机器学习概述定义机器学习(MachineLearning,ML)是一种通过数据驱动的方法,利用统计学和计算算法来训练模型,使计算机能够从数据中学习并自动进行预测或决策。机器学习通过分析大量数据样本,识别其中的模式和规律,从而对新的数据进行判断。其核心在于通过训练过程,让模型不断优化和提升其预测准确性。主要类型1.监督学习(SupervisedLearning)监督学习是指在训练数据集中包含输入

- Long类型前后端数据不一致

igotyback

前端

响应给前端的数据浏览器控制台中response中看到的Long类型的数据是正常的到前端数据不一致前后端数据类型不匹配是一个常见问题,尤其是当后端使用Java的Long类型(64位)与前端JavaScript的Number类型(最大安全整数为2^53-1,即16位)进行数据交互时,很容易出现精度丢失的问题。这是因为JavaScript中的Number类型无法安全地表示超过16位的整数。为了解决这个问

- LocalDateTime 转 String

igotyback

java开发语言

importjava.time.LocalDateTime;importjava.time.format.DateTimeFormatter;publicclassMain{publicstaticvoidmain(String[]args){//获取当前时间LocalDateTimenow=LocalDateTime.now();//定义日期格式化器DateTimeFormatterformat

- 消息中间件有哪些常见类型

xmh-sxh-1314

java

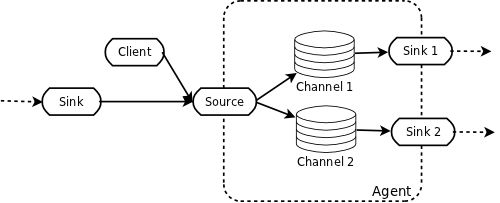

消息中间件根据其设计理念和用途,可以大致分为以下几种常见类型:点对点消息队列(Point-to-PointMessagingQueues):在这种模型中,消息被发送到特定的队列中,消费者从队列中取出并处理消息。队列中的消息只能被一个消费者消费,消费后即被删除。常见的实现包括IBM的MQSeries、RabbitMQ的部分使用场景等。适用于任务分发、负载均衡等场景。发布/订阅消息模型(Pub/Sub

- 每日一题——第九十题

互联网打工人no1

C语言程序设计每日一练c语言

题目:判断子串是否与主串匹配#include#include#include//////判断子串是否在主串中匹配//////主串///子串///boolisSubstring(constchar*str,constchar*substr){intlenstr=strlen(str);//计算主串的长度intlenSub=strlen(substr);//计算子串的长度//遍历主字符串,对每个可能得

- 每日一题——第八十四题

互联网打工人no1

C语言程序设计每日一练c语言

题目:编写函数1、输入10个职工的姓名和职工号2、按照职工由大到小顺序排列,姓名顺序也随之调整3、要求输入一个职工号,用折半查找法找出该职工的姓名#define_CRT_SECURE_NO_WARNINGS#include#include#defineMAX_EMPLOYEES10typedefstruct{intid;charname[50];}Empolyee;voidinputEmploye

- C#中使用split分割字符串

互联网打工人no1

c#

1、用字符串分隔:usingSystem.Text.RegularExpressions;stringstr="aaajsbbbjsccc";string[]sArray=Regex.Split(str,"js",RegexOptions.IgnoreCase);foreach(stringiinsArray)Response.Write(i.ToString()+"");输出结果:aaabbbc

- python os.environ

江湖偌大

python深度学习

os.environ['TF_CPP_MIN_LOG_LEVEL']='0'#默认值,输出所有信息os.environ['TF_CPP_MIN_LOG_LEVEL']='1'#屏蔽通知信息(INFO)os.environ['TF_CPP_MIN_LOG_LEVEL']='2'#屏蔽通知信息和警告信息(INFO\WARNING)os.environ['TF_CPP_MIN_LOG_LEVEL']='

- python os.environ_python os.environ 读取和设置环境变量

weixin_39605414

pythonos.environ

>>>importos>>>os.environ.keys()['LC_NUMERIC','GOPATH','GOROOT','GOBIN','LESSOPEN','SSH_CLIENT','LOGNAME','USER','HOME','LC_PAPER','PATH','DISPLAY','LANG','TERM','SHELL','J2REDIR','LC_MONETARY','QT_QPA

- LLM 词汇表

落难Coder

LLMsNLP大语言模型大模型llama人工智能

Contextwindow“上下文窗口”是指语言模型在生成新文本时能够回溯和参考的文本量。这不同于语言模型训练时所使用的大量数据集,而是代表了模型的“工作记忆”。较大的上下文窗口可以让模型理解和响应更复杂和更长的提示,而较小的上下文窗口可能会限制模型处理较长提示或在长时间对话中保持连贯性的能力。Fine-tuning微调是使用额外的数据进一步训练预训练语言模型的过程。这使得模型开始表示和模仿微调数

- 将cmd中命令输出保存为txt文本文件

落难Coder

Windowscmdwindow

最近深度学习本地的训练中我们常常要在命令行中运行自己的代码,无可厚非,我们有必要保存我们的炼丹结果,但是复制命令行输出到txt是非常麻烦的,其实Windows下的命令行为我们提供了相应的操作。其基本的调用格式就是:运行指令>输出到的文件名称或者具体保存路径测试下,我打开cmd并且ping一下百度:pingwww.baidu.com>./data.txt看下相同目录下data.txt的输出:如果你再

- Git常用命令-修改远程仓库地址

猿大师

LinuxJavagitjava

查看远程仓库地址gitremote-v返回结果originhttps://git.coding.net/*****.git(fetch)originhttps://git.coding.net/*****.git(push)修改远程仓库地址gitremoteset-urloriginhttps://git.coding.net/*****.git先删除后增加远程仓库地址gitremotermori

- 探索OpenAI和LangChain的适配器集成:轻松切换模型提供商

nseejrukjhad

langchaineasyui前端python

#探索OpenAI和LangChain的适配器集成:轻松切换模型提供商##引言在人工智能和自然语言处理的世界中,OpenAI的模型提供了强大的能力。然而,随着技术的发展,许多人开始探索其他模型以满足特定需求。LangChain作为一个强大的工具,集成了多种模型提供商,通过提供适配器,简化了不同模型之间的转换。本篇文章将介绍如何使用LangChain的适配器与OpenAI集成,以便轻松切换模型提供商

- python是什么意思中文-在python中%是什么意思

编程大乐趣

Python中%有两种:1、数值运算:%代表取模,返回除法的余数。如:>>>7%212、%操作符(字符串格式化,stringformatting),说明如下:%[(name)][flags][width].[precision]typecode(name)为命名flags可以有+,-,''或0。+表示右对齐。-表示左对齐。''为一个空格,表示在正数的左侧填充一个空格,从而与负数对齐。0表示使用0填

- 利用LangChain的StackExchange组件实现智能问答系统

nseejrukjhad

langchainmicrosoft数据库python

利用LangChain的StackExchange组件实现智能问答系统引言在当今的软件开发世界中,StackOverflow已经成为程序员解决问题的首选平台之一。而LangChain作为一个强大的AI应用开发框架,提供了StackExchange组件,使我们能够轻松地将StackOverflow的海量知识库集成到我们的应用中。本文将详细介绍如何使用LangChain的StackExchange组件

- 如何部分格式化提示模板:LangChain中的高级技巧

nseejrukjhad

langchainjava服务器python

标题:如何部分格式化提示模板:LangChain中的高级技巧内容:如何部分格式化提示模板:LangChain中的高级技巧引言在使用大型语言模型(LLM)时,提示工程是一个关键环节。LangChain提供了强大的提示模板功能,让我们能更灵活地构建和管理提示。本文将介绍LangChain中一个高级特性-部分格式化提示模板,这个技巧可以让你的提示管理更加高效和灵活。什么是部分格式化提示模板?部分格式化提

- python八股文面试题分享及解析(1)

Shawn________

python

#1.'''a=1b=2不用中间变量交换a和b'''#1.a=1b=2a,b=b,aprint(a)print(b)结果:21#2.ll=[]foriinrange(3):ll.append({'num':i})print(11)结果:#[{'num':0},{'num':1},{'num':2}]#3.kk=[]a={'num':0}foriinrange(3):#0,12#可变类型,不仅仅改变

- MongoDB Oplog 窗口

喝醉酒的小白

MongoDB运维

在MongoDB中,oplog(操作日志)是一个特殊的日志系统,用于记录对数据库的所有写操作。oplog允许副本集成员(通常是从节点)应用主节点上已经执行的操作,从而保持数据的一致性。它是MongoDB副本集实现数据复制的基础。MongoDBOplog窗口oplog窗口是指在MongoDB副本集中,从节点可以用来同步数据的时间范围。这个窗口通常由以下因素决定:Oplog大小:oplog的大小是有限

- node.js学习

小猿L

node.jsnode.js学习vim

node.js学习实操及笔记温故node.js,node.js学习实操过程及笔记~node.js学习视频node.js官网node.js中文网实操笔记githubcsdn笔记为什么学node.js可以让别人访问我们编写的网页为后续的框架学习打下基础,三大框架vuereactangular离不开node.jsnode.js是什么官网:node.js是一个开源的、跨平台的运行JavaScript的运行

- 番茄西红柿叶子病害分类数据集12882张11类别

futureflsl

数据集分类数据挖掘人工智能

数据集类型:图像分类用,不可用于目标检测无标注文件数据集格式:仅仅包含jpg图片,每个类别文件夹下面存放着对应图片图片数量(jpg文件个数):12882分类类别数:11类别名称:["Bacterial_Spot_Bacteria","Early_Blight_Fungus","Healthy","Late_Blight_Water_Mold","Leaf_Mold_Fungus","Powdery

- 回溯算法-重新安排行程

chirou_

算法数据结构图论c++图搜索

leetcode332.重新安排行程这题我还没自己ac过,只能现在凭着刚学完的热乎劲把我对题解的理解记下来。本题我认为对数据结构的考察比较多,用什么数据结构去存数据,去读取数据,都是很重要的。classSolution{private:unordered_map>targets;boolbacktracking(intticketNum,vector&result){//1.确定参数和返回值//2

- 【华为OD技术面试真题 - 技术面】- python八股文真题题库(4)

算法大师

华为od面试python

华为OD面试真题精选专栏:华为OD面试真题精选目录:2024华为OD面试手撕代码真题目录以及八股文真题目录文章目录华为OD面试真题精选**1.Python中的`with`**用途和功能自动资源管理示例:文件操作上下文管理协议示例代码工作流程解析优点2.\_\_new\_\_和**\_\_init\_\_**区别__new____init__区别总结3.**切片(Slicing)操作**基本切片语法

- python os 环境变量

CV矿工

python开发语言numpy

环境变量:环境变量是程序和操作系统之间的通信方式。有些字符不宜明文写进代码里,比如数据库密码,个人账户密码,如果写进自己本机的环境变量里,程序用的时候通过os.environ.get()取出来就行了。os.environ是一个环境变量的字典。环境变量的相关操作importos"""设置/修改环境变量:os.environ[‘环境变量名称’]=‘环境变量值’#其中key和value均为string类

- Redis系列:Geo 类型赋能亿级地图位置计算

Ly768768

redisbootstrap数据库

1前言我们在篇深刻理解高性能Redis的本质的时候就介绍过Redis的几种基本数据结构,它是基于不同业务场景而设计的:动态字符串(REDIS_STRING):整数(REDIS_ENCODING_INT)、字符串(REDIS_ENCODING_RAW)双端列表(REDIS_ENCODING_LINKEDLIST)压缩列表(REDIS_ENCODING_ZIPLIST)跳跃表(REDIS_ENCODI

- ARM驱动学习之4小结

JT灬新一

嵌入式C++arm开发学习linux

ARM驱动学习之4小结#include#include#include#include#include#defineDEVICE_NAME"hello_ctl123"MODULE_LICENSE("DualBSD/GPL");MODULE_AUTHOR("TOPEET");staticlonghello_ioctl(structfile*file,unsignedintcmd,unsignedlo

- C++ | Leetcode C++题解之第409题最长回文串

Ddddddd_158

经验分享C++Leetcode题解

题目:题解:classSolution{public:intlongestPalindrome(strings){unordered_mapcount;intans=0;for(charc:s)++count[c];for(autop:count){intv=p.second;ans+=v/2*2;if(v%2==1andans%2==0)++ans;}returnans;}};

- 2019-3-23晨间日记

红红火火小耳朵

今天是什么日子起床:7点40就寝:23点半天气:有太阳,不过一会儿出来一会儿进去特别清爽的凉意,还蛮舒服的心情:小激动要给女朋友过生日啦纪念日:田田女士过生日任务清单昨日完成的任务,最重要的三件事:1.英语一对一2.运动计划3.认真护肤习惯养成:调整状态周目标·完成进度英语七天打卡(5/7)轻课阅读(87/180)音标课(25/30)读书(福尔摩斯一章)学习·信息·阅读#英语课#Cookingte

- mac电脑命令行获取电量

小米人er

我的博客macos命令行

在macOS上,有几个命令行工具可以用来获取电量信息,最常用的是pmset命令。你可以通过以下方式来查看电池状态和电量信息:查看电池状态:pmset-gbatt这个命令会返回类似下面的输出:Nowdrawingfrom'BatteryPower'-InternalBattery-0(id=1234567)95%;discharging;4:02remainingpresent:true输出中包括电

- nosql数据库技术与应用知识点

皆过客,揽星河

NoSQLnosql数据库大数据数据分析数据结构非关系型数据库

Nosql知识回顾大数据处理流程数据采集(flume、爬虫、传感器)数据存储(本门课程NoSQL所处的阶段)Hdfs、MongoDB、HBase等数据清洗(入仓)Hive等数据处理、分析(Spark、Flink等)数据可视化数据挖掘、机器学习应用(Python、SparkMLlib等)大数据时代存储的挑战(三高)高并发(同一时间很多人访问)高扩展(要求随时根据需求扩展存储)高效率(要求读写速度快)

- SpringBlade dict-biz/list 接口 SQL 注入漏洞

文章永久免费只为良心

oracle数据库

SpringBladedict-biz/list接口SQL注入漏洞POC:构造请求包查看返回包你的网址/api/blade-system/dict-biz/list?updatexml(1,concat(0x7e,md5(1),0x7e),1)=1漏洞概述在SpringBlade框架中,如果dict-biz/list接口的后台处理逻辑没有正确地对用户输入进行过滤或参数化查询(PreparedSta

- Java常用排序算法/程序员必须掌握的8大排序算法

cugfy

java

分类:

1)插入排序(直接插入排序、希尔排序)

2)交换排序(冒泡排序、快速排序)

3)选择排序(直接选择排序、堆排序)

4)归并排序

5)分配排序(基数排序)

所需辅助空间最多:归并排序

所需辅助空间最少:堆排序

平均速度最快:快速排序

不稳定:快速排序,希尔排序,堆排序。

先来看看8种排序之间的关系:

1.直接插入排序

(1

- 【Spark102】Spark存储模块BlockManager剖析

bit1129

manager

Spark围绕着BlockManager构建了存储模块,包括RDD,Shuffle,Broadcast的存储都使用了BlockManager。而BlockManager在实现上是一个针对每个应用的Master/Executor结构,即Driver上BlockManager充当了Master角色,而各个Slave上(具体到应用范围,就是Executor)的BlockManager充当了Slave角色

- linux 查看端口被占用情况详解

daizj

linux端口占用netstatlsof

经常在启动一个程序会碰到端口被占用,这里讲一下怎么查看端口是否被占用,及哪个程序占用,怎么Kill掉已占用端口的程序

1、lsof -i:port

port为端口号

[root@slave /data/spark-1.4.0-bin-cdh4]# lsof -i:8080

COMMAND PID USER FD TY

- Hosts文件使用

周凡杨

hostslocahost

一切都要从localhost说起,经常在tomcat容器起动后,访问页面时输入http://localhost:8088/index.jsp,大家都知道localhost代表本机地址,如果本机IP是10.10.134.21,那就相当于http://10.10.134.21:8088/index.jsp,有时候也会看到http: 127.0.0.1:

- java excel工具

g21121

Java excel

直接上代码,一看就懂,利用的是jxl:

import java.io.File;

import java.io.IOException;

import jxl.Cell;

import jxl.Sheet;

import jxl.Workbook;

import jxl.read.biff.BiffException;

import jxl.write.Label;

import

- web报表工具finereport常用函数的用法总结(数组函数)

老A不折腾

finereportweb报表函数总结

ADD2ARRAY

ADDARRAY(array,insertArray, start):在数组第start个位置插入insertArray中的所有元素,再返回该数组。

示例:

ADDARRAY([3,4, 1, 5, 7], [23, 43, 22], 3)返回[3, 4, 23, 43, 22, 1, 5, 7].

ADDARRAY([3,4, 1, 5, 7], "测试&q

- 游戏服务器网络带宽负载计算

墙头上一根草

服务器

家庭所安装的4M,8M宽带。其中M是指,Mbits/S

其中要提前说明的是:

8bits = 1Byte

即8位等于1字节。我们硬盘大小50G。意思是50*1024M字节,约为 50000多字节。但是网宽是以“位”为单位的,所以,8Mbits就是1M字节。是容积体积的单位。

8Mbits/s后面的S是秒。8Mbits/s意思是 每秒8M位,即每秒1M字节。

我是在计算我们网络流量时想到的

- 我的spring学习笔记2-IoC(反向控制 依赖注入)

aijuans

Spring 3 系列

IoC(反向控制 依赖注入)这是Spring提出来了,这也是Spring一大特色。这里我不用多说,我们看Spring教程就可以了解。当然我们不用Spring也可以用IoC,下面我将介绍不用Spring的IoC。

IoC不是框架,她是java的技术,如今大多数轻量级的容器都会用到IoC技术。这里我就用一个例子来说明:

如:程序中有 Mysql.calss 、Oracle.class 、SqlSe

- 高性能mysql 之 选择存储引擎(一)

annan211

mysqlInnoDBMySQL引擎存储引擎

1 没有特殊情况,应尽可能使用InnoDB存储引擎。 原因:InnoDB 和 MYIsAM 是mysql 最常用、使用最普遍的存储引擎。其中InnoDB是最重要、最广泛的存储引擎。她 被设计用来处理大量的短期事务。短期事务大部分情况下是正常提交的,很少有回滚的情况。InnoDB的性能和自动崩溃 恢复特性使得她在非事务型存储的需求中也非常流行,除非有非常

- UDP网络编程

百合不是茶

UDP编程局域网组播

UDP是基于无连接的,不可靠的传输 与TCP/IP相反

UDP实现私聊,发送方式客户端,接受方式服务器

package netUDP_sc;

import java.net.DatagramPacket;

import java.net.DatagramSocket;

import java.net.Ine

- JQuery对象的val()方法执行结果分析

bijian1013

JavaScriptjsjquery

JavaScript中,如果id对应的标签不存在(同理JAVA中,如果对象不存在),则调用它的方法会报错或抛异常。在实际开发中,发现JQuery在id对应的标签不存在时,调其val()方法不会报错,结果是undefined。

- http请求测试实例(采用json-lib解析)

bijian1013

jsonhttp

由于fastjson只支持JDK1.5版本,因些对于JDK1.4的项目,可以采用json-lib来解析JSON数据。如下是http请求的另外一种写法,仅供参考。

package com;

import java.util.HashMap;

import java.util.Map;

import

- 【RPC框架Hessian四】Hessian与Spring集成

bit1129

hessian

在【RPC框架Hessian二】Hessian 对象序列化和反序列化一文中介绍了基于Hessian的RPC服务的实现步骤,在那里使用Hessian提供的API完成基于Hessian的RPC服务开发和客户端调用,本文使用Spring对Hessian的集成来实现Hessian的RPC调用。

定义模型、接口和服务器端代码

|---Model

&nb

- 【Mahout三】基于Mahout CBayes算法的20newsgroup流程分析

bit1129

Mahout

1.Mahout环境搭建

1.下载Mahout

http://mirror.bit.edu.cn/apache/mahout/0.10.0/mahout-distribution-0.10.0.tar.gz

2.解压Mahout

3. 配置环境变量

vim /etc/profile

export HADOOP_HOME=/home

- nginx负载tomcat遇非80时的转发问题

ronin47

nginx负载后端容器是tomcat(其它容器如WAS,JBOSS暂没发现这个问题)非80端口,遇到跳转异常问题。解决的思路是:$host:port

详细如下:

该问题是最先发现的,由于之前对nginx不是特别的熟悉所以该问题是个入门级别的:

? 1 2 3 4 5

- java-17-在一个字符串中找到第一个只出现一次的字符

bylijinnan

java

public class FirstShowOnlyOnceElement {

/**Q17.在一个字符串中找到第一个只出现一次的字符。如输入abaccdeff,则输出b

* 1.int[] count:count[i]表示i对应字符出现的次数

* 2.将26个英文字母映射:a-z <--> 0-25

* 3.假设全部字母都是小写

*/

pu

- mongoDB 复制集

开窍的石头

mongodb

mongo的复制集就像mysql的主从数据库,当你往其中的主复制集(primary)写数据的时候,副复制集(secondary)会自动同步主复制集(Primary)的数据,当主复制集挂掉以后其中的一个副复制集会自动成为主复制集。提供服务器的可用性。和防止当机问题

mo

- [宇宙与天文]宇宙时代的经济学

comsci

经济

宇宙尺度的交通工具一般都体型巨大,造价高昂。。。。。

在宇宙中进行航行,近程采用反作用力类型的发动机,需要消耗少量矿石燃料,中远程航行要采用量子或者聚变反应堆发动机,进行超空间跳跃,要消耗大量高纯度水晶体能源

以目前地球上国家的经济发展水平来讲,

- Git忽略文件

Cwind

git

有很多文件不必使用git管理。例如Eclipse或其他IDE生成的项目文件,编译生成的各种目标或临时文件等。使用git status时,会在Untracked files里面看到这些文件列表,在一次需要添加的文件比较多时(使用git add . / git add -u),会把这些所有的未跟踪文件添加进索引。

==== ==== ==== 一些牢骚

- MySQL连接数据库的必须配置

dashuaifu

mysql连接数据库配置

MySQL连接数据库的必须配置

1.driverClass:com.mysql.jdbc.Driver

2.jdbcUrl:jdbc:mysql://localhost:3306/dbname

3.user:username

4.password:password

其中1是驱动名;2是url,这里的‘dbna

- 一生要养成的60个习惯

dcj3sjt126com

习惯

一生要养成的60个习惯

第1篇 让你更受大家欢迎的习惯

1 守时,不准时赴约,让别人等,会失去很多机会。

如何做到:

①该起床时就起床,

②养成任何事情都提前15分钟的习惯。

③带本可以随时阅读的书,如果早了就拿出来读读。

④有条理,生活没条理最容易耽误时间。

⑤提前计划:将重要和不重要的事情岔开。

⑥今天就准备好明天要穿的衣服。

⑦按时睡觉,这会让按时起床更容易。

2 注重

- [介绍]Yii 是什么

dcj3sjt126com

PHPyii2

Yii 是一个高性能,基于组件的 PHP 框架,用于快速开发现代 Web 应用程序。名字 Yii (读作 易)在中文里有“极致简单与不断演变”两重含义,也可看作 Yes It Is! 的缩写。

Yii 最适合做什么?

Yii 是一个通用的 Web 编程框架,即可以用于开发各种用 PHP 构建的 Web 应用。因为基于组件的框架结构和设计精巧的缓存支持,它特别适合开发大型应

- Linux SSH常用总结

eksliang

linux sshSSHD

转载请出自出处:http://eksliang.iteye.com/blog/2186931 一、连接到远程主机

格式:

ssh name@remoteserver

例如:

ssh

[email protected]

二、连接到远程主机指定的端口

格式:

ssh name@remoteserver -p 22

例如:

ssh i

- 快速上传头像到服务端工具类FaceUtil

gundumw100

android

快速迭代用

import java.io.DataOutputStream;

import java.io.File;

import java.io.FileInputStream;

import java.io.FileNotFoundException;

import java.io.FileOutputStream;

import java.io.IOExceptio

- jQuery入门之怎么使用

ini

JavaScripthtmljqueryWebcss

jQuery的强大我何问起(个人主页:hovertree.com)就不用多说了,那么怎么使用jQuery呢?

首先,下载jquery。下载地址:http://hovertree.com/hvtart/bjae/b8627323101a4994.htm,一个是压缩版本,一个是未压缩版本,如果在开发测试阶段,可以使用未压缩版本,实际应用一般使用压缩版本(min)。然后就在页面上引用。

- 带filter的hbase查询优化

kane_xie

查询优化hbaseRandomRowFilter

问题描述

hbase scan数据缓慢,server端出现LeaseException。hbase写入缓慢。

问题原因

直接原因是: hbase client端每次和regionserver交互的时候,都会在服务器端生成一个Lease,Lease的有效期由参数hbase.regionserver.lease.period确定。如果hbase scan需

- java设计模式-单例模式

men4661273

java单例枚举反射IOC

单例模式1,饿汉模式

//饿汉式单例类.在类初始化时,已经自行实例化

public class Singleton1 {

//私有的默认构造函数

private Singleton1() {}

//已经自行实例化

private static final Singleton1 singl

- mongodb 查询某一天所有信息的3种方法,根据日期查询

qiaolevip

每天进步一点点学习永无止境mongodb纵观千象

// mongodb的查询真让人难以琢磨,就查询单天信息,都需要花费一番功夫才行。

// 第一种方式:

coll.aggregate([

{$project:{sendDate: {$substr: ['$sendTime', 0, 10]}, sendTime: 1, content:1}},

{$match:{sendDate: '2015-

- 二维数组转换成JSON

tangqi609567707

java二维数组json

原文出处:http://blog.csdn.net/springsen/article/details/7833596

public class Demo {

public static void main(String[] args) { String[][] blogL

- erlang supervisor

wudixiaotie

erlang

定义supervisor时,如果是监控celuesimple_one_for_one则删除children的时候就用supervisor:terminate_child (SupModuleName, ChildPid),如果shutdown策略选择的是brutal_kill,那么supervisor会调用exit(ChildPid, kill),这样的话如果Child的behavior是gen_