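Linux High-Availability (HA) Clusters: Pacemaker in Detail

Outline

Note: I was not going to write this post, since several earlier posts already covered Pacemaker. I decided to write it anyway because some readers will probably need it, especially since Pacemaker changed in ways worth noting from 1.1.8 (CentOS 6.4) onward. Finally, a salute to open source and the open source spirit. (Pacemaker official site: http://clusterlabs.org/)

I. What is Pacemaker

II. Pacemaker features

III. Pacemaker package providers

IV. Pacemaker version information

V. Pacemaker configuration examples

VI. Cluster architectures supported by Pacemaker

VII. Pacemaker internals

VIII. Pacemaker source code composition

IX. CentOS 6.4 + Corosync + Pacemaker: a highly available Web cluster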

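I. What is Pacemaker

1. Pacemaker in brief

Pacemaker is a cluster resource manager. It achieves maximum availability for cluster services (also called resources) by detecting and recovering from node-level and resource-level failures, using the messaging and membership capabilities provided by your preferred cluster infrastructure (OpenAIS or Heartbeat).

It can manage clusters of practically any size and comes with a powerful dependency model that lets the administrator express the relationships between cluster resources (including ordering and location) precisely. Practically anything that can be scripted can be managed as part of a Pacemaker cluster.

To repeat: Pacemaker is a resource manager and does not provide heartbeat messaging. This is a common misunderstanding, so it is worth stating. Pacemaker is a continuation of the CRM (the Heartbeat v2 resource manager); it was originally written for Heartbeat but has become an independent project.

2. Where Pacemaker comes from

As is well known, Heartbeat was split into several projects as of version 3, and Pacemaker is the resource manager that was split out.

The components after the Heartbeat 3.0 split:

Heartbeat: the original messaging layer, now a project of its own. The new Heartbeat only maintains information about the cluster nodes and the communication between them;

Cluster Glue: an intermediate layer that ties Heartbeat and Pacemaker together. It consists mainly of two parts, the LRM and STONITH;

Resource Agent: a collection of scripts that start, stop and monitor services. The LRM calls these scripts to start, stop and monitor the various resources;

Pacemaker: the Cluster Resource Manager (CRM), the control center of the whole HA setup. Clients use Pacemaker to configure, manage and monitor the entire cluster.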

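II. Pacemaker features

Detection of and recovery from host-level and application-level failures

Support for practically any redundancy configuration

Support for several cluster configuration modes at the same time

Configurable strategies for dealing with quorum loss (when multiple machines fail)

Support for application startup/shutdown ordering

Support for applications that must or must not run on the same machine

Support for applications with multiple modes (such as master/slave)

The ability to test any failure or cluster state

Note: in short, it is powerful and is currently the most widely used resource manager.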

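III. Pacemaker package providers

Pacemaker is currently available for the mainstream operating systems:

Fedora (12.0)

Red Hat Enterprise Linux (5.0, 6.0)

openSUSE (11.0)

Debian

Ubuntu LTS (10.04)

CentOS (5.0, 6.0)

IV. Pacemaker version information

At the time of writing, the latest release is Pacemaker 1.1.10, published in July 2013.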

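V. Pacemaker configuration examples

1. Active/passive architecture

Note: in many high-availability scenarios, a two-node active/passive cluster built with Pacemaker and DRBD is a cost-effective solution.

2. Multi-node backup cluster

Note: because it supports many nodes, Pacemaker can significantly reduce hardware costs by allowing several active/passive clusters to be combined and share a common backup node.

3. Shared-storage cluster

Note: when shared storage is available, every node can potentially be used for failover. Pacemaker can even run multiple services.

4. Split-site cluster

Note: Pacemaker 1.2 is expected to include enhancements that simplify setting up split-site clusters.

VI. Cluster architectures supported by Pacemaker

1. Clusters based on OpenAIS

2. The traditional cluster architecture, based on Heartbeat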

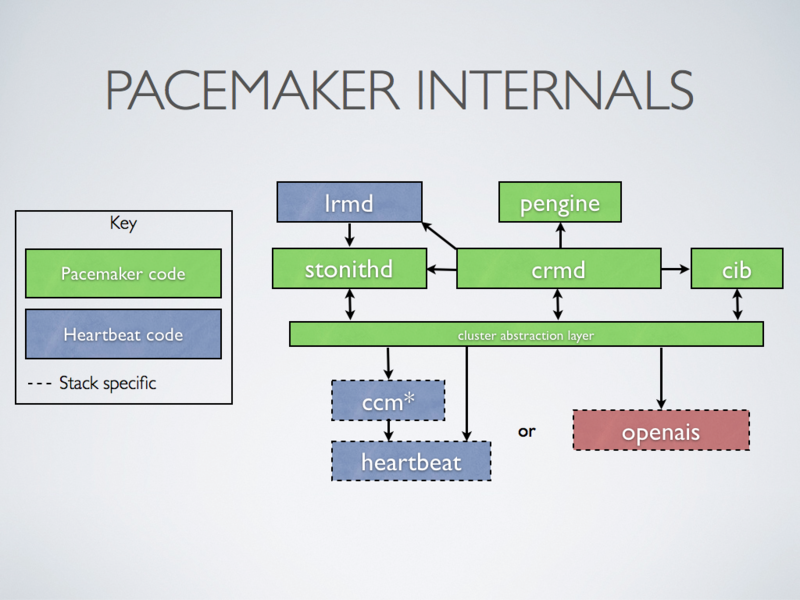

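VII. Pacemaker internals

1. Cluster components:

stonithd: the fencing daemon (STONITH, "Shoot The Other Node In The Head"). It fences failed nodes and is not a heartbeat component.

lrmd: the local resource management daemon. It provides a common interface to the supported resource types and calls the resource agents (scripts) directly.

pengine: the policy engine. It computes the next state of the cluster from the current state and the configuration, and produces a transition graph containing a list of actions and their dependencies.

CIB: the cluster information base. It contains the definitions of all cluster options, nodes and resources, their relationships to one another, and their current status. Updates are synchronized to all cluster nodes.

CRMD: the cluster resource management daemon. It is largely a message broker for the PEngine and the LRM, and it also elects a leader (the DC) to coordinate the activities of the cluster (including starting and stopping resources).

OpenAIS: the OpenAIS messaging and membership layer.

Heartbeat: the Heartbeat messaging layer, an alternative to OpenAIS.

CCM: the consensus cluster membership, Heartbeat's membership layer.

2. Functional overview

The CIB uses XML to represent both the configuration and the current state of all resources in the cluster. The contents of the CIB are automatically kept in sync across the whole cluster. The PEngine uses them to compute the ideal state of the cluster and generates a list of instructions, which is then passed to the DC (Designated Coordinator). All nodes in a Pacemaker cluster elect one DC node as the main decision maker. If the elected DC fails, a new DC is quickly established among the remaining nodes. The DC passes the instructions generated by the PEngine, through the cluster messaging infrastructure, to the LRMd (local resource management daemon) or to the CRMD on the other nodes. When a node in the cluster fails, the PEngine recomputes the ideal state. In some cases it may be necessary to power off a node in order to protect shared data or to complete resource recovery. For this purpose Pacemaker comes with stonithd. STONITH can "shoot the other node in the head" and is usually implemented with a remote power switch. Pacemaker models STONITH devices as resources stored in the CIB, so that they can easily be monitored for failure.

VIII. Pacemaker source code composition

Note: Pacemaker is written mainly in C, with Python in second place, which helps keep its runtime overhead low. To finish, we walk through a small example: building a highly available Web cluster.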

IX. CentOS 6.4 + Corosync + Pacemaker: a highly available Web cluster

1. Environment

(1). Operating system

CentOS 6.4, x86_64

(2). Software

Corosync 1.4.1

Pacemaker 1.1.8

crmsh 1.2.6

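(3). Topology

2. Installing and configuring Corosync and Pacemaker

I will not repeat the installation and configuration of Corosync and Pacemaker here; please refer to this earlier post: http://freeloda.blog.51cto.com/2033581/1272417 (Linux High-Availability (HA) Clusters: Corosync in Detail)

3. Ways to configure resources in Pacemaker

(1). Command-line tools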

crmsh

pcs

(2). Graphical tools

pygui

hawk

LCMC

pcs

Note: this post focuses on crmsh.

4. A brief introduction to crmsh

Note: below is the changelog entry for Pacemaker 1.1.8. The most important point is that, starting with Pacemaker 1.1.8, the crm shell became a separate project and is no longer shipped with Pacemaker. After installing Pacemaker there is therefore no crm command-line resource manager.

[root@node1 ~]# cd /usr/share/doc/pacemaker-1.1.8/

[root@node1 pacemaker-1.1.8]# ll

总用量 132

-rw-r--r-- 1 root root 1102 2月 22 13:05 AUTHORS

-rw-r--r-- 1 root root 109311 2月 22 13:05 ChangeLog

-rw-r--r-- 1 root root 18046 2月 22 13:05 COPYING

[root@node1 pacemaker-1.1.8]# vim ChangeLog

* Thu Sep 20 2012 Andrew Beekhof <[email protected]> Pacemaker-1.1.8-1

- Update source tarball to revision: 1a5341f

- Statistics: Changesets: 1019 Diff: 2107 files changed, 117258 insertions(+), 73606 deletions(-)

- All APIs have been cleaned up and reduced to essentials

- Pacemaker now includes a replacement lrmd that supports systemd and upstart agents

- Config and state files (cib.xml, PE inputs and core files) have moved to new locations

- The crm shell has become a separate project and no longer included with Pacemaker

- All daemons/tools now have a unified set of error codes based on errno.h (see crm_error)

[root@node1 ~]# crm

crmadmin crm_diff crm_failcount crm_mon crm_report crm_shadow crm_standby crm_verify

crm_attribute crm_error crm_master crm_node crm_resource crm_simulate crm_ticket

Note: as the tab completion shows, there is no crm shell command after installing Pacemaker; it has to be installed separately. The next section shows how to install crmsh.

5. Installing the crmsh resource management tool

(1). crmsh official site

https://savannah.nongnu.org/forum/forum.php?forum_id=7672

(2). crmsh download location

http://download.opensuse.org/repositories/network:/ha-clustering:/Stable/

(3). Installing crmsh

[root@node1 ~]# rpm -ivh crmsh-1.2.6-0.rc2.2.1.x86_64.rpm

warning: crmsh-1.2.6-0.rc2.2.1.x86_64.rpm: Header V3 DSA/SHA1 Signature, key ID 7b709911: NOKEY

error: Failed dependencies:

pssh is needed by crmsh-1.2.6-0.rc2.2.1.x86_64

python-dateutil is needed by crmsh-1.2.6-0.rc2.2.1.x86_64

python-lxml is needed by crmsh-1.2.6-0.rc2.2.1.x86_64

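Note: dependency packages are missing. We first install python-dateutil and python-lxml with yum; pssh is not installed here, which is why the rpm command that follows is run with --nodeps.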

[root@node1 ~]# yum install -y python-dateutil python-lxml

[root@node1 ~]# rpm -ivh crmsh-1.2.6-0.rc2.2.1.x86_64.rpm --nodeps

warning: crmsh-1.2.6-0.rc2.2.1.x86_64.rpm: Header V3 DSA/SHA1 Signature, key ID 7b709911: NOKEY

Preparing... ########################################### [100%]

1:crmsh ########################################### [100%]

[root@node1 ~]# crm #a crm command now appears in tab completion, so the installation succeeded

crm crm_attribute crm_error crm_master crm_node crm_resource crm_simulate crm_ticket

crmadmin crm_diff crm_failcount crm_mon crm_report crm_shadow crm_standby crm_verify

[root@node1 ~]# crm #run crm to enter the resource configuration shell

Cannot change active directory to /var/lib/pacemaker/cores/root: No such file or directory (2)

crm(live)# help #show the help

This is crm shell, a Pacemaker command line interface.

Available commands:

cib manage shadow CIBs

resource resources management

configure CRM cluster configuration

node nodes management

options user preferences

history CRM cluster history

site Geo-cluster support

ra resource agents information center

status show cluster status

help,? show help (help topics for list of topics)

end,cd,up go back one level

quit,bye,exit exit the program

crm(live)#

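Note: the preparation is now complete. Before configuring the highly available Web cluster, here is a brief look at how to use crmsh.

6. Using crmsh

Note: the best way to approach a new command is to read its man page, get familiar with it, and then experiment step by step.

[root@node1 ~]# crm #run crm to enter the crm shell

Cannot change active directory to /var/lib/pacemaker/cores/root: No such file or directory (2)

crm(live)# help #help lists the available subcommands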

This is crm shell, a Pacemaker command line interface.

Available commands:

cib manage shadow CIBs

resource resources management

configure CRM cluster configuration

node nodes management

options user preferences

history CRM cluster history

site Geo-cluster support

ra resource agents information center

status show cluster status

help,? show help (help topics for list of topics)

end,cd,up go back one level

quit,bye,exit exit the program

crm(live)# configure #enter configure mode

crm(live)configure# #press Tab twice to list all commands available under configure

? default-timeouts group node rename simulate

bye delete help op_defaults role template

cd edit history order rsc_defaults up

cib end load primitive rsc_template upgrade

cibstatus erase location property rsc_ticket user

clone exit master ptest rsctest verify

collocation fencing_topology modgroup quit save xml

colocation filter monitor ra schema

commit graph ms refresh show

crm(live)configure# help node #help followed by any command shows its usage and examples

The node command describes a cluster node. Nodes in the CIB are

commonly created automatically by the CRM. Hence, you should not

need to deal with nodes unless you also want to define node

attributes. Note that it is also possible to manage node

attributes at the `node` level.

Usage:

...............

node <uname>[:<type>]

[attributes <param>=<value> [<param>=<value>...]]

[utilization <param>=<value> [<param>=<value>...]]

type :: normal | member | ping

...............

Example:

...............

node node1

node big_node attributes memory=64

...............

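Note: that is enough of an introduction. In one sentence: when you do not know a command, run help on it. Now we start configuring the highly available Web cluster.

7. Configuring a highly available Web cluster with crmsh

(1). View the default configuration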

[root@node1 ~]# crm

Cannot change active directory to /var/lib/pacemaker/cores/root: No such file or directory (2)

crm(live)# configure

crm(live)configure# show

node node1.test.com

node node2.test.com

property $id="cib-bootstrap-options" \

dc-version="1.1.8-7.el6-394e906" \

cluster-infrastructure="classic openais (with plugin)" \

expected-quorum-votes="2" \

(2). Check the configuration for errors

crm(live)# configure

crm(live)configure# verify

crm_verify[5202]: 2011/06/14_19:10:38 ERROR: unpack_resources: Resource start-up disabled since no STONITH resources have been defined

crm_verify[5202]: 2011/06/14_19:10:38 ERROR: unpack_resources: Either configure some or disable STONITH with the stonith-enabled option

crm_verify[5202]: 2011/06/14_19:10:38 ERROR: unpack_resources: NOTE: Clusters with shared data need STONITH to ensure data integrity

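Note: the errors say that no STONITH resources have been defined. This test setup has no STONITH device, so we disable the property for now. As the last error line points out, clusters with shared data need STONITH to ensure data integrity.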

crm(live)configure# property stonith-enabled=false

crm(live)configure# show

node node1.test.com

node node2.test.com

property $id="cib-bootstrap-options" \

dc-version="1.1.8-7.el6-394e906" \

cluster-infrastructure="classic openais (with plugin)" \

expected-quorum-votes="2" \

stonith-enabled="false"

crm(live)configure# verify #no errors are reported now

(3). List the resource agent classes supported by the cluster

crm(live)# ra

crm(live)ra# classes

lsb

ocf / heartbeat pacemaker redhat

service

stonith

(4). List the resource agents in a given class

crm(live)ra# list lsb

auditd blk-availability corosync corosync-notifyd crond halt htcacheclean httpd ip6tables iptables killall lvm2-lvmetad lvm2-monitor messagebus netconsole netfs network nfs nfslock ntpd ntpdate pacemaker postfix quota_nld rdisc restorecond rpcbind rpcgssd rpcidmapd rpcsvcgssd rsyslog sandbox saslauthd single sshd svnserve udev-post winbind

crm(live)ra# list ocf heartbeat

AoEtarget AudibleAlarm CTDB ClusterMon Delay Dummy EvmsSCC Evmsd Filesystem ICP IPaddr IPaddr2 IPsrcaddr IPv6addr LVM LinuxSCSI MailTo ManageRAID ManageVE Pure-FTPd Raid1 Route SAPDatabase SAPInstance SendArp ServeRAID SphinxSearchDaemon Squid Stateful SysInfo VIPArip VirtualDomain WAS WAS6 WinPopup Xen Xinetd anything apache conntrackd db2 drbd eDir88 ethmonitor exportfs fio iSCSILogicalUnit iSCSITarget ids iscsi jboss lxc mysql mysql-proxy nfsserver nginx oracle oralsnr pgsql pingd portblock postfix proftpd rsyncd scsi2reservation sfex symlink syslog-ng tomcat vmware

crm(live)ra# list ocf pacemaker

ClusterMon Dummy HealthCPU HealthSMART Stateful SysInfo SystemHealth controld o2cb ping pingd

(5). View how a particular resource agent is configured

crm(live)ra# info ocf:heartbeat:IPaddr

Manages virtual IPv4 addresses (portable version) (ocf:heartbeat:IPaddr)

This script manages IP alias IP addresses

It can add an IP alias, or remove one.

Parameters (* denotes required, [] the default):

ip* (string): IPv4 address

The IPv4 address to be configured in dotted quad notation, for example

"192.168.1.1".

nic (string, [eth0]): Network interface

The base network interface on which the IP address will be brought

online.

If left empty, the script will try and determine this from the

routing table.

Do NOT specify an alias interface in the form eth0:1 or anything here;

rather, specify the base interface only.

Prerequisite:

There must be at least one static IP address, which is not managed by

the cluster, assigned to the network interface.

If you can not assign any static IP address on the interface,

:

(6). Next, create an IP address resource for the Web cluster (an IP resource is a primitive, so we first look at how a primitive is defined)

crm(live)# configure

crm(live)configure# primitive

usage: primitive <rsc> {[<class>:[<provider>:]]<type>|@<template>}

[params <param>=<value> [<param>=<value>...]]

[meta <attribute>=<value> [<attribute>=<value>...]]

[utilization <attribute>=<value> [<attribute>=<value>...]]

[operations id_spec

[op op_type [<attribute>=<value>...] ...]]

crm(live)configure# primitive vip ocf:heartbeat:IPaddr params ip=192.168.18.200 nic=eth0 cidr_netmask=24 #add a VIP resource

crm(live)configure# show #show the configuration, which now includes the vip primitive

node node1.test.com

node node2.test.com

primitive vip ocf:heartbeat:IPaddr \

params ip="192.168.18.200" nic="eth0" cidr_netmask="24"

property $id="cib-bootstrap-options" \

dc-version="1.1.8-7.el6-394e906" \

cluster-infrastructure="classic openais (with plugin)" \

expected-quorum-votes="2" \

stonith-enabled="false"

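crm(live)configure# verify #check the configuration for errors

crm(live)configure# commit #commit the change; resources configured on the command line take effect only after commit, which writes them to the CIB (cib.xml)

crm(live)# status #check the resource status: there is one resource, vip, running on node1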

Last updated: Thu Aug 15 14:24:45 2013

Last change: Thu Aug 15 14:21:21 2013 via cibadmin on node1.test.com

Stack: classic openais (with plugin)

Current DC: node1.test.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

1 Resources configured.

Online: [ node1.test.com node2.test.com ]

vip (ocf::heartbeat:IPaddr): Started node1.test.com

Check the IP addresses on node1: the VIP is active. After that we go to node2, stop the corosync service on node1 from there, and check the status again.

[root@node1 ~]# ifconfig

eth0 Link encap:Ethernet HWaddr 00:0C:29:91:45:90

inet addr:192.168.18.201 Bcast:192.168.18.255 Mask:255.255.255.0

inet6 addr: fe80::20c:29ff:fe91:4590/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:375197 errors:0 dropped:0 overruns:0 frame:0

TX packets:291575 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:55551264 (52.9 MiB) TX bytes:52697225 (50.2 MiB)

eth0:0 Link encap:Ethernet HWaddr 00:0C:29:91:45:90

inet addr:192.168.18.200 Bcast:192.168.18.255 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:16436 Metric:1

RX packets:6473 errors:0 dropped:0 overruns:0 frame:0

TX packets:6473 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:875395 (854.8 KiB) TX bytes:875395 (854.8 KiB)

Test: stop corosync on node1. node1 then shows as offline.

[root@node2 ~]# ssh node1 "service corosync stop"

Signaling Corosync Cluster Engine (corosync) to terminate: [确定]

Waiting for corosync services to unload:..[确定]

[root@node2 ~]# crm status

Cannot change active directory to /var/lib/pacemaker/cores/root: No such file or directory (2)

Last updated: Thu Aug 15 14:29:04 2013

Last change: Thu Aug 15 14:21:21 2013 via cibadmin on node1.test.com

Stack: classic openais (with plugin)

Current DC: node2.test.com - partition WITHOUT quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

1 Resources configured.

Online: [ node2.test.com ]

OFFLINE: [ node1.test.com ]

Key point: the output above shows that node1.test.com is offline, yet the vip resource did not start on node2.test.com. The reason is that the cluster state is now "WITHOUT quorum": quorum has been lost, so the cluster no longer meets the conditions for running resources. For a cluster with only two nodes this default is unreasonable, because losing either node always loses quorum. We can therefore tell the cluster to ignore the loss of quorum with the following command: property no-quorum-policy=ignore

crm(live)# configure

crm(live)configure# property no-quorum-policy=ignore

crm(live)configure# show

node node1.test.com

node node2.test.com

primitive vip ocf:heartbeat:IPaddr \

params ip="192.168.18.200" nic="eth0" cidr_netmask="24"

property $id="cib-bootstrap-options" \

dc-version="1.1.8-7.el6-394e906" \

cluster-infrastructure="classic openais (with plugin)" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore"

crm(live)configure# verify

crm(live)configure# commit

A moment later the cluster starts the resource on node2, the node that is still running, as shown below:

[root@node2 ~]# crm status

Cannot change active directory to /var/lib/pacemaker/cores/root: No such file or directory (2)

Last updated: Thu Aug 15 14:38:23 2013

Last change: Thu Aug 15 14:37:08 2013 via cibadmin on node2.test.com

Stack: classic openais (with plugin)

Current DC: node2.test.com - partition WITHOUT quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

1 Resources configured.

Online: [ node2.test.com ]

OFFLINE: [ node1.test.com ]

vip (ocf::heartbeat:IPaddr): Started node2.test.com
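To see why a two-node cluster reports "WITHOUT quorum" as soon as one node is gone, the majority rule can be sketched in a few lines of shell. This is only an illustration: it assumes quorum means holding strictly more than half of the expected votes, and the function name has_quorum is made up for this example; it is not a Pacemaker or Corosync command.

```shell
#!/usr/bin/env bash
# has_quorum ONLINE_VOTES EXPECTED_VOTES
# Hypothetical helper: succeeds (exit status 0) when the partition holds
# strictly more than half of the expected votes.
has_quorum() {
  local online=$1 expected=$2
  [ $((online * 2)) -gt "$expected" ]
}

# The two situations seen in the status output above (2 expected votes).
for online in 2 1; do
  if has_quorum "$online" 2; then
    echo "online=$online expected=2: partition with quorum"
  else
    echo "online=$online expected=2: partition WITHOUT quorum"
  fi
done
```

With two expected votes, a single surviving node holds exactly half of the votes, which is not a majority. That is the situation no-quorum-policy=ignore works around in this two-node setup.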

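With the check done, we start node1.test.com normally again.

[root@node2 ~]# ssh node1 "service corosync start"

Starting Corosync Cluster Engine (corosync): [确定]

[root@node2 ~]# crm status

Cannot change active directory to /var/lib/pacemaker/cores/root: No such file or directory (2)

Last updated: Thu Aug 15 14:39:45 2013

Last change: Thu Aug 15 14:37:08 2013 via cibadmin on node2.test.com

Stack: classic openais (with plugin)

Current DC: node2.test.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

1 Resources configured.

Online: [ node1.test.com node2.test.com ]

vip (ocf::heartbeat:IPaddr): Started node2.test.com

[root@node2 ~]#

After node1.test.com comes back, the vip resource may well move from node2.test.com back to node1.test.com, although it may also stay where it is. Every such move between nodes makes the resource unreachable for a short time, so after a resource has failed over to another node we sometimes want to keep it there even when the original node recovers. This is done by defining the resource's stickiness, which can be set when the resource is created or afterwards. Let's briefly review resource stickiness.

(7). Resource stickiness

Resource stickiness expresses how strongly a resource prefers to stay on the node where it is currently running.

Stickiness value ranges and their effects:

0: the default. The resource is placed at the most suitable location in the system, which means it is moved when a "better" or less loaded node becomes available. This option is almost equivalent to automatic failback, except that the resource may be moved to a node other than the one it was previously active on;

Greater than 0: the resource prefers to stay where it is, but moves if a more suitable node becomes available. The higher the value, the stronger the preference to stay;

Less than 0: the resource prefers to move away from its current location. The higher the absolute value, the stronger the preference to leave;

INFINITY: the resource always stays where it is unless it is forced off because the node is no longer eligible to run it (node shutdown, node standby, reaching the migration-threshold, or a configuration change). This option is almost equivalent to disabling automatic failback completely;

-INFINITY: the resource always moves away from its current location.

Here we set a default stickiness for all resources with: rsc_defaults resource-stickiness=100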

crm(live)configure# rsc_defaults resource-stickiness=100

crm(live)configure# verify

crm(live)configure# show

node node1.test.com

node node2.test.com

primitive vip ocf:heartbeat:IPaddr \

params ip="192.168.18.200" nic="eth0" cidr_netmask="24"

property $id="cib-bootstrap-options" \

dc-version="1.1.8-7.el6-394e906" \

cluster-infrastructure="classic openais (with plugin)" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

crm(live)configure# commit

(8). Building on the IP address resource configured above, turn this cluster into an active/passive Web (httpd) service cluster

Node1:

[root@node1 ~]# yum -y install httpd

[root@node1 ~]# echo "<h1>node1.magedu.com</h1>" > /var/www/html/index.html

Node2:

[root@node2 ~]# yum -y install httpd

[root@node2 ~]# echo "<h1>node2.magedu.com</h1>" > /var/www/html/index.html

Test:

node1:

node2:

Start the httpd service manually on each node and confirm that it serves pages correctly. Then stop httpd with the commands below and make sure it does not start automatically at boot (run them on both nodes):

node1:

[root@node1 ~]# /etc/init.d/httpd stop

[root@node1 ~]# chkconfig httpd off

node2:

[root@node2 ~]# /etc/init.d/httpd stop

[root@node2 ~]# chkconfig httpd off

Next we add the httpd service as a cluster resource. Two resource agent classes can manage httpd: lsb and ocf:heartbeat. To keep things simple we use the lsb class here.

First, view the syntax of the lsb httpd resource with the following commands:

crm(live)# ra

crm(live)ra# info lsb:httpd

start and stop Apache HTTP Server (lsb:httpd)

The Apache HTTP Server is an efficient and extensible \

server implementing the current HTTP standards.

Operations' defaults (advisory minimum):

start timeout=15

stop timeout=15

status timeout=15

restart timeout=15

force-reload timeout=15

monitor timeout=15 interval=15

Next, create the httpd resource:

crm(live)# configure

crm(live)configure# primitive httpd lsb:httpd

crm(live)configure# show

node node1.test.com

node node2.test.com

primitive httpd lsb:httpd

primitive vip ocf:heartbeat:IPaddr \

params ip="192.168.18.200" nic="eth0" cidr_netmask="24"

property $id="cib-bootstrap-options" \

dc-version="1.1.8-7.el6-394e906" \

cluster-infrastructure="classic openais (with plugin)" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

crm(live)configure# verify

crm(live)configure# commit
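The primitive above is defined without a recurring monitor operation, so the cluster starts and stops httpd but is not asked to check it periodically. A possible variant, shown here as a sketch to be used instead of the definition above (it assumes no resource named httpd exists yet), adds a monitor operation with the advisory values that info lsb:httpd reported:

```shell
# Sketch only: the same lsb:httpd primitive, with a recurring health check.
# interval and timeout are the advisory minimums from "info lsb:httpd".
crm configure primitive httpd lsb:httpd \
    op monitor interval=15s timeout=15s
```

Running crm configure from the system shell like this commits the change immediately; inside the interactive configure level the same line works without the leading "crm configure" and still needs verify and commit.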

Check the resource status:

[root@node1 ~]# crm status

Cannot change active directory to /var/lib/pacemaker/cores/root: No such file or directory (2)

Last updated: Thu Aug 15 14:55:04 2013

Last change: Thu Aug 15 14:54:14 2013 via cibadmin on node1.test.com

Stack: classic openais (with plugin)

Current DC: node2.test.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

2 Resources configured.

Online: [ node1.test.com node2.test.com ]

vip (ocf::heartbeat:IPaddr): Started node2.test.com

httpd (lsb:httpd): Started node1.test.com

The output shows that vip and httpd can end up running on two different nodes. That does not work for an application that serves the Web through this IP: both resources must run on the same node. There are two ways to achieve this. One is to define a resource group containing both vip and httpd; the other is to define resource constraints. We start with the first method, a resource group.

(9). Defining a resource group

crm(live)# configure

crm(live)configure# group webservice vip httpd

crm(live)configure# show

node node1.test.com

node node2.test.com

primitive httpd lsb:httpd

primitive vip ocf:heartbeat:IPaddr \

params ip="192.168.18.200" nic="eth0" cidr_netmask="24"

group webservice vip httpd

property $id="cib-bootstrap-options" \

dc-version="1.1.8-7.el6-394e906" \

cluster-infrastructure="classic openais (with plugin)" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

crm(live)configure# verify

crm(live)configure# commit

Check the resource status again:

[root@node1 ~]# crm status

Cannot change active directory to /var/lib/pacemaker/cores/root: No such file or directory (2)

Last updated: Thu Aug 15 15:33:09 2013

Last change: Thu Aug 15 15:32:28 2013 via cibadmin on node1.test.com

Stack: classic openais (with plugin)

Current DC: node2.test.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

2 Resources configured.

Online: [ node1.test.com node2.test.com ]

Resource Group: webservice

vip (ocf::heartbeat:IPaddr): Started node2.test.com

httpd (lsb:httpd): Started node2.test.com

As you can see, all resources now run on node2.

Next we simulate a failure and test.

crm(live)# node

crm(live)node#

? cd end help online show status-attr

attribute clearstate exit list quit standby up

bye delete fence maintenance ready status utilization

crm(live)node# standby

[root@node1 ~]# crm status

Cannot change active directory to /var/lib/pacemaker/cores/root: No such file or directory (2)

Last updated: Thu Aug 15 15:39:05 2013

Last change: Thu Aug 15 15:38:57 2013 via crm_attribute on node2.test.com

Stack: classic openais (with plugin)

Current DC: node2.test.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

2 Resources configured.

Node node2.test.com: standby

Online: [ node1.test.com ]

Resource Group: webservice

vip (ocf::heartbeat:IPaddr): Started node1.test.com

httpd (lsb:httpd): Started node1.test.com

[root@node1 ~]# ifconfig

eth0 Link encap:Ethernet HWaddr 00:0C:29:91:45:90

inet addr:192.168.18.201 Bcast:192.168.18.255 Mask:255.255.255.0

inet6 addr: fe80::20c:29ff:fe91:4590/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:408780 errors:0 dropped:0 overruns:0 frame:0

TX packets:323137 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:60432533 (57.6 MiB) TX bytes:57541647 (54.8 MiB)

eth0:0 Link encap:Ethernet HWaddr 00:0C:29:91:45:90

inet addr:192.168.18.200 Bcast:192.168.18.255 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:16436 Metric:1

RX packets:6525 errors:0 dropped:0 overruns:0 frame:0

TX packets:6525 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:882555 (861.8 KiB) TX bytes:882555 (861.8 KiB)

[root@node1 ~]# netstat -ntulp | grep :80

tcp 0 0 :::80 :::* LISTEN 16603/httpd

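As you can see, once node2 is put into standby, all resources switch to node1. The Web page can then be accessed again through the VIP.

That is all for defining and demonstrating a resource group. Next we look at how to define resource constraints.

(10). Defining resource constraints

First we bring node2 back online, then delete the resource group.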

crm(live)node# online

[root@node1 ~]# crm_mon

Last updated: Thu Aug 15 15:48:38 2013

Last change: Thu Aug 15 15:46:21 2013 via crm_attribute on node2.test.com

Stack: classic openais (with plugin)

Current DC: node2.test.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

2 Resources configured.

Online: [ node1.test.com node2.test.com ]

Resource Group: webservice

vip (ocf::heartbeat:IPaddr): Started node1.test.com

httpd (lsb:httpd): Started node1.test.com

Deleting the resource group:

crm(live)# resource

crm(live)resource# show

Resource Group: webservice

vip (ocf::heartbeat:IPaddr): Started

httpd (lsb:httpd): Started

crm(live)resource# stop webservice #stop the resource group

crm(live)resource# show

Resource Group: webservice

vip (ocf::heartbeat:IPaddr): Stopped

httpd (lsb:httpd): Stopped

crm(live)resource# cleanup webservice #clean up the resource state

Cleaning up vip on node1.test.com

Cleaning up vip on node2.test.com

Cleaning up httpd on node1.test.com

Cleaning up httpd on node2.test.com

Waiting for 1 replies from the CRMd. OK

crm(live)# configure

crm(live)configure# delete

cib-bootstrap-options node1.test.com rsc-options webservice

httpd node2.test.com vip

crm(live)configure# delete webservice #delete the resource group

crm(live)configure# show

node node1.test.com

node node2.test.com \

attributes standby="off"

primitive httpd lsb:httpd

primitive vip ocf:heartbeat:IPaddr \

params ip="192.168.18.200" nic="eth0" cidr_netmask="24"

property $id="cib-bootstrap-options" \

dc-version="1.1.8-7.el6-394e906" \

cluster-infrastructure="classic openais (with plugin)" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore" \

last-lrm-refresh="1376553277"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

crm(live)configure# commit

[root@node1 ~]# crm_mon

Last updated: Thu Aug 15 15:56:59 2013

Last change: Thu Aug 15 15:56:12 2013 via cibadmin on node1.test.com

Stack: classic openais (with plugin)

Current DC: node2.test.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

2 Resources configured.

Online: [ node1.test.com node2.test.com ]

vip (ocf::heartbeat:IPaddr): Started node1.test.com

httpd (lsb:httpd): Started node2.test.com

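As you can see, the resources are again spread over two nodes. Now we define constraints so that they run on the same node. First, a quick review: resource constraints specify on which cluster nodes resources run, in which order resources are started, and which other resources a given resource depends on. Pacemaker provides three kinds of resource constraints:

Resource Location: defines on which nodes a resource can, cannot, or should preferably run;

Resource Colocation: defines which cluster resources may or may not run together on the same node;

Resource Order: defines the order in which cluster resources are started on a node.

Constraints are defined together with scores. Scores of all kinds are integral to how the cluster works. In practice, everything from migrating a resource to deciding which resources to stop in a degraded cluster is achieved by manipulating scores in some way. Scores are calculated per resource, and any node with a negative score for a resource cannot run that resource. After calculating the scores for a resource, the cluster chooses the node with the highest score. INFINITY is currently defined as 1,000,000. Adding to or subtracting from it follows three basic rules:

Any value + INFINITY = INFINITY

Any value - INFINITY = -INFINITY

INFINITY - INFINITY = -INFINITY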
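The three rules can be written down as a short shell function. This is a minimal sketch under the definition INFINITY = 1,000,000 given above; the helpers to_num and score_add are hypothetical names for this illustration and are not taken from the Pacemaker source.

```shell
#!/usr/bin/env bash
# Sketch of score addition following the three rules above.
INF=1000000

# to_num TOKEN: turn INFINITY / -INFINITY / inf / -inf or an integer into a number.
to_num() {
  case "$1" in
    INFINITY|+INFINITY|inf|+inf) printf '%s\n' "$INF" ;;
    -INFINITY|-inf)              printf '%s\n' "-$INF" ;;
    *)                           printf '%s\n' "$1" ;;
  esac
}

# score_add A B: add two scores; subtracting INFINITY is adding -INFINITY.
score_add() {
  local a b sum
  a=$(to_num "$1")
  b=$(to_num "$2")
  if [ "$a" -le "-$INF" ] || [ "$b" -le "-$INF" ]; then
    # Rules 2 and 3: -INFINITY wins over everything, including +INFINITY.
    printf '%s\n' "-$INF"
  elif [ "$a" -ge "$INF" ] || [ "$b" -ge "$INF" ]; then
    # Rule 1: any finite value plus INFINITY is INFINITY.
    printf '%s\n' "$INF"
  else
    sum=$((a + b))
    # Keep finite sums inside the range [-INFINITY, INFINITY].
    if [ "$sum" -gt "$INF" ]; then sum=$INF; fi
    if [ "$sum" -lt "-$INF" ]; then sum=-$INF; fi
    printf '%s\n' "$sum"
  fi
}

score_add 100 INFINITY        # prints 1000000
score_add 100 -INFINITY       # prints -1000000
score_add INFINITY -INFINITY  # prints -1000000
score_add 100 200             # prints 300
```

The third call is the case worth remembering: a single -INFINITY contribution rules a node out, no matter how strongly other constraints favour it.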

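When defining resource constraints you can also give each constraint a score. The score is the value assigned to that constraint. Constraints with higher scores are applied before those with lower scores. By creating several location constraints with different scores for a given resource, you can specify the order of the nodes the resource fails over to. The problem described earlier, where vip and httpd may run on different nodes, can therefore be solved with the following command: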

crm(live)configure# colocation httpd-with-ip inf: httpd vip

crm(live)configure# show

node node1.test.com

node node2.test.com \

attributes standby="off"

primitive httpd lsb:httpd

primitive vip ocf:heartbeat:IPaddr \

params ip="192.168.18.200" nic="eth0" cidr_netmask="24"

colocation httpd-with-ip inf: httpd vip

property $id="cib-bootstrap-options" \

dc-version="1.1.8-7.el6-394e906" \

cluster-infrastructure="classic openais (with plugin)" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore" \

last-lrm-refresh="1376553277"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

crm(live)configure# show xml

<rsc_colocation id="httpd-with-ip" score="INFINITY" rsc="httpd" with-rsc="vip"/>

[root@node2 ~]# crm_mon

Last updated: Thu Aug 15 16:12:18 2013

Last change: Thu Aug 15 16:12:05 2013 via cibadmin on node1.test.com

Stack: classic openais (with plugin)

Current DC: node2.test.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

2 Resources configured.

Online: [ node1.test.com node2.test.com ]

vip (ocf::heartbeat:IPaddr): Started node1.test.com

httpd (lsb:httpd): Started node1.test.com

As you can see, all resources run on node1. The Web page can be tested through the VIP at this point.

Now simulate a failure and test again.

crm(live)# node

crm(live)node# standby

crm(live)node# show

node1.test.com: normal

standby: on

node2.test.com: normal

standby: off

[root@node2 ~]# crm_mon

Last updated: Thu Aug 15 16:14:33 2013

Last change: Thu Aug 15 16:14:23 2013 via crm_attribute on node1.test.com

Stack: classic openais (with plugin)

Current DC: node2.test.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

2 Resources configured.

Node node1.test.com: standby

Online: [ node2.test.com ]

vip (ocf::heartbeat:IPaddr): Started node2.test.com

httpd (lsb:httpd): Started node2.test.com

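As you can see, all resources have moved to node2. Test access again.

Next, we also have to make sure that vip is started before httpd on whichever node runs them. This can be done with the following command: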

crm(live)# configure

crm(live)configure# order httpd-after-vip mandatory: vip httpd

crm(live)configure# verify

crm(live)configure# show

node node1.test.com \

attributes standby="on"

node node2.test.com \

attributes standby="off"

primitive httpd lsb:httpd \

meta target-role="Started"

primitive vip ocf:heartbeat:IPaddr \

params ip="192.168.18.200" nic="eth0" cidr_netmask="24" \

meta target-role="Started"

colocation httpd-with-ip inf: httpd vip

order httpd-after-vip inf: vip httpd

property $id="cib-bootstrap-options" \

dc-version="1.1.8-7.el6-394e906" \

cluster-infrastructure="classic openais (with plugin)" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore" \

last-lrm-refresh="1376554276"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

crm(live)configure# show xml

<rsc_order id="httpd-after-vip" score="INFINITY" first="vip" then="httpd"/>

crm(live)configure# commit

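In addition, an HA cluster does not require its nodes to have the same or similar performance. We may therefore want the service to run on a more powerful node whenever everything is healthy, which can be done with a location constraint:

crm(live)configure# location prefer-node1 vip 200: node1.test.com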
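How this location score interacts with the default stickiness of 100 set earlier can be sketched with a tiny shell function. This is a deliberately simplified model of one resource on two nodes: it ignores colocation (in a real cluster the stickiness of colocated resources also counts), the helper pick_node is hypothetical, and the tie-breaking rule (stay put) is an assumption of the sketch rather than documented Pacemaker behaviour.

```shell
#!/usr/bin/env bash
# pick_node CURRENT STICKINESS PREFERRED LOCATION_SCORE
# Hypothetical two-node model: the current node scores STICKINESS, the
# preferred node scores LOCATION_SCORE. The higher score wins; on a tie the
# resource stays where it is.
pick_node() {
  local current=$1 stickiness=$2 preferred=$3 location=$4
  if [ "$current" = "$preferred" ]; then
    # Already on the preferred node: both scores point to the same place.
    printf '%s\n' "$current"
  elif [ "$location" -gt "$stickiness" ]; then
    printf '%s\n' "$preferred"
  else
    printf '%s\n' "$current"
  fi
}

pick_node node2 100 node1 200   # prints node1: preference 200 beats stickiness 100
pick_node node2 300 node1 200   # prints node2: stickiness 300 keeps the resource in place
```

On a live cluster, crm_simulate -sL prints the allocation scores Pacemaker actually computed, which is the reliable way to check where a resource will be placed.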

The basic configuration of the highly available Web cluster is now complete. Next we add an NFS resource.

8. Configuring an NFS resource with crmsh

(1). Configure the NFS server

[root@nfs ~]# mkdir -pv /web

mkdir: 已创建目录 “/web”

[root@nfs ~]# vim /etc/exports

/web 192.168.18.0/24(ro,async)

[root@nfs /]# echo '<h1>Cluster NFS Server</h1>' > /web/index.html

[root@nfs ~]# /etc/init.d/rpcbind start

启动 rpcbind : [确定]

[root@nfs /]# /etc/init.d/nfs start

启动 NFS 服务: [确定]

关掉 NFS 配额: [确定]

启动 NFS 守护进程: [确定]

启动 NFS mountd: [确定]

[root@nfs /]# showmount -e 192.168.18.208

Export list for 192.168.18.208:

/web 192.168.18.0/24

(2). Test mounting from the nodes

node1:

[root@node1 ~]# mount -t nfs 192.168.18.208:/web /mnt

[root@node1 ~]# cd /mnt/

[root@node1 mnt]# ll

总计 4

-rw-r--r-- 1 root root 28 08-07 17:41 index.html

[root@node1 mnt]# mount

/dev/sda2 on / type ext3 (rw)

proc on /proc type proc (rw)

sysfs on /sys type sysfs (rw)

devpts on /dev/pts type devpts (rw,gid=5,mode=620)

/dev/sda3 on /data type ext3 (rw)

/dev/sda1 on /boot type ext3 (rw)

tmpfs on /dev/shm type tmpfs (rw)

none on /proc/sys/fs/binfmt_misc type binfmt_misc (rw)

sunrpc on /var/lib/nfs/rpc_pipefs type rpc_pipefs (rw)

192.168.18.208:/web on /mnt type nfs (rw,addr=192.168.18.208)

[root@node1 ~]# umount /mnt

[root@node1 ~]# mount

/dev/sda2 on / type ext3 (rw)

proc on /proc type proc (rw)

sysfs on /sys type sysfs (rw)

devpts on /dev/pts type devpts (rw,gid=5,mode=620)

/dev/sda3 on /data type ext3 (rw)

/dev/sda1 on /boot type ext3 (rw)

tmpfs on /dev/shm type tmpfs (rw)

none on /proc/sys/fs/binfmt_misc type binfmt_misc (rw)

sunrpc on /var/lib/nfs/rpc_pipefs type rpc_pipefs (rw)

node2:

[root@node2 ~]# mount -t nfs 192.168.18.208:/web /mnt

[root@node2 ~]# cd /mnt

[root@node2 mnt]# ll

总计 4

-rw-r--r-- 1 root root 28 08-07 17:41 index.html

[root@node2 mnt]# mount

/dev/sda2 on / type ext3 (rw)

proc on /proc type proc (rw)

sysfs on /sys type sysfs (rw)

devpts on /dev/pts type devpts (rw,gid=5,mode=620)

/dev/sda3 on /data type ext3 (rw)

/dev/sda1 on /boot type ext3 (rw)

tmpfs on /dev/shm type tmpfs (rw)

none on /proc/sys/fs/binfmt_misc type binfmt_misc (rw)

sunrpc on /var/lib/nfs/rpc_pipefs type rpc_pipefs (rw)

192.168.18.208:/web on /mnt type nfs (rw,addr=192.168.18.208)

[root@node2 ~]# umount /mnt

[root@node2 ~]# mount

/dev/sda2 on / type ext3 (rw)

proc on /proc type proc (rw)

sysfs on /sys type sysfs (rw)

devpts on /dev/pts type devpts (rw,gid=5,mode=620)

/dev/sda3 on /data type ext3 (rw)

/dev/sda1 on /boot type ext3 (rw)

tmpfs on /dev/shm type tmpfs (rw)

none on /proc/sys/fs/binfmt_misc type binfmt_misc (rw)

sunrpc on /var/lib/nfs/rpc_pipefs type rpc_pipefs (rw)

(3). Configure the vip, httpd and nfs resources

crm(live)# configure

crm(live)configure# show

node node1.test.com

node node2.test.com

property $id="cib-bootstrap-options" \

dc-version="1.1.8-7.el6-394e906" \

cluster-infrastructure="classic openais (with plugin)" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore" \

last-lrm-refresh="1376555949"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

crm(live)configure# primitive vip ocf:heartbeat:IPaddr params ip=192.168.18.200 nic=eth0 cidr_netmask=24

crm(live)configure# primitive httpd lsb:httpd

crm(live)configure# primitive nfs ocf:heartbeat:Filesystem params device=192.168.18.208:/web directory=/var/www/html fstype=nfs

crm(live)configure# verify

WARNING: nfs: default timeout 20s for start is smaller than the advised 60

WARNING: nfs: default timeout 20s for stop is smaller than the advised 60

crm(live)configure# show

node node1.test.com

node node2.test.com

primitive httpd lsb:httpd

primitive nfs ocf:heartbeat:Filesystem \

params device="192.168.18.208:/web" directory="/var/www/html" fstype="nfs"

primitive vip ocf:heartbeat:IPaddr \

params ip="192.168.18.200" nic="eth0" cidr_netmask="24"

property $id="cib-bootstrap-options" \

dc-version="1.1.8-7.el6-394e906" \

cluster-infrastructure="classic openais (with plugin)" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore" \

last-lrm-refresh="1376555949"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

crm(live)configure# commit

WARNING: nfs: default timeout 20s for start is smaller than the advised 60

WARNING: nfs: default timeout 20s for stop is smaller than the advised 60
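The two WARNING lines are not errors: the configuration is still committed, but the crm shell is pointing out that the default 20s operation timeout is lower than the 60s advised for the Filesystem agent's start and stop operations. One way to address this, shown here only as a sketch, is to give the `nfs` primitive explicit operation timeouts, for example by changing its definition with the `edit` command in configure mode. The 60s values simply follow the advice printed in the warnings.

```
primitive nfs ocf:heartbeat:Filesystem \
    params device="192.168.18.208:/web" directory="/var/www/html" fstype="nfs" \
    op start timeout="60s" interval="0" \
    op stop timeout="60s" interval="0"
```

With explicit timeouts at or above the advised values, `verify` should no longer report these warnings for `nfs`.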

Now check the three resources we just defined. As you can see, they are not all running on the same node, so next we define a resource group to keep all three on one node.

[root@node2 ~]# crm_mon

Last updated: Thu Aug 15 17:00:17 2013

Last change: Thu Aug 15 16:58:44 2013 via cibadmin on node1.test.com

Stack: classic openais (with plugin)

Current DC: node2.test.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

3 Resources configured.

Online: [ node1.test.com node2.test.com ]

vip (ocf::heartbeat:IPaddr): Started node1.test.com

httpd (lsb:httpd): Started node2.test.com

nfs (ocf::heartbeat:Filesystem): Started node1.test.com

(4). Define a resource group

crm(live)# configure

crm(live)configure# group webservice vip nfs httpd

crm(live)configure# verify

crm(live)configure# show

node node1.test.com

node node2.test.com

primitive httpd lsb:httpd

primitive nfs ocf:heartbeat:Filesystem \

params device="192.168.18.208:/web" directory="/var/www/html" fstype="nfs"

primitive vip ocf:heartbeat:IPaddr \

params ip="192.168.18.200" nic="eth0" cidr_netmask="24"

group webservice vip nfs httpd

property $id="cib-bootstrap-options" \

dc-version="1.1.8-7.el6-394e906" \

cluster-infrastructure="classic openais (with plugin)" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore" \

last-lrm-refresh="1376555949"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

crm(live)configure# commit
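A group is shorthand for two things at once: its members are kept on the same node, and they are started in the listed order (vip, then nfs, then httpd) and stopped in reverse. As a rough illustration only, similar behavior could be expressed with explicit constraints instead of the group. The constraint IDs below (`nfs_with_vip` and so on) are made-up names, and these lines would replace the `group` definition, not accompany it.

```
colocation nfs_with_vip inf: nfs vip
colocation httpd_with_nfs inf: httpd nfs
order vip_before_nfs inf: vip nfs
order nfs_before_httpd inf: nfs httpd
```

For a simple stack like this one, the single `group` line is shorter and easier to maintain; explicit constraints are mainly useful when the relationships are not a plain chain.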

Check the resource status: all resources are now running on node1. Next, let's test failover.

[root@node2 ~]# crm_mon

Last updated: Thu Aug 15 17:03:20 2013

Last change: Thu Aug 15 17:02:44 2013 via cibadmin on node1.test.com

Stack: classic openais (with plugin)

Current DC: node2.test.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

3 Resources configured.

Online: [ node1.test.com node2.test.com ]

Resource Group: webservice

vip (ocf::heartbeat:IPaddr): Started node1.test.com

nfs (ocf::heartbeat:Filesystem): Started node1.test.com

httpd (lsb:httpd): Started node1.test.com
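The same check can be done without reading the status screen by eye. The sketch below assumes the resource lines look like the ones above (`NAME (AGENT): Started NODE`); `started_nodes` is a hypothetical helper name. It reads status text from stdin and prints each node that hosts at least one started resource, so a correctly grouped setup prints exactly one node.

```shell
#!/bin/sh
# Hypothetical helper: list the distinct nodes on which resources are reported
# as "Started". Expects crm_mon-style lines such as
#   vip (ocf::heartbeat:IPaddr): Started node1.test.com
started_nodes() {
    # The node name is the last field of every ": Started " line.
    awk '/: Started / { print $NF }' | sort -u
}

# Usage on a cluster node (crm_mon -1 prints the status once and exits):
#   crm_mon -1 | started_nodes
```

If this prints more than one node name, the resources are spread across nodes, as they were before the group was defined.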

(5). Finally, simulate a failure by putting node1 into standby

crm(live)# node

crm(live)node# standby

crm(live)node# show

node1.test.com: normal

standby: on

node2.test.com: normal

[root@node2 ~]# crm_mon

Last updated: Thu Aug 15 17:05:52 2013

Last change: Thu Aug 15 17:05:42 2013 via crm_attribute on node1.test.com

Stack: classic openais (with plugin)

Current DC: node2.test.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

3 Resources configured.

Node node1.test.com: standby

Online: [ node2.test.com ]

Resource Group: webservice

vip (ocf::heartbeat:IPaddr): Started node2.test.com

nfs (ocf::heartbeat:Filesystem): Started node2.test.com

httpd (lsb:httpd): Started node2.test.com

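When node1 fails, all resources move over to node2. Now let's access the site again.

As you can see, the site is still reachable. That's all for today's post; the next post will focus on DRBD. ^_^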
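To see how the service behaves during the switch rather than only afterwards, a client machine can poll the virtual IP while node1 is put into standby. This is only a sketch: `count_failures` and `fetch_with_curl` are hypothetical helper names, and the fetch command is passed in as a parameter so the loop can be tried without a network.

```shell
#!/bin/sh
# Default fetcher: fails on an HTTP error status (4xx/5xx), a connection error,
# or if the request takes longer than 2 seconds.
fetch_with_curl() {
    curl -fsS --max-time 2 -o /dev/null "$1"
}

# count_failures URL TRIES INTERVAL [FETCH_CMD]
# Requests URL TRIES times, sleeping INTERVAL seconds after each attempt,
# and prints how many attempts failed.
count_failures() {
    url=$1 tries=$2 interval=$3 fetch=${4:-fetch_with_curl}
    fails=0 i=0
    while [ "$i" -lt "$tries" ]; do
        "$fetch" "$url" || fails=$((fails + 1))
        i=$((i + 1))
        sleep "$interval"
    done
    echo "$fails"
}

# Usage from a client while node1 goes into standby (VIP taken from the setup above):
#   count_failures http://192.168.18.200/ 30 1
```

A small number of failed requests during the move is to be expected, since the group has to stop on node1 and start on node2; a count that keeps growing would suggest the resources did not come up on node2.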