01 Ceph Quick Deployment in Practice

ceph-deploy admin node Installation

October 19, 2015.

Preparation:

The official Ceph repository supports the following Debian-family Linux releases:

precise Ubuntu 12.04

quantal Ubuntu 12.10

raring Ubuntu 13.04

squeeze debian 6

trusty Ubuntu 14.04

wheezy debian 7

root@ceph-admin:~# vim /etc/apt/sources.list

On Debian 7, add this line at the top of sources.list:

deb http://ceph.com/debian/ wheezy main

On Ubuntu 14.04, add this line at the top of sources.list:

deb http://ceph.com/debian/ trusty main

The USTC (University of Science and Technology of China) Ceph mirror supports the following Debian-family Linux releases:

jessie debian 8

precise Ubuntu 12.04

trusty Ubuntu 14.04

wheezy debian 7

root@ceph-admin:~# vim /etc/apt/sources.list

On Debian 7, add this line at the top of sources.list:

deb http://mirrors.ustc.edu.cn/ceph/debian/ wheezy main

On Ubuntu 14.04, add this line at the top of sources.list:

deb http://mirrors.ustc.edu.cn/ceph/debian/ trusty main
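The repository entries above differ only in the mirror URL and the release codename. As a minimal sketch (the helper names `ceph_repo_line` and `prepend_line` are invented for this illustration and are not part of Ceph or apt), the entry can be generated and placed at the top of a sources file like this:

```shell
#!/bin/sh
# Hypothetical helpers, not part of Ceph or apt.

# Print the apt entry for a mirror base URL and a release codename.
ceph_repo_line() {
    printf 'deb %s/debian/ %s main\n' "$1" "$2"
}

# Put a line at the top of a file unless the file already contains it.
prepend_line() {
    file=$1
    line=$2
    if grep -qxF -- "$line" "$file" 2>/dev/null; then
        return 0
    fi
    tmp=$(mktemp) || return 1
    {
        printf '%s\n' "$line"
        if [ -f "$file" ]; then cat "$file"; fi
    } > "$tmp"
    cat "$tmp" > "$file"   # rewrite in place to keep the file's owner and mode
    rm -f "$tmp"
}

# Example: print the entry for Ubuntu 14.04 from the USTC mirror.
ceph_repo_line http://mirrors.ustc.edu.cn/ceph trusty
```

On a real machine this could be used as root with `prepend_line /etc/apt/sources.list "$(ceph_repo_line http://ceph.com "$(lsb_release -sc)")"`, where `lsb_release -sc` prints the codename of the running release.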

[Note]

You can also use the EU mirror eu.ceph.com for downloading your packages.

Simply replace http://ceph.com/ by

http://eu.ceph.com/

Example:

OS version: I use the server edition of Ubuntu 14.04.

Installation image: ubuntu-14.04.3-server-amd64.iso

root@ceph-admin:~# lsb_release -a

No LSB modules are available.

Distributor ID: Ubuntu

Description: Ubuntu 14.04.3 LTS

Release: 14.04

Codename: trusty

Kernel:

root@ceph-admin:~# uname -rm

3.19.0-25-generic x86_64

Ready Go!

Configure the repositories; I chose mirrors inside China.

root@ubuntu:~# cat /etc/apt/sources.list

deb http://mirrors.ustc.edu.cn/ceph/debian/ trusty main

deb http://mirrors.163.com/ubuntu/ trusty main restricted universe multiverse

deb http://mirrors.163.com/ubuntu/ trusty-security main restricted universe multiverse

deb http://mirrors.163.com/ubuntu/ trusty-updates main restricted universe multiverse

deb http://mirrors.163.com/ubuntu/ trusty-proposed main restricted universe multiverse

deb http://mirrors.163.com/ubuntu/ trusty-backports main restricted universe multiverse

deb-src http://mirrors.163.com/ubuntu/ trusty main restricted universe multiverse

deb-src http://mirrors.163.com/ubuntu/ trusty-security main restricted universe multiverse

deb-src http://mirrors.163.com/ubuntu/ trusty-updates main restricted universe multiverse

deb-src http://mirrors.163.com/ubuntu/ trusty-proposed main restricted universe multiverse

deb-src http://mirrors.163.com/ubuntu/ trusty-backports main restricted universe multiverse

Debian 7 issue:

With the officially recommended source deb http://ceph.com/debian/ wheezy main, running apt-get install ceph-deploy on October 20, 2015 reported that the package could not be found.

Workaround:

Use the USTC Ceph mirror instead, replacing the Ceph entry in sources.list with:

deb http://mirrors.ustc.edu.cn/ceph/debian/ wheezy main

Add the release key:

root@ubuntu:~# wget -O- 'https://ceph.com/git/?p=ceph.git;a=blob_plain;f=keys/release.asc' | sudo apt-key add -

--2015-10-24 10:29:54-- https://ceph.com/git/?p=ceph.git;a=blob_plain;f=keys/release.asc

Resolving ceph.com (ceph.com)... 173.236.248.54, 2607:f298:6050:51f3:f816:3eff:fe62:31d3

Connecting to ceph.com (ceph.com)|173.236.248.54|:443... connected.

HTTP request sent, awaiting response... 301 Moved Permanently

Location: https://git.ceph.com/?p=ceph.git;a=blob_plain;f=keys/release.asc [following]

--2015-10-24 10:29:59-- https://git.ceph.com/?p=ceph.git;a=blob_plain;f=keys/release.asc

Resolving git.ceph.com (git.ceph.com)... 67.205.20.229

Connecting to git.ceph.com (git.ceph.com)|67.205.20.229|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 1645 (1.6K) [text/plain]

Saving to: “STDOUT”

100%[======================================================>] 1,645 --.-K/s in 0s

2015-10-24 10:30:15 (14.5 MB/s) - written to stdout [1645/1645]

OK

If the command above fails, retry with certificate checking disabled:

root@ceph-admin:~# wget --no-check-certificate -O- 'https://ceph.com/git/?p=ceph.git;a=blob_plain;f=keys/release.asc' | apt-key add -

Install ntpdate

Refresh the package lists:

root@ceph-admin:~# apt-get update

root@ceph-admin:~# apt-get -y install ntpdate openssh-server

The clocks must stay in sync, so add a cron entry:

root@ceph-admin:~# vim /etc/crontab

*/5 * * * * root ntpdate cn.pool.ntp.org >> /dev/null 2>&1

Save and exit.

Shut down:

root@ubuntu:~# init 0
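Because this machine is cloned later, the cron entry is worth adding in a repeatable way. The following is only a sketch: the function name `add_ntp_cron` is hypothetical, and the cron line is the one used in this article.

```shell
#!/bin/sh
# Append the ntpdate job to a crontab-style file only if it is not there yet.
# CRON_LINE is copied from the article; the function name is hypothetical.
CRON_LINE='*/5 * * * * root ntpdate cn.pool.ntp.org >> /dev/null 2>&1'

add_ntp_cron() {
    crontab_file=$1
    if grep -qF -- "ntpdate cn.pool.ntp.org" "$crontab_file" 2>/dev/null; then
        echo "already present in $crontab_file"
    else
        printf '%s\n' "$CRON_LINE" >> "$crontab_file"
        echo "added to $crontab_file"
    fi
}
```

Run as root, `add_ntp_cron /etc/crontab` has the same effect as the manual edit, and running it a second time leaves the file unchanged.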

Create the other nodes by cloning:

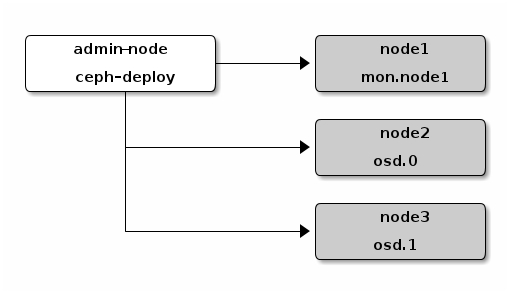

mon --> node1

osd.0 --> node2

osd.1 --> node3

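With the machine shut down, clone three more virtual machines from it: node1, node2 and node3.

Boot them in order: admin-node, node1, node2, node3.

Configure the hosts file and passwordless SSH login

[About hostnames] Avoid hyphens in hostnames. Use only letters and digits and stay away from special characters; otherwise initializing the mon reports an error.

Recommended hostname format: ceph1

Recommended hostname format: cephnodemon1

Recommended hostname format: node1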
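The naming advice above can be checked mechanically before a node is cloned. This is a small sketch; `is_plain_hostname` is a made-up helper that encodes this article's recommendation and is not a check that Ceph itself performs.

```shell
#!/bin/sh
# Succeed only for names made of ASCII letters and digits.
# Hypothetical helper encoding the article's recommendation.
is_plain_hostname() {
    case "$1" in
        ''|*[!A-Za-z0-9]*) return 1 ;;
        *) return 0 ;;
    esac
}

for name in ceph1 cephnodemon1 node1 ceph-mon-1 ceph_mon1; do
    if is_plain_hostname "$name"; then
        echo "$name: ok"
    else
        echo "$name: contains characters other than letters and digits"
    fi
done
```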

My mon node is node1.

root@ubuntu:~# vim /etc/hosts

192.168.81.152 admin-node

192.168.81.153 node1

192.168.81.154 node2

192.168.81.155 node3

root@ubuntu:~# ssh-keygen -t rsa -P ''

Generating public/private rsa key pair.

Enter file in which to save the key (/root/.ssh/id_rsa):

Created directory '/root/.ssh'.

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

83:fb:7b:8f:60:7e:e1:a9:62:f0:02:4c:64:33:c3:f4 root@ubuntu

The key's randomart image is:

(RSA 2048 randomart image omitted)

root@ubuntu:~# ssh-copy-id admin-node

root@ubuntu:~# ssh-copy-id node1

root@ubuntu:~# ssh-copy-id node2

root@ubuntu:~# ssh-copy-id node3

[Test passwordless login and set each hostname]

root@admin-node:/home# ssh admin-node

root@ubuntu:~# hostname admin-node

root@ubuntu:~# vim /etc/hostname

admin-node

root@ubuntu:~# exit

root@admin-node:/home# ssh node1

root@ubuntu:~# hostname node1

root@ubuntu:~# vim /etc/hostname

node1

root@ubuntu:~# exit

root@admin-node:/home# ssh node2

root@ubuntu:~# hostname node2

root@ubuntu:~# vim /etc/hostname

node2

root@ubuntu:~# exit

root@admin-node:/home# ssh node3

root@ubuntu:~# hostname node3

root@ubuntu:~# vim /etc/hostname

node3

root@ubuntu:~# exit

Copy the hosts file to the other nodes (skipping this step does no harm):

root@ubuntu:~# scp /etc/hosts node1:/etc/hosts

root@ubuntu:~# scp /etc/hosts node2:/etc/hosts

root@ubuntu:~# scp /etc/hosts node3:/etc/hosts

There are now 4 nodes.
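The per-node steps above repeat the same three commands, so they can be written as a loop. The sketch below is a dry run by default and only prints what it would do; the `run` and `setup_nodes` helpers and the `DRY_RUN` variable are assumptions made for this example. It writes /etc/hostname with `echo` instead of opening vim, which should have the same result.

```shell
#!/bin/sh
# Dry-run sketch: by default the commands are only printed.
# Run with DRY_RUN=0 to execute them. Helper names are hypothetical.

run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

setup_nodes() {
    for n in admin-node node1 node2 node3; do
        run ssh-copy-id "$n"
        # Set the hostname now and persist it for the next boot.
        run ssh "$n" "hostname $n && echo $n > /etc/hostname"
    done
    # admin-node already has the file, so only the other nodes receive it.
    for n in node1 node2 node3; do
        run scp /etc/hosts "$n:/etc/hosts"
    done
}

setup_nodes
```

Reviewing the printed commands first and then rerunning with `DRY_RUN=0` keeps the manual procedure and the scripted one easy to compare.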

On the admin node:

Install ceph-deploy

root@ceph-admin:~# apt-get install -y ceph-deploy

Hit http://ceph.com trusty InRelease

Hit http://ceph.com trusty/main amd64 Packages

Hit http://ceph.com trusty/main i386 Packages

Ign http://ceph.com trusty/main Translation-zh_CN

Ign http://ceph.com trusty/main Translation-zh

Ign http://ceph.com trusty/main Translation-en

Reading package lists... Done

Reading package lists... Done

Building dependency tree

Reading state information... Done

The following extra packages will be installed:

python-setuptools

The following NEW packages will be installed:

ceph-deploy python-setuptools

0 upgraded, 2 newly installed, 0 to remove and 65 not upgraded.

Need to get 343 kB of archives.

After this operation, 1,529 kB of additional disk space will be used.

Do you want to continue? [Y/n] y

Get:1 http://mirrors.163.com/ubuntu/ trusty-updates/main python-setuptools all 3.3-1ubuntu2 [230 kB]

Get:2 http://ceph.com/debian/ trusty/main ceph-deploy all 1.5.28trusty [113 kB]

Fetched 343 kB in 2s (124 kB/s)

Selecting previously unselected package python-setuptools.

(Reading database ... 56100 files and directories currently installed.)

Preparing to unpack .../python-setuptools_3.3-1ubuntu2_all.deb ...

Unpacking python-setuptools (3.3-1ubuntu2) ...

Selecting previously unselected package ceph-deploy.

Preparing to unpack .../ceph-deploy_1.5.28trusty_all.deb ...

Unpacking ceph-deploy (1.5.28trusty) ...

Setting up python-setuptools (3.3-1ubuntu2) ...

Setting up ceph-deploy (1.5.28trusty) ...

On the admin node:

The ceph-deploy help output

root@ceph-admin:~# ceph-deploy --help

usage: ceph-deploy [-h] [-v | -q] [--version] [--username USERNAME] [--overwrite-conf] [--cluster NAME] [--ceph-conf CEPH_CONF] COMMAND ...

Easy Ceph deployment

ceph-deploy v1.5.28

Full documentation can be found at: http://ceph.com/ceph-deploy/docs

optional arguments:

-h, --help    show this help message and exit

-v, --verbose    be more verbose

-q, --quiet    be less verbose

--version    the current installed version of ceph-deploy

--username USERNAME    the username to connect to the remote host

--overwrite-conf    overwrite an existing conf file on remote host (if present)

--cluster NAME    name of the cluster

--ceph-conf CEPH_CONF    use (or reuse) a given ceph.conf file

commands:

COMMAND    description

new    Start deploying a new cluster, and write a CLUSTER.conf and keyring for it.

install    Install Ceph packages on remote hosts.

rgw    Ceph RGW daemon management

mds    Ceph MDS daemon management

mon    Ceph MON Daemon management

gatherkeys    Gather authentication keys for provisioning new nodes.

disk    Manage disks on a remote host.

osd    Prepare a data disk on remote host.

admin    Push configuration and client.admin key to a remote host.

repo    Repo definition management

config    Copy ceph.conf to/from remote host(s)

uninstall    Remove Ceph packages from remote hosts.

purge    Remove Ceph packages from remote hosts and purge all data.

purgedata    Purge (delete, destroy, discard, shred) any Ceph data from /var/lib/ceph

forgetkeys    Remove authentication keys from the local directory.

pkg    Manage packages on remote hosts.

calamari    Install and configure Calamari nodes. Assumes that a repository with Calamari packages is already configured. Refer to the docs for examples (http://ceph.com/ceph-deploy/docs/conf.html)

root@ceph-admin:~#

[Don't let iptables waste your time]

root@ceph-osd1:/home/osd# iptables -L -nv

Chain INPUT (policy ACCEPT 0 packets, 0 bytes)

Chain FORWARD (policy ACCEPT 0 packets, 0 bytes)

Chain OUTPUT (policy ACCEPT 0 packets, 0 bytes)
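The listing above is the state to aim for on every node: all chains have policy ACCEPT and contain no rules. As a rough sketch, the same check can be scripted against the text that `iptables -L -nv` prints. The function name `iptables_is_open` is hypothetical, and the parsing assumes the output layout shown in this article; a user-defined chain is treated as "not open" to stay on the safe side.

```shell
#!/bin/sh
# Read `iptables -L -nv` output on stdin. Succeed only if every chain has
# policy ACCEPT and no chain contains a rule. Hypothetical helper.
iptables_is_open() {
    awk '
        BEGIN { bad = 0 }
        # Built-in chains look like: Chain INPUT (policy ACCEPT 0 packets, 0 bytes)
        $1 == "Chain" { if ($3 != "(policy" || $4 != "ACCEPT") bad = 1; next }
        # Column header line printed above the rules of each chain.
        $1 == "pkts" { next }
        # Any other non-empty line is a rule.
        NF > 0 { bad = 1 }
        END { exit bad }
    '
}
```

On a node, `iptables -L -nv | iptables_is_open && echo "no firewall rules in the way"` would confirm the situation in the listing above.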

[Quick cluster deployment with ceph-deploy]

========================================================

If you run into trouble at any point and want to start over, run the following to clear the configuration:

ceph-deploy purgedata {ceph-node} [{ceph-node}]

ceph-deploy forgetkeys

If you also want to remove the installed Ceph packages:

ceph-deploy purge {ceph-node} [{ceph-node}]

========================================================

On the admin node:

Create a cluster

root@ubuntu:~# mkdir /home/my-cluster

root@ubuntu:~# cd /home/my-cluster

Create the cluster:

root@ubuntu:/home/my-cluster# ceph-deploy new node1

[ceph_deploy.cli][INFO ] Invoked (1.4.0): /usr/bin/ceph-deploy new node1

[ceph_deploy.new][DEBUG ] Creating new cluster named ceph

[ceph_deploy.new][DEBUG ] Resolving host node1

[ceph_deploy.new][DEBUG ] Monitor node1 at 192.168.81.153

[ceph_deploy.new][INFO ] making sure passwordless SSH succeeds

[node1][DEBUG ] connected to host: admin-node

[node1][INFO ] Running command: ssh -CT -o BatchMode=yes node1

[ceph_deploy.new][DEBUG ] Monitor initial members are ['node1']

[ceph_deploy.new][DEBUG ] Monitor addrs are ['192.168.81.153']

[ceph_deploy.new][DEBUG ] Creating a random mon key...

[ceph_deploy.new][DEBUG ] Writing initial config to ceph.conf...

[ceph_deploy.new][DEBUG ] Writing monitor keyring to ceph.mon.keyring...

root@ubuntu:/home/my-cluster# ll

total 20

drwxr-xr-x 2 root root 4096 Oct 24 11:29 ./

drwxr-xr-x 3 root root 4096 Oct 24 11:28 ../

-rw-r--r-- 1 root root 229 Oct 24 11:29 ceph.conf

-rw-r--r-- 1 root root 732 Oct 24 11:29 ceph.log

-rw-r--r-- 1 root root 73 Oct 24 11:29 ceph.mon.keyring

root@ubuntu:/home/my-cluster# vim ceph.conf

Add the following line in the [global] section; it lowers the default replica count to 2, matching the two OSDs used here:

osd pool default size = 2
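The ceph.conf edit can also be done without opening an editor. This is a sketch under one assumption: the file contains only a [global] section, as a freshly generated ceph.conf does here, so a line appended at the end lands in [global]. The function name `set_default_pool_size` is made up for the example.

```shell
#!/bin/sh
# Append "osd pool default size = N" to a ceph.conf unless a value is already
# set. Assumes the file holds only a [global] section, so appending at the end
# places the option there. Hypothetical helper.
set_default_pool_size() {
    conf=$1
    size=${2:-2}
    # Ceph accepts the option name written with spaces or with underscores.
    if grep -Eq '^[[:space:]]*osd[ _]pool[ _]default[ _]size[[:space:]]*=' "$conf"; then
        echo "osd pool default size is already set in $conf"
    else
        printf 'osd pool default size = %s\n' "$size" >> "$conf"
        echo "set osd pool default size = $size in $conf"
    fi
}
```

From /home/my-cluster, `set_default_pool_size ceph.conf` gives the same result as the manual edit.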

On the admin node:

Install Ceph on all nodes

Errors are likely if your network connection is poor.

On October 24, 2015 I tried this again and ran the following three times:

ceph-deploy install admin-node node1 node2 node3

The batch install failed on admin-node every time.

Error message:

[admin-node][ERROR ] RuntimeError: command returned non-zero exit status: 100

[ceph_deploy][ERROR ] RuntimeError: Failed to execute command: env DEBIAN_FRONTEND=noninteractive DEBIAN_PRIORITY=critical apt-get -q -o Dpkg::Options::=--force-confnew --no-install-recommends --assume-yes install -- ceph ceph-mds ceph-common ceph-fs-common gdisk

The error on admin-node stopped the run, so node1, node2 and node3 were never installed,

yet ceph -v on admin-node still printed the version information.

With admin-node left out of the list, the installation went through cleanly.

So the installation steps become:

root@admin-node:/home/my-cluster# ceph-deploy install node1 node2 node3

On the admin node:

root@admin-node:/home/my-cluster# apt-get update

root@admin-node:/home/my-cluster# apt-get install -y ceph
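Since a poor network is the most likely cause of install failures, a small retry wrapper can save some manual reruns. The `retry` function below is a generic helper written for this article; it is not a ceph-deploy feature.

```shell
#!/bin/sh
# retry MAX_ATTEMPTS DELAY_SECONDS COMMAND [ARG...]
# Re-run COMMAND until it succeeds or MAX_ATTEMPTS is reached.
# Generic helper written for this article, not part of ceph-deploy.
retry() {
    max=$1
    delay=$2
    shift 2
    attempt=1
    while :; do
        if "$@"; then
            return 0
        fi
        if [ "$attempt" -ge "$max" ]; then
            echo "giving up after $attempt attempts: $*" >&2
            return 1
        fi
        echo "attempt $attempt failed, retrying in ${delay}s: $*" >&2
        attempt=$((attempt + 1))
        sleep "$delay"
    done
}
```

For example, `for n in node1 node2 node3; do retry 3 10 ceph-deploy install "$n"; done` installs one node at a time, so a failure on one host does not stop the others from being attempted.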

Add the Ceph monitor

root@admin-node:/home/my-cluster# ceph-deploy mon create-initial

root@admin-node:/home/my-cluster# ll

total 72

-rw------- 1 root root 71 Oct 21 14:48 ceph.bootstrap-mds.keyring

-rw------- 1 root root 71 Oct 21 14:48 ceph.bootstrap-osd.keyring

-rw------- 1 root root 71 Oct 21 14:48 ceph.bootstrap-rgw.keyring

-rw------- 1 root root 63 Oct 21 14:48 ceph.client.admin.keyring

-rw-r--r-- 1 root root 253 Oct 21 14:41 ceph.conf

-rw-r--r-- 1 root root 44601 Oct 21 14:48 ceph.log

-rw------- 1 root root 73 Oct 21 14:41 ceph.mon.keyring

*******************************

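If this fails with the error "monitor xxx does not exist in monmap",

remove the Ceph packages and configuration files from every node:

ceph-deploy purge admin-node node1 node2 node3

ceph-deploy purgedata admin-node node1 node2 node3

Reboot the admin node, then run ceph-deploy --help on it.

If that command no longer works on the admin node, reinstall ceph-deploy:

apt-get remove ceph-deploy

apt-get install ceph-deploy

Go back to [Create a cluster] and repeat the steps; it should work this time.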

*********************************

Add two OSDs

Mind the permissions: give the OSD directory mode 777 or a special permission bit, for example:

root@node4:~# ll /var/local/osd2/ -d

drwxr-sr-x 3 root staff 4096 Nov 4 08:23 /var/local/osd2//

On node2:

mkdir /var/local/osd0

On node3:

mkdir /var/local/osd1

***********************************************

Alternatively, mount a dedicated disk on each storage node; btrfs is the recommended filesystem:

# apt-get install btrfs-tools

# mkfs.btrfs /dev/sdb

# vim /etc/fstab

/dev/sdb /ceph1 btrfs defaults 0 2
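Creating the directory and opening its permissions can be combined into one step. This is just a sketch: `make_osd_dir` is a hypothetical helper, and mode 777 follows the note above, which suits a test cluster like this one.

```shell
#!/bin/sh
# Create an OSD data directory, open its permissions, and show the result.
# Mode 777 follows the article's note; the function name is hypothetical.
make_osd_dir() {
    dir=$1
    mkdir -p "$dir" && chmod 777 "$dir" && ls -ld "$dir"
}
```

On node2 this would be called as `make_osd_dir /var/local/osd0`, and on node3 as `make_osd_dir /var/local/osd1`.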

Prepare the OSDs.

root@admin-node:/home/my-cluster# ceph-deploy osd prepare node2:/var/local/osd0 node3:/var/local/osd1

[ceph_deploy.osd][DEBUG ] Host node2 is now ready for osd use.

[ceph_deploy.osd][DEBUG ] Host node3 is now ready for osd use.

Activate the OSDs.

root@admin-node:/home/my-cluster# ceph-deploy osd activate node2:/var/local/osd0 node3:/var/local/osd1

On the admin node:

Copy the configuration file and admin key to all nodes

root@admin-node:/home/my-cluster# ceph-deploy admin admin-node node1 node2 node3

root@admin-node:/home/my-cluster# chmod +r /etc/ceph/ceph.client.admin.keyring

Check the status from any node:

root@node2:~# ceph -s

cluster 34e6e6b5-bb3e-4185-a8ee-01837c678db4

health HEALTH_OK

monmap e1: 1 mons at {node1=172.16.66.142:6789/0}

election epoch 2, quorum 0 node1

osdmap e9: 2 osds: 2 up, 2 in

pgmap v17: 64 pgs, 1 pools, 0 bytes data, 0 objects

13176 MB used, 917 GB / 980 GB avail

64 active+clean

root@node2:~#
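The line to look for in the status output is the health field. As a sketch, it can be pulled out of the `ceph -s` text for use in scripts. The function name `ceph_health` is hypothetical; the parsing assumes the layout printed above, and the "health:" variant is included on the assumption that other Ceph releases print the label with a colon.

```shell
#!/bin/sh
# Extract the health value from `ceph -s` text on stdin.
# Hypothetical helper; assumes the layout shown in the article, plus the
# "health:" spelling as an assumed variant.
ceph_health() {
    awk '$1 == "health" || $1 == "health:" { print $2; exit }'
}

# Example with a few lines of the status text from the article:
printf '%s\n' \
    'cluster 34e6e6b5-bb3e-4185-a8ee-01837c678db4' \
    'health HEALTH_OK' \
    'osdmap e9: 2 osds: 2 up, 2 in' | ceph_health
```

On a node, a check such as `[ "$(ceph -s | ceph_health)" = "HEALTH_OK" ]` can gate the next step, for example taking the snapshots.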

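[Recommended: take a snapshot of all 4 nodes at this point; it makes later experiments easier.]

This article is from the “魂斗罗” blog; please do not repost.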