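I have started looking into search and set up a simple ElasticSearch cluster on my own virtual machine. I am sharing the steps here in the hope that they are useful.

Operating system: Red Hat 4.8.2-16

elasticsearch: elasticsearch-1.4.1

Cluster layout: 2 nodes on a single virtual machine.

Cluster location: /export/search/elasticsearch-cluster

Prerequisite: a Java runtime environment

Cluster setup walkthrough:

1. Extract the tar package and create the cluster node

# Go to the cluster directory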

[root@localhost elasticsearch-cluster]# pwd

/export/search/elasticsearch-cluster

# Rename the extracted directory

[root@localhost elasticsearch-cluster]# ls

elasticsearch-1.4.1

[root@localhost elasticsearch-cluster]# mv elasticsearch-1.4.1 elasticsearch-node1

# Go to the node's config directory

[root@localhost elasticsearch-cluster]# cd elasticsearch-node1/config/

[root@localhost config]# ls

elasticsearch.yml logging.yml

2. Create the cluster configuration:

# elasticsearch-node1 configuration

# Cluster name

cluster.name: elasticsearch-cluster-centos

# Node name

node.name: "es-node1"

# Custom port for node-to-node communication (default 9300)

transport.tcp.port: 9300

# Custom port for HTTP traffic (default 9200)

http.port: 9200

For an explanation of the elasticsearch configuration file, see: http://www.linuxidc.com/Linux/2015-02/114244.htm

3. Install the head plugin

# Go to the node's bin directory

[root@localhost bin]# pwd

/export/search/elasticsearch-cluster/elasticsearch-node1/bin

Install the plugin:

[root@localhost bin]# ./plugin -install mobz/elasticsearch-head

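After the plugin is installed, a plugins directory is created alongside the node's bin directory to hold the installed plugins.

4. Copy the configured node as elasticsearch-node2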

[root@localhost elasticsearch-cluster]# ls

elasticsearch-node1 elasticsearch-node2
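The listing above only shows the result of the copy. As a minimal sketch, assuming the copy is made before node 1 has ever been started (so no data directory is carried over), the step could be scripted as below. `clone_node` is a hypothetical helper name; a plain `cp -r elasticsearch-node1 elasticsearch-node2` achieves the same thing.

```python
import shutil
from pathlib import Path


def clone_node(cluster_dir, source="elasticsearch-node1", target="elasticsearch-node2"):
    """Copy a configured node directory to create a second node.

    Hypothetical helper for illustration; directory names follow the article.
    """
    src = Path(cluster_dir) / source
    dst = Path(cluster_dir) / target
    # copytree raises FileExistsError if the target already exists,
    # so an existing node is never overwritten by accident.
    shutil.copytree(src, dst)
    return dst
```

For the layout used here, the call would be `clone_node("/export/search/elasticsearch-cluster")`.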

5. Modify the cluster configuration on node 2

# elasticsearch-node2 configuration

# Cluster name

cluster.name: elasticsearch-cluster-centos

# Node name

node.name: "es-node2"

# Custom port for node-to-node communication (default 9300)

transport.tcp.port: 9301

# Custom port for HTTP traffic (default 9200)

http.port: 9201

Notes:

The configuration above describes a cluster with 2 nodes, named "es-node1" and "es-node2", both belonging to the cluster "elasticsearch-cluster-centos".
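Both nodes only end up in the same cluster if their cluster.name values match exactly, including letter case. The following is a rough sketch of a sanity check for two nodes on one machine. `read_flat_settings` and `check_same_host_pair` are hypothetical helpers, and the parser only understands the flat `key: value` style used in this article, not full YAML.

```python
def read_flat_settings(text):
    """Parse simple `key: value` lines, skipping blank lines and # comments."""
    settings = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or ":" not in line:
            continue
        key, value = line.split(":", 1)
        settings[key.strip()] = value.strip().strip('"')
    return settings


def check_same_host_pair(node_a, node_b):
    """Return a list of problems for two nodes that share one machine."""
    problems = []
    if node_a.get("cluster.name") != node_b.get("cluster.name"):
        problems.append("cluster.name differs (comparison is case-sensitive)")
    # Two nodes on one machine cannot bind the same explicitly configured port.
    for key in ("transport.tcp.port", "http.port"):
        if key in node_a and node_a.get(key) == node_b.get(key):
            problems.append(key + " is identical on both nodes")
    return problems


# Sample values taken from the two configurations in this article.
NODE1 = """
cluster.name: elasticsearch-cluster-centos
node.name: "es-node1"
transport.tcp.port: 9300
http.port: 9200
"""

NODE2 = """
cluster.name: elasticsearch-cluster-centos
node.name: "es-node2"
transport.tcp.port: 9301
http.port: 9201
"""

problems = check_same_host_pair(read_flat_settings(NODE1), read_flat_settings(NODE2))
print(problems or "configuration looks consistent")
```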

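The ports on node 2 do not have to be configured: at startup ES checks whether the target port is in use and, if it is, tries the next port. Because both nodes run on the same virtual machine, the ports are set explicitly here to make the setup easier to follow.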
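The "try the next port" behaviour can be illustrated with a short sketch. This is not Elasticsearch's own code; `first_free_port` is a hypothetical helper that simply attempts to bind each port in a range and returns the first one that succeeds.

```python
import socket


def first_free_port(start, end, host="127.0.0.1"):
    """Return the first port in [start, end] that can be bound, or None."""
    for port in range(start, end + 1):
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
            try:
                sock.bind((host, port))
            except OSError:
                continue  # port is busy (or not permitted), try the next one
            return port
    return None
```

Under these assumptions, `first_free_port(9300, 9400)` on a machine where node 1 already holds 9300 would skip that port and return a later one.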

The startup information and the port bindings can be checked in each node's ES log.

6. Start each node

[root@localhost bin]# pwd

/export/search/elasticsearch-cluster/elasticsearch-node1/bin

[root@localhost bin]# ./elasticsearch -d -Xms512m -Xmx512m

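The command above starts node 1; node 2 is started the same way from its own bin directory. For the ES startup and configuration logs, see elasticsearch-cluster-centos.log.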

[root@localhost logs]# pwd

/export/search/elasticsearch-cluster/elasticsearch-node2/logs

[root@localhost logs]# ls

elasticsearch-cluster-centos_index_indexing_slowlog.log elasticsearch-cluster-centos.log elasticsearch-cluster-centos_index_search_slowlog.log

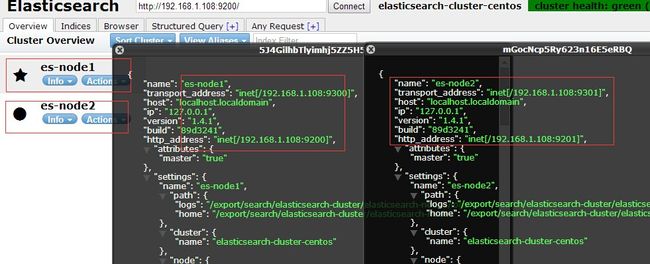

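7. The simple cluster is now configured. View the cluster

Because the head plugin is installed, the cluster can be viewed through it. The virtual machine's IP is 192.168.1.108.

http://192.168.1.108:9200/_plugin/head/ (node 1)

http://192.168.1.108:9201/_plugin/head/ (node 2)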
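Besides the head plugin, the same information is available from the Cluster Health API (`/_cluster/health`). The following is a minimal sketch using only the standard library, assuming the nodes are reachable at the IP and HTTP ports used in this article. `health_url`, `fetch_health` and `summarize` are hypothetical helper names.

```python
import json
from urllib.request import urlopen


def health_url(host, http_port):
    """Build the Cluster Health API URL for one node."""
    return "http://{}:{}/_cluster/health".format(host, http_port)


def fetch_health(host, http_port, timeout=5):
    """Fetch and decode the cluster health document from one node."""
    with urlopen(health_url(host, http_port), timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))


def summarize(health):
    """Reduce the health document to the fields of interest here."""
    return "{}: status={}, nodes={}".format(
        health["cluster_name"], health["status"], health["number_of_nodes"]
    )
```

Once both nodes have joined, `summarize(fetch_health("192.168.1.108", 9200))` should report `nodes=2`, and querying port 9201 should give the same cluster-wide answer.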

Cluster status as shown in the figure:

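8. Install the Marvel plugin

Marvel is a management and monitoring tool for Elasticsearch and is free for development use. It comes with an interactive console called Sense, which makes it easy to talk to Elasticsearch directly from the browser.

Marvel is a plugin; run the following command in the Elasticsearch directory to download and install it: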

./bin/plugin -i elasticsearch/marvel/latest

To disable Marvel, append the following setting to the node's configuration:

echo 'marvel.agent.enabled: false' >> ./config/elasticsearch.yml



Permanent link to this article: http://www.linuxidc.com/Linux/2015-02/114243.htm

##################### Elasticsearch Configuration Example #####################

# This file contains an overview of various configuration settings,

# targeted at operations staff. Application developers should

# consult the guide at <http://elasticsearch.org/guide>.

#

# The installation procedure is covered at

# <http://elasticsearch.org/guide/en/elasticsearch/reference/current/setup.html>.

#

# Elasticsearch comes with reasonable defaults for most settings,

# so you can try it out without bothering with configuration.

#

# Most of the time, these defaults are just fine for running a production

# cluster. If you're fine-tuning your cluster, or wondering about the

# effect of certain configuration option, please _do ask_ on the

# mailing list or IRC channel [http://elasticsearch.org/community].

# Any element in the configuration can be replaced with environment variables

# by placing them in ${...} notation. For example:

#

#node.rack: ${RACK_ENV_VAR}

# For information on supported formats and syntax for the config file, see

# <http://elasticsearch.org/guide/en/elasticsearch/reference/current/setup-configuration.html>

################################### Cluster ###################################

# Cluster name identifies your cluster for auto-discovery. If you're running

# multiple clusters on the same network, make sure you're using unique names.

#

cluster.name: search

#################################### Node #####################################

# Node names are generated dynamically on startup, so you're relieved

# from configuring them manually. You can tie this node to a specific name:

#

#node.name: "Franz Kafka"

# Every node can be configured to allow or deny being eligible as the master,

# and to allow or deny to store the data.

#

# Allow this node to be eligible as a master node (enabled by default):

#

#node.master: true

#

# Allow this node to store data (enabled by default):

#

#node.data: true

# You can exploit these settings to design advanced cluster topologies.

#

# 1. You want this node to never become a master node, only to hold data.

# This will be the "workhorse" of your cluster.

#

#node.master: false

#node.data: true

#

# 2. You want this node to only serve as a master: to not store any data and

# to have free resources. This will be the "coordinator" of your cluster.

#

#node.master: true

#node.data: false

#

# 3. You want this node to be neither master nor data node, but

# to act as a "search load balancer" (fetching data from nodes,

# aggregating results, etc.)

#

#node.master: false

#node.data: false

# Use the Cluster Health API [http://localhost:9200/_cluster/health], the

# Node Info API [http://localhost:9200/_nodes] or GUI tools

# such as <http://www.elasticsearch.org/overview/marvel/>,

# <http://github.com/karmi/elasticsearch-paramedic>,

# <http://github.com/lukas-vlcek/bigdesk> and

# <http://mobz.github.com/elasticsearch-head> to inspect the cluster state.

# A node can have generic attributes associated with it, which can later be used

# for customized shard allocation filtering, or allocation awareness. An attribute

# is a simple key value pair, similar to node.key: value, here is an example:

#

#node.rack: rack314

# By default, multiple nodes are allowed to start from the same installation location

# to disable it, set the following:

#node.max_local_storage_nodes: 1

#################################### Index ####################################

# You can set a number of options (such as shard/replica options, mapping

# or analyzer definitions, translog settings, ...) for indices globally,

# in this file.

#

# Note, that it makes more sense to configure index settings specifically for

# a certain index, either when creating it or by using the index templates API.

#

# See <http://elasticsearch.org/guide/en/elasticsearch/reference/current/index-modules.html> and

# <http://elasticsearch.org/guide/en/elasticsearch/reference/current/indices-create-index.html>

# for more information.

# Set the number of shards (splits) of an index (5 by default):

#

index.number_of_shards: 10

# Set the number of replicas (additional copies) of an index (1 by default):

#

#index.number_of_replicas: 1

# Note, that for development on a local machine, with small indices, it usually

# makes sense to "disable" the distributed features:

#

#index.number_of_shards: 1

#index.number_of_replicas: 0

# These settings directly affect the performance of index and search operations

# in your cluster. Assuming you have enough machines to hold shards and

# replicas, the rule of thumb is:

#

# 1. Having more *shards* enhances the _indexing_ performance and allows to

# _distribute_ a big index across machines.

# 2. Having more *replicas* enhances the _search_ performance and improves the

# cluster _availability_.

#

# The "number_of_shards" is a one-time setting for an index.

#

# The "number_of_replicas" can be increased or decreased anytime,

# by using the Index Update Settings API.

#

# Elasticsearch takes care about load balancing, relocating, gathering the

# results from nodes, etc. Experiment with different settings to fine-tune

# your setup.

# Use the Index Status API (<http://localhost:9200/A/_status>) to inspect

# the index status.

#################################### Paths ####################################

# Path to directory containing configuration (this file and logging.yml):

#

#path.conf: /path/to/conf

# Path to directory where to store index data allocated for this node.

#

path.data: /data/esdata

#

# Can optionally include more than one location, causing data to be striped across

# the locations (a la RAID 0) on a file level, favouring locations with most free

# space on creation. For example:

#

#path.data: /path/to/data1,/path/to/data2

# Path to temporary files:

#

#path.work: /path/to/work

# Path to log files:

#

#path.logs: /path/to/logs

# Path to where plugins are installed:

#

#path.plugins: /path/to/plugins

#################################### Plugin ###################################

# If a plugin listed here is not installed for current node, the node will not start.

#

#plugin.mandatory: mapper-attachments,lang-groovy

################################### Memory ####################################

# Elasticsearch performs poorly when JVM starts swapping: you should ensure that

# it _never_ swaps.

#

# Set this property to true to lock the memory:

#

#bootstrap.mlockall: true

# Make sure that the ES_MIN_MEM and ES_MAX_MEM environment variables are set

# to the same value, and that the machine has enough memory to allocate

# for Elasticsearch, leaving enough memory for the operating system itself.

#

# You should also make sure that the Elasticsearch process is allowed to lock

# the memory, eg. by using `ulimit -l unlimited`.

############################## Network And HTTP ###############################

# Elasticsearch, by default, binds itself to the 0.0.0.0 address, and listens

# on port [9200-9300] for HTTP traffic and on port [9300-9400] for node-to-node

# communication. (the range means that if the port is busy, it will automatically

# try the next port).

# Set the bind address specifically (IPv4 or IPv6):

#

#network.bind_host: 192.168.0.1

# Set the address other nodes will use to communicate with this node. If not

# set, it is automatically derived. It must point to an actual IP address.

#

network.publish_host: 192.168.2.99

# Set both 'bind_host' and 'publish_host':

#

#network.host: 192.168.0.1

# Set a custom port for the node to node communication (9300 by default):

#

transport.tcp.port: 4300

# Enable compression for all communication between nodes (disabled by default):

#

#transport.tcp.compress: true

# Set a custom port to listen for HTTP traffic:

#

http.port: 4200

# Set a custom allowed content length:

#

#http.max_content_length: 100mb

# Disable HTTP completely:

#

#http.enabled: false

################################### Gateway ###################################

# The gateway allows for persisting the cluster state between full cluster

# restarts. Every change to the state (such as adding an index) will be stored

# in the gateway, and when the cluster starts up for the first time,

# it will read its state from the gateway.

# There are several types of gateway implementations. For more information, see

# <http://elasticsearch.org/guide/en/elasticsearch/reference/current/modules-gateway.html>.

# The default gateway type is the "local" gateway (recommended):

#

#gateway.type: local

# Settings below control how and when to start the initial recovery process on

# a full cluster restart (to reuse as much local data as possible when using shared

# gateway).

# Allow recovery process after N nodes in a cluster are up:

#

#gateway.recover_after_nodes: 1

# Set the timeout to initiate the recovery process, once the N nodes

# from previous setting are up (accepts time value):

#

#gateway.recover_after_time: 5m

# Set how many nodes are expected in this cluster. Once these N nodes

# are up (and recover_after_nodes is met), begin recovery process immediately

# (without waiting for recover_after_time to expire):

#

#gateway.expected_nodes: 2

############################# Recovery Throttling #############################

# These settings allow to control the process of shards allocation between

# nodes during initial recovery, replica allocation, rebalancing,

# or when adding and removing nodes.

# Set the number of concurrent recoveries happening on a node:

#

# 1. During the initial recovery

#

#cluster.routing.allocation.node_initial_primaries_recoveries: 4

#

# 2. During adding/removing nodes, rebalancing, etc

#

#cluster.routing.allocation.node_concurrent_recoveries: 2

# Set to throttle throughput when recovering (eg. 100mb, by default 20mb):

#

#indices.recovery.max_bytes_per_sec: 20mb

# Set to limit the number of open concurrent streams when

# recovering a shard from a peer:

#

#indices.recovery.concurrent_streams: 5

################################## Discovery ##################################

# Discovery infrastructure ensures nodes can be found within a cluster

# and master node is elected. Multicast discovery is the default.

# Set to ensure a node sees N other master eligible nodes to be considered

# operational within the cluster. It's recommended to set it to a higher value

# than 1 when running more than 2 nodes in the cluster.

#

#discovery.zen.minimum_master_nodes: 1

# Set the time to wait for ping responses from other nodes when discovering.

# Set this option to a higher value on a slow or congested network

# to minimize discovery failures:

#

#discovery.zen.ping.timeout: 3s

# For more information, see

# <http://elasticsearch.org/guide/en/elasticsearch/reference/current/modules-discovery-zen.html>

# Unicast discovery allows to explicitly control which nodes will be used

# to discover the cluster. It can be used when multicast is not present,

# or to restrict the cluster communication-wise.

#

# 1. Disable multicast discovery (enabled by default):

#

discovery.zen.ping.multicast.enabled: false

#

# 2. Configure an initial list of master nodes in the cluster

# to perform discovery when new nodes (master or data) are started:

#

discovery.zen.ping.unicast.hosts: ["hdslave5", "hdslave1", "hdslave6", "hdslave3", "hdslave4"]

# EC2 discovery allows to use AWS EC2 API in order to perform discovery.

#

# You have to install the cloud-aws plugin for enabling the EC2 discovery.

#

# For more information, see

# <http://elasticsearch.org/guide/en/elasticsearch/reference/current/modules-discovery-ec2.html>

#

# See <http://elasticsearch.org/tutorials/elasticsearch-on-ec2/>

# for a step-by-step tutorial.

# GCE discovery allows to use Google Compute Engine API in order to perform discovery.

#

# You have to install the cloud-gce plugin for enabling the GCE discovery.

#

# For more information, see <https://github.com/elasticsearch/elasticsearch-cloud-gce>.

# Azure discovery allows to use Azure API in order to perform discovery.

#

# You have to install the cloud-azure plugin for enabling the Azure discovery.

#

# For more information, see <https://github.com/elasticsearch/elasticsearch-cloud-azure>.

################################## Slow Log ##################################

# Shard level query and fetch threshold logging.

#index.search.slowlog.threshold.query.warn: 10s

#index.search.slowlog.threshold.query.info: 5s

#index.search.slowlog.threshold.query.debug: 2s

#index.search.slowlog.threshold.query.trace: 500ms

#index.search.slowlog.threshold.fetch.warn: 1s

#index.search.slowlog.threshold.fetch.info: 800ms

#index.search.slowlog.threshold.fetch.debug: 500ms

#index.search.slowlog.threshold.fetch.trace: 200ms

#index.indexing.slowlog.threshold.index.warn: 10s

#index.indexing.slowlog.threshold.index.info: 5s

#index.indexing.slowlog.threshold.index.debug: 2s

#index.indexing.slowlog.threshold.index.trace: 500ms

################################## GC Logging ################################

#monitor.jvm.gc.young.warn: 1000ms

#monitor.jvm.gc.young.info: 700ms

#monitor.jvm.gc.young.debug: 400ms

index.cache.filter.expire: 1m

index.cache.filter.max_size: 20

index.cache.field.max_size: 50000

index.cache.field.expire: 5m

index.cache.field.type: soft

#monitor.jvm.gc.old.warn: 10s

#monitor.jvm.gc.old.info: 5s

#monitor.jvm.gc.old.debug: 2s

index:
  analysis:
    analyzer:
      ik:
        alias: [news_analyzer_ik,ik_analyzer]
        type: org.elasticsearch.index.analysis.IkAnalyzerProvider

index.analysis.analyzer.default.type : "ik"
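Two figures follow from the sample configuration above by simple arithmetic. With index.number_of_shards set to 10 and the default of 1 replica, each index has 20 shard copies in total. For discovery.zen.minimum_master_nodes, the commonly recommended value is a majority of the master-eligible nodes. The sketch below assumes that all five hosts in the unicast list are master-eligible, which the configuration itself does not state; both function names are hypothetical.

```python
def total_shard_copies(number_of_shards, number_of_replicas):
    """Primaries plus replicas: every primary has `number_of_replicas` copies."""
    return number_of_shards * (1 + number_of_replicas)


def master_quorum(master_eligible_nodes):
    """Majority of the master-eligible nodes: floor(n / 2) + 1."""
    return master_eligible_nodes // 2 + 1


print(total_shard_copies(10, 1))  # 10 primaries + 10 replicas
print(master_quorum(5))           # majority of 5 nodes
```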