An Example of Reading a 3gp Video with the FFmpeg Library - Using the FFmpeg Media Library on Android

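This article follows two earlier posts in this series: "Building FFmpeg 0.8.1 for Android NDK r6 on 32-bit Ubuntu 11.04 - Using the FFmpeg Media Library on Android (1)" and "Using the Compiled FFmpeg Library through JNI on Android - Using the FFmpeg Media Library on Android (2)". Based on an open-source project published by churnlabs on GitHub, it explains in more depth how to use the FFmpeg library for multimedia development.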

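The code in this article comes from https://github.com/churnlabs/android-ffmpeg-sample; see that project for more. I have added some comments of my own to the code. Thanks to the author, churnlabs, for providing such a good example to learn from.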

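On Android, most system-level application development calls native code through JNI. JNI is also worth considering for CPU-intensive code or for complex processing logic, where wrapping the work in native code can improve execution efficiency.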

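This article covers the following:

1 Pushing the 3gp file to the sdcard of the emulator

2 Writing the JNI code, which calls FFmpeg library functions internally, and building the JNI library

3 Loading the generated library with loadLibrary and writing the corresponding Java code

4 Running the program and checking the final result.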

The finished program looks like this:

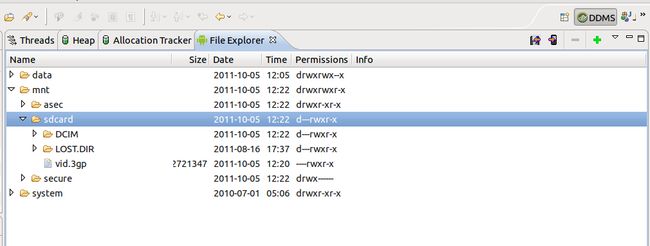

1 Use the DDMS tool in Eclipse to push vid.3gp to the sdcard

2 Write the JNI source file

/*

* Copyright 2011 - Churn Labs, LLC

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

/*

* This is mostly based off of the FFMPEG tutorial:

* http://dranger.com/ffmpeg/

* With a few updates to support Android output mechanisms and to update

* places where the APIs have shifted.

*/

#include <jni.h>

#include <string.h>

#include <stdio.h>

#include <android/log.h>

#include <android/bitmap.h>

// Include the FFmpeg headers; these files are placed directly under the jni directory

#include <libavcodec/avcodec.h>

#include <libavformat/avformat.h>

#include <libswscale/swscale.h>

#define LOG_TAG "FFMPEGSample"

#define LOGI(...) __android_log_print(ANDROID_LOG_INFO,LOG_TAG,__VA_ARGS__)

#define LOGE(...) __android_log_print(ANDROID_LOG_ERROR,LOG_TAG,__VA_ARGS__)

/* Cheat to keep things simple and just use some globals. */

// Global objects

AVFormatContext *pFormatCtx;

AVCodecContext *pCodecCtx;

AVFrame *pFrame;

AVFrame *pFrameRGB;

int videoStream;

/*

* Write a frame worth of video (in pFrame) into the Android bitmap

* described by info using the raw pixel buffer. It's a very inefficient

* draw routine, but it's easy to read. Relies on the format of the

* bitmap being 8bits per color component plus an 8bit alpha channel.

*/

// Static helper that draws one AVFrame into an Android Bitmap

static void fill_bitmap(AndroidBitmapInfo* info, void *pixels, AVFrame *pFrame)

{

uint8_t *frameLine;

int yy;

for (yy = 0; yy < info->height; yy++) {

uint8_t* line = (uint8_t*)pixels;

frameLine = (uint8_t *)pFrame->data[0] + (yy * pFrame->linesize[0]);

int xx;

for (xx = 0; xx < info->width; xx++) {

int out_offset = xx * 4;

int in_offset = xx * 3;

line[out_offset] = frameLine[in_offset];

line[out_offset+1] = frameLine[in_offset+1];

line[out_offset+2] = frameLine[in_offset+2];

line[out_offset+3] = 0;

}

pixels = (char*)pixels + info->stride;

}

}

// Native implementation of the openFile method of the MainActivity class in the com.churnlabs.ffmpegsample package.

void Java_com_churnlabs_ffmpegsample_MainActivity_openFile(JNIEnv * env, jobject this)

{

int ret;

int err;

int i;

AVCodec *pCodec;

uint8_t *buffer;

int numBytes;

// Register all formats and codecs

av_register_all();

LOGE("Registered formats");

// Open the file vid.3gp on the sdcard

err = av_open_input_file(&pFormatCtx, "file:/sdcard/vid.3gp", NULL, 0, NULL);

LOGE("Called open file");

if(err!=0) {

LOGE("Couldn't open file");

return;

}

LOGE("Opened file");

if(av_find_stream_info(pFormatCtx)<0) {

LOGE("Unable to get stream info");

return;

}

videoStream = -1;

// Find the first video stream and store its index in videoStream

for (i=0; i<pFormatCtx->nb_streams; i++) {

if(pFormatCtx->streams[i]->codec->codec_type==CODEC_TYPE_VIDEO) {

videoStream = i;

break;

}

}

if(videoStream==-1) {

LOGE("Unable to find video stream");

return;

}

LOGI("Video stream is [%d]", videoStream);

// Get the codec context of the video stream

pCodecCtx=pFormatCtx->streams[videoStream]->codec;

// Find the decoder

pCodec=avcodec_find_decoder(pCodecCtx->codec_id);

if(pCodec==NULL) {

LOGE("Unsupported codec");

return;

}

// Open the codec context with that decoder

if(avcodec_open(pCodecCtx, pCodec)<0) {

LOGE("Unable to open codec");

return;

}

// Allocate the frame that receives decoded data

pFrame=avcodec_alloc_frame();

// Allocate the frame that holds the RGB data

pFrameRGB=avcodec_alloc_frame();

LOGI("Video size is [%d x %d]", pCodecCtx->width, pCodecCtx->height);

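// Get the number of bytes needed for an RGB24 image of the video size

numBytes=avpicture_get_size(PIX_FMT_RGB24, pCodecCtx->width, pCodecCtx->height);

// Allocate the buffer and attach it to pFrameRGB.

// Note: drawFrame scales into this buffer at 320x240, so the video must be

// at least that large for the writes to stay inside the buffer.

buffer=(uint8_t *)av_malloc(numBytes*sizeof(uint8_t));

avpicture_fill((AVPicture *)pFrameRGB, buffer, PIX_FMT_RGB24,

pCodecCtx->width, pCodecCtx->height);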

}

// Native implementation of the drawFrame method of the MainActivity class in the com.churnlabs.ffmpegsample package.

void Java_com_churnlabs_ffmpegsample_MainActivity_drawFrame(JNIEnv * env, jobject this, jobject bitmap)

{

AndroidBitmapInfo info;

void* pixels;

int ret;

int err;

int i;

int frameFinished = 0;

AVPacket packet;

static struct SwsContext *img_convert_ctx;

int64_t seek_target;

if ((ret = AndroidBitmap_getInfo(env, bitmap, &info)) < 0) {

LOGE("AndroidBitmap_getInfo() failed ! error=%d", ret);

return;

}

LOGE("Checked on the bitmap");

if ((ret = AndroidBitmap_lockPixels(env, bitmap, &pixels)) < 0) {

LOGE("AndroidBitmap_lockPixels() failed ! error=%d", ret);

return;

}

LOGE("Grabbed the pixels");

i = 0;

while((i==0) && (av_read_frame(pFormatCtx, &packet)>=0)) {

if(packet.stream_index==videoStream) {

avcodec_decode_video2(pCodecCtx, pFrame, &frameFinished, &packet);

if(frameFinished) {

LOGE("packet pts %lld", (long long)packet.pts);

// This is much different than the tutorial, sws_scale

// replaces img_convert, but it's not a complete drop in.

// This version keeps the image the same size but swaps to

// RGB24 format, which works perfect for PPM output.

int target_width = 320;

int target_height = 240;

img_convert_ctx = sws_getContext(pCodecCtx->width, pCodecCtx->height,

pCodecCtx->pix_fmt,

target_width, target_height, PIX_FMT_RGB24, SWS_BICUBIC,

NULL, NULL, NULL);

if(img_convert_ctx == NULL) {

LOGE("could not initialize conversion context\n");

av_free_packet(&packet);

AndroidBitmap_unlockPixels(env, bitmap);

return;

}

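sws_scale(img_convert_ctx, (const uint8_t* const*)pFrame->data, pFrame->linesize, 0, pCodecCtx->height, pFrameRGB->data, pFrameRGB->linesize);

// Release the conversion context; a new one is created for every frame

sws_freeContext(img_convert_ctx);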

// save_frame(pFrameRGB, target_width, target_height, i);

fill_bitmap(&info, pixels, pFrameRGB);

i = 1;

}

}

av_free_packet(&packet);

}

AndroidBitmap_unlockPixels(env, bitmap);

}

// Internal helper, not exposed to Java, that seeks to the given position in milliseconds

int seek_frame(int tsms)

{

int64_t frame;

frame = av_rescale(tsms,pFormatCtx->streams[videoStream]->time_base.den,pFormatCtx->streams[videoStream]->time_base.num);

frame/=1000;

if(avformat_seek_file(pFormatCtx,videoStream,0,frame,frame,AVSEEK_FLAG_FRAME)<0) {

return 0;

}

avcodec_flush_buffers(pCodecCtx);

return 1;

}

// Native implementation of the drawFrameAt method of the MainActivity class in the com.churnlabs.ffmpegsample package.

void Java_com_churnlabs_ffmpegsample_MainActivity_drawFrameAt(JNIEnv * env, jobject this, jobject bitmap, jint secs)

{

AndroidBitmapInfo info;

void* pixels;

int ret;

int err;

int i;

int frameFinished = 0;

AVPacket packet;

static struct SwsContext *img_convert_ctx;

int64_t seek_target;

if ((ret = AndroidBitmap_getInfo(env, bitmap, &info)) < 0) {

LOGE("AndroidBitmap_getInfo() failed ! error=%d", ret);

return;

}

LOGE("Checked on the bitmap");

if ((ret = AndroidBitmap_lockPixels(env, bitmap, &pixels)) < 0) {

LOGE("AndroidBitmap_lockPixels() failed ! error=%d", ret);

return;

}

LOGE("Grabbed the pixels");

seek_frame(secs * 1000);

i = 0;

while ((i== 0) && (av_read_frame(pFormatCtx, &packet)>=0)) {

if(packet.stream_index==videoStream) {

avcodec_decode_video2(pCodecCtx, pFrame, &frameFinished, &packet);

if(frameFinished) {

// This is much different than the tutorial, sws_scale

// replaces img_convert, but it's not a complete drop in.

// This version keeps the image the same size but swaps to

// RGB24 format, which works perfect for PPM output.

int target_width = 320;

int target_height = 240;

img_convert_ctx = sws_getContext(pCodecCtx->width, pCodecCtx->height,

pCodecCtx->pix_fmt,

target_width, target_height, PIX_FMT_RGB24, SWS_BICUBIC,

NULL, NULL, NULL);

if(img_convert_ctx == NULL) {

LOGE("could not initialize conversion context\n");

av_free_packet(&packet);

AndroidBitmap_unlockPixels(env, bitmap);

return;

}

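sws_scale(img_convert_ctx, (const uint8_t* const*)pFrame->data, pFrame->linesize, 0, pCodecCtx->height, pFrameRGB->data, pFrameRGB->linesize);

// Release the conversion context; a new one is created for every frame

sws_freeContext(img_convert_ctx);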

// save_frame(pFrameRGB, target_width, target_height, i);

fill_bitmap(&info, pixels, pFrameRGB);

i = 1;

}

}

av_free_packet(&packet);

}

AndroidBitmap_unlockPixels(env, bitmap);

}
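The pixel copy done by fill_bitmap in the listing above can be isolated into a small, self-contained sketch. The function names rgb24_size and rgb24_to_rgba8888 are hypothetical and do not exist in the sample or in FFmpeg. The sketch assumes a packed RGB24 source, a destination with 4 bytes per pixel, and row strides given in bytes. Writing 255 for alpha is a choice made for this sketch.

```c
#include <stddef.h>
#include <stdint.h>

/* Bytes needed for a tightly packed RGB24 image (3 bytes per pixel). */
size_t rgb24_size(int width, int height)
{
    return (size_t)width * (size_t)height * 3u;
}

/*
 * Copy an RGB24 image into an RGBA8888 buffer, one row at a time.
 * src_stride and dst_stride are the byte lengths of one row in each
 * buffer; they may exceed width * bytes-per-pixel because of padding,
 * which is why each row start is computed from the stride.
 */
void rgb24_to_rgba8888(const uint8_t *src, int src_stride,
                       uint8_t *dst, int dst_stride,
                       int width, int height)
{
    for (int y = 0; y < height; y++) {
        const uint8_t *in = src + (size_t)y * (size_t)src_stride;
        uint8_t *out = dst + (size_t)y * (size_t)dst_stride;
        for (int x = 0; x < width; x++) {
            out[x * 4 + 0] = in[x * 3 + 0]; /* red   */
            out[x * 4 + 1] = in[x * 3 + 1]; /* green */
            out[x * 4 + 2] = in[x * 3 + 2]; /* blue  */
            out[x * 4 + 3] = 255;           /* alpha: opaque (sketch's choice) */
        }
    }
}
```

In terms of the listing, src and src_stride would correspond to pFrameRGB->data[0] and pFrameRGB->linesize[0], and dst and dst_stride to the locked pixels pointer and info->stride. The caller has to make sure both buffers really contain width by height pixels.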

3 Write the corresponding Android.mk file

LOCAL_PATH := $(call my-dir)

include $(CLEAR_VARS)

LOCAL_MODULE := ffmpegutils

LOCAL_SRC_FILES := native.c

LOCAL_C_INCLUDES := $(LOCAL_PATH)/include

LOCAL_LDLIBS := -L$(NDK_PLATFORMS_ROOT)/$(TARGET_PLATFORM)/arch-arm/usr/lib -L$(LOCAL_PATH) -lavformat -lavcodec -lavdevice -lavfilter -lavcore -lavutil -lswscale -llog -ljnigraphics -lz -ldl -lgcc

include $(BUILD_SHARED_LIBRARY)

Pay attention to the directory layout here, which the original post illustrated with a screenshot.

Android.mk contains the line LOCAL_C_INCLUDES := $(LOCAL_PATH)/include, which points to the FFmpeg header files. That is why the code only needs the following includes:

#include <libavcodec/avcodec.h>

#include <libavformat/avformat.h>

#include <libswscale/swscale.h>

4 Run ndk-build to generate libffmpegutils.so, then copy this file to the directory /root/develop/android-ndk-r6/platforms/android-8/arch-arm/usr/lib so that the .so file can be loaded when we use the Android 2.2 AVD below.

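5 Write the corresponding Eclipse project code. Because native.c fixes the package name, the class name, and the function names, our project must use the file MainActivity.java in the package com.churnlabs.ffmpegsample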

package com.churnlabs.ffmpegsample;

import android.app.Activity;

import android.graphics.Bitmap;

import android.os.Bundle;

import android.view.View;

import android.view.View.OnClickListener;

import android.widget.Button;

import android.widget.ImageView;

public class MainActivity extends Activity {

private static native void openFile();

private static native void drawFrame(Bitmap bitmap);

private static native void drawFrameAt(Bitmap bitmap, int secs);

private Bitmap mBitmap;

private int mSecs = 0;

static {

System.loadLibrary("ffmpegutils");

}

/** Called when the activity is first created. */

@Override

public void onCreate(Bundle savedInstanceState) {

super.onCreate(savedInstanceState);

//setContentView(new VideoView(this));

setContentView(R.layout.main);

mBitmap = Bitmap.createBitmap(320, 240, Bitmap.Config.ARGB_8888);

openFile();

Button btn = (Button)findViewById(R.id.frame_adv);

btn.setOnClickListener(new OnClickListener() {

public void onClick(View v) {

drawFrame(mBitmap);

ImageView i = (ImageView)findViewById(R.id.frame);

i.setImageBitmap(mBitmap);

}

});

Button btn_fwd = (Button)findViewById(R.id.frame_fwd);

btn_fwd.setOnClickListener(new OnClickListener() {

public void onClick(View v) {

mSecs += 5;

drawFrameAt(mBitmap, mSecs);

ImageView i = (ImageView)findViewById(R.id.frame);

i.setImageBitmap(mBitmap);

}

});

Button btn_back = (Button)findViewById(R.id.frame_back);

btn_back.setOnClickListener(new OnClickListener() {

public void onClick(View v) {

mSecs = Math.max(0, mSecs - 5);

drawFrameAt(mBitmap, mSecs);

ImageView i = (ImageView)findViewById(R.id.frame);

i.setImageBitmap(mBitmap);

}

});

}

}
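The native methods declared at the top of MainActivity are matched to the C functions in native.c purely by name, which is why the package and class names cannot be changed freely. As a rough illustration, the naming rule can be sketched in C. The helpers append_mangled and jni_symbol_name are hypothetical and are not part of the sample or of jni.h. They handle only the escapes needed for plain ASCII names: '.' or '/' becomes '_', and a literal '_' becomes '_1'. Unicode escapes and the signature suffix used for overloaded methods are left out.

```c
#include <stddef.h>
#include <string.h>

/* Append s to out with the JNI escapes for '.', '/' and '_' applied.
 * Returns 0 on success, -1 if the result would not fit in cap bytes. */
static int append_mangled(char *out, size_t cap, size_t *len, const char *s)
{
    for (; *s != '\0'; s++) {
        char single[2] = { *s, '\0' };
        const char *piece = single;
        size_t n;
        if (*s == '.' || *s == '/') {
            piece = "_";   /* package separator */
        } else if (*s == '_') {
            piece = "_1";  /* escaped underscore */
        }
        n = strlen(piece);
        if (*len + n + 1 > cap) {
            return -1;
        }
        memcpy(out + *len, piece, n);
        *len += n;
        out[*len] = '\0';
    }
    return 0;
}

/* Build "Java_<mangled class name>_<mangled method name>" into out.
 * class_name is the fully qualified class, e.g. "a.b.MainActivity". */
int jni_symbol_name(char *out, size_t cap,
                    const char *class_name, const char *method)
{
    size_t len = 5; /* length of "Java_" */
    if (cap < sizeof("Java_")) {
        return -1;
    }
    memcpy(out, "Java_", sizeof("Java_")); /* copies the terminating NUL too */
    if (append_mangled(out, cap, &len, class_name) != 0) {
        return -1;
    }
    if (len + 2 > cap) {
        return -1;
    }
    out[len++] = '_';
    out[len] = '\0';
    return append_mangled(out, cap, &len, method);
}
```

Calling jni_symbol_name with "com.churnlabs.ffmpegsample.MainActivity" and "openFile" produces the same function name that native.c defines for openFile.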

6 Build and run. The final result is the screenshot shown at the beginning of this article.
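While the app is running, the forward and back buttons pass a position in seconds to drawFrameAt, which multiplies it by 1000 and hands it to seek_frame. There the milliseconds are converted into units of the stream's time base. The sketch below shows that arithmetic with plain integers. The function ms_to_stream_ticks is hypothetical, and the time base values used with it are illustrative rather than read from vid.3gp. Unlike av_rescale in the listing, which rounds to nearest, this version truncates, and it does not guard against overflow for very large inputs.

```c
#include <stdint.h>

/*
 * Convert a position in milliseconds into ticks of a stream time base.
 * The time base is the fraction tb_num / tb_den, in seconds per tick, so
 *   ticks = (ms / 1000) / (tb_num / tb_den)
 *         = ms * tb_den / (tb_num * 1000)
 * Integer division truncates. Negative positions are clamped to 0,
 * a defensive choice made for this sketch.
 */
int64_t ms_to_stream_ticks(int64_t ms, int tb_num, int tb_den)
{
    if (ms < 0) {
        ms = 0;
    }
    return ms * (int64_t)tb_den / ((int64_t)tb_num * 1000);
}
```

With an assumed time base of 1/90000, a position of 5000 ms maps to 450000 ticks.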

7 Download the project code:

https://github.com/churnlabs/android-ffmpeg-sample/zipball/master

References:

1 https://github.com/churnlabs/android-ffmpeg-sample

2 http://www.360doc.com/content/10/1216/17/474846_78726683.shtml

3 https://github.com/prajnashi

This article is also published at:

http://doandroid.info/?p=497

Thanks to the original author for sharing. Reposted from: http://www.cnblogs.com/doandroid/archive/2011/11/09/2242558.html