Memcached - In Action

Memcached

标签 : Java与NoSQL

With Java

比较知名的Java Memcached客户端有三款:Java-Memcached-Client、XMemcached以及Spymemcached, 其中以XMemcached性能最好, 且维护较稳定/版本较新:

<dependency>

<groupId>com.googlecode.xmemcached</groupId>

<artifactId>xmemcached</artifactId>

<version>2.0.0</version>

</dependency>XMemcached以及其他两款Memcached客户端的详细信息可参考博客XMemcached-一个新的开源Java memcached客户端、Java几个Memcached连接客户端对比选择.

实践

任何技术都有其最适用的场景,只有在合适的场景下,才能发挥最好的效果.Memcached使用内存读写数据,速度比DB和文件系统快得多, 因此,Memcached的常用场景有:

- 缓存DB查询数据: 作为缓存“保护”数据库, 防止频繁的读写带给DB过大的压力;

- 中继MySQL主从延迟: 利用其“读写快”特点实现主从数据库的消息同步.

缓存DB查询数据

- JDBC模拟Memcached缓存DB数据:

/** * @author jifang. * @since 2016/6/13 20:08. */

public class MemcachedDAO {

private static final int _1M = 60 * 1000;

private static final DataSource dataSource;

private static final MemcachedClient mc;

static {

Properties properties = new Properties();

try {

properties.load(ClassLoader.getSystemResourceAsStream("db.properties"));

} catch (IOException ignored) {

}

/** 初始化连接池 **/

HikariConfig config = new HikariConfig();

config.setDriverClassName(properties.getProperty("mysql.driver.class"));

config.setJdbcUrl(properties.getProperty("mysql.url"));

config.setUsername(properties.getProperty("mysql.user"));

config.setPassword(properties.getProperty("mysql.password"));

config.setMaximumPoolSize(Integer.valueOf(properties.getProperty("pool.max.size")));

config.setMinimumIdle(Integer.valueOf(properties.getProperty("pool.min.size")));

config.setIdleTimeout(Integer.valueOf(properties.getProperty("pool.max.idle_time")));

config.setMaxLifetime(Integer.valueOf(properties.getProperty("pool.max.life_time")));

dataSource = new HikariDataSource(config);

/** 初始化Memcached **/

try {

mc = new XMemcachedClientBuilder(properties.getProperty("memcached.servers")).build();

} catch (IOException e) {

throw new RuntimeException(e);

}

}

public List<Map<String, Object>> executeQuery(String sql) {

List<Map<String, Object>> result;

try {

/** 首先请求MC **/

String key = sql.replace(' ', '-');

result = mc.get(key);

// 如果key未命中, 再请求DB

if (result == null || result.isEmpty()) {

ResultSet resultSet = dataSource.getConnection().createStatement().executeQuery(sql);

/** 获得列数/列名 **/

ResultSetMetaData meta = resultSet.getMetaData();

int columnCount = meta.getColumnCount();

List<String> columnName = new ArrayList<>();

for (int i = 1; i <= columnCount; ++i) {

columnName.add(meta.getColumnName(i));

}

/** 填充实体 **/

result = new ArrayList<>();

while (resultSet.next()) {

Map<String, Object> entity = new HashMap<>(columnCount);

for (String name : columnName) {

entity.put(name, resultSet.getObject(name));

}

result.add(entity);

}

/** 写入MC **/

mc.set(key, _1M, result);

}

} catch (TimeoutException | InterruptedException | MemcachedException | SQLException e) {

throw new RuntimeException(e);

}

return result;

}

public static void main(String[] args) {

MemcachedDAO dao = new MemcachedDAO();

List<Map<String, Object>> execute = dao.executeQuery("select * from orders");

System.out.println(execute);

}

}注: 代码仅供展示DB缓存思想,因为一般项目很少会直接使用JDBC操作DB,而是会选用像MyBatis之类的ORM框架代替之,而这类框架框架一般也会开放接口出来实现与缓存产品的整合(如MyBatis开放出一个

org.apache.ibatis.cache.Cache接口,通过实现该接口,可将Memcached与MyBatis整合, 细节可参考博客MyBatis与Memcached集成.

中继MySQL主从延迟

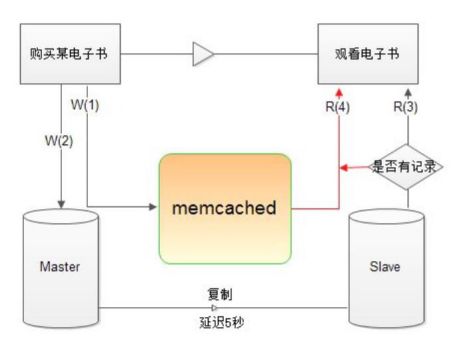

MySQL在做replication时,主从复制时会由一段时间延迟,尤其是主从服务器分处于异地机房时,这种情况更加明显.FaceBook官方的一篇技术文章提到:其加州的数据中心到弗吉尼亚州数据中心的主从同步延迟达到70MS. 考虑以下场景:

- 用户U购买电子书B:

insert into Master (U,B); - 用户U观看电子书B:

select 购买记录 [user='A',book='B'] from Slave.

由于主从延迟的存在,第②步中无记录,用户无权观看该书.

此时可以利用Memcached在Master与Slave之间做过渡:

- 用户U购买电子书B:

memcached->add('U:B',true); - 主数据库:

insert into Master (U,B); - 用户U观看电子书B:

select 购买记录 [user='U',book='B'] from Slave;

如果没查询到,则memcached->get('U:B'),查到则说明已购买但有主从延迟. - 如果Memcached中也没查询到,用户无权观看该书.

分布式缓存

Memcached虽然名义上是分布式缓存,但其自身并未实现分布式算法.当一个请求到达时,需要由客户端实现的分布式算法将不同的key路由到不同的Memcached服务器中.而分布式取模算法有着致命的缺陷(详细可参考分布式之取模算法的缺陷), 因此Memcached客户端一般采用一致性Hash算法来保证分布式.

- 目标:

- key的分布尽量均匀;

- 增/减服务器节点对于其他节点的影响尽量小.

一致性Hash算法

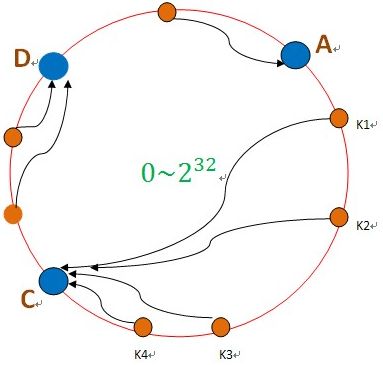

首先开辟一块非常大的空间(如图中:0~232),然后将所有的数据使用

hash函数(如MD5、Ketama等)映射到这个空间内,形成一个Hash环. 当有数据需要存储时,先得到一个hash值对应到hash环上的具体位置(如k1),然后沿顺时针方向找到一台机器(如B),将k1存储到B这个节点中:

这样,只会影响C节点,对其他的节点如A、D的数据都不会造成影响. 然而,这样又会带来一定的风险,由于B节点的负载全部由C节点承担,C节点的负载会变得很高,因此C节点又会很容易宕机,依次下去会造成整个集群的不稳定.

理想的情况下是当B节点宕机时,将原先B节点上的负载平均的分担到其他的各个节点上. 为此,又引入了“虚拟节点”的概念: 想象在这个环上有很多“虚拟节点”,数据的存储是沿着环的顺时针方向找一个虚拟节点,每个虚拟节点都会关联到一个真实节点,但一个真实节点会对应多个虚拟节点,且不同真实节点的多个虚拟节点是交差分布的:

图中A1、A2、B1、B2、C1、C2、D1、D2 都是“虚拟节点”,机器A负责存储A1、A2的数据, 机器B负责存储B1、B2的数据… 只要虚拟节点数量足够多且分布均匀,当其中一台机器宕机之后,原先机器上的负载就会平均分配到其他所有机器上(如图中节点B宕机,其负载会分担到节点A和节点D上).

Java实现

/** * @author jifang. * @since 2016/6/5 11:55. */

public class ConsistentHash<Node> {

/** * 虚拟节点-真实节点Map */

public SortedMap<Long, Node> VRNodesMap = new TreeMap<>();

/** * 虚拟节点数目 */

private int vCount = 50;

/** * 真实节点数目 */

private int rCount = 0;

public ConsistentHash() {

}

public ConsistentHash(int vCount) {

this.vCount = vCount;

}

public ConsistentHash(List<Node> rNodes) {

init(rNodes);

}

public ConsistentHash(List<Node> rNodes, int vCount) {

this.vCount = vCount;

init(rNodes);

}

private void init(List<Node> rNodes) {

if (rNodes != null) {

for (Node node : rNodes) {

add(rCount, node);

++rCount;

}

}

}

public void addRNode(Node rNode) {

add(rCount, rNode);

++rCount;

}

public void rmRNode(Node rNode) {

--rCount;

remove(rCount, rNode);

}

public Node getRNode(String key) {

// 沿环的顺时针找到一个虚拟节点

SortedMap<Long, Node> tailMap = VRNodesMap.tailMap(hash(key));

if (tailMap.size() == 0) {

return VRNodesMap.get(VRNodesMap.firstKey());

}

return tailMap.get(tailMap.firstKey());

}

private void add(int rIndex, Node rNode) {

for (int j = 0; j < vCount; ++j) {

VRNodesMap.put(hash(String.format("RNode-%s-VNode-%s", rIndex, j)), rNode);

}

}

private void remove(int rIndex, Node rNode) {

for (int j = 0; j < vCount; ++j) {

VRNodesMap.remove(hash(String.format("RNode-%s-VNode-%s", rIndex, j)));

}

}

/** * MurMurHash算法,是非加密HASH算法,性能很高, * 比传统的CRC32,MD5,SHA-1(这两个算法都是加密HASH算法,复杂度本身就很高,带来的性能上的损害也不可避免) * 等HASH算法要快很多,而且据说这个算法的碰撞率很低. * http://murmurhash.googlepages.com/ */

private Long hash(String key) {

ByteBuffer buf = ByteBuffer.wrap(key.getBytes());

int seed = 0x1234ABCD;

ByteOrder byteOrder = buf.order();

buf.order(ByteOrder.LITTLE_ENDIAN);

long m = 0xc6a4a7935bd1e995L;

int r = 47;

long h = seed ^ (buf.remaining() * m);

long k;

while (buf.remaining() >= 8) {

k = buf.getLong();

k *= m;

k ^= k >>> r;

k *= m;

h ^= k;

h *= m;

}

if (buf.remaining() > 0) {

ByteBuffer finish = ByteBuffer.allocate(8).order(

ByteOrder.LITTLE_ENDIAN);

// for big-endian version, do this first:

// finish.position(8-buf.remaining());

finish.put(buf).rewind();

h ^= finish.getLong();

h *= m;

}

h ^= h >>> r;

h *= m;

h ^= h >>> r;

buf.order(byteOrder);

return h;

}

}- 测试

public class ConsistentHashMain {

private static final int KEY_COUNT = 1000;

@Test

public void test() {

ConsistentHash<String> nodes = new ConsistentHash<>(new ArrayList<String>(), 50);

nodes.addRNode("10.45.156.11");

nodes.addRNode("10.45.156.12");

nodes.addRNode("10.45.156.13");

nodes.addRNode("10.45.156.14");

nodes.addRNode("10.45.156.15");

nodes.addRNode("10.45.156.16");

nodes.addRNode("10.45.156.17");

nodes.addRNode("10.45.156.18");

nodes.addRNode("10.45.156.19");

nodes.addRNode("10.45.156.10");

Map<String, String> map = new HashMap<>();

initMap(map, nodes);

// 删除节点

nodes.rmRNode("10.45.156.19");

// 增加节点

nodes.addRNode("10.45.156.20");

int mis = 0;

for (Map.Entry<String, String> entry : map.entrySet()) {

String key = entry.getKey();

String value = entry.getValue();

if (!nodes.getRNode(key).equals(value)) {

++mis;

}

}

System.out.println(String.format("当前命中率为:%s%%", (KEY_COUNT - mis) * 100.0 / KEY_COUNT));

}

private void initMap(Map<String, String> map, ConsistentHash<String> nodes) {

for (int i = 0; i < KEY_COUNT; ++i) {

String key = String.format("key-%s", i);

map.put(key, nodes.getRNode(key));

}

}

}经过实际测试: 当有十台真实节点,而每个真实节点有50个虚拟节点时,在发生一台实际节点宕机/新增一台节点的情况时,命中率仍然能够达到90%左右.对比简单取模Hash算法:

当节点从N到N-1时,缓存的命中率直线下降为1/N(N越大,命中率越低);一致性Hash的表现就优秀多了:

命中率只下降为原先的 (N-1)/N ,且服务器节点越多,性能越好.因此一致性Hash算法可以最大限度地减小服务器增减时的缓存重新分布带来的压力.

XMemcached实现

实际上XMemcached客户端自身实现了很多一致性Hash算法(KetamaMemcachedSessionLocator/PHPMemcacheSessionLocator), 因此在开发中没有必要自己去实现:

- 示例: 支持分布式的MemcachedFilter:

/** * @author jifang. * @since 2016/5/21 15:50. */

public class MemcachedFilter implements Filter {

private MemcachedClient memcached;

private static final int _1MIN = 60;

@Override

public void init(FilterConfig filterConfig) throws ServletException {

try {

MemcachedClientBuilder builder = new XMemcachedClientBuilder(

AddrUtil.getAddresses("10.45.156.11:11211" +

"10.45.156.12:11211" +

"10.45.156.13:11211"));

builder.setSessionLocator(new KetamaMemcachedSessionLocator());

memcached = builder.build();

} catch (IOException e) {

throw new RuntimeException(e);

}

}

@Override

public void doFilter(ServletRequest req, ServletResponse response, FilterChain chain) throws IOException, ServletException {

// 对PrintWriter包装

MemcachedWriter mWriter = new MemcachedWriter(response.getWriter());

chain.doFilter(req, new MemcachedResponse((HttpServletResponse) response, mWriter));

HttpServletRequest request = (HttpServletRequest) req;

String key = request.getRequestURI();

Enumeration<String> names = request.getParameterNames();

if (names.hasMoreElements()) {

String name = names.nextElement();

StringBuilder sb = new StringBuilder(key)

.append("?").append(name).append("=").append(request.getParameter(name));

while (names.hasMoreElements()) {

name = names.nextElement();

sb.append("&").append(name).append("=").append(request.getParameter(name));

}

key = sb.toString();

}

try {

String rspContent = mWriter.getRspContent();

memcached.set(key, _1MIN, rspContent);

} catch (TimeoutException | InterruptedException | MemcachedException e) {

throw new RuntimeException(e);

}

}

@Override

public void destroy() {

}

private static class MemcachedWriter extends PrintWriter {

private StringBuilder sb = new StringBuilder();

private PrintWriter writer;

public MemcachedWriter(PrintWriter out) {

super(out);

this.writer = out;

}

@Override

public void print(String s) {

sb.append(s);

this.writer.print(s);

}

public String getRspContent() {

return sb.toString();

}

}

private static class MemcachedResponse extends HttpServletResponseWrapper {

private PrintWriter writer;

public MemcachedResponse(HttpServletResponse response, PrintWriter writer) {

super(response);

this.writer = writer;

}

@Override

public PrintWriter getWriter() throws IOException {

return this.writer;

}

}

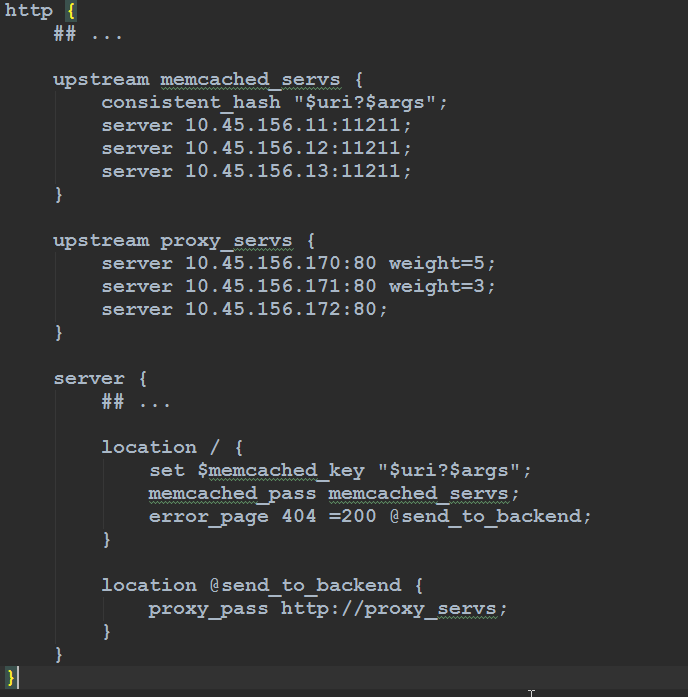

}以上代码最好有Nginx的如下配置支持:

Nginx以前端请求的"URI+Args"作为key去请求Memcached,如果key命中,则直接由Nginx从缓存中取出数据响应前端;未命中,则产生404异常,Nginx捕获之并将request提交后端服务器.在后端服务器中,request被MemcachedFilter拦截, 待业务逻辑执行完, 该Filter会将Response的数据拿到并写入Memcached, 以备下次直接响应.

- 参考:

- 缓存系统MemCached的Java客户端优化历程

- memcached Java客户端spymemcached的一致性Hash算法

- 一致性哈希算法及其在分布式系统中的应用

- 陌生但默默一统江湖的MurmurHash

- Hash 函数概览