Python Crawler Example (4): Inserting Items into a MySQL Database

This post shows how to insert scraped data into a MySQL database. The tools used are PyCharm and either Navicat for MySQL or Navicat Premium.

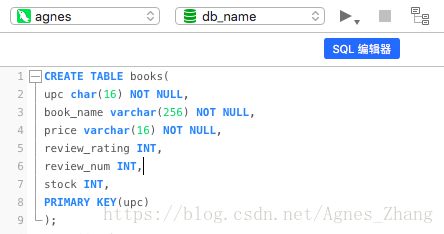

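The site being scraped is http://books.toscrape.com/. Many tutorials already cover it, so I won't go over it again here. First open Navicat, create a new query, and create the table in the editor (this uses the db_name database):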

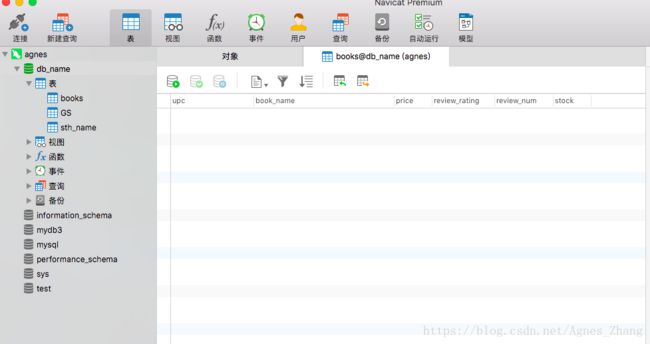
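The table definition is only visible in the screenshot above. As a rough sketch, assuming column names that mirror the item fields and column types picked purely for illustration, an equivalent statement could be generated as follows. `BOOK_COLUMNS` and `build_create_table` are hypothetical names. The one firm constraint comes from the pipeline's `INSERT` statement later in this post: it lists no column names, so the columns have to be declared in the order upc, name, price, review_rating, review_num, stock.

```python
# Column order matters: the pipeline's INSERT has no column list,
# so values are matched to columns purely by position.
# The SQL types below are assumptions, not the author's actual schema.
BOOK_COLUMNS = [
    ('upc', 'VARCHAR(32) NOT NULL PRIMARY KEY'),
    ('name', 'VARCHAR(256) NOT NULL'),
    ('price', 'VARCHAR(16)'),   # the spider stores the raw price text
    ('review_rating', 'INT'),
    ('review_num', 'INT'),
    ('stock', 'INT'),
]


def build_create_table(table, columns):
    """Build a CREATE TABLE statement from (name, sql_type) pairs."""
    body = ',\n    '.join(f'{name} {sql_type}' for name, sql_type in columns)
    return f'CREATE TABLE {table} (\n    {body}\n)'


CREATE_BOOKS_SQL = build_create_table('books', BOOK_COLUMNS)
print(CREATE_BOOKS_SQL)
```

The printed statement can be pasted into the Navicat query editor and adjusted to taste.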

Once that succeeds, the books table looks like this:

Open PyCharm and create a Scrapy project named toscrape_book. The code in items.py is as follows:

    from scrapy import Item, Field


    class BookItem(Item):
        name = Field()
        price = Field()
        review_rating = Field()  # star rating
        review_num = Field()     # number of reviews
        upc = Field()            # product code
        stock = Field()          # number in stock

The spider file, books.py:

    # -*- coding: utf-8 -*-
    import scrapy
    from scrapy.linkextractors import LinkExtractor

    from toscrape_book.items import BookItem


    class BooksSpider(scrapy.Spider):
        name = 'books'
        allowed_domains = ['books.toscrape.com']
        start_urls = ['http://books.toscrape.com/']

        # Maps the star rating from its English word to a digit
        cats = {
            'One': '1',
            'Two': '2',
            'Three': '3',
            'Four': '4',
            'Five': '5'
        }

        def parse(self, response):
            # Links to each book's detail page
            le = LinkExtractor(restrict_css='article.product_pod h3')
            for link in le.extract_links(response):
                yield scrapy.Request(link.url, callback=self.parse_book, dont_filter=True)

            # Link to the next page
            le = LinkExtractor(restrict_css='ul.pager li.next')
            links = le.extract_links(response)
            if links:
                next_link = links[0].url
                yield scrapy.Request(next_link, callback=self.parse, dont_filter=True)

        def parse_book(self, response):
            item = BookItem()
            sel = response.css('div.product_main')
            item['name'] = sel.xpath('./h1/text()').extract_first()
            item['price'] = sel.css('p.price_color::text').extract_first()
            rating = sel.css('p.star-rating::attr(class)').re_first('star-rating ([A-Za-z]+)')
            item['review_rating'] = self.cats[rating]  # convert the rating word to a digit

            sel = response.css('table.table.table-striped')
            item['review_num'] = sel.xpath('.//tr[last()]/td/text()').extract_first()
            item['upc'] = sel.xpath('.//tr[1]/td/text()').extract_first()
            # The regex captures the number in front of "available"
            item['stock'] = sel.xpath('.//tr[last()-1]/td/text()').re_first(r'\((\d+) available\)')
            yield item

settings.py:

    # Obey robots.txt rules
    ROBOTSTXT_OBEY = False

    ITEM_PIPELINES = {
        'toscrape_book.pipelines.MySQLPipeline': 100,
    }

    # MySQL connection settings; these are the details of my own database
    MYSQL_HOST = 'localhost'
    MYSQL_DB_NAME = 'db_name'
    MYSQL_USER = 'root'
    MYSQL_PASSWORD = 'your-database-password'
    MYSQL_PORT = 3306

pipelines.py:

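    # -*- coding: utf-8 -*-
    import pymysql  # third-party library used to connect to the database


    class MySQLPipeline(object):
        def process_item(self, item, spider):
            # Read the connection details defined in settings.py.
            # scrapy.conf no longer exists in current Scrapy releases,
            # so the settings are taken from the spider instead.
            settings = spider.settings
            db = settings['MYSQL_DB_NAME']
            host = settings['MYSQL_HOST']
            port = settings['MYSQL_PORT']
            user = settings['MYSQL_USER']
            passwd = settings['MYSQL_PASSWORD']

            db_conn = pymysql.connect(host=host, port=port, database=db, user=user,
                                      password=passwd, charset='utf8')
            db_cur = db_conn.cursor()  # cursor object used to execute SQL statements
            print("Database connection established")

            values = (  # the values to insert, in table column order
                item['upc'],
                item['name'],
                item['price'],
                item['review_rating'],
                item['review_num'],
                item['stock'],
            )
            try:
                sql = 'INSERT INTO books VALUES (%s,%s,%s,%s,%s,%s)'  # SQL statement
                db_cur.execute(sql, values)  # run it with execute
                print("Row inserted")
            except Exception as e:
                print('Insert error:', e)
                db_conn.rollback()
            else:
                db_conn.commit()  # commit after every insert so the row is actually saved
            finally:
                db_cur.close()
                db_conn.close()  # release the connection opened for this item
            return item

That's it: we now have a simple pipeline that connects to the database and inserts each item. Run the spider in the terminal: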

scrapy crawl books

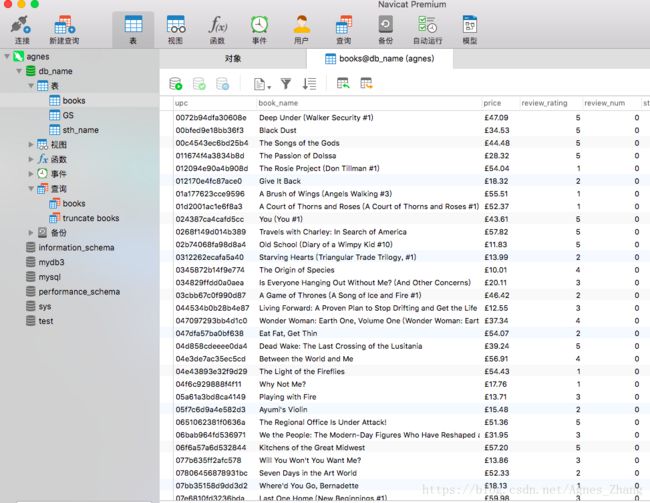

Open Navicat to check; the result is shown below:

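However, inserting data this way is very inefficient: every item opens a new database connection and triggers its own commit. The fix is to go asynchronous and use the adbapi module from twisted.enterprise, which can significantly speed up database access. That will be covered in Example (5).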
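Before reaching for adbapi, a simpler intermediate step is to keep one connection open for the whole crawl and commit in batches. The sketch below is not the Twisted approach; it is a hypothetical variant built on Scrapy's `open_spider` and `close_spider` pipeline hooks. The class name `BatchedMySQLPipeline`, the `BATCH_SIZE` value, and the `connect` parameter (an injection point so the class can be exercised without a MySQL server) are all assumptions made for illustration.

```python
class BatchedMySQLPipeline:
    """Hypothetical pipeline: one connection per crawl, batched commits."""

    INSERT_SQL = 'INSERT INTO books VALUES (%s,%s,%s,%s,%s,%s)'
    BATCH_SIZE = 100  # assumed value; tune it for your workload

    def __init__(self, connect=None):
        # `connect` defaults to pymysql.connect (resolved in open_spider).
        self._connect = connect
        self.conn = None
        self.cur = None
        self.pending = 0  # rows inserted since the last commit

    def open_spider(self, spider):
        connect = self._connect
        if connect is None:
            import pymysql  # imported lazily, only when a real connection is needed
            connect = pymysql.connect
        s = spider.settings
        self.conn = connect(host=s['MYSQL_HOST'], port=s['MYSQL_PORT'],
                            database=s['MYSQL_DB_NAME'], user=s['MYSQL_USER'],
                            password=s['MYSQL_PASSWORD'], charset='utf8')
        self.cur = self.conn.cursor()

    def process_item(self, item, spider):
        values = (item['upc'], item['name'], item['price'],
                  item['review_rating'], item['review_num'], item['stock'])
        self.cur.execute(self.INSERT_SQL, values)
        self.pending += 1
        if self.pending >= self.BATCH_SIZE:
            self.conn.commit()  # one commit per batch instead of per row
            self.pending = 0
        return item

    def close_spider(self, spider):
        self.conn.commit()  # flush whatever is left in the last batch
        self.cur.close()
        self.conn.close()
```

To try it, point `ITEM_PIPELINES` at this class instead of `MySQLPipeline`. The trade-off is that rows inserted since the last commit are lost if the process dies mid-batch.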